The Regulation Pendulum and AI’s National Security Reckoning

The Trump administration’s approach to AI regulation has undergone one of the most dramatic policy reversals in recent memory. In January 2025, the president signed Executive Order 14179, “Removing Barriers to American Leadership in Artificial Intelligence,” revoking the Biden administration’s AI safety executive order on Day One and signaling that the United States would pursue AI dominance through deregulation.

The Unleashing Prosperity Through Deregulation executive order followed immediately, reinforcing the administration’s position that regulation was the obstacle standing between American industry and global AI supremacy.

By July 2025, America’s AI Action Plan codified this into a 25-page framework built around a simple thesis: the fastest path to winning the AI race is removing every regulatory barrier in the way. “Build Baby Build” was the operating philosophy, and any suggestion of pre-deployment safety testing was treated as an innovation-killing concession to the competition.

Less than a year later, the White House is actively studying an executive order that would require government vetting of frontier AI models before public release, with top economic adviser Kevin Hassett comparing it to the FDA’s drug approval process. NIST’s Center for AI Standards and Innovation (CAISI) has signed pre-deployment testing agreements with Google DeepMind, Microsoft, and xAI, covering cyber, biological, and chemical national security risks, with developers providing models that have reduced or removed safeguards for thorough evaluation. CAISI has already completed more than 40 evaluations, including assessments of unreleased models.

What happened between “Build Baby Build” and “test it before you ship it”?

The answer, in large part, is that the AI cyber capability curve forced a reckoning that the deregulatory narrative couldn’t survive.


The Capability Curve Forced the Conversation

I’ve been tracking the acceleration of AI cyber capabilities across multiple pieces this year, and the data that accumulated between January 2025 and May 2026 tells a story that even the most committed deregulators couldn’t ignore.

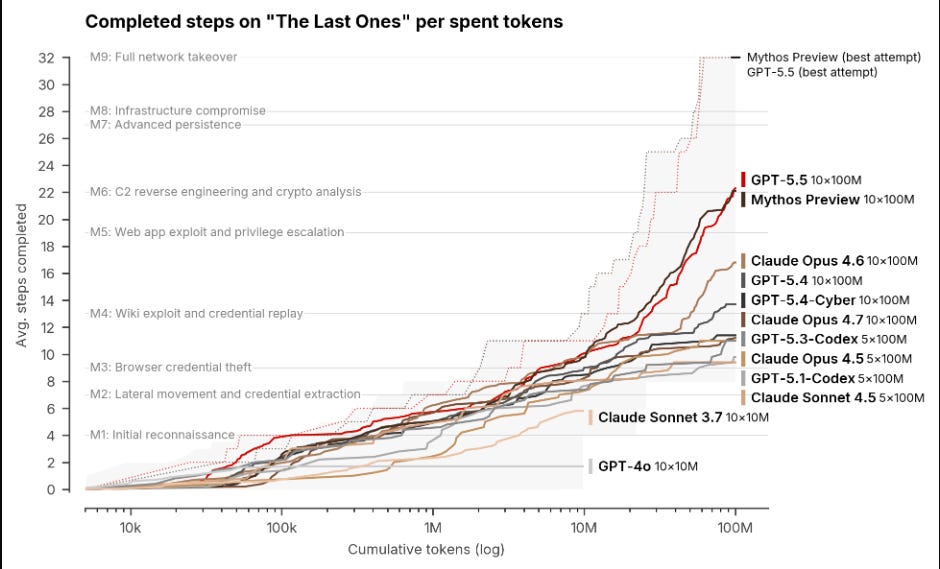

As I covered in The AI Cyber Capability Curve, the UK AI Security Institute evaluated both Anthropic’s Claude Mythos Preview and OpenAI’s GPT-5.5 and found that both frontier models can autonomously execute multi-stage attack chains spanning the full kill chain. Mythos completed a 32-step corporate network attack simulation, moving from reconnaissance through privilege escalation, lateral movement, and full network takeover, in 3 out of 10 attempts. GPT-5.5 scored 90.5% on expert-level cyber tasks.

Two different models from two different labs, both independently demonstrating autonomous offensive capabilities that would have been dismissed as speculative two years ago.

The reporting around the policy reversal confirms what the technical community already understood. Anthropic’s Mythos is cited explicitly as the catalyst for the administration’s reconsideration. The White House wants to avoid the fallout if an AI-enabled cyberattack occurs and is also evaluating whether frontier models could yield offensive cyber capabilities useful to the Pentagon and intelligence agencies. The dual-use nature of these capabilities, simultaneously a national security asset and a national security threat, is what makes the policy problem genuinely hard.

But the capability concern extends well beyond frontier models. AISLE’s research on the “jagged frontier” demonstrated that models as small as 3.6 billion parameters, running at $0.11 per million tokens, detected the same FreeBSD vulnerability that Anthropic highlighted as a flagship Mythos discovery.

Eight out of eight models, including open-weights models that anyone can download and run locally, found it. As I discussed in Vulnerability Management in the Age of AI, the CSA’s AI vulnerability storm paper reinforced that frontier models like Mythos are the acceleration, not the starting gun. The capability is an emergent property of model architectures at multiple scales, and each patch becomes an exploit blueprint as AI accelerates patch-diffing and reverse engineering.

This creates the fundamental policy tension the administration is now wrestling with. You can’t regulate your way out of a capability that’s already embedded in open-weights models running on consumer hardware. But you also can’t pretend the national security implications don’t exist when models from regulated American labs are demonstrating capabilities that governments have legitimate reasons to understand before they’re released to the world.

The Market Failure Argument

The case for introducing controls isn’t abstract. Software security has been a market failure for decades, and AI is amplifying every dimension of that failure simultaneously.

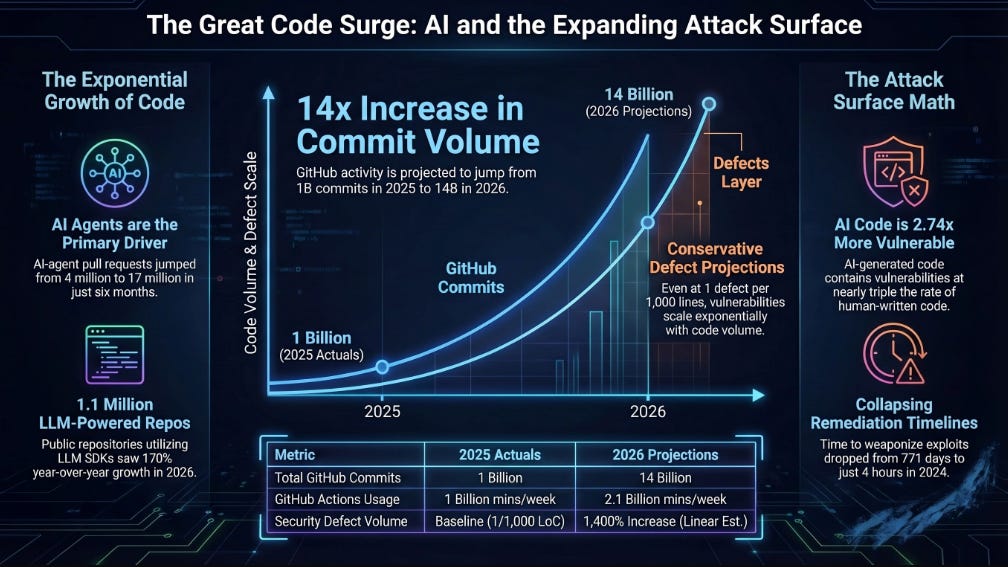

As I’ve covered across several pieces this year, the attack surface is expanding at rates that make historical comparisons meaningless. In The Attack Surface Exponential, I laid out the data showing GitHub on pace for 14 billion commits in 2026, a 14x year-over-year increase driven by AI coding agents.

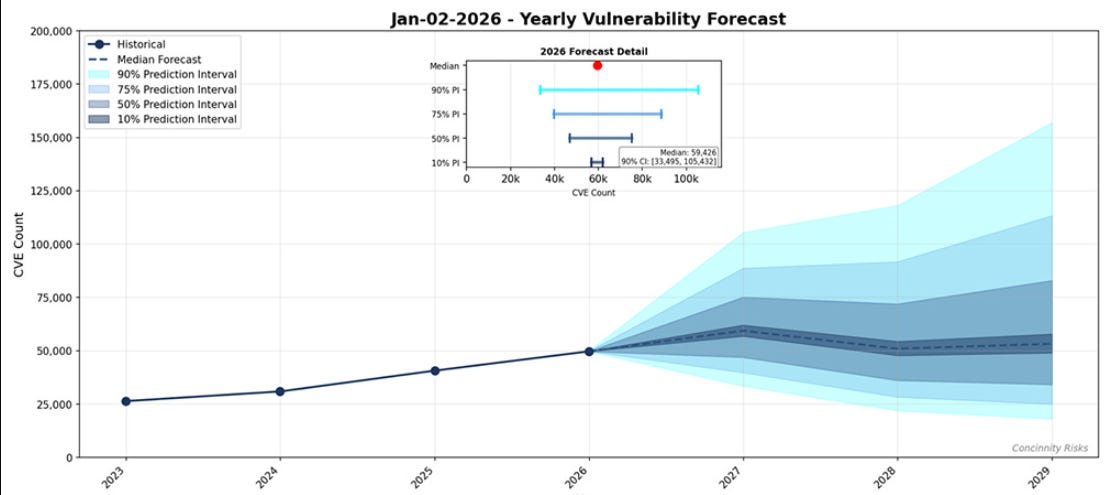

FIRST projects approximately 59,000 new CVEs this year. More code is being written faster than ever before, much of it by developers leveraging AI tools that prioritize velocity over security review, and the vulnerability density of AI-generated code remains elevated.

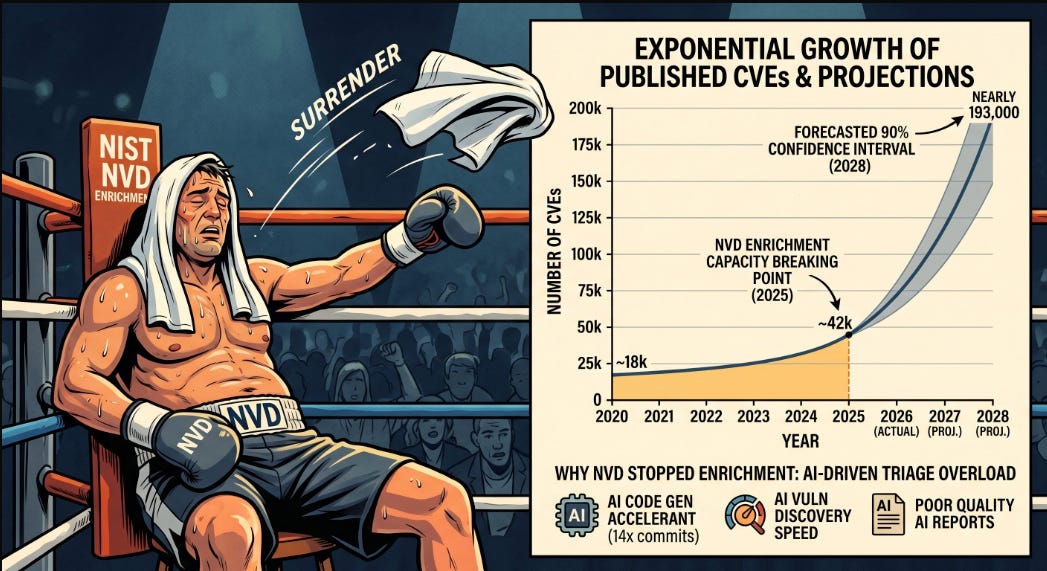

The infrastructure defenders depend on is collapsing under the weight. As I documented in The NVD Just Threw In The Towel, NIST reclassified approximately 29,000 backlogged CVEs into a “Not Scheduled” category and announced it would only prioritize CVEs in the CISA KEV catalog, CVEs affecting federal government software, and CVEs tied to critical software under EO 14028.
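For practitioners, the three prioritization criteria above can be read as a simple filter over a CVE backlog. The following is a minimal sketch, not NIST's actual logic: the `CveRecord` type, its field names, and the placeholder CVE IDs are all hypothetical, and a real program would populate these flags from its own asset inventory and the KEV feed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CveRecord:
    """Hypothetical record; field names are illustrative, not an NVD schema."""
    cve_id: str
    in_cisa_kev: bool = False
    affects_federal_software: bool = False
    is_eo14028_critical_software: bool = False


def nvd_would_prioritize(cve: CveRecord) -> bool:
    # A CVE qualifies if it meets any one of the three stated criteria.
    return (
        cve.in_cisa_kev
        or cve.affects_federal_software
        or cve.is_eo14028_critical_software
    )


def needs_alternative_enrichment(backlog: list[CveRecord]) -> list[CveRecord]:
    # Everything outside the three criteria is what a vulnerability
    # management team would have to enrich from other sources.
    return [cve for cve in backlog if not nvd_would_prioritize(cve)]


# Placeholder IDs, not real CVEs.
backlog = [
    CveRecord("CVE-0000-0001", in_cisa_kev=True),
    CveRecord("CVE-0000-0002"),
    CveRecord("CVE-0000-0003", is_eo14028_critical_software=True),
]
uncovered = [cve.cve_id for cve in needs_alternative_enrichment(backlog)]
print(uncovered)
```

The point of the sketch is the shape of the problem: anything that fails all three checks falls out of the prioritized queue, which is where most commercial and open source software lives.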

The remediation gap I’ve been writing about since Vulnpocalypse keeps widening, because AI is accelerating the discovery and exploitation side of the equation at exponential rates while the remediation side remains constrained by organizational complexity, legacy architecture, and human bottlenecks.

The incentive structure argument I keep returning to is critical here. Vendors ship insecure software because competitive pressure rewards features over hardening. Developers write insecure code because they’re incentivized to prioritize velocity and speed to market.

As I explored in Software’s Iron Triangle, you can pick two of cheap, fast, and secure, and the market consistently picks cheap and fast. This is the textbook definition of a market failure, where rational actors following their individual incentives produce a collectively harmful outcome that the market can’t self-correct. As Jim Dempsey argued on the Lawfare podcast, some degree of software liability and regulatory intervention is necessary precisely because the market has demonstrated it won’t solve this problem voluntarily.

I covered the broader trajectory in Software Liability, Safe Harbor, and the National Cyber Strategy, and the current administration’s moves on AI safety testing represent a continuation of that logic, even if they’d never frame it that way. When frontier models can autonomously discover and exploit vulnerabilities in critical software, and when that capability is proliferating to smaller and cheaper models, the argument that the market will self-regulate becomes untenable.

As I wrote in my piece on Trump’s National Cyber Strategy, the tension between deregulation and national security was always going to force a reckoning, and Mythos accelerated the timeline.

The EU Cautionary Tale

Here is where the analysis gets uncomfortable, because the case for regulation and the case against going too far are both backed by real-world evidence.

The European Union has spent the better part of a decade positioning itself as a regulatory superpower, building a compliance stack that now includes the GDPR, the Digital Services Act, the Digital Markets Act, the Cyber Resilience Act, and the EU AI Act, with enforcement provisions that can impose fines of up to 7% of global revenue. The AI Act alone carries compliance costs that exceed €50,000 per high-risk system, with organizations reporting a 40% increase in overall compliance burden.

EU AI-startup venture capital funding fell by roughly 15% in 2024 amid regulatory concerns. Small and mid-size European tech companies face annual revenue-at-risk of $109,000 to $375,000 per firm from regulatory-driven delays in accessing frontier AI tools.

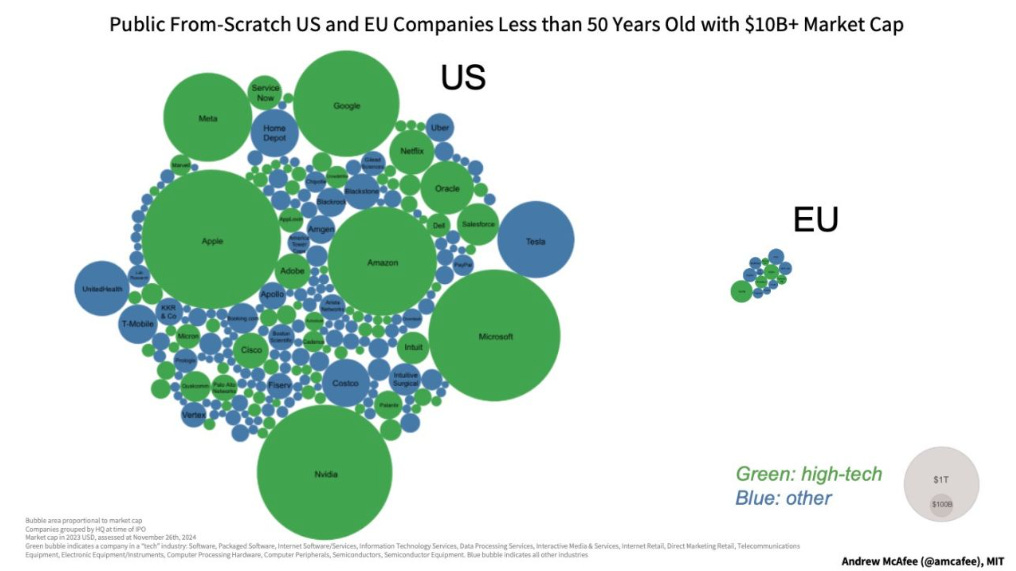

The macroeconomic picture tells the story even more clearly. The EU’s nominal GDP once nearly matched that of the United States, at roughly $16 trillion in 2014. By 2026, the IMF projects U.S. GDP at $31.8 trillion and EU GDP at $22.5 trillion, leaving the U.S. economy roughly 41% larger. American startups are 40% more likely to receive venture capital, and overall VC investment in the U.S. is roughly five times larger than in Europe. None of the world’s top 10 technology companies by market capitalization are European.
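The 41% figure depends on which economy is used as the baseline. A quick check, using only the two IMF projections quoted above (the function names are my own, for illustration), shows that it corresponds to measuring the gap relative to EU GDP:

```python
def gap_relative_to_smaller_pct(larger: float, smaller: float) -> float:
    # How much bigger the larger economy is, as a percentage of the smaller one.
    return (larger - smaller) / smaller * 100


def gap_relative_to_larger_pct(larger: float, smaller: float) -> float:
    # How far the smaller economy falls short, as a percentage of the larger one.
    return (larger - smaller) / larger * 100


us_gdp_trillions = 31.8  # IMF 2026 projection cited in the text
eu_gdp_trillions = 22.5  # IMF 2026 projection cited in the text

print(f"{gap_relative_to_smaller_pct(us_gdp_trillions, eu_gdp_trillions):.1f}%")  # prints 41.3%
print(f"{gap_relative_to_larger_pct(us_gdp_trillions, eu_gdp_trillions):.1f}%")   # prints 29.2%
```

Put differently, the U.S. economy is projected to be about 41% larger than the EU's, while the EU's is about 29% smaller than the U.S.'s. Both describe the same $9.3 trillion difference.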

The EU leads in automotive and some industrial technologies but lags significantly in software, AI, and the platform economies that are driving disproportionate economic growth globally.

I’m not arguing that regulation caused all of Europe’s economic underperformance. Demographics, energy costs, market fragmentation, and the absence of a unified capital market all contribute. But the regulatory approach has undeniably created friction that compounds those structural disadvantages.

When deploying an AI system comes with a 40% heavier compliance burden and your startup ecosystem is hemorrhaging VC funding because investors see regulatory risk as a drag on returns, you’ve created conditions where innovation migrates to jurisdictions with lighter regulatory footprints. The EU has effectively demonstrated what happens when the pursuit of being a regulatory superpower collides with the economic reality that the most regulated markets aren’t the most innovative ones.

As I noted in Compliance Does Equal Security, compliance and security are not the same thing, and a regulatory regime that optimizes for compliance can actually reduce security by diverting resources from effective controls to paperwork and audit theater. The risk with the U.S. pivot toward AI model testing is that the implementation slides from legitimate national security evaluation into the kind of compliance-heavy framework that has demonstrably slowed European innovation without proportionally improving outcomes.

The China Dimension

The geopolitical stakes make the policy calculus even harder. The administration’s own intelligence demonstrates that the AI competition with China isn’t theoretical. NSTM-4, issued by OSTP in April 2026, presents evidence that Chinese AI firms including DeepSeek, Moonshot AI, and MiniMax have been conducting industrial-scale adversarial distillation campaigns against American frontier models.

DeepSeek’s R1 model demonstrated reasoning capabilities comparable to OpenAI’s o1 at a reported training cost of $6 million, and the memo documents over 24,000 fraudulent accounts generating more than 16 million interactions with Claude to extract reasoning capabilities, chain-of-thought processes, and agentic behaviors.

China isn’t just stealing AI capabilities. It’s integrating them into military systems at a pace that the U.S. intelligence community is watching carefully. China has developed autonomous drone swarms coordinating attacks without human input, integrated neural networks with hypersonic glide vehicles for automatic target recognition and precision guidance, and has refused to discuss international bans on AI-controlled weapons systems including nuclear command and control applications. The competitive dynamic is not abstract.

Every month the U.S. spends debating regulatory frameworks is a month China spends deploying.

This is the strategic tension that makes the regulation debate so much more than a policy dispute between safety advocates and innovation advocates. If the U.S. imposes pre-release testing requirements that add months to the deployment timeline for frontier models, the commercial and military advantage of developing those models domestically erodes.

If the U.S. doesn’t impose any controls and a frontier model enables a catastrophic cyber attack or the proliferation of offensive capabilities to adversarial actors, the national security consequences are severe. The fact that Chinese labs are already replicating frontier-level capabilities through distillation means that withholding models from the U.S. market doesn’t keep the capabilities out of adversarial hands. It just ensures the U.S. pays the regulatory cost without capturing the security benefit.

The Innovation-Security Equilibrium

The honest assessment is that there is no clean answer here, and anyone who tells you otherwise is selling something or lacks a strong understanding of the complexities of these issues and their intersections.

The risks from AI to cybersecurity are real and documented. Frontier models can discover and exploit vulnerabilities autonomously, compress attack timelines from weeks to minutes, and execute multi-stage kill chains without human intervention. Open-weights models costing fractions of a cent per query can replicate significant portions of those capabilities.

The remediation infrastructure that defenders depend on, from the NVD to enterprise vulnerability management programs, was built for a world where threats moved at human speed and is visibly buckling under the pressure of AI-accelerated discovery and exploitation. The market failure in software security, where competitive incentives consistently produce insecure products, means voluntary self-regulation has not and will not close these gaps.

The risks from overregulation are also real and documented. The EU has spent a decade building the most comprehensive technology regulatory apparatus in the world, and the result is a 41% GDP gap with the United States, a venture capital ecosystem roughly one-fifth the size of America’s, and a technology sector that leads in none of the platform categories driving global economic growth.

Every major frontier AI model in production today was developed in the United States or China, not in the EU. Regulation-as-revenue, where fines measured in percentages of global turnover become a fiscal instrument rather than a behavioral correction mechanism, creates its own perverse incentives.

The U.S. approach that appears to be emerging through CAISI is more targeted than the EU model, focused specifically on national security evaluation of frontier capabilities rather than comprehensive compliance frameworks governing all AI applications. The pre-deployment testing agreements with Google DeepMind, Microsoft, and xAI are scoped to cyber, biological, and chemical risks, with classified environments available for sensitive evaluations. This is meaningfully different from the EU AI Act’s broad regulatory apparatus that applies to every “high-risk” AI system regardless of whether it has national security implications.

Whether this targeted approach can be maintained as political pressure builds is the open question. The pattern in regulatory history is clear. Targeted interventions tend to expand. The FDA analogy that Hassett used is instructive precisely because the FDA’s regulatory scope has grown enormously over its history, often in ways that add cost and delay without proportional safety benefit.

If CAISI’s pre-deployment testing evolves from a focused national security evaluation into a broad compliance gateway that adds months to every model release, the U.S. will find itself facing the same innovation-regulation tradeoff that has constrained Europe.

What the Practitioner Needs to Know

For security leaders watching this policy evolution, the practical implications matter more than the political dynamics. Regardless of where the regulatory pendulum settles, the capability curve isn’t waiting for policy consensus.

The structural reality is that AI-accelerated offense is here, it’s proliferating across model sizes and price points, and the defensive infrastructure the industry has relied on for decades is struggling to keep pace. The NVD’s effective capitulation means vulnerability management programs need alternative enrichment sources. The remediation gap means prioritization becomes existential rather than aspirational. The attack surface exponential means traditional AppSec and DevSecOps processes that already struggled to keep up with human-speed development velocity are fundamentally outmatched against AI-accelerated code generation.

Pre-deployment model testing by CAISI addresses one slice of this problem, the frontier-model-as-weapon scenario. But the jagged frontier research from AISLE demonstrates that the offensive capability isn’t concentrated in frontier models. It’s distributed across model scales, and the orchestration systems and harnesses that turn models into effective cyber tools are the real multiplier, not the raw model weights.

Regulating model releases, even if done well, doesn’t address the broader structural challenge of an industry that produces more code, more vulnerabilities, and more attack surface than it can secure.

The tension between innovation and security isn’t going to be resolved by policy. It’s going to be managed, imperfectly, through a combination of targeted regulatory intervention, market-driven adoption of AI-augmented defense, and the slow evolution of incentive structures that currently reward shipping fast over shipping secure. As I’ve argued consistently across pieces like Vulnpocalypse and The AI Cyber Capability Curve, the organizations that invest in AI-augmented defensive capabilities with the same urgency that adversaries bring to offense will be better positioned regardless of what the regulatory framework looks like.

The administration’s pivot from “Build Baby Build” to pre-release safety testing is a signal that the national security establishment has internalized what the cybersecurity community has been watching play out in real time.

The capability curve doesn’t care about regulatory philosophy. It doesn’t slow down for executive orders or compliance frameworks. The question isn’t whether to regulate or not to regulate. It’s whether the approach the U.S. lands on preserves enough innovation velocity to stay ahead of China while introducing enough oversight to prevent the worst outcomes of uncontrolled frontier model deployment.

Getting that balance right matters more than almost any other technology policy decision the current administration will make, and the margin for error in either direction is thinner than most policymakers realize.

"The White House wants to avoid the fallout if an AI-enabled cyberattack occurs and is also evaluating whether frontier models could yield offensive cyber capabilities useful to the Pentagon and intelligence agencies."

That sentence hits the nail on the head about the real problems.

Someone told Trump he'd get blamed for something if something happened.

Trump hates that.

OTOH, Palantir - among others - undoubtedly told him it'd be great if the US could expand its drive for world hegemony using AI.

Trump loves that idea.

"Software security has been a market failure for decades,"

No.

Software PRODUCTION has been a market failure since its inception.

As I've said before, what is necessary is a total revision of how software is produced, resulting in provably correct software which only does what it is supposed to do, down to the smallest function, and nothing more. Not to mention, hardware needs to follow.

Granted, this will NOT solve the overall security problem - because THAT problem is LITERALLY unsolvable.

But having reasonably provably correct software would be a massive improvement over the shit we're producing now.

As for "regulation" being able to solve anything, this is so laughable I won't even bother - especially when the regulation is being done by one state instead of an international consortium of people who actually know what they're doing.

Anyone who thinks anyone in the Trump administration - or the people behind them - has a clue what they're doing has no clue themselves. See: Iran war. See: RFK Jr. See: DOGE.

And this brings us to the bullshit anti-China nonsense about China AI results being solely based on "stolen US tech" - the same nonsense US corporations and the US state have been screaming about for decades in every industry against every Asian country from Japan in the '80s to China now.

It's entirely a demographic issue. China has three times the population of the US, has an equally - or probably better - educated population, and is pouring money into technology development for the benefit of the nation - rather than seeking $1.5 trillion a year for war fighting capability to achieve world hegemony. As a result, China's real production economy is vastly bigger than the US's and its rapidly improving technology is home-grown - or at worst benefits from the general sharing of tech which should be the goal of every nation.

Go talk to some US ex-pats living in China and get a different perspective. Stop relying on normal human racism and US Deep State propaganda.

The US bleats this anti-Chinese propaganda because it can't compete due to its own poor educational system, its poor government priorities, its poor social organization, and its poor geopolitical priorities.

Not to mention that the ENTIRE idiotic notion of "staying ahead of China" represents a basically racist, zero-sum philosophy which is precisely why the US is at war with two countries at the moment and striving to start a third one with China.

Not to mention that it can NOT succeed given the demographics I noted above.

"Getting that balance right matters more than almost any other technology policy decision the current administration will make..."

Not even close. The US and the entire world are currently guaranteed a global recession this summer and fall and almost certainly a global Depression by end of the year, according to economists, as a result of the global supply chain shock from the closure of the Strait of Hormuz caused by this administration's idiotic - and entirely illegal by any normative measure - war with Iran.

This is the result of the decisions made by this administration - and the moneyed interests behind them. See: Miriam Adelson.

It is likely to result in a major halt to AI data center building - building costs are already being reported as increasing significantly as a direct result of current events. The further impacts on all industries - including software and AI - are no doubt being evaluated by corporations as I write this. And as most economists have said, we're not even feeling the start of the impact of this war as of this month.

The number one decision the US needs to make RIGHT NOW is to walk away from this war as fast as possible, regardless of whether the idiot (allegedly) in charge can take a loss to his ego or not.

Great piece, as always. Thank you!