Vulnerability Management in the Age of AI-Accelerated Everything

I co-authored Effective Vulnerability Management in 2024 because I believed the industry’s approach to vulnerability management was fundamentally misaligned with how software was actually being built, deployed, and attacked.

Two years later, every structural pressure I identified in that book has intensified by an order of magnitude, and several new ones have emerged that I didn’t fully anticipate.

The Cloud Security Alliance, in collaboration with SANS, [un]prompted, and the OWASP Gen AI Security Project, just published “The AI Vulnerability Storm: Building a Mythos-ready Security Program”, and it’s one of the more comprehensive practitioner-oriented publications out there on what AI-accelerated offense means for vulnerability management programs. It deserves a close read from every CISO and security leader who is still running a vulnerability management program designed for a world that no longer exists.

I delivered a talk at Cloud Security Alliance’s Agentic AI Security Summit last week titled “The Vulnpocalypse Is Here - Now What?”, which is below and in line with this blog, so check it out:

The Structural Problem

The CSA paper frames the current moment as an “AI vulnerability storm,” and while the branding might sound dramatic, the underlying analysis is grounded in data I’ve been tracking across multiple articles this year.

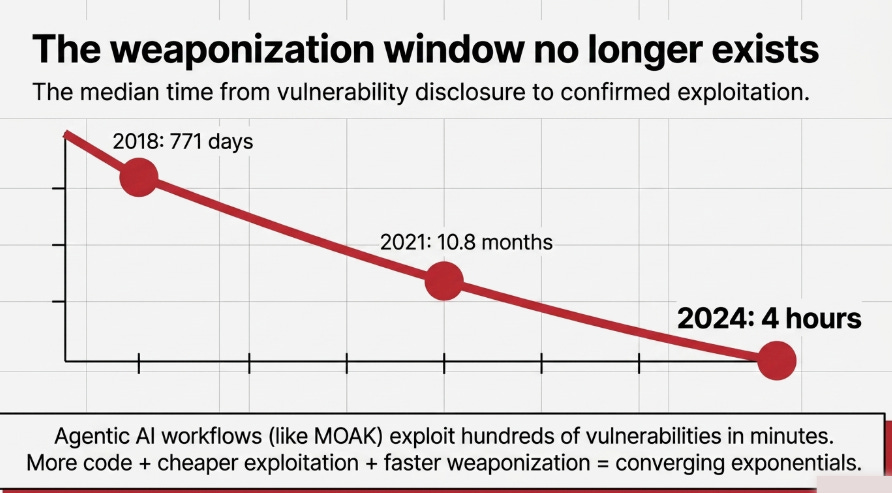

The core thesis is that AI has created a structural asymmetry between offense and defense that existing vulnerability management programs were never designed to handle. AI lowers the cost and skill floor for discovering and exploiting vulnerabilities faster than organizations can patch them, and the window between discovery and weaponization has collapsed from years to hours.

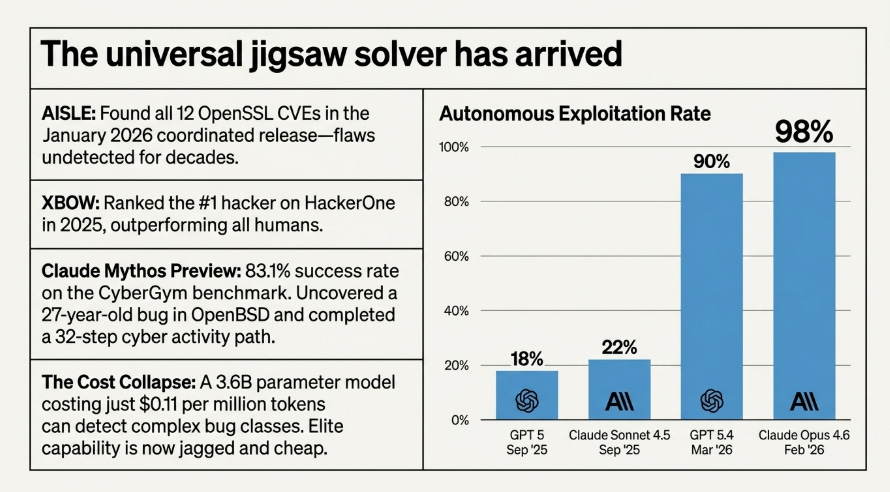

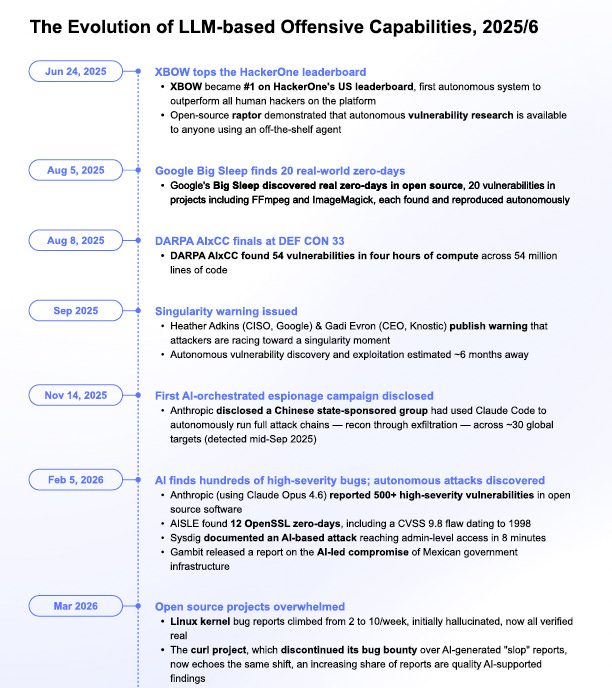

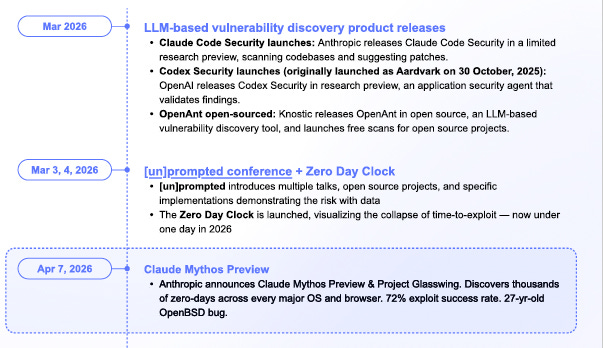

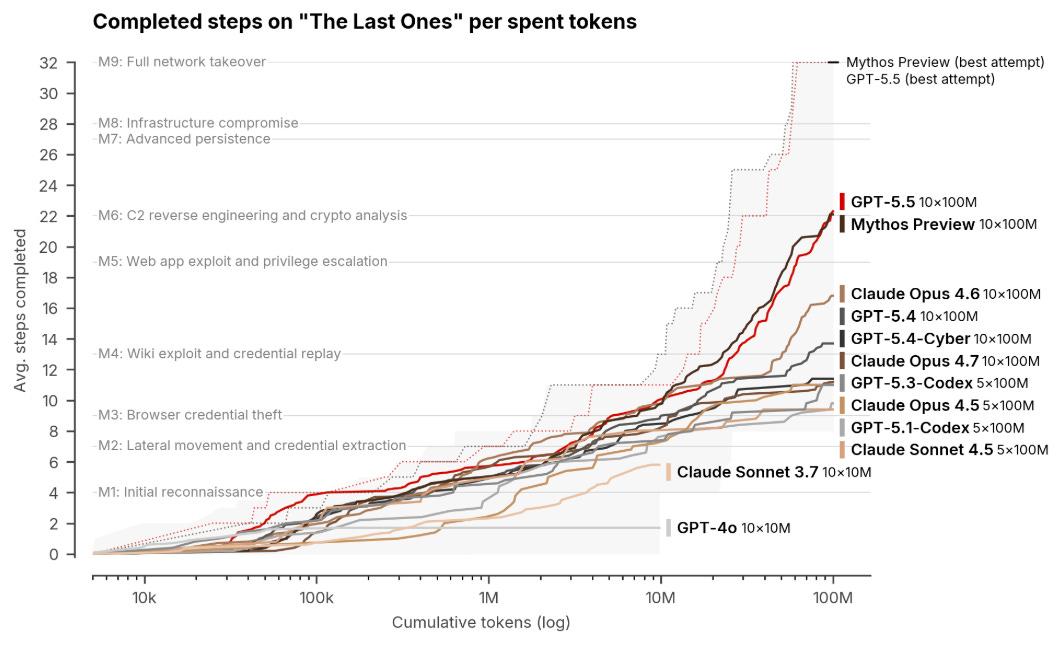

The CSA paper walks through the evolution of LLM-based Offensive Cyber Capabilities from 2025-2026:

As I covered in The AI Cyber Capability Curve, we’re watching this play out across multiple frontier models simultaneously.

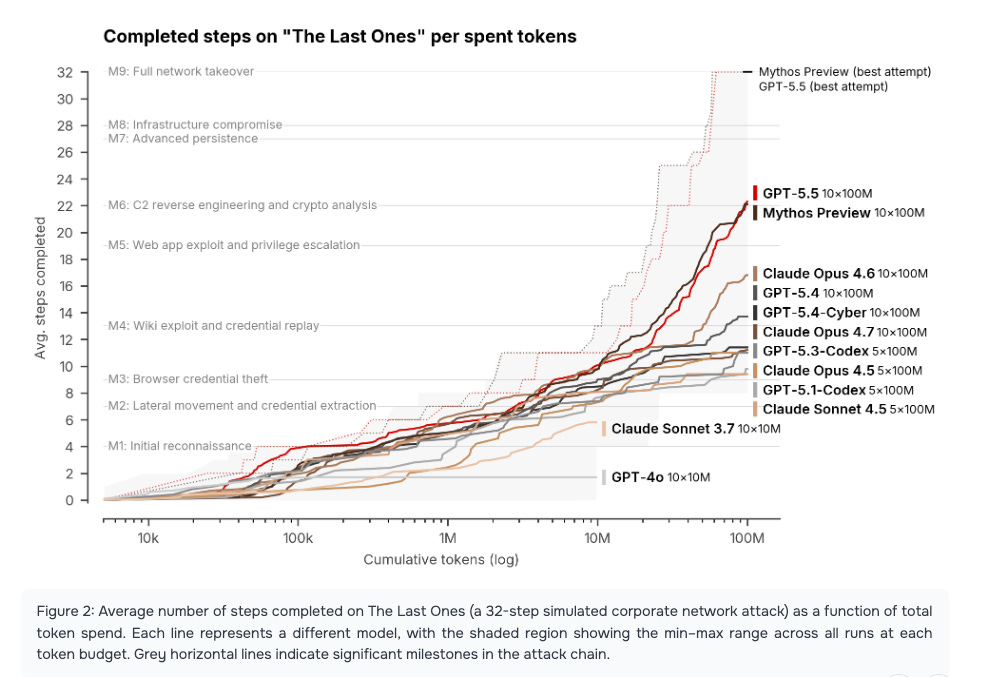

The UK AI Security Institute (AISI) evaluated both Anthropic’s Claude Mythos Preview and OpenAI’s GPT-5.5 and found that both models can autonomously execute multi-stage attack chains spanning the full kill chain, from reconnaissance through lateral movement to objective completion. Mythos generated 181 working exploits on Firefox where Claude Opus 4.6 succeeded only twice under the same conditions. GPT-5.5 scored 90.5% on expert-level cyber tasks on a pass basis. Two different models from two different labs, both independently demonstrating offensive capabilities that would have seemed theoretical two years ago.

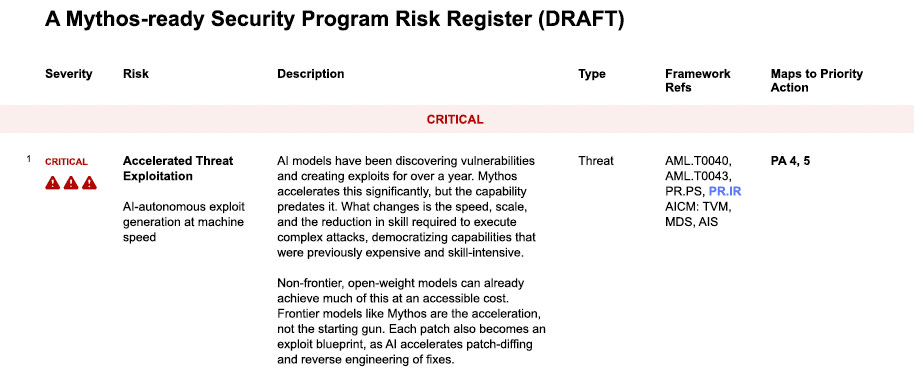

The CSA paper’s risk register captures this in structured form, with “Accelerated Threat Exploitation” rated as the top critical risk. Non-frontier, open-weight models can already achieve much of this at accessible cost, as I have discussed using examples such as AISLE. As the paper notes, frontier models like Mythos are the acceleration, not the starting gun. Each patch becomes an exploit blueprint, as AI accelerates patch-diffing and reverse engineering of fixes. This is the reality that vulnerability management programs need to internalize.

More Code, Fewer Guardrails

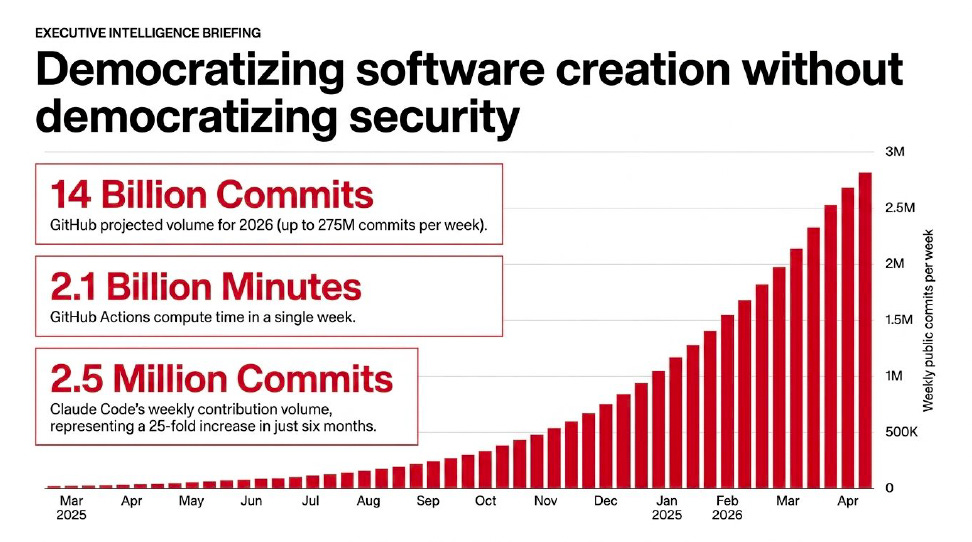

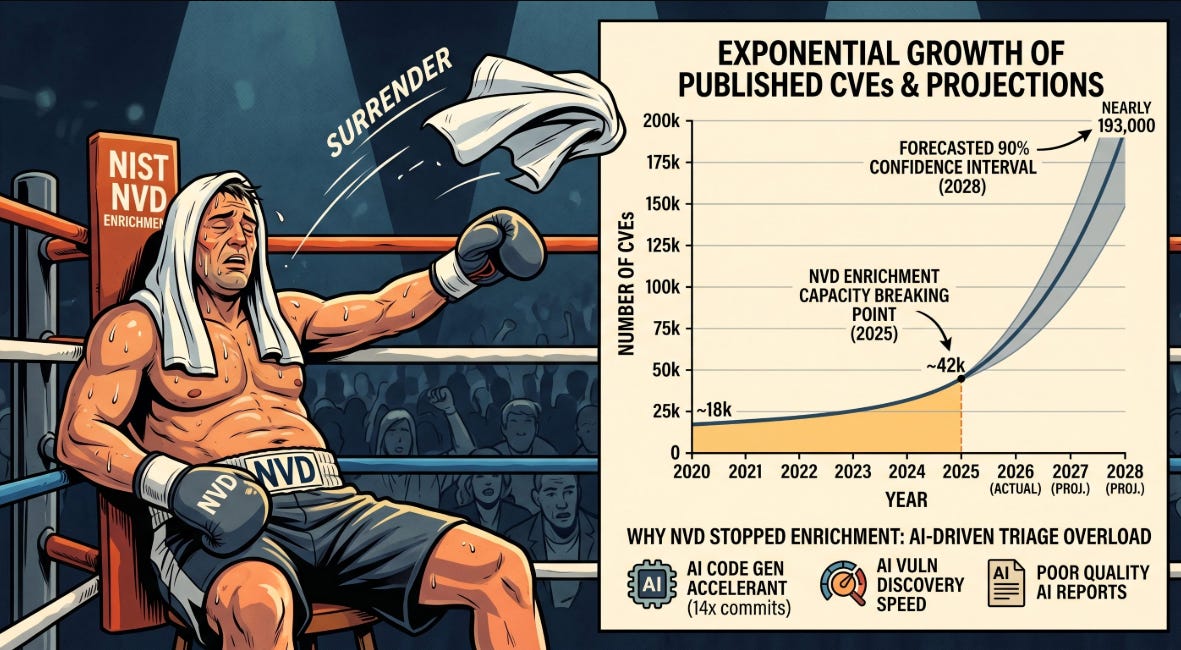

The offensive capability acceleration hits an environment where the attack surface is expanding at rates that dwarf historical precedent. As I wrote in The Attack Surface Exponential, GitHub crossed 1 billion commits in 2025 and is now on pace for 14 billion in 2026, a 14x year-over-year increase driven almost entirely by AI coding agents.

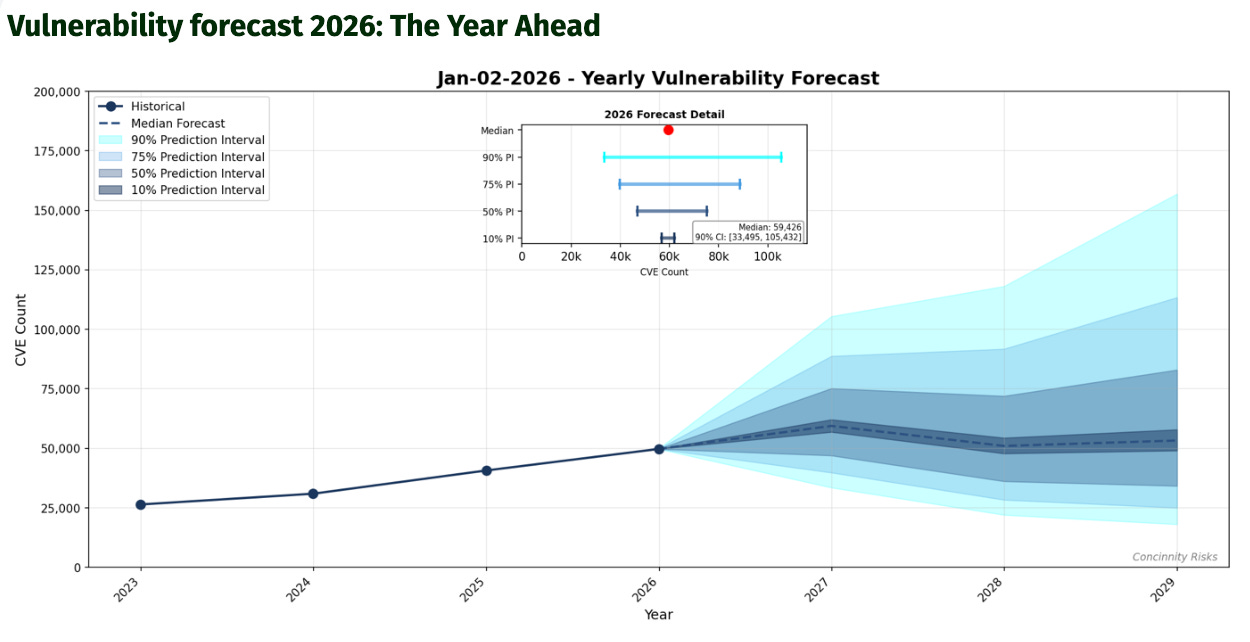

FIRST projects approximately 59,000 new CVEs this year, the first time the industry will cross 50,000 in a single calendar year. More code is being written faster than ever before, much of it by developers leveraging AI tools that prioritize velocity over security review.

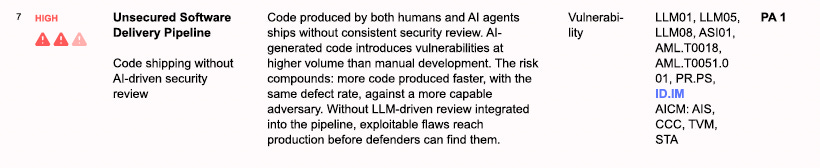

The CSA paper rates “Unsecured Software Delivery Pipeline” as a high-severity risk, noting that code produced by both humans and AI agents ships without consistent security review.

AI-generated code introduces vulnerabilities at higher volume than manual development, and the risk compounds because more code is being produced faster, with the same defect rate, against a more capable adversary. Without LLM-driven review integrated into the pipeline, exploitable flaws reach production before defenders can find them.

This is the same dynamic I explored in Vulnpocalypse, where I laid out the math behind why the remediation gap keeps widening. Organizations are already carrying vulnerability backlogs numbering in the hundreds of thousands, with remediation rates sitting at roughly 10% per month and average patch deployment times measured in weeks. The CSA paper puts it bluntly: quarterly pen tests and reactive patching cycles cannot keep pace with continuous AI-driven discovery. Existing CVE/NVD infrastructure and patch prioritization workflows were built for dozens of critical CVEs per month, not hundreds.
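To make that math concrete, here is a minimal sketch of the backlog dynamic. It assumes a deliberately simple model: each month a fixed fraction of the open backlog is remediated, then a fixed number of new findings arrives. The 10% rate comes from the paragraph above; the intake figures and the function names `simulate_backlog` and `steady_state` are illustrative assumptions, not numbers or methods from the CSA paper.

```python
def simulate_backlog(initial_backlog, monthly_new, monthly_fix_rate, months):
    """Return the open backlog at the end of each month.

    Simplified model (an assumption): remediate a fixed fraction of the
    open backlog, then add that month's new findings.
    """
    backlog = float(initial_backlog)
    history = []
    for _ in range(months):
        backlog -= backlog * monthly_fix_rate  # remediation this month
        backlog += monthly_new                 # new findings this month
        history.append(round(backlog))
    return history


def steady_state(monthly_new, monthly_fix_rate):
    """Backlog level at which monthly fixes exactly equal monthly intake."""
    return monthly_new / monthly_fix_rate


# Illustrative inputs only: a 200,000-item backlog and a 10% monthly fix rate.
stable = simulate_backlog(200_000, 20_000, 0.10, months=12)
doubled = simulate_backlog(200_000, 40_000, 0.10, months=12)

print("intake 20k/month:", stable[-1])
print("intake 40k/month:", doubled[-1])
print("long-run level at 40k/month:", round(steady_state(40_000, 0.10)))
```

Under this model, a 10% monthly fix rate means the backlog settles at roughly ten times the monthly intake. If AI-driven discovery doubles the intake and the fix rate stays flat, the long-run backlog doubles with it.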

The Infrastructure Is Collapsing

The CSA paper identifies “Continuous Vulnerability Management Maturity Gap” and “Threat Detection Dependent on Lagging Intelligence” as high-severity risks, and both connect directly to the infrastructure collapse I’ve been documenting. As I covered in The NVD Just Threw In The Towel, NIST moved approximately 29,000 backlogged CVEs into a “Not Scheduled” category, clearing the visible backlog by reclassifying the problem rather than solving it. Going forward, the NVD will only prioritize CVEs in the CISA KEV catalog, CVEs affecting federal government software, and CVEs tied to critical software under Executive Order 14028.

The CSA paper reaches the same conclusion from a different angle. The CVE system itself may not scale to AI-generated discovery rates, and novel vulnerabilities have no listing in KEV by definition. For organizations that built their entire vulnerability management workflow around the assumption that the NVD would provide timely, comprehensive enrichment data, that assumption no longer holds, and this is happening at the exact moment when the volume of vulnerabilities being discovered is accelerating beyond anything the system was designed to handle.
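One way to picture a workflow that no longer assumes timely NVD enrichment is a triage score that leans on data you control, such as exposure and asset criticality, and treats a missing severity score as unknown rather than zero. The sketch below is a hypothetical illustration: the `Finding` fields, the weights, and the default for missing CVSS are all assumptions, and the identifiers are placeholders rather than real CVEs.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Finding:
    vuln_id: str
    in_kev: bool              # listed in the CISA KEV catalog
    cvss: Optional[float]     # None when no enrichment is available yet
    internet_exposed: bool    # from your own asset inventory
    asset_criticality: int    # 1 (low) to 3 (high), from your own inventory


# Assumption: an unenriched finding is scored as if it were fairly severe,
# so missing data never pushes a finding to the bottom of the queue.
DEFAULT_CVSS_WHEN_MISSING = 7.0


def triage_score(f: Finding) -> float:
    """Illustrative weighted score; higher means handle sooner."""
    score = 0.0
    if f.in_kev:
        score += 5.0
    cvss = f.cvss if f.cvss is not None else DEFAULT_CVSS_WHEN_MISSING
    score += cvss / 2.0
    if f.internet_exposed:
        score += 2.0
    score += f.asset_criticality
    return score


findings = [
    Finding("EXAMPLE-A", in_kev=False, cvss=None, internet_exposed=True, asset_criticality=3),
    Finding("EXAMPLE-B", in_kev=False, cvss=9.8, internet_exposed=False, asset_criticality=1),
    Finding("EXAMPLE-C", in_kev=True, cvss=5.0, internet_exposed=False, asset_criticality=2),
]

for f in sorted(findings, key=triage_score, reverse=True):
    print(f.vuln_id, triage_score(f))
```

Here the unenriched finding on an exposed, critical asset ranks above the high-CVSS finding on an internal, low-criticality one, which is the kind of outcome a purely NVD-driven queue cannot produce.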

Linux kernel bug reports climbed from 2 to 10 per week, initially dismissed as hallucinations but now increasingly verified as real findings. In their blog “the zero-days are numbered”, Mozilla reported that Mythos discovered 271 vulnerabilities in Firefox, though only 3 warranted CVEs, which shows the good that can be done using frontier models to harden critical software.

The curl project, which had previously discontinued its bug bounty over AI-generated “slop” reports, now acknowledges that an increasing share of submissions are quality AI-supported findings. The infrastructure that defenders depend on for triage, prioritization, and enrichment is buckling under pressure from both directions: more AI-discovered vulnerabilities coming in and less institutional capacity to process them.

What “AI-Ready” Actually Means

This is where the CSA paper moves from diagnosis to prescription, and I think their framing is well done because it avoids the trap of either dismissing the fundamentals or pretending that AI changes everything overnight.

The paper’s concept of a “Mythos-ready Security Program” is built around what they call “minimum viable resilience,” which means upgrading measurements to a higher maturity level on key metrics such as cost of exploitation, early detection of compromise, and blast radius containment. The framing acknowledges that Mythos is only the first wave, and any program organizations build must prepare them not just to return to equilibrium but to maintain balance for the waves ahead.

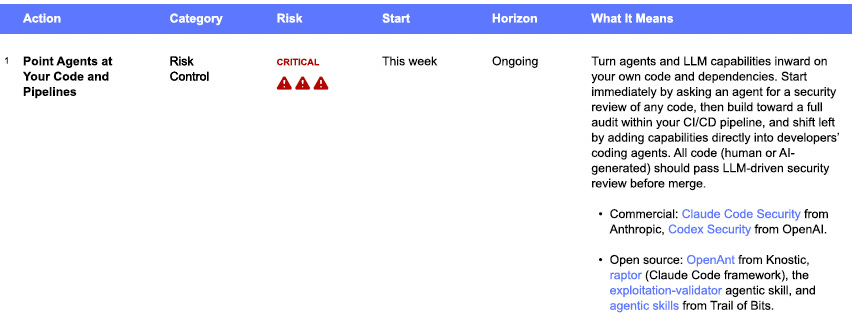

Their risk register identifies 13 distinct risks across four severity levels, with 5 rated critical and 8 rated high. What I find most useful is the priority action timetable, which assigns aggressive timelines that start with “this week” for the most critical actions.

The first priority action, “Point Agents at Your Code and Pipelines,” calls for immediately turning agents inward on your own code and dependencies, starting with an LLM-driven security review and building toward a continuous VulnOps capability. Mozilla’s use of Mythos is a great example of doing just this. All code, whether human or AI-generated, should pass LLM-driven security review before merge.
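As a rough sketch of what a “review before merge” gate could look like, the snippet below blocks a merge when a reviewer reports high or critical findings. The reviewer is passed in as a plain function so no particular model or vendor API is implied. `ReviewFinding`, `merge_allowed`, and the stub reviewer are hypothetical names for illustration, and the blocking threshold is an assumption rather than something the paper specifies.

```python
from typing import Callable, Iterable, List, NamedTuple, Tuple


class ReviewFinding(NamedTuple):
    file: str
    severity: str   # "low", "medium", "high", or "critical"
    summary: str


# Assumption: only high and critical findings block a merge.
BLOCKING_SEVERITIES = {"high", "critical"}


def merge_allowed(
    diff: str,
    reviewer: Callable[[str], Iterable[ReviewFinding]],
) -> Tuple[bool, List[ReviewFinding]]:
    """Run the reviewer on a diff and return (allowed, blocking_findings)."""
    findings = list(reviewer(diff))
    blocking = [f for f in findings if f.severity in BLOCKING_SEVERITIES]
    return (not blocking, blocking)


def stub_reviewer(diff: str) -> List[ReviewFinding]:
    """Stand-in for an LLM-driven review; a real one would call your model."""
    if "eval(" in diff:
        return [ReviewFinding("app.py", "high", "eval() on untrusted input")]
    return []


allowed, blocking = merge_allowed("+ result = eval(user_input)", stub_reviewer)
print(allowed, [f.summary for f in blocking])
```

Keeping the reviewer behind a function boundary makes it straightforward to swap models or to run the same gate over human-written and AI-generated changes alike.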

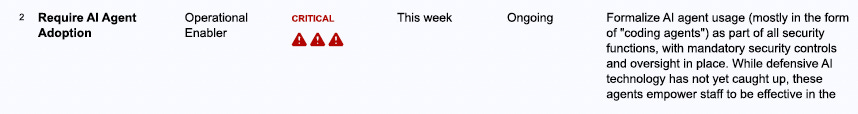

The second critical action, “Require AI Agent Adoption,” is where the paper makes its strongest and most pragmatic argument. The reality is that offensive AI technology can be repurposed for defensive purposes, and coding agents are useful across the board from GRC to incident response, far beyond their original purpose with code.

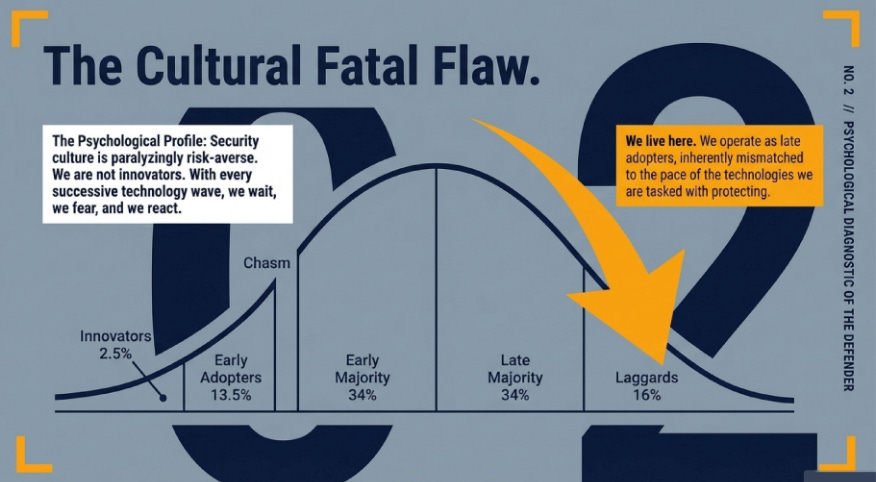

This kind of early-adopter, innovator cultural shift in how cybersecurity relates to AI is exactly what I advocated for a couple of weeks ago at the SANS AI Security Summit in D.C. There I argued that cybersecurity has historically been a late adopter and laggard with emerging technologies, unlike the business and adversaries alike, and that this perpetuates the bolted-on rather than built-in nature of cyber. With AI, we have an opportunity to flip that paradigm, but it requires leaning in early on AI for cybersecurity. Failing to be an early adopter and innovator with each technology wave is what I argued was our cultural fatal flaw:

The resulting asymmetry isn’t just technological but cultural. Teams that don’t adopt AI coding agents cannot match the speed or scale of AI-augmented threats, regardless of their technical skill. The paper argues that optional adoption programs have not been shown to overcome cultural barriers, and that adoption needs to be required rather than encouraged.

The Fundamentals Still Matter

Here is where my perspective as someone who wrote a book on the fundamentals of effective vulnerability management converges with the CSA paper’s recommendations. The paper explicitly calls out “Increase focus on the basics” as a key CISO takeaway, noting that segmentation, patching known vulnerabilities, identity and access management, and defense-in-depth/breadth all increase the difficulty for attackers. To lower latent risk, expanding these efforts while there is time is prudent.

This isn’t a contradiction with the AI-forward recommendations. It’s a recognition that the two approaches are complementary and interdependent. AI-accelerated discovery doesn’t make patching less important. It makes patching more important while simultaneously making it harder, which is why the paper also recommends automating remediation capabilities and preparing triage and deployment capacity to handle a potential flood of patches as new critical vulnerabilities are disclosed through programs like Project Glasswing.

The fundamentals I laid out in Effective Vulnerability Management, asset inventory, risk-based prioritization, stakeholder alignment, and measured remediation cadences, still form the foundation. What’s changed is that the tempo at which those fundamentals need to execute has accelerated beyond what human-speed processes can sustain without AI augmentation.

Where this gets challenging is that most organizations already struggled with doing the fundamentals in the pre-AI era, so doing them at the scope, scale and velocity AI-driven vulnerability management demands will be a major hurdle and impossible for those who don’t adopt AI to help.

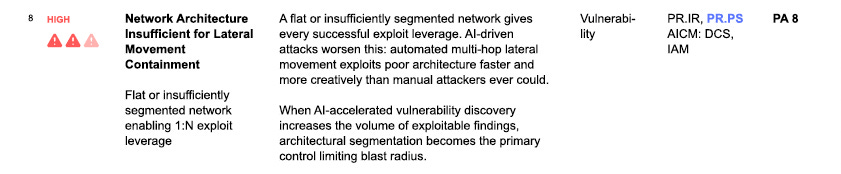

The paper’s risk #8, “Network Architecture Insufficient for Lateral Movement Containment,” reinforces this point. A flat or insufficiently segmented network gives every successful exploit leverage that AI-driven attacks will exploit faster and more creatively than manual attacks ever could. When AI-accelerated vulnerability discovery increases the volume of exploitable findings, architectural segmentation becomes the primary control limiting blast radius.
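The blast radius argument can be illustrated with a simple reachability check: treat the network as a graph of allowed connections and count how many hosts an attacker can reach from one compromised machine. This is a toy sketch under stated assumptions. The host names and the allowed-connection maps are made up, and real environments would derive them from firewall rules or flow data.

```python
from collections import deque


def blast_radius(allowed, start):
    """Return the hosts reachable from `start` via allowed connections (BFS)."""
    seen = {start}
    queue = deque([start])
    while queue:
        host = queue.popleft()
        for nxt in allowed.get(host, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen - {start}


hosts = ["web", "app", "db", "hr-laptop", "build-server"]

# Flat network: every host may connect to every other host.
flat = {h: [o for o in hosts if o != h] for h in hosts}

# Segmented network: only the web -> app -> db path is allowed.
segmented = {"web": ["app"], "app": ["db"]}

print("flat, from hr-laptop:", sorted(blast_radius(flat, "hr-laptop")))
print("segmented, from hr-laptop:", sorted(blast_radius(segmented, "hr-laptop")))
print("segmented, from web:", sorted(blast_radius(segmented, "web")))
```

In the flat layout a single compromised laptop can reach every other host. In the segmented layout the same foothold reaches nothing, and even a compromised web server is confined to the one path it legitimately needs.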

A perfect example of AI’s exponential improvements on this front comes from the UK AISI’s evaluations of Mythos and GPT-5.5. They didn’t just look at the models’ ability to find isolated bugs in codebases. Their lab, “The Last Ones”, is a 32-step full kill chain scenario, and both models were able to complete it end-to-end in some attempts, something no other models had done before.

This means fixing isolated bugs via patching isn’t enough. You need a zero trust architecture, with defense in depth and the ability to limit blast radius, to mitigate AI-driven attack risks.

That’s not an AI-specific recommendation. It’s a networking fundamental that becomes orders of magnitude more important when the threat moves at machine speed.

The Liability Dimension

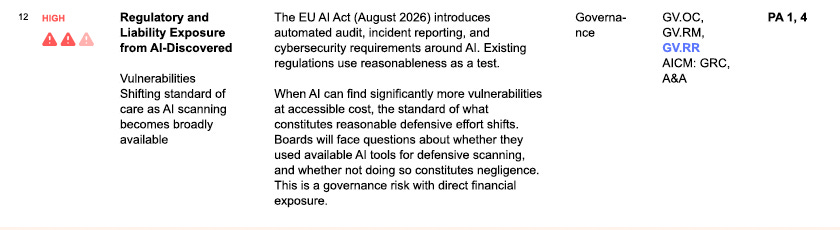

One of the more consequential risks in the CSA paper’s register is #12, “Regulatory and Liability Exposure from AI-Discovered Vulnerabilities.” The argument is straightforward: when AI can find significantly more vulnerabilities at accessible cost, the standard of what constitutes reasonable defensive effort shifts.

Boards will face questions about whether they used available AI tools for defensive scanning, and whether not doing so constitutes negligence. The EU AI Act, arriving in August 2026, introduces automated audit, incident reporting, and cybersecurity requirements that will formalize this expectation.

This connects to the broader argument I’ve been making about software liability and the incentive structures that drive security behavior. If the standard of care shifts to include AI-augmented vulnerability discovery and remediation, organizations that don’t adopt these capabilities aren’t just accepting risk.

They’re potentially accepting legal exposure. The CSA paper positions this as a governance risk with direct financial exposure, and I think they’re right, particularly as insurance underwriters begin incorporating AI-readiness into their risk models.

The Human Cost

The CSA paper addresses something that most frameworks and technical guidance documents ignore entirely: the human cost of this transition. Security teams are caught in a vice where AI is simultaneously accelerating the frequency of vulnerability reports they must respond to, the volume of code their organizations are shipping, and the expansion of the attack surface. Beyond a workforce already at capacity that is absorbing exponential increases in workload, staff are operating with increased uncertainty about their own roles and relevance.

“Burnout and attrition in security functions represent a direct operational risk”

The paper treats burnout and attrition as direct operational risks rather than HR concerns, noting that the expertise needed to navigate this transition is scarce, takes years to develop, and is not replaceable on short timescales.

This is the human dimension of the structural problem I’ve been writing about. The industry is asking security teams to do more, faster, against a more capable adversary, with the same headcount, while simultaneously learning to integrate AI into their own workflows.

The CSA paper’s recommendation to treat security team resilience, including sustainable workload, mental health support, and retention, as a strategic priority with the same urgency as the technical challenges is a necessary corrective to guidance that treats practitioners as interchangeable inputs to a process.

What This Requires

The CSA paper’s subtitle asks organizations to build a “Mythos-ready” security program, but the more honest framing is that organizations need to build vulnerability management programs that are ready for a world where the pace of discovery, exploitation, and attack never stops accelerating. Mythos was the moment the industry couldn’t ignore the trend, but the trend predates Mythos and will outlast it as the next generation of models arrives.

Each successive model will get better at vulnerability discovery, exploitation, multi-step kill chain execution, and overall offensive capabilities.

What the CSA paper, the data I’ve laid out in The AI Cyber Capability Curve, and the infrastructure collapse documented in the NVD’s capitulation all point to is a necessary evolution in how vulnerability management operates. Not an abandonment of the fundamentals, but a recognition that executing those fundamentals at human speed against machine-speed threats is no longer viable.

The organizations that emerge in a stronger position from this transition will be the ones that integrate AI into their defensive workflows with the same urgency that attackers have already integrated it into their offensive ones, while simultaneously doubling down on the architectural and operational basics that determine how much damage any single vulnerability can actually cause.

If the cost of responding to AI threats is so much greater than the cost of conducting them, at what point can attackers cost-effectively overwhelm even a mature cybersecurity team, effectively causing a Denial of Service?

We are in a bind on burnout and AI upskilling. The situation: 29% of teams do not have the budget to hire enough people. Even if they do have the budget, 30% cannot find people with the needed skills, with AI being the #1 security team skill needed at 42%. And only ¼ of them invest in upskilling, so how are the underfunded teams going to invest in upskilling for AI skills?

I am waiting on organizations to get their legal team underwater with the EU AI Act. Then cue all your incoming third-party questionnaires you don't have answers to because your insecure product is a liability to them. I think the industry history shows new threats and regulations create headline costs to organizations so they get scared straight to invest.

Data sourced from the ISC2 Cybersecurity Workforce Study 2025.

Chris one thing I really like about your posts is you always bring the data. Many of us can say we feel this change in our gut, but you show us facts and figures and help us quantify this dynamic situation and I appreciate that.