Trump’s National Cyber Strategy: AI, Deregulation, and Going on Offense

The new strategy makes some bold moves, leaning into AI, slashing regulatory burden, and putting offensive cyber on the table. Here’s what it means for the industry.

The White House released President Trump’s Cyber Strategy for America this month, and it’s worth a close read, not because it’s a perfect document, but because it signals where this administration intends to take cybersecurity policy for the next several years.

For those of us who have been tracking the evolution of U.S. cyber strategy across multiple administrations, this one marks a clear inflection point. The emphasis has shifted. The priorities have been reordered, and whether you agree with every decision or not, the direction is unmistakable.

I’ve covered the evolution of U.S. cyber policy extensively through my work on Resilient Cyber, including conversations with Jim Dempsey on cyber policy and regulation, discussions with Matt Cronin on the prior National Cyber Strategy and cyber law, and deep dives into the U.S. AI Action Plan and its intersection with economics, technology, and national security. Each of those conversations was anchored in the same fundamental tension: how does the U.S. balance the need for security with the imperative to innovate, compete, and win?

This new strategy answers that question with a clear thesis: innovation wins, regulation loses, and offense is no longer optional. Let me break down the key themes.


The Strategic Framing: Deterrence, Not Just Defense

The strategy is organized around four pillars:

Defending the homeland

Disrupting and dismantling threat actors

Shaping market forces

Investing in a resilient future

If that structure sounds familiar, it should. It echoes elements of the Biden-era National Cybersecurity Strategy, which I discussed with Matt Cronin when it was released, but the emphasis is dramatically different.

Where the previous strategy leaned heavily into rebalancing responsibility, shifting the burden of cybersecurity from end users to technology suppliers and manufacturers, this strategy leans into deterrence and offensive action.

The document explicitly calls for imposing costs on adversaries through all instruments of national power, including offensive cyber operations. It names China, Russia, Iran, and North Korea as the principal threats and makes clear that the U.S. intends to take the fight to them, not just absorb the blows.

This is a significant philosophical shift. The previous strategy’s framing was largely defensive and regulatory: make better products, hold suppliers accountable, regulate critical infrastructure. This strategy’s framing is adversarial: identify threats, disrupt operations, impose consequences. Both approaches have merit, but the change in emphasis tells you everything about where this administration’s priorities lie.

AI Takes Center Stage and Agents Aren’t Far Behind

If there’s one theme that runs through this strategy more than any other, it’s artificial intelligence. AI is referenced throughout as both a transformative defensive capability and an emerging attack surface that demands immediate attention. The strategy calls for promoting AI-enabled cyber defense, accelerating AI adoption across federal agencies, and ensuring that AI systems deployed in critical infrastructure are secure by design.

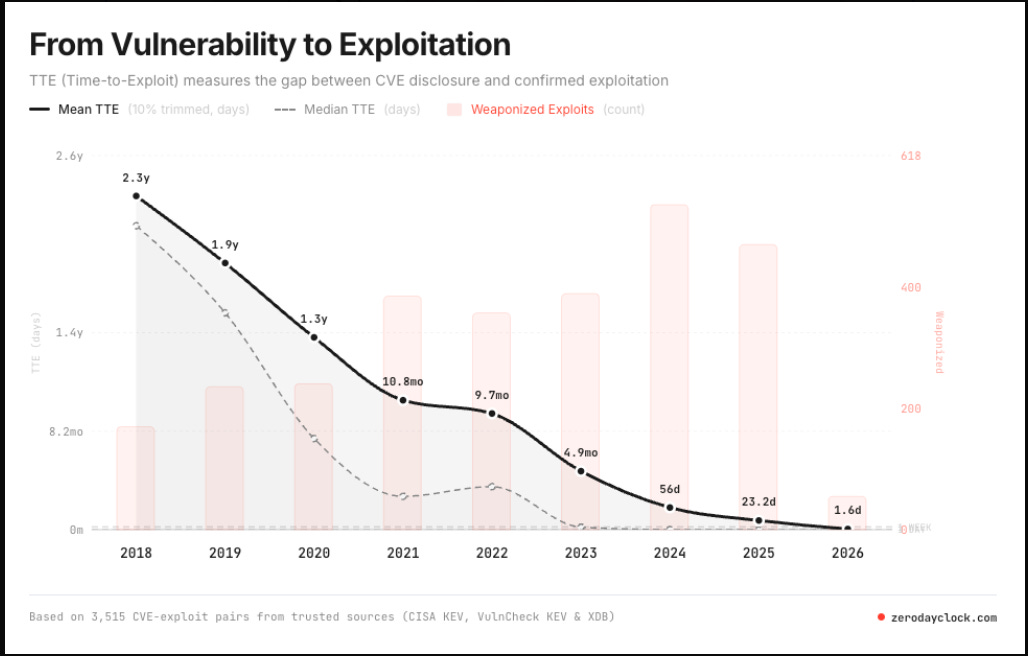

What’s particularly notable is the explicit acknowledgment that AI is a double-edged sword. The document recognizes that adversaries are actively leveraging AI to enhance their offensive capabilities, from automating vulnerability discovery to generating sophisticated social engineering campaigns. I specifically discussed this acceleration of exploitation in my recent piece titled “The Zero Day Clock Is Ticking: Why the Collapse of Exploitation Timelines Changes Everything”.

The NCS also calls for investment in AI-driven threat detection, automated response capabilities, and AI security testing to stay ahead of adversary adoption curves.

I covered the intersection of AI and national security extensively in “AI — Incentives, Economics, Technology and National Security,” where I broke down the U.S. AI Action Plan and its implications across economics, geopolitics, and cybersecurity. The cyber strategy extends that trajectory. It positions AI not as a nice-to-have but as a strategic imperative, a technology the U.S. must dominate to maintain its security posture. This makes sense, and it aligns with the administration’s previously published AI Action Plan.

What the strategy doesn’t do, and this is important, is address agentic AI with any specificity. It references AI capabilities broadly, and even leveraging agentic AI to scale network defense and disruption, but doesn’t grapple with the governance challenges posed by autonomous agents that make decisions, take actions, use tools, and chain operations without human oversight. I’ve been writing about this gap for months. In “The Agentic AI Governance Blind Spot,” I called out that none of the major governance frameworks (NIST AI RMF, ISO 42001, the EU AI Act) mention agents. This strategy, despite its emphasis on AI, continues that trend: it mentions the use of agents, but not how that adoption will be governed or secured, especially in large complex environments, or among a workforce that tends to lag commercial innovation and methodologies.

This matters because agents aren’t theoretical anymore. The strategy aims for them to be deployed at scale across federal agencies, defense systems, and critical infrastructure. When those agents can browse the web, execute code, call APIs, and interact with external services, the governance requirements are fundamentally different from those for a traditional ML model producing predictions for human review. The strategy’s silence on agentic AI governance and security is a missed opportunity, one that organizations will need to address themselves, using frameworks like AIUC-1, which I recently covered as the first compliance standard purpose-built for AI agents.

Reducing Regulatory Burden: Innovation Over Compliance

If the AI emphasis is the strategy’s thesis, the deregulatory posture is its operating principle. The document is unambiguous: the current regulatory landscape for cybersecurity is too fragmented, too burdensome, and too focused on compliance theater at the expense of actual security outcomes.

The strategy calls for harmonizing cybersecurity regulations across federal agencies, reducing duplicative requirements, and ensuring that compliance obligations don’t impose disproportionate costs on organizations, particularly critical infrastructure operators and small businesses. It specifically targets the patchwork of sector-specific regulations that currently force organizations to comply with multiple overlapping frameworks that often conflict with one another.

This is music to a lot of practitioners’ ears, and frankly, it’s hard to argue with the diagnosis. Anyone who has worked in federal cybersecurity knows the regulatory landscape is a mess. Organizations in the defense industrial base are simultaneously navigating CMMC, NIST 800-171, FedRAMP, DFARS, and sector-specific regulations that often ask for the same information in different formats with different submission timelines. The compliance overhead is real, and it diverts resources from activities that actually reduce risk.

That said, I want to be nuanced here. In my conversation with Jim Dempsey on cyber policy and regulation, we explored the tension between regulation and innovation at length. Jim, who has described software liability as the “third rail” of cybersecurity policy, makes the point that absent some regulatory pressure, the market will not self-correct. Software suppliers have externalized the cost of security onto customers for decades, and the competitive dynamics of speed-to-market and revenue will always deprioritize security unless there are meaningful consequences for insecurity.

I explored this further in “Software: Liability, Safe Harbor and National Security,” where I broke down Jim’s proposed framework for a software liability floor, a process-based safe harbor, and the concept of a “zone of automatic liability” for routinely exploited flaws. The previous National Cyber Strategy explicitly called for shifting the burden to those best positioned to address it. This strategy doesn’t abandon that principle entirely, but it clearly deprioritizes it in favor of reducing regulatory friction.

The risk is obvious. If you reduce regulatory burden without a corresponding mechanism to incentivize security investment, you’re relying on market forces that have demonstrably failed to produce secure products for decades. The strategy tries to thread this needle by emphasizing Secure-by-Design principles and public-private partnerships, but it remains to be seen whether voluntary adoption will achieve what regulatory pressure could not. I won’t hold my breath here; we have already seen how voluntary expectations play out in cybersecurity.

Going on Offense: The Rise of Proactive Cyber Operations

The most striking departure from previous strategies is the explicit emphasis on offensive cyber operations. The document calls for enhancing U.S. offensive cyber capabilities, using all instruments of national power to disrupt adversary operations, and, perhaps most significantly, expanding the role of the private sector in supporting those operations.

This isn’t entirely new territory. Persistent engagement and defend forward have been part of U.S. Cyber Command’s operational philosophy since 2018. But this strategy takes it further by signaling that the private sector should play a more active role in the nation’s offensive cyber posture. The strategy emphasizes public-private partnerships for threat intelligence sharing, joint operational planning, and leveraging commercial capabilities to support national security objectives.

This is directly relevant to the work being done at Dartmouth by Winnona DeSombre and Sergey Bratus, whose paper “The Road Ahead: Strengthening U.S. Cyber Operations Through Private Sector Partnerships” examines how the private sector currently supports U.S. cyber operations and how that relationship should evolve. Their research, produced through the Institute for Security, Technology and Society, identifies a critical finding: cyberspace dominance now requires both high and low equity capabilities and opportunistic access at scale. The private sector, through government contractors, small companies, and individuals, already actively supports these operations, but the legal and policy frameworks governing that participation haven’t kept pace.

The strategy’s emphasis on offensive operations raises important questions about authorities, oversight, escalation risks, and the boundaries between intelligence operations and acts of war. It also raises questions about liability and accountability when private sector entities participate in operations that cross those boundaries. These are questions that the cybersecurity policy community will need to grapple with seriously, not just theoretically.

That said, I think the strategic logic is sound. Purely defensive cybersecurity has demonstrable limitations. When adversaries can attack at will, at machine speed, with minimal consequences, the calculus only changes when you impose costs. The strategy recognizes this and is willing to act on it. Whether the authorities, resources, and partnerships are in place to execute that vision is another question entirely.

We’ve long quipped that “defenders have to be right all the time, attackers only need to be right once.” In cyberspace, a purely defensive posture means sitting back and trying to fend off an endless barrage of increasingly AI-enabled attacks while the attackers continue unabated. Everyone knows the best way to get a bully to stop is to stand up to them, and I am curious to see whether this shift toward imposing costs on malicious actors changes their activities against our society and digital infrastructure.

Critical Infrastructure and the Workforce Question

The strategy reaffirms the importance of defending critical infrastructure and acknowledges the persistent threats from nation-state actors embedding themselves in U.S. systems. It references the reality that adversaries, particularly China, have been pre-positioning in critical infrastructure for potential future disruption, a threat vector that has been extensively documented over the past two years through campaigns like Volt Typhoon and Salt Typhoon.

The approach here is a mix of voluntary frameworks, information sharing, and public-private collaboration. The strategy calls for sector-specific risk management, AI-enabled defense for under-resourced organizations, and improved information sharing through existing mechanisms like ISACs. It also emphasizes the importance of securing the technology supply chain, which has been a recurring theme across administrations.

On the workforce front, the strategy acknowledges the persistent cybersecurity talent shortage and calls for expanding the pipeline through education, training, and partnerships with academia. This is an area where AI could have the most immediate impact. If AI can automate routine SOC operations, vulnerability triage, and compliance documentation, it frees human analysts to focus on the judgment-intensive work that machines can’t do. The strategy gestures toward this but doesn’t develop it with the specificity it deserves.

What’s Missing

No strategy document is perfect, and this one has notable gaps.

First, as I’ve already noted, the treatment of AI is broad but shallow when it comes to the specific governance challenges of agentic AI. Agents are being deployed now, and the strategy’s silence on the unique risks they introduce (autonomous action, tool use, multi-agent coordination, accountability gaps) is a real omission.

Second, the strategy’s deregulatory posture, while understandable, doesn’t adequately address the market failure problem. Cybersecurity has been widely described as a market failure because the economic incentives for suppliers don’t align with the security needs of consumers and the nation. Simply reducing regulation doesn’t fix that misalignment; it potentially makes it worse. The strategy would benefit from a more developed position on what replaces regulatory pressure as the mechanism for driving security investment.

Third, the software liability question is conspicuously absent. The previous National Cyber Strategy made software liability a centerpiece. This one largely sidesteps it. Given that software continues to underpin every aspect of critical infrastructure and national security, the absence of a clear position on supplier accountability is notable. Jim Dempsey’s work on defining a software liability floor with an accompanying safe harbor remains the most developed framework for this problem, and it’s a conversation the industry needs to continue having regardless of whether this particular strategy engages with it.

Fourth, while the offensive cyber emphasis is welcome, the strategy provides limited detail on how authorities, oversight, and escalation management will work in practice, particularly as private sector participation expands. The Dartmouth research I referenced highlights the need for clearer legal and policy frameworks governing private sector involvement in cyber operations. The strategy identifies the aspiration but not the mechanism.

The Bottom Line

This strategy is a clear statement of priorities: AI dominance, reduced regulatory burden, and offensive cyber operations. It positions cybersecurity as a national security imperative rather than a compliance exercise, and it signals that the U.S. intends to compete aggressively rather than regulate defensively. For practitioners, it means the policy wind is blowing toward innovation, speed, and operational capability, not toward compliance frameworks and liability regimes.

Whether that’s the right balance depends on your perspective. I’ve argued consistently that we need both: the incentive structures that regulation provides and the operational capability that innovation enables. This strategy bets heavily on the latter. The market failure problem doesn’t disappear because you stop talking about it. Software doesn’t become more secure because you reduce the regulatory pressure to make it so, and AI agents don’t govern themselves just because the frameworks haven’t caught up.

But I’ll give the strategy this: it takes the adversary seriously. It recognizes that the threat environment has fundamentally changed, that purely defensive postures are insufficient, and that AI is the defining technology of this era, for both attackers and defenders. The organizations that succeed in this environment will be the ones that move fast, adopt AI aggressively, build offensive and defensive capability in equal measure, and figure out the governance themselves rather than waiting for frameworks that may never arrive.

The strategy tells you where the government is heading. The question is whether the industry is ready to meet it there, and whether the gaps it leaves behind get filled before adversaries exploit them.