Vulnpocalypse: AI, Open Source, and the Race to Remediate

Frontier labs and startups are finding decades-old vulnerabilities in hours, and the capacity to remediate can't keep up. Attackers will.

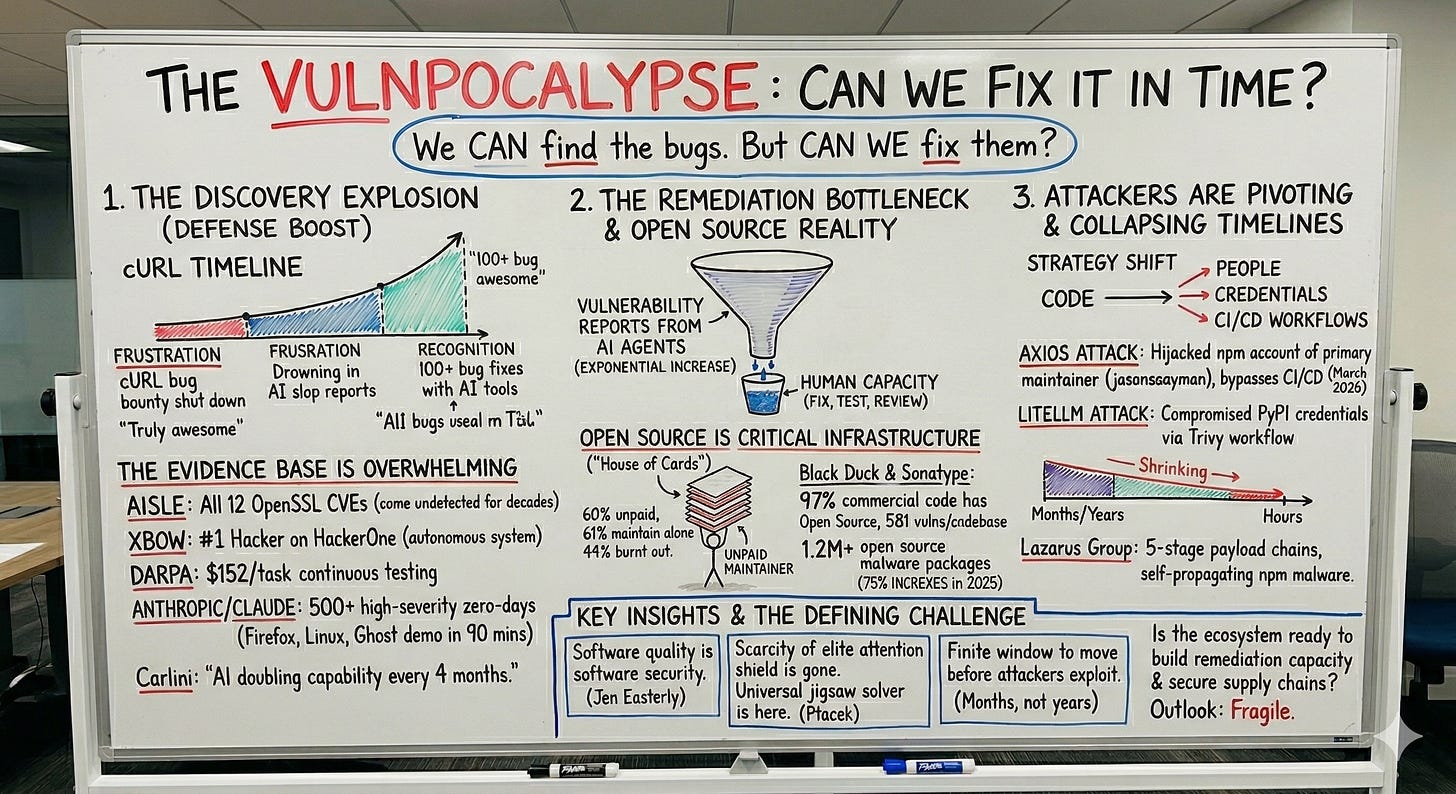

In January 2026, Daniel Stenberg, the maintainer of cURL, one of the most widely used open source projects on the planet, shut down the project’s bug bounty program on HackerOne. The reason was straightforward: he was drowning in AI-generated slop. Confident, detailed, and completely fabricated vulnerability reports were flooding in from people using LLMs to chase bounty payouts. The volume was unsustainable, and the signal-to-noise ratio had collapsed.

Fast forward a few months. Stenberg is now crediting AI-assisted tools with helping fix over 100 bugs in cURL, bugs that had survived years of aggressive fuzzing, compiler flags, static analysis, and multiple human security audits. The turning point came when researcher Joshua Rogers used AI-assisted tools like ZeroPath to systematically analyze cURL’s codebase, filtering results through his own expertise before submitting anything. Stenberg’s assessment of the findings? “Truly awesome.”

That arc, from frustration to recognition, tells you everything about where we are right now. AI is fundamentally changing vulnerability research. And as Thomas Ptacek recently argued in his widely discussed essay “Vulnerability Research Is Cooked”, the implications are far more profound than most of the industry has internalized.

The question is no longer whether AI can find the bugs; it can. The question is whether we can fix them before attackers exploit them, and right now, the evidence suggests we’re losing that race.

Interested in sponsoring an issue of Resilient Cyber?

Sponsorship includes reaching over 31,000 subscribers, ranging from developers, engineers, and architects to CISOs, security leaders, and business executives.


The Evidence Base Has Gotten Impossible to Ignore

The data points have been stacking up across multiple independent efforts, and together they paint a picture that the industry cannot afford to dismiss.

I recently sat down with AISLE to discuss what their autonomous analyzer has accomplished. The numbers are staggering: AISLE found all 12 CVEs in OpenSSL’s January 2026 coordinated release, every single one. When you include the CVEs from the fall 2025 release, AISLE is credited with discovering 13 of 14 OpenSSL CVEs assigned across both releases, 15 total. Some of these vulnerabilities had been sitting in OpenSSL’s codebase for decades, undetected by thousands of security researchers, extensive fuzzing campaigns, and multiple audits. This is OpenSSL, the cryptographic library that underpins a massive portion of the internet’s secure communications.

XBOW became the number one ranked hacker on HackerOne in 2025, the first time an autonomous system outperformed every human participant on a major bug bounty platform. They have identified over 1,000 vulnerabilities across companies like AT&T, Epic Games, Ford, and Disney. DARPA’s AI Cyber Challenge saw autonomous systems analyze 54 million lines of code at roughly $152 per task, making continuous security testing economically viable at a scale that was previously unthinkable.

And then there is what the frontier labs themselves are doing. Nicholas Carlini from Anthropic’s Frontier Red Team presented at Unprompted 2026 and laid out findings on the ability of LLMs to identify vulnerabilities in widely used, critical open source projects and beyond, findings the industry needs to take seriously.

Anthropic has used Claude to discover and validate over 500 high-severity zero-day vulnerabilities across production open source codebases. That includes 22 Firefox vulnerabilities found in collaboration with Mozilla over just two weeks, and a Linux kernel heap buffer overflow in the NFS v4 daemon that had been sitting unnoticed since 2003.

In a live demo, Carlini showed Claude finding a blind SQL injection in Ghost, a publishing platform with 50,000 GitHub stars that had never had a critical security vulnerability in its history. It took 90 minutes. Carlini’s assessment is blunt: AI capability for vulnerability research is doubling roughly every four months. His words:

“Current models are already better vulnerability researchers than I am, and in a year, they will likely be better than everyone.”
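To put the doubling estimate in concrete terms, here is a back-of-the-envelope sketch. It assumes the four-month doubling rate holds steady (an assumption, not a forecast) and treats today’s capability as an abstract baseline $C_0$, with $t$ measured in months:

$$C(t) = C_0 \cdot 2^{t/4}, \qquad C(12) = 2^{3}\,C_0 = 8\,C_0, \qquad C(24) = 2^{6}\,C_0 = 64\,C_0$$

A single year at that pace is roughly an eightfold increase.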

Ptacek frames the broader dynamic well in his piece “Vulnerability Research Is Cooked”. He argues that vulnerability researchers have historically spent about 20% of their time on the computer science and 80% on what amounts to giant, time-consuming jigsaw puzzles.

“Within the next few months, coding agents will drastically alter both the practice and the economics of exploit development. Frontier model improvement won’t be a slow burn, but rather a step function. Substantial amounts of high-impact vulnerability research (maybe even most of it) will happen simply by pointing an agent at a source tree and typing ‘find me zero days.’”

Now everybody has a universal jigsaw solver. Before you feed a frontier LLM a single token of context, it already encodes vast amounts of correlation across massive bodies of source code, plus the complete library of documented bug classes that exploit development builds on. The scarcity of elite attention that used to shield us from a flood of discovered vulnerabilities is gone.

The Open Source Reality

This is where the conversation gets systemic, and where I think the implications extend far beyond the technology itself.

Open source software runs everything: Google, iPhones, the national power grid, medical devices, military systems. As Chinmayi Sharma wrote in her Lawfare piece on open source security, our digital infrastructure is built on a house of cards. Eric Brewer of Google has explicitly called open source software “critical infrastructure.” I previously did a long-form discussion with Chinmayi on open source’s challenges and its role as critical infrastructure.

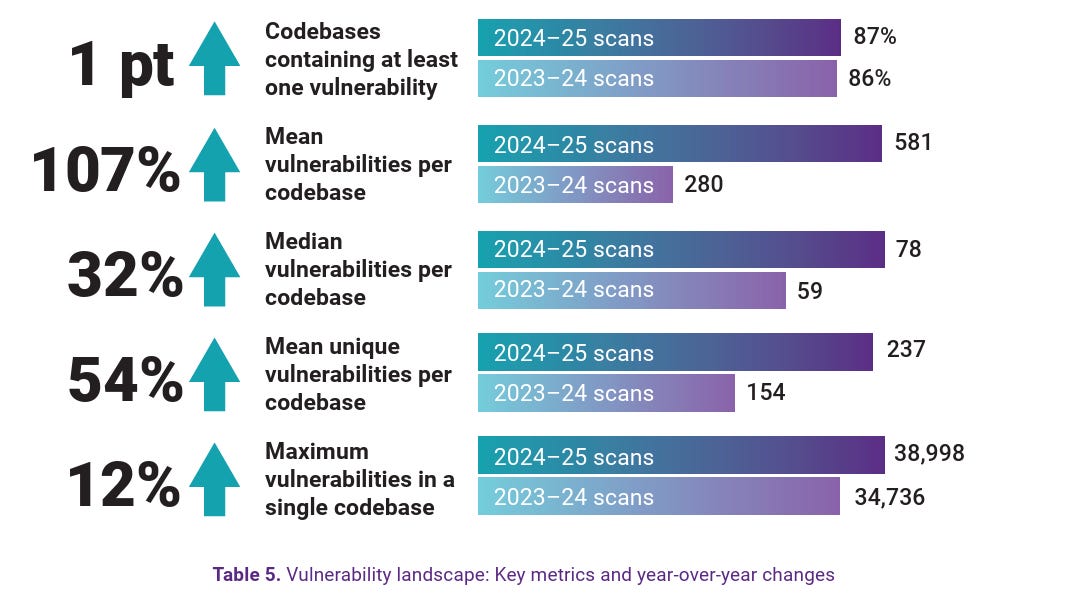

The numbers reinforce just how pervasive open source has become. According to the 2026 Black Duck OSSRA report, 97% of audited commercial codebases contain open source components. Open source vulnerabilities doubled to 581 per codebase as AI adoption explodes, with 87% of codebases at risk. The mean number of files per codebase grew by 74% year-over-year, while the average number of open source components increased by 30%. And 97% of organizations are now using open source AI models in development, adding yet another layer of dependency.

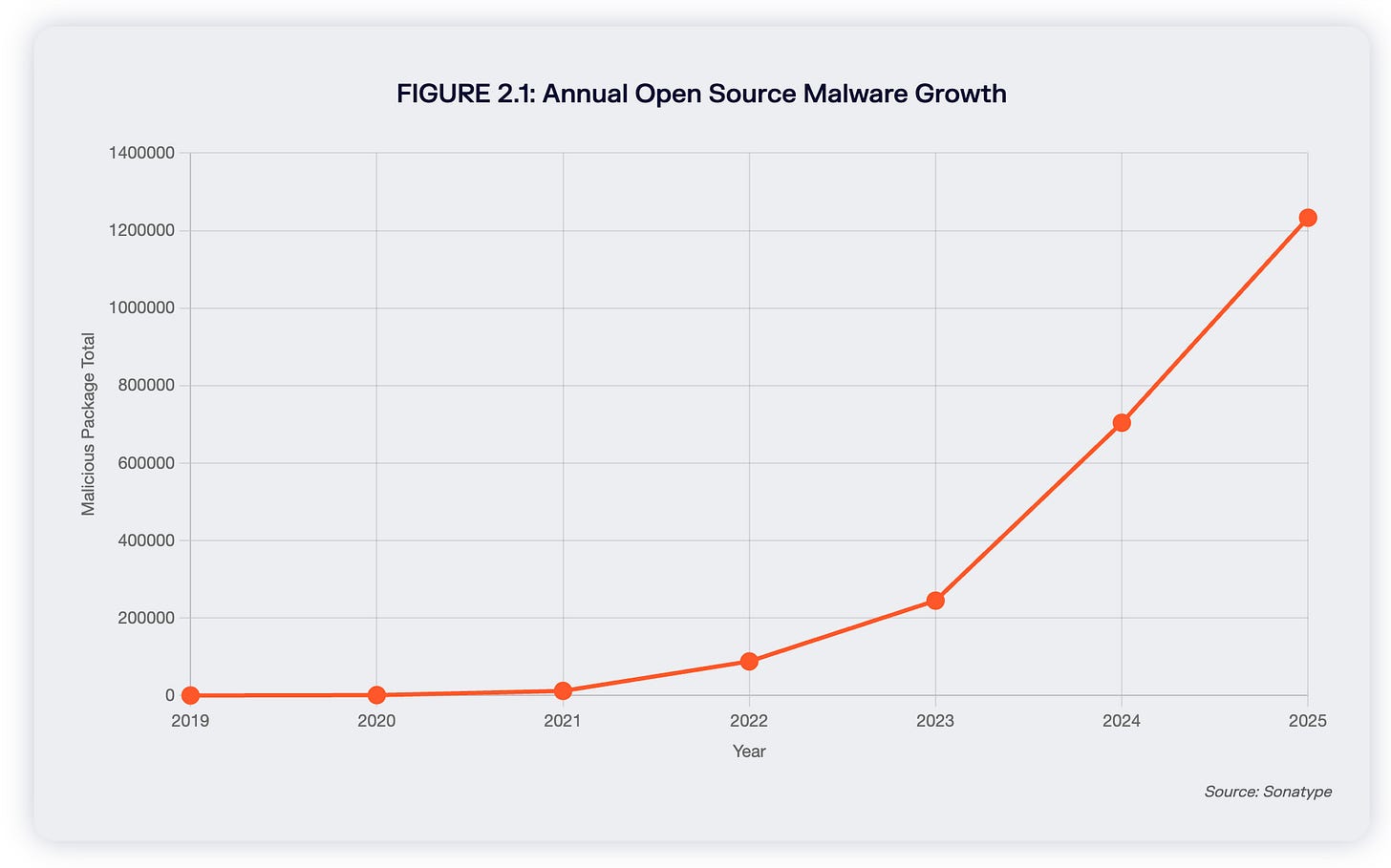

Sonatype’s 2026 State of the Software Supply Chain report shows developers downloaded components 9.8 trillion times in 2025 across Maven Central, PyPI, npm, and NuGet. Open source malware has surpassed 1.2 million packages, with 454,648 new malicious packages discovered in 2025 alone, a 75% increase. Sonatype also observed an 18% decline in actively maintained open source projects, meaning the codebase is growing while the pool of people maintaining it is shrinking.

Who is maintaining all of this? According to Tidelift’s annual survey, 60% of open source maintainers are unpaid, and 61% of those unpaid maintainers maintain their projects alone, with no co-maintainers and no support team. Just one person responsible for code that might be running in millions of production environments. 60% of maintainers have quit or considered quitting, with 44% citing burnout as the reason. I covered the broader dynamics of this in my piece on the 2025 open source security landscape.
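Combining the first two survey figures gives a rough sense of how much of the ecosystem rests on a single unpaid person. Assuming both percentages describe the same survey population:

$$0.60 \times 0.61 = 0.366 \approx 37\%$$

That is, a little over a third of all maintainers are both unpaid and working alone.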

Now think about what happens when AI starts generating high-quality vulnerability reports at scale against these codebases. Daniel Stenberg is one of the most dedicated and capable open source maintainers alive, and even he was overwhelmed. Multiply that across the estimated 1.4 million unique maintainers in the ecosystem, most of whom have far fewer resources than cURL.

Attackers Are Pivoting to Maintainers, CI/CD, and the Infrastructure Itself

While AI accelerates vulnerability discovery on the defensive side, attackers are simultaneously exploiting the structural weaknesses of the open source ecosystem with increasing sophistication. The attack patterns in 2025 and 2026 show a clear pivot: adversaries are moving beyond poisoning individual libraries and are now targeting the maintainers, the CI/CD infrastructure, and the trust mechanisms that hold the ecosystem together.

The Axios supply chain attack in March 2026 illustrates this perfectly. Axios has over 300 million weekly downloads and is used in virtually every Node.js and browser project that makes HTTP requests. The attacker did not submit a malicious pull request or publish a typosquatted package. They hijacked the npm account of jasonsaayman, the primary maintainer, changed the email to an anonymous ProtonMail address, and manually published infected packages via the npm CLI, completely bypassing the normal GitHub Actions CI/CD process. The compromised versions introduced a hidden dependency that functioned as a cross-platform remote access Trojan.

The LiteLLM compromise followed a similar pattern of targeting trust infrastructure. The TeamPCP threat actor compromised LiteLLM’s PyPI publishing credentials through a chain that originated from a misconfigured CI/CD workflow in another open source project, Trivy. The malicious packages deployed credential harvesting, Kubernetes lateral movement, and persistent backdoors. The same campaign hit npm packages with self-propagating worms. The malicious packages were live for about 40 minutes before being quarantined, but in the world of automated dependency resolution, 40 minutes is more than enough.

And then there was the tj-actions/changed-files compromise in March 2025, which exposed an entirely different attack surface: GitHub Actions. The attacker exploited a leaked personal access token from a reviewdog maintainer, used an automated invitation process to join a maintainer team, and pushed malicious commits that redirected version tags. The payload scanned GitHub Actions runner memory for secrets and exfiltrated them through GitHub’s own logs. The attack went undetected for four months.
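Redirecting tags worked because a workflow that references an action by a tag such as `@v35` runs whatever commit that tag points to when the job executes, so repointing the tag silently changed the code running in every downstream pipeline. A reference pinned to a full 40-character commit SHA does not move. The minimal Python sketch below flags `uses:` references that are not pinned that way. It is an illustration under stated assumptions: it scans workflow files as plain text with a regex rather than parsing YAML, `find_mutable_refs` is a hypothetical helper name, and the SHA in the example is a placeholder.

```python
import re

# Matches "uses: owner/repo[/path]@ref" lines in a GitHub Actions workflow.
# This is a plain-text regex scan, not a YAML parser (a deliberate simplification).
USES_RE = re.compile(
    r"^\s*(?:-\s*)?uses:\s*['\"]?([^@\s'\"]+)@([^\s'\"#]+)",
    re.MULTILINE,
)

# A full Git commit SHA-1 is exactly 40 lowercase hexadecimal characters.
FULL_SHA_RE = re.compile(r"[0-9a-f]{40}")


def find_mutable_refs(workflow_text: str) -> list[tuple[str, str]]:
    """Return (action, ref) pairs whose ref is not a full commit SHA.

    Tags like v35 and branches like main can be repointed by anyone who can
    push to the action's repository; a full commit SHA cannot.
    """
    mutable = []
    for action, ref in USES_RE.findall(workflow_text):
        if action.startswith("docker://"):
            continue  # container image references are out of scope for this sketch
        if not FULL_SHA_RE.fullmatch(ref):
            mutable.append((action, ref))
    return mutable


if __name__ == "__main__":
    workflow = """
jobs:
  build:
    steps:
      - uses: actions/checkout@0123456789abcdef0123456789abcdef01234567
      - uses: tj-actions/changed-files@v35
"""
    print(find_mutable_refs(workflow))  # [('tj-actions/changed-files', 'v35')]
```

Only the tag-pinned step is reported; the SHA-pinned checkout step passes because its reference cannot be silently redirected.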

These attacks share a common thread. Adversaries are no longer just targeting the code. They are targeting the people, the credentials, the CI/CD workflows, and the trust relationships that the entire ecosystem depends on. The attack surface has expanded from “malicious code in a package” to “compromise the human or automated system that publishes the package.”

That is a fundamentally different problem, and it requires a fundamentally different defensive posture. Sonatype’s 2026 report confirms that this is industrializing: the Lazarus Group has evolved from simple droppers to five-stage payload chains, with more than 800 Lazarus-associated packages identified this year, concentrated overwhelmingly in npm. Meanwhile, the first-ever self-replicating npm malware proved that open source malware can now propagate autonomously through ecosystems.

Remediation Is the Real Bottleneck

Jen Easterly, the former CISA director and now CEO of RSAC, wrote a piece in Foreign Affairs arguing that we do not have a cybersecurity problem; we have a software quality problem. She is right that AI has the potential to address systemic software quality issues at scale, finding flaws and potentially fixing them before they ever ship. The window to take advantage of this technology is real.

But finding bugs is only half the problem, and with the introduction of AI, it is definitely not the harder half. Finding bugs is getting exponentially easier and cheaper.

Fixing them still requires human judgment, code review, testing, regression analysis, and deployment. For open source projects maintained by unpaid volunteers, often a single person, the capacity to absorb a flood of legitimate vulnerability reports simply does not exist. For enterprise organizations, every fix competes with feature development, every patch cycle requires change management, and every remediation decision has to weigh security risk against business continuity, release schedules, and revenue targets. And as we know, security almost always loses to competing priorities such as speed to market and revenue.

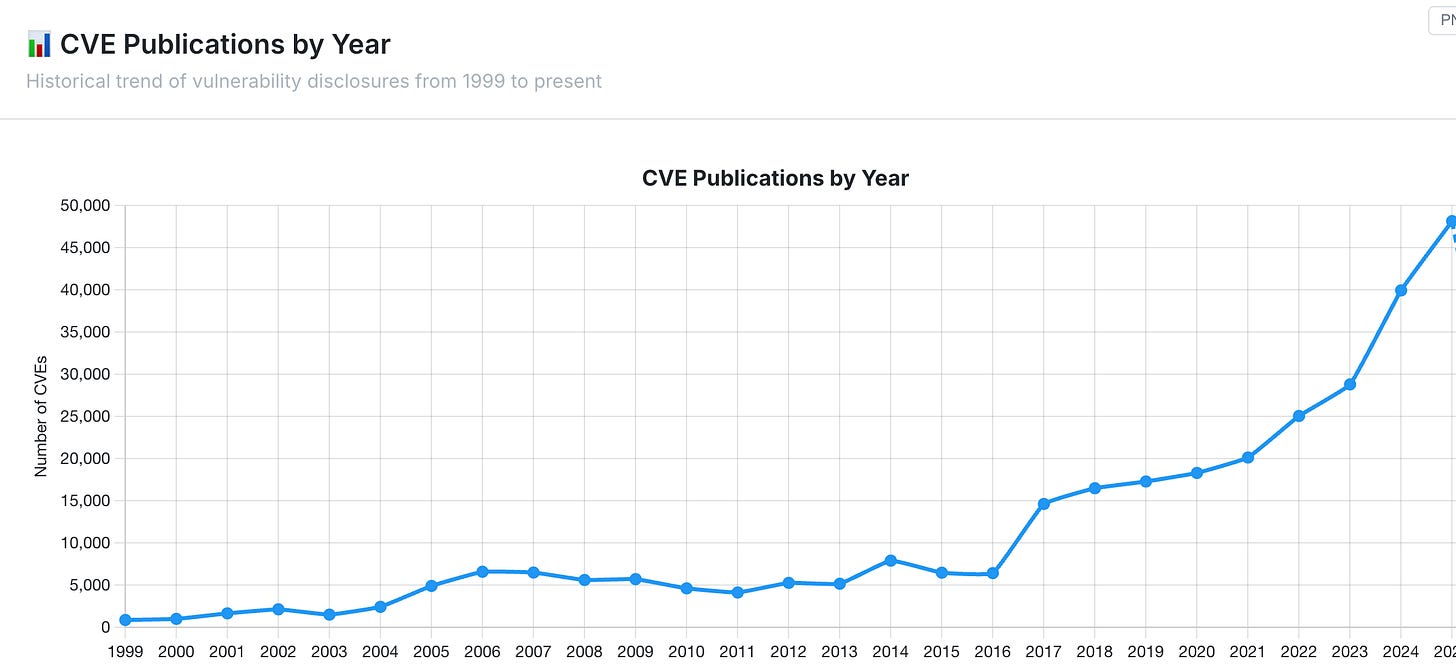

Over 48,000 CVEs were published in 2025 alone, a 21% increase over the prior year. The Black Duck OSSRA data shows that 90% of audited codebases contain open source components more than four years out of date. These are not obscure dependencies. Applications contain an average of 911 open source components, and many of those carry known, unpatched vulnerabilities that organizations have not gotten to yet.

Now add autonomous discovery tools scanning both open source and private repositories continuously. As frontier labs, AppSec vendors, and open source tools turn AI-powered discovery on proprietary codebases and internal applications, enterprise organizations will face the same flood of legitimate findings that open source maintainers are already struggling with.

The volume is about to overwhelm existing remediation workflows across the entire software ecosystem, and the organizations that struggle to patch known, publicly disclosed vulnerabilities in a timely manner are about to face an order-of-magnitude increase in findings that need attention. This doesn’t even account for the many vulnerabilities that never receive formal CVE identifiers, or for other attack vectors such as malicious packages and compromised maintainers.

The Exploitation Timeline Is Collapsing

And while we struggle with remediation capacity, the other side of the equation is accelerating at a pace that should alarm everyone.

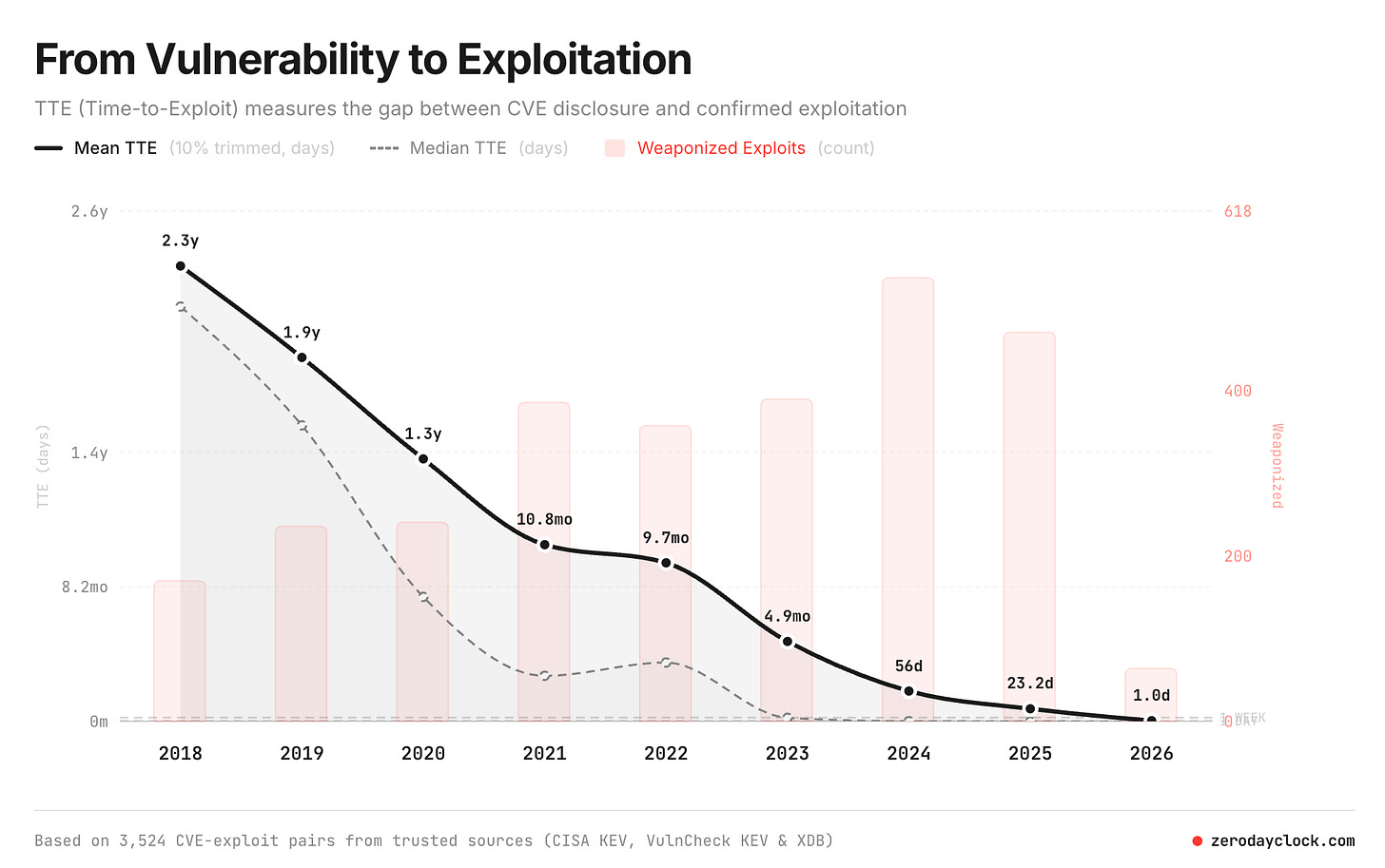

I recently interviewed Sergej Epp about his Zero Day Clock project, which tracks the collapse of exploitation timelines. In 2018, the median time from vulnerability disclosure to first observed exploit was 771 days. By 2023, it was 6 days. By 2024, it was measured in hours. And in 2025, the majority of exploited vulnerabilities were weaponized before they were even publicly disclosed. 67% of exploited CVEs in 2026 are zero-days, up from 16% in 2018.

AI is industrializing exploit generation alongside discovery. Researchers have demonstrated AI agents generating over 40 working exploits for a single flaw for $50, and AI agent swarms finding over 100 exploitable vulnerabilities across AMD, Intel, NVIDIA, Dell, Lenovo, and IBM drivers in 30 days for $600 total.
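The unit economics are worth spelling out. Because both counts are lower bounds (“over 40” and “over 100”), the per-item costs that fall out of them are upper bounds:

$$\text{cost per exploit} \le \frac{\$50}{40} = \$1.25, \qquad \text{cost per vulnerability} \le \frac{\$600}{100} = \$6$$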

As Ptacek notes in his essay, no defense looks flimsier now than closed-source code, because reversing was already mostly a speed bump, and agents can reason directly from assembly. A four-layer system of sandboxes, kernels, hypervisors, and IPC schemes is, to an agent, just an iterated version of the same problem. The economics of offensive security have fundamentally shifted, and the implications extend well beyond open source into every proprietary codebase and commercial product on the market.

And unlike defenders, attackers do not have competing priorities. They do not have sprint planning meetings. They do not have to justify headcount to a CFO. They do not have to balance security fixes against speed to market and revenue. Their pipeline is simple: find, weaponize, exploit. There is plenty of low-hanging fruit, and AI-driven development is only making the landscape more porous.

The Defining Challenge

Here is how I think about this. We have a finite and closing window to use AI to find and fix vulnerabilities before malicious actors find and exploit them. The technology to discover bugs at scale is here. AISLE proved it with OpenSSL. Anthropic proved it with 500 zero-days. XBOW proved it on HackerOne. cURL proved it with over 100 bug fixes. And if Carlini is right that this capability is doubling every four months, the window to get ahead of attackers is measured in months, not years.

But moving first only matters if we can actually remediate what we find, and right now, the remediation pipeline, both in open source and in enterprise environments, is the constraint. The open source ecosystem is maintained by a shrinking, burned-out workforce being asked to absorb an exponential increase in findings while simultaneously being targeted by increasingly sophisticated supply chain attacks. Enterprise organizations face the same remediation volume challenge, compounded by organizational friction and competing business priorities.

At the same time, attackers are not just finding bugs faster. They are attacking the ecosystem’s trust infrastructure directly, compromising maintainer accounts, CI/CD pipelines, and the automated workflows that modern software delivery depends on. The attack surface is not just the code. It is the entire system of human and automated trust that makes open source work.

This is the defining challenge for the cybersecurity ecosystem. Not whether AI can find the bugs; we know it can. But whether we can build the remediation capacity, the tooling, the institutional support, and the economic incentives to fix them at the speed the threat demands, and whether we can secure the supply chain infrastructure itself from the adversaries who are already inside it.

I have been writing about the evolution of vulnerability management and AppSec for a while now, and the trajectory is clear. The defenders have the opportunity to move first. AI gives us the ability to find and fix vulnerabilities at a scale and speed that was previously impossible. But that advantage is meaningless if we cannot build the remediation capacity to match, and it is meaningless if we cannot secure the supply chain infrastructure that attackers are already inside.

The technology is ready. The question is whether the ecosystem is, and unfortunately I don’t feel too optimistic about that, given the complexity, the number of stakeholders, competing incentives, and other systemic challenges.

I have written entire books on software supply chain security, vulnerability management, and AppSec. The challenges then were immense, and that was before the introduction of AI and agents.

Things are about to get a whole lot worse, at least in the near term.