The Software Supply Chain Cannot Scale on Trust Alone

Why the ship, scan, and patch model finally broke - and what has to replace it

I have been writing about software supply chain security on Resilient Cyber for several years now, and even before that, in my book Software Transparency.

In CVE Cost Conundrums, I broke down the economic reality of vulnerability management and how organizations are bleeding engineering hours on triage, patching, and compliance reporting rather than building products.

In Vulnpocalypse, I laid out the case that AI-accelerated vulnerability discovery was about to overwhelm the industry’s capacity to respond, and in Vulnerability Velocity and Exploitation Timelines, I traced how exploitation windows have collapsed from months to hours.

This piece connects all three threads, because the software supply chain is not just under attack. It is expanding at a rate that makes the old model of ship, scan, and patch structurally unworkable.

I will also be discussing Chainguard, a team I’ve long been a fan of, which continues to expand its offerings to match evolving attack dynamics, relieve organizations of toil, and let them focus on their core competency: providing value to their customers.

March 2026 Was a Wake-Up Call

If you needed a single month to illustrate why the current approach to supply chain security is failing, March 2026 delivered it in concentrated form.

Between March 19 and March 31, five major open source projects were compromised in rapid succession. Aqua Security’s Trivy vulnerability scanner was hit first, on March 19, followed by Checkmarx’s AST GitHub Actions. LiteLLM, the widely used AI proxy library on PyPI, was compromised on March 24, and Telnyx on March 27. Finally, on March 31, Axios, one of the most popular JavaScript libraries in the world with over 100 million weekly downloads, was compromised when attackers released poisoned versions that installed a Remote Access Trojan on every machine that pulled the update.

The Trivy attack is a particularly interesting one. TeamPCP used an AI-assisted tool and approximately $150 to gain access to Aqua Security’s credentials, giving them the ability to publish malicious versions of a Trivy container image and dozens of GitHub Actions. Any team that ran a Trivy scan during a three-day window likely had their secrets exposed. The attackers then used those harvested credentials to pivot into widely used Python and JavaScript libraries, expanding the blast radius to dozens if not hundreds of downstream organizations.

The Axios compromise was attributed by Microsoft Threat Intelligence to Sapphire Sleet, a North Korean state actor. This was not a hobbyist operation. It was a nation-state weaponizing the trust model that the entire open source ecosystem runs on, even though industry leaders such as Ken Thompson pointed out the folly of this blind trust more than 40 years ago.

“You can’t trust code that you did not totally create yourself.” - Ken Thompson

As Chainguard CEO Dan Lorenc pointed out, North Korea showed us they can take over basically any project at will, and every malware scanner in the world missed LiteLLM. Chainguard’s own analysis in their blog post Open Source Died in March put it bluntly: the problem is not that open source itself is broken. The Linux kernel, curl, and OpenSSL did not get more or less dangerous because of what happened in March.

The problem is how enterprises consume open source. PyPI and npm are distribution mechanisms that carry open source software with assumed trust baked into every layer. That assumed trust is what attackers are exploiting, and it is what organizations need to stop relying on.

To help orgs stay protected from future attacks, Chainguard offers a free-forever plan that gives you access to their catalog of 2,000+ hardened images and lets you select five of your choosing to use completely free. You can sign up for free here.

The Complexity of the Modern Supply Chain

The reason these attacks land is that the modern software supply chain has become extraordinarily complex, and most organizations have neither the tooling nor the staffing to secure it end to end.

Consider what a typical enterprise depends on. Container base images pulled from public registries. Hundreds or thousands of open source libraries across npm, PyPI, and Maven. GitHub Actions automating CI/CD pipelines. OS packages in the build environment. And now, increasingly, AI agent skills, MCP servers, and extensions that are being pulled into developer workflows with minimal vetting. Every one of those layers is an attack surface, and every one of them is growing.

The industry has spent the better part of a decade talking about software composition analysis, SBOMs, and shifting security left. Those efforts are not worthless, but they are reactive by design.

You pull a dependency, you scan it, you find a vulnerability, you patch it. The problem is that this cycle assumes you have the time and capacity to respond before an attacker exploits what you missed. In March 2026, the organizations that were compromised were not running outdated security practices. They were running the current standard, but the current standard was not enough.

Chainguard’s Dan Lorenc has been making this argument for years, and the data is proving him right. Cybercrime, nation-states, and AI are all converging on the software supply chain as the highest-leverage attack vector in the ecosystem. The cost to mount these attacks is dropping. The volume is increasing, and the blast radius when they succeed is expanding because of how deeply these components are embedded in downstream applications.

This is a point Tony Turner and I tried to emphasize in our book Software Transparency, and that was before the rapid rise of LLMs, GenAI, and agents made the software supply chain even more porous and problematic than it already was. The software supply chain is simply a high-ROI target for attackers because of how pervasively it is consumed and used.

AI Is Accelerating Both Sides of the Problem

The AI dimension of this story runs in two directions, and both of them are making the supply chain harder to secure.

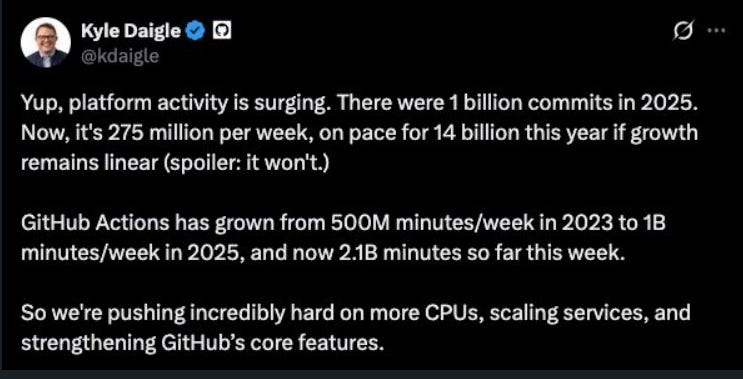

On the production side, the physical constraints of software development are disappearing. GitHub hit 1 billion commits in 2025. As of April 2026, the platform is processing 275 million commits per week, putting it on pace for 14 billion commits this year. That is a 14x year-over-year increase. GitHub Actions usage has grown from 500 million minutes per week in 2023, to 1 billion in 2025, to 2.1 billion in a single week in April 2026.
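The "on pace" figure is a straight-line extrapolation, and it is easy to check. Here is a minimal sketch of the arithmetic in Python, assuming the April weekly rate simply holds for all 52 weeks (an assumption for illustration, not a forecast):

```python
WEEKS_PER_YEAR = 52

def annualized(weekly_count: int) -> int:
    """Project a weekly count over a full year at a constant rate."""
    return weekly_count * WEEKS_PER_YEAR

# Figures quoted in the paragraph above.
weekly_commits_april_2026 = 275_000_000
commits_2025 = 1_000_000_000

projected_2026 = annualized(weekly_commits_april_2026)
growth_multiple = projected_2026 / commits_2025

print(f"{projected_2026:,} commits projected for 2026")
print(f"{growth_multiple:.1f}x the 2025 total")
```

At a constant weekly rate this lands slightly above 14 billion, which is where the roughly 14x year-over-year comparison comes from.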

AI coding agents from Anthropic, OpenAI, Cursor, Windsurf, and others have transformed developers from line-by-line coders into orchestrators managing fleets of AI agents that assemble software in days instead of weeks.

As I discussed in Vibe Coding Conundrums and A Security Vibe Check, much of this growth is coming from developers who are less security-conscious than the experienced practitioners who came before them. Research shows AI-generated code contains vulnerabilities at 2.74 times the rate of human-written code. AI-assisted commits show roughly double the baseline rate of credential exposure. And the sheer volume means the absolute number of vulnerabilities entering production is growing exponentially alongside the code itself.

Every one of those commits is pulling open source dependencies. As AI generates code, it is pulling open source artifacts to drive token efficiency, which means your codebase is growing and so is your open source attack surface. This is happening at a pace where engineering and security teams cannot reasonably manage the supply chain risk factors, even with good tooling and mature processes.

On the offensive side, AI has turned vulnerability discovery and exploitation into a scalable, low-cost operation. As Chainguard noted in AI Is Finding Vulnerabilities Faster Than Anyone Can Patch Them, AI can find vulnerabilities at machine speed, but the humans who maintain the world’s most critical open source projects still operate at human speed, often as volunteers.

Anthropic’s Claude Mythos Preview found thousands of high-severity vulnerabilities including a 27-year-old bug in OpenBSD. AISLE discovered all 12 CVEs in the January 2026 coordinated release of OpenSSL, with three of those bugs having been present since the late 1990s. MOAK demonstrated the first agentic AI workflow capable of exploiting hundreds of known dangerous vulnerabilities in minutes, not just discovering them but weaponizing them.

Mean time-to-exploit for a CVE went from 63 days in 2018 to negative one day in 2024 to negative seven days in 2025. Put differently, the average exploited vulnerability is now under attack a full week before a patch even exists. As Chainguard framed it in Ship and Patch Doesn’t Cut It in the AI Era, the traditional vulnerability management cycle has become a lose-lose proposition. Patch too fast and you expose your developer secrets to the next supply chain attack. Patch too slow and you fall victim to an exploited CVE.

The meme below painfully summarizes the bind defenders are in: updating quickly mitigates risk, yet updating quickly can be a risk in and of itself.

The Cost and Burden Nobody Talks About

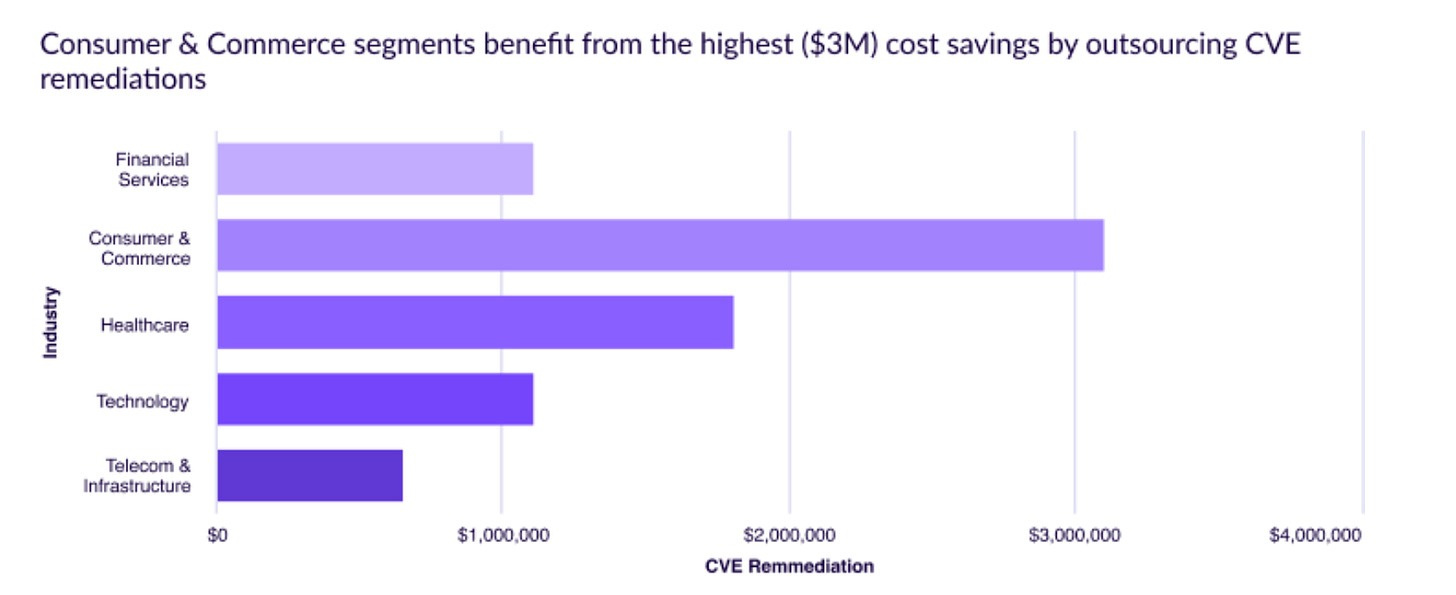

Beyond the security risk, there is an economic and operational burden that most organizations underestimate. As I covered in CVE Cost Conundrums, Chainguard’s research found that organizations that outsourced CVE remediation realized $2.1 million in annual savings, with even more substantial savings in regulated industries like insurance and healthcare.

The math behind those savings is not hard to follow. Engineering and development teams spend enormous amounts of time on CVE management: triaging, patching, validating, documenting, and reporting. Every hour spent chasing down a vulnerability in a transitive dependency four levels deep in your container image is an hour not spent building product or providing value to customers. At scale, this becomes a material drag on engineering velocity and business outcomes.

Then layer on the compliance dimension. FedRAMP 20x is formalizing requirements around SBOMs, continuous monitoring, and supply chain transparency. Organizations selling into the U.S. federal market or other regulated markets need to demonstrate that they are tracking components and prove that vulnerabilities are remediated swiftly.

SOC 2, ISO 27001, PCI DSS, and industry-specific frameworks are all tightening their expectations around software supply chain governance. Every one of these compliance requirements adds overhead, and most organizations are doing the work manually or with stitched-together tooling that was not built for this scale.

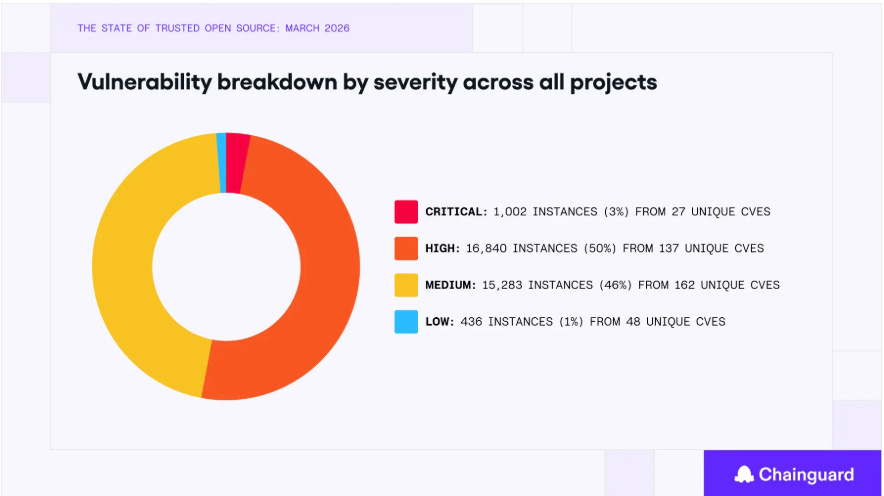

The burden compounds when you consider that the volume of CVEs is not slowing down. Chainguard’s State of Trusted Open Source report for March 2026 tracked 377 unique CVEs and over 33,000 fix instances across their container image catalog, representing a 145% increase in unique vulnerabilities and over 300% more fixes compared to the previous quarter. That acceleration reflects both faster development and AI-driven vulnerability discovery hitting the ecosystem simultaneously.

Chainguard’s Expanding Coverage

This is the context in which Chainguard’s evolution makes the most sense. The company started with a focused thesis around hardened container images. Minimal images, built from source, rebuilt daily, shipped with zero known CVEs and signed SBOMs. That was already a significant improvement over the status quo of pulling unvetted images from Docker Hub and hoping for the best.

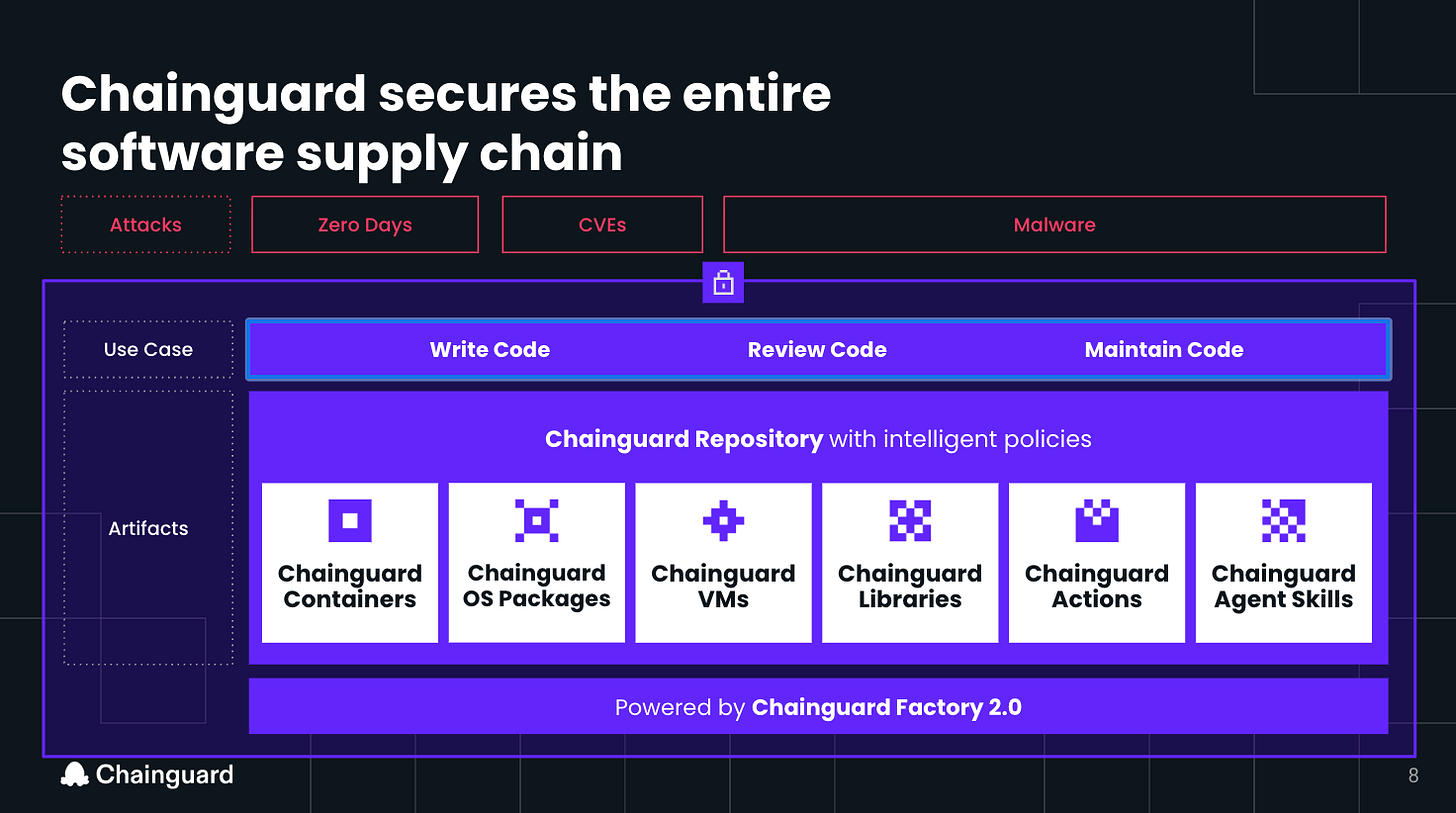

But the attack surface has grown beyond containers, and Chainguard has expanded with it. At Chainguard Assemble 2026, the company announced a full portfolio of products covering the broader SDLC.

Chainguard Libraries replaces public npm, PyPI, and Maven registries with a source-verified mirror. Only packages with SBOMs and provenance are included. Malicious binaries and tampered releases are blocked before they reach your environment, and poisoned transitive dependencies are excluded by construction rather than by scanning.
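One way a team might confirm that a project really resolves its dependencies from a trusted mirror, rather than quietly falling back to a public registry, is to audit the lockfile. Below is a minimal sketch in Python, assuming an npm package-lock.json with lockfileVersion 2 or 3. The host packages.internal.example and the package names are hypothetical placeholders, not real Chainguard endpoints, and this check only looks at where artifacts came from. It does not verify provenance or SBOMs.

```python
import json
from urllib.parse import urlparse

# Hypothetical mirror host; substitute whatever registry your organization trusts.
ALLOWED_HOSTS = {"packages.internal.example"}

def untrusted_packages(lockfile_text, allowed_hosts=ALLOWED_HOSTS):
    """Return (path, resolved_url) for lockfile entries fetched from other hosts."""
    lock = json.loads(lockfile_text)
    findings = []
    for path, entry in lock.get("packages", {}).items():
        resolved = entry.get("resolved")
        # Skip the root project (no tarball URL) and local workspace links.
        if not resolved or entry.get("link"):
            continue
        if urlparse(resolved).hostname not in allowed_hosts:
            findings.append((path, resolved))
    return findings

# Made-up lockfile with one package from the mirror and one from the public registry.
SAMPLE_LOCK = json.dumps({
    "lockfileVersion": 3,
    "packages": {
        "": {"name": "demo-app", "version": "1.0.0"},
        "node_modules/pkg-a": {
            "version": "1.3.0",
            "resolved": "https://packages.internal.example/pkg-a/-/pkg-a-1.3.0.tgz",
        },
        "node_modules/pkg-b": {
            "version": "2.0.0",
            "resolved": "https://registry.npmjs.org/pkg-b/-/pkg-b-2.0.0.tgz",
        },
    },
})

for path, url in untrusted_packages(SAMPLE_LOCK):
    print(f"{path} was resolved from an unapproved host: {url}")
```

A check like this could run in CI as a cheap guardrail, so that a misconfigured developer machine or AI agent pulling from a public registry is caught before the change merges.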

Chainguard Actions provides hardened, cryptographically pinned GitHub Actions built from source. This addresses the exact attack vector that was exploited in the Trivy and Checkmarx compromises, where version tags were force-pushed to redirect CI/CD pipelines to attacker-controlled code.
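To make the pinning point concrete: a version tag such as v1 can be moved to point at different code, while a full commit SHA cannot. Here is a minimal sketch in Python that flags workflow steps referencing an action by anything other than a 40-character commit SHA. The action names and the SHA are made up for illustration, and the check is a simple line-based regex rather than a YAML parser, so treat it as a starting point rather than a complete linter.

```python
import re

# Matches `uses: owner/repo[/path]@ref` lines in a workflow file.
USES_RE = re.compile(r"^\s*(?:-\s*)?uses:\s*['\"]?([^@\s'\"]+)@([^\s'\"#]+)")
FULL_SHA_RE = re.compile(r"[0-9a-f]{40}")

def unpinned_actions(workflow_text):
    """Return (line_number, action, ref) for actions not pinned to a full commit SHA."""
    findings = []
    for lineno, line in enumerate(workflow_text.splitlines(), start=1):
        match = USES_RE.match(line)
        if not match:
            continue
        action, ref = match.groups()
        if action.startswith("docker://"):
            continue  # container references use image digests, not git refs
        if not FULL_SHA_RE.fullmatch(ref):
            findings.append((lineno, action, ref))
    return findings

# Hypothetical workflow: one step pinned to a mutable tag, one to a commit SHA.
SAMPLE_WORKFLOW = """\
jobs:
  scan:
    runs-on: ubuntu-latest
    steps:
      - uses: example-org/scanner-action@v1
      - uses: example-org/build-action@0123456789abcdef0123456789abcdef01234567
"""

for lineno, action, ref in unpinned_actions(SAMPLE_WORKFLOW):
    print(f"line {lineno}: {action} is pinned to mutable ref '{ref}'")
```

Pinning to a SHA only guarantees that the code does not change underneath you. It says nothing about whether that commit was trustworthy in the first place, which is the gap that building actions from verified source is meant to close.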

Chainguard Agent Skills embeds verified, policy-governed context directly into AI coding agent workflows, so that dependency selection defaults to trusted sources rather than public registries when agents are assembling code.

The Chainguard Repository ties all of this together into a unified catalog where platform teams can access containers, libraries, OS packages, CI/CD workflows, and agent skills through a single endpoint with built-in security policies and visibility. Security and compliance teams define rules once as code, and the repository enforces those decisions consistently across both human developers and AI agents.

They also offer Guardener, an AI-native migration agent that automates the process of replacing existing artifacts with Chainguard equivalents. Pointed at your existing Dockerfile, it maps packages to Chainguard equivalents, converts images into hardened multi-stage builds, and outputs a ready-to-run Dockerfile with a before-and-after migration report. The same drop-in pattern applies to libraries, actions, and OS packages. Developers keep using the same tools and workflows they rely on today. This is an innovative way to enable the transition to secure alternatives without disrupting the business or developers.

The strategic insight here is that Chainguard is not building a scanner. They are building a factory. The Chainguard Factory rebuilds everything from source daily, applies compiler hardening flags, strips post-install scripts, and ships with full provenance and build attestation on every artifact.

AI-driven reconciliation bots trigger dependency updates and vulnerability-based rebuilds automatically, so images stay current without manual intervention. Chainguard remediates CVEs in an average of two days, and only 22% of CVE remediations require direct human intervention. Their container images carry 97.6% fewer CVEs than industry alternatives.

What This Means for Security Leaders

The software supply chain security problem has three compounding dimensions that are all accelerating simultaneously. The attack surface is growing exponentially as AI-driven development pushes code production to unprecedented volumes. The attacks against the supply chain are becoming cheaper, faster, and more effective, amplified by the same AI capabilities driving development. We’re seeing the full-blown industrialization of exploitation.

The compliance and governance requirements organizations must meet are expanding, adding overhead to teams that are already stretched thin, and further making security a cost center rather than a business enabler.

The old model assumed that security teams could keep pace with developers. That was already questionable when humans were writing every line of code. It is untenable when AI-accelerated development is producing 275 million commits a week on a single platform. The old trust model, meanwhile, assumed that pulling packages from public registries was safe enough. March 2026 proved definitively that it is not, and the velocity of attacks and incidents continues to grow.

The organizations that get ahead of this will be the ones that shift from reactive vulnerability management to proactive supply chain integrity. That means consuming open source from verified sources with full provenance rather than pulling whatever an AI agent recommends from a public registry.

That means securing not just container images, but libraries, CI/CD actions, OS packages, and the AI agent context that is increasingly driving development decisions. Attackers see the full underlying infrastructure and ecosystem driving the modern SDLC and are targeting it accordingly.

It means doing this in a way that does not require every organization to build and maintain the infrastructure to do it themselves, because most cannot, and the ones that try are burning engineering capacity that should be going toward their actual products and things that provide direct value to their customers.

The supply chain cannot scale on trust alone. The math does not work. The threat landscape does not allow it. And the compliance environment will increasingly demand something better. The question for every security leader is whether they are going to keep running the current standard that March 2026 proved insufficient, or build on a foundation that was designed for the reality we are living in now.