The Zero Day Clock Is Ticking: Why the Collapse of Exploitation Timelines Changes Everything

Sergej Epp’s Zero Day Clock visualizes what the data has been screaming for years. The question is whether we’re finally ready to listen.

Every so often, someone takes a problem the industry has been talking around for years and makes it viscerally, undeniably clear.

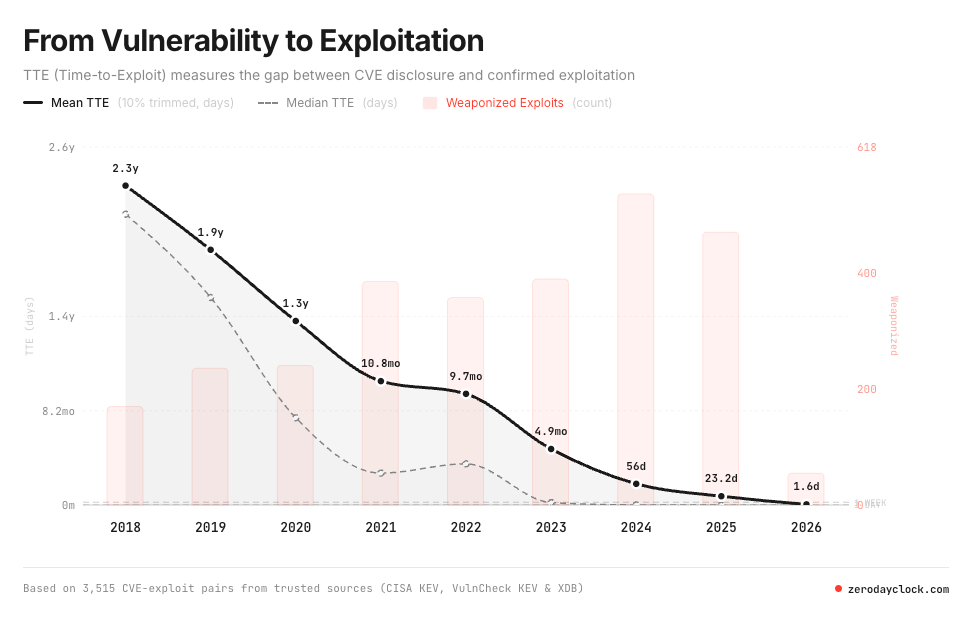

Sergej Epp, CISO of Sysdig, has done exactly that with the Zero Day Clock, a live dashboard that tracks the collapse of the time-to-exploit (TTE) window from CVE disclosure to confirmed exploitation in the wild. It’s not a theoretical exercise. It’s a real-time visualization of a systemic failure, built on 3,515 CVE-exploit pairs from trusted sources including CISA KEV, VulnCheck KEV, and XDB. And the picture is worth a thousand words:

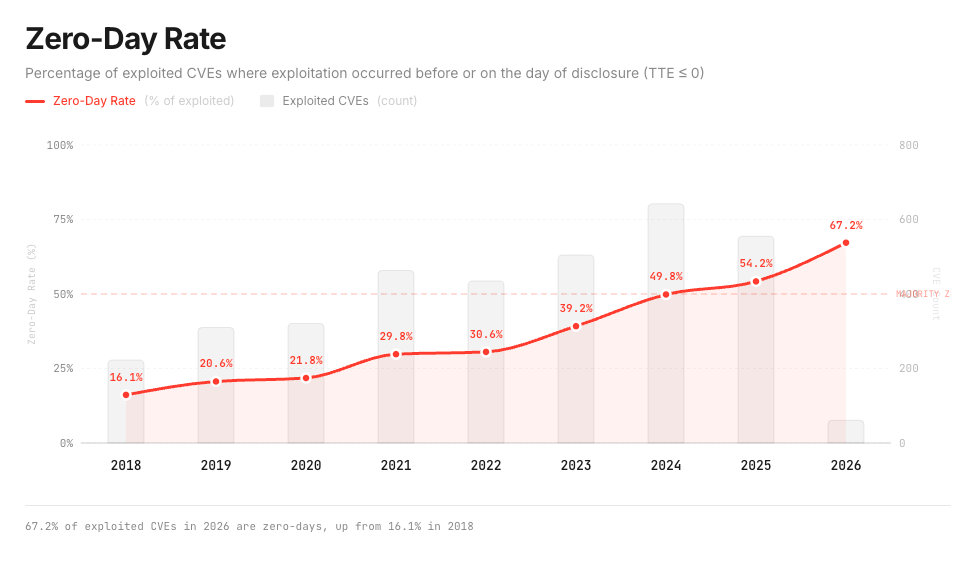

The numbers are stark. In 2018, the median time from a vulnerability being disclosed to the first observed exploit was 771 days. Organizations had over two years to patch. By 2021, that window had compressed to 84 days, a 9x compression in just three years. By 2023, it was 6 days. By 2024, it was 4 hours, and in 2025, the majority of exploited vulnerabilities were weaponized before they were even publicly disclosed. The Zero Day Clock tracks milestones as TTE crosses each threshold: the 1-year mark was reached around 2021, 1 month around 2025, and 1 week in 2026. The 1-day and 1-hour marks are projected for 2026, with 1 minute projected for 2028.

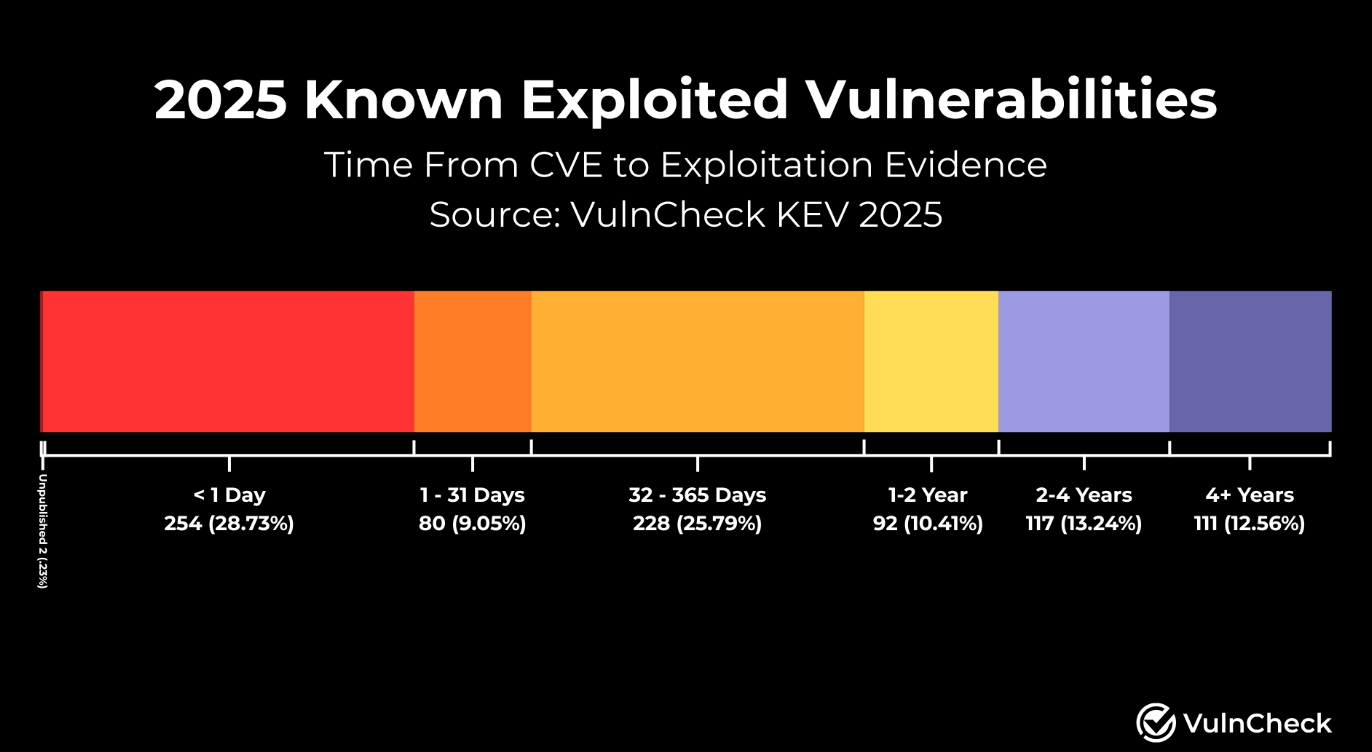

If you’re in security, compliance, or engineering leadership, that trajectory should absolutely get your attention. This isn’t a trend that stabilizes; it’s a collapse. Other sources corroborate it too: my friend Patrick Garrity, a vulnerability researcher at VulnCheck, found that nearly 30% of CISA KEV entries were exploited on or before the day the CVE was even published.

This trend is also highlighted on the ZeroDayClock website:

The Collapse: Twenty Years of Warnings, Every One Ignored

Epp’s companion piece, “The Collapse,” traces the intellectual lineage of this crisis across two decades of research that the industry largely ignored. It begins with Ross Anderson’s foundational 2001 paper arguing that cybersecurity isn’t failing because of bad technology; it’s failing because of bad incentives. The people who build insecure software don’t pay when it gets hacked. The costs land on the wrong people. It is a textbook economic externality, no different from a factory polluting a river it doesn’t drink from. This is one reason many argue cybersecurity is a “market failure”.

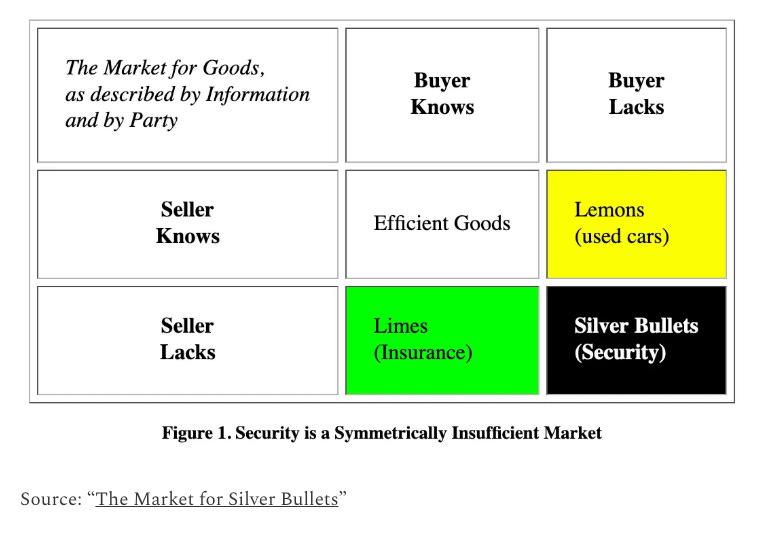

From there, the timeline accelerates. Halvar Flake’s BinDiff in 2004 demonstrated that every security patch was simultaneously an exploit blueprint: the fix itself told attackers exactly where to look. Bruce Schneier connected cybersecurity to Nobel Prize-winning economics in 2007, arguing that when buyers can’t judge quality, bad products drive good ones out. The less-secure product is always cheaper, ships faster, and has more features. The insecure product wins, every time. My friend Ross Haleliuk has made a related point, arguing that cyber isn’t a market for lemons but a market for silver bullets.

This is a point I’ve made myself, in various forms. In “Secure-by-Design Delusions,” I argued that the notion that the market will voluntarily begin making secure software and products without regulatory forces or customer demand is delusional. I argued, similar to Bruce, that the competing incentives of speed to market and revenue will always be placed ahead of security, regardless of voluntary, virtue-signaling pledges by vendors.

CISA’s Secure-by-Design Pledge, which I dissected in “A Digital Scouts Honor,” has scaled to over 280 signatories, yet only 7.5% have provided any updates, and 75% of those come from security companies. It’s a voluntary, toothless effort with no enforcement mechanism, and the data shows it. Just 2% of all critical infrastructure entities met CISA’s voluntary Cross-Sector Cybersecurity Performance Goals. As Schneier himself said, and Epp’s timeline echoes: no industry in 150 years has improved safety or security without being forced to by the government. Not aviation, not automotive, not pharmaceuticals. The delusion of altruism from software vendors has always fallen short against competing incentives, and it always will.

When AI Enters the Equation, the Math Breaks

The Zero Day Clock’s timeline takes a sharp turn around 2024, when AI fundamentally altered the exploitation calculus. Daniel Kang’s research showed GPT-4 could exploit known software flaws autonomously with an 87% success rate at $8.80 per exploit. Google’s Big Sleep, a collaboration between Project Zero and DeepMind, independently discovered a critical flaw in SQLite, one of the most widely deployed databases in the world, before any human defender did. It was the first public example of an AI finding a previously unknown exploitable memory-safety issue in widely used real-world software.

Building on this example, I recently interviewed Stanislav Fort, the cofounder of AISLE, who has also used AI to identify dozens of zero days in critical open source projects, including OpenSSL. You can find that interview at “Securing the Future with Autonomous Defense”.

By 2026, the industrialization phase had arrived. Sean Heelan built AI agents that generated over 40 working exploits for a single flaw, bypassing address randomization, control-flow protection, hardware security, and sandboxes for $50.

Yaron Dinkin and Eyal Kraft unleashed AI agent swarms on Windows kernel drivers. In 30 days, for $600 total, they found over 100 exploitable vulnerabilities across AMD, Intel, NVIDIA, Dell, Lenovo, and IBM. Cost per bug: $4. They shared that in a publication titled “100+ Kernel Bugs in 30 Days”. Anthropic announced that Claude had found over 500 high-severity vulnerabilities in widely used open-source software, bugs that had survived decades of expert human review.

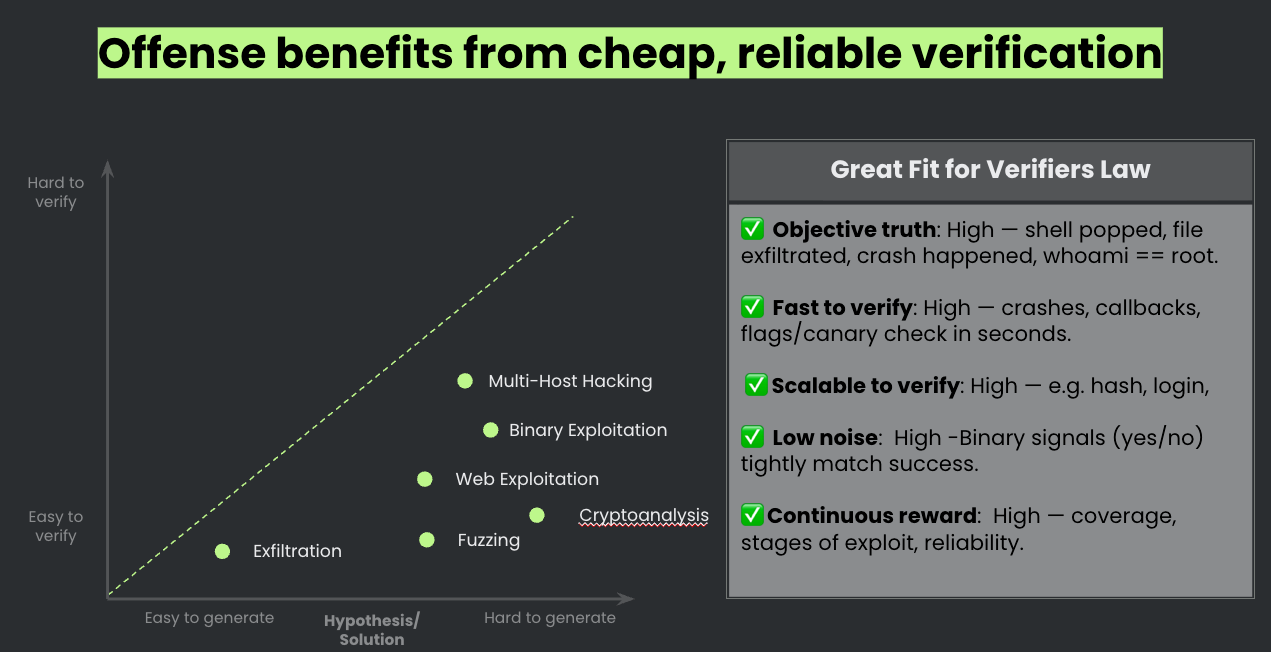

Epp introduces a concept he calls “Verifier’s Law” to explain why AI inherently accelerates offense faster than defense. The answer is verification asymmetry. In offense, feedback is binary and instant: did the exploit succeed? The AI learns at machine speed. In defense, feedback is ambiguous, slow, and expensive. Is this alert real? Is this system secure? The signal is noisy. The learning loop is broken. AI capability scales with the cheapness of verification, and offense has the cheapest verifier in cybersecurity. Sergej discussed this in his article “Winning the AI Cyber Race: Verifiability is All You Need”.

This aligns with the broader warning issued by Schneier, Heather Adkins, and Gadi Evron in their joint essay: the attackers have already entered their AI singularity moment, while the defenders’ has not yet begun. You can find their piece here: “Autonomous AI Hacking and the Future of Cybersecurity”.

The CVE Tsunami: Volume Meets Velocity

The collapse of exploitation timelines is compounded by another trend that’s equally alarming: the sheer volume of vulnerabilities is growing exponentially. CVE.ICU, an open-source tracking project maintained by security researcher Jerry Gamblin, visualizes this clearly.

In 2022, roughly 25,000 CVEs were published. In 2023, that climbed to about 29,000. In 2024, it hit 40,000, a 38% year-over-year jump, and 2025 set a new record with over 48,000 CVEs published, bringing the all-time total past 308,000. Since 2016, we’ve seen a 520% rise in the annual volume of new CVE records. I broke these trends down with Jerry recently in an interview titled “CVE Retrospective and Looking Forward”.
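To make the year-over-year arithmetic easy to check, here is a minimal sketch that recomputes the growth rates from the rounded counts quoted above. The numbers are the approximations from this article, not an export from CVE.ICU, and the function name `yoy_growth` is just an illustrative choice.

```python
# Approximate annual CVE counts quoted in the text (rounded, not exact totals).
cve_counts = {2022: 25_000, 2023: 29_000, 2024: 40_000, 2025: 48_000}


def yoy_growth(counts):
    """Return {year: percent change versus the previous year in the mapping}."""
    years = sorted(counts)
    return {
        year: (counts[year] - counts[prev]) / counts[prev] * 100
        for prev, year in zip(years, years[1:])
    }


growth = yoy_growth(cve_counts)
for year, pct in growth.items():
    print(f"{year}: {pct:+.1f}% vs {year - 1}")
```

Because 2025 is quoted as “over 48,000,” the figure this produces for 2025 is best read as a lower bound.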

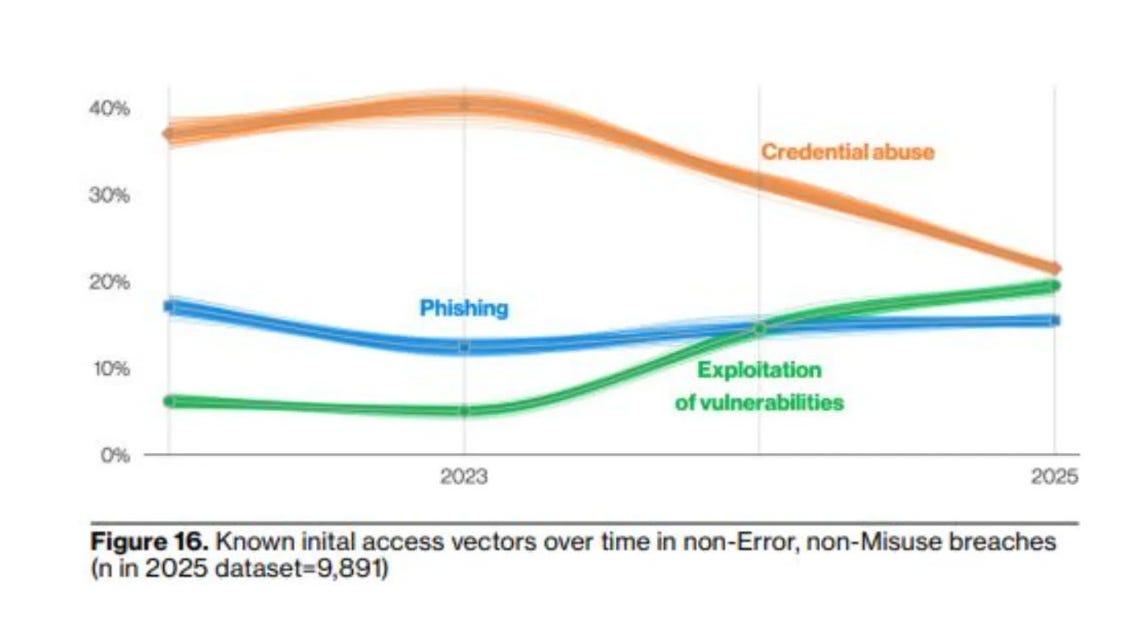

This is a point I’ve been tracking for some time. In “Vulnerability Velocity and Exploitation Enigma’s,” I discussed how vulnerability exploitation is overtaking phishing as a primary attack vector, a trend the 2024 Verizon DBIR confirmed when vulnerability exploitation nearly tripled, growing 180% over the previous year’s report. I covered that specifically in “The DBIR is Entering its Vulnerability Era,” where the data made clear that the industry’s relationship with vulnerabilities was undergoing a fundamental shift.

The 2025 DBIR continued the trend, reporting a 34% year-over-year rise in breaches initiated via vulnerability exploitation. We’re not looking at a temporary spike. We’re looking at the new normal, and it’s only going to intensify as AI-assisted development tools accelerate code production, and with it, vulnerability production.

Developers using AI coding assistants are reporting 40%+ productivity gains, with many claiming AI is writing outsized portions of their code. More code, produced faster, with less human (or any) review. The attack surface isn’t just growing, it’s being manufactured at industrial scale.

You Can’t Teach Someone to Swim When They’re Drowning

Here’s the uncomfortable truth that the Zero Day Clock makes impossible to ignore: telling organizations to patch faster is not a strategy when the exploitation window has collapsed to hours and the vulnerability volume has grown 520% in a decade.

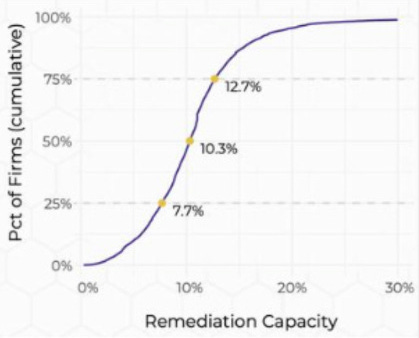

As I wrote in “You Can’t Teach Someone to Swim When They’re Drowning,” despite mountains of evidence and data that organizations simply can’t “patch all the things,” it remains the primary proposed advice. Researchers from the Cyentia Institute have demonstrated that organizations can only remediate about 10% of new vulnerabilities per month.

Vulnerability management leaders like Qualys have shown that exploitation windows generally outpace organizations’ ability to keep up. Research from Rezilion and the Ponemon Institute has demonstrated that the average organization has vulnerability backlogs numbering in the hundreds of thousands, and in large, complex environments, millions.

The Zero Day Clock’s own math drives this home brutally. When a vendor releases a security patch, AI can now reverse-engineer that patch, identify the vulnerability it fixes, and generate a working weaponized exploit in minutes. Attacks can begin propagating within hours. But organizations need an average of 20 days to test and deploy that same patch. The act of fixing a vulnerability now accelerates its exploitation. The defense creates the offense. And the offense arrives weeks before the defense can finish deploying. Organizations are exposed for 99.9% of the vulnerability lifecycle. Monthly patch cycles become theater, providing a false sense of security that doesn’t match the asymmetric reality.
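The exposure figure is easier to reason about as arithmetic. The sketch below is one way to read it, not the Zero Day Clock’s own calculation: it takes the 20-day average patch deployment time from the text and assumes, purely for illustration, that a working exploit exists 30 minutes after the patch ships. The function name `exposed_fraction` and that 30-minute value are assumptions.

```python
from datetime import timedelta


def exposed_fraction(time_to_exploit: timedelta, time_to_patch: timedelta) -> float:
    """Share of the patch-release-to-deployment window in which an exploit
    already exists but the fix has not yet been deployed."""
    if time_to_patch <= timedelta(0):
        raise ValueError("time_to_patch must be positive")
    exposed = max(time_to_patch - time_to_exploit, timedelta(0))
    return exposed / time_to_patch  # timedelta / timedelta yields a float


patch_window = timedelta(days=20)              # average from the text
assumed_exploit_time = timedelta(minutes=30)   # illustrative assumption

fraction = exposed_fraction(assumed_exploit_time, patch_window)
print(f"Exposed for {fraction:.1%} of the patch window")
```

Under those assumptions the result lands at roughly 99.9%. Even if the exploit took a full day to appear, the exposed share of a 20-day window would still be 95%.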

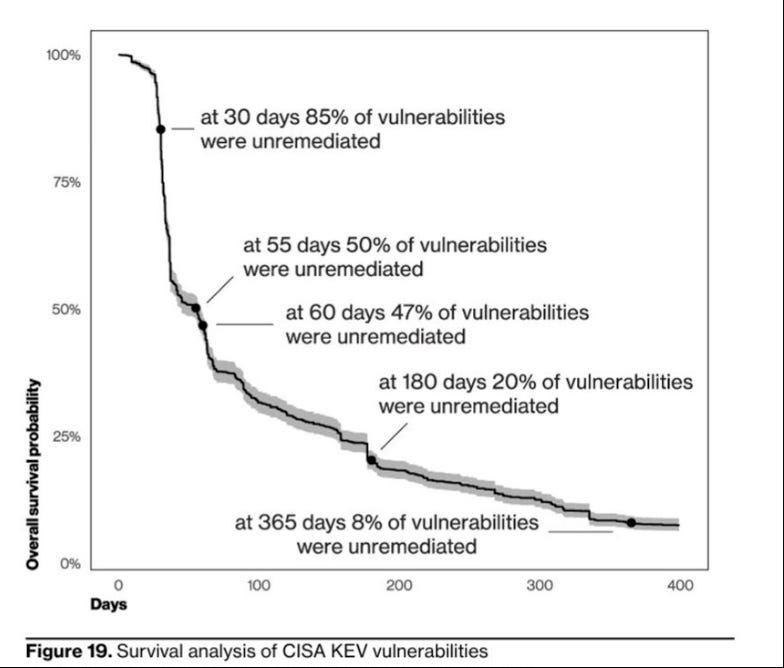

The CISA KEV data reinforces this. It takes organizations around 55 days to remediate 50% of vulnerabilities once a patch is available, patching doesn’t pick up until the 30-day mark, and by the end of the year nearly 10% are still open. When the median TTE is measured in hours, those timelines aren’t just inadequate, they’re irrelevant.

Context Is the Only Lifeline

If we can’t patch everything, and we can’t outrun automated exploitation, then the only viable path forward is ruthless prioritization grounded in context, and the data supports this. Firms like Endor Labs have shown through reachability analysis that 92% of vulnerabilities have none of the context signals that matter (known exploitation, a high likelihood of exploitation based on EPSS, reachability in the running application, or existing exploits and PoCs) and are purely noise.

That means the vast majority of the vulnerability firehose can be deprioritized if you have the right signals. But most organizations don’t. They’re still sorting by CVSS severity, which is inherently flawed since fewer than 10% of all CVEs per year are ever actually exploited. They’re treating every critical and high finding as urgent when the actual exploited population is a fraction of a percent. Only about 0.2% of published vulnerabilities are used in ransomware attacks or by APTs, yet 24.2% of organizations were vulnerable to a CVE known to be used by those groups in 2024.

Runtime context, reachability analysis, exploit intelligence, and environmental factors: these are what separate actionable risk from noise. The Zero Day Clock isn’t just an argument for faster patching. It’s an argument for smarter defense: knowing what’s actually reachable, what’s actually being exploited, and what actually matters in your specific environment.
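As a rough illustration of what context-first triage can look like, here is a minimal sketch that ranks findings by the signals discussed above rather than by CVSS alone. Everything in it is hypothetical: the `Finding` fields, the tier rules, the `EPSS_THRESHOLD` value, and the placeholder CVE IDs are assumptions for the example, not any vendor’s or Endor Labs’ actual scoring model.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    cve_id: str
    cvss: float           # base severity score, 0.0 to 10.0
    in_kev: bool          # listed in a known-exploited catalog
    epss: float           # estimated exploitation probability, 0.0 to 1.0
    reachable: bool       # vulnerable code is reachable in the running app
    public_exploit: bool  # a public exploit or PoC exists


EPSS_THRESHOLD = 0.10  # hypothetical cutoff; tune to your own risk appetite


def priority(f: Finding) -> int:
    """Lower number means fix sooner. Tier rules are illustrative assumptions."""
    if f.reachable and f.in_kev:
        return 0  # reachable and known to be exploited
    if f.reachable and (f.public_exploit or f.epss >= EPSS_THRESHOLD):
        return 1  # reachable and likely to be exploited
    if f.reachable or f.in_kev:
        return 2  # some signal, but not urgent
    return 3      # no context signal: treat as noise for now


def triage(findings):
    """Sort by tier first, then by EPSS, and only then by CVSS."""
    return sorted(findings, key=lambda f: (priority(f), -f.epss, -f.cvss))


findings = [
    Finding("CVE-0000-0001", 9.8, False, 0.01, False, False),  # critical but unreachable
    Finding("CVE-0000-0002", 7.5, True, 0.60, True, True),     # exploited and reachable
    Finding("CVE-0000-0003", 6.1, False, 0.25, True, False),   # reachable, elevated EPSS
    Finding("CVE-0000-0004", 8.8, False, 0.02, True, False),   # reachable, low EPSS
]

for f in triage(findings):
    print(priority(f), f.cve_id, f.cvss)
```

Note how the 9.8 “critical” ends up last in this toy queue: with no exploitation signal and no reachable code path, its severity score alone does not earn it a place ahead of a reachable, actively exploited 7.5.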

The Remediation Crisis: Finding Was Never the Hard Part

Here’s the uncomfortable truth that gets lost in the excitement over AI-powered vulnerability discovery and the anxiety over collapsing exploitation windows: finding vulnerabilities has never been the hard part. The hard part is fixing them.

The industry doesn’t have a detection deficit; it has a remediation crisis. Until we confront that reality head-on, no amount of faster scanning, smarter prioritization, or more comprehensive CVE databases will close the gap the Zero Day Clock is tracking.

As I discussed in my recent piece “Claude Code Security: A Reasoned Take on What It Means for AppSec,” every organization I speak to is sitting on vulnerability backlogs growing faster than they can be triaged, let alone remediated. Adding a more powerful discovery engine without addressing the remediation bottleneck risks making the problem worse, not better. You’re not solving the drowning problem by pointing out more water.

The root cause isn’t technical, it’s operational and organizational. Engineering teams are under relentless pressure to ship features, hit revenue targets, and maintain velocity. Security remediation competes directly with those priorities, and usually loses.

Developers are already stretched thin, and asking them to context-switch into fixing scanner-flagged vulnerabilities without sufficient context is a losing battle. Vulnerability backlogs balloon because incentive structures, resource constraints, and competing interests make sustained remediation operationally brutal. This is before you even get to the technical complexity of remediation itself: the risk of breaking something in production, dependency chains that cascade across services, and the regression testing required to validate that a fix doesn’t introduce new problems.

This is why the emerging conversation around AI-assisted and automated remediation matters so much, and why it’s been conspicuously absent from the industry’s AI hype cycle until recently.

We’ve spent the last several years celebrating AI’s ability to find bugs faster, generate exploits cheaper, and scan codebases more comprehensively. But the remediation side of the equation, the side that actually reduces risk, has been largely untouched by innovation. The reason is straightforward: remediation is messy. It’s not a clean input-output problem. Fixing a vulnerability in a real enterprise environment means navigating internal politics, change management processes, deployment pipelines, testing infrastructure, and the ever-present risk that the fix breaks something customers depend on, at which point security itself, ironically, impacts availability, the “A” in the CIA triad.

While we are seeing some innovation in this space, we’re not there yet. AI-generated patches still require validation, regression testing, and human judgment about whether a fix is safe to deploy. The complexity of enterprise environments means automated remediation won’t be a flip-the-switch solution. But the direction is clear, and it’s the right one. The industry has spent decades optimizing the left side of the vulnerability lifecycle: finding issues faster, triaging them smarter, prioritizing them better.

The organizations that will actually close the gap the Zero Day Clock is measuring will be the ones that invest equally in the right side, which is fixing the issues at machine speed, with machine intelligence, while keeping humans in the loop for the decisions that matter.

The asymmetry Epp describes through the Verifier’s Law, that offense learns faster because verification is cheap, doesn’t have to be permanent. If we can make remediation verification cheaper and faster through AI-assisted testing, automated regression analysis, and intelligent patch generation, we start to close the verification gap on the defensive side. While it sounds like fantasy, it’s an engineering problem, and for the first time, we have tools sophisticated enough to explore solving it.
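To make “cheaper verification for defense” a little more concrete, here is a toy sketch of a binary fix verifier: a candidate patch passes only if it still satisfies the regression cases and also blocks the known exploit input. This is an illustration of the idea, not Epp’s method or any product’s pipeline; the filename validator, the test inputs, and the name `verify_fix` are all hypothetical.

```python
from typing import Callable, Iterable, Tuple


def verify_fix(
    patched: Callable[[str], bool],
    regression_cases: Iterable[Tuple[str, bool]],
    exploit_inputs: Iterable[str],
) -> bool:
    """Return True only if legitimate behavior is unchanged AND every
    known exploit input is now rejected. A fast yes/no signal for a patch."""
    regressions_ok = all(patched(arg) == expected for arg, expected in regression_cases)
    exploit_blocked = all(not patched(payload) for payload in exploit_inputs)
    return regressions_ok and exploit_blocked


# Toy target: a validator that decides whether an upload filename is allowed.
def vulnerable_allow(name: str) -> bool:
    return name.endswith(".txt")  # accepts path traversal payloads


def patched_allow(name: str) -> bool:
    return name.endswith(".txt") and ".." not in name and not name.startswith("/")


def overly_strict_allow(name: str) -> bool:
    return False  # "secure", but breaks every legitimate upload


regression_cases = [("report.txt", True), ("image.png", False)]
exploit_inputs = ["../../etc/passwd.txt"]

for candidate in (vulnerable_allow, patched_allow, overly_strict_allow):
    verdict = verify_fix(candidate, regression_cases, exploit_inputs)
    print(f"{candidate.__name__}: {'accept' if verdict else 'reject'}")
```

The point of the toy is the shape of the feedback: the unpatched function fails because the exploit still works, the overly strict one fails because it breaks legitimate use, and only the real fix gets a clean yes. Real environments need far richer regression suites, but the closer defenders get to a signal this crisp, the more of the offense’s learning-loop advantage they claw back.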

The Call to Action: Structural Change, Not Better Tools

Epp’s Zero Day Clock doesn’t stop at diagnosis. It includes a Call to Action with ten demands that read less like vendor marketing and more like a manifesto for structural reform. Vendor liability, to make insecurity expensive for the people who create it. Security by default, built into the platform, the framework, the infrastructure. Disposable architecture: build to rebuild, not to patch.

Mandating memory-safe languages for new critical infrastructure, given that approximately 70% of all critical security vulnerabilities in large C and C++ codebases are memory-safety bugs. Open-sourcing the defense, so AI-powered security tools are free and accessible to every defender, not just the ones who can afford them. And redesigning regulation for machine-speed defense, because every compliance requirement that adds latency to defensive response is a gift to the attacker.

Many of these echo arguments I’ve been making. The Secure-by-Design conversation cannot remain voluntary and toothless. The “just patch faster” advice is a failure of imagination that ignores the structural reality organizations face. The regulatory frameworks we rely on were built for a world that no longer exists, a point I’ve made about AI governance frameworks as well, where the most cited standards don’t even mention agentic AI despite it being the fastest-moving risk vector in the enterprise landscape.

But here’s the demand I think matters most: bridging the gap between hackers and policy. As Jeff Moss put it, the hacker community has been the canary in the coal mine for 30 years.

The people who understand how systems break, the security researchers, the ethical hackers, the competition winners, are almost entirely disconnected from the people who write policy, allocate budgets, and govern critical infrastructure. If we want governance and regulation that actually works at the speed this problem demands, the technical community has to be in the room where decisions are made.

The Clock Is Ticking

The Zero Day Clock is, at its core, a data visualization project. But it’s also a mirror. It reflects back to us every structural failure, every perverse incentive, every warning we ignored for the past two decades. The economic externalities Anderson identified in 2001 haven’t been fixed. The market dynamics Schneier described in 2007 haven’t changed. The patch paradox Flake exposed in 2004 has been supercharged by AI, and the exploitation timelines that once gave defenders breathing room have compressed to the point of irrelevance.

Sergej Epp’s contribution with the Zero Day Clock isn’t inventing a new concept; it’s making an old crisis impossible to look away from. The data fits an exponential decay curve. This is not a trend that stabilizes. It is a collapse, and organizations that are still relying on monthly patch cycles, CVSS-based prioritization, and voluntary commitments from vendors to ship secure software are operating in a fantasy.

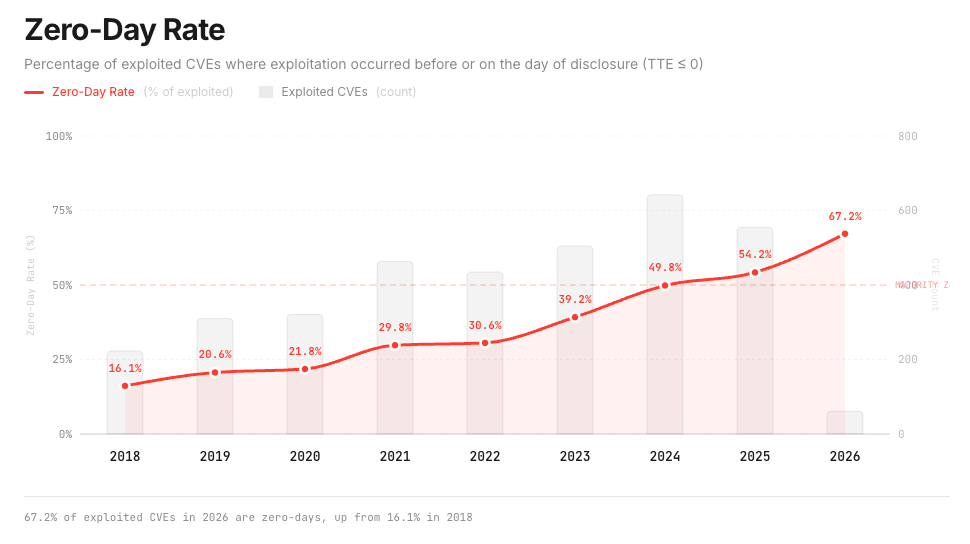

The Zero Day Clock’s most sobering data point may be the zero-day rate itself: 67.2% of exploited CVEs in 2026 are zero-days, up from 16.1% in 2018.

When two-thirds of exploited vulnerabilities are weaponized before or on the day of disclosure, the entire model of reactive security, find it, disclose it, patch it, deploy it, has already failed. We’re not approaching a breaking point, we’ve already passed it.

The question isn’t whether the exploitation window is collapsing; the data is clear. The question is whether we’ll treat this as the structural crisis it is, demanding vendor accountability, regulatory modernization, and fundamental changes to how we build and operate software, or whether we’ll keep asking organizations to swim faster while they drown.

The clock is ticking, the gap is closing, and for an alarming number of vulnerabilities, it’s already at zero. Will the industry wake up, or just keep pressing snooze?