The Attack Surface Exponential

Code Surge: GitHub's Exponential Growth and the Attack Surface Nobody Is Ready For

This is a follow-up to my earlier piece, Vulnpocalypse: AI, Open Source and the Vulnerability Tidal Wave. In that article, I laid out the case that AI-accelerated vulnerability discovery was about to overwhelm the industry’s capacity to respond.

What I want to do here is zoom in on the production side of the equation. The code itself is growing at a rate that should worry every security team. The math behind the attack surface expansion is not complicated, but it is concerning.

The Billion-Commit Baseline

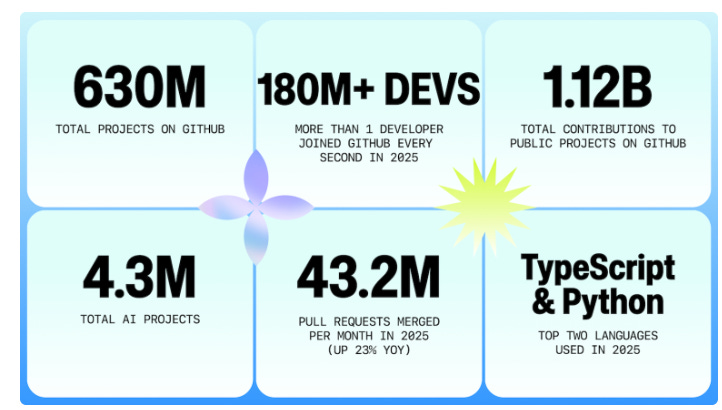

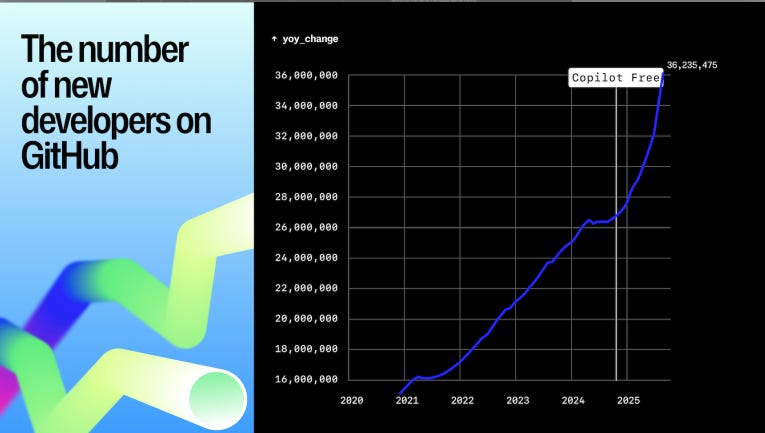

The GitHub Octoverse 2025 report tells a staggering growth story. GitHub now has more than 150 million developers. Over 36 million new developers joined in the past year alone, which works out to more than one new developer every second. Developers pushed nearly 1 billion commits in 2025, up 25.1% year over year, and created more than 230 new repositories every minute. Merged pull requests averaged 43.2 million per month, up 23% year over year.

Those numbers were already unprecedented. Then 2026 happened.

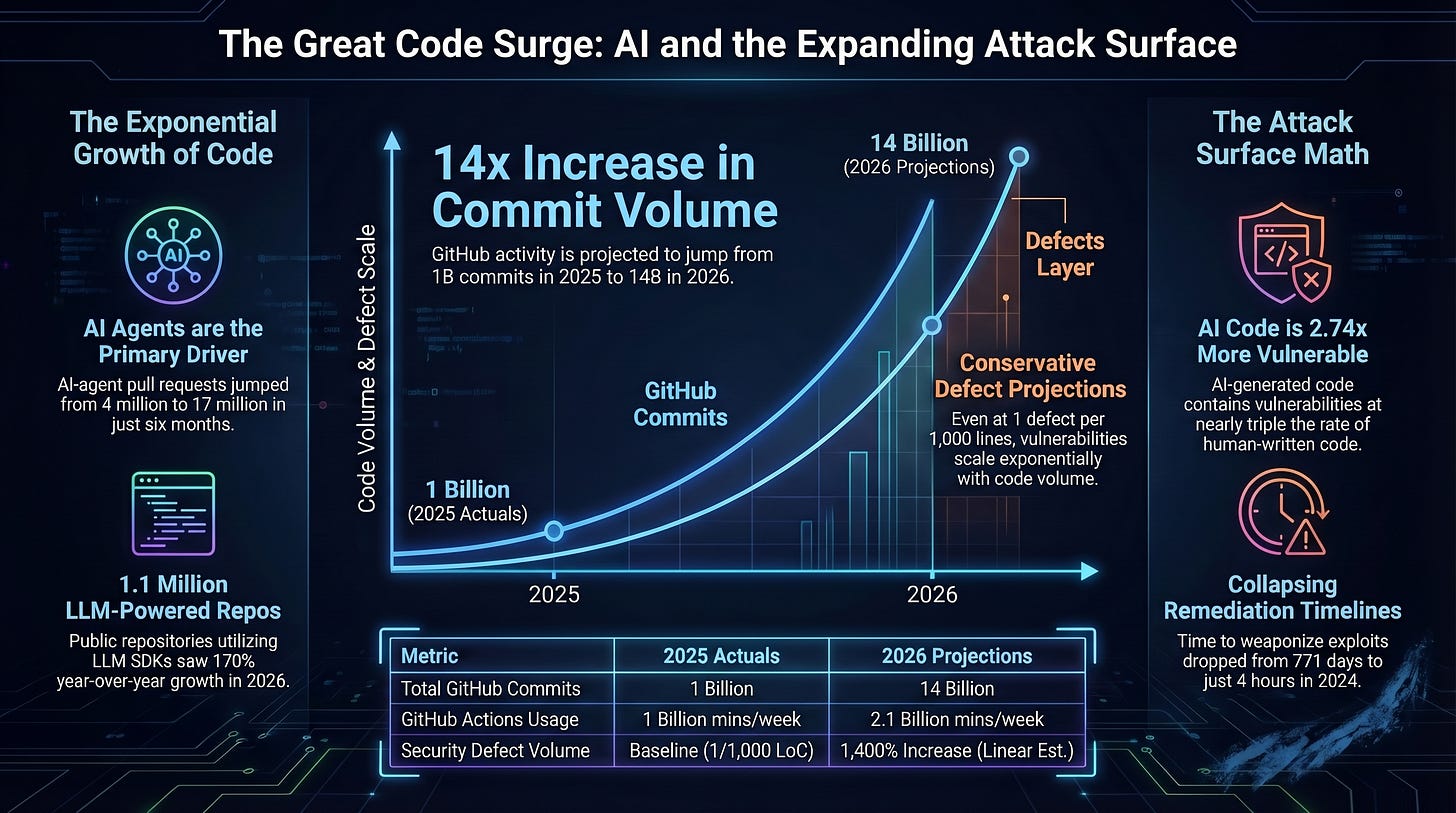

In April 2026, GitHub COO Kyle Daigle posted that platform activity had surged to 275 million commits per week, putting GitHub on pace for 14 billion commits this year if growth remains linear. His parenthetical was telling: “Spoiler: it won’t.” He added that GitHub Actions usage had grown from 500 million minutes per week in 2023, to 1 billion in 2025, to 2.1 billion minutes in a single week in early April 2026. That is a quadrupling in three years for infrastructure that was already running at enormous scale.
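Daigle's projection and the Actions growth are easy to sanity-check. The minimal Python sketch below uses only the figures quoted above. It annualizes the weekly commit rate assuming that rate stays flat for 52 weeks (which, per Daigle's own spoiler, likely understates the real total), and computes the Actions growth multiple along with its implied compound annual growth rate over the three years.

```python
# Figures quoted above. The flat weekly rate is an assumption for the projection.
WEEKLY_COMMITS_APRIL_2026 = 275_000_000
WEEKS_PER_YEAR = 52

# Weekly GitHub Actions minutes at each reported point in time.
ACTIONS_MINUTES_PER_WEEK = {2023: 500_000_000, 2025: 1_000_000_000, 2026: 2_100_000_000}


def annualize(weekly: int, weeks: int = WEEKS_PER_YEAR) -> int:
    """Project a weekly rate over a full year, assuming the rate stays flat."""
    return weekly * weeks


def growth_multiple(start: float, end: float) -> float:
    """How many times larger `end` is than `start`."""
    return end / start


def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate implied by going from start to end in `years`."""
    return (end / start) ** (1 / years) - 1


if __name__ == "__main__":
    projected = annualize(WEEKLY_COMMITS_APRIL_2026)
    print(f"Flat-rate 2026 projection: {projected / 1e9:.1f}B commits")
    first, last = ACTIONS_MINUTES_PER_WEEK[2023], ACTIONS_MINUTES_PER_WEEK[2026]
    multiple = growth_multiple(first, last)
    rate = cagr(first, last, years=3)
    print(f"Actions minutes/week 2023 to 2026: {multiple:.1f}x (~{rate:.0%} per year)")
```

Running it gives a flat-rate projection of 14.3 billion commits and a 4.2x increase in weekly Actions minutes, or roughly 61% compound growth per year.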

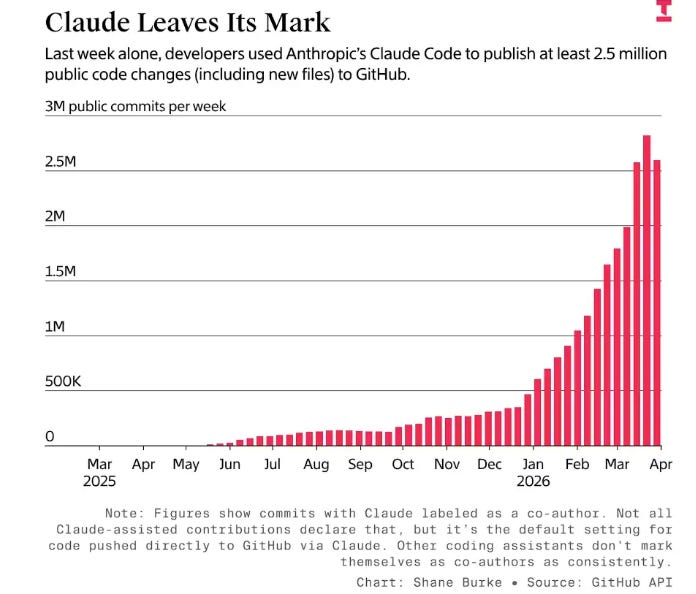

What is driving this? AI coding agents. According to reporting from The Information, AI-agent pull requests jumped from roughly 4 million in September to 17 million in March. The weekly frequency of code submissions to public GitHub projects using Claude Code alone increased roughly 25-fold in six months, from around 100,000 commits to over 2.5 million by late March 2026.
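To put those jumps in month-over-month terms, the minimal Python sketch below computes the compound monthly growth rate implied by each pair of figures. It assumes steady compounding across the six months from September to March, since the reporting gives only the endpoints.

```python
def compound_monthly_growth(start: float, end: float, months: int) -> float:
    """Average month-over-month growth rate implied by start -> end over `months`."""
    if start <= 0 or months <= 0:
        raise ValueError("start and months must be positive")
    return (end / start) ** (1 / months) - 1


# Approximate figures quoted above (September to March is six months).
agent_prs = compound_monthly_growth(4_000_000, 17_000_000, months=6)
claude_code_commits = compound_monthly_growth(100_000, 2_500_000, months=6)

print(f"AI-agent PRs: ~{agent_prs:.0%} per month")                        # ~27%
print(f"Claude Code weekly commits: ~{claude_code_commits:.0%} per month")  # ~71%
```

A sustained 71% monthly growth rate is what a 25-fold increase over six months looks like once it is spread evenly across the period.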

Claude Code, OpenAI’s Codex CLI, Cursor, Windsurf, and a growing ecosystem of open-source alternatives have gone from curiosities to standard workflow components in less than a year.

This is an exponential growth curve, and one with security implications we will discuss below.

The Platform Is the Economy

To understand why this matters for security, consider the centrality of the platform itself. GitHub is not just a code hosting service. It is the dominant global software supply chain, and its former CEO recognized that the platform’s architecture was not built for the era that is arriving.

Thomas Dohmke stepped down as GitHub CEO and in February 2026 launched Entire, a new developer platform backed by a $60 million seed round at a $300 million valuation. In his Hello Entire World blog post, Dohmke framed the problem directly: manual software production systems were never designed for the era of AI. He compared the current moment to how automotive companies replaced craft-based production with assembly lines, and positioned Entire as the platform where agents and humans collaborate, learn, and ship together.

Dohmke is building around three pillars: a Git-compatible database unifying code, intent, constraints, and reasoning; a universal semantic reasoning layer enabling multi-agent coordination; and an AI-native user interface for agent-human collaboration.

The fact that the person who ran GitHub for nearly four years looked at the trajectory and concluded a new platform was needed tells you something about the scale of what is happening. Others have also reported that GitHub is facing challenges from the rise in automation and traffic.

The Attack Surface Math

Here is where cybersecurity leaders need to pay attention. The relationship between code volume and attack surface is not theoretical; it is arithmetic, and it has strong implications.

Industry research often places software defect density at 5 to 20 defects per thousand lines of code. Even well-maintained, heavily audited open source projects tend to land in the range of 1 to 5 defects per thousand lines. For the sake of argument, let’s use a conservative estimate and assume the lower end of that range.

If GitHub processed roughly 1 billion commits in 2025 and is on pace for 14 billion in 2026, the volume of code being committed is growing by an order of magnitude in a single year. Even assuming a modest and stable vulnerability rate per line of code, the absolute number of vulnerabilities being introduced scales in direct proportion to the code, so it grows exponentially alongside the code itself.
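The arithmetic can be written down directly: expected defects equal thousands of lines written (KLOC) multiplied by defect density. In the minimal Python sketch below, `LINES_PER_COMMIT` is a hypothetical placeholder rather than a measured GitHub statistic, and the density uses the conservative 1 defect per KLOC from the ranges above. Not every defect is an exploitable vulnerability, so the absolute totals are illustrative only; the year-over-year ratio is what matters, and it does not depend on the lines-per-commit assumption.

```python
# Illustrative only: LINES_PER_COMMIT is a hypothetical placeholder, not a
# measured GitHub statistic. The ratio between years does not depend on it.
LINES_PER_COMMIT = 20      # hypothetical average net new lines per commit
DEFECTS_PER_KLOC = 1.0     # conservative low end of the ranges cited above


def expected_defects(commits: float,
                     lines_per_commit: float = LINES_PER_COMMIT,
                     defects_per_kloc: float = DEFECTS_PER_KLOC) -> float:
    """Expected defects introduced = KLOC written x defect density."""
    kloc = commits * lines_per_commit / 1000
    return kloc * defects_per_kloc


defects_2025 = expected_defects(1e9)    # roughly 1 billion commits in 2025
defects_2026 = expected_defects(14e9)   # 2026 projection at the April run rate

print(f"2025: {defects_2025:,.0f} defects")
print(f"2026: {defects_2026:,.0f} defects")
print(f"Growth: {defects_2026 / defects_2025:.0f}x")  # 14x for any lines_per_commit
```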

There’s also an argument to be made that the vulnerability rate is not stable and may in fact be getting worse.

Research consistently shows that AI-generated code contains vulnerabilities at 2.74 times the rate of human-written code. CodeRabbit’s December 2025 report found AI-generated code had 70% more errors than human-written code, and the errors were more severe.

AI co-authored code showed 1.7 times more major issues, misconfigurations were 75% more frequent, and security vulnerabilities appeared at nearly triple the human baseline. Meanwhile, code churn is up 41%, code duplication has quadrupled, and the kind of careful refactoring that keeps codebases healthy has collapsed from 25% of changed lines in 2021 to under 10% by 2024.
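The two effects compound. The minimal Python sketch below assumes a codebase's vulnerability rate is a simple weighted average of the human baseline and the 2.74x AI multiplier cited above, and shows how that blended rate rises with the share of AI-generated code. The shares in the loop are hypothetical values chosen for illustration, not measurements.

```python
AI_VULN_MULTIPLIER = 2.74  # AI-generated vs. human-written code, as cited above


def blended_multiplier(ai_share: float, ai_multiplier: float = AI_VULN_MULTIPLIER) -> float:
    """Vulnerability rate of new code relative to an all-human baseline.

    ai_share is the fraction of new code that is AI-generated (0.0 to 1.0);
    the human-written remainder contributes at the baseline rate of 1.0.
    """
    if not 0.0 <= ai_share <= 1.0:
        raise ValueError("ai_share must be between 0 and 1")
    return ai_share * ai_multiplier + (1.0 - ai_share) * 1.0


# Hypothetical AI shares of new code, for illustration only.
for share in (0.0, 0.25, 0.5, 0.75):
    print(f"AI share {share:.0%}: {blended_multiplier(share):.2f}x baseline")
```

For example, under the hypothetical assumption that half of new code is AI-generated, the blended rate is 1.87x baseline. Combined with the roughly 14x growth in commits projected for 2026, expected vulnerabilities would grow by about 14 × 1.87 ≈ 26x.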

While there is a large “it depends” based on the user, model, context, and more, many reports from both academia and industry highlight the vulnerabilities and risks of AI-generated code. These include SusVibes, BaxBench, CMU’s Benchmarking Vulnerability of Agent-Generated Code in Real-World Tasks, and many more.

So we are not just producing more code. We are producing more vulnerable code, faster, at a scale the industry has never seen. And this is happening while defenders are already drowning in vulnerability backlogs numbering in the hundreds of thousands to millions, and while AI is driving the mass industrialization of exploitation at a fraction of historical costs.

The Vibe Coding Multiplier

A significant share of this growth is coming from a new class of developer. The Octoverse report showed that 80% of new developers on GitHub use Copilot within their first week. Over 1.1 million public repositories now utilize an LLM SDK, with nearly 700,000 of those created in the past year alone, representing 178% year-over-year growth.

Many of these new developers are what the industry has started calling vibe coders. They are building applications using natural language prompts and AI assistants, often without deep understanding of the code being generated or the security implications of their design choices. I explored this in detail in A Security Vibe Check, and the picture is concerning.

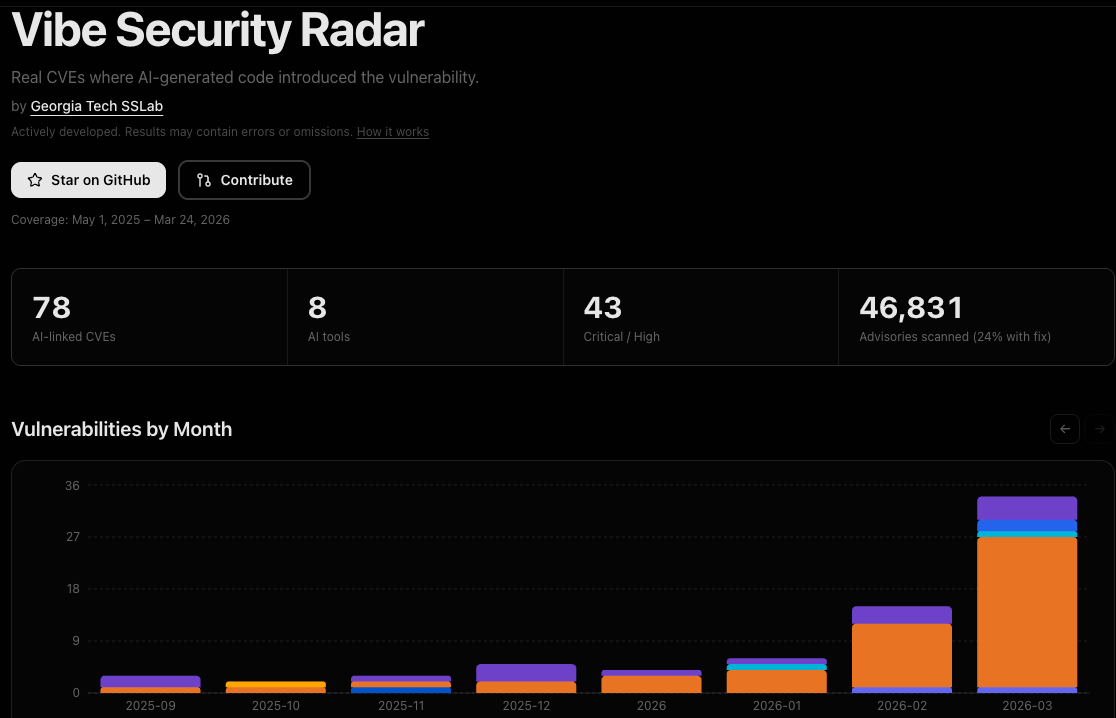

AI-assisted commits show a 3.2% secret-leak rate compared to a 1.5% baseline across all public GitHub commits, roughly doubling the rate of credential exposure. Georgia Tech’s Systems Software and Security Lab tracked at least 35 new CVEs disclosed in March 2026 alone that were the direct result of AI-generated code.

OWASP added a dedicated category to its Top 10 in 2025 specifically calling out AI-assisted development as a potential security risk.

This is not about blaming new developers. It is about recognizing that we are democratizing software creation without democratizing security knowledge, and the gap between those two curves is where attackers will live. Attackers weren’t exactly struggling to succeed before AI, either.

The Other Side of the Equation

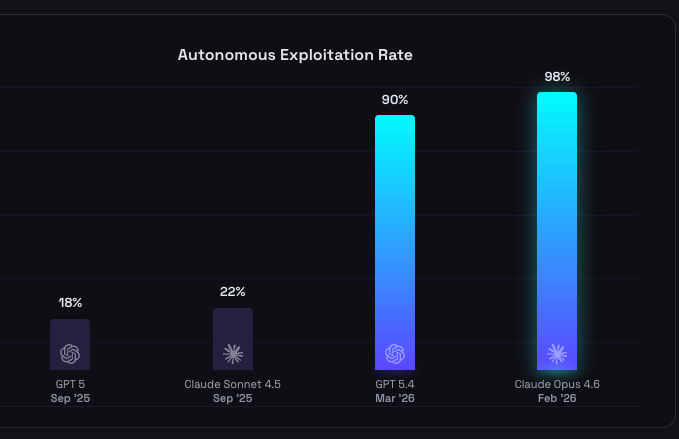

While the attack surface is expanding exponentially on the production side, the cost and effort required to find and exploit vulnerabilities on the offensive side is collapsing simultaneously.

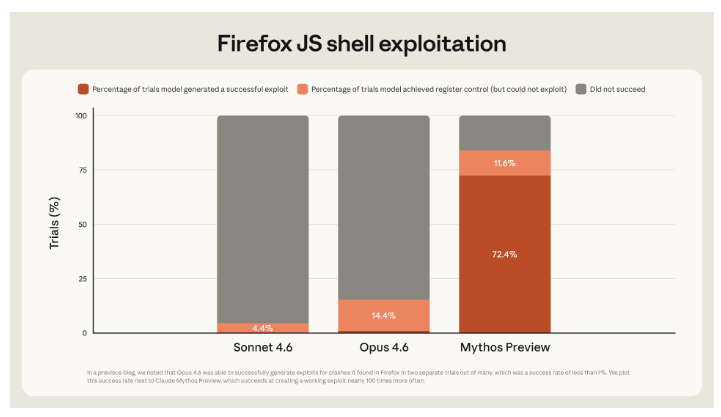

Anthropic announced Claude Mythos Preview in April 2026. This unreleased frontier model has already found thousands of high-severity vulnerabilities, including flaws in every major operating system and web browser.

It discovered a 27-year-old vulnerability in OpenBSD, one of the most security-hardened operating systems in the world, and autonomously chained together Linux kernel errors into a full exploitation path. Anthropic did not explicitly train Mythos for these capabilities. They emerged as a downstream consequence of general improvements in code reasoning and autonomy.

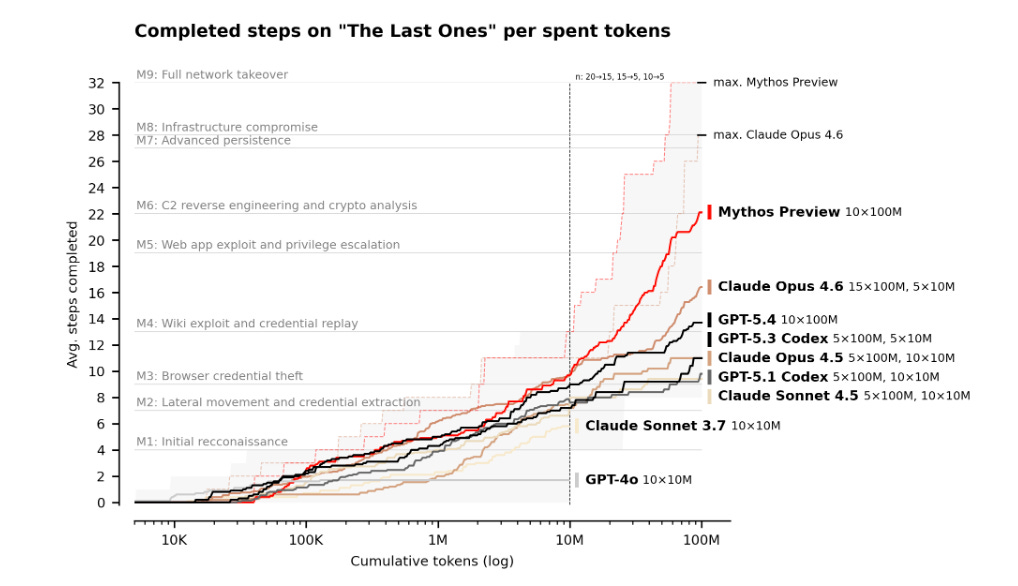

It isn’t just finding bugs, either. As the UK’s AI Security Institute demonstrated in its evaluation of Mythos, the model was able to carry out a complex, 32-step cyber operation, from initial reconnaissance through full network takeover, something no prior model, including Opus 4.6, had accomplished.

However, the intersection of cyber and AI isn’t siloed to a single model or vendor.

AISLE’s post-Mythos analysis introduced a critical insight: AI vulnerability discovery capability is jagged. It does not scale smoothly with model size, generation, or price. Their testing showed that even small, cheap models (a 3.6-billion-parameter model costing $0.11 per million tokens) could detect certain classes of bugs, such as a straightforward FreeBSD buffer overflow. The implication is that the barrier to entry for AI-assisted vulnerability discovery is not frontier model access. It is the system and expertise you build around the model.

MOAK (Mother of All KEVs) pushed this further, demonstrating the first agentic AI workflow capable of exploiting hundreds of known dangerous vulnerabilities in minutes: not just discovering them, but exploiting them.

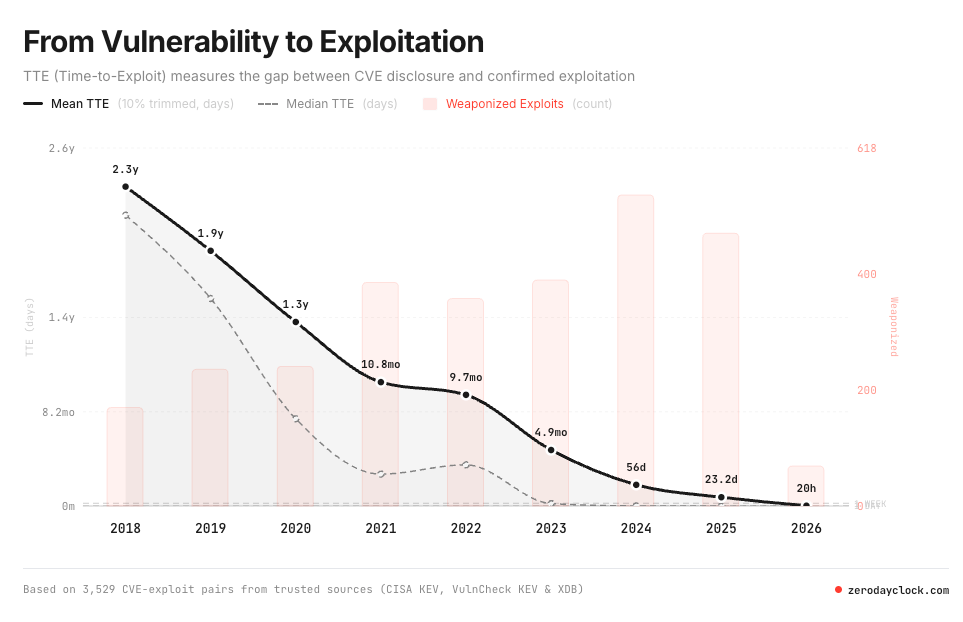

As I detailed in my piece on Vulnerability Velocity and Exploitation Timelines, the Zero Day Clock tracks this collapse in real time. Median time from disclosure to first exploit went from 771 days in 2018 to 6 days in 2023 to 4 hours in 2024. In 2025, the majority of exploited vulnerabilities were weaponized before they were even publicly disclosed. AI systems can now generate working CVE exploits in 10 to 15 minutes at approximately $1.00 per exploit. And 67.2% of exploited CVEs in 2026 are zero-days, up from 16.1% in 2018.
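Put side by side, those medians imply a dramatic compression of the defender's window. The short Python calculation below uses only the Zero Day Clock medians quoted above and expresses each year's window as a multiple of the 2018 baseline.

```python
from datetime import timedelta

# Median disclosure-to-first-exploit times quoted above (Zero Day Clock).
TIME_TO_EXPLOIT = {
    2018: timedelta(days=771),
    2023: timedelta(days=6),
    2024: timedelta(hours=4),
}


def compression_factor(earlier: timedelta, later: timedelta) -> float:
    """How many times shorter the later exploitation window is than the earlier one."""
    return earlier / later  # timedelta / timedelta yields a float ratio


baseline = TIME_TO_EXPLOIT[2018]
for year, window in TIME_TO_EXPLOIT.items():
    factor = compression_factor(baseline, window)
    print(f"{year}: median window {window}, {factor:,.0f}x shorter than 2018")
```

By this measure, the 2024 median window is 4,626 times shorter than in 2018: 771 days is 18,504 hours, versus 4.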

The Convergence Nobody Is Ready For

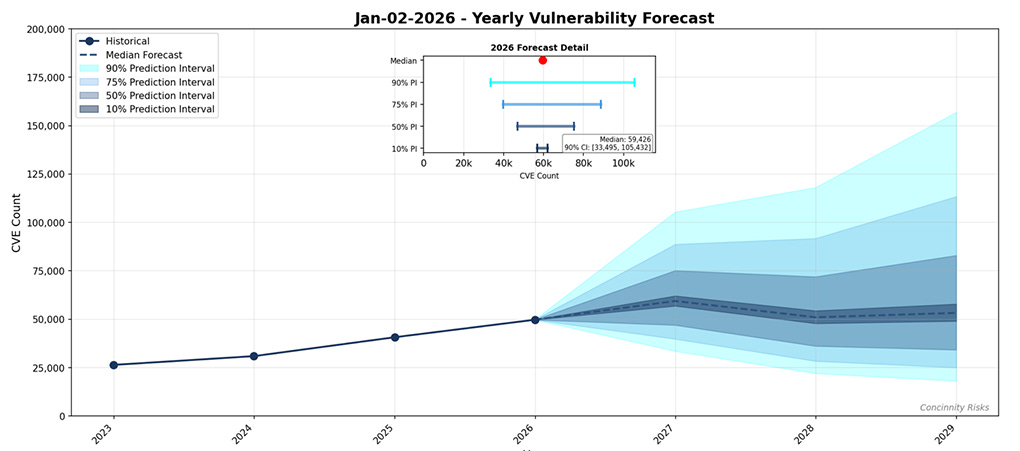

So let’s take a look at the themes unfolding in tandem. We have exponential growth in code volume, driven by AI agents and democratized development. We have elevated vulnerability density in that code, because much of it is AI-generated by developers who are less security-conscious than the experienced practitioners who came before them. We have an absolute explosion in the number of CVEs, with FIRST forecasting approximately 59,000 new CVEs in 2026 alone, and we have AI making it trivially cheap to discover and exploit those vulnerabilities.

These are not parallel trends. They are converging, and that convergence will be problematic.

As I discussed with Varun Badhwar on Resilient Cyber, the industry has been talking about shifting security left for over a decade, but the code is now being produced faster than security teams can review it, scan it, triage it, or remediate the findings.

Traditional AppSec programs were built for a world where developers wrote code, security teams reviewed it, and the pace of production was bounded by human typing speed and review cycles. That world is now gone. I also discussed this in a recent episode of Resilient Cyber with Jack Cable, cofounder and CEO of AppSec vendor Corridor, including his take on a category they call “Agentic Coding Security Management.”

The 14 billion commits projected for 2026 on GitHub alone represent just one platform. It does not account for GitLab, Bitbucket, internal enterprise repositories, or the growing ecosystem of AI-native development platforms like Entire that are being built specifically to accelerate this further. When Thomas Dohmke sees enough momentum to leave the CEO chair at GitHub and raise $60 million to build for this future, the signal is pretty clear.

What This Means for Security Leaders

The implications are systemic and will inevitably impact every enterprise, organization and security team.

First, vulnerability management programs need to accept that they will never scan, triage, and patch their way to safety at these volumes. The backlog is growing faster than any team can reduce it. Prioritization based on exploitability, reachability, and business context is no longer a nice-to-have. It is the only viable operating model and even then, isn’t a panacea on its own.
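To make that prioritization model concrete, here is a minimal Python sketch that ranks findings by exploitability, reachability, and business context rather than raw severity alone. The `Finding` fields, the multipliers (3x for known exploitation, 0.2x for unreachable code), and the `priority` and `triage` helpers are all hypothetical choices for illustration, not a standard scoring model. Real programs would draw these signals from sources such as exploit catalogs, reachability analysis, and asset inventories.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    """A vulnerability finding; all fields and weights here are hypothetical."""
    finding_id: str
    cvss: float               # base severity, 0.0 to 10.0
    known_exploited: bool     # e.g. listed in a known-exploited-vulnerabilities catalog
    reachable: bool           # vulnerable code path is reachable from an entry point
    asset_criticality: int    # business context, 1 (low) to 5 (crown jewels)


def priority(f: Finding) -> float:
    """Illustrative risk score: severity scaled by exploitability, reachability, context."""
    score = f.cvss
    score *= 3.0 if f.known_exploited else 1.0   # active exploitation dominates
    score *= 1.0 if f.reachable else 0.2         # unreachable code is heavily discounted
    score *= f.asset_criticality / 5             # weight by business impact
    return score


def triage(findings: list[Finding], budget: int) -> list[Finding]:
    """Return the `budget` highest-priority findings the team can actually fix."""
    return sorted(findings, key=priority, reverse=True)[:budget]


# Placeholder findings, for illustration only.
findings = [
    Finding("FINDING-1", 9.8, known_exploited=False, reachable=False, asset_criticality=2),
    Finding("FINDING-2", 7.5, known_exploited=True, reachable=True, asset_criticality=5),
    Finding("FINDING-3", 6.1, known_exploited=False, reachable=True, asset_criticality=4),
]

for f in triage(findings, budget=2):
    print(f.finding_id, round(priority(f), 2))
```

In this toy example, the 9.8-severity finding falls to the bottom because it is unreachable and sits on a low-criticality asset, while the actively exploited, reachable 7.5 rises to the top. That reordering is the point of the operating model, though it is still not a panacea on its own.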

Second, security guardrails need to move into the development workflow itself. When 80% of new developers are using AI coding assistants in their first week, security controls need to be embedded in those same tools and pipelines. This means hooks, inline enforcement, policy-as-code, and runtime governance, not after-the-fact scanning.
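As one concrete example of a guardrail in the commit path, here is a minimal Python sketch of a Git pre-commit hook that blocks commits whose staged additions match common credential shapes, the kind of leak behind the 3.2% secret-leak rate cited earlier. It assumes the script is saved as `.git/hooks/pre-commit` and made executable; Git aborts the commit when a pre-commit hook exits non-zero. The three regexes and the `find_secrets` helper are an illustrative sample, not a complete ruleset, and a production setup would rely on a maintained secret scanner.

```python
#!/usr/bin/env python3
"""Minimal pre-commit hook sketch: block commits whose staged changes look like secrets."""
import re
import subprocess
import sys

# A few well-known credential shapes (illustrative, not exhaustive).
SECRET_PATTERNS = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "GitHub personal access token": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "Private key block": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
}


def find_secrets(diff_text: str) -> list[tuple[str, str]]:
    """Return (pattern name, offending line) for each added line that matches."""
    hits = []
    for line in diff_text.splitlines():
        # Only inspect added lines, skipping the '+++ b/<file>' header lines.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((name, line))
    return hits


def main() -> int:
    try:
        staged = subprocess.run(
            ["git", "diff", "--cached", "--unified=0"],
            capture_output=True, text=True, check=True,
        ).stdout
    except (OSError, subprocess.CalledProcessError):
        # Not inside a repository or git unavailable: skip rather than crash.
        return 0
    hits = find_secrets(staged)
    for name, line in hits:
        print(f"Possible {name}: {line[:80]}", file=sys.stderr)
    return 1 if hits else 0


if __name__ == "__main__":
    status = main()
    if status:
        sys.exit(status)
```

Only added lines are checked, so removing a leaked key in a later commit is not blocked. Inline enforcement like this catches the leak before it ever reaches a public repository, which after-the-fact scanning cannot do.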

Third, organizations need to reckon with the fact that their attack surface is no longer something they can fully inventory, let alone fully secure. The volume of code, the velocity of production, and the proliferation of AI-generated components mean that risk management, not risk elimination, is the only realistic framing. That is why so many CISOs and security practitioners on platforms such as LinkedIn are now discussing “Resilience” as a key theme. I’m biased, given the name of this outlet, but I like the trend, and it’s a good place for teams to focus.

The Vulnpocalypse I wrote about is not a future state. It is the present, and the growth curves are steepening. There is no question the attack surface is growing exponentially; the data makes that indisputable.

And, as much as I hate to say it, I think things will get worse before they get better, as the industry and society adapt to this new AI-driven operating model and its implications for cybersecurity.
