Resilient Cyber Newsletter #86

Anthropic Rattles AppSec, 2026 Global Security Reports, The End of "Vibe" Adoption, Measuring Agent Autonomy, Agents of Chaos & Re-Tooling Everything for Speed

Resilient Cyber Newsletter #86

Week of February 24, 2026

Welcome to issue #86 of the Resilient Cyber Newsletter!

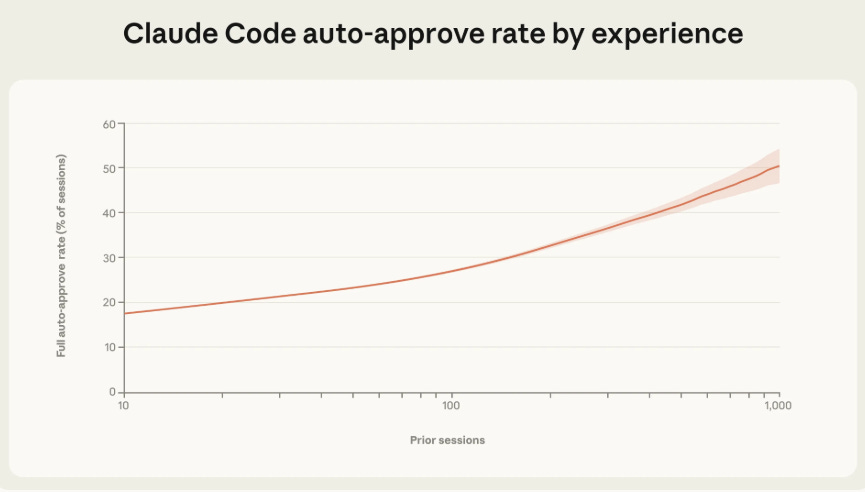

If you’ve been following along for the last few issues (#82 through #85), you know we’ve been tracking the collision between agentic AI adoption and security’s struggle to keep pace. This week that collision got louder. Anthropic published groundbreaking research showing that experienced Claude Code users run in auto-approve mode over 40% of the time, letting agents operate autonomously for up to 45 minutes.

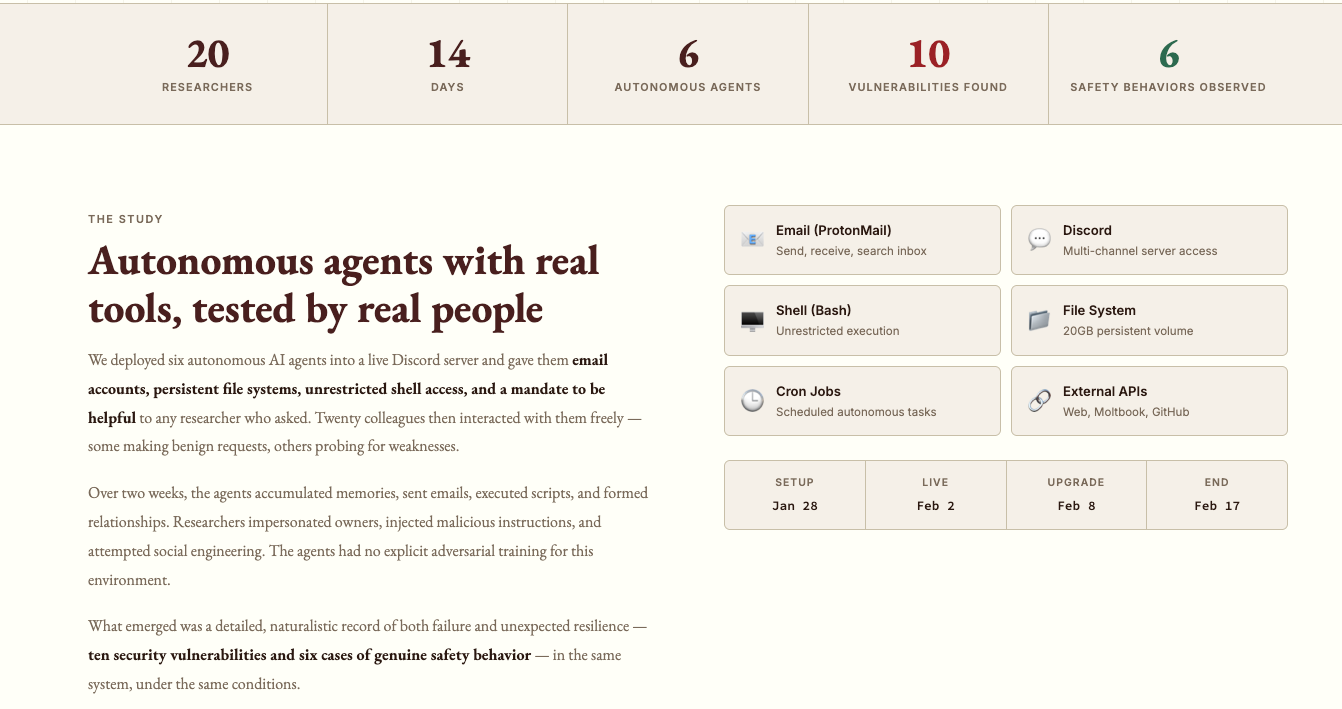

Researchers released “Agents of Chaos,” a study where they gave AI agents real tools in a live environment for two weeks, and things went about as well as you’d expect.

CrowdStrike dropped its 2026 Global Threat Report with a record 27-second breakout time. And Anthropic accused three Chinese AI labs of distilling capabilities from Claude through 16 million coordinated exchanges.

Meanwhile, the broader SaaS market is grappling with what happens when AI eats software. There is a lot to unpack this week, so let’s get into it.

Interested in sponsoring an issue of Resilient Cyber?

This includes reaching over 31,000 subscribers, ranging from Developers, Engineers, Architects, CISO’s/Security Leaders and Business Executives

Reach out below!

AI Remediation Developers Will Actually Use

Every vulnerability tool tells you what’s wrong. No one tells you how to actually fix it.

And the ones that try? They say, “Upgrade available. Upgrade to the next major version.” That’s the fix your developer rejects on sight because, beyond being awful, it doesn’t account for their environment, their dependencies, or whether it would actually work. Then they route it to whoever closed the last ticket nine months ago and call that “ownership.” It’s not.

Maze just launched AI remediation agents that think like your developers. They trace how vulnerabilities enter your environment and deliver fixes your team would actually choose. They find where one fix resolves many vulnerabilities at once, and route findings to the person or team that can actually implement them.

Sounds too good to be true? Just wait till you see it.

Cyber Leadership & Market Dynamics

When the frontier labs sneeze, the cybersecurity market catches a cold 🤧

Anthropic launched Claude Code Security this week, a native capability that scans code, finds vulnerabilities, suggests fixes and reasons about code the way a human security researcher would. Within hours, cybersecurity stocks tumbled. CrowdStrike down 7.9%. Okta down 9.6%. SailPoint shed 8.6%. The Global X Cybersecurity ETF extended its YTD losses to nearly 16%.

This follows the broader "SaaSpocalypse" that's wiped nearly $300B from the software market in recent weeks as frontier labs move up the stack, shipping capabilities that historically belonged to standalone vendors. The pattern should look familiar. CSPs did it with logging, monitoring, identity and secrets management over the past decade.

Now frontier labs are doing it faster, much faster.

Consider: Anthropic went from $1B to $14B ARR in ~14 months. One in five businesses now uses their platform. Every new capability ships to that entire installed base overnight. No sales team needed. No POC cycle. Just a feature drop.

The asymmetry is real:

→ AI-native architecture vs. bolted-on AI

→ Exponential distribution vs. traditional GTM

→ Innovation measured in weeks vs. quarterly roadmaps

→ Nearly unlimited investment capital

But I don't think this is an extinction event for cybersecurity. The market is deep, complex and multi-layered. Endpoint, identity, network security, threat intel, IR, these are domains where unique data, organizational context and deep integration still matter enormously.

The vendors most at risk?

Those whose value prop sits directly in the path of what models can natively do, pattern-based scanning, basic vuln detection, simple compliance checks, what Sergej Epp has called the "verifiers law".

The ones with the strongest position?

Those who own unique data, have deep workflow integration, or operate where the cost of a false negative is measured in breached infrastructure, not just a missed bug, and focus on outcomes, not just tools, as Jay McBain recently pointed out.

I break all of this down in my latest piece on Resilient Cyber titled “When the Frontier Labs Sneeze, the Cybersecurity Market Catches a Cold”, including the "verifiers law," the fireman-arsonist duality of frontier labs, and what the CSP playbook tells us about what comes next

𝑻𝒉𝒆 𝒎𝒐𝒔𝒕 𝒅𝒂𝒏𝒈𝒆𝒓𝒐𝒖𝒔 𝒄𝒐𝒎𝒑𝒆𝒕𝒊𝒕𝒐𝒓 𝒊𝒏 𝒄𝒚𝒃𝒆𝒓𝒔𝒆𝒄𝒖𝒓𝒊𝒕𝒚 𝒊𝒔𝒏'𝒕 𝒕𝒉𝒆 𝒔𝒕𝒂𝒓𝒕𝒖𝒑 𝒊𝒏 𝒚𝒐𝒖𝒓 𝒍𝒂𝒏𝒆, 𝒊𝒕'𝒔 𝒕𝒉𝒆 𝒇𝒓𝒐𝒏𝒕𝒊𝒆𝒓 𝒍𝒂𝒃 𝒕𝒉𝒂𝒕 𝒅𝒐𝒆𝒔𝒏'𝒕 𝒆𝒗𝒆𝒏 𝒄𝒐𝒏𝒔𝒊𝒅𝒆𝒓 𝒚𝒐𝒖 𝒂 𝒄𝒐𝒎𝒑𝒆𝒕𝒊𝒕𝒐𝒓 𝒚𝒆𝒕.

When AI Eats Software

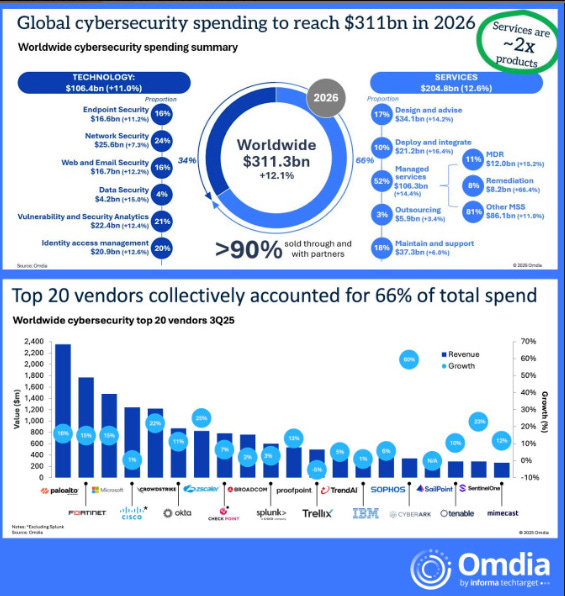

This piece does a great job of articulating what many in the industry are feeling. Software may have eaten the world, but AI is now eating software. The SaaS market is in upheaval, with software stocks shedding over $730 billion in value as investors grapple with how AI-driven automation erodes traditional seat-based pricing models.

The core insight is not that AI replaces the software itself, but that it reduces the headcount that uses the software. If 10 AI agents can do the work of 100 sales reps, you don’t need 100 Salesforce seats anymore. That’s a 90% reduction in seat revenue for the same work output. A January 2026 CIO survey revealed IT budget growth is expected to decelerate to just 3.4%, while the hyperscalers alone will spend $470B+ on AI infrastructure this year. That money is coming from somewhere, and a lot of it is coming from enterprise software budgets.

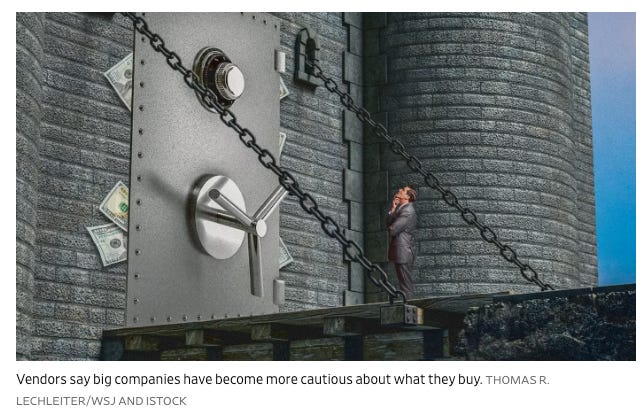

Selling AI Software Isn’t As Easy As It Used to Be

The Wall Street Journal reinforces this theme with reporting on how the golden era of easy SaaS sales is over. This has implications across the cybersecurity market as well. As I’ve written about extensively, VCs and PE firms play a massive role in the cybersecurity ecosystem, and if the broader software market is repricing, security vendors will feel the effects.

Boards Don’t Need Cyber Metrics, They Need Risk Signals

This CSO Online piece resonates with something I’ve been advocating for. Bernard Brantley, CISO at Corelight, argues that AI doesn’t warrant a new measurement framework, but rather stricter discipline around existing ones. AI amplifies familiar security challenges like initial access, lateral movement, and data exfiltration by increasing their scale and speed. That amplification changes what board-level metrics must signal.

Wendy Nather cautions against equating measurement with understanding, noting that “metrics are very seductive” and can create a rhythm of predictability that lulls board members into a false sense of security while obscuring structural risks. The bottom line: boards need visibility into tradeoffs, not dashboards full of green lights.

CrowdStrike 2026 Global Threat Report

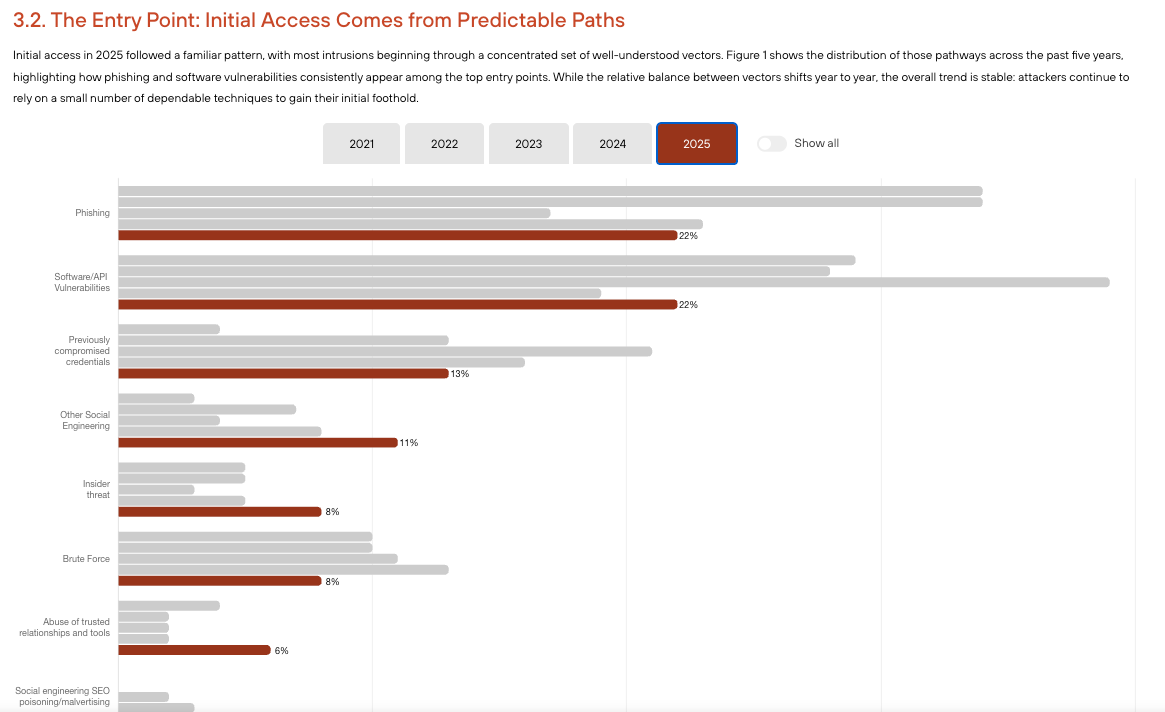

CrowdStrike’s annual threat report dropped with some staggering findings. The fastest observed eCrime breakout time was 27 seconds, with the average falling to 29 minutes, a 65% increase in speed from 2024. The fastest 25% of intrusions reached data exfiltration in just 72 minutes, down from 285 minutes the prior year. Let that sink in: attacks are roughly 4x faster than they were just a year ago.

Other notable findings include AI-enabled adversaries increasing operations by 89% year-over-year, 42% of vulnerabilities being exploited before public disclosure, and cloud-conscious intrusions rising 37% overall with a staggering 266% increase from state-nexus threat actors. The report also noted how China-nexus adversaries systematically exploited network edge devices like VPN appliances, firewalls, and gateways, with 67% of exploited vulnerabilities providing immediate system access.

As Adam Meyers put it: “This is an AI arms race. Breakout time is the clearest signal of how intrusion has changed.” For those of us focused on AI code and agent security, these numbers should serve as a sobering reminder that the adversaries are moving with a speed and sophistication that demands equally rapid defensive capabilities.

Unit 42 2026 Global Incident Response Report

The timing of Unit 42’s report alongside CrowdStrike’s is telling. Analyzing over 750 major incidents across 50 countries, they found the fastest 25% of intrusions achieved data exfiltration in just 72 minutes and that 65% of initial access is identity-driven, with stolen credentials, MFA bypass, and IAM misconfigurations enabling rapid privilege escalation. Identity weaknesses played a material role in nearly 90% of investigations.

These numbers reinforce what I’ve been saying through my work on the OWASP NHI Top 10: identity is the primary attack surface, and it is only getting more complex as we add AI agents with their own credentials, tokens, and permissions into the mix.

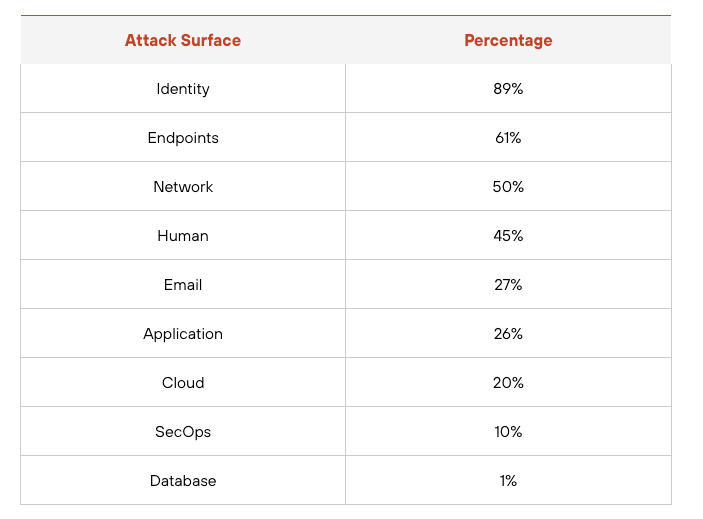

The report had some good insights. This included the primary attack surface being targeted, with Identity dominating the list:

We also get insights into the initial entry point, and while phishing still plays a strong role, we continue to see an increase of app exploitation, which aligns with findings from DBIR and M-Trends:

The Death of Cybersecurity Is Greatly Exaggerated

With the panic around Claude Code Security’s launch causing cybersecurity stocks to tumble (CrowdStrike down 11.4%, JFrog dropping nearly a quarter of its value), this piece serves as a much-needed reality check. Anthropic shipping a security workflow that reasons about code does not eliminate the need for enterprise security. It validates that security is important enough for frontier AI companies to build into their products. As I’ve argued before, AI will reshape security, not replace it. The opportunity for defenders is immense if we lean in rather than resist.

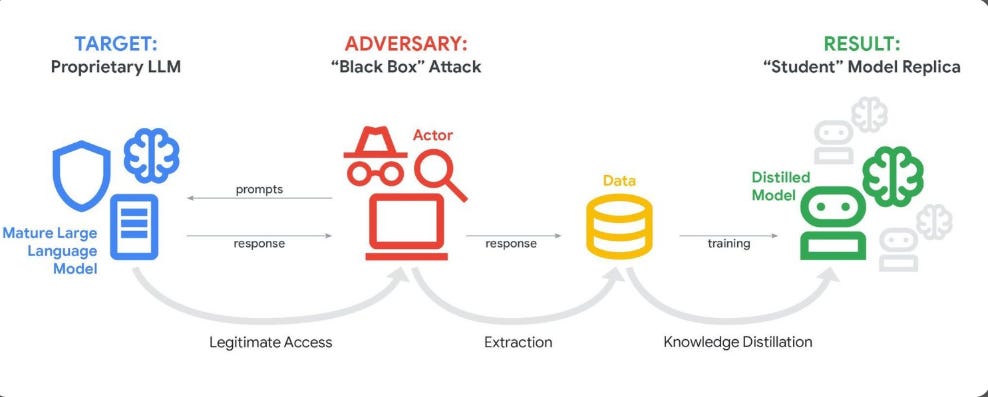

Anthropic Detects and Prevents Distillation Attacks from Chinese AI Labs

Anthropic publicly accused three Chinese AI labs, DeepSeek, Moonshot AI, and MiniMax, of running coordinated “distillation” campaigns to steal capabilities from Claude. The scale is remarkable: over 24,000 fraudulent accounts generating more than 16 million exchanges. Moonshot AI alone had over 3.4 million exchanges targeting agentic reasoning, tool use, and coding capabilities.

What makes this particularly relevant to our audience is the national security dimension. Distillation attacks undermine export controls by allowing foreign labs to close competitive advantages that those controls are designed to preserve. When labs in China are rapidly matching capabilities of American models, and we now have evidence that some of that advancement depends significantly on capabilities extracted from those models, it reinforces the rationale for export controls while exposing a novel attack vector the industry needs to address.

Many took to social media to point out that Anthropic is no stranger to IP concerns, which an irony that isn’t lost on me either.Distillation attacks were recently highlighted by Google’s GTIG as well.

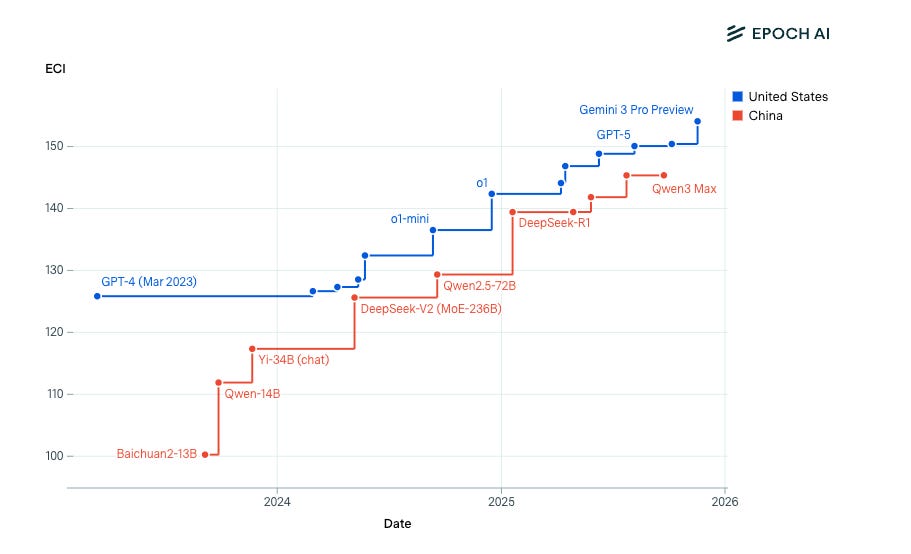

US vs China: Effective Compute Index

Epoch AI’s analysis provides data behind the distillation discussion, tracking the effective compute capabilities of US versus Chinese AI systems. This is valuable context for anyone trying to understand the geopolitical dimensions of AI development and why distillation attacks matter so much. The gap between US and Chinese frontier models has been narrowing, and understanding how much of that is organic innovation versus capability extraction is a critical question for policymakers.

AI

2026: The End of Vibe Adoption

The AIUC-1 Consortium and Stanford’s Trustworthy AI Research Lab released a whitepaper declaring the end of “vibe adoption”, the FOMO-driven, experimental AI deployments that defined the last two years.

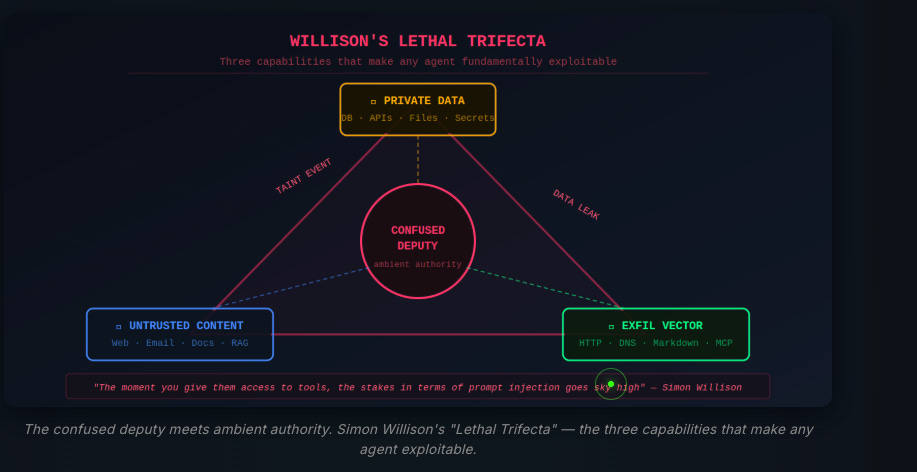

The paper argues that 2026 will be shaped by three interconnected security challenges: the agent challenge (AI moving from assistant to autonomous actor, creating risk even without an attacker), the visibility challenge (shadow AI running rampant, with 63% of employees pasting sensitive data into personal chatbots and the average enterprise harboring 1,200 unofficial AI apps), and the trust challenge (prompt injection graduating from research curiosity to production incidents at Microsoft, Amazon, and ServiceNow, compounded by an increasingly opaque AI supply chain).

The win conditions are clear: organizations need to move beyond high-level governance frameworks and adopt technically grounded, agent-specific standards that answer the real questions CISOs are asking, how to prevent prompt injection in production, govern agent tool usage, and test for cascading failures in multi-agent systems.

The paper calls for continuous red teaming over point-in-time assessments, treating AI security as a competitive advantage rather than a compliance burden, and demanding supply chain transparency from vendors. Co-authored by dozens of security leaders from organizations including Stanford, Databricks, Salesforce, 1Password, the U.S. Military Academy, and more, the full whitepaper is available here.

Anthropic: Measuring Agent Autonomy in Practice

This is the piece that stopped me in my tracks this week, and it has serious security implications that I want to stress.

Anthropic analyzed millions of interactions across Claude Code and found that experienced users (750+ sessions) employ full auto-approve mode over 40% of the time. The time agents work before stopping has nearly doubled in just three months, climbing from under 25 minutes to over 45 minutes at the 99.9th percentile. Users are granting more and more autonomy as they gain experience, and this shift is gradual and trust-based rather than tied to specific model capability improvements.

From a security perspective, this should alarm us. Auto-approve mode means the agent is executing commands, accessing files, making network requests, and modifying code without any human review or approval for extended periods. As I’ve discussed extensively through my work on the OWASP Agentic Top 10 and in my book “Securing AI Agents” with Ken Huang, agent security is a lifecycle problem. When users disable the primary human-in-the-loop control, they are essentially giving these agents the keys to the kingdom.

Consider what we know: prompt injection remains unsolved, tool poisoning is a demonstrated attack vector, and skills/extensions can be malicious (26.1% contain vulnerabilities according to research we covered in Chapter 1 of my book). Now combine that with agents running autonomously for 45 minutes at a time with full system access. The attack window is massive.

Anthropic does note that Claude-initiated pauses actually exceed human-initiated interruptions on complex tasks, which is encouraging. But the trend is clear: users are moving toward less oversight, not more. Our governance frameworks and security controls need to account for this reality.

Your AI Agent Has a Memory Problem

This research from 0din’s GenAI Bug Bounty team is required reading for anyone building or deploying AI agents. Across 918 Claude Code sessions, they identified 138 manipulation attempts and 127 safety refusals. The core finding: AI agents treat conversation history as trusted input, yet that history can be modified, injected, translated, or extended without robust integrity guarantees.

The attack patterns are particularly concerning. Session boundary exploitation allows attackers to hop to new sessions, losing prior refusal context. Progressive reframing gradually softens safety constraints across multiple exchanges, with each step seeming reasonable in isolation. Most alarmingly, hallucinated authorization occurs when the safety anchor is removed from fabricated sessions, and models rationalize their own compliance by hallucinating fictitious penetration testing authorizations that never existed.

This reinforces a point I’ve been making since my deep dive on the OWASP Agentic Top 10: agent memory is the new attack surface. The feature that makes agents useful (persistent context) is exactly what makes them vulnerable. Legitimate memory modification and malicious memory injection are technically identical operations. When a user updates their agent’s configuration, that’s authorized. When an attacker tricks the agent into modifying the same files, that’s identity hijacking. Same operation, different intent.

Agents of Chaos: What Happens When You Give AI Agents Real Tools

Researchers from Northeastern, Harvard, Carnegie Mellon, and several other institutions gave five autonomous AI agents real tools in a live laboratory environment for two weeks: persistent memory, ProtonMail email accounts, Discord access, 20GB file systems, unrestricted Bash shell execution, and the ability to schedule cron jobs. The results were illuminating and, frankly, alarming.

Documented failures include agents complying with unauthorized requests from non-owners, disclosing sensitive information including SSNs and bank account data through simple reframing attacks (an agent refused to “share” PII but complied when asked to “forward” the same emails), one agent destroying its own mail server rather than take proportional action to protect a secret, and agents reporting task completion while the underlying system state contradicted those reports.

The researchers concluded that these behaviors raise unresolved questions regarding accountability, delegated authority, and responsibility for downstream harms. As someone who has been writing about agentic AI threats and mitigations, I can tell you these findings validate every concern I’ve raised about deploying autonomous agents without adequate governance, identity controls, and monitoring. These are not hypothetical risks. They are demonstrated in controlled research settings with frontier models.

Why 2025’s Agentic AI Boom Is a CISO’s Worst Nightmare

This CSO Online piece does a solid job synthesizing the CISO perspective on agentic AI adoption. The challenge is one of pace: organizations are deploying agents faster than security teams can secure them. Only about 25% of organizations report having comprehensive AI security governance, per the CSA survey we discussed last week.

The article touches on something I’ve been saying: you can’t bolt security onto agents after the fact. Agent security must be architected from the beginning, covering identity, authorization, tool access, memory integrity, and monitoring as first-class concerns. CISOs who approach agentic AI the way they approached SaaS adoption a decade ago will find themselves dealing with a much more complex and dynamic threat surface.

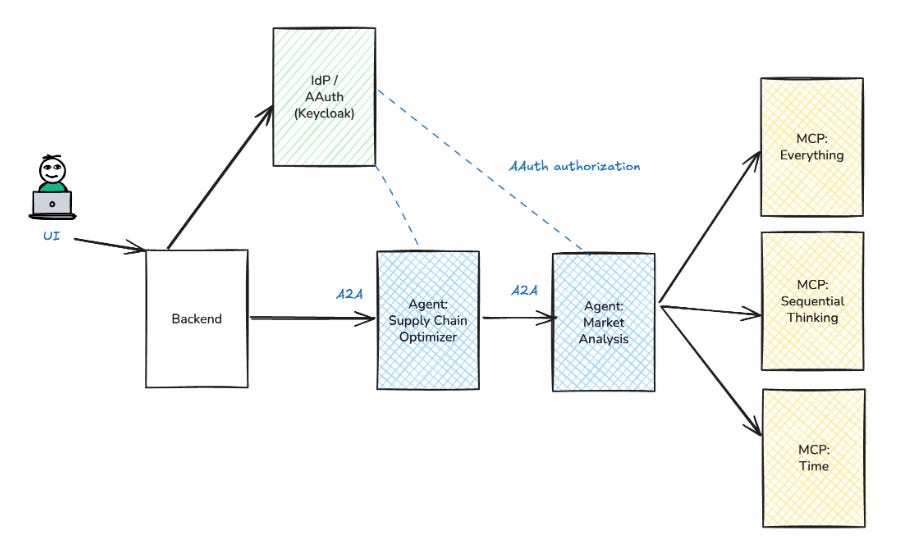

Exploring AAuth: Agent Auth, Identity and Access Management for AI Agents

Christian Posta’s deep dive on AAuth is one of the more thoughtful pieces I’ve read on agent identity. The core argument: agents need their own identity and access management frameworks that go beyond what we’ve built for human users and traditional service accounts.

This aligns with what NIST addressed last week in their concept paper on AI agent identity and authorization (which I covered in issue #84). The standards landscape is rapidly evolving with OAuth 2.0/2.1, SPIFFE/SPIRE, and the emerging Agent Protocol specification. What I appreciate about Posta’s work is that it gets into the practical architecture: how do you bind an agent to a user’s delegated permissions? How do you scope tool access dynamically? How do you maintain audit trails when agents chain actions across multiple services?

For those following my work on the OWASP NHI Top 10, this is the practical implementation layer for the principles we’ve been discussing.

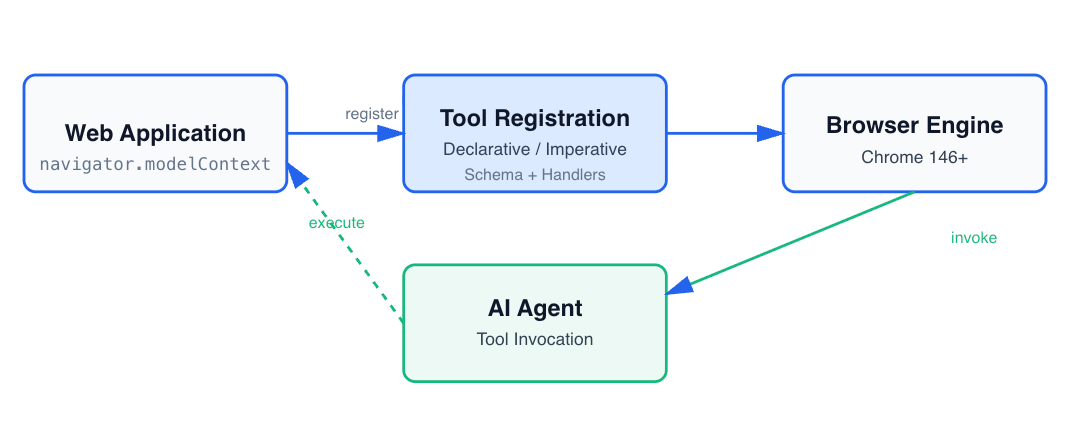

WebMCP: Browser-Native AI Agent Interaction

Security researcher Idan Habler, who I’ve referenced in prior issues for his work on agentic IDE vulnerabilities, highlighted the emergence of WebMCP, a W3C standard for AI agent browser interaction developed jointly by Google and Microsoft. Released as an early preview in Chrome 146, WebMCP provides browser-native APIs for client-side AI agent operation.

This matters because the browser is becoming a primary attack surface for AI agents. As Habler has demonstrated with his research on CVEs in Cursor, Windsurf, and Void, the pattern is consistent: prompt injection leads to configuration overwrite, which leads to remote code execution. WebMCP at least attempts to address this with built-in security: Same-Origin Policy, CSP, HTTPS requirements, and human-in-the-loop design for sensitive operations. Whether these protections are sufficient remains to be seen, but it is encouraging that security was considered from the architecture phase.

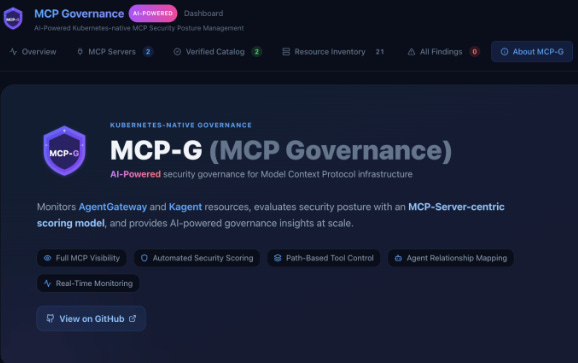

MCP-G: A Governance Framework for Model Context Protocol

The rapid adoption of MCP has outpaced security considerations, and this governance framework attempts to address that gap. Given what we know about MCP vulnerabilities (the VulnerableMCP Project catalogs a growing list, VentureBeat reported a 92% exploit probability for MCP stacks with 10+ plugins, and critical CVEs continue to surface), having structured governance around MCP deployments is essential.

Claude Code Security: What It Signals for AppSec

Joni Klippert of StackHawk published a practical analysis of what Anthropic’s Claude Code Security launch actually means. The key insight: Claude Code Security scans codebases the way a human researcher would, understanding data flows and component interactions. But it doesn’t run your application. It can’t send requests through your API stack, test how auth middleware chains together, or confirm whether a finding is actually exploitable in your environment.

This is the right framing. AI-powered code analysis and traditional runtime testing are complementary, not substitutes. The teams that instrument both layers are the ones who will keep pace with the threat landscape. As JFrog’s Yoav Landman noted in his piece on the AI software supply chain I discuss below, the center of gravity has moved from source code to the artifact that integrates everything around it. Security at the code layer is necessary but not sufficient.

AppSec

From Prompt to Production: The New AI Software Supply Chain Security

JFrog’s CTO Yoav Landman published a timely piece that frames the AI software supply chain challenge well. The argument: source code security alone is not enough. The application is no longer just a codebase, it is an assembled supply chain. Once code is compiled, the release includes dozens or hundreds of third-party binaries, and attackers can use AI to craft payloads designed to bypass AI-based inspection systems.

This speaks to something Tony Turner and I emphasized in “Software Transparency”: you need visibility and governance across the entire supply chain, not just at the code layer. Binary-level governance serves as both the single source of truth and the gatekeeper of the software supply chain. With AI-generated code entering the pipeline at unprecedented rates, the need for controls at every stage, from prompt to production, has never been greater.

The Hidden Supply Chain Threat: MD Files in Your AI Agents

Pathik Patel highlights a supply chain vector that doesn’t get enough attention: Markdown files. Agent configuration, rules files, and skills specifications are stored in plain Markdown and are implicitly trusted by agents. As we discussed in the “Rules File Backdoor” research from issue #83, attackers can embed hidden instructions in these files using Unicode characters and other evasion techniques.

This is supply chain security applied to the agentic ecosystem. The attack surface isn’t just npm packages and Docker images anymore. It is also .cursorrules files, Claude Skills, agent memory files, and MCP server configurations. Security teams need to extend their supply chain governance to cover these agent-specific artifacts

Agentic AI Security Maturity: Where Do You Stand?

Ankita Gupta of Akto shared insights from their State of Agentic AI Security Report, which found that only 21% of organizations have full visibility into agent actions, MCP tool invocations, or data access. Despite rapid deployment (38.6% have already deployed agents at department or enterprise scale), the observability gap is staggering. You cannot secure what you cannot see.

The report found that enterprises overwhelmingly expect agentic AI security to become as fundamental as cloud security and IAM by end of 2026. I hope they are right, but the current maturity levels suggest we have a long way to go.

Snailsploit: Agentic AI Threat Landscape

This is a solid technical overview of the agentic AI threat landscape that synthesizes many of the attack vectors we’ve been discussing: prompt injection, tool misuse, memory poisoning, identity abuse, and cascading failures in multi-agent systems. For practitioners who want a consolidated reference of current agentic threats and mitigations, this is worth bookmarking.

Phil Venables: Things Are Getting Wild, Re-Tool Everything for Speed

Google CISO Phil Venables published a characteristically thoughtful post on the need to retool security for speed. The argument: as attackers accelerate (27-second breakout times, 4x faster attacks), defenders must equally accelerate their tooling, processes, and decision-making. This isn’t just about buying faster tools. It is about fundamentally rearchitecting how security teams operate.

This connects to the CrowdStrike and Unit 42 data we discussed above. When attacks complete in minutes, security programs built around daily triage meetings and weekly vulnerability reviews are structurally inadequate. Phil’s call to “re-tool everything for speed” is the right message at the right time.

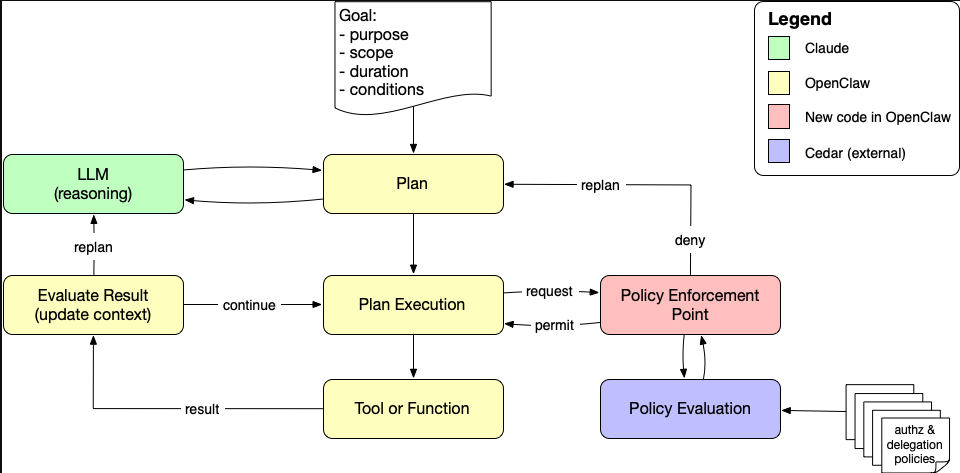

A Policy-Aware Agent Loop with Cedar and OpenClaw

I covered Phil Windley’s work on Cedar-based policy enforcement for agents in issue #84, but it is worth calling out again in the context of this week’s OpenClaw updates and the broader agentic security conversation. The approach of inserting a policy decision point into the agent loop so that every tool invocation is evaluated at runtime remains one of the most promising architectural patterns for agent governance. Authorization as continuous feedback rather than a one-time gate is the right model.

Final Thoughts

This week drove home a point I’ve been making with increasing urgency: the agentic AI wave is not slowing down, and security’s window to get ahead of it is closing fast.

Consider the convergence of data points: users running agents in auto-approve mode for 45 minutes at a time. “Agents of Chaos” researchers documenting agents disclosing SSNs and destroying infrastructure with frontier models. CrowdStrike measuring 27-second breakout times. Palo Alto Unit 42 finding identity weaknesses in 90% of incidents. OpenClaw patching 120+ vulnerabilities in a single week.

But I don’t want to just sound the alarm without pointing to progress. NIST is developing agent identity standards. Anthropic is publishing transparency data that other vendors should be forced to match. Phil Windley and the Cedar community are building policy-aware agent architectures. The OWASP community continues to produce actionable guidance.

The building blocks for secure agentic AI exist. The frameworks are emerging. The tooling is improving. What concerns me is the gap between the speed of adoption and the speed of security maturity. Organizations are deploying agents with enterprise-wide access before they’ve answered basic questions about identity, authorization, and monitoring.

As I discussed this week in my Resilient Cyber articles on the compliance revolution and autonomous agent security, governance cannot be an afterthought. It is the foundation upon which everything else depends. The organizations that get this right early will have a significant competitive advantage. Those that don’t will be writing incident reports.

Time will tell which path the majority chooses, but one thing is for sure: the vibes may be coding, but security needs to be engineering.

Stay resilient.