Governing Agentic AI: A Practical Framework for the Enterprise

Stop waiting for standards bodies to catch up. Here’s how to build agentic AI governance that actually works.

In my previous piece, “The Agentic AI Governance Blind Spot,” I laid out what I believe is one of the most critical gaps in the AI governance landscape today: the three most cited frameworks in AI governance (NIST AI RMF, ISO 42001, and the EU AI Act) don’t contain a single mention of agentic AI.

Not one reference to autonomous agents, multi-agent systems, or AI that takes actions with real-world consequences.

The response to that piece confirmed what I suspected.

Security, legal, and compliance leaders across the enterprise landscape are feeling it. They know their organizations are adopting agentic AI, often through shadow adoption and bottom-up usage, and they know their existing governance frameworks aren’t equipped to address it.

So the natural follow-up question becomes: what do we actually do about it?

This piece is my attempt to answer that.

Not with a 200-page standard that’ll collect dust, but with a practical, high-level approach organizations can use to start governing agentic AI today, one that integrates with what you’ve already built rather than replacing it.

Don’t Burn Down the House: Integrate, Don’t Isolate

Let me be clear upfront: the answer to governing agentic AI is not to throw out your existing governance program and start over. If your organization has invested in enterprise governance, risk, and compliance capabilities, and layered AI governance on top through frameworks like NIST AI RMF or ISO 42001, that foundation still matters. The core principles of accountability, transparency, risk management, and oversight don’t evaporate because agents entered the picture.

The mistake I see organizations making is treating agentic AI governance as a completely separate work stream, siloed from both their broader enterprise governance and their existing AI governance efforts. This leads to duplicated controls, fragmented oversight, and governance fatigue among the very teams you need engaged.

Organizations are beginning to make efforts to govern agents, but those efforts are often siloed within the engineering or security teams running early agent deployments and pilots, disconnected from broader enterprise governance policies and activities.

Instead, think of agentic AI governance as an extension layer. Your existing AI governance program likely addresses model risk, data governance, bias and fairness, and transparency. Good; keep that. But now you need to extend it to account for the unique properties that agents introduce: autonomy, tool use, multi-agent interaction, dynamic data access, and real-time decision-making.

These aren’t incremental additions. They’re fundamentally new risk vectors, some of which depart from longstanding security principles, but they don’t require a fundamentally new bureaucracy either.

This is a point I’ve made before, including in my piece “GRC is Ripe for a Revolution,” where I argued that the legacy compliance approaches of periodic audits, approval workflows, and after-the-fact reviews simply won’t cut it for systems making operational decisions in real-time.

That argument applies even more so here. Agentic AI governance must be continuous, context-aware, and embedded into the operational lifecycle of the agents themselves.

Why Static Categories Won’t Work: The Case for Adaptive Governance

One of the fundamental problems with how we’ve traditionally approached AI governance is that it’s built on static categories. Risk tiers, fixed autonomy levels, predetermined oversight models. This worked reasonably well in a model-centric world where AI systems had relatively predictable behavior, but it doesn’t work so well for agents.

This is a point made compellingly by Engin and Hand in their research “Toward Adaptive Categories: Dimensional Governance for Agentic AI,” where they argue that systems built on foundation models, self-supervised learning, and multi-agent architectures increasingly blur the boundaries that static categories were designed to police. Their proposed solution is what they call dimensional governance, built around three core dimensions they refer to as the 3A’s: Decision Authority, Process Autonomy, and Accountability.

Decision Authority asks who or what is making the decisions. In a traditional AI system, the answer was relatively straightforward: a human reviewed the output and acted on it. With agents, decision authority shifts dynamically. An agent might operate with full decision authority for low-risk tasks, share it with a human for medium-risk workflows, and relinquish it entirely for high-stakes actions. The governance question isn’t just “who decides?” but “who decides, when, and under what conditions does that change?”

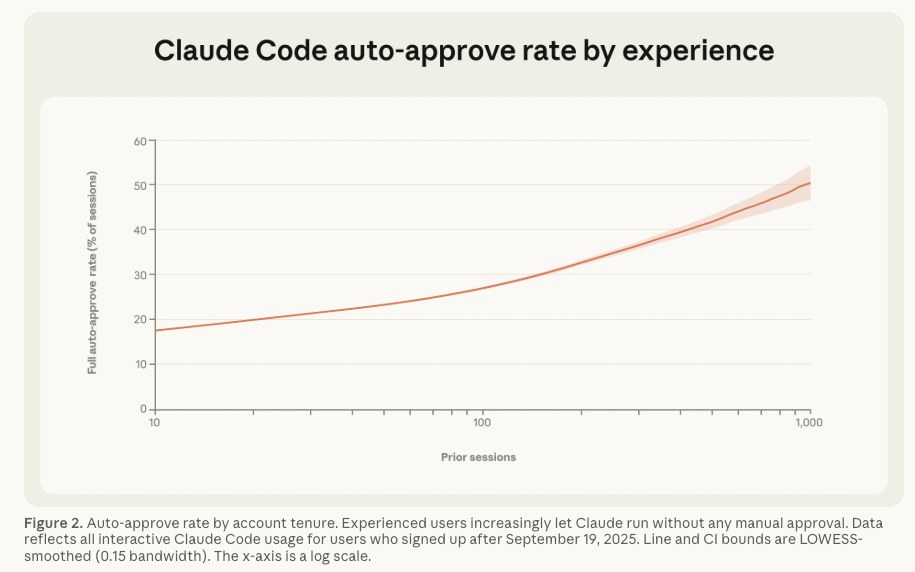

In fact, we’re starting to see this shift quantified. Anthropic’s recent report “Measuring AI Agent Autonomy in Practice” found that 40% of “experienced” Claude Code users use full-auto approval mode. That may partly reflect their experience with the tool, but it likely also reflects users ceding decision-making to agents entirely, along with burnout from human-in-the-loop approval workflows.

Process Autonomy captures how independently the AI operates. This isn’t binary; it’s a spectrum that shifts based on context. The same agent might operate with high autonomy when summarizing internal documents but should have constrained autonomy when executing financial transactions or modifying production infrastructure.

The “should” in that last sentence deserves emphasis. While human-in-the-loop (HITL) oversight and autonomy ought to vary with the criticality of decisions, that often isn’t how they’re implemented in practice: as noted above, more and more users are granting agents full autonomy in enterprise environments.

Accountability addresses how responsibility is assigned when things go wrong, and with agentic AI, things will go wrong.

When an agent chains together six tool calls, accesses three data sources, and produces an output that causes a downstream failure, who’s accountable? The developer who built it? The team that deployed it? The platform that hosted it? Across most of the ecosystem, this technology is new enough that we don’t have perfect answers yet. One fundamental truth holds, however: accountability in agentic systems requires traceability and auditability at every step of the agent’s decision chain.
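To make “traceability at every step” concrete, here is a minimal sketch, assuming a hypothetical in-process `TraceLog` (not any specific product or library). Each step of an agent run is appended with the accountable owner, and each entry’s SHA-256 hash covers the previous entry’s hash, so editing or reordering history after the fact is detectable.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class TraceLog:
    """Hypothetical append-only, hash-chained record of one agent's decision chain."""
    entries: List[dict] = field(default_factory=list)

    def record(self, agent_id: str, step: str, detail: dict, owner: str) -> dict:
        body = {
            "seq": len(self.entries),
            "agent_id": agent_id,
            "step": step,        # e.g. "data_access", "tool_call", "output"
            "detail": detail,    # tool name, arguments, data source, etc.
            "owner": owner,      # team accountable for this agent
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; editing, reordering, or deleting a non-final entry breaks it."""
        prev = GENESIS
        for i, entry in enumerate(self.entries):
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["seq"] != i or body["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


if __name__ == "__main__":
    log = TraceLog()
    log.record("invoice-agent", "data_access", {"source": "erp.invoices"}, owner="finance-eng")
    log.record("invoice-agent", "tool_call", {"tool": "send_payment", "amount": 1200}, owner="finance-eng")
    print(log.verify())                                  # True
    log.entries[0]["detail"]["source"] = "hr.salaries"  # rewrite history
    print(log.verify())                                  # False
```

In practice these records would go to write-once storage or a SIEM rather than a Python list; the hash chain makes tampering detectable, it doesn’t prevent it.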

What makes this framing powerful is the emphasis on adaptiveness. These three dimensions aren’t fixed for a given agent; they shift dynamically based on context, task, and environment. Engin and Hand introduce the concept of critical trust thresholds: points at which changes in a system’s behavior require a corresponding shift in oversight.

This is exactly what enterprise governance needs to account for: not a one-time risk assessment of an agent, but continuous monitoring of how its decision authority, autonomy, and accountability posture evolve over time, based on environmental context and risk tolerance.
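One way to operationalize critical trust thresholds is to re-score an agent’s posture whenever its context changes and shift oversight when the score crosses a threshold. The sketch below is my own illustration, not Engin and Hand’s method: the three inputs, the `exposure` formula, and the threshold values are all hypothetical and would need tuning to your own risk tolerance.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional, Tuple


class Oversight(IntEnum):
    AUTONOMOUS = 0
    HUMAN_ON_THE_LOOP = 1
    HUMAN_IN_THE_LOOP = 2


@dataclass(frozen=True)
class Posture:
    autonomy: float      # 0.0 = every step approved, 1.0 = fully unattended
    impact: float        # 0.0 = read-only/internal, 1.0 = irreversible/external
    traceability: float  # 0.0 = no logging, 1.0 = every step attributable


# Hypothetical thresholds, checked from strictest to loosest.
THRESHOLDS = [(0.6, Oversight.HUMAN_IN_THE_LOOP), (0.3, Oversight.HUMAN_ON_THE_LOOP)]


def exposure(p: Posture) -> float:
    """Illustrative score: grows with autonomy and impact, discounted (up to half) by traceability."""
    return p.autonomy * p.impact * (1.0 - 0.5 * p.traceability)


def required_oversight(p: Posture) -> Oversight:
    score = exposure(p)
    for threshold, level in THRESHOLDS:
        if score >= threshold:
            return level
    return Oversight.AUTONOMOUS


def threshold_crossed(before: Posture, after: Posture) -> Optional[Tuple[Oversight, Oversight]]:
    """Return (old, new) oversight levels if a context change crosses a threshold, else None."""
    old, new = required_oversight(before), required_oversight(after)
    return (old, new) if old != new else None
```

For example, an agent summarizing internal documents with full logging (`Posture(0.9, 0.2, 1.0)`, score 0.09) runs autonomously, but once the same agent touches payment systems with weak logging (`Posture(0.9, 0.9, 0.2)`, score about 0.73) the check reports a jump to human-in-the-loop. The multiplicative form is deliberate: near-zero autonomy or impact keeps the score low regardless of the other inputs.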

Not All Agents Are Created Equal: Governance by Deployment Model

One of the biggest mistakes in early agentic AI governance conversations is treating all agents as a monolith. As resources such as OWASP’s Top 10 for Agentic Applications lay out, the risks agents introduce are varied, ranging from agent goal hijacking to tool misuse, supply chain vulnerabilities, and more.

The governance approach you need, and how the 3A’s manifest, depends heavily on how agents are deployed, whether in your own environment or in the environments of hosting providers you consume and rely on.

There are three primary models organizations need to account for.

Homegrown and Custom Agents

These are agents your organization builds internally, whether through frameworks like LangGraph, CrewAI, AutoGen, or custom orchestration layers. Here, you own the full stack: the agent logic, the tool integrations, the permission boundaries, and the orchestration patterns.

This is where you have the most control and, consequently, the most responsibility.

You define the decision authority boundaries, the degree of process autonomy, and the accountability chain from the start. Governance for homegrown agents should include secure development lifecycle practices extended to agent development, permission boundary definitions for what tools and data agents can access, testing and evaluation frameworks for agent behavior under adversarial conditions, and runtime monitoring for behavioral drift and anomalous actions.

Think of this as the “built-in” model. You have the opportunity to embed adaptive governance from the start, designing agents with dynamic trust thresholds that constrain autonomy based on context, not just static policies.

If you’re building agents in-house and not baking governance into the development process, you’re repeating the same mistakes the broader software industry has made for decades and perpetuating the “bolted on, not built in” paradigm many of us have bemoaned for years.

Endpoint Agents

This is the category that’s moving fastest and getting the least governance attention.

Endpoint agents live on developer workstations, laptops, and local environments. Think AI coding assistants with agentic capabilities, local automation agents, and tools like Claude Code, Cursor, and Windsurf that can execute code, access file systems, browse the web, and interact with APIs. The most notable example here is OpenClaw and the craze around Personal AI Assistants (PAI), which research now shows are pervasive in enterprise environments despite the “personal” moniker.

The governance challenge here is fundamentally different.

You don’t control the agent’s architecture, you control the environment it operates in.

The 3A’s still apply, but through a different lens: decision authority is largely delegated to the end user, process autonomy is defined by the tool’s capabilities and whatever guardrails the vendor provides, and accountability sits in a gray zone between the user, the organization, and the tool provider.

Governance for endpoint agents needs to focus on acceptable use policies that specifically address agentic tool usage, guardrails around what enterprise systems and data endpoint agents can access, and monitoring and logging of agent actions on corporate endpoints.
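As an illustration of guardrails around what endpoint agents can reach, here is a minimal policy check that a wrapper or hook around an endpoint agent could call before a file read or outbound request. The policy values, paths, and hosts are hypothetical placeholders, and how you hook into a given tool depends on the controls that tool actually exposes.

```python
from pathlib import PurePosixPath
from urllib.parse import urlparse

# Hypothetical endpoint policy; every path and host here is a placeholder.
POLICY = {
    "allowed_roots": [PurePosixPath("/home/dev/projects")],
    "denied_names": {".env", "id_rsa", "credentials"},
    "allowed_hosts": {"api.github.com", "pypi.org"},
}


def path_allowed(raw_path: str, policy: dict = POLICY) -> bool:
    """Allow file access only inside approved roots and never to known-secret files."""
    path = PurePosixPath(raw_path)
    if ".." in path.parts:  # refuse traversal outright rather than trying to normalize it
        return False
    if any(part in policy["denied_names"] for part in path.parts):
        return False
    return any(path == root or root in path.parents for root in policy["allowed_roots"])


def url_allowed(url: str, policy: dict = POLICY) -> bool:
    """Allow outbound requests only over HTTPS to approved hosts."""
    parsed = urlparse(url)
    return parsed.scheme == "https" and parsed.hostname in policy["allowed_hosts"]
```

A production version would also resolve symlinks and run the check outside the agent’s own process, since an agent that can edit its guardrail isn’t really constrained by it.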

This is also where shadow AI risk is most acute. If your AI governance program doesn’t account for endpoint agents, you have a blind spot that grows by the day from the bottom up, as developers and other users smuggle innovation in through the side door, around top-down enterprise governance processes.

SaaS and Embedded Agents

The third model is agents that come embedded within SaaS platforms and third-party products you already use.

Your CRM, ITSM, HR platform, or cloud provider may be shipping agentic capabilities directly into their products, sometimes enabled by default. That can go sideways, as we saw with ServiceNow’s “BodySnatcher” vulnerability, a broken authentication and agentic hijacking flaw that allowed unauthenticated attackers to impersonate any ServiceNow user.

This is arguably the hardest model to govern because you have the least visibility into the 3A’s. As with governing SaaS generally, much of the decision-making and control is ceded to the service provider.

You didn’t define the decision authority boundaries, you may have limited ability to constrain process autonomy, and the accountability chain runs through a vendor you don’t control.

Governance here requires extending your existing third-party risk management and vendor assessment processes to include specific questions around agentic capabilities: What actions can embedded agents take? What data do they access? What are the permission boundaries? Can you disable or constrain them? Is there adequate logging and auditability?
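Those questions can be captured as structured data rather than free-text questionnaire answers, which makes gaps easier to flag consistently across a SaaS portfolio. The schema, field names, and list of high-impact actions below are hypothetical; adapt them to your own third-party risk process.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class EmbeddedAgentProfile:
    """A vendor's answers about one embedded agent (hypothetical schema)."""
    vendor: str
    actions: List[str] = field(default_factory=list)      # What actions can it take?
    data_scopes: List[str] = field(default_factory=list)  # What data does it access?
    permission_model: str = ""                            # What are the permission boundaries?
    can_disable: bool = False                             # Can you turn it off?
    can_constrain: bool = False                           # Can you narrow its scope?
    audit_log_export: bool = False                        # Are its actions logged and available to you?
    enabled_by_default: bool = False


# Hypothetical set of high-impact actions; tune to your environment.
HIGH_IMPACT = {"delete", "impersonate", "transfer", "modify_permissions"}


def assessment_gaps(p: EmbeddedAgentProfile) -> List[str]:
    """Return human-readable gaps in a vendor's answers (empty list = no gaps found)."""
    gaps = []
    if not p.actions or not p.data_scopes:
        gaps.append("vendor has not disclosed agent actions or data scopes")
    if not p.permission_model:
        gaps.append("no documented permission boundaries")
    if not (p.can_disable or p.can_constrain):
        gaps.append("no way to disable or constrain the agent")
    if not p.audit_log_export:
        gaps.append("agent actions are not auditable by the customer")
    risky = sorted(HIGH_IMPACT.intersection(p.actions))
    if risky and p.enabled_by_default:
        gaps.append(f"high-impact actions enabled by default: {', '.join(risky)}")
    return gaps
```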

If your vendor risk questionnaires haven’t been updated to ask about agentic AI capabilities, you’re not assessing the actual risk surface of your SaaS portfolio. And static questionnaires have long been insufficient on their own; SaaS and embedded agents need real security and governance tooling, much like the SaaS Security Posture Management (SSPM) we saw emerge before the agentic era.

The Agentic AI Governance Pillars

Regardless of deployment model, there are core governance pillars that every organization needs to address for agentic AI. These should be layered on top of your existing AI governance program, not siloed alongside it, and each should be evaluated through the adaptive lens of the 3A’s.

Visibility and Observability

No governance effort would be complete or coherent without a foundation in visibility and observability. This is why controls such as “asset inventory” have ranked among the top Critical Security Controls from sources such as CIS for years: as the saying goes, you can’t secure (or govern) what you don’t know exists. But visibility is just the first step. You also need runtime observability of agentic behavior, including who or what each agent is talking to, what data it is accessing, and what actions it’s taking, all of which form the foundation for enforcing least privilege and least-permissive autonomy.
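Visibility and observability meet when you reconcile the inventory against what runtime telemetry actually shows. The sketch below assumes a hypothetical `AgentRecord` inventory and a simple event shape (`agent_id`, `tool`); anything observed but not registered is a candidate shadow agent, and any tool use outside the registered set is a least-privilege gap.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Iterable, List, Set


@dataclass(frozen=True)
class AgentRecord:
    agent_id: str
    owner: str
    deployment: str                 # "homegrown", "endpoint", or "saas"
    approved_tools: FrozenSet[str]


def reconcile(inventory: Dict[str, AgentRecord], observed: Iterable[dict]) -> Dict[str, List[str]]:
    """Compare runtime events (hypothetical shape: {"agent_id": ..., "tool": ...}) to the inventory."""
    shadow: Set[str] = set()
    unapproved: Set[str] = set()
    for event in observed:
        record = inventory.get(event["agent_id"])
        if record is None:
            shadow.add(event["agent_id"])           # seen at runtime, never registered
        elif event["tool"] not in record.approved_tools:
            unapproved.add(f'{event["agent_id"]}:{event["tool"]}')
    return {"shadow_agents": sorted(shadow), "unapproved_tool_use": sorted(unapproved)}
```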

Identity and Access Governance for Agents

Agents need identities. Not human identities, but machine identities with clearly defined permissions, scopes, and boundaries. This means treating agent access with the same rigor you’d apply to service accounts and non-human identities (NHIs) in your IAM program. Least privilege isn’t optional here; it’s existential. These permissions should also be adaptive, tightening or loosening based on the sensitivity of what the agent is doing at any given moment. That is a fundamentally different paradigm from past identity security approaches, and it’s why concepts such as Just-in-Time (JIT) access and least-permissive autonomy are being discussed against the broader backdrop of NHI security.
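Here is a minimal sketch of JIT access for agent identities, assuming a hypothetical in-memory `JitBroker`: each agent has a ceiling of scopes it may ever hold, each task requests only what it needs, and grants for sensitive tasks expire faster. A real deployment would sit on your existing IdP or secrets manager rather than a Python dictionary.

```python
import secrets
import time
from dataclasses import dataclass, field
from typing import Dict, FrozenSet, Optional, Set


@dataclass(frozen=True)
class Grant:
    token: str
    agent_id: str
    scopes: FrozenSet[str]
    expires_at: float


@dataclass
class JitBroker:
    """Issues short-lived, task-scoped grants to agent identities (hypothetical)."""
    max_scopes: Dict[str, FrozenSet[str]]   # ceiling of what each agent may ever hold
    default_ttl: float = 300.0              # seconds
    grants: Dict[str, Grant] = field(default_factory=dict)

    def issue(self, agent_id: str, requested: Set[str], sensitive: bool,
              now: Optional[float] = None) -> Grant:
        now = time.time() if now is None else now
        ceiling = self.max_scopes.get(agent_id, frozenset())
        if not requested <= ceiling:
            raise PermissionError(f"{agent_id} requested scopes beyond its ceiling: {sorted(requested - ceiling)}")
        # Adaptive tightening: sensitive tasks get a much shorter window.
        ttl = self.default_ttl / 5 if sensitive else self.default_ttl
        grant = Grant(secrets.token_urlsafe(16), agent_id, frozenset(requested), now + ttl)
        self.grants[grant.token] = grant
        return grant

    def authorize(self, token: str, scope: str, now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        grant = self.grants.get(token)
        return grant is not None and now < grant.expires_at and scope in grant.scopes
```

The design choice worth copying is that the agent never holds standing access: if a grant leaks or an agent is hijacked mid-task, the blast radius is bounded by both the scopes and the expiry.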

Tool Use and Action Boundaries

Every agent has a set of tools it can invoke.

Governance must define what tools are approved, what actions are permissible, and what guardrails exist to prevent agents from exceeding their intended scope.

This is where process autonomy governance becomes operational: defining not just what an agent can do in theory, but what it’s permitted to do in the context of the tools it has, the actions those tools can perform, and the resources those actions touch.
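That three-part boundary (tool, action, resource) can be expressed as a deny-by-default check that runs before every tool invocation. The tool names, actions, and resource patterns below are hypothetical; the point is that an approved tool alone isn’t enough, because the specific action and its target must also be in scope.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import Dict, FrozenSet, List, Tuple


@dataclass(frozen=True)
class ToolRule:
    actions: FrozenSet[str]      # actions permitted for this tool
    resources: Tuple[str, ...]   # glob patterns for resources those actions may touch


# Hypothetical per-agent boundary: tool -> what it may do, and to what.
BOUNDARY: Dict[str, ToolRule] = {
    "github": ToolRule(frozenset({"read_file", "open_pull_request"}), ("repo:acme/docs*",)),
    "sql": ToolRule(frozenset({"select"}), ("db:analytics.*",)),
}


def check_call(tool: str, action: str, resource: str,
               boundary: Dict[str, ToolRule] = BOUNDARY) -> List[str]:
    """Return the reasons a proposed tool call violates the boundary (empty list = allowed)."""
    rule = boundary.get(tool)
    if rule is None:
        return [f"tool '{tool}' is not approved"]  # deny by default
    reasons = []
    if action not in rule.actions:
        reasons.append(f"action '{action}' not permitted for '{tool}'")
    if not any(fnmatchcase(resource, pattern) for pattern in rule.resources):
        reasons.append(f"resource '{resource}' outside scope for '{tool}'")
    return reasons
```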

Runtime Behavioral Monitoring

Static assessments won’t cut it for systems whose behavior is emergent and context-dependent. You need runtime observability into what agents are actually doing, not just what they were designed to do. This means logging agent actions, tool invocations, data access patterns, and inter-agent communications at a level sufficient for both security monitoring and compliance auditability. This is also where you operationalize the critical trust thresholds that Engin and Hand describe, detecting when an agent’s behavior is drifting toward or across governance boundaries that demand intervention.
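As a simple illustration of detecting drift from an action log, the sketch below compares tool-invocation counts in a recent window against a baseline and flags tools never seen before as well as sharp spikes. The `spike_factor` and `min_count` values are hypothetical, and real behavioral monitoring would also look at arguments, data volumes, and sequences of calls, not just counts.

```python
from collections import Counter
from typing import Iterable, List


def drift_alerts(baseline: Iterable[str], window: Iterable[str],
                 spike_factor: float = 3.0, min_count: int = 5) -> List[str]:
    """Flag new tools and call-rate spikes in `window` relative to `baseline`.

    Both inputs are tool names pulled from the agent's action log and are
    assumed to cover periods of equal length (a simplifying assumption).
    """
    base, recent = Counter(baseline), Counter(window)
    alerts = []
    for tool, count in sorted(recent.items()):
        if tool not in base:
            alerts.append(f"new tool in use: {tool} (calls={count})")
        elif count >= min_count and count > spike_factor * base[tool]:
            alerts.append(f"spike: {tool} calls {base[tool]} -> {count}")
    return alerts


if __name__ == "__main__":
    baseline = ["search"] * 20 + ["read_doc"] * 2
    window = ["search"] * 18 + ["read_doc"] * 9 + ["send_email"]
    print(drift_alerts(baseline, window))
    # ['spike: read_doc calls 2 -> 9', 'new tool in use: send_email (calls=1)']
```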

Human Oversight Design

Human-in-the-Loop sounds great in theory. In practice, as I’ve argued previously, it doesn’t scale at machine speed, and the Anthropic report mentioned above bears that out, with roughly 40% of experienced users ceding full authority to agents. Organizations need to design tiered oversight models that reflect the adaptive nature of decision authority: fully autonomous for low-risk actions, human-on-the-loop for medium-risk workflows, and hard human-in-the-loop gates for high-risk or irreversible decisions. We’re even seeing the rise of concepts such as “Guardian Agents,” which use agents to monitor and govern other agents, given HITL’s bottlenecks and constraints. One size does not fit all, and the right level of oversight should shift dynamically based on context rather than being fixed in a policy document.
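The tiered model can be encoded as a small routing function that every proposed action passes through. The risk labels and the `Action` shape are hypothetical; the one design choice I’d insist on is failing closed, so an action nobody has classified goes to a human rather than running unattended.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTONOMOUS = "execute, log only"
    HUMAN_ON_THE_LOOP = "execute, notify a reviewer who can halt or roll back"
    HUMAN_IN_THE_LOOP = "block until a human approves"


@dataclass(frozen=True)
class Action:
    name: str
    risk: str          # "low", "medium", or "high", from your own classification
    reversible: bool


def route(action: Action) -> Route:
    if action.risk not in {"low", "medium", "high"}:
        return Route.HUMAN_IN_THE_LOOP  # fail closed on unclassified actions
    # Hard gate: anything high-risk or irreversible waits for a human.
    if action.risk == "high" or not action.reversible:
        return Route.HUMAN_IN_THE_LOOP
    if action.risk == "medium":
        return Route.HUMAN_ON_THE_LOOP
    return Route.AUTONOMOUS
```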

Supply Chain and Plugin Governance

Agents increasingly leverage plugins, skills, and third-party integrations that bring software supply chain risk into the agentic layer. Research such as “Malicious Agent Skills in the Wild” demonstrates that malicious skills are now rampant and that users often consume them with little to no rigor or assessment. If you’re not evaluating the provenance and security of agent plugins as rigorously as your software dependencies, you’re letting risk in through the back door via a novel attack vector that many haven’t accounted for yet, but attackers certainly have.
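One concrete provenance control, borrowed from hash-pinned dependency lockfiles, is to allow a plugin or skill only if it is on an approved list and its exact contents match the SHA-256 digest recorded when it was reviewed. The manifest format and names below are hypothetical; this catches tampering and unvetted additions, not a skill that was already malicious when it was approved.

```python
import hashlib
from pathlib import Path
from typing import Dict


def digest(content: bytes) -> str:
    return hashlib.sha256(content).hexdigest()


def verify_plugin(name: str, content: bytes, approved: Dict[str, str]) -> bool:
    """Allow a plugin only if it is approved and byte-for-byte identical to the reviewed version."""
    pinned = approved.get(name)  # approved: plugin name -> pinned SHA-256 hex digest
    return pinned is not None and digest(content) == pinned


def verify_plugin_file(name: str, path: Path, approved: Dict[str, str]) -> bool:
    # Convenience wrapper for skills or plugins stored on disk.
    return verify_plugin(name, path.read_bytes(), approved)
```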

Where We Go From Here

The uncomfortable reality is that the standards bodies, regulatory frameworks, and compliance regimes the industry relies on are months, if not years, away from adequately addressing agentic AI.

NIST’s recent RFI on securing agentic AI is encouraging, the OWASP Agentic AI Top 10 is a strong technical foundation, and emerging research like Engin and Hand’s dimensional governance model points in the right direction. But none of this translates directly into the governance program your CISO, GRC lead, or board is asking for today.

Organizations that wait for perfect guidance will find themselves governing yesterday’s risks while today’s agents operate unchecked.

The approach I’ve outlined here isn’t exhaustive, and it’s not meant to be. It’s a starting point, a practical framework that meets organizations where they are, integrates with what they’ve already built, and addresses the unique risk properties of agentic AI across deployment models through an adaptive governance lens anchored in decision authority, process autonomy, and accountability.

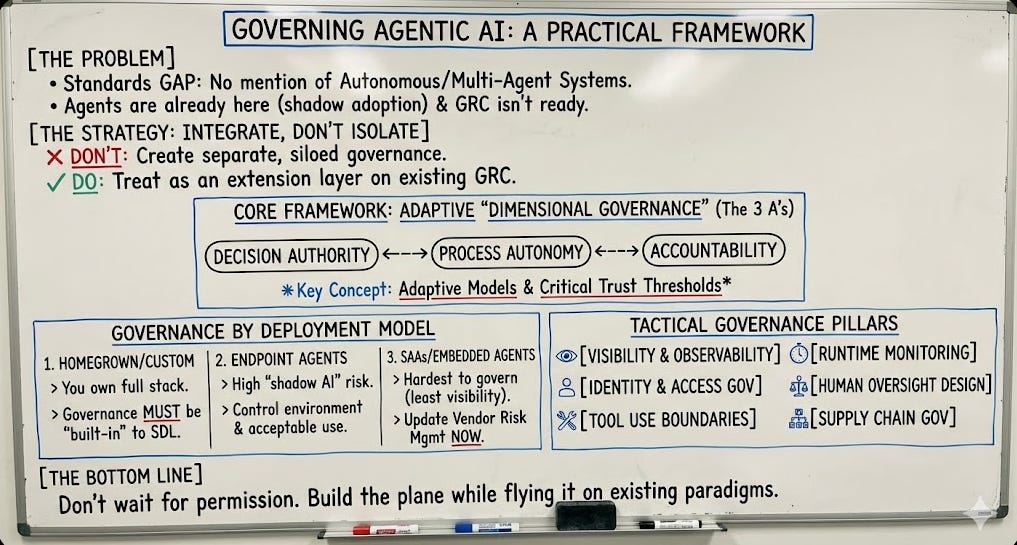

Below is a visualization to try and capture some of the quick reference items we’ve discussed:

The frameworks will eventually catch up; they always do. But they perpetually lag the real technology landscape, so by the time they arrive, the goalposts will have moved again anyway.

The organizations that thrive in the agentic era won’t be the ones who waited for permission to govern, they’ll be the ones who built the plane while flying it, grounded in the foundational people, process, and technology paradigm that has always underpinned effective governance.

The question isn’t whether you need agentic AI governance, it’s whether you’ll build it before or after something goes wrong.
