When the Frontier Labs Sneeze, the Cybersecurity Market Catches a Cold

A look at the AI-native encroachment on not just SaaS but Cyber

This week Anthropic launched Claude Code Security, a native capability built into Claude Code on the web that scans codebases for security vulnerabilities, suggests targeted software patches, and does so with a reasoning-based approach that goes well beyond traditional rule-based static analysis.

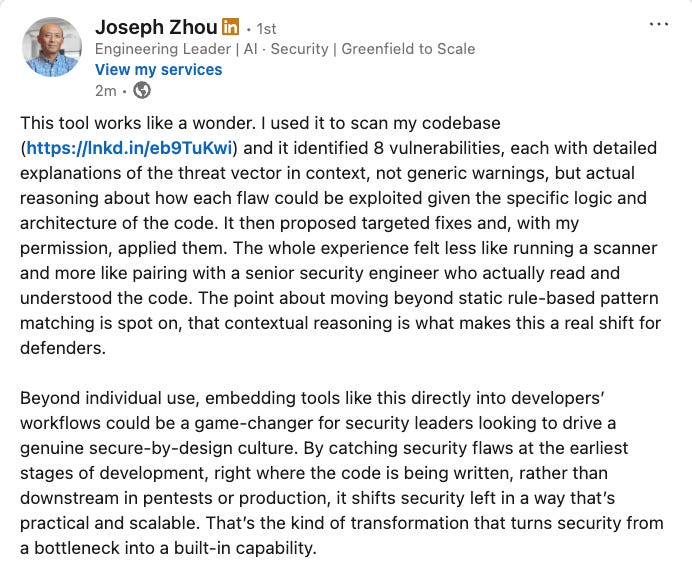

It has already set the cyber practitioner community buzzing, with reactions such as the one below from Joseph Zhou:

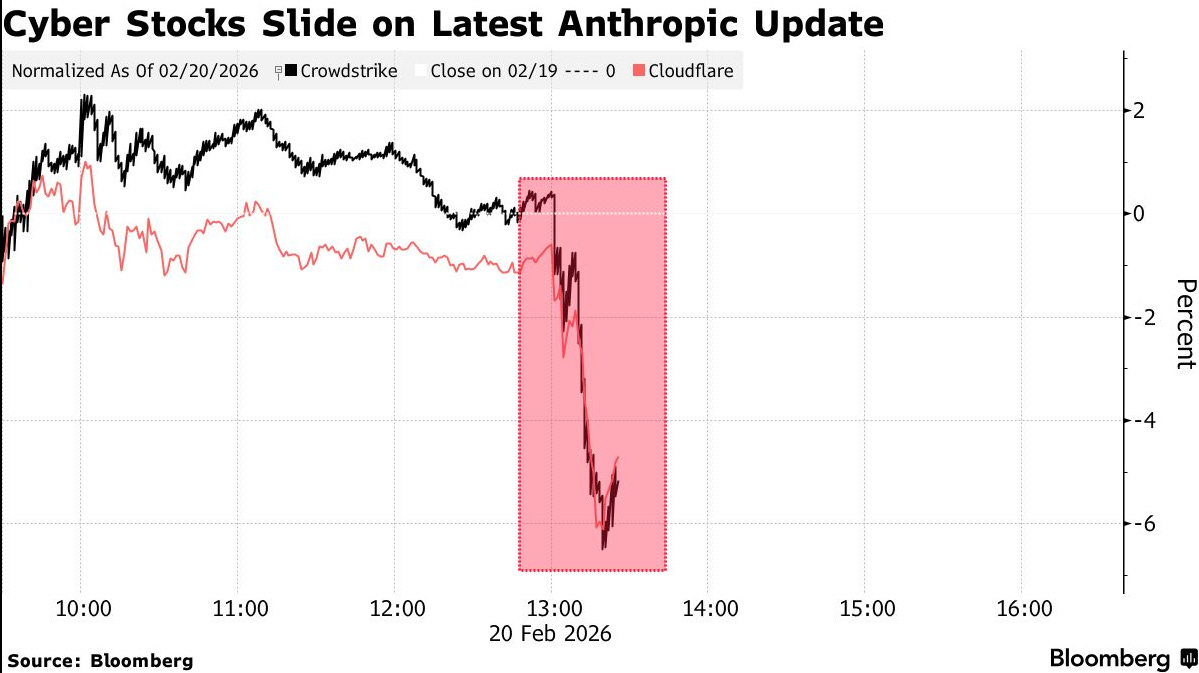

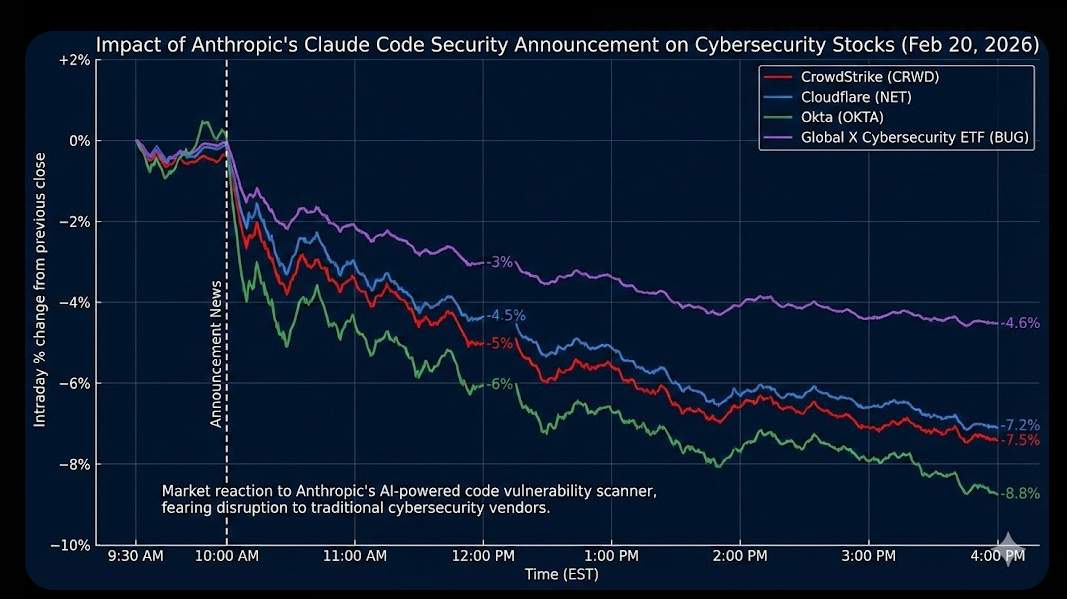

Within hours of the news, as reported by Bloomberg and others, cybersecurity stocks started tumbling. CrowdStrike dropped as much as 7.9%. Cloudflare slumped over 7%. Okta fell 9.6%. SailPoint shed 8.6%. The Global X Cybersecurity ETF extended its year-to-date losses to nearly 16%.

This isn’t the first time we’ve seen frontier AI labs rattle the software market, and it won’t be the last. But the cybersecurity implications deserve a closer look, because what’s happening here isn’t just a stock market reaction. It’s a structural signal about where the industry is headed.

The SaaSpocalypse Comes for Cyber

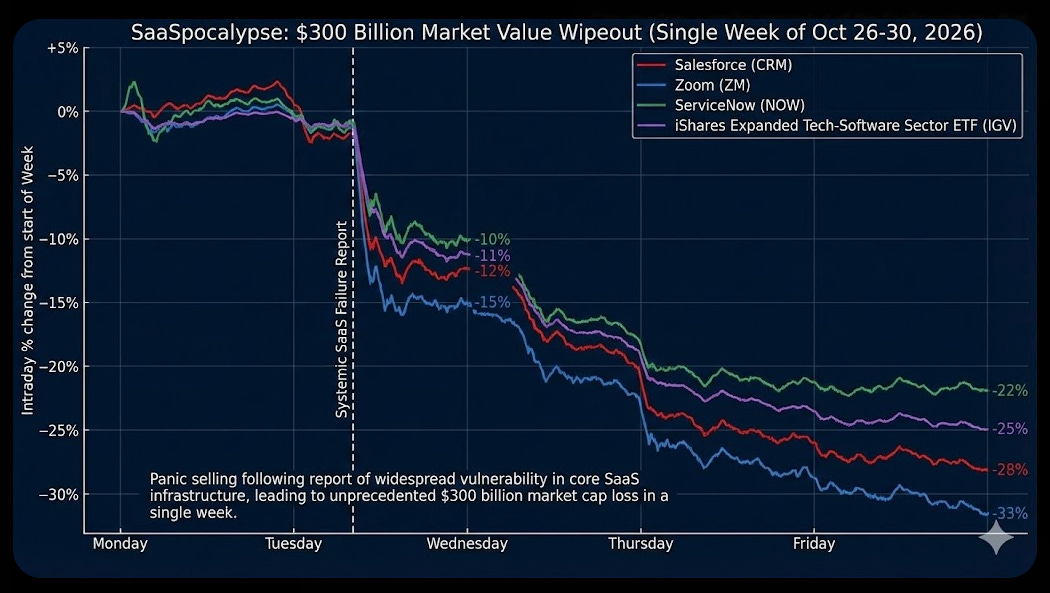

For those who haven’t been following the broader software market, the last month has been brutal. Some have dubbed it the “SaaSpocalypse,” a term coined after Anthropic’s Claude Cowork and OpenAI’s Codex desktop app triggered a historic sell-off that wiped nearly $300 billion in market value from the application software layer in a single week. Atlassian plunged 35%. The iShares Tech-Software ETF retreated roughly 30% from its late 2025 highs.

The thesis is straightforward: frontier AI labs are moving up the stack. They’re no longer content selling APIs and models. They’re building the capabilities that historically belonged to standalone software vendors and products. Legal tools, project management, document workflows, and now, cybersecurity.

Whether you think the SaaSpocalypse is overblown or a genuine structural reckoning, the directional signal is hard to ignore.

The frontier labs have something most cybersecurity vendors don’t: AI-native architecture, exponential distribution, nearly unlimited investment capital and a pace of innovation that’s measured in weeks, not quarters.

The Distribution Advantage is Staggering

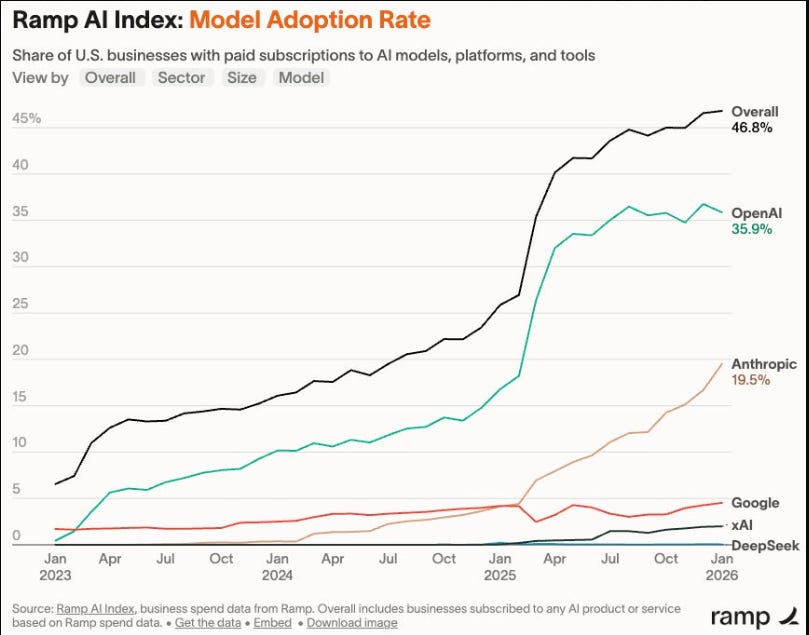

Consider the numbers. Anthropic’s revenue trajectory tells the story better than any analyst note. They went from $1 billion in annual recurring revenue in December 2024 to $4 billion by mid-2025, $9 billion by end of 2025, and $14 billion in February 2026. That’s $1B to $14B in roughly 14 months.
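To put that trajectory in perspective, here is a rough back-of-the-envelope sketch of the implied compound monthly growth rate. It uses only the start and end figures quoted above and assumes smooth compounding over 14 months, which real revenue never follows exactly. The function name is my own, for illustration.

```python
import math

def implied_monthly_growth(start_arr: float, end_arr: float, months: int) -> float:
    """Compound monthly growth rate that takes start_arr to end_arr in `months` months."""
    return (end_arr / start_arr) ** (1 / months) - 1

# Figures from the text: $1B ARR (December 2024) to $14B ARR (February 2026).
# Assumption: growth compounds evenly across the 14 months in between.
rate = implied_monthly_growth(1.0, 14.0, 14)

# Doubling time implied by that rate, in months.
doubling_months = math.log(2) / math.log(1 + rate)

print(f"Implied monthly growth: {rate:.1%}")
print(f"Implied doubling time: {doubling_months:.1f} months")
```

Under that assumption, the implied growth works out to roughly 21% per month, or a doubling of ARR a little under every four months.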

The Ramp AI Index for February 2026 shows Anthropic adoption growing from 16.7% to 19.5% of businesses, one of its largest monthly gains since tracking began. A year ago, it was one in 25 businesses. Now it’s one in five.

This isn’t just about revenue; it’s about distribution and surface area. When you have that kind of adoption curve, every new capability you ship reaches an enormous installed base overnight, because platforms exert a gravitational pull.

Claude Code Security doesn’t need a sales team, a channel strategy, or a proof-of-concept cycle. It ships as a feature within a platform that millions of developers and enterprises are already using. That’s the asymmetry that traditional cybersecurity vendors are up against.

And importantly, 79% of Anthropic’s customers also pay for OpenAI. These businesses are running multi-model strategies, which means the frontier labs aren’t just competing with each other for wallet share; they’re collectively expanding the surface area of what AI-native platforms can do, and each expansion potentially commoditizes another category of standalone tooling, as they monetize your perceived moat.

AI-Native Innovation vs. Bolted-On AI

Here’s where the cybersecurity angle gets particularly interesting. Anthropic isn’t bolting AI onto a legacy scanning tool, as we’re seeing several existing SAST and AppSec vendors do. Those vendors are looking to tap into the capabilities of LLMs and integrate them into their existing product suites, both to improve the products and to stay relevant.

Claude Code Security reads and reasons about code the way a human security researcher would, understanding how components interact, tracing how data moves through applications, and catching complex vulnerabilities that rule-based tools miss. Every finding goes through a multi-stage verification process where Claude re-examines each result, attempting to prove or disprove its own findings. Contrast that with legacy SAST tooling which is notoriously noisy, full of false positives, typically rules-based, lacks context and is the bane of existence for many developers dealing with spreadsheets and UIs full of non-risks.

Several research publications have shown that LLM-based techniques for static bug detection can decrease false positives by 94-98%. Another, “LLM-Driven SAST-Genius”, explored the synergy between LLMs and SAST and reported a false positive (FP) reduction of 91%.

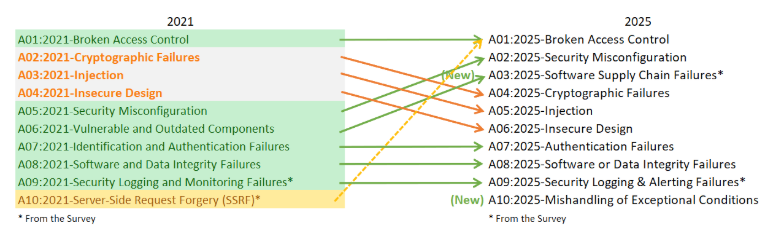
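To make those percentages concrete, here is a small worked example of what a 91-98% false positive reduction does to the signal a developer actually sees. The finding counts are hypothetical numbers I picked for illustration, not figures from the cited research, and the sketch assumes every true positive survives the LLM triage step.

```python
def precision(true_positives: int, false_positives: int) -> float:
    """Share of reported findings that are real issues."""
    return true_positives / (true_positives + false_positives)

def remaining_false_positives(false_positives: int, reduction: float) -> int:
    """False positives left after removing the given fraction of them."""
    return round(false_positives * (1 - reduction))

# Hypothetical scan: 1,000 findings, of which only 100 are real.
TRUE_POSITIVES = 100
FALSE_POSITIVES = 900

print(f"baseline precision: {precision(TRUE_POSITIVES, FALSE_POSITIVES):.1%}")

# Reduction rates quoted in the text (91%, and the 94-98% range).
for reduction in (0.91, 0.94, 0.98):
    left = remaining_false_positives(FALSE_POSITIVES, reduction)
    print(f"{reduction:.0%} FP reduction: {left} false positives left, "
          f"precision {precision(TRUE_POSITIVES, left):.1%}")
```

In this made-up scenario a developer goes from one real issue in every ten findings to a queue where most findings are real, which is the difference between a tool that gets ignored and one that gets used.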

This is a fundamentally different approach than what most application security vendors offer today. Traditional static analysis is rule-based, matching code against known vulnerability patterns. It catches common issues like exposed passwords or outdated encryption but often misses more complex vulnerabilities like flaws in business logic or broken access control. The kind of stuff that actually gets exploited.
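A toy sketch helps show where that ceiling comes from. The scanner below is a deliberately simplified stand-in for rule-based static analysis; the rule names, regexes, and sample snippets are all hypothetical, and real SAST engines are far more sophisticated than two regular expressions. The blind spot is the same in kind, though.

```python
import re

# Hypothetical rule set: each rule is a name plus a regex matched against source text.
RULES = {
    "hardcoded-password": re.compile(r"password\s*=\s*['\"][^'\"]+['\"]", re.IGNORECASE),
    "weak-hash-md5": re.compile(r"hashlib\.md5\("),
}

def scan(source: str) -> list[str]:
    """Return the names of all rules that match somewhere in the source."""
    return [name for name, pattern in RULES.items() if pattern.search(source)]

# A known-bad pattern: a secret embedded directly in the code.
HARDCODED_SECRET = '''
def connect():
    password = "hunter2"
    return db.connect(user="admin", password=password)
'''

# Broken access control: any logged-in user can fetch any invoice, because
# nothing checks that the invoice belongs to current_user. There is no
# suspicious token to match; the flaw is a check that is absent.
MISSING_OWNERSHIP_CHECK = '''
def get_invoice(current_user, invoice_id):
    return Invoice.objects.get(id=invoice_id)
'''

print(scan(HARDCODED_SECRET))
print(scan(MISSING_OWNERSHIP_CHECK))
```

The first snippet trips the password rule; the second returns nothing, even though it is the more dangerous of the two. Spotting it requires knowing that invoices have owners and that this handler should enforce that, which is a reasoning problem rather than a matching problem.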

In fact, look at OWASP’s 2025 update to the Top 10 for Web Application Security Risks: Broken Access Control was, and still is, at the top of the list 4-5 years later. I covered this previously in my article “The OWASP Top 10 Gets Modernized”.

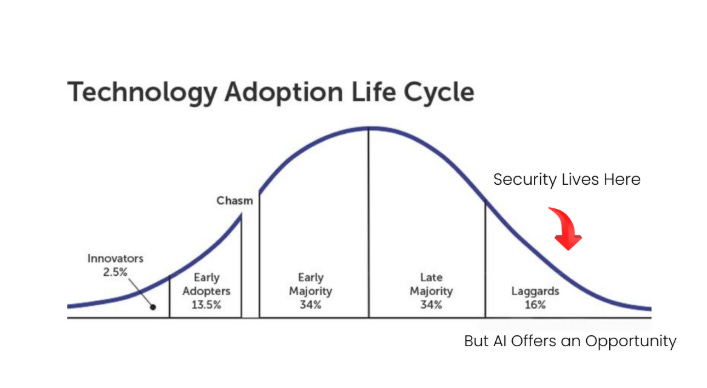

I’ve written extensively about these limitations in pieces like “Security’s AI-Driven Dilemma” and throughout my upcoming book with Ken Huang on secure vibe coding. I’ve been arguing that security needs to pivot from being a laggard and late adopter to an innovator and early adopter of AI to address systemic challenges across countless categories, including AppSec, where noisy tools, exponentially growing vulnerability backlogs and more have plagued teams for years.

And, to our credit, many enterprise organizations and CISOs are looking to do that, as are many existing and innovative new AppSec startups. But the frontier labs expanding their native capabilities throws a curveball into that equation, at a pace and distribution scale that many AppSec companies and teams simply can’t match.

While practitioners are celebrating the announcement, VCs and founders are likely commiserating, especially if their entire business model was focused around finding vulnerabilities and flaws in code in the traditional static analysis sense.

The reality is that much of the AppSec tooling market has been built on pattern matching, and pattern matching has inherent ceilings. When a frontier model can reason about code contextually, tracing data flows and understanding component interactions, it’s not just an incremental improvement. It’s a category-level leap.

And Anthropic’s results speak for themselves. Using Claude Opus 4.6, their team found over 500 previously unknown high-severity vulnerabilities in production open-source codebases, bugs that had gone undetected for decades despite years of expert review. As Logan Graham, head of Anthropic’s frontier red team, put it:

"The models are extremely good at this, and we expect them to get much better still... I wouldn't be surprised if this was one of — or the main way — in which open-source software moving forward was secured"

But, as we’re seeing, that capability doesn’t and shouldn’t apply only to open source, but to enterprise codebases as well (which are overwhelmingly made up of OSS, but that’s another topic). That’s the part that excites defenders and security customers but should keep security vendors up at night. The capabilities are improving at the pace of model releases, not product roadmaps, which is a massive competitive challenge for those competing in direct and adjacent categories that the model providers are moving into.

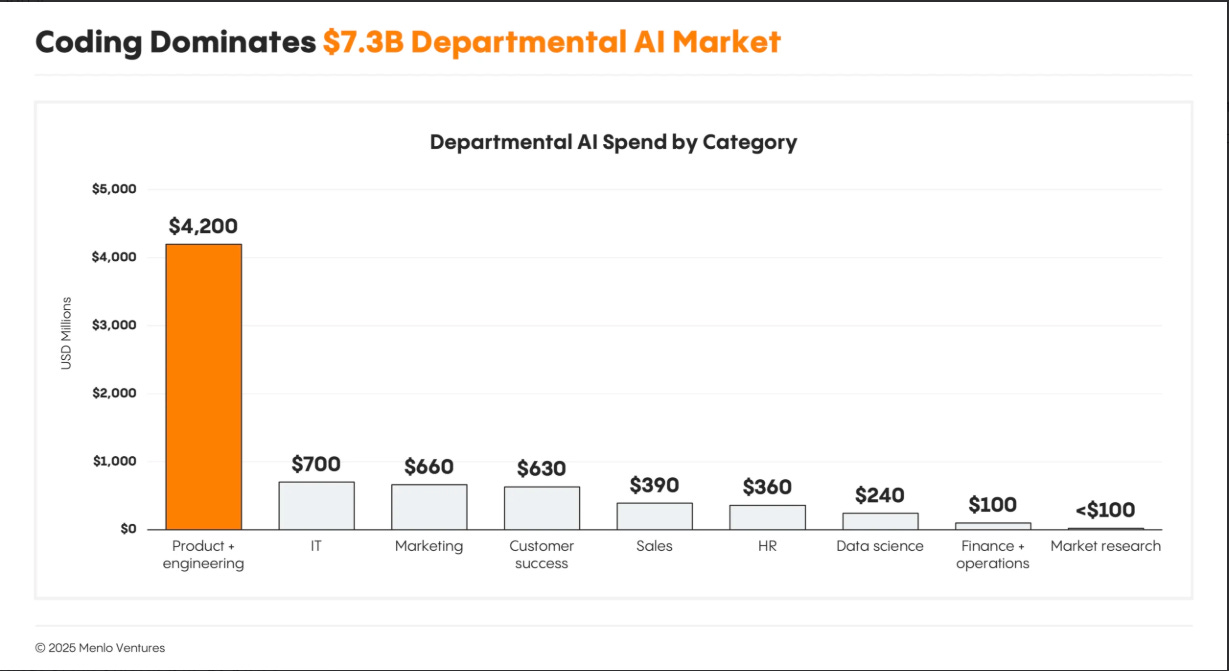

It’s no surprise that AppSec is being hit first and hardest, as coding is currently among the most dominant use cases for AI, due to its verifiability. This was captured well by Menlo Ventures in their 2025 State of GenAI in the Enterprise Report.

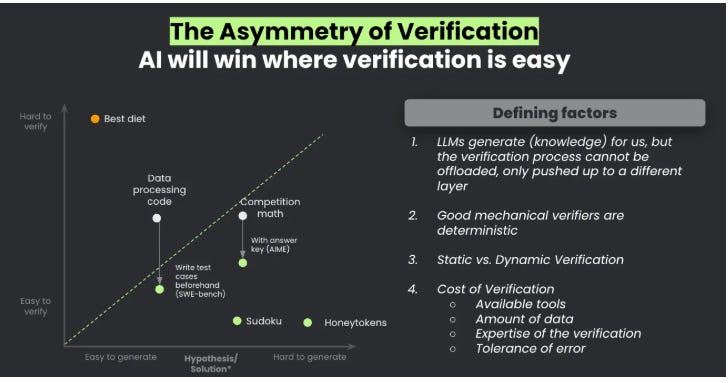

The same applies to AppSec and vulnerabilities, where we’ve seen massive leaps in automated vulnerability discovery and exploit development with AI. It ties into what Sergej Epp laid out in his excellent piece on verifiability titled “Winning the AI Cyber Race: Verifiability is All You Need”.

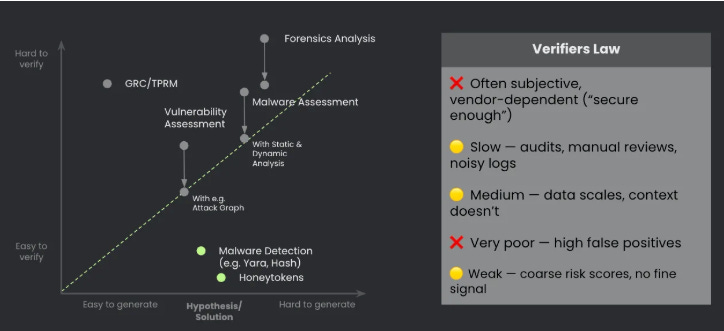

He pointed out that AI will win where verification is easy, which in terms of cybersecurity use cases, is much more applicable in AppSec and code analysis than it is in more subjective fields such as TPRM, GRC etc. (although much can be verified there too).

The CSP Playbook Repeats Itself

We’ve seen this movie before. Over the past decade, cloud service providers like AWS, Azure, and GCP systematically absorbed capabilities that once sustained entire product categories. Logging, monitoring, identity, secrets management, container security: one by one, the CSPs shipped native capabilities that were “good enough” for the majority of customers, and the standalone vendors had to either move upmarket, differentiate on depth, or get absorbed.

None of this is to say those categories don’t still exist, and in fact many of them have continued to see tremendous growth. That said, when the platform that enterprise customers already operate on has native capabilities that meet their needs, it becomes a compelling alternative to standalone security vendors and products.

The frontier AI labs are running the same playbook, but faster. Much faster. The competitive pressure isn’t just from one direction either. OpenAI launched its own agentic capabilities with ChatGPT Agent Mode and its Frontier platform. Google is shipping security capabilities natively into its cloud and AI offerings.

The model providers are simultaneously the platform, the distribution channel, and increasingly, the product.

For cybersecurity vendors, this creates a challenging strategic question. If your core value proposition is scanning code for vulnerabilities and suggesting fixes, and a frontier model can do that natively within a platform that already has millions of developers, what’s your moat?

Is it your data? Your integrations? Your compliance certifications? Or is it the depth and specificity that a general-purpose model can’t yet match?

And, for those not operating in AppSec or code security, where do you sit on the spectrum of the verifier’s law, and what is the probability your capability gets commoditized next?

What This Means for Cybersecurity Vendors

I want to be clear: I don’t think this is an extinction event for cybersecurity.

Markets often trade the direction of travel, not the fine print, and the direction here is that frontier AI models are moving from only writing code to also policing it. I do want to call out one thing here too: this ironically positions the frontier labs as both the fireman and the arsonist at the same time, a position some of the leading software and tech companies often occupy.

Big tech companies often produce software and products, much of which is both widely used and insecure, and then turn around and sell the fix. The largest players now have tens of billions in security revenue.

This is shaping up similarly for the frontier labs, as they produce the overwhelming majority of code, much of which is still insecure for various reasons. Now they will help address the vulnerabilities in that code, and of course there is a cost associated with using the capabilities to do that. (Although in the case of AI coding, there’s a chance the code quality and security improve substantially as the underlying models do.)

But the cybersecurity market is deep, complex, and multi-layered. Endpoint protection, identity, network security, threat intelligence, and incident response are domains where outcomes, organizational context, real-time threat data, and deep enterprise integration still matter enormously. So does the services aspect, especially as organizations continue to mature and shift to buying outcomes, not just tools. Omdia’s Chief Analyst Jay McBain made this point recently, noting that services revenue is now 2x products.

The vendors most at risk are those whose primary value proposition sits in the path of what models can natively do: pattern-based code scanning, basic vulnerability detection, and simple compliance checks. Again, this is the “verifier’s law” per Sergej Epp.

The vendors with the strongest position are those who own unique data, have deep workflow integration, or operate in domains where the cost of a false negative is measured in breached infrastructure, not just a missed bug, as well as complex, subjective organizational context that can’t be so simply reasoned about by frontier models.

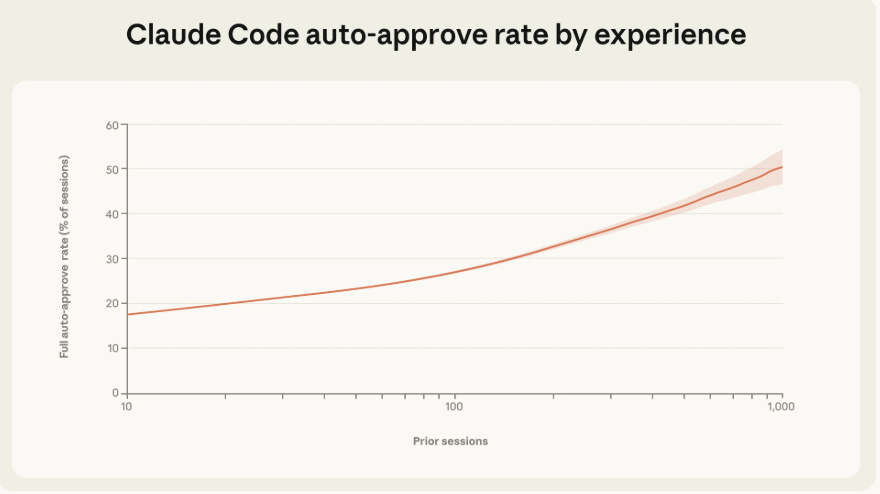

That said, as I noted above and have been emphasizing for a while now, cybersecurity needs to pivot from being a typical late adopter and laggard to being an early adopter and innovator with AI. The incentives for speed and winning outweigh the incentives for foundational security, a tale as old as time when it comes to cybersecurity. This is playing out in Anthropic’s own data, such as their recent report on measuring agent autonomy, where they found that as users have more sessions with Claude, they increasingly just run it on full auto-approve, rarely stopping to interject.

The vendors who survive and thrive will be those who leverage frontier models as infrastructure rather than trying to compete against them with homegrown models or bolted-on AI features.

The Bigger Picture

Anthropic framed Claude Code Security as a defensive capability: putting the power of AI-driven vulnerability research in the hands of defenders before attackers can exploit those same capabilities offensively.

They’re right about the urgency. We’ve already seen AI-generated malware exploiting real-world vulnerabilities like React2Shell, and the barrier to weaponizing critical vulnerabilities is collapsing as models improve. Anthropic made noise themselves in 2025 when they announced that they had disrupted the first reported AI-orchestrated cyber espionage campaign, a story that made a ton of headlines and was covered well by others such as Rob T. Lee.

But there’s a tension here I mentioned above that the industry needs to reckon with. The same frontier labs that are commoditizing security capabilities are also the ones whose models and platforms generate the code that needs securing. They’re creating both the problem and the solution, and they’re in a unique position to do both at a pace and scale that standalone vendors can’t match.

Whether that dynamic ultimately helps or hurts the broader security ecosystem is one of the defining questions of this era. That said, what’s clear is that the frontier labs aren’t just adjacent to cybersecurity anymore. They’re in it, and the market is telling us it noticed.

The most dangerous competitor in cybersecurity isn’t the startup in your lane, it’s the frontier lab that doesn’t even consider you a competitor yet.

Stay Resilient!
