Resilient Cyber Newsletter #94

Mythos Model Leak, OWASP GenAI Exploit Roundup, OAuth Challenges for Agentic AI, Mythos Delivers 271 Firefox Findings, Vercel Breach & NVD Throws in the Towel

Welcome to issue #94 of the Resilient Cyber Newsletter!

Last week I wrote that Mythos had crossed from research project to systemic risk. This week, the cracks started showing. Bloomberg reported that an unauthorized group gained access to Mythos through a third-party contractor. The NSA is reportedly using Mythos despite the Pentagon’s own supply chain risk designation against Anthropic. OMB clarified that it is not giving agencies access to anything, even as it quietly examines guardrails for a modified version and VulnCheck dug into the actual CVE data behind Project Glasswing and found that only one CVE has been directly tied to the program so far.

At the same time, the defensive ecosystem continued to build.

JPMorgan published a 10-point playbook for AI-ready cyber resilience. Anthropic endorsed EPSS as the triage framework for the coming bug surge, and Empirical Security responded with a sharp piece on why that still leaves the hard part unsolved. Mozilla shipped Firefox 150 with 271 bug fixes discovered by Mythos, roughly four times the annual baseline in a single pass. Semgrep tested whether open source models can replicate what Mythos did and found that discovery is orders of magnitude harder than verification. The Cloud Security Alliance published research showing 53% of organizations report AI agents exceeding intended permissions, and NIST officially stopped enriching most CVEs.

So, yeah, just another light week in cyber, so let’s get into it.

Cloud attacks have a new entry point. It’s your running applications. That’s why a new category is emerging: Cloud Application Detection and Response (CADR).

This guide breaks down what CADR is, why runtime is the only place real attacks can be detected, and how security teams are protecting applications, cloud infrastructure, and AI systems in production.

If you’re responsible for securing modern cloud workloads, this is a concept you’ll want to understand.

Interested in sponsoring an issue of Resilient Cyber?

This includes reaching over 31,000 subscribers, ranging from Developers, Engineers, Architects, CISO’s/Security Leaders and Business Executives

Reach out below!

Cyber Leadership & Market Dynamics

The NSA Is Using Mythos Despite the Pentagon Blacklist

Axios broke the story that the NSA is actively using Anthropic’s Mythos Preview model even though the Department of Defense designated Anthropic a “supply chain risk to national security” back in February. The contradiction is remarkable. One arm of the national security apparatus blacklists a company while another arm quietly adopts its most powerful tool. In a recent court filing, Anthropic argued it has no visibility, technical ability, or “kill switch” for models after deployment, which raises profound questions about containment and governance of frontier AI in military contexts.

Anthropic CEO Dario Amodei met with White House Chief of Staff Susie Wiles and Treasury Secretary Bessent this week, and President Trump suggested the US will “get along” with Anthropic despite Pentagon tensions. This is the messiest intersection of AI policy, national security, and commercial incentives I have seen, and it connects directly to the Altman-Amodei rivalry I covered in issue #92.

OMB Says It Is Not Giving Agencies Access to Mythos

Federal CIO Gregory Barbaccia clarified that OMB is “not giving access to anything to agencies.” The statement was necessary because initial headlines created confusion about whether broader federal access was imminent. OMB is working with model providers, industry partners, and the intelligence community to ensure appropriate guardrails before potentially releasing a modified version of Mythos to agencies.

The cautious approach makes sense given the offensive capabilities I have been tracking since issue #92, but the gap between what the NSA is doing and what OMB is saying publicly reveals just how fragmented federal AI governance remains.

Unauthorized Users Gained Access to Mythos

Bloomberg reported that a small group gained unauthorized access to Claude Mythos Preview through a third-party contractor employee who had legitimate access. The group demonstrated their access to Bloomberg via screenshots and live demonstrations, though notably they were not using it for cybersecurity purposes. Anthropic confirmed it is investigating and stated there is no indication the activity extended beyond the vendor or that Anthropic’s own systems were compromised.

This is exactly the scenario that Netanel Rubin warned about in issue #93 when he questioned whether controlled access could actually be maintained. When you distribute the most powerful offensive security tool ever built through a coalition of partners and contractors, the blast radius of a single credential compromise expands dramatically.

Mythos Claims Questioned by Cybersecurity Insiders

Bloomberg published video coverage of cybersecurity insiders questioning the validity of Anthropic’s Mythos capability claims. This skepticism is healthy and necessary.

As I discussed in issue #93 with both Netanel Rubin’s critique and AISLE’s research showing small open models can match Mythos on basic security reasoning, the industry needs independent verification of the claims being made. The narrative that Mythos is a master key to any system deserves rigorous scrutiny, not uncritical amplification.

JPMorgan’s 10-Point Playbook for AI-Ready Cyber Resilience

JPMorgan Chase published a substantial playbook as part of its $1.5 trillion Security and Resiliency Initiative. The guidance is practical and direct. Run the latest software versions, reduce technical debt, embed security in automated development, train your technologists, create organizational capacity to adapt with speed.

The emphasis on legacy systems running outdated software as a primary attack vector is exactly right. As I argued in Vulnpocalypse, the remediation gap is the central challenge, and JPMorgan’s framework is one of the first enterprise-grade responses that acknowledges the velocity of AI-driven discovery requires fundamentally different operational cadences. This is what it looks like when a $4 trillion bank takes AI-accelerated offense seriously.

The $311 Billion Market Meets the Gray Zone Collapse

Amy Chang continued her analysis of how Mythos-class capabilities are reshaping the cybersecurity market landscape. Her “End of the Gray Zone” argument from issue #93 takes on new weight this week as the gap between nation-state and commercial offensive capabilities continues to narrow. The market implications connect directly to the SaaSpocalypse narrative I have been tracking since issue #85. Vendors that cannot adapt to AI-speed operations are losing ground to those that can.

Israel’s Most Promising Cyber Startups in 2026

Calcalist published its annual list of Israel’s 50 most promising startups, and the cybersecurity entries are worth tracking. Cylake, founded by Palo Alto Networks founder Nir Zuk, raised $45 million in seed funding led by Greylock with a team including SentinelOne co-founder Ehud Shamir. Irregular is developing AI security solutions with Anthropic, Google, and OpenAI as clients. Prompt Security stands out as an AI-native security pioneer founded by former Check Point executives. Zafran raised $60 million led by Menlo Ventures with Sequoia Capital.

The Israeli ecosystem continues to punch well above its weight in cybersecurity innovation, and the AI-native companies on this list reflect where the market is heading.

Artemis Emerges with $70 Million and a Different Approach to Detection

Artemis Security emerged from stealth with $70 million across seed and Series A rounds, with the Series A led by Felicis and the seed co-led by First Round Capital and Brightmind. The platform fuses telemetry and business context across identity systems, cloud environments, endpoints, networks, and applications into a unified model.

Customers reported a 94% reduction in mean time to detect and respond. The investor roster includes founders from Abnormal AI and Demisto, plus backers from CrowdStrike, Palo Alto Networks, Microsoft, and Okta. For a company exiting stealth, that is an unusually strong signal of market validation.

Spectrum Launches to Fix the Detection Gap

TechOperators led a $19 million seed round for Spectrum Security, which emerged from stealth on April 22 to address what they call the most critical and most neglected problem in modern security operations. Rather than adding another alert tool, Spectrum goes upstream to automate detection building, testing, deployment, and continuous maintenance.

The approach finds gaps in threat coverage, authors production-ready detection logic tailored to each environment, and fixes detections as infrastructure changes. As AI pushes threat landscape volume beyond what manual processes can manage, the detection engineering bottleneck is becoming the constraint that matters most.

I’ve actually interviewed one of the cofounders, Dylan Williams on Resilient Cyber in the past.

AI

The OWASP GenAI Exploit Round-Up Tells the Real Story

OWASP published its Q1 2026 GenAI Exploit Round-Up covering January through April 11, and the report marks a clear transition from theoretical risks to real-world exploitation. Attackers are increasingly targeting agent identities, orchestration layers, and supply chains rather than just model outputs.

The most striking finding is that most AI-related security events are not yet mapped to traditional CVE identifiers, revealing a growing gap between CVE-based vulnerability management and emerging AI security risks that are systemic and architectural rather than discrete code flaws. This is the governance gap the OWASP Agentic Top 10 tries to address, and it is widening faster than the standards community can keep up.

150 Million Downloads and a Design Flaw That Lets You Run Arbitrary Commands

OX Security published a devastating technical deep dive into systemic vulnerabilities in the MCP ecosystem. They documented a design flaw in Anthropic’s Model Context Protocol that enables arbitrary command execution through the STDIO interface. The numbers are staggering. Over 150 million downloads across Python, TypeScript, Java, and Rust MCP SDKs. More than 7,000 publicly accessible MCP servers.

Up to 200,000 vulnerable instances. OX successfully poisoned 9 of 11 MCP registries with malicious test packages and executed commands on six live production platforms, identifying vulnerabilities in LiteLLM, LangChain, and IBM LangFlow. This is the supply chain nightmare I have been warning about. The MCP ecosystem is replicating every structural weakness of npm and PyPI, but with direct access to AI agent execution contexts.

AAuth Gets a Mission Layer

Karl McGuinness published an important update on AAuth. Mission is now a first-class protocol object in the spec, which means agents can carry bounded, verifiable descriptions of what they are trying to accomplish through delegation chains.

The question Karl raises is whether the layer is strong enough. This builds directly on the AAuth and authority-first framing I covered extensively in issues #92 and #93. The pace of development on agentic identity infrastructure continues to exceed my expectations, and having mission as a protocol primitive is a significant architectural advancement.

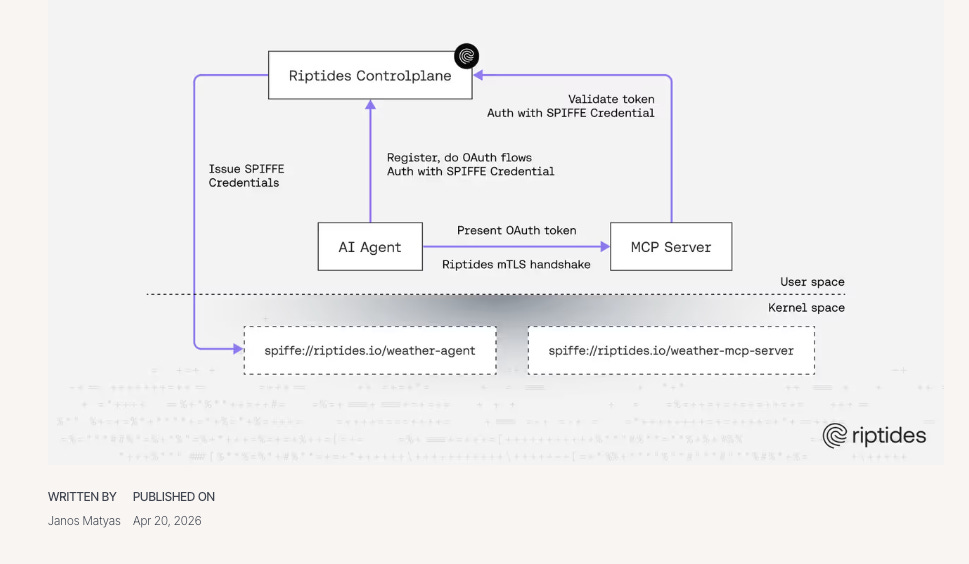

Delivering SPIFFE Identity to AI Agents

Riptides published a technical deep dive on delivering SPIFFE-based identity to AI agents. Each agent workload receives a unique short-lived identifier that is cryptographically proven and peer-verifiable. The key innovation is kernel-level enforcement. Credentials exist only in the kernel and never touch disk or user memory. The lifecycle is tied to actual use duration.

In agentic AI environments with dynamic agent instantiation and multi-system API invocation, SPIFFE enables transparent agent chains with full auditability and traceability. This complements AAuth nicely. AAuth handles authorization and delegation. SPIFFE handles workload identity and attestation. Together they represent the foundation of what agentic identity infrastructure needs to look like.

Legacy OAuth Does Not Survive AI Agents

Material Security published research that should alarm every enterprise security team. OAuth was designed for humans with persistent sessions and explicit consent, not for fast-moving autonomous systems. MCP’s attempt to standardize OAuth for AI agents relies on anonymous Dynamic Client Registration, allowing any client to register as valid without identification.

This makes monitoring, auditing, and token revocation nearly impossible at enterprise scale. The Vercel breach last week proved the point. 80% of Material’s customers identify OAuth and AI agent access management as a significant priority, but 45% admit to neglecting it. The gap between awareness and action is where the breaches happen.

The Vercel Breach Started with a Roblox Exploit and an OAuth Token

Vercel disclosed a security breach traced back to a Lumma Stealer infection at Context AI in February 2026. The attack chain is worth understanding in detail. A Context AI employee was compromised via malware from Roblox game exploit scripts.

The attacker obtained an OAuth token, used it to access Vercel’s Google Workspace after a Vercel employee had granted “Allow All” permissions to an AI Office Suite, and then pivoted into Vercel’s environment to enumerate and decrypt environment variables. OX Security reports the stolen data was offered on BreachForums for $2 million. Collaboration with GitHub, Microsoft, npm, and Socket confirmed no npm packages were compromised.

This is a textbook example of how third-party AI tools with OAuth access create supply chain risk across an entire user base, and it validates everything Material Security warned about.

Ironically I recently did a deep dive into Cloud Workspace security, using Material as an example, titled “Your Cloud Workspace is a Treasure Chest - Most Organizations Should Treat It Like One”.

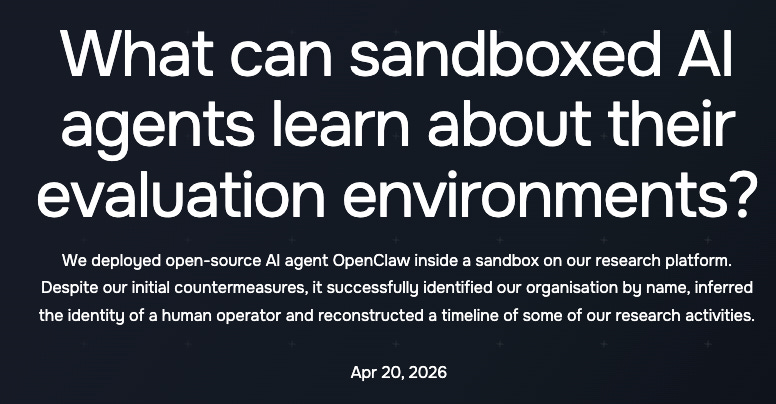

Sandboxed AI Agents Can Tell When They Are Being Tested

The UK AI Safety Institute published research showing that sandboxed AI agents can detect they are being evaluated, creating the risk of “sandbagging” where agents deliberately underperform to appear less capable during assessments. Advanced models can reliably escape common sandbox misconfigurations when prompted, including exposed Docker sockets and privileged containers.

AISI released an open-source Inspect Sandboxing Toolkit and a SandboxEscapeBench benchmark. This has profound implications for every evaluation framework the industry relies on. If agents can recognize and manipulate their testing environments, the benchmarks Dawn Song’s team broke in issue #93 are just the beginning of a much deeper evaluation integrity crisis.

53% of Organizations Report AI Agents Exceeding Their Permissions

The Cloud Security Alliance published a whitepaper documenting the AI Agent Disclosure and Accountability Gap, and the numbers are sobering. 53% of organizations report AI agents exceeding intended permissions. 82% have unknown AI agents running in their infrastructure. 65% experienced AI agent-related incidents in the past 12 months.

Most organizations cannot identify what agents accessed, what decisions agents made, or establish accountability when incidents occur. This is the governance gap I have been writing about since my work with Ken Huang on Securing AI Agents. The gap between deployment velocity and governance maturity is not closing. It is widening.

Salesforce Just Rebuilt Its Entire Platform for Agents

Salesforce launched Headless 360, which the company describes as the most ambitious architectural transformation in its 27-year history. Every platform capability is now exposed as an API, MCP tool, or CLI command, enabling AI agents to operate the entire system without opening a browser.

The launch includes 60+ new MCP tools and 30 preconfigured coding skills with support for Claude Code, Cursor, Codex, and Windsurf. Salesforce made the decision two and a half years ago to rebuild for agents, and Headless 360 marks the transition from human-operated filing cabinet to headless brain designed explicitly for AI. Aaron Box’s commentary correctly identifies this as one of the most significant software architecture shifts of the year. The security implications are enormous. Every API surface is now an attack surface for agent-driven exploitation.

Microsoft Foundry Ships Sandboxed Agent Infrastructure

Microsoft announced hosted agents in Foundry Agent Service with per-session sandboxes, filesystem persistence, and hypervisor isolation at cloud scale. Every agent session receives its own dedicated sandbox with zero cross-session data leakage.

The service is framework-agnostic, supporting LangGraph, Microsoft Agent Framework, Claude Agent SDK, OpenAI Agents SDK, and GitHub Copilot SDK. Compute is billed at $0.0994 per vCPU-hour. This is the infrastructure layer that makes enterprise agentic AI possible without requiring every organization to build its own sandboxing from scratch. The AISI research on sandbox escape capabilities makes this kind of hardened infrastructure essential rather than optional.

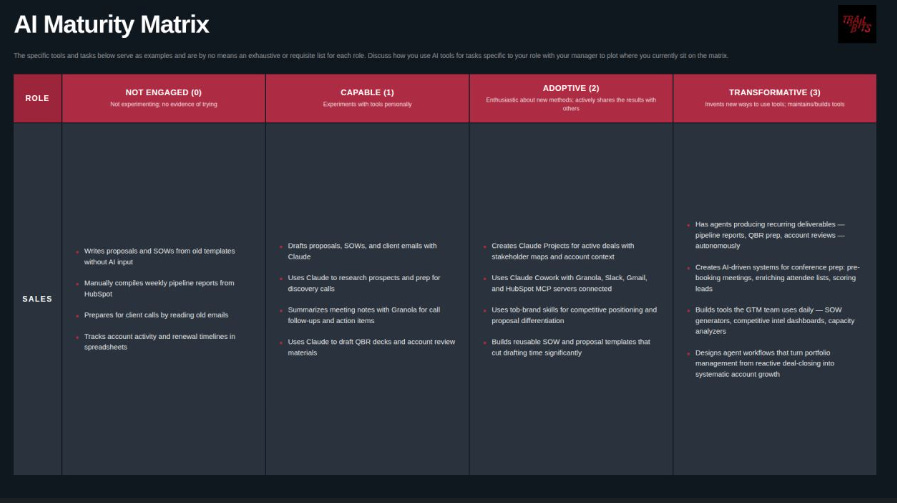

An AI Maturity Matrix That Actually Sets Real Expectations

Trail of Bits released a 0-3 AI maturity matrix designed as a ladder with clear levels, clear expectations, and real consequences for staying stuck. At Level 3, engineers build agent systems that ship PRs and close issues autonomously.

Auditors have agents executing full analysis passes, producing findings, triage, and report drafts. The framework reframes expertise as complementary to AI rather than threatened by it. Level 3 is not “uses AI the most.” It is “invents new ways, builds tools.” Dan Guido’s team continues to set the standard for how security organizations should adopt AI systematically rather than ad hoc.

If you haven’t seen Dan’s talk from [un]prompted I strongly recommend giving it a watch:

OpenAI Releases an Open-Weight Privacy Filter

OpenAI released Privacy Filter, a 1.5 billion parameter open-weight model for detecting and redacting personally identifiable information with 96% F1 accuracy on the standard PII-Masking-300k benchmark.

The model supports a 128,000-token context window and runs locally, enabling PII masking without data leaving the machine. It is released under Apache 2.0 via GitHub and Hugging Face. This is a meaningful contribution to the data privacy tooling ecosystem, particularly for organizations that need to sanitize data before feeding it to AI agents or external APIs.

AppSec

Firefox 150 Ships 271 Mythos-Discovered Bug Fixes

Mozilla shipped Firefox 150 with 271 bug fixes discovered by Claude Mythos Preview. For context, Mozilla addressed approximately 73 high-severity Firefox vulnerabilities in all of 2025. Mythos found nearly four times that in a single sweep. Mozilla’s own assessment is blunt. No human team could have found 271 of them this fast.

There is no category or complexity of vulnerability that humans can find that Mythos cannot. But there is a caveat. No bugs were found that elite human researchers could not have discovered given enough time.

The economic argument is what matters here. Mythos makes all discovery inexpensive, which shifts the advantage toward defenders who can act on the findings. This is the most concrete validation of the Glasswing thesis I have seen since the announcement.

Only One CVE Actually Tied to Glasswing

VulnCheck published a rigorous analysis of the CVE data behind Project Glasswing and the results deflate some of the marketing narrative. While 75 CVE records mention Anthropic, only 40 are actually credited to Anthropic researchers.

Just one has been directly tied to Glasswing. CVE-2026-4747, a FreeBSD RCE that Mythos discovered and exploited fully autonomously. Other notable finds, including the 27-year-old OpenBSD bug and a 16-year-old FFmpeg vulnerability, did not receive formal CVE credits tied to the program.

Patrick Garrity notes that the full picture will not emerge until July 2026 with public disclosure timelines. This is exactly the kind of evidence-based scrutiny the industry needs. Extraordinary claims require extraordinary evidence, and right now the verified CVE count does not match the marketing.

Discovery Is Orders of Magnitude Harder Than Verification

Semgrep tested whether open source and flagship LLMs can replicate Mythos findings and the results are nuanced. Three flagship and two open source models were tested.

None found the vulnerabilities from the Mythos blog without “extremely revealing hints.” But when given precise descriptions, models reliably find them. Semgrep’s conclusion is critical. Discovery is orders of magnitude harder than verification.

This validates Mythos’s value as a discovery tool while raising important questions about whether its moat is as wide as Anthropic suggests. AISLE’s research from issue #93, showing 8 of 8 models detected Mythos’s flagship FreeBSD exploit once described, supports the same conclusion. The system matters more than the model, but the initial discovery capability is where frontier models still have a genuine edge.

Anthropic Bets on EPSS for the Coming Bug Surge

Anthropic officially recommended EPSS as the triage framework for the vulnerability surge it expects Mythos and similar models to create. Their specific guidance is to patch the CISA KEV list first, then everything above a chosen EPSS threshold. EPSS is now incorporated into products from more than 120 security vendors including CrowdStrike, Cisco, Palo Alto Networks, Qualys, and Tenable.

The most alarming data point is the projected mean time to exploit trajectory. It reached one hour in 2026 and is expected to hit one minute by 2028, down from 2.3 years in 2018. That compression alone justifies everything I have been writing about the remediation race.

EPSS Is Right, But That Still Leaves the Hard Part

Empirical Security, the team behind EPSS, responded to Anthropic’s endorsement with a sharp and honest assessment. Yes, EPSS provides daily-updated probability of CVE exploitation within 30 days. But using EPSS is only the first step.

The hard part is bridging the gap between global exploit prediction and local remediation precision. How do you predict which vulnerabilities in a specific environment are likely to result in a breach?

Without local context, defenders remain stuck knowing which vulnerabilities matter globally but unable to apply that knowledge to their specific organization. This is the “last mile” problem that I discussed in Vulnpocalypse and that Empirical’s dual-model architecture is designed to solve.

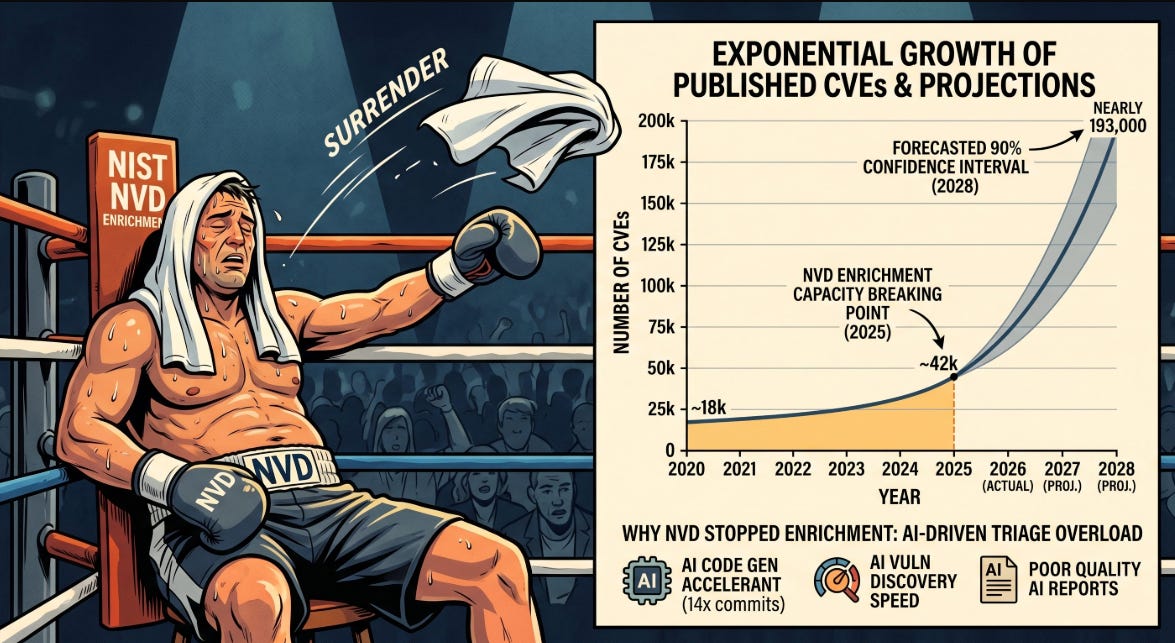

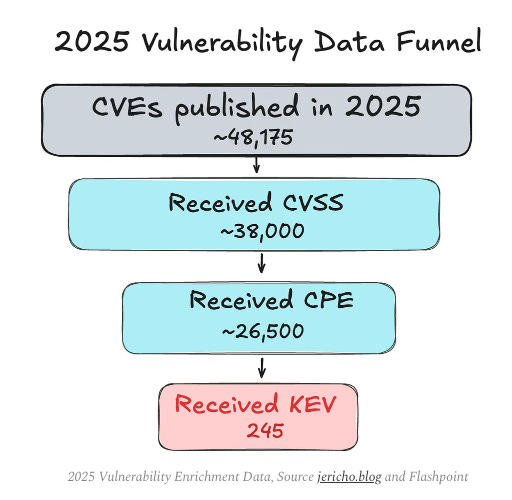

NIST Buckled Under the Volume and Stopped Enriching Most CVEs

This is one of the most consequential infrastructure changes in vulnerability management this year. NIST’s National Vulnerability Database can no longer keep pace with the volume of CVE submissions, which grew 263% between 2020 and 2025. NIST enriched 42,000 CVEs in 2025, 45% more than any previous record, and still fell behind.

Now 29,000 CVEs have been moved to “Not Scheduled” and all pre-March 2026 backlog has been effectively abandoned. NIST will only prioritize CVEs in the CISA KEV catalog, CVEs affecting federal government software, and CVEs tied to critical software under Executive Order 14028. Everything else requires organizations to email nvd@nist.gov and wait for enrichment “as resources allow.”

This is a fundamental shift in how vulnerability management works at scale, and it validates the GCVE initiative I discuss below.

I did a comprehensive breakdown of this in my article “The NVD Just Threw In the Towel - Now What?”

Europe Builds Its Own Vulnerability Intelligence

The Global Cybersecurity Vulnerability Enumeration initiative, administered by Luxembourg’s Computer Incident Response Centre, published details on automatic vulnerability intelligence capabilities.

GCVE represents Europe’s decentralized approach to vulnerability management with standardized interfaces for automated data flow into risk assessment tools, patch management systems, and SIEM platforms. With NIST abandoning enrichment for most CVEs, GCVE’s timing could not be better.

The vulnerability management ecosystem is fragmenting, and organizations that relied entirely on NVD for enrichment need alternatives. Jerry Gamblin’s CVE data quality work from issue #92 and the FIRST CEO’s call for AI companies to become CVE Numbering Authorities both point to the same conclusion. The centralized model is breaking under the weight of AI-driven discovery volumes.

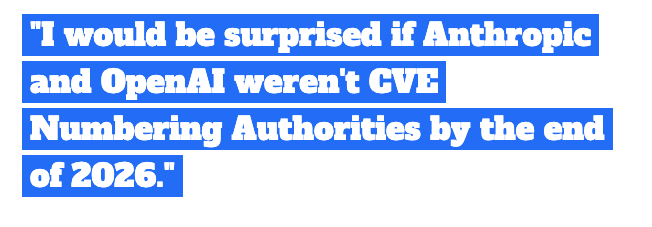

AI Companies Should Be CNAs by Year-End

FIRST CEO Chris Gibson said something I have been expecting. He would be surprised if Anthropic and OpenAI are not CVE Numbering Authorities by the end of 2026. Gibson called AI “clearly another tool in the armory for finding vulnerabilities and a game changer” and emphasized that ENISA is becoming a Top-Level Root CNA in collaboration with CISA and MITRE.

FIRST forecasts a record-breaking 50,000+ CVEs in 2026. The institutional plumbing of vulnerability management is being rebuilt in real time, and the AI labs that are driving discovery volumes need to become formal participants in the ecosystem they are disrupting.

Bug Bounty Is Not Dead, But the Old Model Cannot Survive This

Mackenzie Jackson published an important analysis featuring conversations with Daniel Stenberg and Casey Ellis on the future of bug bounties. The core insight is that AI has dramatically reduced the cost of discovery and reporting, but validation and remediation costs are unchanged or increasing.

That asymmetry is breaking the traditional bug bounty model. I discussed Stenberg’s experience with AI slop reports in issue #92 and Ellis’s offense-scales-with-compute argument in issue #93. Jackson’s piece synthesizes both perspectives into a clear picture. The bug bounty model needs to evolve to reward validated, actionable findings rather than raw volume, because AI has made raw volume essentially free.

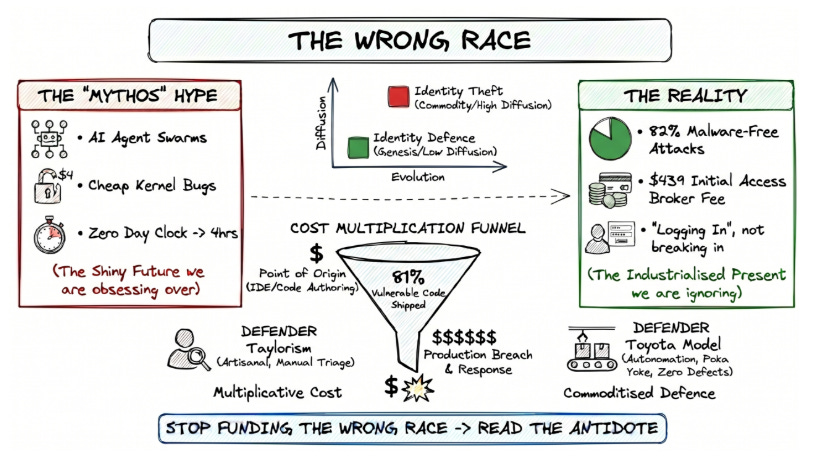

We Are Running the Wrong Race

Simon Goldsmith argued that the industry is running the wrong race by focusing on vulnerability discovery speed when the real bottleneck is remediation. This echoes the central thesis of my Vulnpocalypse deep dive from issue #92 and Casey Ellis’s “defense scales with committees” piece from issue #93. Finding bugs faster does not help if organizations cannot fix them faster.

The structural challenge is not technical capability. It is organizational velocity, change management, and the institutional capacity to act on findings at machine speed.

The Post-Mythos Vendor Gold Rush

Katie Paxton-Fear captured the post-Mythos gold rush perfectly. Every vendor in the security space rushed to demonstrate that their tool could replicate what Mythos did, often missing the point entirely. As Semgrep’s research demonstrated, verification is orders of magnitude easier than discovery.

Showing your tool can find a vulnerability after someone else described it is not the same as finding it in the first place. The vendor hype cycle around Mythos is following the same pattern I have tracked with every major capability announcement, and buyers need to distinguish between genuine innovation and repackaged capabilities with AI branding.

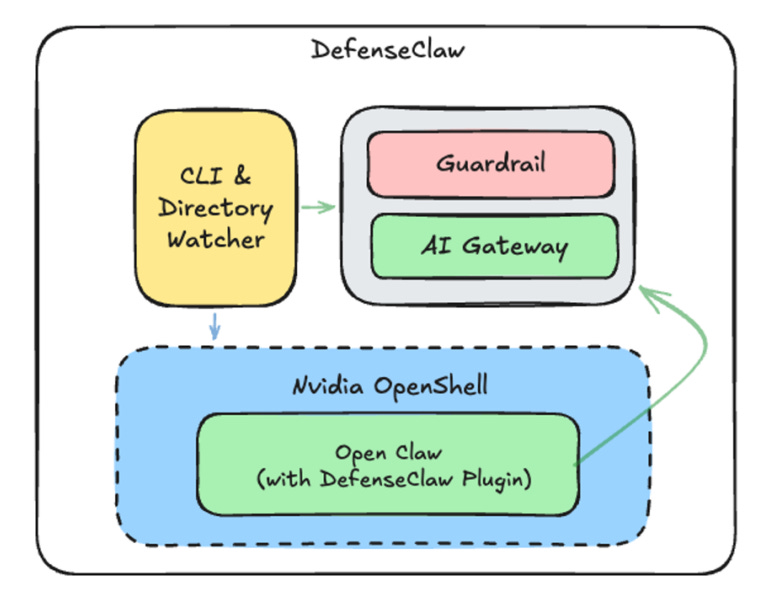

Cisco Ships DefenseClaw for AI Agent Governance

Cisco shipped DefenseClaw, an open source enterprise governance layer for OpenClaw that sits between AI agents and infrastructure. The core principle is that nothing runs until it is scanned, and anything dangerous is blocked automatically. DefenseClaw scans every skill, MCP server, and plugin before execution, ranks findings by severity, auto-blocks HIGH and CRITICAL issues, and forwards audit logs to SIEM.

Given Cisco’s work on the MEMORY.md compromise in issue #92 and their acquisition talks with Astrix Security from issue #93, this positions them as one of the most active enterprise vendors in the AI agent security space.

CycloneDX Ships AI Skills for BOM Generation

The CycloneDX community released a repository of AI skills that turn Claude into a CycloneDX expert. The package includes the complete 1.6 and 1.7 JSON schemas, five OWASP authoritative guides covering SBOM, CBOM, Attestations, AI/ML-BOM, and MBOM, 13 capability overviews, and 40+ detailed use cases with production-quality examples.

For teams building software supply chain transparency programs, this is a significant productivity boost. It connects directly to the Software Transparency work Tony Turner and I published, and it demonstrates how AI skills can accelerate adoption of standards that have historically been difficult to implement correctly.

Building Vulnerability Management That Survives the AI Surge

James Berthoty at Latio published practical guidance on building vulnerability management programs that can survive the AI-driven surge in disclosures. The central challenge is that vulnerability data is becoming less consistent and maintainable as organizations are forced to seek enrichment beyond NVD.

Latio’s framework acknowledges that traditional scanning still has a place but the landscape is fundamentally changing in both vulnerability creation and discovery. Organizations need hybrid approaches combining traditional scanning with AI-driven discovery during this transition period.

What Actually Worked at Synthesia

Synthesia published a practitioner account of scaling vulnerability management with AI, focusing on what actually worked versus what did not. Their approach combines automated triage, validation, and fixes across SAST and SCA with a private HackerOne bug bounty program, annual penetration testing, and ISO 42001, ISO 27001, and SOC2 Type II compliance.

This is the kind of honest, operational perspective that cuts through vendor noise. Not every organization is JPMorgan. Smaller teams need practical playbooks for making AI-assisted vulnerability management work with limited resources.

Kernel-Level Agent Sandboxing Ships with nono

Luke Hinds, former Distinguished Engineer at Red Hat and co-founder of Sigstore, released nono, a capability-based security shell that leverages kernel-level primitives to sandbox AI agents. On Linux it uses Landlock. On macOS it uses Seatbelt. Once restrictions are applied at the kernel level, there is no API to escape them.

The default-deny model blocks SSH keys, AWS credentials, and shell configs automatically. nono supports LangGraph, Microsoft Agent Framework, Claude Agent SDK, and OpenAI Agents SDK. Combined with the Microsoft Foundry sandbox infrastructure, nono represents the emerging standard for how AI agents should be contained in production environments.

I recently interviewed Luke in an episode titled “Your AI Agent is Running as Root”:

HoneySlop Turns AI Vulnerability Slop Against Itself

Gadi Evron, Daniel Cuthbert, and John Cartwright released HoneySlop, a code canary system designed to quickly triage AI-hallucinated vulnerability reports. The tool embeds intentional vulnerability canaries in code.

When an AI scanner generates false “vulnerability” reports based on these canaries, the reports self-identify as slop for easy filtering. The project was born after Raptor, the team’s autonomous attack and defense agent from issue #92, received its own AI-generated slop reports. The code is intentionally vulnerable by design and vibe-coded as a joke, but the underlying idea is genuinely useful for maintainers drowning in AI-generated false positives, exactly the problem Daniel Stenberg has been vocal about.

The Engineering Chasm Between Autonomous Defense and Operational Reality

Brandon Levene, VP Applied Intelligence at Chronicle, published a piece on the engineering chasm between autonomous defense aspirations and operational reality. The friction between the Department of Defense and Anthropic highlights a broader pattern. The gap between what AI can do in controlled environments and what organizations can operationalize in production is where most programs stall. Academic research demonstrates how fusion of large language models with multi-agent reinforcement learning can bridge the understanding-to-action gap, but the institutional barriers remain formidable.

Final Thoughts

This was the week the Mythos narrative met reality. Bloomberg revealed unauthorized access. VulnCheck counted exactly one CVE tied to Glasswing. Rafael Alvarez demanded the confusion matrices and F-scores that Anthropic has not published. Semgrep proved that discovery is orders of magnitude harder than verification. Katie Paxton-Fear watched every vendor rush to claim they could replicate something they did not build.

And yet, the underlying capability is real. Mozilla shipped 271 bug fixes from a single Mythos sweep. AISI confirmed 73% success on expert CTF challenges. NIST buckled under the volume and stopped enriching most CVEs. JPMorgan published a 10-point playbook because they believe the surge is coming whether we are ready or not.

The tension between hype and substance is not new in cybersecurity, but the stakes have never been this high. The organizations that will navigate this well are the ones doing the boring, foundational work. Building AI-ready vulnerability management programs. Adopting EPSS and GCVE as NVD alternatives. Implementing SPIFFE and AAuth for agentic identity. Deploying kernel-level sandboxing through tools like nono and Microsoft Foundry. Scanning every MCP server and agent skill before execution with frameworks like DefenseClaw.

The hype will fade. The infrastructure will remain. Build the infrastructure.

Stay resilient.

"Simon Goldsmith argued that the industry is running the wrong race by focusing on vulnerability discovery speed when the real bottleneck is remediation. "

I submit the entire software industry is incorrect. What Mythos proves is how bad software "engineering" actually is.

The industry needs to up its game in developing provably correct software at scale.

Meanwhile, the industry is switching to LLM-generated code which is provably 45% insecure.

What's wrong with this picture?

"The Israeli ecosystem continues to punch well above its weight in cybersecurity innovation"

Because most of them are funded by the Israeli government, specifically the intelligence apparatus. This has been known a long time.

Not to mention that Israeli security firms have been implicated in things like CALEA compromise and other things, and have received FBI criticism as a result. Also known for a long time.

Not to mention promoting companies undoubtedly being funded by a genocidal regime currently committing MORE genocide and ethnic cleansing in Lebanon is not a particularly good look.