AIUC-1: The Standard Agentic AI Has Been Waiting For

The governance frameworks the industry relies on don’t mention agents. AIUC-1 was built to fill that gap, and it’s exactly what the ecosystem needs.

I’ve spent the past several months making the case that the governance frameworks the industry relies on (NIST AI RMF, ISO 42001, the EU AI Act, and others) have a fundamental blind spot: none of them mention agentic AI.

Not a single reference to autonomous agents, multi-agent systems, or AI that takes real-world actions with real-world consequences. In “The Agentic AI Governance Blind Spot,” I called this out directly.

In “Governing Agentic AI: A Practical Framework for the Enterprise,” I proposed an approach organizations could use to start filling that gap themselves, and in “GRC is Ripe for a Revolution” and “The Compliance Revolution Is Here,” I argued that the entire governance, risk, and compliance paradigm needs to be rebuilt for a world where AI systems operate autonomously, at machine speed, and across organizational boundaries.

The consistent thread through all of that work has been a simple observation: the standards ecosystem hasn’t kept up, and when the standards don’t exist, organizations are left to improvise, usually poorly.

That’s why AIUC-1 caught my attention, and I’m proud to have recently joined its consortium as a member, alongside other industry leaders I really respect.

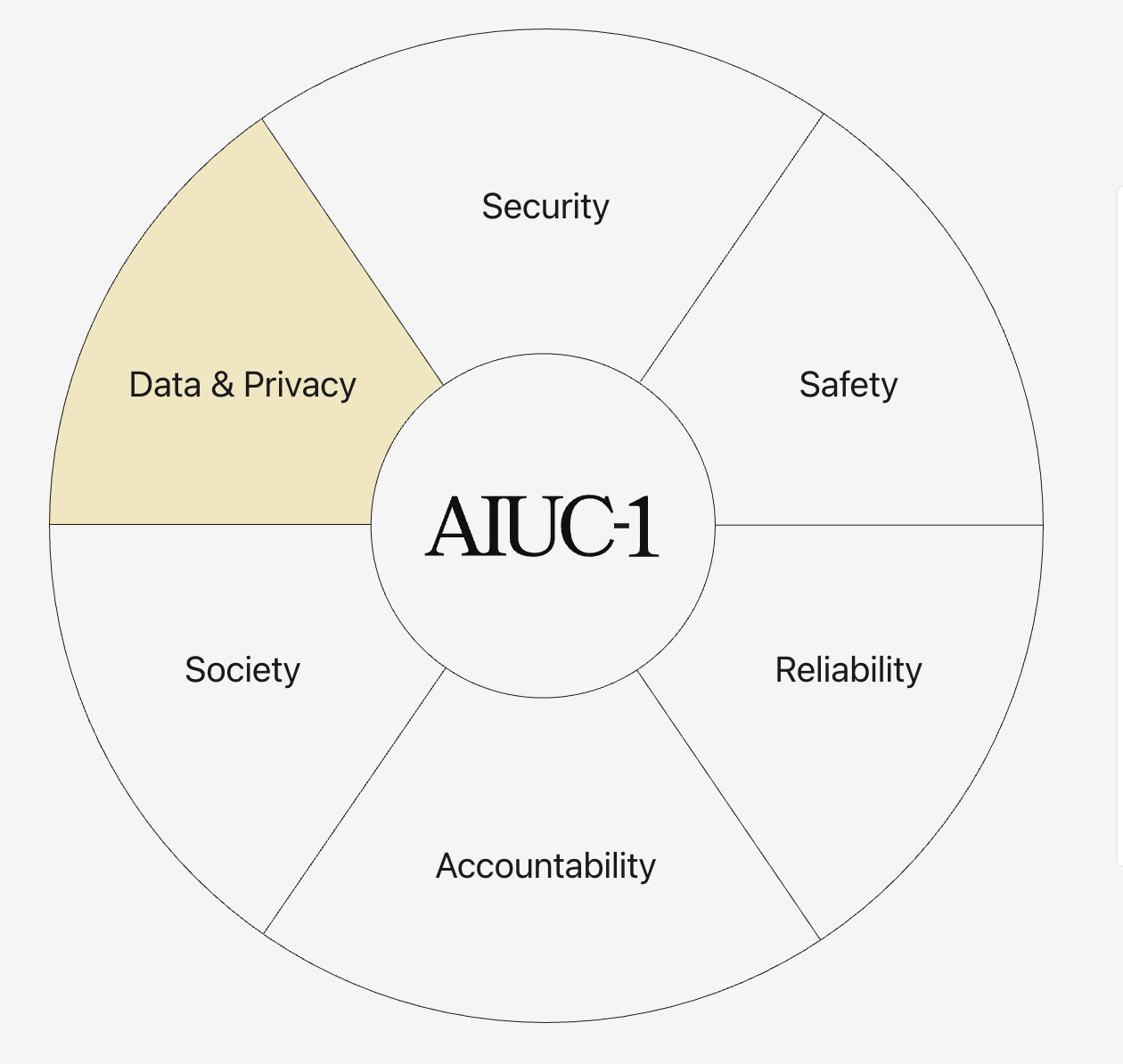

AIUC-1 is the first compliance standard purpose-built for AI agents. Developed by the AI Use Case Consortium, whose members include Salesforce, JP Morgan, HubSpot, MongoDB, Oracle, Databricks, and Brex, it provides over 50 safeguards organized across six domains, with a quarterly third-party testing cadence and an annual certification model. It’s not a framework or a set of principles. It’s an operational standard with testable requirements, designed specifically for the unique risks that agents introduce.

Phil Venables, former CISO of Google Cloud, put it plainly: “we need a SOC 2 for AI agents.” AIUC-1 is the closest thing the industry has produced to that vision, and it arrives at exactly the moment it’s needed, which is why I want to take some time to walk through it in this post.

Interested in sponsoring an issue of Resilient Cyber?

This includes reaching over 31,000 subscribers, ranging from developers, engineers, and architects to CISOs/security leaders and business executives.


Why Agents Need Their Own Standard

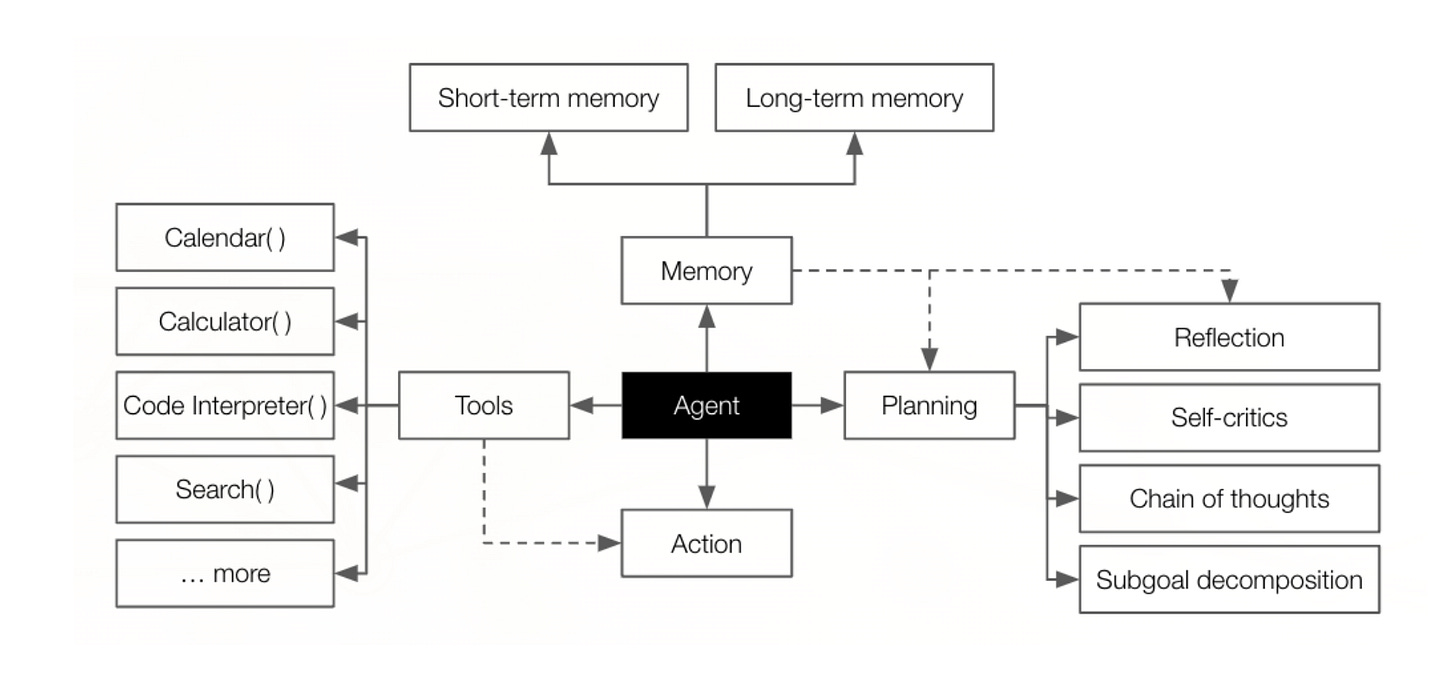

The question I get most often when I talk about agentic AI governance is, “Why can’t we just extend existing standards?” The answer is that agents introduce risk properties that generic AI governance frameworks weren’t designed to address. A traditional AI system takes an input, produces an output, and a human acts on it. An agent takes an input, makes decisions, uses tools, accesses data, chains actions together, and produces outcomes, often without human review at any step.

This matters because the risk surface is fundamentally different. When an agent can browse the web, execute code, call APIs, access file systems, and interact with other agents, you’re not governing a prediction model. You’re governing an autonomous actor with real-world capabilities. The questions that matter (What can this agent access? What actions can it take? What happens when it makes a mistake? Who’s accountable when it goes wrong?) aren’t addressed by frameworks built for a world of human-in-the-loop ML models.

AIUC-1 was built with this distinction at its core. Every one of its 50-plus requirements maps to a risk that is either unique to agents or dramatically amplified by agentic operation. Crucially, it doesn’t try to replace what already exists. The industry is usually awful at this, and that habit is a major part of why we have framework sprawl and calls for compliance consolidation.

It operationalizes and extends the EU AI Act, NIST AI RMF, ISO 42001, MITRE ATLAS, and the OWASP LLM Top 10 while complementing SOC 2, ISO 27001, and GDPR. The framework comparison crosswalks on the AIUC-1 site make this explicit: every requirement maps back to existing standards, filling the gaps where those standards go silent on agents.

This is the approach I’ve been advocating for. In “Governing Agentic AI: A Practical Framework,” I argued that agentic AI governance should be an extension layer, not a replacement for what organizations have already built. AIUC-1 embodies that principle. It meets organizations where they are and adds the agent-specific controls they’re missing.

A. Data and Privacy: Governing What Agents See and Share

Domain A addresses one of the most acute risks in agentic AI: data exposure. Agents don’t just process data; they access it dynamically, combine it across sources, and can inadvertently leak it through outputs, tool calls, or cross-session contamination.

AIUC-1’s seven requirements here (A001–A007) are all mandatory. They cover establishing input and output data policies, limiting agent data collection to what’s necessary, protecting intellectual property in both directions, preventing cross-customer data exposure in multi-tenant environments, preventing PII leakage in outputs, and preventing IP violations. These aren’t aspirational principles; they’re testable safeguards that a third-party assessor can verify.

The cross-customer exposure requirement is particularly important. In multi-tenant SaaS or cloud environments, which is where most enterprise agents live, the risk that one customer’s data bleeds into another’s agent context is both real and poorly governed by existing standards. SOC 2 addresses data isolation at the infrastructure level, but not at the level of agent reasoning. AIUC-1 aims to close that gap.
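To make isolation at the agent level a little more concrete, here is a minimal sketch, assuming a hypothetical in-memory document store where every record carries a `tenant_id`. The key design choice is that the tenant filter comes from the authenticated session inside the retrieval layer, never from the agent’s own tool-call arguments, so injected text cannot widen a search to another customer’s data. The class and field names are illustrative only and are not defined by AIUC-1.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Document:
    tenant_id: str
    text: str


@dataclass
class TenantScopedStore:
    """Hypothetical store that only ever returns documents for the caller's tenant."""
    docs: list[Document] = field(default_factory=list)

    def add(self, doc: Document) -> None:
        self.docs.append(doc)

    def search(self, session_tenant_id: str, query: str) -> list[str]:
        # The tenant is taken from the authenticated session, not from the agent's
        # request, so a manipulated prompt cannot ask for another tenant's records.
        q = query.lower()
        return [
            d.text
            for d in self.docs
            if d.tenant_id == session_tenant_id and q in d.text.lower()
        ]


store = TenantScopedStore()
store.add(Document("acme", "Acme Q3 pricing sheet"))
store.add(Document("globex", "Globex Q3 pricing sheet"))
print(store.search("acme", "pricing"))  # ['Acme Q3 pricing sheet']
```

Substring matching stands in for whatever retrieval method a real agent uses; the point is only where the tenant boundary is enforced.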

B. Security: Hardening the Agent Attack Surface

Domain B contains nine requirements (B001–B009) that read like a security practitioner’s wish list for agentic systems: adversarial robustness testing, adversarial input detection, technical disclosure management, endpoint scraping prevention, input filtering, prevention of unauthorized agent actions, access privilege enforcement, deployment environment protection, and limiting output over-exposure.

What stands out here is how specifically these controls map to agent-unique risks. Adversarial robustness testing (B001) isn’t about testing a model for bias; it’s about testing whether an agent can be manipulated through prompt injection, tool poisoning, or multi-step adversarial chains into taking actions it shouldn’t. Prevention of unauthorized agent actions (B006) addresses the scenario where an agent, through chained reasoning or hallucinated tool calls, exceeds its intended scope and takes actions no one authorized. These are risks that don’t exist in non-agentic systems.
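One common way to implement the B006 idea, though not one AIUC-1 prescribes, is a deny-by-default authorization check between the agent and its tools. The sketch below assumes a hypothetical `AgentScope` that lists the tools an agent may call, each with an argument validator; any call outside that declared scope raises an error instead of executing, no matter how the model reasoned its way there. The tool names and the refund cap are made up for illustration.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


class UnauthorizedActionError(Exception):
    """Raised when an agent requests a tool call outside its declared scope."""


@dataclass
class AgentScope:
    # Hypothetical scope definition: tool name -> validator for that tool's arguments.
    allowed: dict[str, Callable[[dict[str, Any]], bool]] = field(default_factory=dict)

    def authorize(self, tool: str, args: dict[str, Any]) -> None:
        validator = self.allowed.get(tool)
        if validator is None:
            # Deny by default: tools not listed in the scope are never executed.
            raise UnauthorizedActionError(f"tool not in scope: {tool}")
        if not validator(args):
            raise UnauthorizedActionError(f"arguments out of scope for {tool}: {args}")


scope = AgentScope(allowed={
    "read_ticket": lambda a: isinstance(a.get("ticket_id"), int),
    "refund": lambda a: 0 < a.get("amount", 0) <= 100,  # illustrative cap
})

scope.authorize("read_ticket", {"ticket_id": 42})  # within scope, returns None
for tool, args in [("delete_account", {"user": "bob"}), ("refund", {"amount": 5000})]:
    try:
        scope.authorize(tool, args)
    except UnauthorizedActionError as err:
        print("blocked:", err)
```

Keeping the check outside the model means a successful prompt injection can, at worst, request an action that the guard then refuses.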

The MITRE ATLAS framework catalogs many of these attack patterns, and AIUC-1’s security domain operationalizes them into testable requirements. I previously wrote a deep dive into MITRE’s ATLAS titled “Navigating AI Risks with ATLAS”. This is exactly the kind of translation the industry needs, from threat taxonomy to compliance control.

C. Safety: Preventing Harm at Agent Speed

Domain C is the largest, with twelve requirements (C001–C012) covering the full lifecycle of safety governance. I’ll caveat this by saying it is also the domain I’m least excited about. That’s not because safety isn’t important, but because it is incredibly opaque and subjective. To be fair, so is a lot of cyber.

It starts with establishing a risk taxonomy and pre-deployment testing, then moves into operational controls such as preventing harmful outputs, out-of-scope outputs, high-risk outputs, and output vulnerabilities. It requires flagging high-risk outputs for human review, monitoring risk categories in real-time, implementing real-time feedback mechanisms, and mandating three distinct types of third-party testing.
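As an illustration of the “flag high-risk outputs for human review” and real-time monitoring controls, here is a minimal sketch that routes each agent output based on a risk score and keeps a running tally per risk category. It assumes a hypothetical upstream classifier has already assigned each output a category and a score between 0 and 1; the 0.7 threshold and the category names are placeholders, not values from AIUC-1.

```python
from collections import Counter
from dataclasses import dataclass

REVIEW_THRESHOLD = 0.7  # illustrative; would be tuned against the organization's risk taxonomy
ALWAYS_REVIEW = {"financial_advice", "medical"}  # hypothetical high-risk categories


@dataclass(frozen=True)
class ScoredOutput:
    text: str
    category: str
    risk_score: float  # assumed to come from a separate classifier, 0.0 to 1.0


def route(output: ScoredOutput, counts: Counter) -> str:
    """Return 'human_review' or 'auto_release' and tally the category for monitoring."""
    counts[output.category] += 1  # running per-category totals for real-time monitoring
    if output.category in ALWAYS_REVIEW or output.risk_score >= REVIEW_THRESHOLD:
        return "human_review"
    return "auto_release"


counts: Counter = Counter()
print(route(ScoredOutput("Your order shipped.", "support", 0.05), counts))  # auto_release
print(route(ScoredOutput("Move your savings into...", "financial_advice", 0.2), counts))  # human_review
print(counts)
```

Category-based overrides sit alongside the score threshold because some outputs warrant review regardless of how confident the classifier is.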

The emphasis on third-party testing is a critical differentiator. AIUC-1 doesn’t rely on self-assessment, the approach the U.S. defense industry took for protecting CUI (Controlled Unclassified Information), which fell short and led to the rise of CMMC.

It requires external validation across safety, reliability, and tool use on a quarterly basis, not annually. That is another major difference from most leading frameworks, which run on annual or three-year cycles and often amount to snapshot-in-time assessments that move far slower than the risk landscape. This is a meaningful departure from the SOC 2 model of annual audits, and it reflects the reality that agentic systems change behavior over time as models are updated, tools are added, and deployment contexts shift. A point-in-time assessment tells you almost nothing about how an agent will behave six months from now.
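To make the cadence difference concrete, here is a minimal sketch that flags agents whose most recent third-party test is older than one quarter. It assumes a quarter is approximated as 92 days and that test dates are tracked per agent; both are simplifications for illustration, and the agent names and dates are made up.

```python
from datetime import date, timedelta

QUARTER = timedelta(days=92)  # rough approximation of one calendar quarter


def overdue_agents(last_tested: dict[str, date], today: date) -> list[str]:
    """Return agents whose latest third-party test is more than a quarter old."""
    return sorted(name for name, tested in last_tested.items() if today - tested > QUARTER)


history = {
    "support-agent": date(2025, 1, 10),
    "billing-agent": date(2024, 9, 1),
}
print(overdue_agents(history, today=date(2025, 3, 1)))  # ['billing-agent']
```

Under an annual model, the same `billing-agent` would still count as compliant for another six months.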

This aligns with what I’ve argued about the need to move from periodic compliance to continuous assurance. When AI systems make operational decisions in real-time, governance that operates on an annual cadence is performing theater, not oversight.

D. Reliability: Making Agents You Can Actually Trust

Domain D is focused and sharp, with four mandatory requirements (D001–D004) covering hallucination prevention, third-party hallucination testing, restriction of unsafe tool calls, and third-party tool call testing.

The tool call requirements are where AIUC-1 most clearly separates itself from generic AI standards. No other compliance framework I’m aware of explicitly addresses the risk of an AI system making unsafe or unauthorized tool invocations, yet this is arguably the highest-consequence risk in agentic AI. When an agent can call APIs, execute database queries, modify files, or interact with external services, an unsafe tool call isn’t a hallucinated paragraph; it’s a real-world action with potentially irreversible consequences. There has been no shortage of abuses and security research exposing risks in MCP (Model Context Protocol) and agent tool use, for example.

AIUC-1’s requirement for third-party tool call testing (D004) mandates external validation that agents are using tools within their defined boundaries, under adversarial conditions. This is the kind of operational control that turns a governance framework from a compliance checkbox into an actual risk reduction mechanism.

E. Accountability: Answering the “Who’s Responsible?” Question

Domain E is the most operationally dense, with eleven requirements (E001–E011) covering incident response planning for security breaches, harmful outputs, and hallucinations. It also assigns accountability across the development and deployment lifecycle and requires assessing cloud versus on-premises processing decisions, conducting vendor due diligence for foundation model providers, documenting system change approvals, establishing regular internal review processes, monitoring third-party access, establishing acceptable use policies, and recording data processing locations. As you can see, it is a lot, and it will be a heavy lift for many organizations.

This domain tackles what I consider the hardest governance question in agentic AI: accountability. It is an open-ended question that practitioners are still debating in my LinkedIn feed and across various outlets, events, and communities.

When an agent chains together six tool calls, accesses three data sources, and produces an output that causes a downstream failure, who’s accountable? The developer? The deploying team? The platform? The foundation model provider? AIUC-1’s answer is that accountability must be explicitly assigned, documented, and auditable at every stage of the lifecycle. Requirement E004 mandates that AI system changes requiring formal review have a designated accountable lead with documented approval and supporting evidence.
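To show what “documented and auditable” could look like in practice, here is a minimal sketch of a change record that refuses approval unless a named accountable lead and at least one piece of supporting evidence are present. The field names and the `AIChangeRecord` class are hypothetical; AIUC-1 states the requirement, not a schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AIChangeRecord:
    """Hypothetical record for an AI system change that requires formal review."""
    change_id: str
    description: str
    accountable_lead: str = ""
    evidence: list[str] = field(default_factory=list)  # e.g. links to eval or test reports
    approved_at: Optional[datetime] = None

    def approve(self, lead: str) -> None:
        # Approval is recorded only when a lead is named and evidence is attached.
        if not lead:
            raise ValueError("an accountable lead must be named")
        if not self.evidence:
            raise ValueError(f"cannot approve {self.change_id}: no supporting evidence")
        self.accountable_lead = lead
        self.approved_at = datetime.now(timezone.utc)

    @property
    def is_approved(self) -> bool:
        return bool(self.accountable_lead and self.evidence and self.approved_at)


change = AIChangeRecord("CHG-101", "Swap foundation model version")
change.evidence.append("regression-eval-report.pdf")
change.approve("jane.doe")
print(change.is_approved)  # True
```

Because the check lives in the record itself, an auditor can see who approved a change, when, and on what evidence, rather than reconstructing it after an incident.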

The vendor due diligence requirement (E006) is equally important. Most organizations building agents are using foundation models from third-party providers. AIUC-1 requires due diligence processes covering data handling, PII controls, security, and compliance for those upstream providers. This extends the software supply chain governance concept into the AI layer, a natural evolution that existing standards haven’t yet codified.
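The four areas named for E006 (data handling, PII controls, security, and compliance) can be tracked as a simple per-provider checklist. This is a minimal sketch with a hypothetical provider name and made-up outcomes; a real due diligence process would attach evidence such as contracts, audit reports, or questionnaire responses to each area.

```python
from dataclasses import dataclass, field

DUE_DILIGENCE_AREAS = ("data_handling", "pii_controls", "security", "compliance")


@dataclass
class ProviderAssessment:
    provider: str
    # Area -> whether the review found adequate controls (hypothetical outcomes).
    results: dict[str, bool] = field(default_factory=dict)

    def gaps(self) -> list[str]:
        # An area that was never reviewed counts as a gap, not a pass.
        return [area for area in DUE_DILIGENCE_AREAS if not self.results.get(area, False)]

    def cleared(self) -> bool:
        return not self.gaps()


vendor = ProviderAssessment("example-model-provider", {
    "data_handling": True,
    "pii_controls": True,
    "security": True,
})
print(vendor.cleared(), vendor.gaps())  # False ['compliance']
```

Treating unreviewed areas as failures keeps an incomplete assessment from quietly passing.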

F. Society: Broader Harm Prevention

Domain F addresses the macro-level risks: preventing AI-enabled cyber misuse (F001) and preventing catastrophic misuse involving chemical, biological, radiological, and nuclear threats (F002). Both are mandatory.

These requirements acknowledge a reality that narrower security standards often ignore, which is that AI agents aren’t just enterprise tools. They’re general-purpose capabilities that can be repurposed for harm at societal scale. Requiring organizations to implement guardrails against catastrophic misuse moves the conversation beyond enterprise risk management into responsible technology governance. It’s a signal that AIUC-1’s authors understand the stakes aren’t just regulatory, they’re civilizational.

That said, I will admit that most enterprise organizations struggle to fully grasp and tackle their own enterprise risks, let alone think through societal-level ones, which makes this domain one of the more aspirational in the standard overall.

What Sets AIUC-1 Apart

There are several things that distinguish AIUC-1 from the standards landscape it enters.

First, specificity. Where NIST AI RMF provides principles and ISO 42001 provides management system requirements, AIUC-1 provides testable, requirement-level controls. It tells you what to implement and how to prove it, not just what to think about.

Second, the certification model. AIUC-1 isn’t just a framework you self-attest against. It includes quarterly third-party testing and annual certification renewal. This continuous validation model is far closer to what agentic AI governance actually requires than annual audits ever could be.

Third, capability-based scoping. AIUC-1 recognizes that not all agents have the same capabilities. Requirements are scoped based on what the agent can actually do: text generation, image generation, voice generation, automation, and so on. An agent that only generates text faces different requirements than one that can execute code and browse the web. This adaptive scoping avoids the one-size-fits-all problem that plagues broader standards, which tend to apply the same broad coverage to every system rather than adjusting to what it can do.
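Mechanically, capability-based scoping is a lookup from what an agent can do to the controls that apply. The sketch below uses hypothetical control labels and a made-up mapping purely to show the mechanism; the actual requirement-to-capability mapping should be taken from the AIUC-1 standard itself.

```python
from enum import Enum


class Capability(Enum):
    TEXT_GENERATION = "text_generation"
    IMAGE_GENERATION = "image_generation"
    VOICE_GENERATION = "voice_generation"
    AUTOMATION = "automation"  # e.g. executing code or calling external tools


# Hypothetical baseline and per-capability control sets (illustrative labels, not AIUC-1 IDs).
BASELINE = {"data_policies", "incident_response", "accountability"}
EXTRA_CONTROLS = {
    Capability.TEXT_GENERATION: {"harmful_output_filtering"},
    Capability.IMAGE_GENERATION: {"harmful_output_filtering", "ip_infringement_checks"},
    Capability.VOICE_GENERATION: {"impersonation_safeguards"},
    Capability.AUTOMATION: {"tool_call_restrictions", "third_party_tool_call_testing"},
}


def controls_in_scope(capabilities: set[Capability]) -> set[str]:
    """Union of the baseline with every control triggered by the agent's capabilities."""
    scoped = set(BASELINE)
    for cap in capabilities:
        scoped |= EXTRA_CONTROLS[cap]
    return scoped


text_only = controls_in_scope({Capability.TEXT_GENERATION})
automating = controls_in_scope({Capability.TEXT_GENERATION, Capability.AUTOMATION})
print(sorted(automating - text_only))
# ['third_party_tool_call_testing', 'tool_call_restrictions']
```

Adding a capability can only add controls, never remove baseline ones, which mirrors the idea that a more capable agent carries a strictly larger obligation.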

Fourth, consortium backing. With Salesforce, JP Morgan, HubSpot, MongoDB, Oracle, Databricks, and Brex at the table, AIUC-1 has the kind of cross-industry representation that gives a standard credibility and adoption momentum. These aren’t theoretical observers; they’re organizations actively deploying agents at enterprise scale, and they bring real-world perspective on the current state of the art in agent adoption and use.

Fifth, it builds rather than replaces. The explicit crosswalks to the EU AI Act, NIST AI RMF, ISO 42001, MITRE ATLAS, OWASP LLM Top 10, SOC 2, ISO 27001, and GDPR mean that organizations aren’t starting over. They’re extending what they’ve already built with agent-specific controls that their existing programs lack.

The Standard the Ecosystem Needs

I’ve been writing about the agentic AI governance gap for months. The gap between what organizations are deploying and what their governance frameworks can address has been widening with every new agent release, every new framework, and every new SaaS vendor shipping embedded agentic capabilities. The standards bodies are moving, but at standards-body speed, while the technology moves at venture-capital speed and enterprises adopt agents rapidly, driven by competitive pressures, innovation priorities, and more.

AIUC-1 doesn’t solve everything, and it’s not a replacement for the broader AI governance program every organization needs. It doesn’t address every deployment model or every edge case. But it’s the first standard that takes the unique risks of AI agents seriously, translates them into operational requirements, and backs them with a continuous testing and certification model.

The organizations that adopt it early won’t just be more compliant, they’ll be more resilient. Because the real risk isn’t failing an audit, it’s deploying autonomous agents without the governance infrastructure to know what they’re doing, why they’re doing it, and who’s accountable when they get it wrong.

One of the biggest challenges for organizations pursuing AIUC-1, especially large, complex environments, will be proving these controls are in place. That’s why I think it will require comprehensive agentic AI security platforms to demonstrate the visibility, governance, and rigor that AIUC-1 calls for across its domains and controls.

The governance frameworks the industry relies on were built for a world where AI produced outputs and humans took actions. That world passed with the first wave of GenAI and LLMs. We’re now in the agentic era, AIUC-1 is built for the world that’s shaping the digital future, and the industry should pay attention.
