Agents in Action - What 177,000 Tools Reveal About AI's Shift from Thinking to Doing

Agents Aren't Watching Anymore. They're Acting, Building, and Writing Themselves.

A landmark study from the UK’s AI Safety Institute analyzed 177,436 agent tools and found that agents are rapidly shifting from passive observation to real-world action. The implications for enterprise security are massive and mostly unaddressed.

The UK’s AI Safety Institute recently published one of the most empirically rigorous studies I have seen on how AI agents are actually being used in the wild. Titled “How are AI agents used? Evidence from 177,000 MCP tools,” the paper analyzed 177,436 agent tools created between November 2024 and February 2026 by tracking public Model Context Protocol (MCP) server repositories. MCP has become the dominant open protocol for agent tooling, with all of GitHub’s top 10 new agent-related repositories in the first half of 2025 either building MCP infrastructure or integrating with it.

This is not a survey or a vendor report. It is a large-scale empirical measurement of what agents are actually doing, and the findings should be required reading for every security leader.

I have been writing about the agentic AI security problem for months. I’ve argued that the industry has a massive blind spot around what agents actually do at runtime. In “Governing Agentic AI,” I laid out the governance gap that exists because none of the major AI frameworks account for autonomous agents.

This UK AISI paper validates every one of those arguments with hard data. It quantifies the shift that practitioners have been feeling in the field and puts numbers behind what many of us have been warning about.

The Shift from Observation to Action

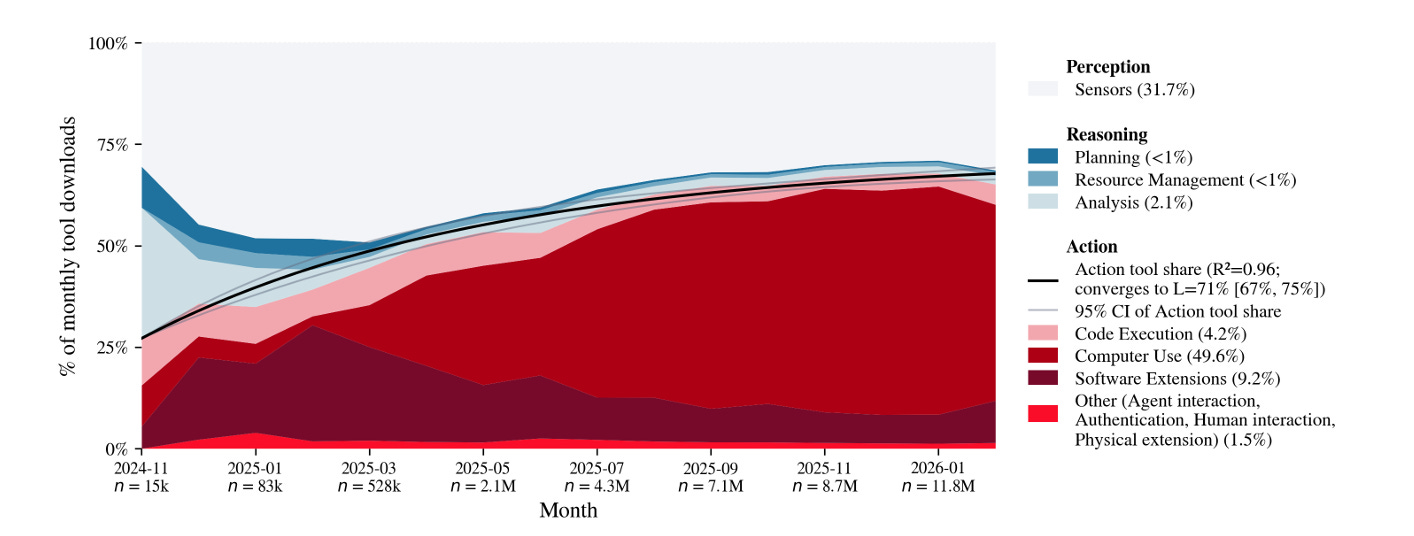

As the authors note, the share of action tools in monthly downloads rose from 27% to 65% in 16 months, primarily driven by growth in computer use and browser automation tools.

The single most important finding in this paper is the dramatic shift in what agents are being built to do. The researchers classify agent tools into three categories based on their direct impact. Perception tools access and read data. Reasoning tools analyze data or concepts. Action tools directly modify external environments through file editing, sending emails, executing code, making API calls, steering drones, or interacting with financial systems.
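To make the three categories concrete, here is a minimal sketch of how a team might label its own tools and compute an action share. The tool names, the hand-assigned labels, and the download counts are all hypothetical; this is not the paper's classification pipeline, only an illustration of the taxonomy.

```python
from collections import Counter
from enum import Enum


class Impact(Enum):
    PERCEPTION = "perception"  # accesses and reads data
    REASONING = "reasoning"    # analyzes data or concepts
    ACTION = "action"          # directly modifies an external environment


# Hypothetical tool names, hand-labeled for illustration only.
TOOL_IMPACT = {
    "read_file": Impact.PERCEPTION,
    "search_docs": Impact.PERCEPTION,
    "summarize_text": Impact.REASONING,
    "send_email": Impact.ACTION,
    "execute_code": Impact.ACTION,
    "transfer_funds": Impact.ACTION,
}


def action_share(downloads: dict[str, int]) -> float:
    """Return the fraction of total downloads that belong to action tools."""
    totals = Counter()
    for tool, count in downloads.items():
        totals[TOOL_IMPACT[tool]] += count
    total = sum(totals.values())
    return totals[Impact.ACTION] / total if total else 0.0


# Made-up monthly download counts, not figures from the paper.
sample = {"read_file": 300, "summarize_text": 50, "send_email": 400, "execute_code": 250}
print(f"action share: {action_share(sample):.0%}")
```

Tracking a number like this month over month for your own environment is one simple way to see whether your agents are drifting from reading toward doing.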

The share of action tools in total agent tool usage grew from 27% in November 2024 to 65% by February 2026. Let that trajectory sink in for a moment. In just 16 months, agents went from being primarily passive observers to being predominantly active participants in the environments they interact with. The paper notes that this shift was driven largely by the adoption of general-purpose tools that permit access to unconstrained environments, enabling agents to use a computer or browser with broad, open-ended capabilities rather than narrow, API-specific integrations.

For commercial entities specifically, the shift is even more pronounced. The download share of action tools released by registered commercial entities increased from 21% to 71% over the same period. This is not hobbyist experimentation. This is enterprise adoption at scale.

This matters enormously for security because the risk profile of an agent changes fundamentally when it moves from perception to action. An agent that can only read data has a limited blast radius. An agent that can execute code, modify files, send emails, and interact with financial systems has a blast radius that extends across the entire set of environments and services it can reach.

The paper makes this point explicitly, noting that potentially consequential agent actions are increasingly occurring in the least controlled environments, like an agent browsing the web or using a computer, rather than through restricted, secure API integrations. That finding alone should get most security leaders' attention.

Agents Are Writing Themselves

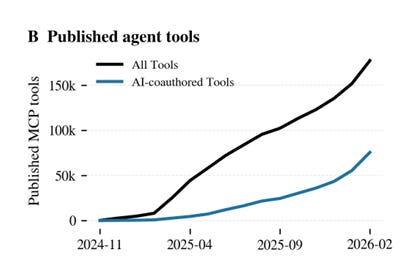

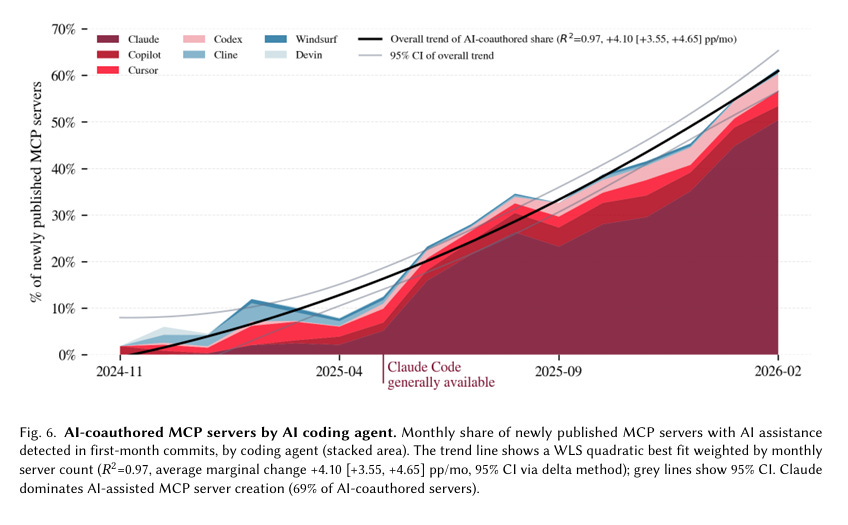

The second finding that jumped out at me is the data on AI-coauthored agent tools. The paper detected AI assistance in 28% of MCP servers, representing 36% of all tools. But the trend line is what matters most. The share of newly created MCP servers with detected AI assistance rose from 6% in January 2025 to 62% by February 2026. In other words, by early 2026, nearly two-thirds of all new agent tools being published showed evidence of being built with the help of AI coding agents.

Claude dominates this space, accounting for 69% of AI-coauthored servers, followed by Cursor at 9.2%, Copilot at 9.1%, and Codex at 6%. The paper identifies these through commit metadata, AI tool configuration files, bot account commits, and explicit mentions of AI tool names in commit messages and pull request bodies.

This is the recursive loop some have been warning about. AI agents are building the tools that other AI agents use. The paper describes this as “recursive self-improvement” where AI agents that create their own tools expand the action space without requiring human effort. When AI coding agents build new tools for other AI agents, tool proliferation is no longer bottlenecked by human developers, and tool creation may scale beyond human oversight.

I covered the broader implications of AI-generated code in “Vibe Coding Conundrums,” where I examined what happens when a growing share of production code is written by AI rather than humans.

This paper adds a critical dimension to that argument. It is not just application code being generated by AI. It is the agent infrastructure itself. The tools, integrations, and capabilities that define what agents can do in production environments are increasingly being created by agents, with all the security implications that entails. If 62% of new agent tooling is AI-coauthored and the quality, security, and trustworthiness of that tooling is not being systematically validated, the enterprise is inheriting risk at a pace that no manual review process can match. Humans end up unable to govern either the code or the underlying tooling that AI introduces.

Software Development Dominates, But High-Stakes Domains Are Emerging

The paper found that software development and IT tasks account for 67% of all published agent tools and a staggering 90% of MCP server downloads. This confirms what most practitioners already sense. The current primary use case for agents is accelerating technical workflows, particularly coding, testing, deployment, and infrastructure management.

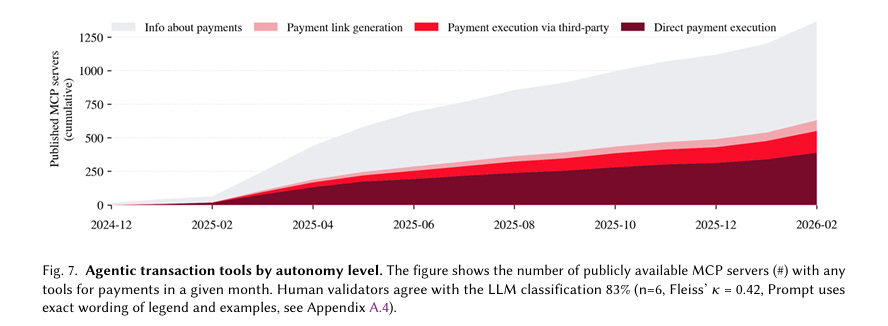

But the paper also surfaces an important signal about where things are headed. Financial services emerged as a significant outlier, with high-stakes financial occupations having disproportionately more action tools than predicted by the overall pattern. MCP servers with payment execution capabilities grew from 47 servers in January 2025 to 1,578 by February 2026. Financial regulators are already paying attention, and the paper was produced in part through a collaboration with the Bank of England.

This expansion into high-stakes domains is exactly the trajectory I described in “Governing Agentic AI,” where I argued that the governance frameworks we have today were built for a world of models producing recommendations for human review, not agents autonomously executing financial transactions, modifying production databases, or interacting with critical infrastructure. The UK AISI data shows that this transition is not theoretical. It is measurable, accelerating, and expanding into domains where the consequences of misalignment, mistakes, or misuse are severe.

What This Means Across the Three Dominant Agent Deployment Patterns

For security leaders trying to get their arms around this, I continue to believe the most practical framework is the three major agent deployment patterns I have been writing about.

The first is homegrown and custom agents that organizations build internally. The UK AISI data shows that tool availability has grown from 4,888 tools in January 2025 to roughly 177,000 by February 2026, a 36x increase. Organizations building custom agents are pulling from this rapidly expanding ecosystem of publicly available tools, many of which were built by AI with limited security validation. The question for security leaders is whether they have visibility into which tools their internal agents are using, what those tools can access, and whether the tools themselves are trustworthy. In most cases today, the answer is no.

The second pattern is endpoint agents like coding assistants and agentic browsers that operate on developer and employee machines. The paper’s finding that software development accounts for 90% of agent tool downloads, combined with the explosive growth in computer-use and browser automation tools, means that endpoint agents are the most widely deployed and the most action-oriented. These agents have access to local file systems, terminals, source code repositories, and browser sessions. The shift toward general-purpose, unconstrained tools means these endpoint agents increasingly operate with broad permissions in environments that are difficult to monitor with traditional security controls.

The third pattern is SaaS and embedded agents that come bundled within enterprise platforms. The paper identifies “official” MCP servers published by companies like PayPal, Stripe, Google, and Asana, noting that while they represent a smaller share of published tools, they account for a disproportionately large share of downloads, roughly 45 million out of 78 million total. When a vendor embeds agent capabilities into a platform your organization already uses, the enterprise inherits whatever security decisions the vendor made about what actions the agent can take, what environments it can access, and what constraints are in place, similar to the shared responsibility model from the cloud era.

The UK AISI data on the shift from constrained to unconstrained environments should raise serious questions about whether those vendor decisions are conservative enough.

Visibility, AISPM, AIDR, and Governance Are Not Optional

The paper reinforces a thesis I have been developing across multiple articles. You cannot secure what you cannot see, and right now, most organizations cannot see what their agents are doing.

Start with visibility and observability. The paper demonstrates that monitoring agent tools provides early indicators of deployment patterns, emerging risk domains, and shifts in capability. If a government research institute can track 177,000 tools across public repositories, there is no reason enterprise security teams should not have comparable visibility into their own agent deployments. How many agents are running in your environment? What tools do they have access to? What actions are they taking? What data are they touching? For most organizations, these are unanswered questions.

Then there is AI Security Posture Management, or AISPM. The paper’s framework for characterizing agent tools across five dimensions, including direct impact, generality, task domain, geography, and AI co-authorship, provides a useful model for how organizations should be assessing the security posture of their agent deployments.

Each dimension maps to a risk variable. An agent with action tools in an unconstrained environment operating in a high-stakes domain with AI-coauthored tooling represents a fundamentally different risk profile than an agent using narrow perception tools in a constrained API environment. AISPM is about continuously assessing these variables across your entire agent inventory. Factors such as data sensitivity, system criticality, and business context should be part of agentic risk assessments, just as they have been in prior eras of cybersecurity.
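As a rough illustration of treating those dimensions as risk variables, the sketch below scores a hypothetical agent inventory. The field names, the weights, and the two example agents are my own assumptions chosen for illustration; they do not come from the paper or from any AISPM product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentProfile:
    name: str
    has_action_tools: bool        # direct impact: can it modify an environment?
    general_purpose: bool         # generality: browser/computer use vs. narrow API
    high_stakes_domain: bool      # task domain: e.g. finance
    ai_coauthored_tools: bool     # tooling built with AI assistance
    handles_sensitive_data: bool  # data sensitivity


# Illustrative weights only; a real program would calibrate these.
WEIGHTS = {
    "has_action_tools": 3,
    "general_purpose": 3,
    "high_stakes_domain": 2,
    "ai_coauthored_tools": 1,
    "handles_sensitive_data": 2,
}


def risk_score(profile: AgentProfile) -> int:
    """Sum the weights of every risk variable that is true for this agent."""
    return sum(w for field, w in WEIGHTS.items() if getattr(profile, field))


def rank_inventory(agents: list[AgentProfile]) -> list[AgentProfile]:
    """Order the inventory from highest to lowest score."""
    return sorted(agents, key=risk_score, reverse=True)


# Hypothetical agents.
reader = AgentProfile("docs-reader", False, False, False, False, False)
browser = AgentProfile("browser-agent", True, True, True, True, False)

for agent in rank_inventory([reader, browser]):
    print(agent.name, risk_score(agent))
```

An additive score is deliberately crude. The point is that posture can be recomputed continuously as tools are added or permissions change, rather than assessed once at deployment.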

AI Detection and Response, or AIDR, addresses what happens when agents misbehave. The paper catalogs the real-world consequences of agent misalignment and mistakes, including deleted databases, exposed patient records, blackmail by misaligned agents, and cryptocurrency theft through prompt injection. It notes that narrow-purpose tools are easier to govern because a cryptocurrency transfer tool has a clear risk profile, while a general-purpose browser tool can do almost anything. When the most consequential actions are happening in the least constrained environments, detection and response capabilities purpose-built for agent behavior become essential.

Finally, governance. The paper explicitly raises the challenge that general-purpose tools complicate tool-based governance. Current agent systems like Claude Code’s settings.json can permit or block specific narrow tools, while requiring user review for potentially risky general-purpose tools. But the paper acknowledges that if general-purpose tools continue to dominate, the manual review approach becomes unsustainable.
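The permit, block, and review idea can be sketched as a small policy check. This is a simplified model under my own assumptions: the tool names, the glob patterns, and the deny-before-allow ordering are hypothetical and are not the actual schema or matching rules of Claude Code's settings.json.

```python
from fnmatch import fnmatchcase

# Hypothetical policy: narrow tools are allowed or denied outright,
# general-purpose tools require a human to review each call.
POLICY = {
    "deny": ["payments.*"],
    "allow": ["docs.search", "repo.read_*"],
    "ask": ["browser.*", "shell.*"],
}


def decide(tool: str, policy: dict[str, list[str]] = POLICY) -> str:
    """Return 'deny', 'allow', or 'ask' for a tool name.

    Deny rules are checked first so a block always wins over a permit.
    Tools that match no rule fall back to 'ask'.
    """
    for verdict in ("deny", "allow", "ask"):
        if any(fnmatchcase(tool, pattern) for pattern in policy[verdict]):
            return verdict
    return "ask"


for name in ("payments.transfer", "repo.read_file", "browser.navigate", "unknown.tool"):
    print(name, "->", decide(name))
```

Notice where the review burden lands. Every call to a `browser.*` or `shell.*` tool ends up in the "ask" bucket, which is exactly the manual review load the paper warns becomes unsustainable as general-purpose tools dominate.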

For consequential actions like large financial transfers or legal registrations, the paper suggests that developers and regulators could require human authentication. This maps directly to the governance frameworks I have been advocating for, frameworks that account for the unique properties of agents including their autonomy, tool access, data sensitivity, and action capabilities, rather than trying to retrofit model-level or traditional software governance approaches.
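A human-authentication requirement for consequential actions could look something like the sketch below. The threshold value, the function names, and the approver callback are hypothetical placeholders; in practice the approval step would be a real authentication flow rather than a Python callable.

```python
from typing import Callable

# Hypothetical cutoff above which a human must approve the action.
APPROVAL_THRESHOLD = 1_000.00


def transfer_funds(
    amount: float,
    recipient: str,
    approver: Callable[[float, str], bool],
) -> str:
    """Execute small transfers directly; gate large ones on human approval."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    if amount >= APPROVAL_THRESHOLD and not approver(amount, recipient):
        return "blocked"
    return "executed"


def always_reject(amount: float, recipient: str) -> bool:
    """Stand-in for a human who declines every request."""
    return False


print(transfer_funds(50.00, "vendor-a", always_reject))     # below threshold
print(transfer_funds(5_000.00, "vendor-a", always_reject))  # needs approval
```

The design choice that matters is that the gate lives in the tool itself, so the agent cannot skip it no matter how it was prompted.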

The Bottom Line

This paper is one of the most important pieces of empirical research on AI agents published to date. It moves the conversation from speculation to measurement. Agents are not just coming, they are already here. They went from roughly 5,000 tools to 177,000 in just over a year. Action tools grew from 27% to 65% of usage. AI-coauthored tooling went from 6% to 62%. Financial transaction tools grew by over 3,000%, and the most consequential agent actions are increasingly happening in uncontrolled environments.
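The growth figures can be checked with a few lines of arithmetic. The sketch uses the counts quoted in this article (47 to 1,578 payment-capable servers, and 4,888 to roughly 177,000 tools); the helper function name is my own.

```python
def growth(start: float, end: float) -> tuple[float, float]:
    """Return (multiple, percent increase) between two counts."""
    multiple = end / start
    percent_increase = (end - start) / start * 100
    return multiple, percent_increase


# Counts as quoted in this article.
figures = {
    "payment-capable MCP servers": (47, 1_578),
    "agent tools available": (4_888, 177_000),
}

for label, (start, end) in figures.items():
    multiple, pct = growth(start, end)
    print(f"{label}: {multiple:.1f}x, +{pct:.0f}%")
```

The payment server count works out to about a 33.6x multiple, or an increase of roughly 3,257%, which is where the "over 3,000%" figure comes from.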

The organizations that treat this as an AI research curiosity rather than an enterprise security imperative are going to learn expensive lessons. The ones that invest in visibility, posture management, detection and response, and governance purpose-built for agents will be the ones that deploy AI at scale without becoming the next case study.

There’s no question that agents are in action. The organizations that adopt agents securely will be those that have observability, posture management, and detection and response, and that account for capabilities such as hard boundaries, intent analysis, and comprehensive governance.