The New Offense: How AI Agents Are Rewriting Offensive Security

A wave of AI-native startups is transforming penetration testing from a manual, snapshot-in-time exercise into continuous, autonomous exposure validation, and the timing couldn’t be more critical.

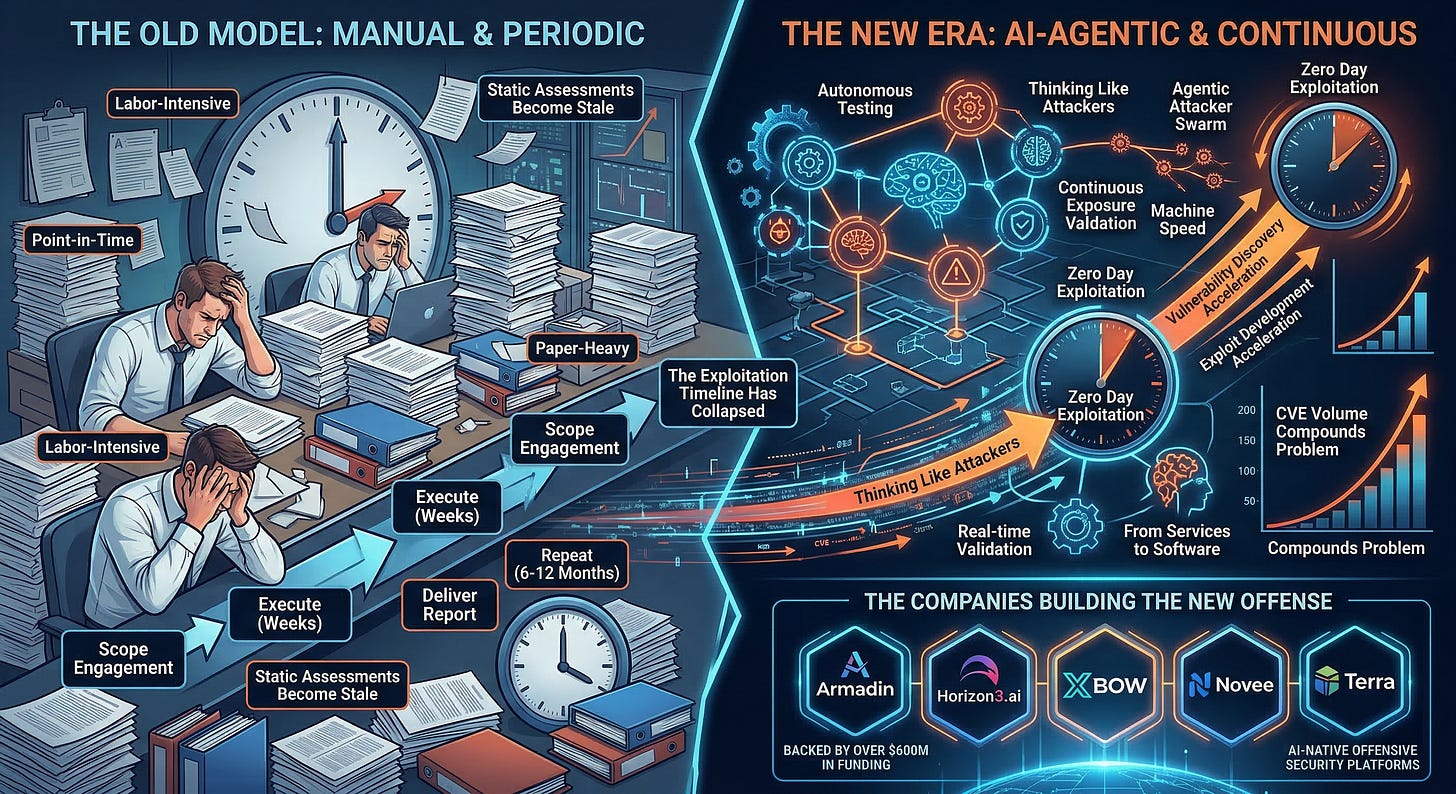

Something fundamental is shifting in offensive security, and it’s happening fast. For decades, penetration testing has operated under the same basic model: hire a team, scope an engagement, execute over a few weeks, deliver a report, and repeat in six or twelve months.

It’s manual, labor-intensive, point-in-time, and, to be honest, it has never scaled to match the pace or complexity of the environments it’s supposed to test. (That line applies across much of AppSec, GRC and other cyber categories that AI is poised to disrupt, but this piece is focused on OffSec, so let’s stay on topic!)

That model is now colliding with two forces that are rendering it obsolete. The first is the collapse of exploitation timelines. The second is the rise of AI agents capable of performing offensive security operations autonomously, continuously, and at a scale no human team can match.

The result is a new wave of companies building AI-native offensive security platforms, backed by hundreds of millions in venture capital, and producing results that already rival, and in some cases surpass, the best human operators. This isn’t an incremental improvement. It’s a category transformation that is already reshaping Offensive Security (OffSec) as we know it, and it’s worth unpacking.

The Exploitation Timeline Has Collapsed

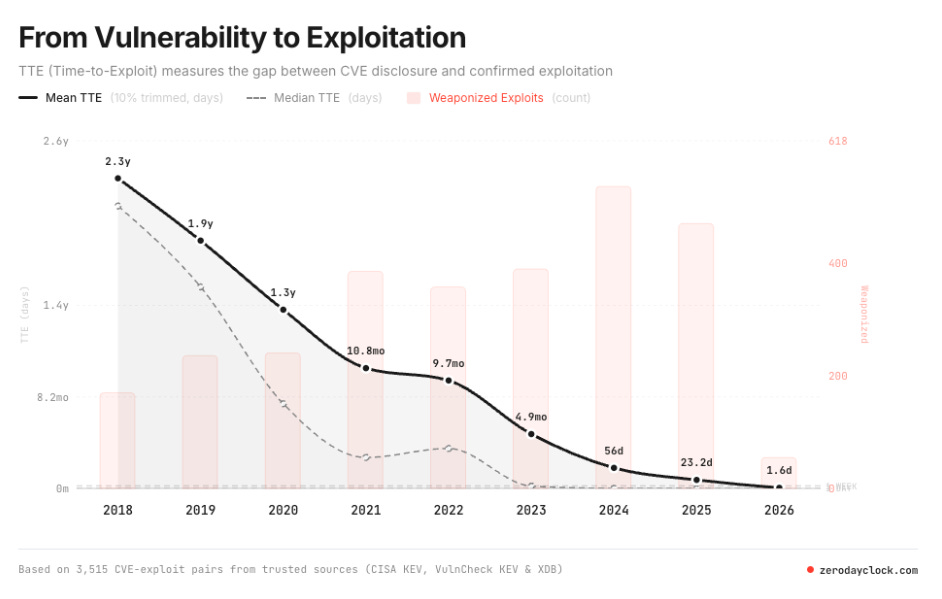

I’ve written extensively about the collapse of exploitation timelines, most recently in “The Zero Day Clock Is Ticking.” Sergej Epp’s Zero Day Clock tracks the time between vulnerability disclosure and active exploitation, and the trend is unambiguous. Exploitation timelines went from 771 days in 2006, to 84 days in 2018, to under 24 hours in recent observations, and Google’s Threat Analysis Group has documented exploitation within four hours of disclosure. The trajectory points to zero, or even negative, where vulnerabilities are exploited before they’re publicly disclosed.

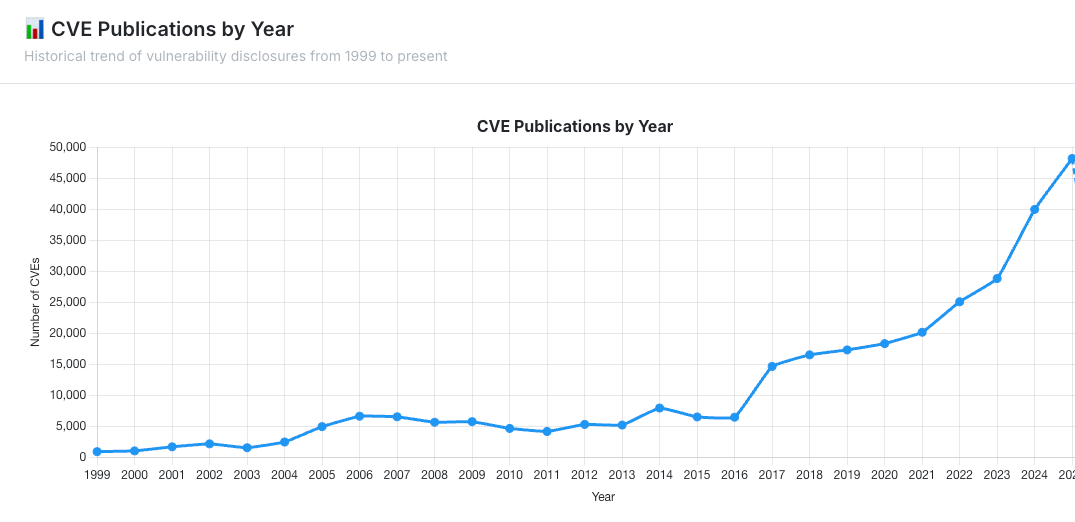

CVE volume compounds the problem. Over 40,000 CVEs were published in 2024 alone, a 520% increase since 2016, and the trajectory continues to steepen. AI is accelerating both sides of this equation, enabling faster vulnerability discovery and faster exploit development.

DARPA’s AI Cyber Challenge demonstrated that autonomous cyber reasoning systems can now identify 86% of synthetic vulnerabilities in critical infrastructure software at an average cost of roughly $152 per task, a fraction of what traditional bug bounty programs pay. XBOW, which became the number one hacker in the United States on HackerOne in 2025, has already identified over 1,000 vulnerabilities autonomously.

When exploitation happens at machine speed and vulnerability volume grows exponentially, a security model built on quarterly pen tests and static assessments doesn’t just fall behind. It becomes functionally irrelevant except for security theater and audit obligations. Organizations need offensive security that operates at the same speed and scale as the threats they face, a key point made by the founders and organizations I’ll be discussing below.

The Companies Building the New Offense

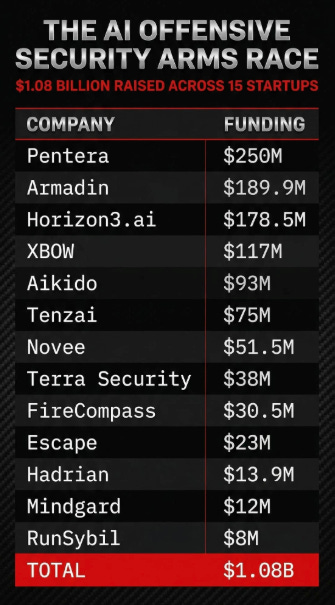

The market has responded with a wave of AI-native offensive security startups that are attracting serious capital from investors who see the category transformation coming. The numbers alone tell the story.

Armadin, founded by Kevin Mandia, the legendary incident response leader who built Mandiant, sold it to FireEye for $1 billion, and then to Google for $5.4 billion, emerged in March 2026 with a record-breaking $189.9 million in combined seed and Series A funding. That’s the largest combined seed and Series A in cybersecurity history.

Backed by Accel, Google Ventures, Kleiner Perkins, In-Q-Tel, and Ballistic Ventures, Armadin is building what Mandia calls “the ultimate attacker”: specialized AI agents operating as an agentic attacker swarm that continuously reasons, plans, and adapts like the most advanced human threat actors. Several Fortune 100 companies are already early customers. Mandia’s thesis is clear: within the next few years, virtually all cyberattacks will be AI-based, and defense must become autonomous to keep pace.

Horizon3.ai, another notable player in the mix, has been at this longer than most, and the results show. I have previously interviewed the founder, Snehal Antani, in an episode titled “AI, Autonomous Pen Testing & the Future of Offensive Security”. Founded in 2019, the company raised $100 million in its Series D in May 2025, bringing total funding to $186 million at a reported valuation approaching $750 million. Their announcement of the raise also frames how they see themselves in the space, positioning it as “cementing their lead in autonomous security”. Their NodeZero platform has powered over 150,000 autonomous pentests across more than 3,000 organizations.

This includes sensitive and regulated environments, such as the NSA’s Continuous Autonomous Penetration Testing (CAPT) program, which is powered by NodeZero. Horizon3 achieved 100% year-over-year ARR growth, was ranked third on the 2025 Deloitte Technology Fast 500, and secured FedRAMP authorization, opening the door to further federal agency adoption. This is what category leadership looks like in autonomous offensive security. But as you’re likely catching on, an already competitive category is poised to get even more competitive, with a mix of new entrants and incumbents such as Horizon3.

XBOW, founded by Oege de Moor (creator of Semmle, acquired by GitHub/Microsoft), raised $75 million in Series B funding in June 2025, and news broke this week that XBOW raised another $120 million in its Series C. This brings their total funding to $273 million. XBOW’s achievement as the number one ranked hacker on HackerOne is a milestone the industry has paid attention to, since it’s the first time an autonomous system has outperformed every human participant on a major bug bounty platform.

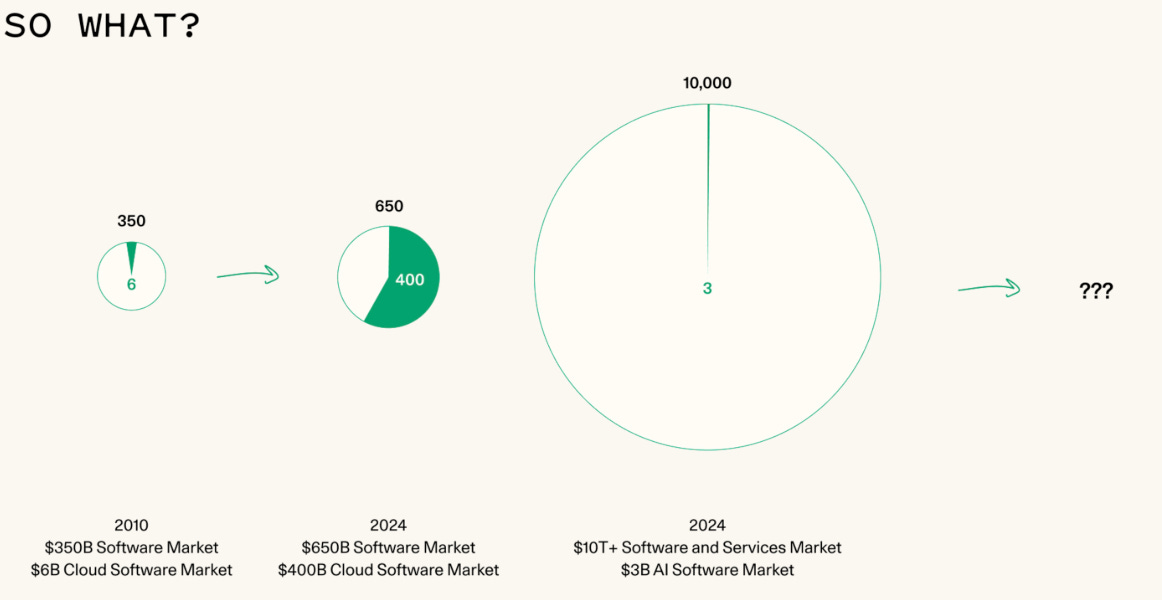

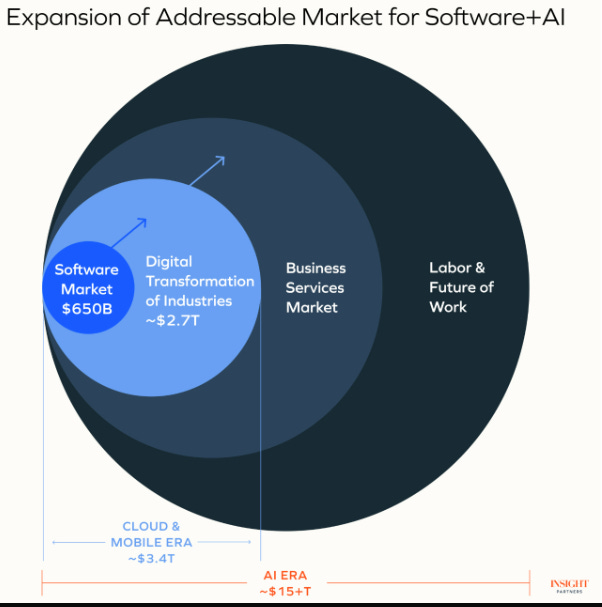

Their platform has identified over 1,000 vulnerabilities, with companies like AT&T, Epic Games, Ford, and Disney verifying and fixing hundreds of them. XBOW launched Pentest On-Demand in November 2025, delivering complete pentest results within five business days starting at $6,000. That speaks not just to technical capabilities but to a change in the economics that underpin OffSec, with implications for services firms that conduct these activities with human labor. This reality is echoed by leading VCs such as Sequoia, who recently stated “The next $1T company will be a software company masquerading as a services firm”. Sequoia, Insight Partners and others have made this point previously too, discussing how the total addressable market (TAM) for the AI era far exceeds the prior market for SaaS/software.

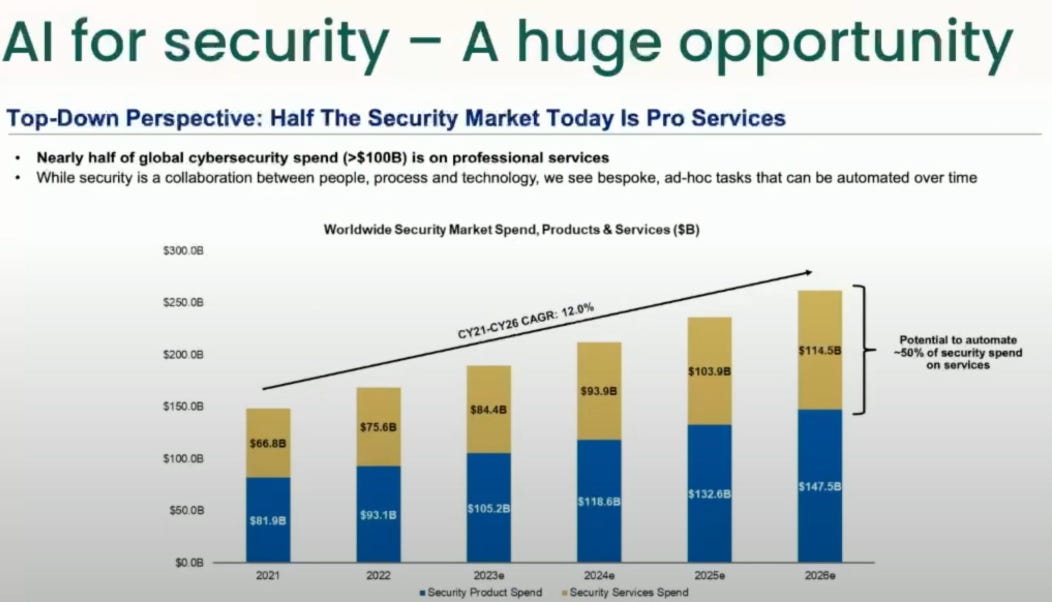

It’s also a point cyber leader and investor Chenxi Wang made previously in a BSides talk, where she said the opportunity for AI in security was massive because large portions of traditional security spend that went to services could be automated with AI. We’re seeing that prediction play out in real time now, and it has implications for keeping pace with threats as well as massive economic implications.

Another notable player, Novee, emerged from stealth in January 2026 with $51.5 million in funding led by YL Ventures, Canaan Partners, and Zeev Ventures. Founded by veterans of Israel’s most elite cyber units, including Unit 8200, the Talpiot program, and the Prime Minister’s Office, Novee has built a proprietary, purpose-trained AI model specifically designed for offensive security. Novee’s cofounder and CTO has had some good posts lately, including on using small purpose-built models rather than LLMs for OffSec. He also calls out the folly of the traditional OffSec model:

“Quarterly pentests made sense when software shipped quarterly.

See, back then a major deployment was a project.

Compliance frameworks locked in that cadence, budgets followed, and somewhere along the way it just became how security validation worked.

Then deployments became daily, but the testing calendar, not so much.”

This quote points out the impedance mismatch between our industry’s approach to security testing and current development velocity, which has only accelerated in the age of AI.

The company claims its model outperformed leading LLMs by more than 55% in web exploitation tasks, achieving 90% accuracy. Novee raised its Series A within four months of founding, one of the fastest funding trajectories in the offensive security sector, and already claims to have dozens of enterprise customers.

Terra Security raised $30 million in Series A in September 2025, led by Felicis with participation from Dell Technologies Capital, bringing total funding to $38 million. Terra won the 2025 CrowdStrike and AWS Cybersecurity Accelerator, attracted former Google CISO Gerhard Eschelbeck to its board, and in March 2026 became the first AWS Partner to achieve Security Competency for Autonomous Security Validation. Terra’s agentic AI platform delivers continuous penetration testing aligned with real-world threat scenarios.
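For a quick sanity check on the capital involved, here is a minimal sketch that tallies the total funding figures quoted above for the five companies. The numbers are the ones cited in this piece, not independently verified, and the variable names are purely illustrative.

```python
# Total funding per company in millions of USD, as quoted in this article.
funding_musd = {
    "Armadin": 189.9,        # combined seed and Series A
    "Horizon3.ai": 186.0,    # total funding after Series D
    "XBOW": 273.0,           # total funding after Series C
    "Novee": 51.5,           # funding announced when it emerged from stealth
    "Terra Security": 38.0,  # total funding after Series A
}

# Sum the quoted totals and round to one decimal place.
total_musd = round(sum(funding_musd.values()), 1)
print(f"Combined funding: ${total_musd}M")
```

On the figures quoted here, the five companies come to roughly $738 million combined.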

Combined, these five companies alone have raised over $700 million. And they’re not the only ones. The broader market for AI-driven offensive security is attracting capital at an unprecedented pace, with MarketsandMarkets projecting the pen testing market will reach $3.9 billion by 2029 and Gartner projecting 60% of large enterprises will use continuous automated red teaming by 2026. The below image from Jake R. of Panaseer on LinkedIn highlights how capital is gravitating to the category:

Why Agents Change the Game

What makes this wave different from previous automation attempts in offensive security is the agentic architecture. These aren’t scanning tools with AI wrappers. They’re autonomous systems that reason about targets, select attack paths dynamically, chain multiple techniques together, adapt based on what they discover, and validate exploitation end-to-end.

The distinction matters. Traditional vulnerability scanners identify known issues against known signatures. Automated pen testing tools execute predefined playbooks. AI agents do something fundamentally different: they think like attackers. They observe an environment, form hypotheses about exploitable paths, test those hypotheses, pivot when they fail, and escalate when they succeed. They can operate continuously, not in engagement windows. They can test at a scale that no human team can match, and they can do it at a fraction of the cost.
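To make the observe, hypothesize, test, pivot, escalate loop concrete, here is a deliberately toy sketch. Everything in it is an assumption for illustration: the `TOY_ENV` graph, the node names, and the `run_agent` function are hypothetical, the "test" step is a dictionary lookup rather than real traffic, and the search is a plain breadth-first walk. It is not how any of the vendors above implement their agents, which presumably rely on model-driven reasoning rather than a lookup table.

```python
from collections import deque

# Hypothetical toy environment: for each foothold, the targets reachable from
# it and whether a (simulated) attempt against that target would succeed.
TOY_ENV = {
    "internet": {"web-app": True, "vpn": False},
    "web-app": {"app-server": True},
    "vpn": {},
    "app-server": {"database": True, "domain-controller": False},
    "database": {},
    "domain-controller": {},
}

def run_agent(env, start, goal):
    """Walk the simulated graph: observe, test, pivot on failure, escalate on success."""
    frontier = deque([[start]])  # attack paths ending at footholds gained so far
    owned = {start}
    log = []
    while frontier:
        path = frontier.popleft()
        foothold = path[-1]
        # Observe: what is reachable from the current foothold?
        for target, would_succeed in env[foothold].items():
            if target in owned:
                continue
            # Hypothesize and test: here just a lookup, no real traffic.
            if would_succeed:
                owned.add(target)
                log.append(("success", foothold, target))
                new_path = path + [target]
                if target == goal:
                    return new_path, log
                frontier.append(new_path)  # escalate from the new foothold
            else:
                log.append(("failed", foothold, target))  # pivot to other options
    return None, log

path, log = run_agent(TOY_ENV, "internet", "database")
print(" -> ".join(path))
```

The point of the sketch is the control flow rather than the search strategy: a failed attempt is recorded and the agent moves on, while a successful one becomes a new foothold to reason from, which is what separates this from a fixed playbook.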

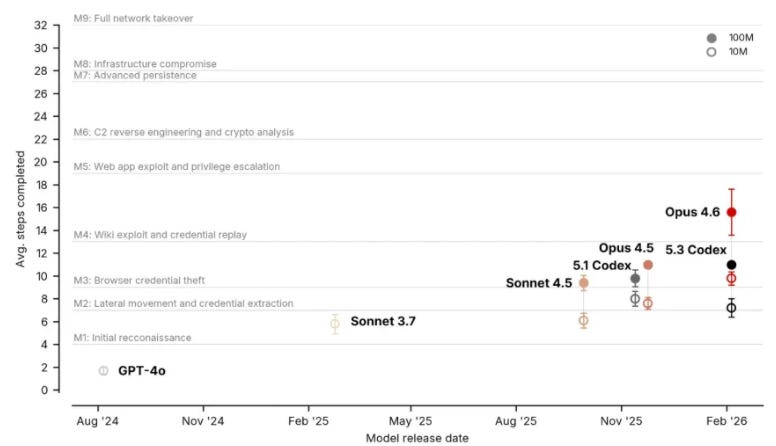

Even the frontier labs themselves have highlighted these agentic capabilities when it comes to OffSec, such as Anthropic’s Model Card for autonomous cyber capabilities and OpenAI’s research into GPT-4 pen testing. In fact, the UK’s AI Security Institute published findings on whether AI agents can conduct advanced cyber attacks, contributing to the growing body of evidence on AI-enabled offensive capabilities. The findings are telling, and they only grow more so with each model release.

This is what breaks the snapshot-in-time model that has plagued offensive security for decades. When your pen test happens once a quarter, you’re validating your security posture as it existed on the day the test started. By the time the report is delivered, the environment has changed, new vulnerabilities have been disclosed, new configurations have been deployed, and the assessment is already stale. AI agents testing continuously turn offensive security from a periodic assessment into an ongoing operational practice, one that reflects your operational environment as it is actually exposed to adversaries, which is what you should care about from a risk management perspective.

DARPA’s AI Cyber Challenge validated this at scale. Autonomous cyber reasoning systems analyzed 54 million lines of code across 53 challenge projects, discovering and patching vulnerabilities at a cost of $152 per task. The competition showed that AI systems can identify novel vulnerabilities, not just known patterns, and do so at a pace and cost that makes continuous testing economically viable for the first time.

This economic paradigm shift is appealing not just from a budgetary perspective but from a risk management perspective as well, providing the potential for testing and real-time validation at a scale and cadence that was previously impractical.

From Paper-Based Assessments to Continuous Exposure Validation

The real impact of this shift isn’t just technological, it’s operational. AI-driven offensive security fundamentally changes how organizations identify and close gaps.

Today’s dominant model is broken in ways that everyone acknowledges but few have been able to fix, given constraints on capital and labor and the technologies available at the time. Pen tests are expensive, typically running tens of thousands of dollars for a single engagement. They’re labor-intensive, constrained by the availability and expertise of human testers. They’re point-in-time, producing reports that are stale before the ink dries, and they’re paper-heavy, generating PDF deliverables that sit in GRC tools and get reviewed during the next audit cycle rather than driving immediate remediation.

AI agents break every one of those constraints. They can test continuously rather than periodically. They can scale across the full attack surface rather than sampling a subset within an engagement window. They can validate whether remediation actually closed the gap by retesting immediately. And they can produce actionable, prioritized findings based on actual exploitability rather than theoretical severity scores.
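As a rough illustration of the last two points, the sketch below ranks findings by whether they were actually exploited in the environment, using the theoretical severity score only as a tie-breaker, and adds a retest hook that re-checks a finding after remediation. The `Finding` fields, the example findings, and the `still_exploitable` callback are all hypothetical; real platforms will have their own data models and scoring.

```python
from dataclasses import dataclass

@dataclass
class Finding:
    name: str
    severity_score: float  # theoretical severity, e.g. a CVSS-style 0-10 score
    exploited: bool        # did the agent actually exploit it in this environment?

def prioritize(findings):
    """Proven-exploitable findings first; severity score only breaks ties."""
    return sorted(findings, key=lambda f: (not f.exploited, -f.severity_score))

def retest(finding, still_exploitable):
    """Re-run the check after remediation; the callback stands in for the agent."""
    return "open" if still_exploitable(finding) else "closed"

# Hypothetical findings for illustration only.
findings = [
    Finding("outdated library on isolated host", 9.8, exploited=False),
    Finding("default credentials on admin panel", 6.5, exploited=True),
    Finding("verbose error page", 3.1, exploited=False),
]

ranked = prioritize(findings)
for f in ranked:
    print(f"{f.name}: exploited={f.exploited}, severity={f.severity_score}")

# After a fix is deployed, the same attack is replayed to confirm the gap is closed.
status = retest(ranked[0], still_exploitable=lambda f: False)
print(status)
```

In this toy data set the default-credentials finding outranks the higher-scored library issue because it was demonstrably exploitable, which is the prioritization shift the paragraph above describes.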

This is the shift from compliance-driven offensive security to operations-driven offensive security. It’s the difference between proving you tested and proving you’re actually secure. I’ve been making this argument across my writing on GRC modernization: governance, risk, and compliance processes need to evolve from periodic attestation to continuous assurance. AI-driven offensive security is one of the most concrete examples of what that evolution looks like in practice, this time in another cyber category.

What This Means for Pen Testing Firms

This shift creates real existential pressure on traditional penetration testing firms. The economics are straightforward: if an autonomous platform can deliver a complete pentest starting at $6,000, the value proposition of a $150,000 annual engagement staffed by human testers gets harder to justify, especially when the automated alternative can run continuously rather than producing a point-in-time snapshot.
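A back-of-the-envelope version of that comparison, using only the two prices quoted in this piece. Note the assumptions: $6,000 is a starting price, so the test count is an upper bound, and the comparison ignores scope and depth differences between the two kinds of engagement.

```python
# Prices quoted in this article, in USD.
annual_engagement_usd = 150_000  # traditional annual engagement
on_demand_test_usd = 6_000       # starting price for an on-demand pentest

# How many on-demand tests the annual engagement budget would cover.
tests_per_year = annual_engagement_usd // on_demand_test_usd

# Average gap between tests at that cadence versus a quarterly schedule.
days_between_tests = 365 / tests_per_year
days_between_quarterly = 365 / 4

print(tests_per_year, round(days_between_tests, 1), round(days_between_quarterly, 1))
```

On those figures the same budget covers up to 25 tests a year, or one roughly every two weeks, against about 91 days between quarterly tests.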

Services firms built on billable hours and manual labor are watching their unit economics get compressed by the same AI-driven automation wave that’s reshaping every other professional services category, and potentially white-collar work more broadly. The firms that don’t adapt risk becoming the Blockbuster in a Netflix market.

The smart ones already see this coming. Firms like Bishop Fox have invested in building their own autonomous offensive capabilities, and NetSPI has integrated continuous testing into their platform alongside traditional services. The winning playbook isn’t to fight automation, it’s to embrace it, using autonomous agents to handle the scale and repetition while redirecting human expertise toward the judgment-intensive work that agents can’t yet replicate, such as complex business logic testing, adversarial simulation of sophisticated threat actors, and translating findings into strategic risk narratives that boards actually understand.

The pen testing firms that survive this transition will be the ones that treat AI agents as force multipliers for their human operators rather than threats to their revenue model.

The Bottom Line

The offensive security category is being rewritten. The combination of collapsing exploitation timelines, exponential vulnerability growth, autonomous AI agents capable of matching elite human operators, and over $700 million in venture funding flowing into the space creates a trajectory that’s impossible to ignore.

The companies leading this transformation, including Armadin, Horizon3, XBOW, Novee, and Terra, along with the wave that will follow them, aren’t just building better pen testing tools. They’re building the operational infrastructure for a world where organizations can continuously validate their security posture at machine speed, against real-world attack techniques, without waiting for the next quarterly engagement.

The old model of manual, snapshot-in-time offensive security was already struggling. In a world where AI can discover and exploit vulnerabilities faster than most organizations can patch them, that model doesn’t just struggle, it fails. The organizations that adopt continuous, AI-driven offensive security won’t just be better tested. They’ll be the ones that actually know where they stand, in real time, when it matters, and they’ll be positioned to address real risks with current, real-world context.

We already know the attackers have gone autonomous. The question for every security leader is whether their defensive posture can keep up if they fail to adjust to this industry paradigm shift.