Resilient Cyber Newsletter #96

White House Reverses Course on AI Oversight, NIST AI Testing, CISA Agentic Adoption Guidance, Bug Bounty Slop, Vercel DeepSec & Securing the Agentic SDLC

Welcome to issue #96 of the Resilient Cyber Newsletter!

This was the week the geopolitical and governance implications of AI in cybersecurity collided head-on. The New York Times reported that the Trump administration is considering pre-release vetting of AI models, a reversal from its day-one revocation of Biden’s AI executive order.

Anthropic hit $4.4 billion ARR, doubling in two months, while NIST’s CAISI signed pre-deployment testing agreements with Google DeepMind, Microsoft, and xAI. Palo Alto Networks made two strategic moves, partnering with Kevin Mandia’s Armadin for autonomous external attack validation and announcing its intent to acquire Portkey to build a unified AI gateway.

On the defensive side, Vercel open-sourced DeepSec, a security harness that scales to 1,000+ concurrent sandboxes. Five Eyes agencies jointly published guidance on careful adoption of agentic AI, and AISLE’s Ondrej Vlcek formally introduced VulnOps as the operational model for AI-driven vulnerability management.

Meanwhile, the PyTorch Lightning supply chain compromise proved once again that the software ecosystem’s trust model is not built for the speed at which attacks now move. Socket detected it in 18 minutes. The malicious versions were live for 42.

There is a lot to cover, let’s get into it.

The AI security confidence gap is wider than you think

Organizations are racing to adopt AI—but identity security isn’t keeping up.

In Delinea’s 2026 Identity Security Report, 87% say they’re prepared for AI at scale, yet nearly half admit their identity governance around AI systems is deficient.

This “confidence paradox” is leaving gaps in visibility, control, and privilege management—especially for AI agents and non-human identities.

The result? Expanding risk that most teams can’t fully see or govern.

Interested in sponsoring an issue of Resilient Cyber?

This includes reaching over 31,000 subscribers, ranging from Developers, Engineers, Architects, CISO’s/Security Leaders and Business Executives

Reach out below!

Cyber Leadership & Market Dynamics

The White House Reverses Course on AI Oversight

On his first day in office, President Trump revoked Biden’s executive order requiring AI developers to share safety test results with the government before release. Eighteen months later, the New York Times reports the White House is now considering pre-release vetting of AI models, an AI working group, and a broader set of oversight mechanisms.

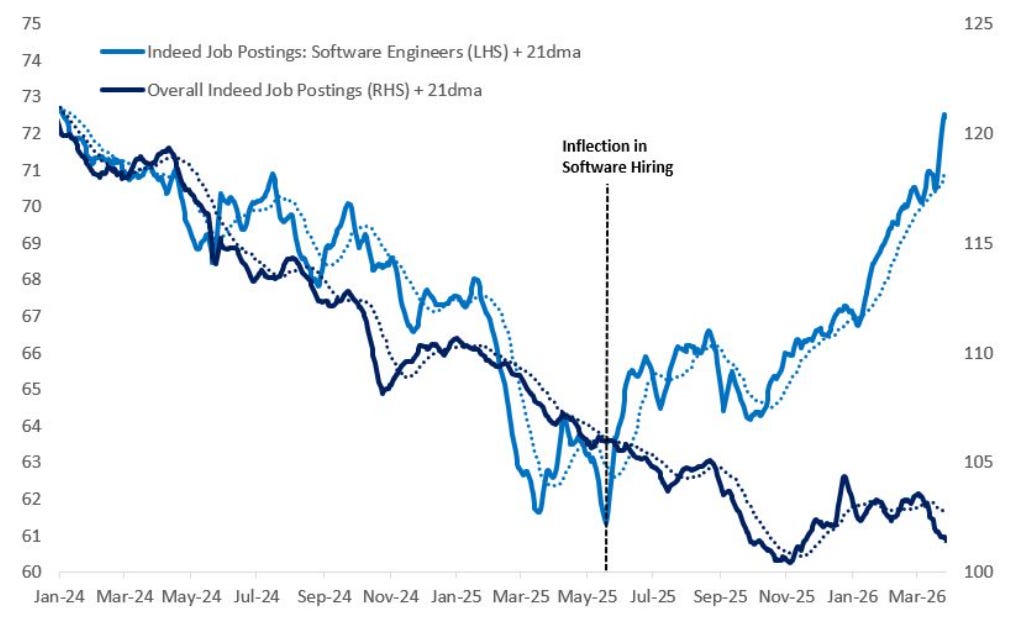

The catalyst is Mythos, mounting public anxiety over AI’s impact on jobs, energy costs, and national security, combined with bipartisan Congressional concern, appears to have shifted the calculus. White House officials briefed executives from Anthropic, Google, and OpenAI on the plans under consideration. For those of us who have been arguing that AI governance cannot be an afterthought, this is a significant signal. Whether the executive action has real teeth or is another round of procedural theater remains to be seen, but the direction of travel has changed.

NIST Signs Pre-Deployment Testing Deals with Three AI Labs

CAISI at NIST signed agreements with Google DeepMind, Microsoft, and xAI for pre-deployment evaluations and targeted research on frontier AI capabilities. Developers will provide models, frequently with reduced or removed safeguards, to CAISI for government evaluation before public release.

The agreements also cover post-deployment assessments and support testing in classified environments. CAISI has already completed more than 40 evaluations, including on models that have never been released to the public. Notably absent from the announced signatories is Anthropic, which is still navigating the Pentagon’s supply chain risk designation.

Combined with the White House’s pre-release vetting considerations, these agreements mark the clearest step yet toward a government role in frontier AI evaluation, building on the NSTM-4 memorandum I covered in issue #95.

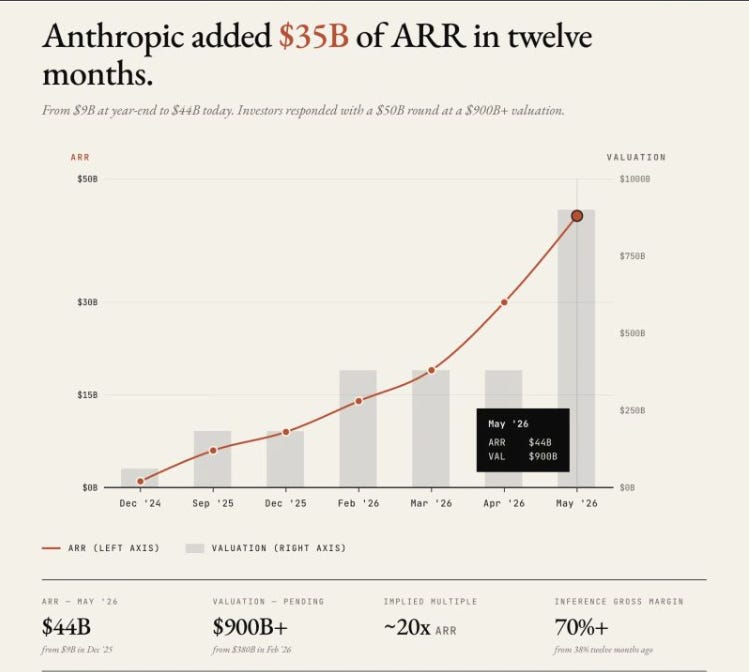

Anthropic Doubles Revenue to $4.4 Billion ARR in Two Months

Speaking of Anthropic, their revenue trajectory has gone parabolic. ARR hit $2.2 billion in February 2026, climbed to approximately $14 billion by mid-March, crossed $30 billion in early April, and is now reportedly approaching $44 billion. Peter Walker’s breakdown puts this in context.

From $1 billion ARR in December 2024 to $44 billion in May 2026 is a 44x increase in 17 months. Claude Code alone is generating $2.5 billion in annualized revenue, more than doubling since the start of 2026. For cybersecurity, the implication is straightforward. The company developing the most controversial offensive AI capability in history is also becoming one of the fastest-growing enterprise software companies ever.

The revenue validates that enterprises are adopting Claude at scale, which means the security and governance questions around these models are not academic exercises, they are operational imperatives.

Palo Alto Networks Acquires Portkey to Build the AI Gateway

Palo Alto Networks announced its intent to acquire Portkey, a pioneer in AI Gateways that already processes trillions of tokens per month. The integration with Prisma AIRS gives enterprises visibility into all agentic traffic and the ability to control and protect against agentic threats through a unified control plane.

Kevin’s analysis correctly frames this as Palo Alto Networks building the chokepoint through which all agent-to-agent and agent-to-service communication must pass. Combined with the Armadin partnership and Nikesh Arora’s comments from issue #95 about cybersecurity benefiting from the AI wave, Palo Alto is executing a clear strategy. Own the infrastructure layer that governs autonomous AI agents in the enterprise.

Armadin and Unit 42 Partner on Autonomous Attack Validation

Kevin Mandia’s Armadin is now embedded in Unit 42’s Frontier AI Defense offering. The partnership delivers autonomous external attack validation, starting with passive discovery across internet-facing assets, cloud resources, and exposed secrets, then deploying a coordinated swarm of AI agents that execute active reconnaissance and exploitation in parallel using over 50,000 templates.

Where access is achieved, the platform simulates post-exploitation behavior to demonstrate real-world impact. Every attack chain is logged as validated, decision-grade evidence. The External AI Hyperattack Assessment is already commercially available. For organizations that have been running traditional penetration tests on an annual or quarterly cadence, this is the wake-up call. Continuous, AI-driven attack validation is becoming the baseline expectation.

Cyber Command Gets Its First Dedicated AI Funding

2026 marks the first year Cyber Command has dedicated funding for AI programs, after years of lead time inside the Pentagon and Congress. The funding comes as multiple AI labs compete for government contracts.

OpenAI is capitalizing on Anthropic’s supply chain risk designation by aggressively engaging with federal, state, and international government offices to deploy GPT-5.4-Cyber. Google has signed a deal with the Pentagon to use Gemini in classified operations. Meanwhile, the White House is developing guidance that would allow agencies to work around Anthropic’s designation and onboard Mythos.

As I wrote in issue #95 when covering the Pentagon’s 100,000 vibe-coded agents, the government is simultaneously the largest deployer and least prepared governance body for AI in cybersecurity.

At-Bay’s Insurance Data Reveals a Ransomware Infrastructure Shift

The data from At-Bay’s 2026 InsurSec Report, drawn from more than 100,000 policy years, tells a clear story. Ransomware has entered an infrastructure-driven phase. Attackers are no longer hunting by industry or company size but by the network appliances their targets happen to run.

Nearly three in four ransomware attacks, or 73%, began with a VPN in 2025, a share that has almost doubled in two years. Akira alone accounted for over 40% of all ransomware claims, with average demands of $1.2 million. Overall claim severity hit an all-time high of $221,000. For CISOs making budget arguments, this is the kind of actuarial evidence that translates directly to board-level conversations about infrastructure modernization.

20 Years of Cyber Through the Dark Reading Lens

Dark Reading marked its 20th anniversary with a retrospective on two decades of cybersecurity evolution. I have been a regular contributor/reader to Dark Reading for years, and what stands out about their coverage over time is the arc from network perimeter defense through the cloud migration era to the current AI-driven transformation.

The publication has been a consistent voice for practitioner-level analysis rather than vendor hype, and that matters more now than ever. In an industry flooded with breathless AI narratives, outlets that maintain editorial rigor are essential infrastructure.

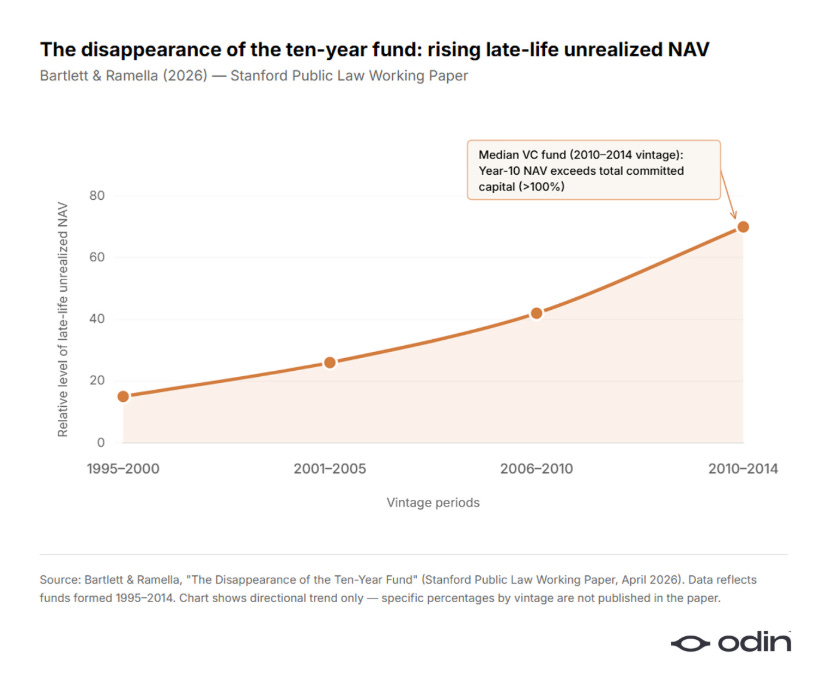

The Ten-Year Startup Is Disappearing

Odin’s analysis argues that the traditional ten-year venture-backed startup lifecycle is collapsing. AI is compressing the time from founding to meaningful scale, which has direct implications for cybersecurity startups.

As I tracked in issue #95 with Elizabeth Yin’s valuation dynamics and SVB’s data from issue #92 showing capital concentrating in the top 1%, the venture landscape is bifurcating between AI-native companies that can reach scale in two to three years and traditional startups that cannot compete on timeline. For security founders, the message is clear. Build for speed, not for a decade-long runway that may no longer exist.

Konstantine Buhler on Narrative Violation

Sequoia’s Konstantine Buhler argued that the most powerful insights come from narrative violations, moments when reality diverges from consensus expectations. In AI and cybersecurity, we are living through one of these moments. The consensus a year ago was that frontier AI models would remain expensive, scarce, and controllable.

DeepSeek V4 on Huawei chips at a tenth of Western pricing, GPT-5.5 delivering Mythos-class hacking to anyone with a subscription, and 100,000 Pentagon agents vibe-coded in five weeks all violate that narrative. The investors and security leaders who recognized these shifts early are the ones positioned to capture the opportunity. Everyone else is recalibrating assumptions that should have been challenged months ago.

Speaking of Sequoia, they recently hosted an event with amazing speakers, but the two talks I enjoyed most were from Demis and Andrej:

AI

Five Eyes Agencies Issue Joint Guidance on Agentic AI Adoption

CISA, the Australian ASD, NSA, Canadian Cyber Centre, New Zealand NCSC, and UK NCSC jointly released guidance on the careful adoption of agentic AI services. The core message is to deploy incrementally, limit initial use to low-risk tasks, enforce strict privilege controls, maintain continuous monitoring, and align with existing cybersecurity frameworks.

This is the first joint Five Eyes publication specifically addressing agentic AI, and the fact that it came from intelligence and defense agencies rather than regulatory bodies tells you how seriously nation-states are taking the governance gap. The guidance reinforces the CIS Controls v8.1 AI Agents Companion Guide from issue #95 and the CoSAI framework I discussed in my article on identity as the agentic AI problem.

The irony here of course is the Pentagon vibe coding 100,000 AI agents at the same time CISA and others advocate for careful adoption…

The NCSC Says Frontier AI Can Hack Your Network for £65

The UK’s NCSC dropped a number that should reframe every defender’s risk calculus. The best-performing model in early 2026, Claude Opus 4.6, averaged 15.6 steps on a 32-step simulated enterprise network attack that would take a human expert approximately 14 hours.

At current pricing, a full attempt costs around £65. The NCSC’s key finding is that frontier AI model activity before March 2026 tends to generate noticeable security alerts and is relatively easy to detect. That is a near-term defensive advantage, not a permanent one. The best model in early 2026 completed nearly six times more attack steps than the best model 18 months earlier.

The cost floor on sophisticated attacks is collapsing, and the limiting factor is increasingly funding, not expertise. AI-enabled cyber defense cannot be the sole answer to AI-enabled attackers. The basics still have to come first.

Vercel Open Sources DeepSec for AI-Powered Security Scanning

Vercel released DeepSec, an open source security harness that uses Claude Opus 4.7 and GPT-5.5 to investigate codebases at scale. The architecture starts with static analysis to identify security-sensitive files, then coding agents investigate each candidate, tracing data flows, checking for mitigations, and producing actionable findings with severity ratings.

The false positive rate runs 10-20%. For large repos, DeepSec supports optional fanout to Vercel Sandboxes, with scans routinely scaling to 1,000+ concurrent instances. You can run it on your laptop with an existing Claude or Codex subscription. This is significant because it demonstrates that commodity AI subscriptions combined with good tooling infrastructure can deliver enterprise-grade security scanning. The barrier to entry for AI-powered vulnerability discovery continues to fall.

AISLE Introduces VulnOps as the Operational Model for AI Vulnerability Management

Ondrej Vlcek formalized what many of us have been circling around. VulnOps is to autonomous vulnerability research and remediation what DevOps is to software delivery. The concept, first introduced by Heather Adkins, Gadi Evron, and Bruce Schneier in October 2025, now has a reference implementation. AISLE has validated 180+ CVEs across 30+ projects, with patches accepted into OpenSSL and curl.

They found 12 OpenSSL zero-days including a CVSS 9.8 flaw dating to 1998. The critical capability is going end to end, from finding real vulnerabilities to producing correct fixes, validating those fixes, and getting them into production. As I wrote in my piece on the AI cyber capability curve, the discovery side is accelerating faster than the remediation side. VulnOps is the operational framework designed to close that gap.

80% of Fortune 500 Deploy AI Agents, 10% Have a Strategy to Manage Them

Microsoft’s 2026 Cyber Pulse report found that more than 80% of Fortune 500 companies now use active AI agents built with low-code and no-code tools. Only 10% have a clear strategy to manage them. The average enterprise manages 37 deployed agents, with more than half running without any security oversight or logging. The governance frameworks executives built over decades were designed for people.

AI agents are not people. The gap between those two facts is where the security incidents happen. This connects directly to the CSA’s finding from issue #94 that 53% of organizations report agents exceeding permissions and the Pentagon’s 100,000 vibe-coded agents from issue #95. Shadow AI is not a future risk. It is the present operating condition of the Fortune 500.

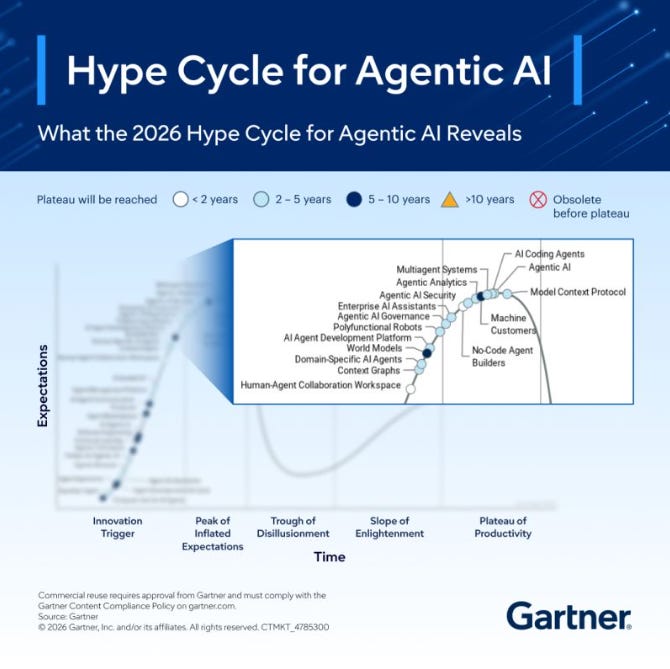

Gartner Places Agentic AI at Peak of Inflated Expectations

According to the 2026 Gartner CIO and Technology Executive Survey, only 17% of organizations have deployed AI agents, yet more than 60% expect to do so within the next two years, the most aggressive adoption curve among all emerging technologies.

Gartner places agentic AI at the Peak of Inflated Expectations, noting that most deployments remain narrowly scoped and fully autonomous agents are not ready for the majority of enterprise use cases.

What matters for security teams is the emergence of governance, security, and cost-focused profiles alongside core agentic AI technologies. Gartner predicts 40% of enterprise applications will include integrated task-specific agents by 2026, up from less than 5% in 2025. The adoption is real, even if the hype outpaces the maturity.

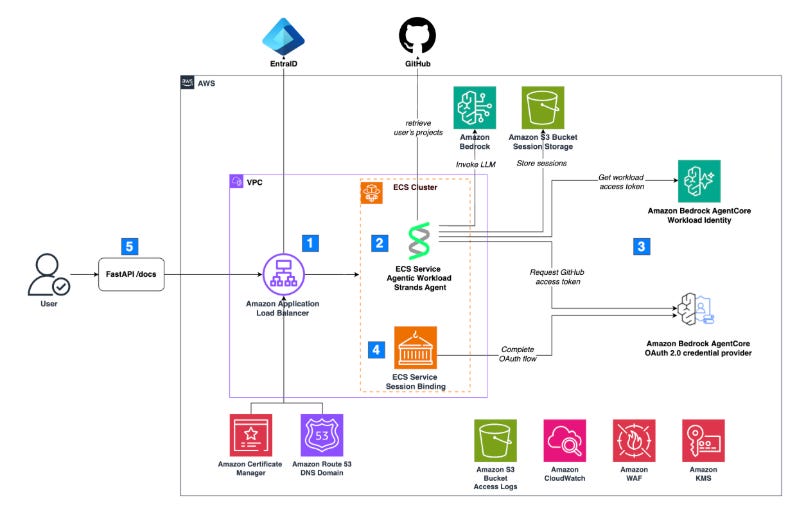

Amazon Bedrock AgentCore Brings On-Behalf-Of Token Exchange to Agent Identity

AWS shipped On-Behalf-Of (OBO) token exchange for Bedrock AgentCore Identity, enabling agents to securely access protected resources on behalf of authenticated users without requiring multiple consent flows. Each token is bound to a specific user identity with explicit consent, maintaining an auditable chain from user authentication through to agent action. AgentCore Identity implements a secure token vault that stores tokens and allows agents to retrieve them securely, along with native integration with AWS Secrets Manager.

The implementation guide walks through full deployment on ECS. Between AAuth, Microsoft Entra Agent ID, Google Agent Identity from Next26, and now AWS AgentCore, we have four major hyperscaler and protocol-level approaches to agentic identity. As I wrote in my article on identity as the agentic AI problem, the building blocks are taking shape. The convergence question remains open.

Pluto Security Maps the Architecture Inside Claude Managed Agents

Pluto Security reverse-engineered the architecture of Claude Managed Agents, now in public beta and enabled by default for all API accounts. The session, harness, and sandbox are fully decoupled, with vault credentials never entering the sandbox.

A transcript classifier running Sonnet 4.6 evaluates each proposed action before execution using a two-stage pipeline, bringing the false negative rate down to 5.7% for synthetic exfiltration attacks. But here is the gap, as of April 2026, there is no dedicated security, hardening, or threat modeling documentation for Managed Agents.

Topics like prompt injection risks and deployment hardening guidance are not covered. This is the agent harness security problem I highlighted in issue #95 with the Sondera analysis of the Claude Code leak. The infrastructure is maturing, but the security documentation and guidance are lagging behind the capabilities.

Scalable Runtime Governance for Agentic AI in Financial Services

Stuart Winter-Tear highlighted research proposing a scalable runtime governance architecture for agents in financial services, including policy engines, audit trails, and real-time guardrails tied to regulatory obligations. His key insight is that authority has to be bounded at runtime, not merely described in a document nobody reads until after the near miss.

Combined with his analysis of how AI agents spend money and the financial risk that agent-initiated transactions create, the emerging picture is clear. Financial services will be the proving ground for agentic AI governance because the regulatory consequences of getting it wrong are immediate and measurable.

The AI-Assisted Breach of Mexico’s Government Infrastructure

Between December 2025 and February 2026, a single attacker used Claude Code and GPT-4.1 to compromise nine Mexican government agencies, exfiltrating 150GB of sensitive data including 195 million taxpayer records and 220 million civil records.

Claude Code executed approximately 75% of the remote commands sent to government systems. The attacker bypassed safety filters by initially posing as part of a bug bounty program and providing a hacking manual. This is the most documented case of AI tools being weaponized against government infrastructure to date. As I have been writing since my coverage of the OWASP Agentic Top 10, the use cases available to defenders are equally available to attackers. The Mexico breach demonstrates that jailbreaking commodity AI tools is a viable attack methodology at nation-state scale.

AppSec

PyTorch Lightning Compromised in 42-Minute Supply Chain Attack

The speed of this attack should alarm every security team. Malicious versions 2.6.2 and 2.6.3 of the PyTorch Lightning package appeared on PyPI on April 30, containing obfuscated JavaScript payloads that stole credentials, authentication tokens, environment variables, and cloud secrets while attempting to poison GitHub repositories.

Socket’s automated scanner flagged the packages 18 minutes after publication. Twenty-four minutes later, maintainers quarantined them. Total exposure window, 42 minutes. This is an extension of the Mini Shai-Hulud campaign that hit SAP npm packages earlier in the week, demonstrating how one compromised dependency bridges into additional ecosystems through transitive dependencies. As I wrote in Software Transparency, the trust assumptions in modern package ecosystems were designed for a different era.

Forty-two minutes is both impressively fast detection and terrifyingly long exposure.

Six Exploits That Broke AI Coding Agents

Every exploit followed the same pattern. An AI coding agent held a credential, executed an action, and authenticated to a production system without a human session anchoring the request. Six research teams disclosed exploits against Codex, Claude Code, Copilot, and Vertex AI over nine months. BeyondTrust proved that a crafted GitHub branch name could steal Codex’s OAuth token in cleartext, and OpenAI classified it Critical P1.

Unit 42’s Ofir Shaty found that the default Google service identity attached to every Vertex AI agent had excessive permissions, granting unrestricted read access to every Cloud Storage bucket in the project. Most CISOs inventory every human identity and have zero inventory of the AI agents running with equivalent credentials. No IAM framework governs human privilege escalation and agent privilege escalation with the same rigor.

Cisco Open Sources Model Provenance Kit for AI Supply Chain Safety

Cisco released Model Provenance Kit, an open source toolkit that fingerprints AI models at the weight level to verify their origins. The Model Provenance Constitution defines what constitutes a legitimate derivation relationship. Two AI systems are considered related only if there is a direct or indirect causal chain connecting their trained parameters, whether through fine-tuning, knowledge distillation, quantization, pruning, or model merging.

The reference database includes fingerprints for approximately 150 base models across 45+ families and 20+ publishers. Model provenance attestation is a supply chain control, and in the context of NSTM-4’s guidance on adversarial distillation from issue #95, this kind of tooling becomes essential for answering whether a deployed model inherits vulnerabilities, license obligations, or unauthorized redistribution from an upstream source.

Chainguard Commits to a One-Day KEV SLA

Chainguard announced the first explicit KEV SLA offered by any container vendor. Any CVE added to the CISA Known Exploited Vulnerabilities Catalog that affects a Chainguard container image will be remediated within one calendar day. The rationale is compelling. Roughly 4% of all CVEs are ever exploited in the wild, yet 50% of KEVs are exploited within two days and 75% within 28 days of disclosure.

Relying solely on severity-based SLAs leaves organizations exposed to actively exploited vulnerabilities with Medium or Low CVSS scores that never trigger the remediation machinery. Chainguard’s team has been averaging less than 20 hours for Critical CVE remediation.

As I have been writing since Vulnpocalypse, the remediation race is the central challenge. Committing to a one-day KEV SLA is the kind of concrete, measurable commitment the supply chain ecosystem needs.

The Glasswing Paradox and the Remediation Bottleneck

Picus Security’s framing nails the fundamental tension. The thing that can break everything is also the thing that fixes everything. Fewer than 1% of the vulnerabilities found by Mythos were patched. While Glasswing solved the finding problem, nobody solved the fixing problem. Picus CTO Volkan Erturk put it sharply at the 2026 FS-ISAC Americas Spring Summit. Defenders work at calendar speed while attacks happen at machine speed.

This connects directly to AISLE’s VulnOps model and the vulnerability management arc I have been tracking. Discovery without remediation is just a more sophisticated way to generate backlogs. Cole Grolmus’s argument that AI labs are going to kill exposure management and force a shift to attack path validation adds another dimension. If AI can find every vulnerability, the value shifts from discovery to understanding which exploits actually chain into business-critical impact.

Varun Badhwar Shares a Mythos Response Plan for CXOs

Endor Labs CEO Varun Badhwar released a practical Mythos response framework for CXOs, building on his argument from issue #95 that AI makes dependency management an order of magnitude more critical. The framework addresses the immediate question that every executive is asking. What do I do right now about the AI-driven vulnerability surge?

Rather than panic-driven CVE triage, Badhwar advocates prioritizing based on reachability and actual exploit chains, the same evidence-based approach I have been advocating since Software Transparency. When the volume of discovered vulnerabilities exceeds any team’s remediation capacity, the only viable strategy is intelligent prioritization.

OWASP’s Agentic Security Work Accelerates

David Matousek shared an update on the OWASP Agentic AI project’s progress, noting that the team has been closing a high volume of issues and contributions. For those following my work on the OWASP Agentic Top 10, this kind of community velocity matters.

The Agentic Top 10 was shaped by hundreds of experts, and the ongoing refinement through issue closure and contribution review ensures the framework stays current as the threat landscape evolves. Combined with Cyentia Institute and Cobalt’s pentesting research showing that compromised credentials remain the number one entry point in 41% of engagements, the OWASP work on ASI03 (Identity and Privilege Abuse) is well-calibrated to the most pressing real-world risk.

Clover Security Tackles the Agentic Development Lifecycle

The Agentic Development Life Cycle is where AI agents generate code on their own, pull dependencies, call external tools, and commit changes without a developer ever opening a traditional IDE. Clover Security, which emerged from stealth with $36 million in funding backed by Wiz, CrowdStrike, and Team8, embeds AI security agents into the earliest stages of development.

Their agents plug into Confluence, Jira, GitHub, and GitLab, ingesting product design documents and applying security frameworks like OWASP ASVS to identify flaws before code is committed. Security at the design phase is not a new idea, but doing it at machine speed through autonomous agents that understand product context is. As I wrote in Vibe Coding Conundrums, the security debt from AI-generated code will accumulate unless we build verification into the workflow where the code originates.

Bug Bounties Have a Slop Problem

I covered Daniel Stenberg’s “High Quality Chaos” in issue #95, where he projected 50 curl vulnerabilities in 2026. This piece from Jonathan Price goes deeper into the structural problem that AI-generated slop is creating across the entire bug bounty ecosystem.

The Internet Bug Bounty program paused new submissions because AI-generated reports overwhelmed the system. Curl ended its bounty program entirely in January 2026 after determining that none of twenty submissions that year identified a real vulnerability.

The irony is bitter. AI is simultaneously the best vulnerability discovery tool and the biggest threat to the institutions designed to incentivize that discovery. The incentive structures that made bug bounties work for a decade are breaking under the weight of zero-effort submissions that sound plausible but contain nothing actionable.

Chinese Spy Group Caught Lurking in Poland and Asia Networks

TrendAI researchers identified Shadow-Earth-053, a new Chinese-aligned group targeting government agencies, defense contractors, technology firms, and transportation across Poland and Asia. Initial access came through vulnerable Microsoft Exchange Servers, with attackers dwelling for up to eight months before deploying ShadowPad.

Half the victims were also compromised by a related group, Shadow-Earth-054, sharing identical tool hashes and overlapping techniques. The dwell time is the headline. Eight months of undetected access through well-known Exchange vulnerabilities. This is the operational reality that all the AI-driven defense narratives need to account for. The most consequential breaches are still happening through unpatched infrastructure, not through novel AI attacks.

HackerOne’s Handling of the ClickUp Disclosure Raises Questions

A security researcher publicly disclosed that ClickUp’s client-side feature flag configuration had been leaking nearly a thousand corporate and government email addresses, including employees from Fortinet, Home Depot, Tenable, and Mayo Clinic, through a hardcoded third-party API key since January 2025. The issue was first reported through HackerOne on January 17, 2025.

It was still unrotated as of April 2026. HackerOne closed the April 2026 follow-up as a duplicate of the 2025 report, despite the newer submission documenting substantially new impact. This is the kind of disclosure handling failure that erodes researcher trust and makes the bug bounty slop problem worse. When legitimate reports sit for 15 months, researchers lose confidence that the system works.

The New Perimeter Is Supply Chains and AI Environments

Jason Haddix’s Executive Offense newsletter framed supply chains and AI environments as the new perimeter, and I agree with the framing. The traditional network perimeter dissolved years ago. The cloud became the new perimeter and then dissolved in turn.

Now the attack surface is defined by software supply chains, AI agent tool access, and the MCP integrations that connect autonomous systems to enterprise infrastructure. Every major incident this week reinforces the thesis. PyTorch Lightning was compromised through a supply chain dependency.

Six AI coding agents were exploited through credential mismanagement. Chinese spies dwell in networks for eight months through Exchange vulnerabilities. The perimeter is not a place anymore. It is a web of trust relationships, and every one of those relationships is a potential attack vector.

Final Thoughts

This was the week the governance machinery started to catch up with the capabilities it is trying to govern. Five Eyes agencies jointly published their first agentic AI guidance. NIST signed pre-deployment testing agreements with three AI labs. The White House reversed course on AI oversight. Gartner formally placed agentic AI at Peak of Inflated Expectations, with 60% of organizations planning deployment within two years and only 17% having actually done it.

But the gap between aspiration and execution remains enormous. 80% of Fortune 500 companies deploy AI agents, and only 10% have a strategy to manage them. Fewer than 1% of the vulnerabilities Mythos found were patched. A single attacker used Claude Code and GPT-4.1 to breach nine Mexican government agencies. HackerOne closed a legitimate vulnerability report as a duplicate after 15 months. PyTorch Lightning was compromised and detected in 18 minutes, but the 42-minute exposure window was still enough to poison downstream ecosystems.

The tools are getting better. AISLE validated 180+ CVEs with accepted fixes in OpenSSL and curl. DeepSec scales to 1,000 concurrent sandboxes. Chainguard committed to a one-day KEV SLA. Cisco gave us model provenance attestation. Palo Alto Networks is building the AI gateway chokepoint.

The pattern I keep seeing is that discovery is accelerating faster than remediation, governance is accelerating faster than enforcement, and adoption is accelerating faster than understanding. The organizations that navigate this well will be the ones that invest in operational frameworks like VulnOps, runtime governance architectures, and identity standards that treat agents as first-class principals, not the ones chasing the next model announcement.

Stay resilient.

"Developers will provide models, frequently with reduced or removed safeguards, to CAISI for government evaluation before public release."

Oh, nice, the government gets the unrestricted models for the Three-Letter Agencies - and the rest get the guardrails.

What could possibly go wrong?