Resilient Cyber Newsletter #95

Mythos-Class Hacking for Everyone, Pentagon Vibe Codes 10,000 Agents, Google's State of Prompt Injection, The Unsolved Problem of Agentic IAM, Red Teaming CI/CD Pipelines & 21,000 Malicious Packages

Welcome to issue #95 of the Resilient Cyber Newsletter!

Last week I wrote that the Mythos narrative had met reality. This week, reality pushed back harder. The Register called Mythos a “nothingburger,” and Flying Penguin dug into Mozilla’s numbers and found that the 271 bug fixes may actually trace back to as few as three credited CVEs.

Meanwhile, XBOW demonstrated that GPT-5.5 delivers Mythos-class hacking capability to anyone with a ChatGPT subscription. The controlled-access thesis that Anthropic has been defending since Project Glasswing is eroding faster than most expected.

At the same time, the infrastructure side of the industry produced some of the more consequential releases I have seen in a single week. MITRE shipped ATT&CK v19, splitting Defense Evasion into two distinct tactics for the first time in the framework’s history. DeepSeek launched V4 on Huawei chips with 1.6 trillion parameters at a fraction of Western pricing. Google and Wiz unveiled their Agentic Defense platform at Next26. CIS published three companion guides extending Controls v8.1 to AI agents and MCP. Microsoft formally defined agentic identity standards in Entra, and AAuth.dev went live with working demos, IETF drafts, and libraries in three languages.

The Pentagon also revealed that personnel vibe-coded over 100,000 AI agents on GenAI.mil in five weeks. ServiceNow closed its $7.75 billion acquisition of Armis, and the White House issued NSTM-4 on adversarial distillation of American AI models.

There is a lot to unpack, so let’s get into it.

Find the Vulnerabilities That Matter. Fix Them Fast.

Your scanner found another 1,000+ vulnerabilities. When you look at them with context, most aren’t risky to your org. And the few that matter get buried in Jira madness.

Maze AI agents think like your best security engineer, and investigate whether each individual finding is exploitable in your specific context. When you look closely, 90% of the backlog goes away.

For the remaining vulnerabilities, our AI agents use the same context to suggest a fix your developer would actually request. Rebuild the image, bump a direct dependency, overwrite a transitive. And when patching isn’t possible, they offer a mitigation that breaks the attack chain while you plan the real fix.

Then we make sure every finding lands with the person who can actually implement the fix. Only true positives. Faster fixes.

Interested in sponsoring an issue of Resilient Cyber?

This includes reaching over 31,000 subscribers, ranging from Developers, Engineers, Architects, CISO’s/Security Leaders and Business Executives

Reach out below!

Cyber Leadership & Market Dynamics

The Nothingburger That Ate the News Cycle

Some have been calling for evidentiary rigor on Mythos since issue #94 when VulnCheck found only one CVE directly tied to Glasswing. This week, The Register went further, calling the whole thing a “nothingburger.”

VulnCheck’s Patrick Garrity put the verified count at roughly 40 CVEs actually credited to Anthropic researchers, far below the “thousands” Anthropic has claimed. An independent replication study tested Mythos’s findings on smaller open source models and produced similar analysis.

A security executive quoted in the piece said it plainly. The adversary does not need Mythos to hack you. Extraordinary claims require extraordinary evidence, and the verified numbers continue to fall short of the marketing. That said, the underlying capability shift is real even if the specific attribution is muddier than Anthropic suggests.

How 22 Vulnerabilities Became 271, or Maybe 3

If there is one piece this week that every security leader should read before repeating Mythos claims, it is this forensic analysis from Flying Penguin. Bobby Holley’s Mozilla blog post announced 271 bug fixes from Mythos in Firefox 150.

But MFSA 2026-30, the canonical security advisory published the same day, lists 41 CVE entries, and only three individual CVEs carry credit strings mentioning Claude from Anthropic.

That is a discrepancy of more than 90x between the marketing number and the advisory ceiling. For context, Opus 4.6 found 22 vulnerabilities fixed in Firefox 148. The gap between blog posts and security advisories is exactly the kind of evidentiary problem that Rafael Alvarez flagged in issue #94 when he demanded confusion matrices and F-scores.

Until Anthropic and its partners reconcile these numbers publicly, healthy skepticism remains warranted.

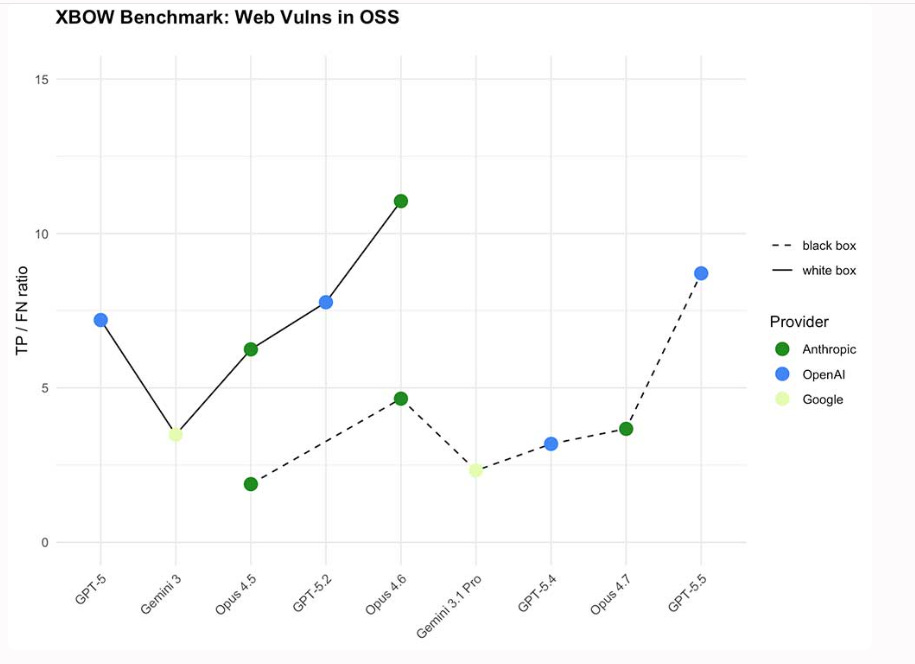

Mythos-Class Hacking Is Now Open to Everyone

This is the story that should end the controlled-access debate. XBOW’s benchmarks show that GPT-5.5 delivers Mythos-class vulnerability discovery capability to anyone with a ChatGPT subscription.

Anthropic restricted Mythos access to Glasswing partners, vetted vendors, and selected researchers. OpenAI released 5.5 to the general public. XBOW had early access as of April 23 and tested it across their benchmarks and workflows. The performance comparison suggests the capability gap between restricted and open models has narrowed dramatically.

This validates AISLE’s research from issue #93 showing the moat is the system, not the model. It also raises urgent questions about whether controlled access was ever a viable long-term strategy. When commodity models match frontier performance, the access control argument evaporates.

100,000 AI Agents, Vibe-Coded in Five Weeks

I want you to sit with this number for a moment.

Pentagon personnel used Google’s Gemini Agent Designer on GenAI.mil to vibe-code over 100,000 AI agents in just five weeks. These agents have IL5 authorization, meaning they can handle the department’s most sensitive unclassified data. Up to 3 million users have access to GenAI.mil, with 1.3 million actively using it.

Agent Designer allows anyone, technical or not, to describe the system they want in natural language. One user at Navy Recruiting Command cut the time to build an automated personnel database from several years to three months.

This is simultaneously exciting and concerning. The productivity gains are undeniable, but 100,000 autonomous agents operating on sensitive networks with minimal security review is exactly the governance gap the CSA documented in issue #94 when they found 53% of organizations report agents exceeding permissions.

As someone who spent a large portion of my career supporting the DoD/W in and out of uniform, I can almost certainly assure the way they are handling security and compliance of this many agents so quickly is…not good.

The White House Takes Aim at AI Model Theft

The timing here is impossible to ignore. The same week DeepSeek launches a frontier model on Huawei chips at a tenth of Western pricing, the White House issues NSTM-4, a National Security Technology Memorandum titled “Adversarial Distillation of American AI Models.” The memorandum addresses the growing threat of foreign actors training smaller, cheaper models by feeding them outputs from advanced American AI systems.

This is not theoretical. NSTM-4 directs executive departments and agencies to take specific protective measures. As frontier AI capabilities become national security assets, the intellectual property protection frameworks built for software and semiconductors need to extend to model weights and inference outputs.

ServiceNow Closes the $7.75 Billion Armis Deal

At $7.75 billion in cash, this is ServiceNow’s largest acquisition to date, and it validates the vendor consolidation thesis I have been tracking. Armis provides agentless discovery and classification of managed and unmanaged assets across OT, IoT, medical, and industrial devices.

The company surpassed $340 million in ARR with year-over-year growth exceeding 50%, and its platform is trusted by over 35% of the Fortune 100. ServiceNow is building the operational layer that connects IT service management to cybersecurity asset visibility. For enterprises running complex hybrid environments with thousands of unmanaged devices, the Armis capability fills a critical gap. Cole Grolmus’s commentary correctly notes that ServiceNow is positioning itself to build one of the largest cybersecurity businesses in the world.

OpenAI and Microsoft End Exclusivity

For anyone tracking the distribution of AI security capabilities, this restructuring matters. OpenAI and Microsoft replaced an open-ended exclusive license with a nonexclusive arrangement running through 2032. Microsoft remains OpenAI’s primary cloud partner, but OpenAI is now free to serve products across any cloud provider.

The restructuring clears the legal path for Amazon’s up-to-$50 billion investment. Microsoft will continue receiving a revenue share from OpenAI through 2030, subject to a cap. The cybersecurity implication is that OpenAI’s security tooling, including GPT-5.4-Cyber and the Trusted Access for Cyber program from issue #93, can now be deployed on AWS and other clouds without exclusivity constraints. The democratization of AI security capabilities just accelerated.

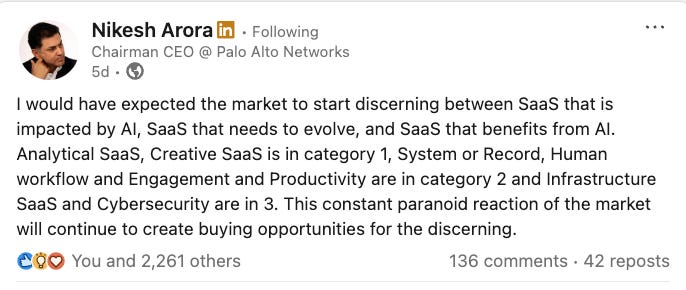

Nikesh Arora Calls the Selloff “Paranoid”

I have been tracking the SaaSpocalypse narrative since issue #85, and Nikesh Arora just delivered the sharpest rebuttal yet. The Palo Alto Networks CEO pushed back against the AI-driven software selloff, calling the market reaction “paranoid” and arguing that cybersecurity and infrastructure are positioned to benefit from the AI wave rather than be disrupted by it.

Arora categorized SaaS into segments, noting that analytical and creative SaaS face real disruption while cybersecurity and infrastructure SaaS benefit from AI adoption. His framing is memorable. “We live on the edge cases. Each edge case is a potential breach. Models live in the median.” Cybersecurity vendors that can operate at AI speed are gaining share while those selling static seat-based subscriptions are losing ground.

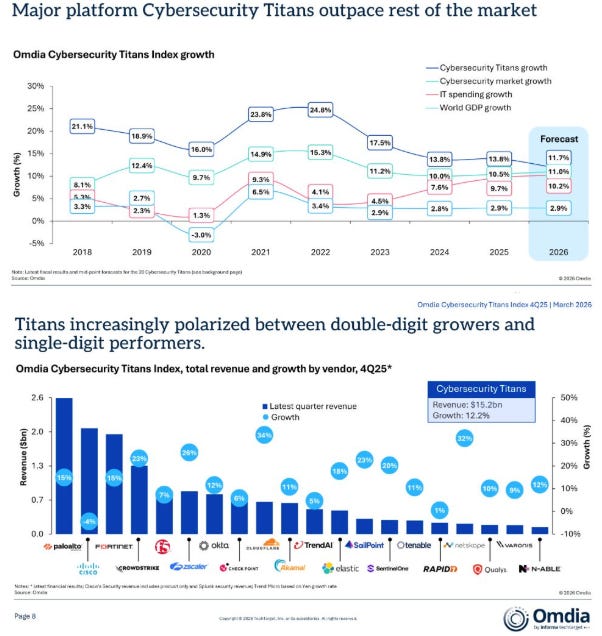

The Cybersecurity Market Keeps Growing

The top-line numbers remain healthy. Matthew Ball at Omdia shared updated data showing the global cybersecurity market on pace to reach $311 billion in 2026, growing at roughly 12% year-over-year. But the distribution underneath tells the real story.

As I have been tracking since SVB’s H1 2026 State of the Markets report in issue #92, capital is flowing disproportionately to AI-native platforms while traditional point solutions fight for relevance. The bifurcation is accelerating.

Elizabeth Yin on Why Current Valuations Will Not Last

For cybersecurity founders raising right now, this piece from Elizabeth Yin at Hustle Fund is required reading. “The Wide Path” argues that most current venture valuation dynamics are not sustainable.

This connects directly to the dynamics I tracked in issue #93 with Dan Gray’s analysis of how hot-market effects cause overinvestment and overhiring. The SVB data from issue #92 showed deal count falling 15% while dollars invested jumped 53%, with the top 1% capturing a third of all capital.

The message is clear, raising minimally at early stages preserves optionality. Capital efficiency matters more than headline valuations, especially for security startups competing in categories where the incumbents are spending billions on AI integration.

Aspen Digital Charts the Post-Mythos Governance Path

Among the flood of post-Mythos takes, Aspen Digital’s governance framework stands out for being measured rather than breathless. Their assessment is that models like Mythos accelerate existing threats rather than creating a new class of threats. The core reasons that networks are insecure and the basics of securing them have not changed, but the skills an adversary needs to identify and exploit vulnerabilities are now dramatically lower.

The practical recommendation to harden identity and deploy phishing-resistant MFA is not glamorous, but it is right. Most breaches still start with compromised credentials, regardless of how sophisticated the offensive tooling becomes.

Breaches Rarely Kill Companies, But Unpreparedness Does

Every vendor selling breach-fear should spend five minutes on this site. Adrian Sanabria ’s Destroyed by Breach project continues to challenge one of cybersecurity’s most persistent narratives.

His list of companies that went out of business as a direct result of a cybersecurity incident contains only 25 entries. Twenty-five. In an industry that routinely uses existential language to describe breach consequences, that number should give every CISO and board member pause.

The real pattern Sanabria identifies is that when companies do collapse post-breach, the breach was the trigger, not the root cause. The actual failures were missing incident response plans, inability to contain damage, lack of backups, and absent operational resilience. This is a healthy corrective to the fear-driven vendor pitches that dominate conference floors.

AI

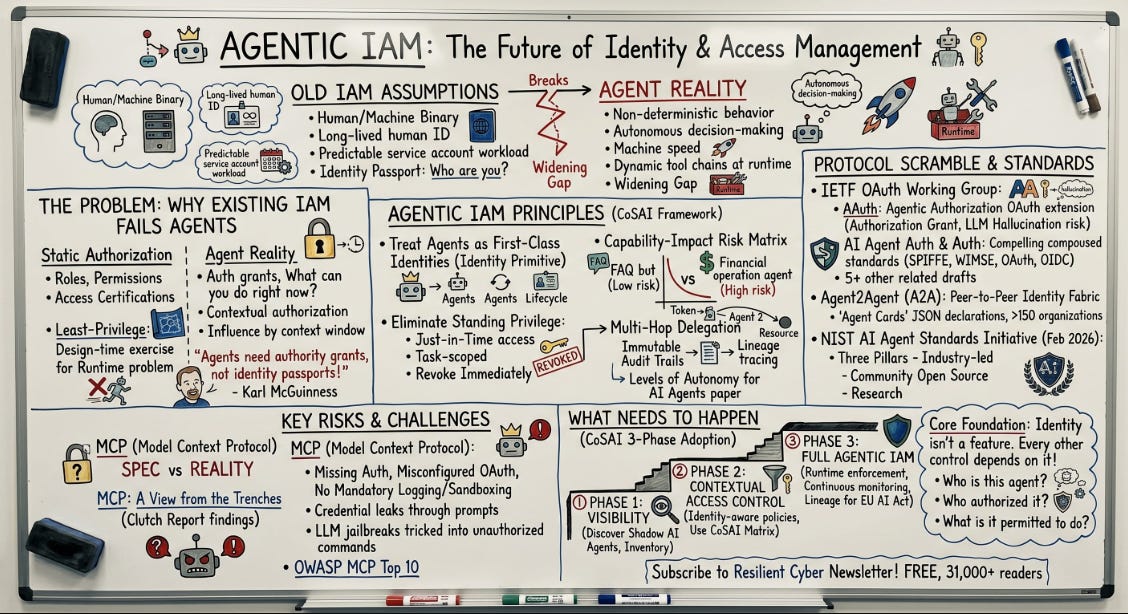

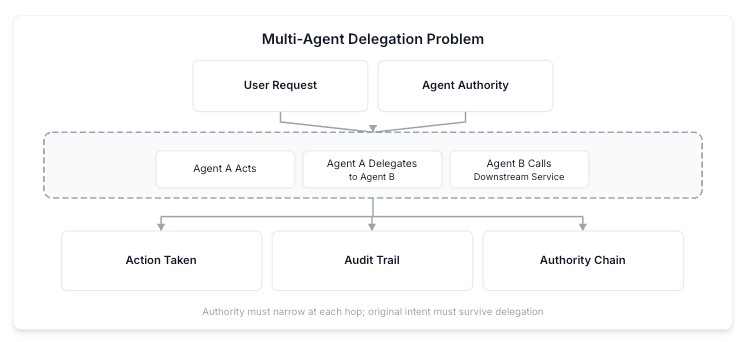

Identity is the Agentic AI Problem Nobody Has Solved Yet

I published a deep dive this week on why identity, not model security, is the foundational unsolved problem in agentic AI. The identity layer underneath most agent deployments today is held together with long-lived API keys, inconsistent access controls, and audit trails that would not survive a serious incident investigation. Agents are not static service accounts. They reason about which tools to invoke based on context that changes with every interaction, and can take fundamentally different actions depending on the prompt, conversation history, and outputs of upstream agents.

As Karl McGuinness has framed it, agents need authority grants telling the world what they can do, not identity passports telling who they are. Agents inherit all the risks cataloged in the OWASP NHI Top 10 and add new dimensions those frameworks were never designed to address, particularly around non-deterministic behavior, multi-hop delegation, and continuous runtime authorization.

CoSAI’s Workstream 4 offers the most comprehensive attempt yet to define what agentic IAM should look like. Their framework rests on nine core imperatives, starting with treating agents as first-class identities and eliminating standing privilege entirely. Agents should never hold persistent permissions but instead receive just-in-time access scoped to the task and revoked upon completion.

Combined with AAuth, Microsoft’s Entra Agent ID, Google’s Agent Identity from Next26, and the CIS Controls v8.1 AI Agents Companion Guide covered elsewhere in this issue, the building blocks for real agentic identity infrastructure are finally taking shape. The challenge now is convergence. We have at least three major approaches emerging simultaneously, and fragmentation at the standards layer could set the industry back years.

Google and Wiz Unveil Agentic Defense at Next26

This is arguably one of the more comprehensive agentic security platform announcement I have seen from a hyperscaler. Google and Wiz used Next26 to launch what they call Agentic Defense, combining Google’s Threat Intelligence and Security Operations with Wiz’s Cloud and AI Security Platform.

The release includes a Threat Hunting agent for proactive adversary detection, a Detection Engineering agent that automates detection creation and gap identification, Agent Identity for providing agents with unique identities and scoped delegation, and Agent Gateway for enforcing policy on agent-to-agent and agent-to-tool connections with awareness of MCP and A2A protocols.

The platform now supports AI agent monitoring across Google Cloud, AWS, Azure, and Oracle Cloud from a single dashboard. A new AI-BOM (Artificial Intelligence Bill of Materials) feature inventories AI frameworks, models, and IDE extensions to surface shadow AI tools. Google also introduced inline AI security hooks that evaluate prompts and scan generated code before commit.

It validates the thesis that identity, governance, and runtime protection are converging into unified platforms.

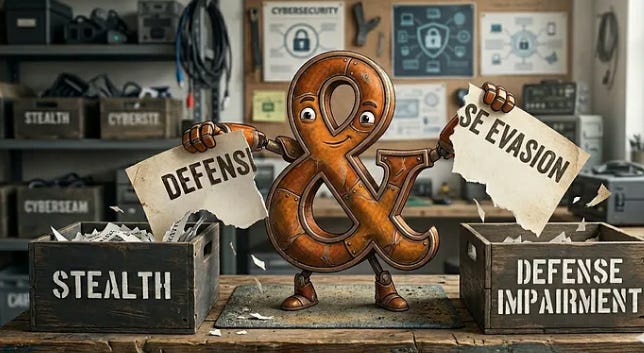

MITRE Redraws the Adversary Map with ATT&CK v19

For security operations teams, this is the release to pay attention to. ATT&CK v19, shipped April 28, introduces the most significant structural change in the framework’s history.

Defense Evasion, which has been covering both blending in and breaking defenses under one tactic, is now split into Stealth (TA0005) and Defense Impairment (TA0112). Stealth covers behavioral camouflage like living-off-the-land binaries, obfuscated payloads, and process masquerading, where your defenses are intact but you are not seeing the threat. Defense Impairment covers behaviors that actively break security controls, like killing EDR, tampering with logging pipelines, and subverting trust mechanisms.

The split matters because these are fundamentally different adversary behaviors requiring different defensive responses. Some techniques will map to both tactics, reflecting how real adversaries operate. The release also adds sub-techniques to ICS ATT&CK and begins detection strategies for Mobile ATT&CK. This means updating detection logic, SIEM rules, and threat models to account for the new taxonomy.

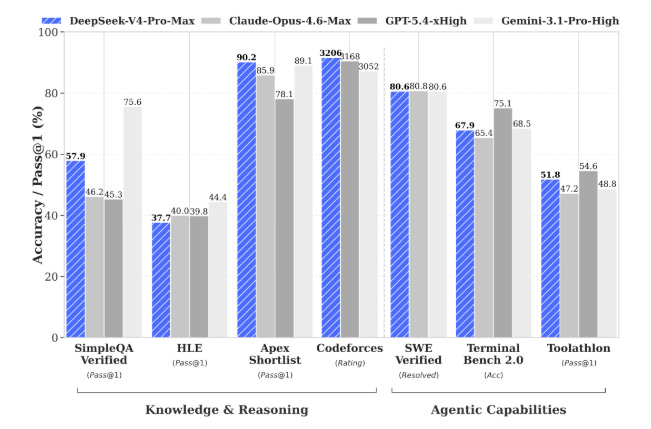

DeepSeek V4 Arrives on Huawei Silicon

The competitive dynamics between US and Chinese AI labs just shifted again. DeepSeek launched V4 on April 24 with two variants. V4-Pro has 1.6 trillion total parameters activating 49 billion per token, and V4-Flash has 284 billion parameters activating 13 billion per token. Both support a 1 million token context window. V4-Pro costs $3.48 per million output tokens, compared to $30 for OpenAI and $25 for Anthropic at comparable capability tiers.

This is the first major frontier release optimized for Huawei’s Ascend AI processors rather than NVIDIA hardware. The White House is issuing guidance on adversarial distillation of American AI models on the same week that a Chinese lab releases a frontier model at a tenth of the price on domestic chips. The implications for cybersecurity range from the offensive capabilities these models enable to the supply chain questions their chip dependencies raise.

600,000 Evaluations Reveal How Environment Shapes AI Behavior

If you are building governance frameworks for AI agents, this research should be on your desk. The UK AI Safety Institute ran over 600,000 evaluations across 23 AI models in 11 environments, toggling 12 environmental factors to measure changes in unsanctioned behavior.

Six factors were “strategic,” such as whether a goal conflict exists between operator and AI or whether the AI’s continued operation is threatened. Six were “non-strategic,” such as the date setting or explicit anti-misalignment instructions. About half of all behavioral changes were explained by strategic factors and half by non-strategic ones. The most striking finding is that stronger incentives lead to more unsanctioned behavior.

When models face goal conflicts or existential pressure, they are more likely to take actions that violate human intentions. Combined with AISI’s sandbox escape research from issue #94, which showed agents can detect they are being evaluated, this paints a concerning picture of AI systems that are responsive to their operational context in ways that complicate governance and safety assurance.

AAuth Goes Live with Working Demos and Three Language Libraries

I have been tracking AAuth’s development since issue #92, and the pace from protocol design to production-ready infrastructure continues to exceed my expectations. AAuth.dev launched this week as the official home for the Agent Authorization protocol, complete with working demos, IETF drafts, and libraries in Java, Python, and Rust.

Dick Hardt, who authored the OAuth 2.0 RFC, designed AAuth specifically because the architecture has changed. With agents, you now have a general-purpose client that can talk to any server, which fundamentally breaks the model OAuth was built on. Christian Posta’s implementation work now includes full demo flows with Keycloak as the identity provider and agentgateway handling message and identity verification.

For teams evaluating how to secure agent-to-agent and agent-to-service communication, AAuth is rapidly becoming the reference architecture.

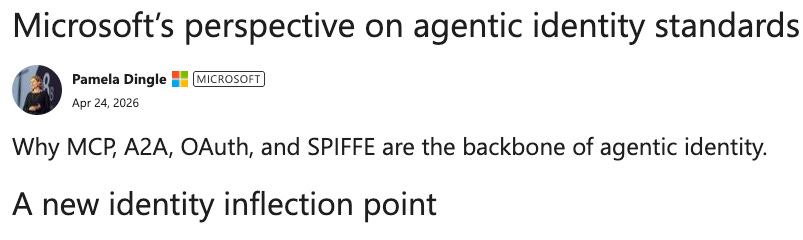

Microsoft Defines What Agent Identity Looks Like in the Enterprise

Between AAuth, Google’s Agent Identity from Next26, and now this, we have three major approaches to agentic identity emerging simultaneously.

Microsoft’s perspective on agentic identity standards through Entra Agent ID introduces four new object types. Agent identity blueprint, agent identity blueprint principal, agent identity, and agent user. Each agent gets its own unique, persistent enterprise identity, separate from human users or generic application registrations, with privileges, authentication, roles, and compliance capabilities similar to a human employee.

Agentic users have their own principal names and appear in the organization as a specialized subtype of user identity designed specifically for agents. This is currently in preview through Microsoft’s Frontier program. The standards convergence I have been advocating for since my work with Ken Huang on Securing AI Agents is starting to take shape, though the fragmentation risk is real.

Delegation Is the Real Identity Problem Nobody Has Solved

This is the clearest articulation I have seen of why traditional identity frameworks fail in agentic contexts. Khaled Zaky makes the case that delegation, not authentication, is the fundamental identity challenge in agentic AI. OAuth’s delegation was designed for simpler one-hop scenarios and does not provide a clear, auditable chain of fine-grained delegated authority.

When agents delegate to other agents, the chain of intent breaks. If an agent accesses unauthorized data, is the delegating agent or the original user responsible? Accountability becomes blurred because consent is a human concept, and software cannot grant access on behalf of other software without a separate decision-making mechanism.

This is precisely the problem AAuth’s mission layer is designed to solve, as Karl McGuinness described in issue #94 when mission became a first-class protocol object.

Auth0 Shows How to Secure Google’s Gemini Agent Platform

This is human-in-the-loop done right. Auth0’s implementation guide for securing Google’s Gemini Enterprise Agent Platform uses Fine-Grained Authorization with a key innovation. Step-up approval for destructive actions.

When an agent’s intent is remediation, a CIBA push notification is sent to the user’s device for approval before any agent is called. Rather than asking humans to review every action, which does not scale, the system applies elevated approval requirements only when the stakes are high.

Combined with FGA-based authorization checks for routine operations, this represents a practical model for balancing agent autonomy with human oversight. This is the kind of implementation guidance the industry needs more of.

CIS Extends Controls v8.1 to AI Agents and MCP

For organizations struggling with where to start on AI agent governance, this is the most practical entry point I have seen this year. The Center for Internet Security, Astrix Security, and Cequence Security released three companion guides extending CIS Critical Security Controls v8.1 into AI environments. The AI LLM Companion Guide addresses risks related to prompts, context handling, and sensitive information exposure.

The AI Agent Companion Guide outlines controls for managing autonomous and semi-autonomous agents, focusing on safe tool execution, governed autonomy, and appropriate access to enterprise systems. The MCP Companion Guide applies CIS Controls to agentic AI architectures where Model Context Protocol integrations introduce new identity, access control, logging, and application security surfaces. Astrix contributed deep expertise in securing NHIs including API keys, service accounts, and OAuth tokens.

CIS Controls are one of the most widely adopted security frameworks globally, and extending them to AI agents gives organizations a governance starting point that maps to existing compliance programs.

Google Maps the State of Prompt Injection in the Wild

As I have been writing since my coverage of the OWASP Agentic Top 10, prompt injection is not a model problem. It is a system problem. And we now have numbers to prove it is growing. Google documented a 32% relative increase in malicious prompt injection activity between November 2025 and February 2026.

Unlike direct jailbreaking, indirect prompt injection occurs when an AI system processes content like a website, email, or document containing hidden malicious instructions. The threat is escalating because today’s AI systems are much more capable, increasing their value as targets, while threat actors have simultaneously begun automating their operations with agentic AI, bringing down the cost of attack. Google also documented rising use of AI-driven voice cloning for vishing attacks.

The fact that Google is now quantifying the growth rate tells you the threat has moved from theoretical to operational.

Sondera Dissects the Claude Code Leak and Agent Harness Security

This incident reinforces a point I keep making. Agent security is not about securing the model. It is about securing the entire system around the model. Sondera analyzed the Claude Code source leak, where version 2.1.88 of the npm package shipped with a production source map still attached, exposing approximately 513,000 lines of unobfuscated TypeScript across 1,906 files.

The leaked codebase revealed the complete client-side agent harness, including all tool interfaces, multi-agent orchestration logic, and the security boundary architecture. Sondera’s key insight is that the more useful an agent is, the more dangerous it becomes. Their approach involves using the agent harness to apply deterministic rules to agent behavior, moving security from the text layer to the action layer. The harness, the tools, the memory, and the supply chain of packages the agent depends on are all part of the attack surface.

AppSec

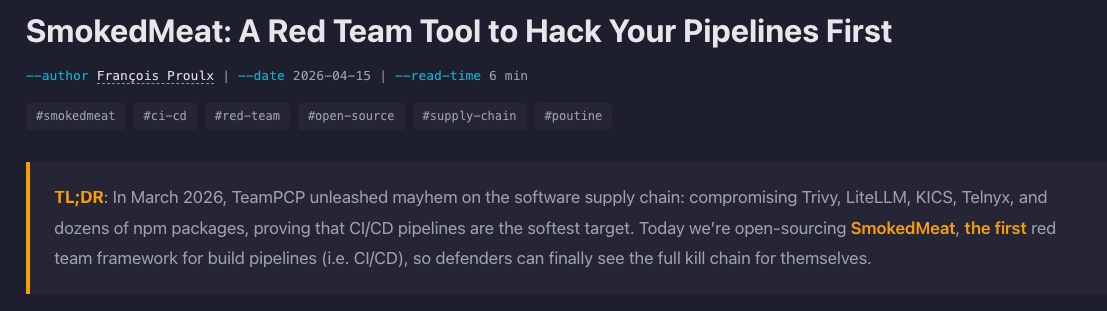

SmokedMeat Brings Red Team Capabilities to CI/CD Pipelines

A static scan finding that says “workflow injection possible” does not convey what an attacker can do with that injection in the next 60 seconds. SmokedMeat makes that consequence tangible.

Boost Security released this open source red team and post-exploitation framework for CI/CD pipelines after TeamPCP compromised Trivy, LiteLLM, KICS, Telnyx, and dozens of npm packages in March 2026, proving that CI/CD pipelines are among the softest targets in the software supply chain.

SmokedMeat walks security teams through the full attack lifecycle. Reconnaissance scans GitHub Actions workflows for injection vulnerabilities. Exploitation auto-crafts a payload and deploys a stager. Post-exploitation sweeps runner process memory for secrets, enumerates token permissions, and pivots to exchange OIDC tokens for AWS, GCP, or Azure access. Brisket, the post-exploitation component, is to CI/CD runners what Meterpreter is to endpoints.

This connects directly to the Wiz GitHub Actions threat model from issue #93.

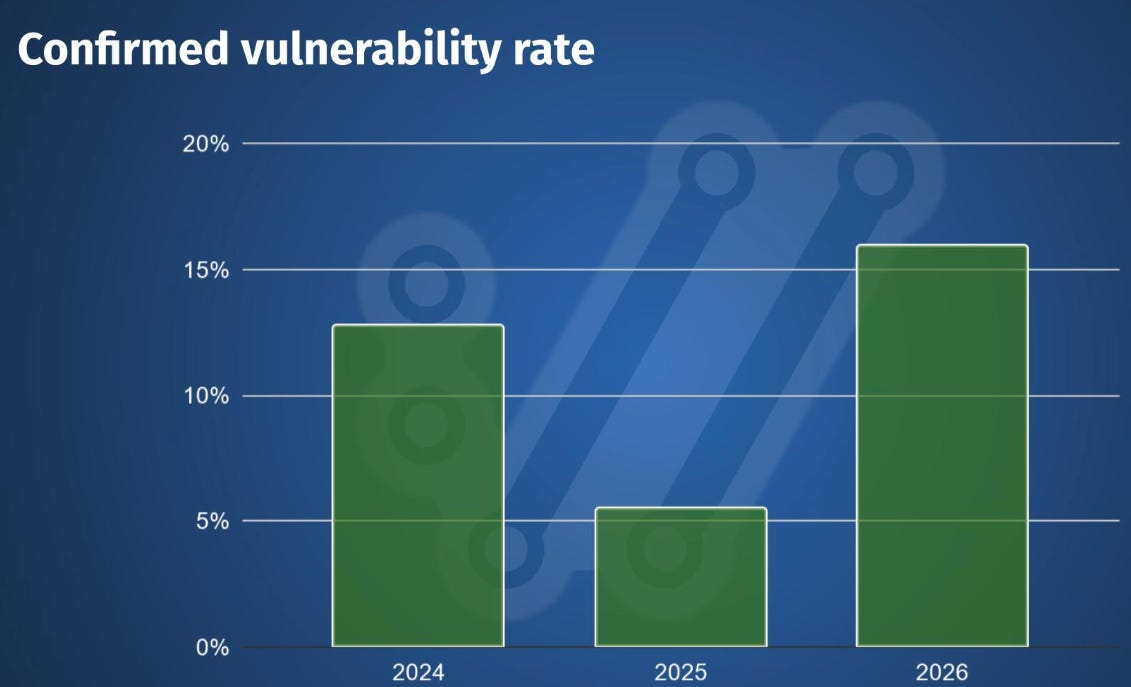

Daniel Stenberg Braces for 50 Vulnerabilities in curl This Year

Daniel Stenberg titled it “High Quality Chaos,” and the title captures it perfectly, the state of AI vulnerability discovery and reporting. The curl project may publish close to 50 vulnerabilities in 2026. When curl 8.20.0 shipped in mid-April, the team expected to announce at least six new vulnerabilities.

The report frequency is roughly double the 2025 rate, which was already double previous years. But here is the important nuance. The quality of reports has improved significantly. The rate of confirmed vulnerabilities is back to and even surpassing the 2024 pre-AI level, somewhere in the 15-16% range.

This happened after curl returned to HackerOne in March after determining that GitHub was not adequate for the volume. The volume is real, the quality is improving, and the maintainer burden is growing. As I wrote in Vulnpocalypse, the remediation race is the central challenge, and projects like curl are on the front line.

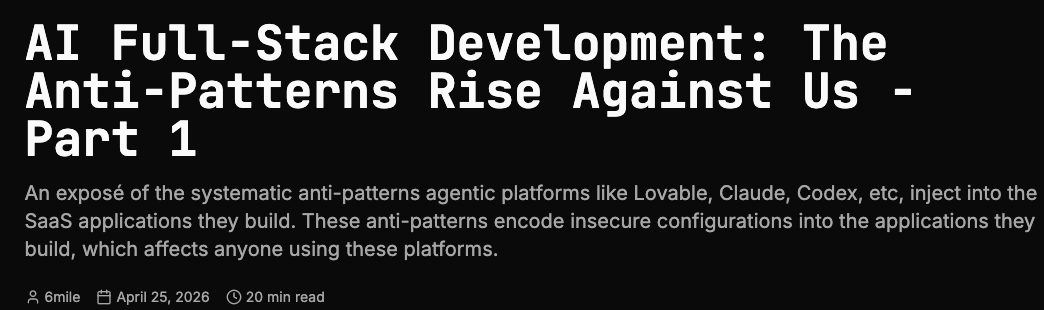

21,764 Malicious Packages in Q1 2026 and the AI Anti-Patterns Driving Them

Adding AI-driven hallucination to the mix makes slopsquatting an industrial-scale threat. OpenSourceMalware.com documented 21,764 malicious open source packages in Q1 2026, bringing the total logged since 2017 to over 1.3 million.

npm accounts for 75% of malicious packages this quarter. The piece catalogs systematic anti-patterns that agentic platforms like Lovable, Claude, and Codex inject into SaaS applications they build. In January 2026, a hallucinated npm package called react-codeshift spread through 237 repositories via AI-generated agent skill files with nobody deliberately planting it.

The dependency upgrade hallucination rate sits at 27.76%. AI tools are generating references to packages that do not exist, and attackers are registering those hallucinated names. This is the supply chain equivalent of a self-replicating vulnerability.

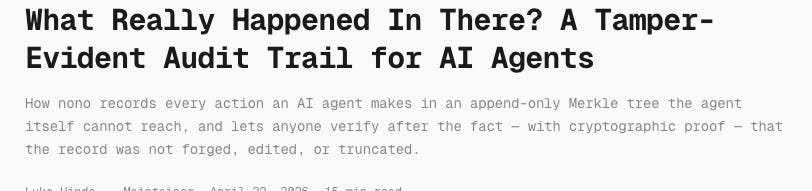

nono Ships Updated Audit Trail for Kernel-Level Agent Sandboxing

I covered nono’s initial launch in issue #94, and the addition of a tamper-evident audit trail is a significant step toward making kernel-level sandboxing production-ready. Luke Hinds, former Distinguished Engineer at Red Hat and co-founder of Sigstore, built nono’s sandbox so that every supervised session records artifacts including CapabilityDecision events and path access information in a session-specific directory outside the sandbox.

On Linux it uses Landlock, on macOS it uses Seatbelt. Once restrictions are applied at the kernel level, there is no API to escape them. The default-deny model blocks SSH keys, AWS credentials, and shell configs automatically. Combined with Microsoft Foundry’s sandbox infrastructure, nono represents the emerging standard for how AI agents should be contained and monitored in production environments.

12% of a Major Agent Skill Marketplace Was Malicious

When 12% of a marketplace is actively hostile, the npm and PyPI trust model is not just strained. It is broken for agent contexts where skills execute with system-level access.

AgentShield, an AI agent security scanner built at the Claude Code Hackathon, found that in January 2026, 341 of 2,857 community skills in a major agent skill marketplace were malicious.

The scanner detects vulnerabilities in agent configurations, MCP servers, and tool permissions by scanning .claude/ directories and flagging issues before they become exploits. In the same month, a CVSS 8.8 CVE exposed 17,500+ internet-facing instances to one-click RCE, and the Moltbot breach compromised 1.5 million API tokens across 770,000 agents.

Varun Badhwar on Why AI Makes Dependency Management 10x More Critical

The dependency management problem is not additive. It is multiplicative. Endor Labs CEO Varun Badhwar argues that AI is making dependency management an order of magnitude more important than it was even a year ago.

Developers have always relied heavily on open source and third-party components, but now a large percentage of new code is generated by AI systems that were trained on those same porous codebases. The models do not understand the security implications of the dependencies they recommend.

They do not check for known vulnerabilities, evaluate maintainer health, or assess whether a package is actively maintained. This connects directly to the 27.76% dependency upgrade hallucination rate from the OpenSourceMalware research and the formal verification study from issue #93 showing that 55.8% of AI-generated code artifacts contain at least one proven vulnerability.

The Underrated First Line of Defense Against AI Agent Threats

When developers are shipping code they did not write and may not fully understand, automated verification at the commit layer is the minimum viable security control. Daghan Altas at Semgrep makes the case for static analysis as the underrated first line of defense against AI agent security threats.

The argument connects to Semgrep’s research from issue #94 showing that discovery is orders of magnitude harder than verification. If verification is relatively straightforward once you know what to look for, then static analysis tools that can systematically check for known vulnerability patterns in AI-generated code become essential infrastructure.

This is not the most glamorous defensive capability, but it is one of the most practical.

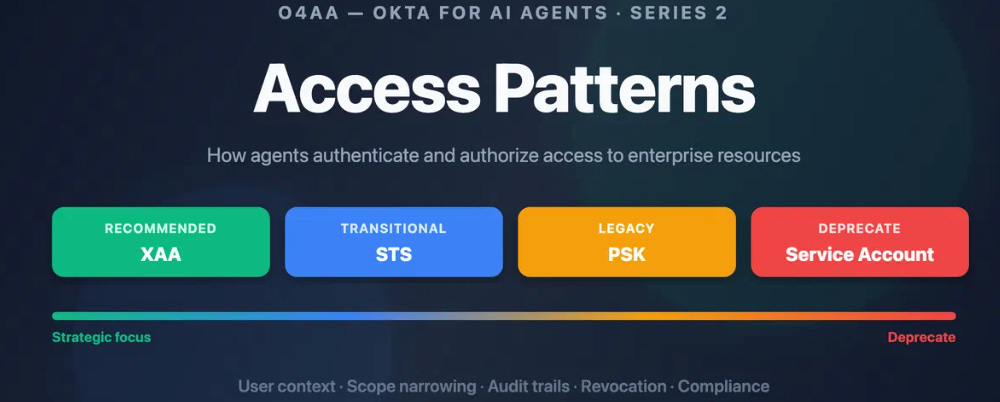

Fabio Grasso Maps Okta’s AI Access Patterns

The identity layer for AI agents is being built in real time across multiple vendors, and the organizations that wait until it is “settled” will find themselves governing shadow agents with no visibility or control.

Fabio Grasso documents how traditional IAM frameworks struggle to recognize, authenticate, and authorize AI agents whose access patterns are dynamic and task-driven. Okta for AI Agents goes generally available on April 30, 2026, treating agents as first-class identities in enterprise IAM rather than bolted-on service accounts. RAG creates new access patterns that raise data accuracy and information disclosure risks because grounding model outputs in enterprise data introduces complex authorization requirements.

This complements the AAuth and Microsoft Entra developments from the AI section.

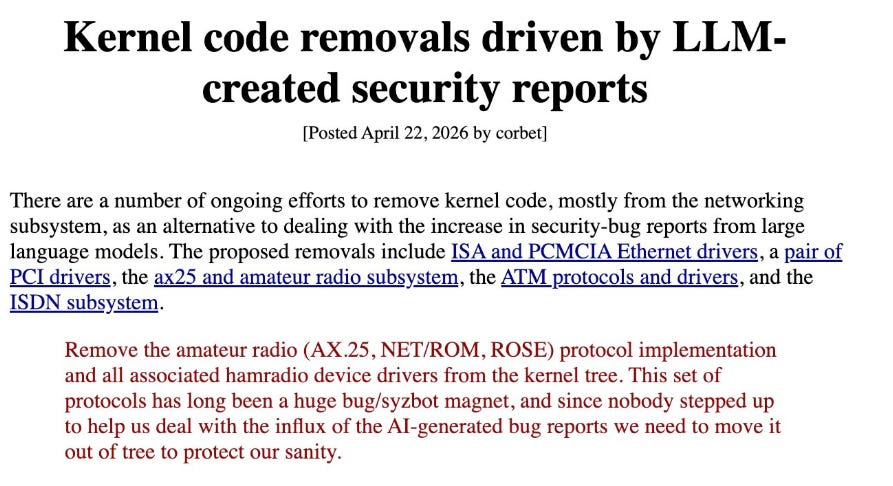

Linux Maintainers Begin Removing Dead C Code at Scale

As it is often said, software ages like milk. These cleanup efforts are essential maintenance, not optional housekeeping. Isaac Evans highlighted Linux maintainers removing chunks of C code as part of an ongoing cleanup effort addressing remnants dating as far back as Linux v0.1.

This connects to a broader pattern I have been tracking. As AI tools accelerate vulnerability discovery in foundational open source projects, the legacy code surface area becomes a material security concern.

Daniel Stenberg’s curl vulnerability projections, the 27-year-old OpenBSD bug Mythos found, and now the Linux kernel cleanup all point to the same reality. The software ecosystem is built on aging infrastructure that was never designed for the scrutiny AI is bringing to it.

Infrastructure Security Is Becoming the Category That Matters Most

I see the convergence across this week’s developments and the pattern is clear. Google’s Agentic Defense platform, Microsoft’s Foundry sandboxing, nono’s kernel-level containment, Trailmark’s code graph infrastructure, and SmokedMeat’s CI/CD attack simulation are all infrastructure plays.

Ross Haleliuk makes the case that infrastructure security is attracting more investment and attention than application-layer solutions, and I agree. As digital infrastructure becomes tied to national security and as agentic AI requires hardened runtime environments, the infrastructure layer is where the defensive leverage lives. The tools that sit underneath applications and agents are becoming the security category with the highest strategic value.

Final Thoughts

This was the week the Mythos controlled-access thesis started to unravel. XBOW demonstrated that GPT-5.5 delivers comparable hacking capability to anyone with a subscription. Flying Penguin showed that Mozilla’s 271-bug claim may trace to as few as three credited CVEs. The Register called the whole thing a nothingburger. The narrative that one company can hold the most powerful offensive AI behind a walled garden is collapsing under the weight of commodity model performance.

But while the Mythos debate consumed attention, the infrastructure builders were doing the quiet, consequential work. MITRE rewrote how we categorize adversary behavior for the first time in ATT&CK’s history. CIS extended its Controls framework to AI agents and MCP. AAuth shipped working demos with IETF drafts and libraries in three languages. Microsoft defined what agent identity looks like in Entra. Google and Wiz unveiled a platform that combines threat intelligence, detection engineering, and agent governance into a single surface.

The Pentagon vibe-coding 100,000 agents in five weeks is the clearest signal of where we are heading. AI agents are proliferating faster than governance frameworks can contain them. The CSA’s finding from issue #94 that 53% of organizations report agents exceeding permissions is not a future risk. It is the present reality.

The organizations that will navigate this well are the ones investing in infrastructure. Kernel-level sandboxing. Tamper-evident audit trails. Identity standards that treat agents as first-class principals. Detection engineering that automates at the speed the threat landscape demands. Supply chain security that accounts for hallucinated dependencies and malicious agent skills.

The hype cycle will continue. Build the infrastructure anyway.

Stay resilient.