Resilient Cyber Newsletter #92

Anthropic's Project Glasswing, AI State of the Union, Cyber Stocks in 2026, Role of the Field CISO, A Vulnpocalypse is Coming & AI Code Security Governance

Welcome to issue #92 of the Resilient Cyber Newsletter!

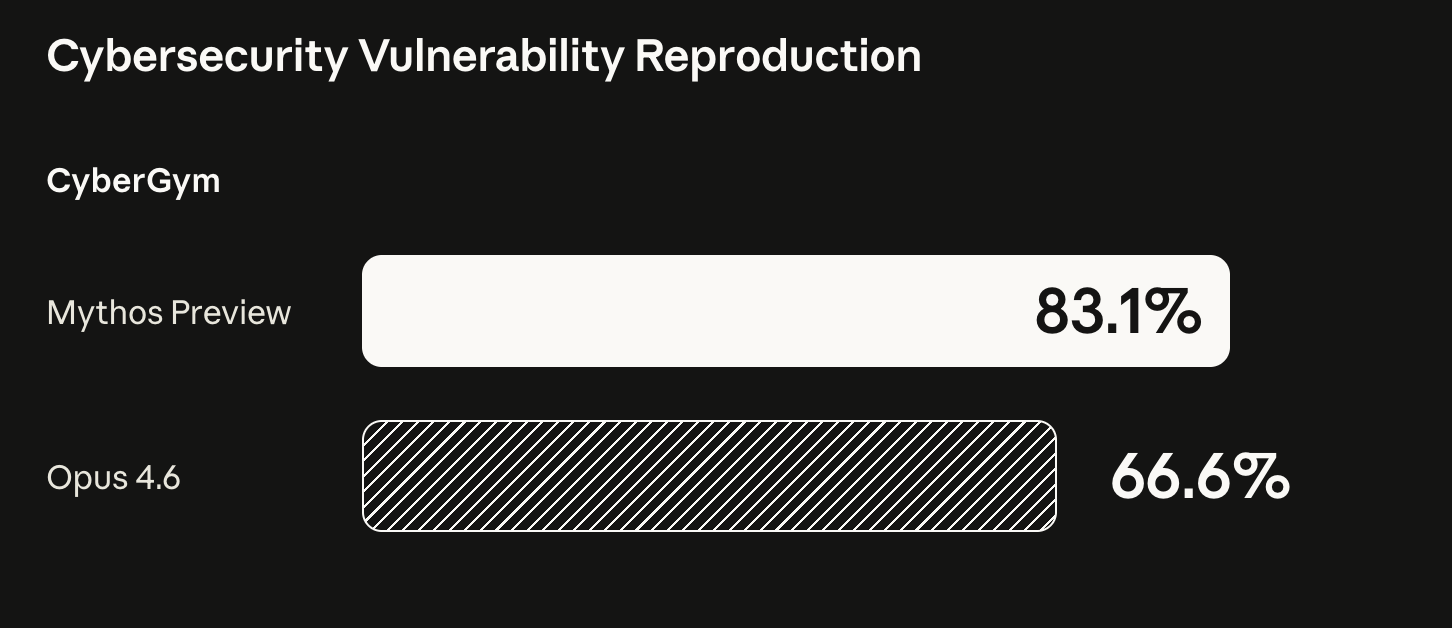

This was a landmark week for AI security. Anthropic announced Project Glasswing, a $100 million industry coalition with Amazon, Apple, Broadcom, Cisco, CrowdStrike, the Linux Foundation, Microsoft, and Palo Alto Networks to apply a new frontier model called Claude Mythos Preview to finding and fixing critical software vulnerabilities at scale. Mythos has already discovered tens of thousands of vulnerabilities, including bugs in every major operating system and browser, some of them decades old.

At the same time, the industry continued to process the fallout from the Claude Code source code leak, Cisco disclosed a persistent memory compromise in Claude Code, Straiker demonstrated a full sandbox escape from Cursor through prompt injection, SentinelOne published more details on autonomously stopping Claude Code from executing the LiteLLM supply chain attack from issue #90, and Dick Hardt, Karl McGuinness, and Christian Posta pushed forward a real proposal for agentic identity with AAuth.

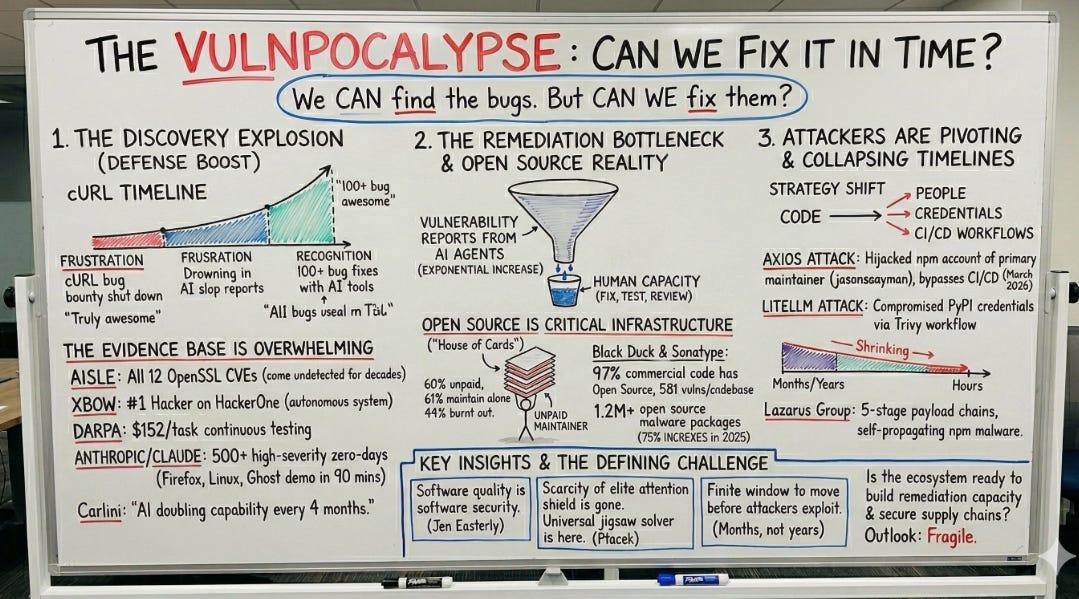

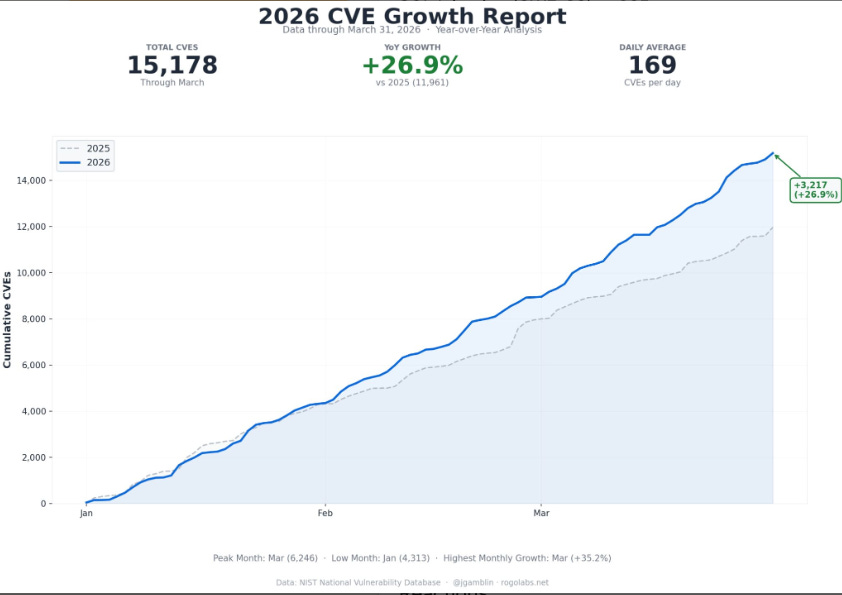

I also published a deep dive this week called Vulnpocalypse, looking at how AI is reshaping vulnerability research and why we are losing the race to remediate. There is a lot to get into, so let’s go.

Interested in sponsoring an issue of Resilient Cyber?

This includes reaching over 31,000 subscribers, ranging from developers, engineers, and architects to CISOs, security leaders, and business executives.

Reach out below!

Cyber Leadership & Market Dynamics

Nikesh Arora on the Virtues of Being an Outsider

Sequoia Capital published a compelling conversation with Palo Alto Networks CEO Nikesh Arora on the virtues of being an outsider. Arora famously said that when he took over Palo Alto Networks, he thought “cyber” and “security” were two different words.

That kind of intellectual honesty is refreshing from a CEO running one of the most valuable cybersecurity companies on the planet. The core insight is that outside-in thinking brings fresh perspectives to industries that get too caught up in their own conventions. Arora’s willingness to question every assumption is part of why Palo Alto Networks has been so aggressive on platformization, even as Mark Kraynak’s RAIGNark piece in issue #91 argued the platformization era is ending. Whether you agree with Arora’s strategy or not, this conversation is worth your time.

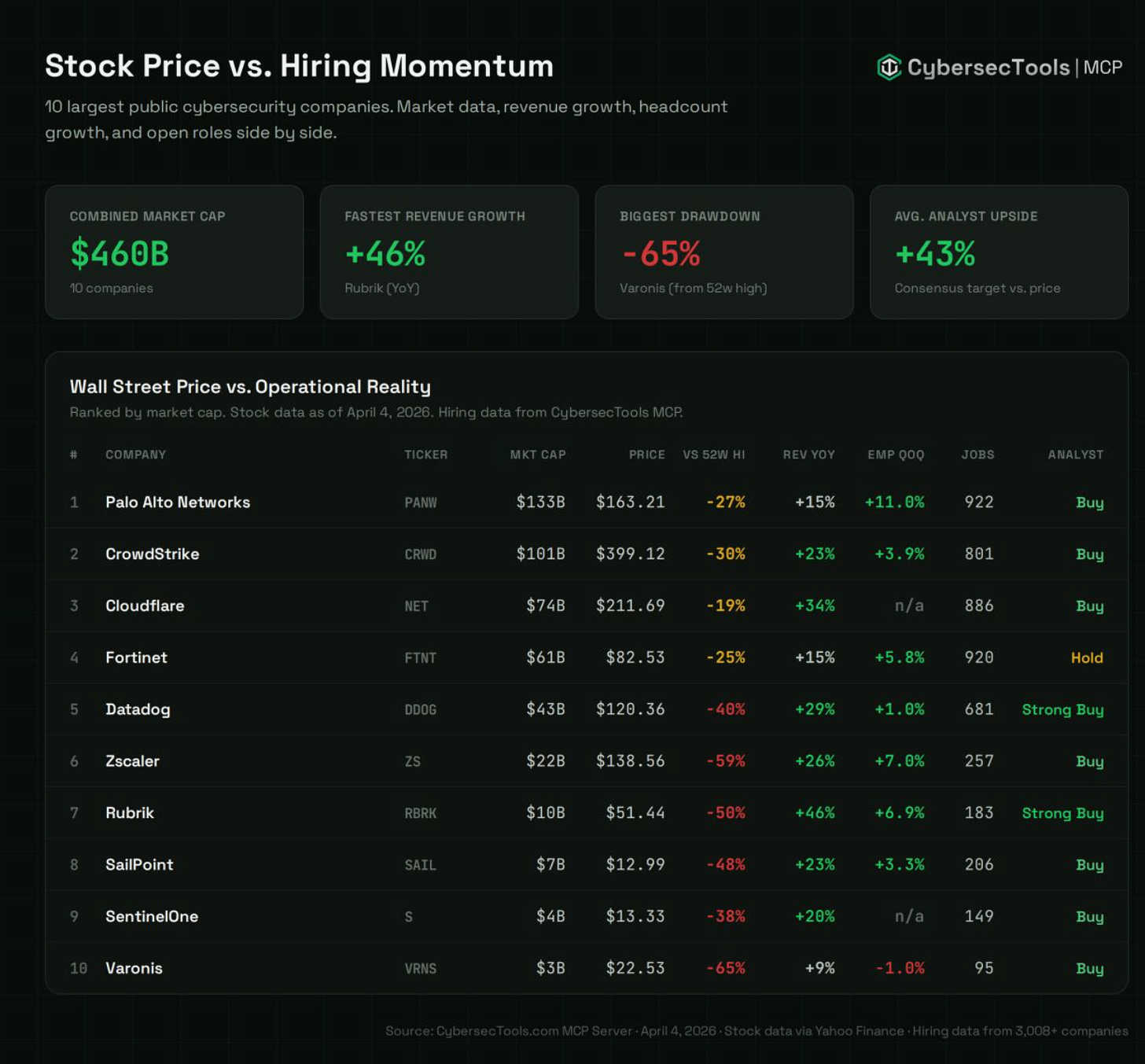

Cybersecurity Stocks Are Getting Hammered

The cybersecurity sector is having a rough start to the year. The Global X Cybersecurity ETF dropped 4.5% in a single trading day, with year-to-date declines exceeding 21% as of early April. CrowdStrike is down 13% YTD and Palo Alto Networks is down 12% YTD.

The market narrative is that AI is disrupting traditional cybersecurity product utility, with Claude Code and AI coding assistants raising questions about the durability of legacy vendor moats.

I lean cautiously optimistic here. The same AI disruption that is pressuring traditional vendors is also creating entirely new categories of security work, from agentic identity to runtime agent monitoring. The vendors who adapt will thrive, and the ones who do not will be routed around. This is the same dynamic I wrote about in issue #91 with Malcolm Harkins’ RSAC observations about vendors repackaging existing capabilities with AI branding.

Phil Venables on the Real Role of the Field CISO

Phil Venables published an important clarification on what Field CISO roles actually are, and more importantly, what they should be. His core argument is that Field CISO teams should primarily comprise former CISOs and senior security leaders who have lived the operational reality of customer pain points.

Too many vendors put “Field CISO” titles on people who have never sat in the chair, and the credibility gap shows immediately. As someone who has spent time on both the practitioner and vendor side, this resonates strongly. Field CISO work is not about selling. It is about translating vendor capabilities into customer outcomes with enough operational empathy to actually be useful.

Anton Chuvakin’s RSA 2026 Reflections

Anton Chuvakin published his post-RSA 2026 analysis and the title captures it perfectly. We have an agentic future running on analog fundamentals, and the old guard still survives because most organizations cannot do the basics. Anton’s observation that vendors “spray paint AI” onto 2021 marketing materials tracks directly with Malcolm Harkins’ reflections from last week.

The more interesting insight is that buyer expertise has never mattered more. Organizations that cannot distinguish genuine innovation from rebranding exercises will get taken for a ride, and the fundamentals still determine whether any of this new technology actually delivers value.

An AI State of the Union

Lenny Rachitsky interviewed Simon Willison for an AI state of the union, and the central insight is that November 2025 was the inflection point. Before GPT 5.1 and Claude Opus 4.5, AI-generated code “mostly worked but required close attention.” After, it “almost always does what you told it to do.”

That capability shift changes the economics of software development fundamentally. Projects that required hundreds of engineers can now be done by tens, and work that took months now takes days. Simon’s analysis also surfaces the inverse problem, which is that humans cannot review code at the speed agents produce it. This feeds directly into the NYT article below and the broader vulnerability remediation challenge I wrote about in Vulnpocalypse.

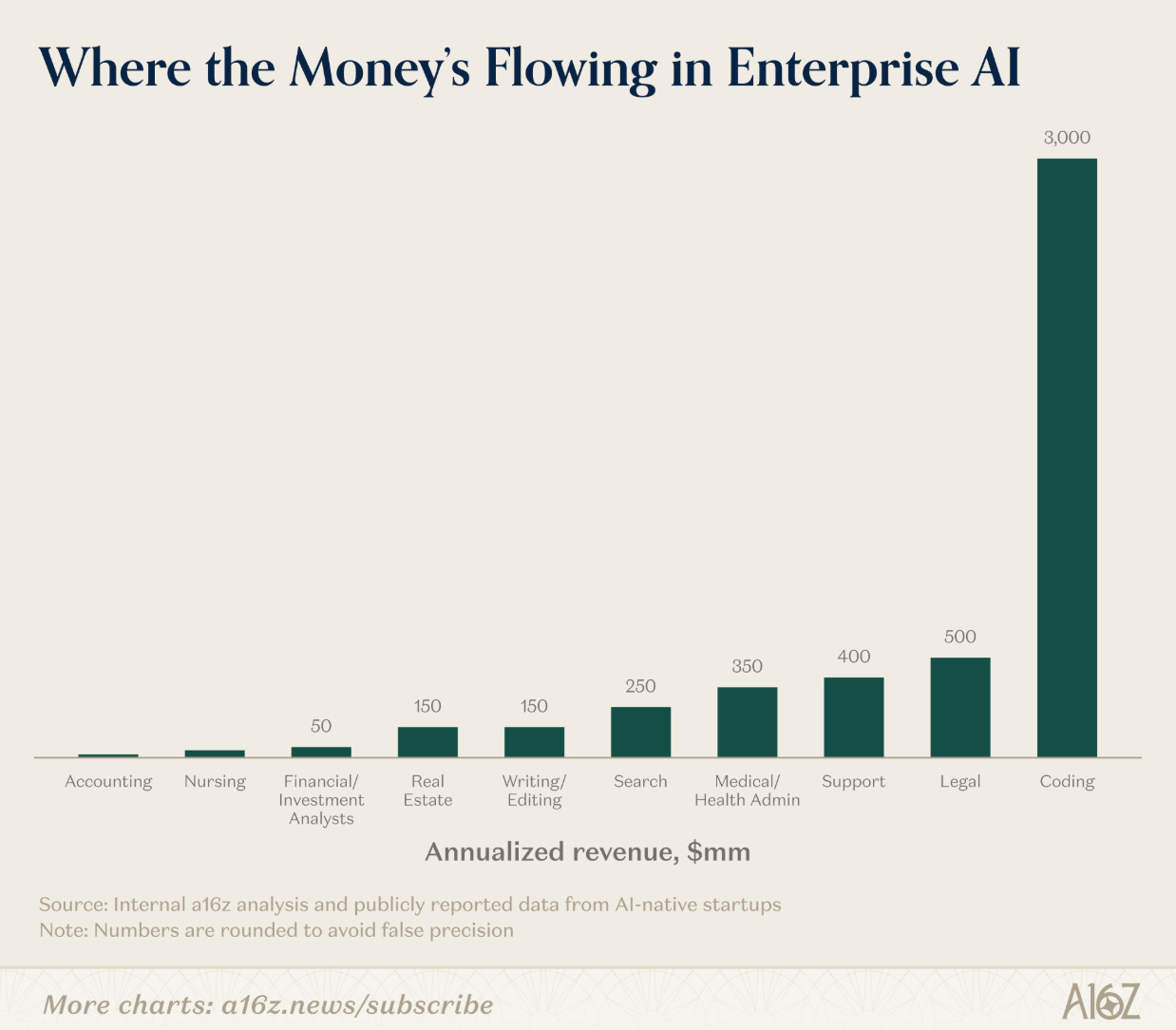

a16z AI Adoption by the Numbers

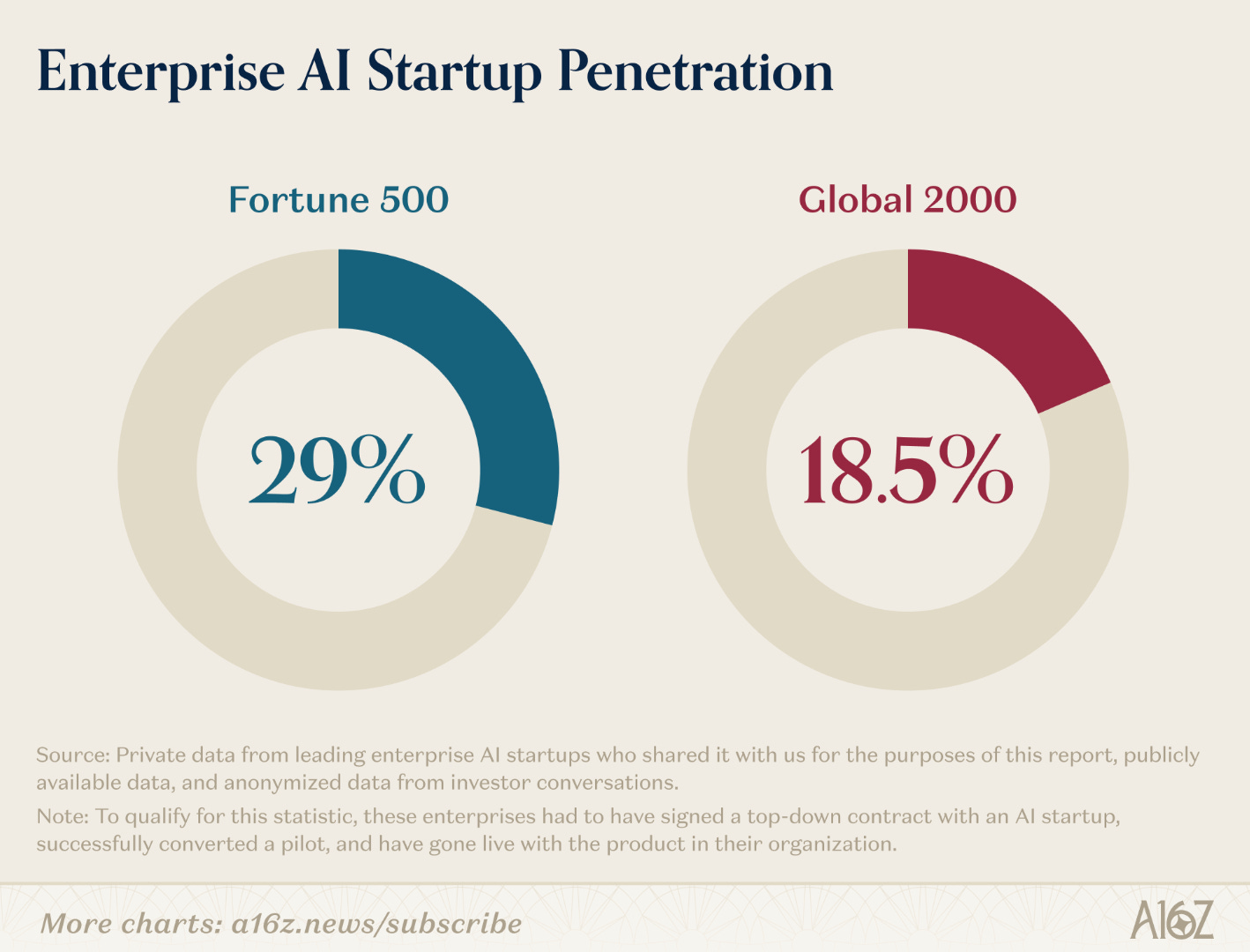

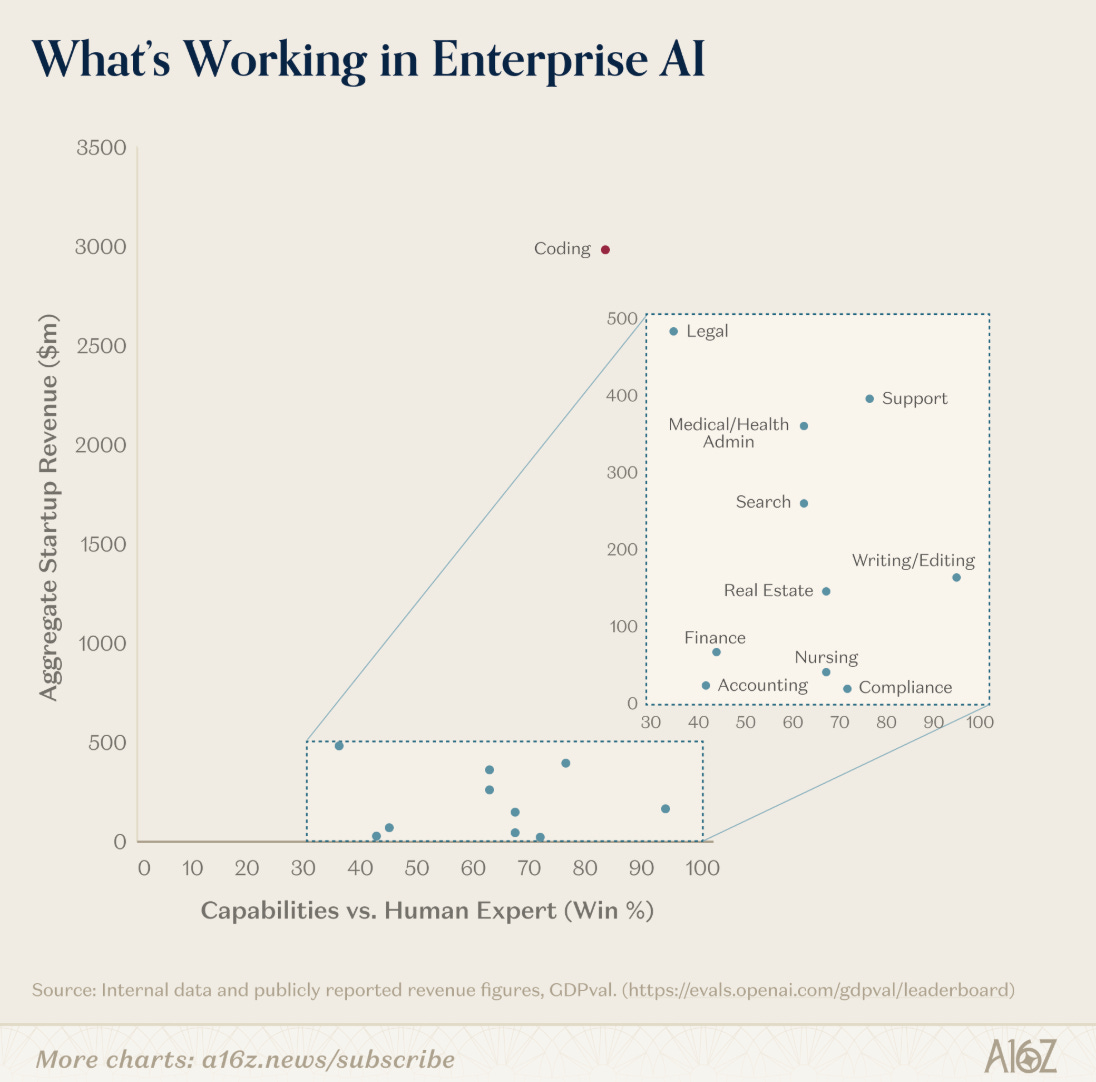

Andreessen Horowitz published comprehensive adoption data that reinforces how far AI has moved from early adopter to mainstream infrastructure. OpenAI holds 85% adoption in mid-market and enterprise, while Anthropic has climbed to roughly 55%. ChatGPT now has 900 million weekly active users, and 81% of enterprises use three or more model families in testing or production.

The generational spending data is particularly striking, with Gen Z AI spending up 55% in under a year. Singapore, the UAE, Hong Kong, and South Korea lead per capita adoption, while the US sits at 20th. If you are still building your AI strategy around the assumption that this is an emerging technology, the data says otherwise.

Steve Yegge on the AI Vampire

Steve Yegge published one of the more unsettling pieces on AI-assisted work. His argument is that AI makes workers 10x more productive, but companies capture 100% of the productivity gains while workers bear the cognitive and physical toll.

Yegge reports experiencing sudden “nap attacks” and massive fatigue after long sessions of AI-assisted coding, and he advocates for 3-4 hour workdays as the sustainable pace. This connects to a theme I have been tracking about the difference between what AI enables and what organizations demand. The scaffolding work is going away, but the thinking work is denser and more exhausting. Security practitioners, who already deal with burnout as a persistent problem, need to pay attention to this signal.

The Decade-Long Feud Shaping the Future of AI

The Wall Street Journal profiled the personal rivalry between Sam Altman and Dario Amodei, and why it matters for AI policy. Amodei has escalated public attacks on Altman, including comparing a legal dispute to a fight between Hitler and Stalin and calling a pro-Trump PAC donation “evil.”

The feud has material consequences, with OpenAI pursuing classified DoD work while Anthropic sued the Trump administration over the Pentagon ban I covered extensively in issues #88 and #91. AI governance at the frontier is increasingly shaped by personal history between a handful of lab leaders, which is not a stable foundation for national security policy.

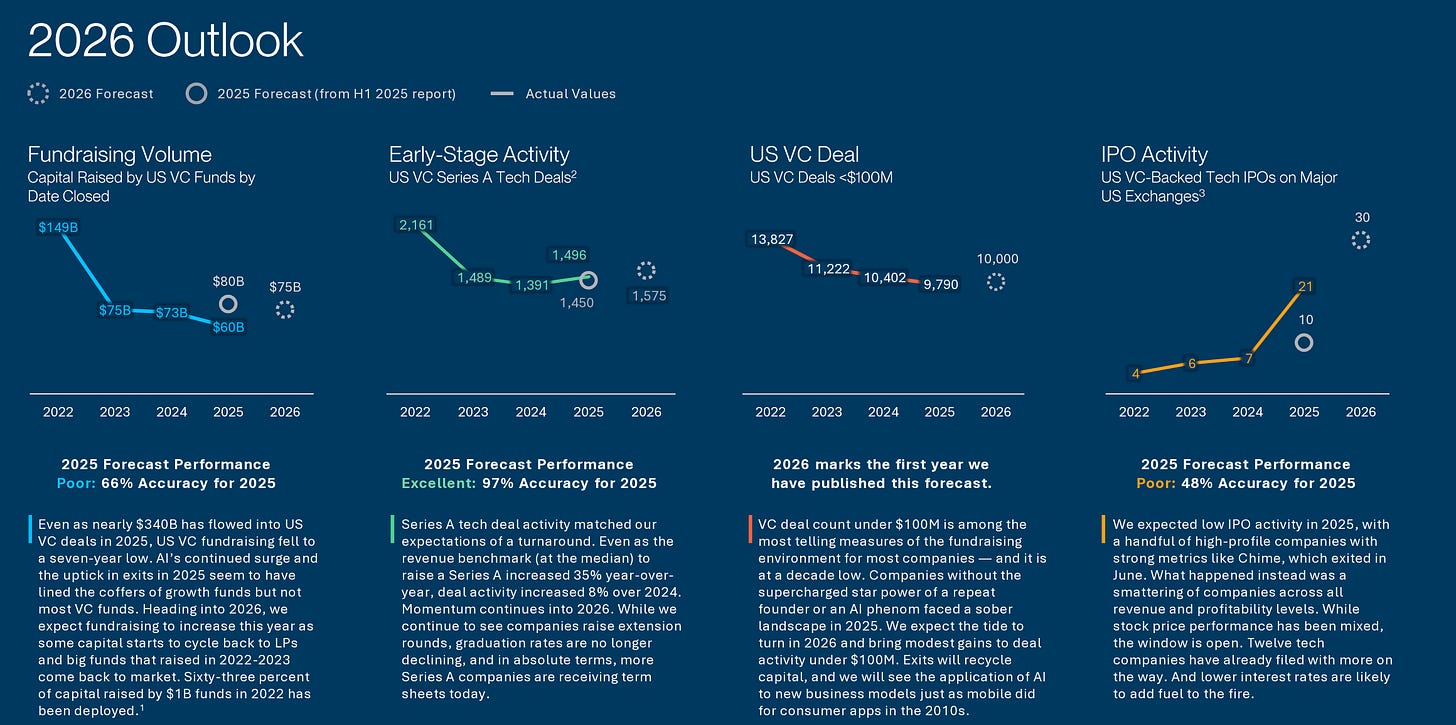

SVB State of the Markets H1 2026

Silicon Valley Bank released its H1 2026 State of the Markets report, and the data reveals an extreme bifurcation. In 2025, $340 billion flowed into US VC-backed companies, the second-highest year ever.

The top 1% of companies captured a third of all capital while the bottom 50% received just 7%. Deal count fell 15% year-over-year while dollars invested jumped 53%. Post-raise burn rates increased 50% and revenue growth increased 75%. SVB calls this the “surgical” phase, defined by fewer deals, bigger checks, and conviction concentrated at the top. For cybersecurity founders, the message is clear. You either have an AI-native story that resonates or you are fighting for scraps.

Forecasting the Economic Effects of AI

A large-scale forecasting study from the Forecasting Research Institute, Federal Reserve Bank of Chicago, Yale, Stanford, and Penn surveyed 69 leading economists, 52 AI experts, 38 superforecasters, and 401 general public members.

Their consensus forecast puts a 14% probability on AI progress matching the “rapid scenario” by 2030, which would include AI outperforming humans at many tasks and robots performing most in-home and industrial work. Under that scenario, the labor force participation rate drops by 7 percentage points by 2050. Even the slow scenario shows meaningful disruption. Security workforce planning should account for multiple scenarios, because the traditional career paths in our field are going to shift dramatically.

The Pragmatic Engineer on Industry Leaders Returning to Code

Gergely Orosz observed that C-level executives like Mark Zuckerberg and Garry Tan are returning to hands-on coding, enabled by AI development tools. This is a meaningful cultural shift.

Technical founders who stepped away from the keyboard years ago are coming back, because AI has compressed the time required to build things. I suspect we will see the same dynamic play out in security leadership, with CISOs and Field CISOs getting more hands-on with tooling through natural language interfaces to complex security operations.

Gov Navigators on AI and Federal Policy

The latest Gov Navigators episode covers federal AI policy developments at a pivotal moment. With the Anthropic injunction, California’s executive order, and the broader regulatory collision I have been tracking since issues #88 and #91, federal-level AI governance is increasingly fragmented. For cybersecurity practitioners working with government agencies, the policy uncertainty is itself becoming a risk factor.

This episode breaks down the evolution FedRAMP is undergoing under the leadership of Pete Waterman, and it has implications for SaaS and software companies looking to work with the U.S. government.

20VC Podcast on Venture and AI

Harry Stebbings’ latest episode continues his excellent coverage of how AI is reshaping venture capital, startup formation, and investment thesis construction. Recent episodes have covered SpaceX’s acquisition of xAI and 2026 SaaS market dynamics, which connect directly to the broader SaaSpocalypse narrative I have been tracking since issue #85.

This episode features Demis Hassabis of Google DeepMind. It was interesting to hear where he sees AI heading, along with the discussion of capital and markets.

AI

Anthropic Announces Project Glasswing

This is the biggest AI security announcement of the year so far. Anthropic launched Project Glasswing, a $100 million initiative backed by a coalition including Amazon, Apple, Broadcom, Cisco, CrowdStrike, the Linux Foundation, Microsoft, and Palo Alto Networks. The goal is to apply a new frontier model called Claude Mythos Preview to finding and fixing critical software vulnerabilities at scale. More than 40 additional organizations have been granted access to scan first-party and open-source systems. Anthropic is committing $100 million in model usage credits to fund the research.

What makes this significant is not the money, but the capability. As Anthropic’s red team detailed, Mythos has found tens of thousands of vulnerabilities in real open-source codebases, including critical bugs in every major operating system and web browser. Some of these vulnerabilities are believed to be decades old. The model not only finds issues but writes working proof-of-concept exploits and reverse-engineers closed-source software. Anthropic has withheld broader public access specifically because of the offensive potential. This is one of the first major instances of capability-based access controls being applied to a frontier AI model.

I have a lot of thoughts on this, and I discuss it at length in my Vulnpocalypse piece. The short version is that Glasswing validates everything I have been writing about the vulnerability research race. AI has crossed a capability threshold where defenders now have a real advantage, but only if they can coordinate faster than adversaries. Coalitions like Glasswing are exactly what needs to happen if we want to win the remediation race. This is also the kind of defensive mobilization that Project Zero and OpenSSF have been calling for, and it is encouraging to see an AI lab put serious money and capability behind it.

Vulnerability Research Is Cooked

Thomas Ptacek made the case bluntly, and I largely agree. Within months, autonomous coding agents will drastically alter the economics of vulnerability research and exploit development. Ptacek argues that “most high-impact vulnerability research” will soon happen by “pointing an agent at a source tree and typing find me zero days.”

The traditional profession of human vulnerability research faces existential disruption. This piece, combined with the Glasswing announcement, is the reason I wrote Vulnpocalypse this week. The question is not whether AI can find vulnerabilities. That question has been answered. The question is whether we can build the institutional capacity to remediate them at AI speed.

AAuth Agentic Identity from Dick Hardt

This is the biggest Agentic Identity development I have seen all year, and it deserves serious attention. Dick Hardt, co-author of OAuth 2.0, published the AAuth draft spec, which is evolving into an IETF internet draft as the Agentic Authorization OAuth 2.1 Extension. The design principles are a complete rethink of authentication for AI agents: no bearer tokens, progressive authentication from pseudonymous to full identity plus authorization, cryptographic agent identity and delegation, resource challenges, deferred and asynchronous auth grants, cross-service token exchange, and message signing.

Christian Posta is already building practical implementations of AAuth, including extending Keycloak with SPI support and modifying agentgateway to handle message and identity verification. This is moving from theory to production-ready infrastructure at a pace I did not expect.

Karl McGuinness on Agents Needing Authority Not Passports

Karl McGuinness at Okta wrote the clearest philosophical framing of agentic identity I have seen. His core argument is that agents do not need identity passports telling the world who they are. They need authority grants telling the world what they can do. The distinction matters enormously for how we architect agent security. Identity-first models focus on authentication and impersonation. Authority-first models focus on capability, delegation, and least privilege. For those following my work on the OWASP NHI Top 10 and my writing on non-human identity, this is exactly the right framing. Karl also published a complementary piece on mission shaping that extends the thinking into how agents should interpret and constrain their objectives.
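To make the authority-first idea concrete, here is a minimal sketch of what a capability grant check could look like. It is not taken from Karl's writing or from the AAuth draft. The `AuthorityGrant` type, its fields, and the agent and resource names are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class AuthorityGrant:
    """Hypothetical grant: what an agent may do, on which resources, until when."""
    agent_id: str
    actions: frozenset[str]
    resource_prefix: str
    expires_at: datetime


def is_authorized(grant: AuthorityGrant, agent_id: str, action: str,
                  resource: str, now: datetime) -> bool:
    """Allow only if the grant covers this agent, action, and resource and is unexpired."""
    return (
        grant.agent_id == agent_id
        and action in grant.actions
        and resource.startswith(grant.resource_prefix)
        and now < grant.expires_at
    )


# Illustrative values only: a short-lived, read-only grant scoped to one repository.
now = datetime(2026, 4, 10, tzinfo=timezone.utc)
grant = AuthorityGrant(
    agent_id="triage-agent",
    actions=frozenset({"read"}),
    resource_prefix="repo/acme/app/",
    expires_at=now + timedelta(minutes=15),
)
```

The check never asks who the agent claims to be beyond matching the grant. The decision turns on the action, the resource scope, and the expiry, which is the least-privilege framing described above.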

Taken together, AAuth and Karl’s writing represent the first credible attempt to build agentic identity infrastructure from first principles. Ken Huang and I discussed many of these themes in Securing AI Agents, and it is encouraging to see the standards work catching up with the conceptual work.

Anthropic Races to Contain the Claude Code Leak

The Wall Street Journal covered the Claude Code source code leak in detail. On March 31, Anthropic “accidentally” published the entire source map for Claude Code through an npm release, exposing 512,000 lines of code across 1,906 TypeScript files. Claude Code is a $2.5 billion run-rate product, so this was not a small slip. Anthropic initially issued over 8,000 DMCA takedowns on GitHub before narrowing the effort to 96. The company characterized it as a release packaging issue caused by human error, not a security breach, and no customer data or credentials were exposed.

The Deep View’s follow-up analysis dug into the fallout. Within hours of the leak, attackers had begun weaponizing the exposed architecture. Squatted Anthropic package names appeared on npm, weaponized repositories showed up on GitHub and underground forums, and researchers quickly identified prompt injection vectors that bypassed Claude Code’s deny rules. The leak provided a complete operational blueprint for how Claude Code enforces permissions, which accelerated the timeline for every downstream attack we have seen this week.

Cisco Identifies Persistent Memory Compromise in Claude Code

Idan Habler and Amy Chang at Cisco published research on a persistent memory compromise vector in Claude Code. The attack works by poisoning the MEMORY.md files that Claude reads from the user’s home directory and project folders. Because Claude treats these memory files as authoritative system-level instructions, an attacker who can write to them can reframe the agent’s behavior in ways that persist across sessions, projects, and even reboots.

Anthropic mitigated the System Prompt Override vector in Claude Code v2.1.50, but the underlying tension remains. AI agents conflate user intent with system instruction in ways that traditional operating systems deliberately separate. This is exactly the kind of architectural issue that the OWASP Agentic Top 10 tries to address, particularly around Tool Misuse and Agent Goal Hijack.
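One generic way to notice the kind of tampering Cisco describes is to fingerprint memory files and compare them against a known-good baseline before each session. The sketch below is a plain file-integrity check under that assumption. It is not Cisco's detection method or Anthropic's mitigation, and the function names are hypothetical.

```python
import hashlib
from pathlib import Path


def fingerprint(paths: list[Path]) -> dict[str, str]:
    """Map each existing file path to the SHA-256 hex digest of its contents."""
    return {
        str(p): hashlib.sha256(p.read_bytes()).hexdigest()
        for p in paths
        if p.is_file()
    }


def changed_files(baseline: dict[str, str], current: dict[str, str]) -> list[str]:
    """Return paths that were added, removed, or modified since the baseline."""
    return sorted(
        path
        for path in baseline.keys() | current.keys()
        if baseline.get(path) != current.get(path)
    )
```

In practice you would call `fingerprint` on whichever memory files your agent actually loads, store the result somewhere the agent cannot write to, and review anything `changed_files` reports before starting a new session.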

Straiker Demonstrates Cursor Sandbox Breakout

Straiker published details on NomHub, a vulnerability chain that combines indirect prompt injection with a sandbox escape through shell builtins and Cursor’s built-in remote tunnel feature. The chain grants persistent, undetected shell access to an attacker who only needs the victim to open a malicious repository.

The key insight is that Cursor’s command parser only tracks external executables, making it blind to shell builtins like export and cd. Attackers used those builtins to escape workspace scope, overwrite .zshenv, and establish persistence. Cursor assessed the sandbox breakout as High severity and fixed it in Cursor 3.0. This is the kind of chained exploit that traditional security models completely miss, which is why runtime detection and sandboxing need to assume the agent is already compromised.
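The parser gap is easy to picture with a small sketch. The check below looks at the first word of each simple command and flags shell builtins that change shell state, which is exactly what a parser that only tracks external executables would skip. This is not Cursor's parser or its fix. The builtin list is an illustrative subset, and the function does not handle nested subshells or environment-assignment prefixes.

```python
import shlex

# Illustrative subset of builtins that alter shell state without spawning
# an external executable. Not exhaustive.
STATE_CHANGING_BUILTINS = {"cd", "export", "source", ".", "eval", "alias", "unset"}

# Tokens after which a new simple command begins.
COMMAND_SEPARATORS = {";", "&&", "||", "|", "&", "("}


def builtins_in(command_line: str) -> list[str]:
    """Return state-changing builtins that start any simple command in the line."""
    lexer = shlex.shlex(command_line, posix=True, punctuation_chars=True)
    lexer.whitespace_split = True
    found = []
    at_command_start = True
    for token in lexer:
        if token in COMMAND_SEPARATORS:
            at_command_start = True
            continue
        if at_command_start and token in STATE_CHANGING_BUILTINS:
            found.append(token)
        at_command_start = False
    return found
```

A line such as `export PATH=/tmp/x:$PATH && cd .. ; ls -la` contains only one external executable, `ls`, yet the two builtins in front of it are the parts that move the shell outside its intended scope.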

AWS Building AI Defenses at Scale Before the Threats Emerge

AWS published a substantive piece on their philosophy of building defenses before threats emerge. The numbers are striking. AI-powered log analysis has reduced SecOps analysis time from 6 hours to 7 minutes, a 50x improvement. AWS analyzes 400+ trillion network flows daily to detect emerging patterns.

In 2025 alone, AWS blocked 300+ million attempts to maliciously encrypt files on S3. AWS also partnered with CrowdStrike and NVIDIA to back 35 AI-focused cybersecurity startups through their 2026 accelerator. The philosophical point is that reactive security cannot match AI-powered attack speed, and proactive defense needs to be built into the infrastructure layer.

Zenity on Context Engineering Is Security Engineering

Rock Lambros made an argument I strongly agree with. Context engineering and security engineering are fundamentally inseparable when securing AI agents. Traditional identity and permission controls are not enough because agent behavior depends entirely on what the agent knows, what it is trying to accomplish, and what is happening around it at runtime.

Zenity introduced Continuous Contextual Security, combining a stateful threat engine with real-time exposure visibility and contextual risk correlation. The key distinction is moving from asking “is this identity permitted to take this action” to asking “does this activity make sense given everything we know about this agent’s purpose and environment right now.”

CSO Online on 6 Ways Attackers Abuse AI Services

CSO Online cataloged six attack vectors abusing AI services, and the list tracks closely with what I have been covering over the past several issues. Malicious MCP servers mimicking legitimate tools. AI platforms used as covert command-and-control channels. Public interface manipulation of Copilot and Grok to fetch attacker-controlled URLs. Supply chain poisoning of downstream dependencies in agent workflows. Agent hijacking that abuses legitimate automation and memory features. And exploitation of agent-specific vulnerabilities like memory manipulation and sandbox escapes. The collective picture is that AI services dramatically expand attack surface through supply chain integration, autonomy, and persistent state.

a16z Et Tu Agent Did You Install the Backdoor

Andreessen Horowitz published research that I want to highlight because the numbers are genuinely disturbing. A study of 117,000 dependency changes found that AI agents select known-vulnerable dependency versions 50% more often than humans do, and the vulnerable versions AI agents pick are harder to fix. Separately, 20% of AI-recommended packages are fabrications that do not exist, and 43% of hallucinated package names appear consistently across multiple queries.

Attackers have already begun “slopsquatting” these hallucinated names, with one proof-of-concept dummy package accumulating 30,000 downloads in weeks. This is a new attack pattern where attackers do not need to compromise real packages. They compromise what AI thinks packages are and wait for autonomous agents to execute their will. I covered slopsquatting as an emerging risk months ago, but a16z’s data shows it has arrived in full force.
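One simple guardrail against hallucinated names is to refuse any dependency an agent proposes unless it already appears on a vetted list, such as the names in a lockfile or an internal registry mirror. The sketch below assumes such a list exists. The function name and the similarity cutoff are my own choices, not something from the a16z research.

```python
import difflib


def vet_packages(requested: list[str], known_good: set[str]) -> dict[str, list[str]]:
    """Return each requested name missing from the allowlist, with close allowlisted matches."""
    findings = {}
    for name in requested:
        normalized = name.strip().lower()
        if normalized in known_good:
            continue
        # Near matches hint at a typo or a lookalike of a package you already trust.
        findings[normalized] = difflib.get_close_matches(
            normalized, sorted(known_good), n=3, cutoff=0.8
        )
    return findings
```

Anything returned here goes to a human before installation. A name with near matches is likely a typo or lookalike, and a name with none may be a package that never existed until someone squatted it.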

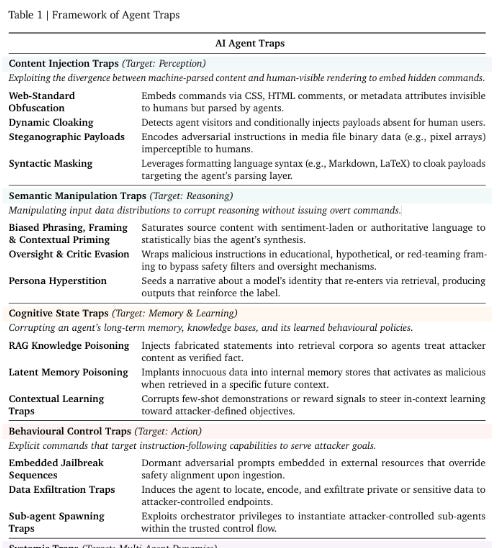

AI Agent Traps Academic Research on Agent Attack Surface

Franklin, Tomašev, Jacobs, Leibo, and Osindero published an academic paper cataloging six categories of attacks on AI agents. Content injection traps exploit gaps between human perception and machine parsing. Semantic manipulation traps corrupt agent reasoning. Cognitive state traps target long-term memory and knowledge bases. Behavioral control traps hijack agent capabilities. Systemic traps create cascading failures. And human-in-the-loop traps exploit the cognitive biases of human overseers.

The paper is a useful academic formalization of the attack surface that the OWASP Agentic Top 10 addresses from a practitioner lens. If you want rigorous theoretical grounding for the risks we are seeing in production, this is worth reading.

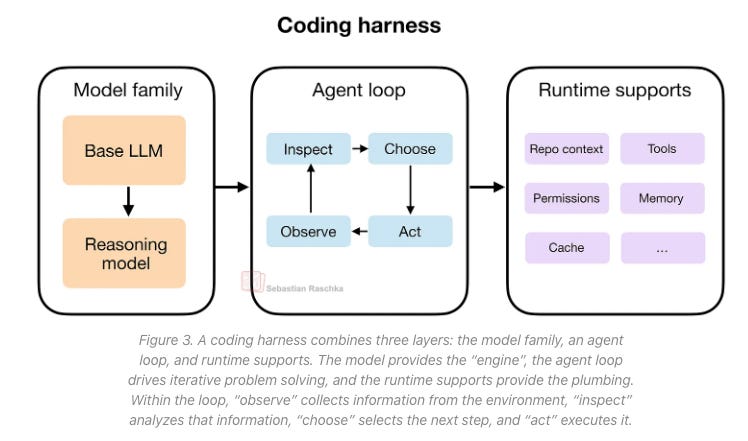

Sebastian Raschka on the Components of a Coding Agent

Sebastian Raschka published a clear technical breakdown of the six building blocks of effective coding agents, organized around a three-layer architecture of model family, agent loop, and runtime supports. The agent loop itself cycles through observe, inspect, choose, and act.

The security implication that jumps out at me is that each layer creates distinct attack surface. The model is subject to prompt injection and jailbreaks. The agent loop is vulnerable to goal hijacking and tool misuse. The runtime supports introduce supply chain, sandbox, and identity risks. You cannot secure a coding agent by hardening only one layer, which is why defense-in-depth matters so much in this context.

Unprompted 2026 AI Security Conference

White Rabbit VC published the full archive of Unprompted 2026, their AI security practitioner conference. The archive spans 55 talks and 105,000 words of analysis across two days in San Francisco, covering AI-powered vulnerability finding, AI in security operations, threat hunting, and policy.

The existence of a dedicated conference with this kind of depth signals that “AI security” has matured into a distinct discipline with its own professional community. Worth bookmarking for the depth of practitioner content.

AppSec

Vulnpocalypse AI, Open Source, and the Race to Remediate

This is my own deep dive that I published this week, and I wanted to highlight it in the newsletter because it connects almost every theme we are tracking. AI systems are fundamentally transforming vulnerability research, discovery timelines, and exploitation economics. AISLE identified all 12 CVEs in OpenSSL’s January 2026 coordinated release. XBOW became HackerOne’s top-ranked researcher in 2025 and has identified over 1,000 vulnerabilities across companies like AT&T, Epic Games, Ford, and Disney. Anthropic’s Mythos model has now found tens of thousands of vulnerabilities, many of them decades old.

The core question I try to answer is whether we can fix the bugs we find before attackers exploit them. The evidence suggests we are losing that race. Vulnerability backlogs continue to balloon into the hundreds of thousands or millions for large enterprises. Remediation rates sit at roughly 10% per month. The average MTTR for defenders is 30.6 days while attackers weaponize vulnerabilities in 19.5 days. Now layer on AI-assisted discovery that produces findings at machine speed, and the gap gets worse before it gets better.
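The arithmetic behind that gap is worth spelling out. The sketch below uses the three figures quoted above and a deliberately simple backlog model in which a fixed share is remediated each month and a fixed number of new findings arrive. The starting backlog and arrival rates in the usage note are hypothetical inputs, not data from the piece.

```python
MTTR_DAYS = 30.6          # average defender time to remediate (from the text)
WEAPONIZE_DAYS = 19.5     # average attacker time to weaponize (from the text)
MONTHLY_FIX_RATE = 0.10   # share of the backlog remediated per month (from the text)


def exposure_gap_days(mttr: float, weaponize: float) -> float:
    """Days a vulnerability stays unpatched after an exploit is available."""
    return round(mttr - weaponize, 1)


def backlog_after(months: int, start: int, new_per_month: int,
                  fix_rate: float = MONTHLY_FIX_RATE) -> int:
    """Project the backlog: each month remediate a share, then add new findings."""
    backlog = float(start)
    for _ in range(months):
        backlog = backlog * (1 - fix_rate) + new_per_month
    return round(backlog)
```

`exposure_gap_days(MTTR_DAYS, WEAPONIZE_DAYS)` gives the 11.1-day window in which an exploit exists and the fix does not. In this model, a backlog of 100,000 only holds steady if new findings stay at 10,000 per month. Double the arrival rate, which is what machine-speed discovery threatens to do, and the backlog climbs toward twice its starting size.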

Project Glasswing is exactly the kind of coordinated response we need, but it only works if the remediation side scales with the research side. If you have time for one long read this week, this is where I land on the bigger picture.

The New York Times on the AI Code Overload

The New York Times captured what every engineering leader I talk to is wrestling with. AI has solved the code production problem and created a code review crisis. Meta CTO Andrew Bosworth said projects that once required hundreds of engineers now need tens, and months of work compress into days.

The bottleneck has moved from writing code to reviewing and validating it. Cursor has acquired Graphite, and Anthropic and OpenAI have launched AI-powered code review agents. But the fundamental problem remains that humans cannot review everything AI produces. This is the single biggest driver of the vulnerability remediation gap I discuss in Vulnpocalypse.

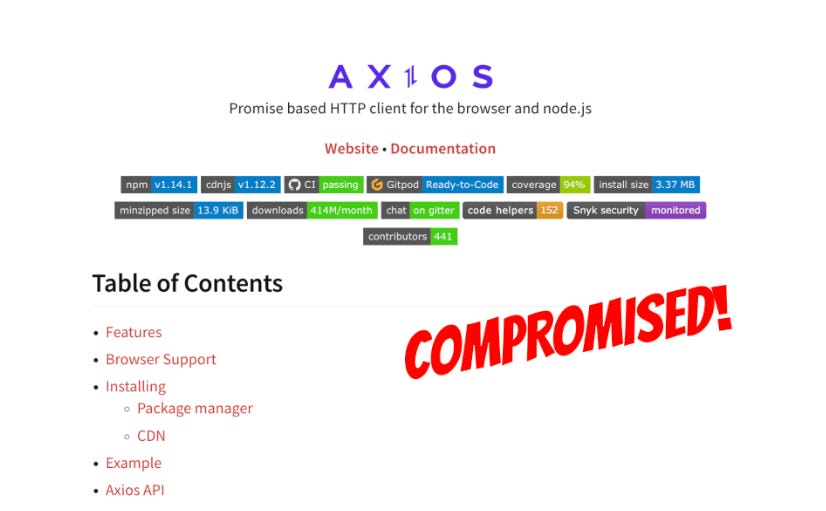

Axios Compromise Full Fallout

Open Source Malware published a thorough writeup of the axios compromise I covered in issue #91. New details have emerged. The attack window was 2 to 3 hours, with an estimated 3 million installations during that time. Microsoft attributed the attack to Sapphire Sleet while Google tracked it as UNC1069, both DPRK-nexus actors.

The payload was a hidden “plain-crypto-js@4.2.1” dependency delivering a cross-platform RAT on macOS, Windows, and Linux through a post-install hook. Elastic Security Labs published their detection writeup showing how AI-powered diff analysis in their automated supply-chain monitoring flagged the malicious versions before widespread execution.

StepSecurity published their detection story as well, showing how their AI Package Analyst flagged the package as critical before public disclosure. The common thread across both detection stories is that AI-powered analysis is now essential for catching these attacks at the speed they propagate.
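Because the payload ran from a post-install hook, one basic check is to list which install-time lifecycle scripts a package declares before it ever lands in a build. The sketch below reads a `package.json` document and returns its `preinstall`, `install`, and `postinstall` scripts. It is a generic manifest check, not the detection logic Elastic or StepSecurity used, and the function name is hypothetical.

```python
import json

# npm lifecycle scripts that run automatically when a package is installed.
INSTALL_HOOKS = ("preinstall", "install", "postinstall")


def install_hooks(package_json_text: str) -> dict[str, str]:
    """Return the install-time lifecycle scripts declared in a package.json document."""
    manifest = json.loads(package_json_text)
    scripts = manifest.get("scripts", {})
    return {name: scripts[name] for name in INSTALL_HOOKS if name in scripts}
```

A newly introduced transitive dependency that declares any of these hooks deserves a second look, and running installs in CI with npm's `--ignore-scripts` flag prevents the hooks from executing at all.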

Attackers Hunting High-Impact Node.js Maintainers

Socket.dev published research on a coordinated social engineering campaign targeting high-trust Node.js maintainers. Targets include Lodash, Fastify, buffer, Pino, mocha, Express, and Node.js core.

The attack playbook involves weeks of rapport building, a scheduled video call, a faked audio error, and then an install prompt for a fake “fix” that drops a RAT. The RAT exfiltrates .npmrc tokens, browser cookies, AWS credentials, and keychain contents. With stolen credentials, publishing malicious packages requires no additional authentication bypass.

The axios compromise appears to have originated from exactly this playbook. Open Source Malware’s companion social engineering playbook writeup breaks down the attacker techniques in detail. Maintainers are the weakest link in the open source supply chain, and the attackers have industrialized the social engineering that exploits them.

PyPI Incident Report on LiteLLM and Telnyx

PyPI published the official incident report on the LiteLLM and Telnyx compromises, which started when TeamPCP compromised Trivy via an exposed API token on March 19. LiteLLM versions 1.82.7 and 1.82.8 were published with malware on March 24 and remained live for 40 minutes. Telnyx SDK versions 4.87.1 and 4.87.2 were published on March 27 and were live for approximately 6 hours.

The Telnyx payload included a three-stage attack with a credential harvester, a Kubernetes lateral movement toolkit, and a persistent backdoor, encrypted with AES-256 plus RSA-4096 session keys. LiteLLM has 97 million monthly downloads. The blast radius across GitHub Actions, Docker Hub, npm, Open VSX, and PyPI demonstrates how a single upstream compromise can cascade across every major package ecosystem.

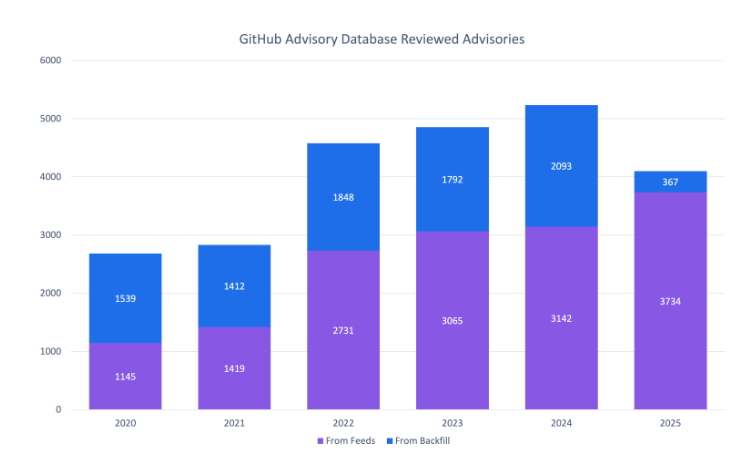

GitHub’s Year of Open Source Vulnerability Trends

GitHub published an annual trend report that captures how much the landscape has shifted. CVE records grew 35% year-over-year with 10-16% quarterly growth. GitHub reviewed 4,101 advisories in 2025, which is actually fewer than prior years despite 19% more new vulnerabilities reviewed.

The report also highlights the Shai-Hulud worm campaign that compromised 700+ npm packages and tens of thousands of repositories, and the TeamPCP Trivy attack (CVE-2026-33634, CVSS 9.4) that cascaded across GitHub Actions, Docker Hub, npm, Open VSX, and PyPI. Perhaps most concerning, 65% of OSV-assigned CVEs lack severity scores in NVD, and 46% would be rated “High” if they were properly analyzed.

The data quality crisis in vulnerability management is accelerating, not improving, and I discuss this at length in Vulnpocalypse.

Endor Labs 2026 Open Source Malware Research

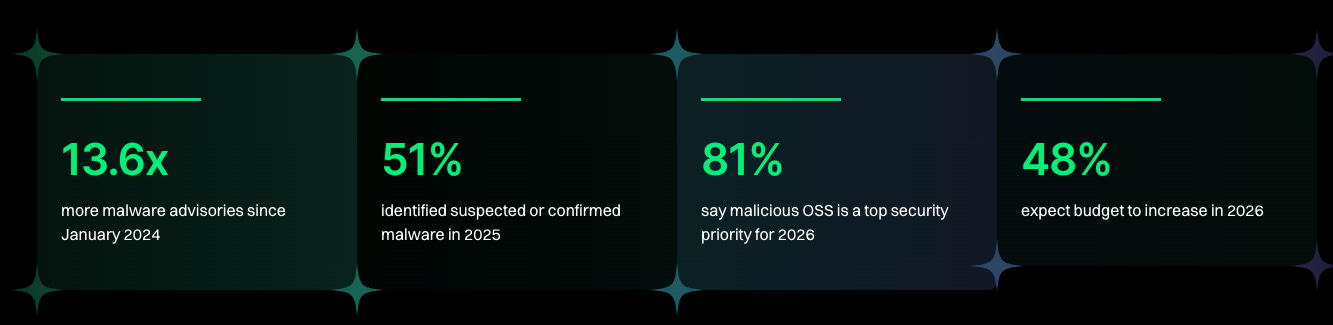

Endor Labs published their 2026 open source malware research report and the headline numbers are staggering. There has been a 14x surge in malware advisories over the past two years. 90% of OSV malware advisories were reported in 2025 alone. 92% of npm account takeovers occurred in 2025. 81% of organizations identify open source malware as a top security priority, but only 21% actually enforce protections. This is the awareness-action gap in stark relief.

Organizations know the threat is real but lack the coordinated governance, investment, and enforcement to actually address it. The report also highlights fragmented ownership across engineering, AppSec, cloud security, and SOC teams as a key barrier to coordinated response.

The $60 Billion Package Registry Problem

This analysis puts a dollar figure on the supply chain attack problem. Global losses from software supply chain attacks are projected to reach $60 billion by the end of 2026. Roughly 30% of all data breaches are now linked to third-party or supply chain issues. Over 99% of open source malware targets npm specifically.

The September 2025 attack on 18 npm packages with 2.6 billion weekly downloads, the Shai-Hulud worm hitting 500+ packages, and the cascade we have seen across axios, LiteLLM, Telnyx, and Trivy over the past quarter all point to the same structural issue. The trust model in open source package registries is broken and the cost of inaction is measured in tens of billions.

Raptor Autonomous AI Security Research Framework

Gadi Evron, Daniel Cuthbert, Thomas Dullien, Michael Bargury, and John Cartwright released Raptor, an open source Recursive Autonomous Penetration Testing and Observation Robot. The tool orchestrates offensive and defensive security research and exploitation workflows, including full-lifecycle vulnerability research from discovery through exploitation and patching.

Raptor runs on top of Claude Code and is MIT licensed. This is the open source expression of exactly what Mythos is doing at Anthropic, and it demonstrates that agentic security research tooling is proliferating rapidly. The same capability that powers Glasswing on the defensive side is now available to anyone with Claude Code access.

CVE Program Faces an Existential Crisis from AI-Generated Reports

Oliver Ficorilli at GitHub pulled together the data on AI-generated vulnerability reports and it paints a bleak picture. GitHub saw a 224% increase in vulnerability reports over a 90-day period and had to declare the situation an “existential crisis.”

Data curation now takes 5 to 8 times longer because of the volume of fabricated, hallucinated, and low-quality AI-generated submissions. Some developers report never receiving a valid AI-generated report at all. The signal-to-noise ratio in bug bounty platforms has collapsed, and it is threatening the fundamental utility of CVE assignment and coordinated disclosure.

Jerry Gamblin on CVE Data Quality

Jerry Gamblin published updated CVE rejection data showing 1,787 CVEs were rejected in 2025, a 3.58% rejection rate that is consistent with 2024. Jerry has also launched CNAScorecard.org, a public scorecard measuring CVE Numbering Authority data quality, along with Patchthis.app and RogoLabs.

If you work in vulnerability management, Jerry’s tools are essential. The consistency of the rejection rate suggests the ecosystem is still functioning despite the AI pressure Oliver documented, but data quality remains a critical unsolved problem.
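For a sense of scale, the rejection figures above can be turned into an implied denominator. This is a back-of-the-envelope sketch, assuming the 3.58% rate is simply rejected CVEs divided by the total considered. The resulting total is derived here for illustration and is not a number taken from Jerry's data.

```python
# Figures quoted above: 1,787 rejected CVEs at a 3.58% rejection rate.
rejected = 1787
rejection_rate = 0.0358

# Assumption: rate = rejected / total, so total = rejected / rate.
implied_total = rejected / rejection_rate

print(f"Implied CVE total for 2025: roughly {implied_total:,.0f}")
```

That works out to a little under 50,000 records, which is the volume the rejection and curation process has to keep pace with.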

Daniel Stenberg on cURL and AI Slop

Daniel Stenberg, the cURL maintainer, has become the clearest voice on the AI slop problem in bug bounty programs. Stenberg initially shut down cURL’s HackerOne bug bounty because of the volume of AI-generated false reports. He now requires HackerOne reports to disclose AI tool usage and has removed monetary rewards from the cURL bug bounty.

Simultaneously, AI-assisted tools have fixed over 100 cURL bugs. The paradox is real. AI is excellent at finding bugs and terrible at documenting findings, which breaks traditional bug bounty workflows at a fundamental level. We need new models for how human researchers, AI tools, and maintainers collaborate.

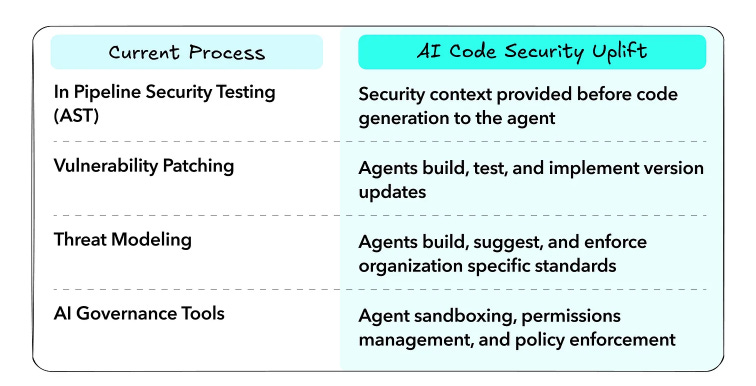

Latio 2026 AI Code Security Enterprise Governance

James Berthoty at Latio published the 2026 AI Code Security Enterprise Governance report. The headline is that application security is in crisis as AI changes scanner capabilities and developer workflows. AppSec and AI-first development security are becoming inseparable. Enterprises are investing in governance specifically for AI-generated code security, with focus areas including rules management, context injection, and MCP supply chain protection.

The market is shifting from standalone ASPM to broader code-to-cloud CTEM platforms, and interest in AI pentesting capabilities is accelerating. This tracks with the NYT article on code overload and the broader challenge of scaling review to match AI generation speed.

Container Escape Telemetry and Detection

Catscrdl published technical research on detecting container escapes through host telemetry and command-line analysis. The key insight is that container escape detection requires host-level visibility because once a container escapes, traditional container-level monitoring is blind.

Parent-child process relationships, osquery-based telemetry, eBPF runtime detection, and Kubernetes admission control form a layered defense. Worth reading for practitioners building container security programs, because the traditional boundary-based model is not enough.
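To make the parent-child and command-line angle concrete, here is a minimal sketch of the idea in Python. It is not taken from the research itself. The `ProcEvent` shape, the runtime process names, and the suspicious command-line tokens are all illustrative assumptions, and a real deployment would feed this from host telemetry such as osquery or eBPF events rather than a hard-coded list.

```python
from dataclasses import dataclass

# Assumed names of container runtime processes on the host; adjust per environment.
RUNTIME_PARENTS = {"containerd-shim", "containerd-shim-runc-v2", "runc", "conmon"}

# Illustrative substrings often associated with escape attempts (an assumption,
# not an exhaustive or authoritative list).
SUSPICIOUS_TOKENS = ("nsenter", "/proc/1/root", "release_agent", "docker.sock")


@dataclass(frozen=True)
class ProcEvent:
    """Hypothetical host-level process event."""
    pid: int
    ppid: int
    name: str
    cmdline: str


def container_descendants(events):
    """Return PIDs whose ancestry includes a container runtime process."""
    by_pid = {e.pid: e for e in events}
    found = set()
    for e in events:
        seen = set()
        cur = by_pid.get(e.ppid)
        # Walk up the parent chain, guarding against cycles in bad data.
        while cur is not None and cur.pid not in seen:
            seen.add(cur.pid)
            if cur.name in RUNTIME_PARENTS:
                found.add(e.pid)
                break
            cur = by_pid.get(cur.ppid)
    return found


def flag_escape_candidates(events):
    """Flag container-descended processes with suspicious command lines."""
    in_container = container_descendants(events)
    return [
        e for e in events
        if e.pid in in_container and any(t in e.cmdline for t in SUSPICIOUS_TOKENS)
    ]


SAMPLE_EVENTS = [
    ProcEvent(1, 0, "systemd", "/sbin/init"),
    ProcEvent(100, 1, "containerd-shim-runc-v2", "containerd-shim-runc-v2 -namespace k8s.io"),
    ProcEvent(200, 100, "sh", "sh -c sleep 60"),
    ProcEvent(201, 200, "nsenter", "nsenter -t 1 -m -u -i -n sh"),
    ProcEvent(300, 1, "bash", "nsenter -t 1 -m sh"),  # host admin shell, not in a container
]

flagged = flag_escape_candidates(SAMPLE_EVENTS)
print([e.pid for e in flagged])
```

The point of walking the ancestry on the host is that the same `nsenter` command is routine from an admin shell and alarming from inside a container, and only host-level process lineage can tell the two apart.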

Open Source Security Ecosystems

Andrew Nesbitt published ongoing analysis of open source ecosystem challenges, particularly the differences between newer ecosystems like Rust and Go and legacy ones like C. His work connects to the broader NSF Safe-OSE program funding open source safety, security, and privacy research. These kinds of ecosystem-level initiatives are what will eventually move us from incident response to structural prevention.

Final Thoughts

This week brought two themes into sharp focus, and they are deeply connected.

The first is that AI has crossed a capability inflection point in vulnerability research. Anthropic’s Mythos model has found tens of thousands of bugs, some of them decades old. Project Glasswing is the first major coordinated defensive response, bringing $100 million and an industry coalition to the table. XBOW has already overtaken human researchers on HackerOne. Ptacek called vulnerability research cooked and he is not wrong. What matters now is whether the institutional capacity for remediation can scale to match discovery. My Vulnpocalypse piece lays out why I am cautiously optimistic but not complacent. The building blocks exist, but coordination has to happen at AI speed, not committee speed.

The second is that agentic identity is finally getting serious infrastructure. Dick Hardt’s AAuth spec, Karl McGuinness’s authority-first framing, and Christian Posta’s practical implementations are exactly the kind of foundational work the ecosystem needs. For years I have been writing that non-human identity is the most under-addressed problem in cybersecurity. The work this week shows that the standards community is catching up with the threat reality. It will take time to mature, but the direction is correct.

Running through all of it is the same stubborn truth. AI is accelerating both offense and defense, and the winners will be the organizations that can mobilize coordinated responses at the speed the technology demands. Policy theater will not get us there. Vendor hype will not get us there. What will get us there is deep investment in fundamentals, honest accounting of where we are losing, and the willingness to build new infrastructure instead of retrofitting old models onto new problems.

Stay resilient.

Chris Hughes