Resilient Cyber Newsletter #91

AI Top NatSec Concern, Cyber & VC, RSAC State of Vendors, Claude Code Auto-Mode, 177,000 AI Agent Tools & The Complete Guide to Preventing Supply Chain Attacks

Welcome to issue #91 of the Resilient Cyber Newsletter!

This was the week Axios got compromised. The npm package that handles HTTP requests for roughly 80% of cloud environments was backdoored by UNC1069, a North Korea-nexus threat actor, after they hijacked a maintainer’s account and published malicious versions containing a cross-platform RAT. Within a 39-minute window, the attacker turned one of npm’s most trusted packages into a weapon.

If you thought the TeamPCP campaign I covered in issue #90 was the peak of supply chain chaos, this week raised the bar.

Meanwhile, a federal judge blocked the Trump administration’s supply chain risk designation against Anthropic in a blistering 43-page ruling, SentinelOne published a remarkable case study showing their EDR autonomously stopping Claude Code from executing a zero-day supply chain attack, Anthropic launched auto-approve mode for Claude Code (with immediate security debate), the UK AI Safety Institute published data on 177,000 AI agent tools, and NIST released its first formal report on the challenges of monitoring deployed AI systems.

The cybersecurity market hit another record quarter, California signed a first-of-its-kind AI executive order in direct opposition to the White House, and OWASP seems to be cooking up an Agentic Skills Top 10.

There is a lot to unpack this week, so let’s get into it.

Interested in sponsoring an issue of Resilient Cyber?

This includes reaching over 31,000 subscribers, ranging from Developers, Engineers, and Architects to CISOs/Security Leaders and Business Executives.

Reach out below!

Growing Trivy/TeamPCP Attack — Get 3 Free Months of Chainguard Libraries Now

A coordinated supply chain attack is still unfolding, and your team may already be in the blast radius. In the past two weeks, malicious actors compromised Trivy, Checkmarx, LiteLLM, Telnyx, and 100+ npm packages through CanisterWorm — an attack that spans open source containers, CI/CD workflows, and language dependencies across ecosystems.

Chainguard customers were unaffected by each one.

As your team works through incident response and triage, we want to help. Now through May 31, we are offering three free months of Chainguard Libraries and Chainguard Actions. No paid commitment required.

Cyber Leadership & Market Dynamics

The Future of AI Enterprise Security - PANW CEO Keynote

When the industry’s largest and most influential security vendor lays out its vision and bets for the future of enterprise cybersecurity, it is worth listening to.

20VC Podcast: AI and Venture

Harry Stebbings’ latest episode covers the intersection of AI and venture capital, exploring how AI is reshaping startup formation, development velocity, and investment thesis construction. Worth a listen for anyone interested in how the capital allocation side of cybersecurity is adapting to the agentic era.

When one of the most successful investors in Cyber speaks, it is worth listening to. Gili Raanan has an insane track record, from Sequoia through Cyberstarts, backing some of the most defining cyber startups/companies of the era.

I found this discussion really interesting from various perspectives including finance, building and more.

Pro-Iran Hackers Claim Breach of FBI Director’s Personal Email

The Handala Hack Team, an Iran-linked group, claimed responsibility for breaching FBI Director Kash Patel’s personal email account. The leaked materials included personal photographs and historical correspondence from 2011-2022. The breach was described as retaliation for FBI operations seizing Handala’s domains. While the FBI stated the information was “historical in nature” with no government information involved, the Trump administration offered a $10 million reward for information leading to identification of Handala members.

Personal email accounts of senior government officials remain a persistent target, and this incident reinforces why operational security extends well beyond government systems.

Federal Judge Blocks Anthropic Supply Chain Risk Designation

This is a significant development in the Anthropic saga I’ve been tracking since issue #88, when the Trump administration designated Anthropic as a supply chain risk and ordered federal agencies to sever ties with the company. US District Judge Rita Lin issued a 43-page ruling granting Anthropic a preliminary injunction, calling the government’s action “Orwellian” and finding that punishing Anthropic for bringing public scrutiny to the government’s contracting position constituted First Amendment retaliation.

The backstory matters. Anthropic held a $200 million Pentagon contract signed in July 2025. Negotiations broke down when the Pentagon demanded unfettered access while Anthropic insisted on maintaining contractual guardrails around autonomous weapons and mass surveillance uses of Claude. The government’s response was to brand an American AI company as a potential adversary using a designation previously reserved for companies connected to foreign adversaries.

The Breaking Defense follow-up reveals an important wrinkle: the Pentagon CTO says the ban still stands despite the judicial ruling. Judge Lin imposed a seven-day stay on her order, and institutional resistance within the defense establishment continues. This tension between judicial oversight and executive enforcement is worth watching closely.

It has implications for every AI company doing business with the federal government and highlights an interesting intersection of AI and politics.

AI Is the Top National Security Concern for 2026

The Office of the Director of National Intelligence’s 2026 Threat Assessment elevated AI from a technology category to a cross-cutting national security concern that amplifies all other threat vectors. Unlike discrete threats from China, Russia, Iran, and North Korea, AI is now treated as a force multiplier across all adversarial capabilities.

China plans to overtake US AI capabilities by 2030, and Russia is pioneering battlefield AI applications, particularly in anti-drone operations. The intelligence community specifically noted AI being used actively in combat operations, not as a theoretical future capability but as a present reality.

California Signs First-of-Its-Kind AI Executive Order

Governor Newsom signed an executive order requiring AI companies contracting with California to demonstrate safety and privacy guardrails. This directly opposes the Trump administration’s December executive order declaring that state AI regulation “thwarts” American AI leadership. California and New York are now partnering to forge standards across all states on AI transparency, safety, and innovation.

The federal-state regulatory collision on AI governance is intensifying, and the cybersecurity implications are significant for any organization operating across jurisdictions. We seem to be continuing down the path toward a patchwork quilt of AI regulatory requirements across states and even nations.

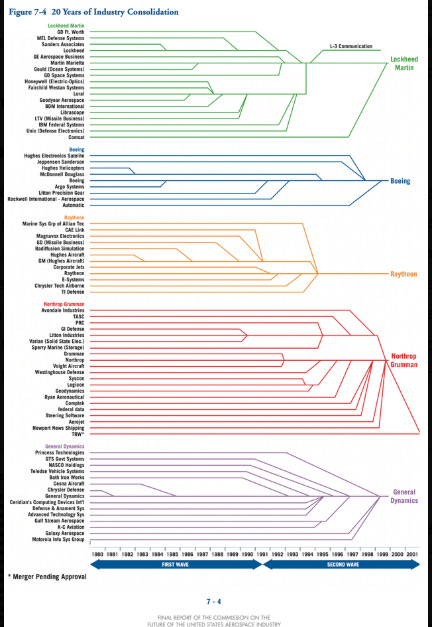

RAIGNark: The End of the Platformization Era in Cybersecurity

Mark Kraynak argues that the consolidation era in cybersecurity is ending. The thesis is that AI agents will enable more distributed, specialized security tools that work together rather than requiring monolithic platforms. Instead of building everything into one platform, agents can orchestrate across multiple specialized tools.

This is a meaningful counterpoint to the platform consolidation narrative from CrowdStrike, Palo Alto, and others that we’ve been tracking. If agents can function as connective tissue between best-of-breed solutions, the case for massive platform consolidation weakens. The pendulum between platforms and best-of-breed has always swung back and forth, as I’ve noted in prior issues, but AI agents could be the force that tilts it back toward specialized tools.

Reflections on RSAC 2026: Same Vendor Playbook, New Packaging

Malcolm Harkins shared his reflections from RSAC 2026, and the title tells you everything. Same vendor playbook, new packaging. Harkins has long been one of the most thoughtful voices on the business side of cybersecurity, and his observation that vendors are largely repackaging existing capabilities with AI branding tracks with what I’ve been seeing.

The challenge for practitioners is separating genuine capability improvements from marketing repositioning. This is where frameworks like the OWASP Agentic Top 10 and CSA’s CSAI Foundation (issue #90) become essential for establishing objective benchmarks.

I saw a TON of existing category players (e.g., AppSec, SOC, EDR, CNAPP) all trying to reposition themselves as agentic security solutions at RSAC. However, as I’ve been writing, they all primarily see the problem from their own myopic viewpoint, rather than offering a truly agentic-centric solution that uses signals from across the categories as context in a broader picture.

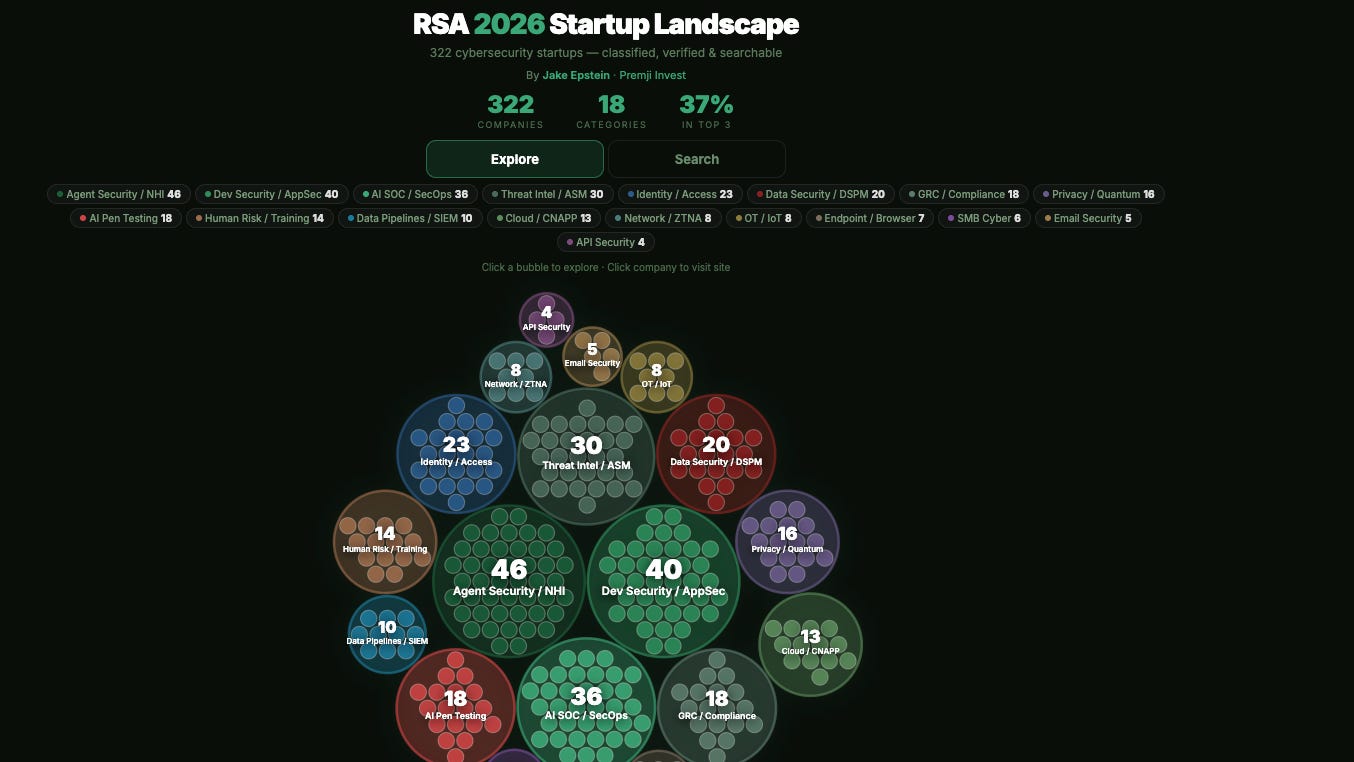

RSA 2026 Vendor Landscape

Jake Epstein at Premji Invest published an interactive visualization mapping 320 cybersecurity startups across 18 categories from the RSA 2026 exhibitor catalog. This is a useful tool for anyone trying to understand the competitive landscape, identify white space, or just get a sense of where investment dollars are flowing. Worth bookmarking as a reference.

RSAC 2026 - State of Security Vendors and AI-Washing

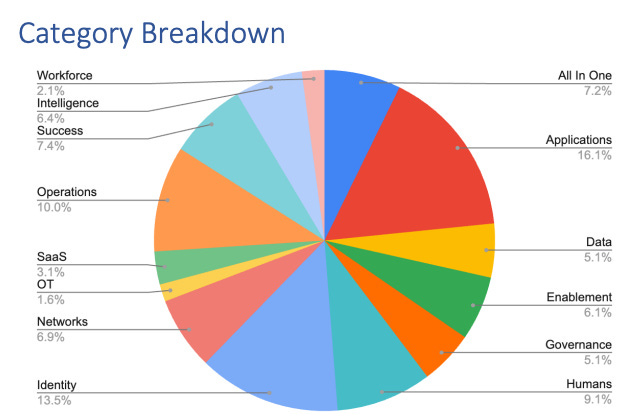

Andy Ellis of Duha spent 11 hours walking every exhibitor booth at RSAC 2026. The findings are worth paying attention to.

AI Signal vs. AI Noise

37% of booths mentioned AI. 223 vendors featured “AI” or “agentic” language, but much of it was AI-washing: vendors flagging AI risk in third-party ecosystems, adding LLM chatbots to existing products, or simply inserting “AI-driven” into threat language with no underlying capability change. This aligns with Malcolm’s post above about everyone trying to mention AI and agents, even if their view is isolated and off target.

Where Competition Is Concentrated

Applications/AppSec — 98 exhibitors

Identity — 82 exhibitors

Security Operations — 61 exhibitors

Human Risk Management/EDR — 55 exhibitors

If you operate in these spaces, you’re competing in a very loud room with increasingly confused buyers.

What’s Underrepresented

Non-human identity had only 4 dedicated booths, largely absorbed into the broader AI narrative. OT/ICS had 10. Quantum/PQC had 12. The NHI number is telling given the investment hype heading into 2026.

The Show Floor Reality

Nearly 8% of booths left practitioners unable to determine what the company does. Badge scanning was prioritized over market education. The floor behaves more like a lead generation operation than a place to learn.

The security market is overcrowded, messaging is increasingly muddled, and AI is being used as veneer as much as genuine differentiation. Clarity of problem and specificity of solution matter more than ever when 37% of the room is saying the same word.

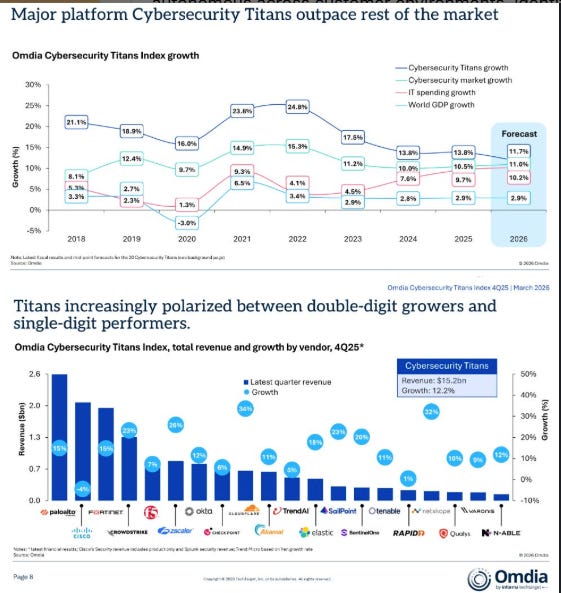

The Cybersecurity Market Posts Another Record Quarter

Matthew Ball highlighted another record quarter for cybersecurity spending. The global market is on track to exceed $520 billion annually by 2026, with cybercrime projected to cost $10.8 trillion, which would rank third globally after the US and China as an economy. AI-cybersecurity companies are commanding premium valuations with faster fundraising cycles and larger tickets than their non-AI peers.

Jay McBain added critical context with his analysis showing cybersecurity is on track to reach $311 billion in 2026, with over 90% sold through channel partners. Services are growing faster than technology, and partners are critical for design, deployment, MDR, and operational support. That 90%+ channel dependency is a distribution reality that shapes everything from go-to-market strategy to vendor consolidation dynamics.

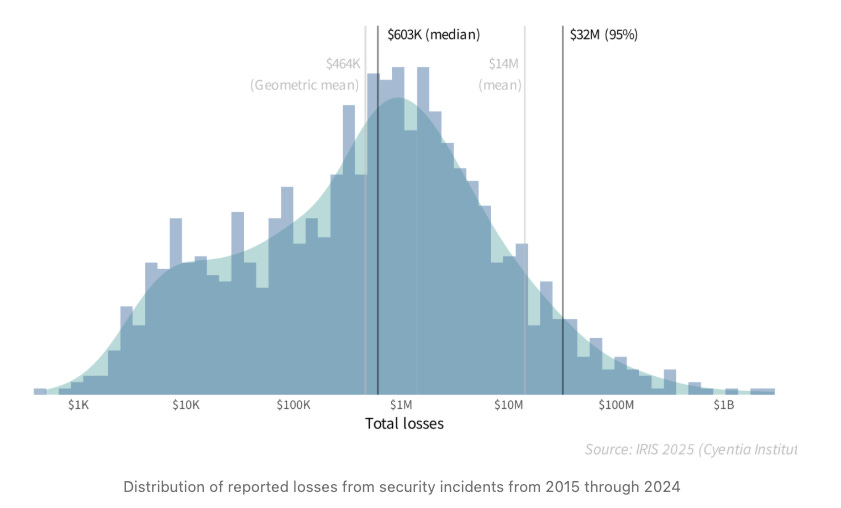

IRIS 2025: Have Security Incidents Gotten More Severe?

The Cyentia Institute’s IRIS 2025 report provides the kind of actuarial data the industry needs more of. Ransomware accounted for 32% of all security incidents and 38% of financial losses over the last five years, with a median loss per incident of $3.2 million. Credential compromise remains the most common entry point. Web application attack exploitation increased sixfold for smaller firms, and third-party relationship incidents doubled for large organizations.

That third-party finding reinforces everything I’ve been writing about supply chain risk. The blast radius of the Axios and TeamPCP compromises this quarter demonstrates why those third-party numbers keep climbing.

Beyond SaaS: New Business Models in Cybersecurity

Maggie Gray explored how cybersecurity business models are evolving beyond traditional SaaS. As I discussed in issue #90 with Sequoia’s services thesis and a16z’s two-path framework, per-seat pricing breaks down when AI agents replace human workers.

Gray identified emerging models including SLA-based pricing (tiers based on latency, accuracy, and relevance), output-based pricing (charging per value delivered, like per insurance claim processed), and consumption-based pricing tied to tokens or outcomes. This is the business model corollary to the SaaSpocalypse we’ve been tracking since issue #85.

AI Unmasked: Our Work as Scaffolding

Daniel Miessler published a thought-provoking piece arguing that 75-99% of knowledge work is scaffolding overhead, and AI is revealing just how small the actual “thinking” portion is.

His cybersecurity example is particularly sharp. Security testing involves stitching context on targets, creating and maintaining tooling, and building workflows, not actually discovering new vulnerabilities. When that scaffolding is packaged into AI context and methodologies, AI can execute at comparable or superior levels.

This connects directly to Caleb Sima’s piece on the brain becoming portable, where he argues that expertise can now be captured and deployed through AI agents in ways that were previously impossible. For cybersecurity professionals, the implication is clear: your value proposition needs to be in the thinking, not the scaffolding. If your job is primarily maintaining tooling and workflows, AI will compress that dramatically.

The Department of No Meets the Age of Yes

Saanya Ojha wrote about the fundamental tension security teams face in the AI era. The traditional security mindset of blocking everything is ineffective when shadow AI is exponentially more dangerous than known AI.

Her key insight is that the real systemic risk is not what gets typed into an LLM but what systems those AI tools connect to - email, Slack, Google Drive, internal databases, and code repos. Effective security needs to be use-case driven, not blanket policy-driven.

As I’ve been saying since the early days of this newsletter, security leaders who position themselves as enablers rather than gatekeepers will be the ones who maintain influence. The ones who say no to everything will simply be routed around.

AI

Anthropic Launches Auto Mode for Claude Code

Anthropic launched auto-approve mode for Claude Code, a new permissions system where Claude makes tool-call decisions autonomously using a safety classifier. Before each action, the classifier reviews whether it involves destructive operations like mass file deletion, data exfiltration, or malicious code execution. Safe actions proceed automatically, risky actions get blocked and Claude redirects to alternative approaches. Anthropic cited data showing 93% of permission prompts are typically approved by users, suggesting strong potential for automation.

Simon Willison’s analysis raised an important concern: the classifier treats pip install -r requirements.txt as routine, which is deeply problematic given the supply chain attacks we’ve been covering. His point is valid and timely. The LiteLLM backdoor (issue #90) would have sailed through an AI-based safety classifier because pip install looks perfectly normal. Willison prefers OS-level sandboxing that deterministically restricts file access and network connections over non-deterministic AI classifiers.

The Human-in-the-Loop (HITL) Illusion

I published a new deep dive on Resilient Cyber this week looking at why human-in-the-loop is not functioning as a security control and what Auto Mode tells us about the future of agentic AI safety.

The catalyst was Anthropic’s own data showing users approve 93% of Claude Code permission prompts. But the behavioral patterns underneath that number are what matter most.

New users auto-approve about 20% of sessions. By 750 sessions, that climbs to over 40%. Trust accumulates and oversight erodes predictably over time.

Experienced users do not stop paying attention entirely. They shift from proactive per-action review to reactive monitoring and intervention, interrupting roughly 9% of turns compared to 5% for new users.

Anthropic built Auto Mode as a two-layer classifier system that strips the agent’s own reasoning from what the safety model sees, so the agent cannot talk its way past the filter. The result is a 0.4% false positive rate but a 17% miss rate on genuinely dangerous actions.

Simon Willison raised a critical point that classifier-based safety is non-deterministic by nature. Traditional security controls are binary. A firewall rule blocks or it does not. An AI classifier introduces probabilistic uncertainty into a security boundary.

But the realistic baseline is not careful manual review. It is “YOLO mode” with zero guardrails. A classifier with a 17% miss rate is meaningfully better than no controls at all.

The takeaway for security leaders is that per-action human approval does not match how humans actually work with autonomous systems at scale. The answer is layering deterministic controls like sandboxing, allowlists, and tool restrictions with probabilistic protections like behavioral monitoring and intent analysis.
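To make the layering idea concrete, here is a minimal sketch of a deterministic allowlist sitting in front of a probabilistic classifier. It is an illustration only, not Anthropic's implementation: the `ALLOWED_COMMANDS` set, the `classifier_risk` callable, and the 0.5 threshold are all hypothetical.

```python
import shlex
from typing import Callable

# Hypothetical allowlist: executables the agent may run without a human.
ALLOWED_COMMANDS = {"ls", "cat", "git", "pytest"}


def gate(command: str,
         classifier_risk: Callable[[str], float],
         threshold: float = 0.5) -> str:
    """Return 'allow', 'block', or 'review' for a proposed shell command."""
    tokens = shlex.split(command)
    if not tokens:
        return "block"
    # Deterministic layer: anything outside the allowlist always goes to a
    # human, regardless of what the classifier would have said.
    if tokens[0] not in ALLOWED_COMMANDS:
        return "review"
    # Probabilistic layer: only allowlisted commands reach the classifier
    # (a stand-in scoring function that returns a risk in [0, 1]).
    if classifier_risk(command) >= threshold:
        return "block"
    return "allow"
```

With this shape, `pip install -r requirements.txt` never reaches the classifier at all. It is routed to review because `pip` is not on the allowlist, so a classifier miss cannot wave it through.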

Build security for how humans actually interact with agents, not how we wish they would.

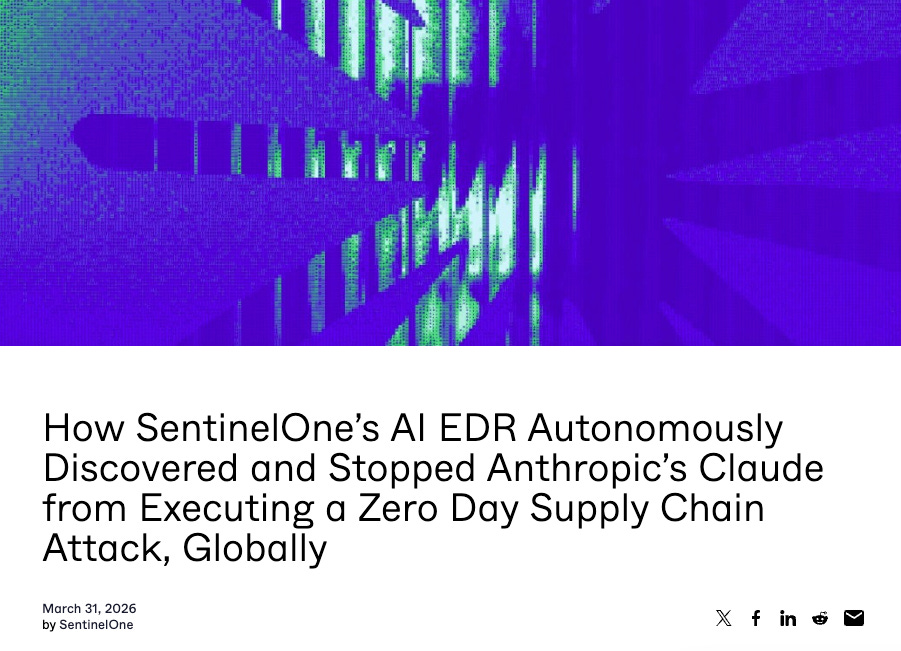

SentinelOne EDR Autonomously Stops Claude Code Zero-Day Supply Chain Attack

This is one of the most important case studies I’ve seen this year. SentinelOne published a detailed account of their AI-powered EDR autonomously detecting and stopping Claude Code from executing a zero-day supply chain attack through the compromised LiteLLM package. Here is the critical detail: no human developer ran pip install. Claude Code autonomously updated LiteLLM to the compromised version as part of its normal workflow. This is a documented supply chain attack triggered by an AI agent without human intervention, and it is easy to see the same pattern playing out at many other organizations with AI-native development flows.

SentinelOne’s Singularity Platform detected the behavioral pattern (Python interpreter executing base64-decoded code in a spawned subprocess) and preemptively killed the process before the stealer, persistence, or lateral movement stages could execute. The attack chain went from TeamPCP compromising Trivy upstream, to backdooring LiteLLM on PyPI, to Claude Code autonomously pulling in the malicious package.
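For a rough feel of what a behavioral rule like this looks at, the toy heuristic below flags a Python process, spawned by another Python process, that runs inline code which both decodes base64 and executes it dynamically. This is not SentinelOne's detection logic. The `ProcessEvent` record and the regular expressions are hypothetical simplifications, and real EDR telemetry and correlation are far richer.

```python
import re
from dataclasses import dataclass


@dataclass
class ProcessEvent:
    """Hypothetical process-creation record used only for this sketch."""
    parent_name: str
    name: str
    cmdline: str


PYTHON_NAME = re.compile(r"^python(\d+(\.\d+)?)?(\.exe)?$", re.IGNORECASE)
BASE64_USE = re.compile(r"base64|b64decode", re.IGNORECASE)
DYNAMIC_EXEC = re.compile(r"\bexec\s*\(|\beval\s*\(")


def looks_suspicious(event: ProcessEvent) -> bool:
    """Flag python-spawned python running inline base64-decoded code."""
    python_spawned_python = bool(
        PYTHON_NAME.match(event.name) and PYTHON_NAME.match(event.parent_name)
    )
    # Pad with spaces so "-c" is only matched as a standalone argument.
    runs_inline_code = " -c " in f" {event.cmdline} "
    return (
        python_spawned_python
        and runs_inline_code
        and bool(BASE64_USE.search(event.cmdline))
        and bool(DYNAMIC_EXEC.search(event.cmdline))
    )
```

A rule this simple would be noisy and easy to evade on its own. The point is only that it keys on what the process does, not on a known-bad file hash.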

This validates multiple themes I’ve been tracking, including AI agents as attack vectors (OWASP Agentic Top 10), the cascading nature of supply chain compromise (Software Transparency), and the need for runtime behavioral detection rather than just signature matching.

It also, somewhat ironically, makes the strongest case for why Anthropic’s auto-approve mode needs more than an AI safety classifier. The exact scenario Willison warned about played out in production.

UK AISI: Evidence from 177,000 AI Agent Tools

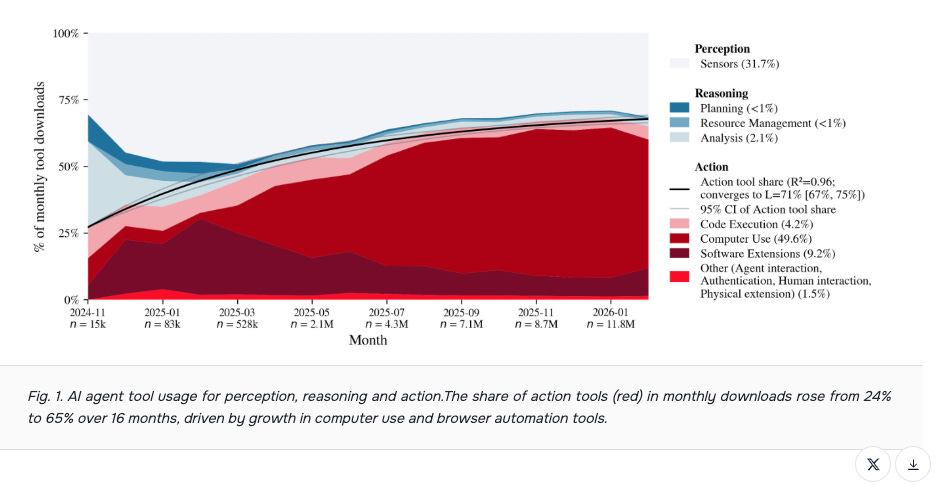

The UK AI Safety Institute, working with the Bank of England, published remarkable data on AI agent tool adoption. MCP tools grew from roughly 5,000 to 177,436 over 16 months, with downloads surging from 80,000 to 14 million. The most striking shift is that action tools grew from 24% to 65% of monthly downloads, confirming the fundamental transition from analysis to action that defines the agentic era.
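As a back-of-the-envelope check on those figures, the snippet below converts the start and end values into an implied compound monthly growth rate. It assumes smooth compounding over the full 16 months and assumes the download figures cover the same 16-month window, neither of which the report summary above states explicitly.

```python
def monthly_growth(start: float, end: float, months: int) -> float:
    """Compound monthly growth rate implied by a start value, end value, and period."""
    return (end / start) ** (1 / months) - 1


# Figures quoted above; the 16-month window for downloads is an assumption.
tools = monthly_growth(5_000, 177_436, 16)
downloads = monthly_growth(80_000, 14_000_000, 16)
print(f"tools: {tools:.1%} per month, downloads: {downloads:.1%} per month")
```

Under those assumptions, the tool count compounds at roughly 25% per month and downloads at roughly 38% per month.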

Software development and IT tools represent 67% of all published tools and 90% of downloads, which aligns with what we’re seeing in the market. Perhaps most concerning from a security perspective is that 28% of all MCP servers contain AI-generated code, and 62% of newly created servers in February 2026 were AI-generated.

This is the vibe coding supply chain risk at scale. When the tools that agents use are themselves built by AI, the attack surface compounds. The OWASP Agentic Skills Top 10 launch (below) could not be more timely.

Runtime: The New Frontier of AI Agent Security

CSO Online profiled the emerging runtime security market for AI agents. The thesis is “Shift Left, Shield Right”: move security controls into development while also implementing runtime monitoring as a last-mile safety net.

Microsoft is launching Agent 365 with runtime threat protection (GA May 1, 2026 at $15/user/month), NVIDIA’s OpenShell provides policy-based guardrails, and Cisco’s Agent Runtime SDK embeds enforcement directly into agent workflows. The core argument is that zero-day vulnerabilities and novel attack patterns cannot be anticipated at build time, making runtime monitoring essential. Given the SentinelOne/Claude case study above, this is not theoretical. Runtime detection caught what static analysis never could have.

OWASP Agentic Skills Top 10: Official Launch

Ken Huang announced the official launch of the OWASP Agentic Skills Top 10. For those following my work on the OWASP Agentic Top 10 and the broader ASI initiative, this is an important milestone. The timing is critical, as the ClawHub registry (the primary AI agent skill marketplace) was systematically poisoned in Q1 2026, with five of the top seven most-downloaded skills confirmed as malware. Critical vulnerabilities in Claude Code itself (CVE-2025-59536 at CVSS 8.7 and CVE-2026-21852 at CVSS 5.3) further demonstrate the attack surface.

The Top 10 recommends immediate skill inventory across all agent platforms, ed25519 signing before publication, pinning nested dependencies to immutable hashes, and including content hashes in manifests.
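The content-hash recommendation is the easiest of these to picture in code. The sketch below builds a SHA-256 manifest for a skill directory and later reports any file that is missing, modified, or unlisted. The manifest layout (relative path mapped to hex digest) is my own assumption and not a format defined by OWASP. Signing is left out because ed25519 needs a third-party library, and an unsigned manifest only helps if it is stored somewhere an attacker cannot rewrite.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_file(path: Path) -> str:
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(skill_dir: Path) -> dict[str, str]:
    """Map each file's path (relative to skill_dir) to its SHA-256 digest."""
    return {
        p.relative_to(skill_dir).as_posix(): sha256_file(p)
        for p in sorted(skill_dir.rglob("*"))
        if p.is_file()
    }


def verify_manifest(skill_dir: Path, manifest: dict[str, str]) -> list[str]:
    """Return paths that are missing, modified, or not listed in the manifest."""
    current = build_manifest(skill_dir)
    problems = [
        path for path, expected in manifest.items()
        if not hmac.compare_digest(current.get(path, ""), expected)
    ]
    problems += [path for path in current if path not in manifest]
    return sorted(problems)
```

An empty list from `verify_manifest` means the directory still matches what was recorded at publication time. Anything else should stop the skill from loading.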

This is the software supply chain security playbook Tony Turner and I wrote about in Software Transparency, adapted for the agentic era. I’m encouraged by the pace at which OWASP is producing actionable guidance.

NIST AI 800-4: Challenges Monitoring Deployed AI Systems

NIST published its first formal report on the challenges of monitoring deployed AI systems, based on three practitioner workshops with over 200 experts and an 87-paper literature review. The findings are sobering: there are no validated methodologies, no agreed metrics, and no standardized processes for production AI monitoring.

The most interesting gap they identified is human-AI interaction monitoring. Workshop practitioners discussed human factors far more than the published literature reflects, indicating the biggest blind spot is exactly where humans and AI systems interact.

Rock Lambros of RockCyber adds an important critique in his analysis: NIST’s proposed evaluation framework operates on the assumption that no adversaries are present, which is fundamentally incompatible with security requirements. You cannot evaluate security standards using a methodology designed for data formatting standards. Security operates in a fundamentally different reality, and the standards need to reflect that.

What Separates Real AI Governance from Policy Theater

This piece articulates something I’ve been observing across the industry. Most AI governance programs are compliance theater. The real test, as my friend Rock Lambros from Zenity notes, is whether violations result in consequences. If silence follows a violation, you have theater, not governance.

Real governance must be enforceable with underlying standards and SOPs, mapped to actual AI in use, and regularly audited. AI governance differs from traditional cybersecurity governance because you are dealing with probabilistic systems whose behavior changes based on input and model updates that happen outside the organization’s control. This is a useful framework for any CISO trying to stand up an AI governance program that actually works.

Mastercard: Verifiable Intent for Agentic Commerce

Mastercard released an open-source specification for “Verifiable Intent” that creates tamper-resistant cryptographic records linking identity, intent, and action for AI agent-authorized transactions. The protocol uses selective disclosure to share only the minimum information needed with each party.

Partners include Google, Fiserv, IBM, Checkout.com, and others. This is exactly the kind of infrastructure the agentic economy needs. As I’ve discussed through my work on the OWASP NHI Top 10, the identity challenge for AI agents extends beyond authentication into authorization and audit trails. Mastercard is building the financial infrastructure layer for agent-to-agent commerce, and the security architecture matters enormously.

T-MAP: Red-Teaming LLM Agents with Trajectory-Aware Search

This research paper proposes T-MAP, a trajectory-aware evolutionary search method for red-teaming AI agents. The key insight is that traditional LLM red-teaming focuses on eliciting harmful text outputs, but agent-specific vulnerabilities emerge through multi-step tool execution. T-MAP leverages execution trajectories to discover adversarial prompts that bypass safety guardrails through actual tool interactions.

Combined with the NIST-aligned security considerations paper that maps agent architectures against code-data separation, authority boundaries, and confused-deputy behavior, the academic community is building the theoretical foundations for agent security testing.

AppSec

Axios Compromised: A Supply Chain Attack on npm’s Most Popular HTTP Client

This is the supply chain story of the week, and given what we covered with TeamPCP/LiteLLM in issue #90, the timing could not be worse. Axios, the npm package that handles HTTP requests for roughly 80% of cloud and code environments with approximately 100 million weekly downloads, was compromised after attackers hijacked the npm account of jasonsaayman, the lead maintainer. Within a 39-minute window, two malicious versions were published: 1.14.1 and 0.30.4.

The payload was a hidden dependency called “plain-crypto-js” that silently deployed WAVESHAPER.V2, a cross-platform RAT covering Windows, macOS, and Linux. The postinstall hook fired on npm install, and the payload called back to sfrclak[.]com:8000 to retrieve stage-2 implants. Google Threat Intelligence Group attributed the attack to UNC1069, a financially motivated North Korea-nexus threat actor active since 2018.
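If you want a quick first pass over your own projects, the sketch below scans a parsed package-lock.json for the two axios versions and the plain-crypto-js dependency named above. It assumes a lockfile in the lockfileVersion 2 or 3 layout (a top-level `packages` map keyed by `node_modules/...` paths), and it only covers these indicators. Treat a clean result as a starting point, not as proof you were unaffected.

```python
import json
from pathlib import Path

# Indicators taken from the write-up above.
BAD_AXIOS_VERSIONS = {"1.14.1", "0.30.4"}
MALICIOUS_DEPENDENCY = "plain-crypto-js"


def find_indicators(lockfile: dict) -> list[str]:
    """Scan a parsed package-lock.json (v2/v3 layout) for the known indicators."""
    findings = []
    for path, meta in lockfile.get("packages", {}).items():
        # Keys look like "node_modules/axios" or "node_modules/a/node_modules/axios";
        # keep only the innermost package name.
        name = path.rpartition("node_modules/")[2]
        version = meta.get("version", "")
        if name == "axios" and version in BAD_AXIOS_VERSIONS:
            findings.append(f"{path}: axios@{version}")
        if name == MALICIOUS_DEPENDENCY:
            findings.append(f"{path}: {MALICIOUS_DEPENDENCY}@{version}")
    return findings


if __name__ == "__main__":
    lock_path = Path("package-lock.json")
    if lock_path.exists():
        for finding in find_indicators(json.loads(lock_path.read_text())):
            print(finding)
```

Run it from a project root. Any printed line means that lockfile resolved one of the malicious versions or the hidden dependency, and the machine that installed from it deserves a closer look.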

Multiple sources confirmed that execution was observed in roughly 3% of affected environments before removal. Given the install base, even 3% represents a massive number of compromised environments.

This is being compared to the 2021 ua-parser-js compromise and the event-stream incident for good reason. As I wrote in Software Transparency, the implicit trust model in open-source package registries is fundamentally broken. One compromised maintainer account can propagate malware to millions of installations within minutes.

The Complete Guide to Preventing Supply Chain Attacks

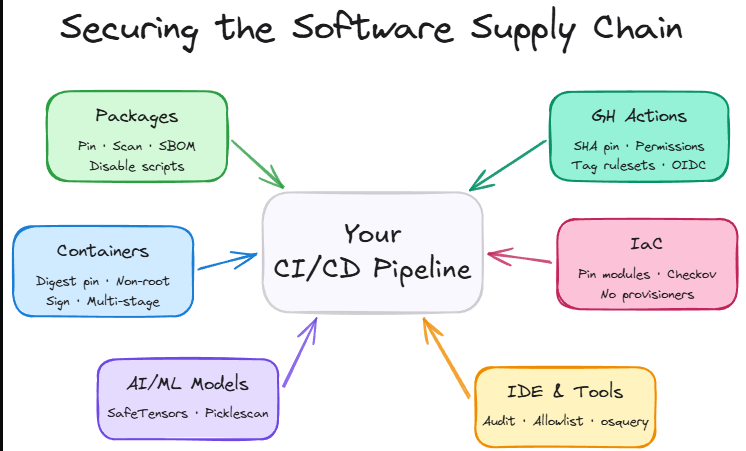

James Berthoty published practical guidance on supply chain defense that feels like essential reading after the Axios and TeamPCP campaigns. The key recommendations include pinning GitHub Actions to commit SHAs rather than version tags, restricting GitHub Actions access using least privilege, requiring cooldown periods before version updates, expanding SBOM data to include packages from local dev machines and pipelines (not just production), enforcing MFA on all package registries, and auditing user permissions aggressively.
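The first recommendation is straightforward to check mechanically. The sketch below reads the text of a GitHub Actions workflow and lists every `uses:` reference that is not pinned to a full 40-character commit SHA. It is a line-based regex pass, not a YAML parser, so treat it as a quick audit aid and not as a complete linter.

```python
import re

USES_LINE = re.compile(r"^\s*(?:-\s*)?uses:\s*([^\s#]+)")
FULL_SHA = re.compile(r"^[0-9a-f]{40}$")


def unpinned_actions(workflow_text: str) -> list[str]:
    """Return `uses:` references not pinned to a full 40-character commit SHA."""
    unpinned = []
    for line in workflow_text.splitlines():
        match = USES_LINE.match(line)
        if not match:
            continue
        reference = match.group(1).strip("'\"")
        # Local actions (./path) and docker:// images are out of scope here.
        if reference.startswith(("./", "docker://")):
            continue
        _, _, ref = reference.partition("@")
        if not FULL_SHA.match(ref):
            unpinned.append(reference)
    return unpinned
```

Feeding it the contents of each file under `.github/workflows/` gives a list of references such as `actions/checkout@v4` that still float on a mutable tag.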

Latio’s 2026 Application Security Report also identified four strategic approaches - minimal container images, secure package registries with pre-import scanning, backporting patches for application libraries, and OS-level patching for base images.

The Comforting Lie of SHA Pinning

This piece provides an important nuance to the “pin everything to SHAs” advice. The argument is that SHA pinning optimizes for machine verification but fails during human code review.

The author demonstrates this using the real Trivy attack: attackers changed SHAs while keeping version tag comments intact, and no tool validated whether the SHA actually corresponded to the claimed version. Transitive dependencies create additional exposure since unpinned actions within pinned actions still leave you vulnerable.
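The missing check described here, whether a pinned SHA really is the version its comment claims, can be sketched as follows. The `known_tags` mapping is a hypothetical input: in practice you would populate it from the upstream repository (for example via `git ls-remote --tags`) and make sure it holds commit SHAs. The sketch does nothing about transitive actions referenced inside a pinned action.

```python
import re

PINNED_WITH_COMMENT = re.compile(
    r"uses:\s*(?P<action>[\w.\-/]+)@(?P<sha>[0-9a-f]{40})\s*#\s*(?P<tag>\S+)"
)


def mismatched_pins(workflow_text: str,
                    known_tags: dict[tuple[str, str], str]) -> list[str]:
    """Flag pins whose SHA differs from the SHA recorded for the commented tag.

    known_tags maps (action, tag) to the commit SHA that tag resolves to upstream.
    """
    problems = []
    for match in PINNED_WITH_COMMENT.finditer(workflow_text):
        action, sha, tag = match["action"], match["sha"], match["tag"]
        expected = known_tags.get((action, tag))
        if expected is None:
            problems.append(f"{action}@{tag}: tag not found upstream")
        elif expected != sha:
            problems.append(
                f"{action}@{tag}: pinned {sha[:12]}, tag resolves to {expected[:12]}"
            )
    return problems
```

A reviewer who sees a familiar version comment next to a swapped SHA has little chance of spotting the change by eye. A check like this compares the two mechanically.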

The conclusion is not that SHA pinning is useless but that it is necessary but not sufficient. Multi-layered defense combining pinning with strict permissions, network monitoring, and automated security scoring is the right approach.

Final Thoughts

This week crystallizes a theme I keep returning to: the speed of AI adoption is outpacing every layer of security, from standards bodies to runtime controls to the basic trust model in open-source registries.

The Axios compromise and the SentinelOne case study together tell a complete story. In one case, a North Korean threat actor weaponized a trusted npm package and reached millions of environments in under 40 minutes. In the other, an AI coding agent autonomously pulled in a backdoored package as part of its normal workflow, and only behavioral EDR stopped it. Neither traditional security controls nor Anthropic’s new auto-approve classifier would have caught either attack at the point of installation.

The policy landscape is equally dynamic. A federal judge called the Trump administration’s actions against Anthropic “Orwellian,” California is signing AI safety executive orders in direct opposition to the White House, and the intelligence community named AI the top national security concern for 2026. The tension between innovation velocity and governance maturity has never been more acute.

I am encouraged by the building blocks emerging, such as OWASP’s Agentic Skills Top 10, NIST’s monitoring gap analysis, Mastercard’s Verifiable Intent specification, and the growing body of academic work on agent-specific red teaming. But as the AI governance piece rightly noted, the difference between real governance and policy theater is enforcement. Frameworks on paper mean nothing without the institutional will to implement them.

The scaffolding metaphor from Miessler is the right frame for where we are. AI is stripping away the overhead that made knowledge work look harder than it actually is, a theme echoed by PANW’s CEO too, and security is no exception. The question for every cybersecurity professional is whether your value is in the thinking or the scaffolding. If it is in the scaffolding, the clock is ticking.

Stay resilient.