Resilient Cyber Newsletter #88

Anthropic Sues U.S. Government, Kevin Mandia Raises $190M for Agentic OffSec, Vibe Security Radar, Claude Opus Finds 22 Firefox Vulns, Hooking Coding Agents & the Zero Day Clock

Welcome to issue #88 of the Resilient Cyber Newsletter!

This week felt like a turning point for several of the themes we’ve been tracking. Anthropic sued the Trump administration after the Pentagon designated it a supply chain risk, the first time that label has been applied to a US company. The same week, Anthropic’s Claude Opus 4.6 found 22 vulnerabilities in Firefox in just two weeks, and OpenAI launched Codex Security into research preview, scanning 1.2 million commits and flagging over 10,000 high-severity findings.

Kevin Mandia came out of retirement with Armadin, raising $190 million for autonomous AI agent security. CrowdStrike delivered a record fiscal year with $5.25 billion in ARR, while Okta’s earnings made clear that agentic identity is no longer a roadmap item; it drove 30% of Q4 bookings.

Meanwhile, the [un]prompted AI security conference brought 700 practitioners to San Francisco, Google’s zero-day report showed enterprise-targeted exploits are accelerating, and Cedar-based policy enforcement for coding agents continued to gain traction.

Let’s get into it.

Interested in sponsoring an issue of Resilient Cyber?

This includes reaching over 31,000 subscribers, ranging from Developers, Engineers, Architects, CISOs/Security Leaders and Business Executives.

Reach out below!

The challenge with AI isn’t adoption. It’s visibility and control.

AI is no longer a future concern or a controlled experiment. Agents, copilots, embedded AI in SaaS, and custom models are already operating across enterprise environments, touching real data, making real decisions, and acting at machine speed.

Yet, most organizations still don’t have clear answers about what AI is running, what data it can access, or how its actions are governed. Without visibility and control, AI will find sensitive data and amplify the impact when it’s misused.

Varonis helps organizations secure AI and the data it depends on, so adoption doesn’t turn into uncontrolled exposure. Don’t settle for a suite of point solutions that address isolated risks. Start securing everything you build and run with AI today.

Cyber Leadership & Market Dynamics

Anthropic Sues the Trump Administration Over Supply Chain Risk Designation

This is the biggest story in AI governance this week, and it is unprecedented in the most literal sense. The Pentagon designated Anthropic a supply chain risk after the company refused to remove two contractual red lines: no use of Claude for autonomous weapons and no use for mass surveillance of US citizens. Anthropic filed two lawsuits calling the federal action “unprecedented and unlawful,” arguing it violates the First Amendment and due process protections.

As I covered in issue #87, the Pentagon confrontation was escalating. Defense Secretary Hegseth had given Anthropic a deadline to provide unfettered access. What followed was the first time a US company has received the supply chain risk designation, a label historically reserved for foreign adversaries. White House spokeswoman Liz Huston framed it as the military operating under the Constitution rather than “any woke AI company’s terms of service.”

The broader implications here are significant. This is not just about one company’s contract dispute. It is about the relationship between frontier AI developers and the national security apparatus, and whether companies can maintain ethical guardrails on how their technology is deployed. Dozens of scientists from OpenAI and Google DeepMind filed an amicus brief supporting Anthropic, arguing the designation could harm US competitiveness and chill public discussion about AI risks and benefits.

Anthropic’s Supply Chain Shock Exposes a Bigger Problem

NetRise published an analysis that cuts through the political headlines to ask the operational question every security leader should be asking: if a major AI supplier suddenly becomes restricted, can your organization quickly determine where that supplier exists across the software you build, buy, and deploy?

Using their platform data, NetRise identified 476 official Anthropic package names spanning 3,434 versions across seven ecosystems. The dependency picture expanded to 127,441 direct or transitive dependent package versions, and six affected public container repositories account for over 203 million Docker Hub pulls.

This is the software supply chain problem Tony Turner and I wrote about in Software Transparency, applied to the AI era. Organizations do not just need more SBOMs. They need the ability to act on supply chain data when a supplier event becomes operationally urgent. The Anthropic designation may resolve itself through the courts, but the exercise of mapping your AI supply chain dependencies is one every organization should be doing regardless of the outcome.
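To make that exercise concrete, here is a minimal Python sketch of the first question NetRise poses: where does a given supplier show up in the SBOMs you already have? It assumes CycloneDX JSON SBOMs (a top-level `components` array whose entries may carry `name`, `version`, `purl`, a `supplier` object, and nested `components`). The watchlist, function names, and example package versions are hypothetical, and simple substring matching stands in for the richer supplier resolution a platform like NetRise performs.

```python
from __future__ import annotations

import json
from pathlib import Path

# Hypothetical watchlist of name fragments for a restricted supplier.
# Substring matching is deliberately crude and will over-match; tune it to your data.
WATCHLIST = ("anthropic",)


def iter_components(components: list[dict]):
    """Yield every component, including nested sub-components (CycloneDX allows nesting)."""
    for comp in components:
        yield comp
        yield from iter_components(comp.get("components") or [])


def matches_supplier(comp: dict, watchlist=WATCHLIST) -> bool:
    """True if the component's name, purl, or supplier name contains a watched term."""
    supplier = comp.get("supplier") or {}
    text = " ".join(
        [comp.get("name") or "", comp.get("purl") or "", supplier.get("name") or ""]
    ).lower()
    return any(term in text for term in watchlist)


def find_supplier_exposure(sbom: dict) -> list[tuple[str, str]]:
    """Return (name, version) pairs for components tied to a watched supplier."""
    return [
        (c.get("name"), c.get("version"))
        for c in iter_components(sbom.get("components") or [])
        if matches_supplier(c)
    ]


def scan_sbom_dir(directory: str) -> dict[str, list[tuple[str, str]]]:
    """Scan every *.json SBOM in a directory and map file name -> matching components."""
    results = {}
    for path in sorted(Path(directory).glob("*.json")):
        hits = find_supplier_exposure(json.loads(path.read_text()))
        if hits:
            results[path.name] = hits
    return results
```

Running `scan_sbom_dir` across the SBOMs for everything you build, buy, and deploy gives a first-pass answer in minutes; the harder, organization-specific work is keeping those SBOMs complete and current.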

Kevin Mandia Returns with Armadin: $190M for Autonomous AI Security

Kevin Mandia, who founded Mandiant and sold it to Google for $5.4 billion, is back with a new company and a record funding round. Armadin raised $189.9 million in combined seed and Series A from Accel, GV, Kleiner Perkins, Menlo Ventures, Ballistic Ventures, and In-Q-Tel (the CIA’s venture arm). The company is building autonomous cybersecurity agents that continuously reason, plan, and adapt like advanced human threat actors.

Mandia’s warning is worth listening to: “When you have AI on offense, what you are going to get is a technology that can think, can learn, can adapt.” The company has already hired over 60 employees and started working with Fortune 100 companies. When someone with Mandia’s track record raises $190M for AI agent security specifically, it validates what we’ve been saying about the agentic threat landscape. The adversaries are building autonomous offense. The defenders need autonomous defense.

I recently shared an in-depth interview with Kevin, which is worth watching.

CrowdStrike Delivers Record FY26

CrowdStrike’s Q4 earnings reinforced that cybersecurity demand is not softening, even as the broader SaaS market faces pressure. The company hit $5.25 billion in ending ARR (24% growth), with record net new ARR of $331 million in Q4 alone (47% year-over-year growth). For the full year, net new ARR exceeded $1 billion for the first time.

The most relevant signal for our audience: management noted that 80% of modern breaches are now non-malware-based, shifting strategic focus toward identity protection and zero standing privilege. CrowdStrike’s AI Detection and Response (AIDR) offering grew 5x quarter-over-quarter. The acquisitions of SGNL (continuous identity) and Seraphic Security (browser runtime) further signal where the company sees the next battleground: identity, agents, and the browser.

CrowdStrike Q4: Two Slides That Matter

Building on the CrowdStrike reporting, Cole Grolmus highlighted two specific slides from CrowdStrike’s earnings that deserve attention from security practitioners. The data reinforces the platform consolidation trend and the acceleration of AI-driven security capabilities. For those tracking how the cybersecurity market is evolving, CrowdStrike’s results suggest that the companies investing aggressively in AI and identity are being rewarded by customers and investors alike.

Okta Q4: Agentic Identity Becomes Real Revenue

Okta’s Q4 was equally telling. Revenue hit $761 million (11% growth), shares surged 11%, and the company announced a $1 billion buyback. But the real story is the agentic identity pivot.

The ratio of non-human identities to human users at enterprise Okta customers has reached 144:1. Okta’s newly launched “Okta for AI Agents” suite accounted for 30% of Q4 bookings, with deals that included agentic products seeing approximately 40% contract uplift. Management outlined two pricing models for agent identity: a “multiplier” model tied to human identities using multiple agents, and a connection-based model for autonomous agents that scales with the number of systems connected.

This is exactly the NHI challenge I’ve been writing about through the OWASP NHI Top 10. When you have 144 non-human identities for every human user, and those identities are increasingly autonomous agents rather than static service accounts, the identity surface becomes the primary security concern. Okta’s earnings confirm that the market is starting to price this in.

Insight Partners: Agent IAM Is the Defining Security Topic of 2026

Insight Partners published a thoughtful analysis framing Agent Identity and Access Management as the central security challenge of 2026. They identify three areas where innovation is needed: identity origination and traceability (including multi-hop delegation); intent monitoring and “action management” (essentially UEBA for agents); and permissions management through dynamic authorization and zero standing privilege.

The observation that most enterprises want to treat agents as “digital employees” with unique global identities aligns with what I’ve been seeing. BNY has already deployed 130 “digital workers” with human managers. But as Insight notes, NIST’s 2026 concept paper on agent identity is just the beginning, and standards are lagging far behind deployment. This is a familiar pattern in cybersecurity: adoption outpacing governance.
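As a rough illustration of two of Insight’s innovation areas, traceable multi-hop delegation and zero standing privilege, the Python sketch below issues only short-lived grants and refuses any agent whose delegation chain does not start at a known human. Everything here (`JitAuthorizer`, the five-minute TTL cap, the chain format) is a hypothetical design for illustration, not a reference to any vendor’s product or to the NIST concept paper.

```python
from __future__ import annotations

import time
from dataclasses import dataclass


@dataclass
class AgentIdentity:
    agent_id: str
    # Delegation chain from the originating human to this agent,
    # e.g. ["alice", "planner-agent", "coder-agent"] for a multi-hop delegation.
    delegation_chain: list[str]


@dataclass
class Grant:
    agent_id: str
    action: str
    resource: str
    expires_at: float  # epoch seconds; every grant is short-lived


class JitAuthorizer:
    """Hypothetical just-in-time authorizer: no standing grants, every grant expires."""

    def __init__(self, known_humans: set[str], max_ttl_seconds: float = 300.0):
        self.known_humans = known_humans
        self.max_ttl = max_ttl_seconds
        self.grants: list[Grant] = []

    def request(self, agent: AgentIdentity, action: str, resource: str,
                ttl: float, now: float | None = None) -> Grant:
        now = time.time() if now is None else now
        chain = agent.delegation_chain
        # Traceability: the chain must start at a known human and end at this agent.
        if not chain or chain[0] not in self.known_humans:
            raise PermissionError("delegation chain does not originate from a known human")
        if chain[-1] != agent.agent_id:
            raise PermissionError("delegation chain does not end at the requesting agent")
        # Cap the lifetime so no request can mint a long-lived (standing) grant.
        grant = Grant(agent.agent_id, action, resource, now + min(ttl, self.max_ttl))
        self.grants.append(grant)
        return grant

    def is_allowed(self, agent_id: str, action: str, resource: str,
                   now: float | None = None) -> bool:
        now = time.time() if now is None else now
        # Prune expired grants first, then look for an exact match.
        self.grants = [g for g in self.grants if g.expires_at > now]
        return any(
            (g.agent_id, g.action, g.resource) == (agent_id, action, resource)
            for g in self.grants
        )
```

The design choice worth noting is that expiry is enforced by the authorizer, not requested by the agent: even an agent asking for an hour gets at most the configured cap, so access has to be re-justified rather than left standing.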

Schellman Partners with Goldman Sachs Alternatives

Goldman Sachs Alternatives made a strategic investment in Schellman, one of the most recognized names in cybersecurity compliance and attestation. Schellman delivers assessments across SOC, ISO, FedRAMP, PCI, HITRUST, CMMC, and increasingly AI governance. The investment will accelerate expansion into health care, financial services, and international markets.

This signals that PE and institutional capital see cybersecurity compliance as a durable, growing market, particularly as AI governance requirements mature. As I’ve written before, compliance is security, and the demand for independent attestation is only going to increase as organizations navigate AI-specific regulatory frameworks.

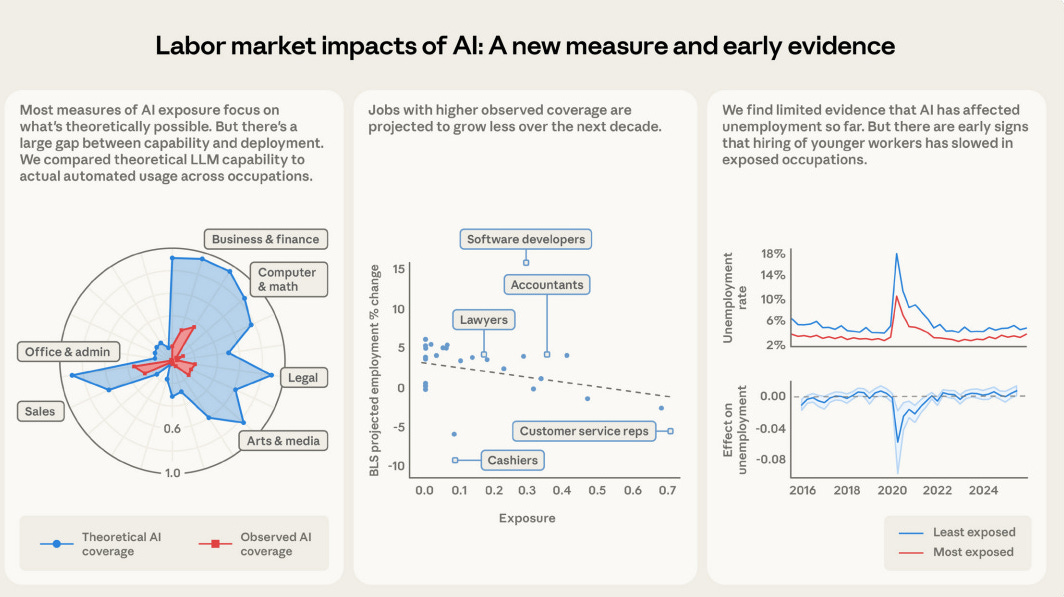

The US Labor Market and Its AI Problem

This piece examines the growing tension between AI automation and the labor market, with implications for the cybersecurity workforce. As AI agents take on more tasks previously performed by humans, the skills required of security professionals are shifting. The demand is moving toward people who can govern, architect, and audit AI-driven workflows rather than those performing tasks that agents can increasingly handle.

Lux Capital: Not Pulling Back from Founders

In a week where broader market uncertainty rattled tech investors, Lux Capital’s continued commitment to founders is noteworthy. Lux recently closed a $1.5 billion fund and has been one of the most active investors at the intersection of AI, defense technology, and frontier science. Their signal: despite macro headwinds, the thesis that AI and security are generational investment categories remains intact.

AI

Anthropic and Mozilla: Claude Opus 4.6 Finds 22 Firefox Vulnerabilities

This is one of the most significant demonstrations of AI-driven vulnerability discovery to date. Anthropic partnered with Mozilla and turned Claude Opus 4.6 loose on the Firefox codebase. In two weeks, Claude scanned nearly 6,000 C++ files, submitted 112 unique reports, and 22 were confirmed as new vulnerabilities, including 14 high-severity findings. That represents almost a fifth of all high-severity vulnerabilities patched in Firefox throughout 2025.

The timeline is remarkable. After just twenty minutes of exploration, Claude identified its first use-after-free vulnerability. Before Anthropic could validate and submit that first report, Claude had already found fifty more crashing inputs. One finding (CVE-2026-2796) was rated CVSS 9.8.

There are two takeaways here. First, the capability is real and practically useful. AI-driven vulnerability discovery at this scale, applied to one of the most well-tested open-source projects in the world, produced meaningful security improvements. Second, Anthropic themselves cautioned that the gap between vulnerability discovery and exploitation abilities is unlikely to last long. They spent $4,000 in API credits trying to generate proof-of-concept exploits and only succeeded in two cases, but the rate of progress suggests that barrier is temporary. That dual-use reality, where the same capability that helps defenders also empowers attackers, is the defining challenge of AI security.

Their point about the gap between discovery and exploitation is one I made recently myself, in my interview with Sergej Epp on the Zero Day Clock.

OpenAI Launches Codex Security in Research Preview

Just days after Anthropic’s Firefox disclosure, OpenAI launched Codex Security into research preview. The tool has already scanned over 1.2 million commits, identifying 792 critical and 10,561 high-severity findings. In early internal deployments, it surfaced a real SSRF and a critical cross-tenant authentication vulnerability that were patched within hours.

This is now a two-horse race in AI-powered code security, following the Claude Code Security launch I covered in issues #85 and #86. OpenAI’s approach works in three phases: building a security-relevant project model, identifying and classifying vulnerabilities based on real-world impact, and then pressure-testing findings in a sandboxed environment. Notably, false positives dropped by over 50% and over-reported severity decreased by 90% compared with the early beta.

The competitive dynamic between Anthropic and OpenAI in security tooling is ultimately good for the industry. Both companies are investing seriously in making AI useful for defenders, not just a threat vector. The challenge remains that these tools complement, rather than replace, runtime testing, and that organizations need to instrument both layers.

Codex for Open Source

OpenAI also announced plans to expand Codex Security access to open-source maintainers through a dedicated program. Given the chronic underfunding of open-source security, and the fact that the software supply chain depends on projects maintained by small, often volunteer teams, this is a welcome development. As I discussed in Software Transparency, the security of the open-source ecosystem is foundational to the security of everything built on top of it.

How AI Assistants Are Moving the Security Goalposts

Brian Krebs published a comprehensive overview of how autonomous AI agents are reshaping the security landscape. The piece focuses heavily on OpenClaw and the broader category of AI agents that run locally, access files, make network requests, and automate tasks on behalf of users. Krebs frames these tools as blurring the lines between trusted co-worker and insider threat, data and code, ninja hacker and novice code jockey.

This is mainstream security journalism catching up to the themes we’ve been covering since issue #82 and even earlier. When Krebs is writing about the same agentic security concerns we’ve been discussing, it signals that these issues have moved from specialist discourse to industry-wide attention.

[un]prompted: The AI Security Practitioner Conference

Gadi Evron brought together 700 practitioners and 300 virtual attendees for what many called the first truly practitioner-focused AI security conference. With nearly 500 talk submissions, 48 presentations across two days, and speakers from Google, Meta, OpenAI, Anthropic, and Trail of Bits, the depth of content was impressive. Dan Guido closed Day Two explaining how Trail of Bits rebuilt around AI to reach 200 bugs per engineer per week.

One data point that stood out: Anthropic’s GTG-1002 report showed adversaries running Claude Code at 80-90% autonomous execution. As one speaker noted: “Your adversary has an AI. If you’re at tab-completion for defense, that’s a strategic failure, not a skills gap.” This conference reinforced that AI security is now its own discipline, not a subcategory.

How Trail of Bits Became AI-Native

Dan Guido shared how Trail of Bits has embedded AI across the firm, with all 140 employees using Claude Code in YOLO mode. Their tools, including claude-code-devcontainer and dropkit, reflect a deep integration of AI into security workflows. The result: 200 bugs per engineer per week, a productivity multiplier that would have been unimaginable a year ago.

This is the practical counterpoint to fears about AI replacing security professionals. Trail of Bits has not reduced headcount. They have amplified capability. That is the model for how security organizations should be thinking about AI adoption, not as a threat to jobs but as a force multiplier for the people doing the work.

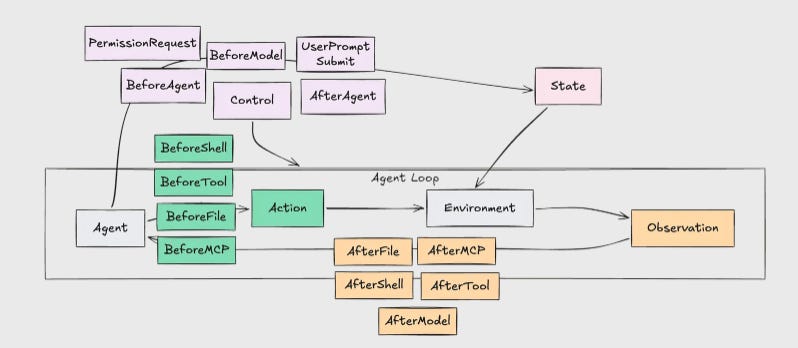

Hooking Coding Agents with the Cedar Policy Language

Josh Devon from Secure Trajectories published a detailed exploration of using Cedar policies to enforce security boundaries on coding agents. The piece reinforces the “lethal trifecta” model: when an agent combines access to sensitive data, exposure to untrusted content, and the ability to execute state changes, indirect prompt injection can lead to exfiltration or RCE.

The demos are compelling. In one, a Cursor agent downloads a malicious skill from a public marketplace that attempts to collect environment variables and exfiltrate them via HTTP. Because the Cedar policy framework has tagged the trajectory as confidential and marked it with an exfiltration taint, the shell command is blocked.
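The decision in that demo can be reduced to a few deterministic rules. The Python sketch below is not Cedar syntax and not Secure Trajectories’ implementation; it is a hypothetical illustration of the same idea, where the trajectory accumulates a `confidential` label and an `untrusted` taint, and an egress-capable shell command is forbidden once all three legs of the lethal trifecta are present. Tool names, egress markers, and the trusted registry prefix are assumptions.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical markers; a real policy would use structured tool metadata, not substrings.
EGRESS_MARKERS = ("curl ", "wget ", "http://", "https://")
TRUSTED_SKILL_PREFIX = "internal-registry/"


@dataclass
class Trajectory:
    """What the agent session has touched so far."""
    labels: set[str] = field(default_factory=set)  # sensitivity, e.g. "confidential"
    taints: set[str] = field(default_factory=set)  # provenance, e.g. "untrusted"


def is_egress(tool: str, arg: str) -> bool:
    """A shell command that can move data off the host."""
    return tool == "shell" and any(m in arg for m in EGRESS_MARKERS)


def authorize(traj: Trajectory, tool: str, arg: str) -> bool:
    """Forbid egress once sensitive data and untrusted content meet in one trajectory."""
    if is_egress(tool, arg) and "confidential" in traj.labels and "untrusted" in traj.taints:
        return False
    return True


def record(traj: Trajectory, tool: str, arg: str) -> None:
    """Propagate labels and taints after an allowed call."""
    if tool == "install_skill" and not arg.startswith(TRUSTED_SKILL_PREFIX):
        traj.taints.add("untrusted")
    if tool == "read_env" or (tool == "read_file" and arg.endswith(".env")):
        traj.labels.add("confidential")


def run(calls: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Evaluate each tool call before it executes, then update the trajectory."""
    traj = Trajectory()
    decisions = []
    for tool, arg in calls:
        allowed = authorize(traj, tool, arg)
        decisions.append((tool, "allow" if allowed else "deny"))
        if allowed:
            record(traj, tool, arg)
    return decisions
```

Replaying the demo’s sequence (install a skill from a public marketplace, read environment variables, then `curl` them out) yields allow, allow, deny. The sketch allows by default and adds one targeted forbid for brevity; Cedar itself denies by default unless a `permit` policy matches, and a matching `forbid` overrides any `permit`, which is the safer posture for real agent policies.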

This builds on Phil Windley’s Cedar work that I covered in issues #84 and #87. The pattern of policy-as-code for agent authorization, where every tool invocation is evaluated against deterministic security intent, continues to mature. This is one of the most promising architectural patterns for agent governance.

Mark Russinovich: Opus 4.6’s Security Audit of 1986 Code

Mark Russinovich, CTO of Microsoft Azure, shared a fascinating experiment: having Claude Opus 4.6 perform a security audit of code he wrote in 1986. The post generated significant discussion about AI’s ability to analyze legacy codebases, a practical capability that matters enormously given how much critical infrastructure runs on decades-old code. When the CTO of Azure is publicly impressed by a competitor’s AI doing security analysis, that tells you something about the state of the art.

a16z Podcast: AI Security Discussion

The a16z podcast featured a timely discussion on AI security that aligns with the themes we’ve been tracking. For those who prefer audio content, this is worth an hour of your time to hear the investor and builder perspective on where AI security is heading.

Stifel: AI Deployment and Security in the Enterprise

Stifel’s research report on AI deployment and security in the enterprise provides useful data on how organizations are actually adopting and securing AI. For those tracking the gap between AI adoption and security maturity, this report offers quantitative evidence of the trends we’ve been discussing.

OWASP Top 10 for LLM Applications: 2026 Survey Results

Steve Wilson published the results of the 2026 OWASP Top 10 for LLM Applications community survey. As someone who has contributed to both the OWASP Agentic Top 10 and the NHI Top 10, I’m always interested in how the community is evolving its understanding of AI security risks. The LLM Top 10 has shaped much of the industry’s thinking, and these survey results will inform the next iteration as agentic systems bring a different class of threats.

Agentic AI Landscape Overview

This comprehensive overview of the agentic AI landscape is a useful reference for anyone trying to map the ecosystem. As the number of agent frameworks, protocols, and deployment patterns proliferates, having a structured view of the landscape helps security teams understand what they need to protect.

CB Insights: AI Agent Predictions for 2026

CB Insights published their AI agent predictions, offering a data-driven view of where enterprise agent adoption is heading. The research reinforces the trajectory we’ve been tracking: agents moving from experimental to production, from single-task to multi-agent, and from developer tools to enterprise-wide workflows.

AppSec

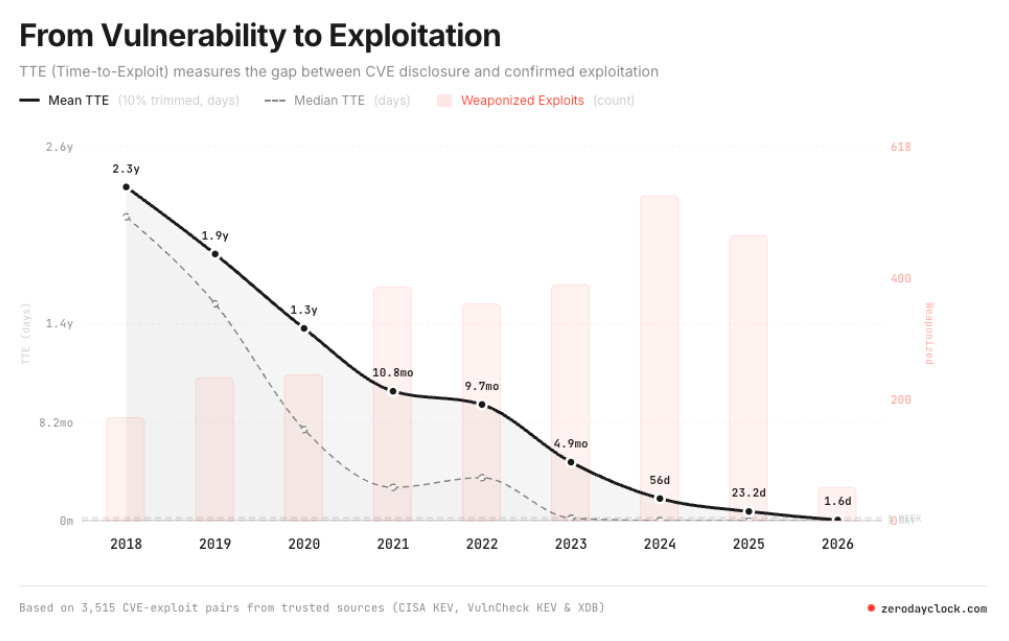

Before the Breach: The Zero Day Clock and the Race Against Exploitation

The Zero Day Clock is ticking, and the numbers should make every security leader uncomfortable. In this episode, I sit down with Sergej Epp, CISO at a leading security firm, who built the Zero Day Clock after a weekend experiment using AI to discover vulnerabilities firsthand. What he found shocked him: with no professional vulnerability research background and just a few hours of work, he was successfully finding zero days across major security projects using AI models and basic scaffolding.

Show Notes

Sergej shares how a weekend AI experiment led him to discover multiple zero days across major security projects with no professional vulnerability research experience, and why that should alarm the entire industry

The “Verifier’s Law” explained: offense has cheap, deterministic validators (pop a shell, exfiltrate data, trigger an XSS) while defense faces expensive, ambiguous validation (parsing SIEM alerts, measuring security posture), giving AI-accelerated offense a structural advantage

The Zero Day Clock synthesizes 3,500+ CVE-exploit pairs and shows the mean time to exploit for actively exploited vulnerabilities is now under two days, while organizations still operate on 14-to-30-day patch cycles

20 years of ignored warnings: from Ross Anderson’s 2001 economics paper through Bruce Schneier, Halvar Flake’s “the patch is the advisory” insight, and DARPA’s Cyber Grand Challenge, the industry has consistently failed to act on clear signals

AI can now reverse engineer patches to identify underlying flaws and generate working exploits in minutes, potentially breaking coordinated disclosure models and compressing the window between patch release and active exploitation to near zero

The regulation paradox: the EU risks overregulating AI in ways that hamper defenders while attackers face no such constraints, and the U.S. is pushing deregulation that may remove the only forcing function for vendor accountability; Sergej and Chris discuss outcome-based regulation as a potential middle path

Defenders have a data advantage: by understanding their own environments, infrastructure, and processes, security teams can detect AI-driven attacks through behavioral anomalies like hallucinated API calls, non-existent user accounts, and other artifacts of AI-generated attack playbooks

The Zero Day Clock’s real power is as a board-level communication tool, a single slide that translates the patching gap into a number executives and policymakers can’t ignore, shifting the conversation from “are we compliant?” to “are we fast enough?”
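One way to operationalize the defenders’ data advantage Sergej describes is to check activity against what actually exists in your environment. The Python sketch below, assuming you can export an inventory of real accounts and API endpoints plus a simple event feed, flags events that reference users or endpoints that do not exist, the kind of artifact a hallucinated attack playbook might leave behind. The event schema and example values are hypothetical.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    source_ip: str
    username: str
    endpoint: str


def find_environment_anomalies(events, known_users: set[str], known_endpoints: set[str]):
    """Flag events that reference accounts or endpoints that do not exist here."""
    findings = []
    for e in events:
        reasons = []
        if e.username not in known_users:
            reasons.append(f"unknown user {e.username!r}")
        if e.endpoint not in known_endpoints:
            reasons.append(f"unknown endpoint {e.endpoint!r}")
        if reasons:
            findings.append((e, reasons))
    return findings


def anomalies_by_source(findings) -> Counter:
    """Count anomalous events per source; a burst from one source suggests a scripted playbook."""
    return Counter(e.source_ip for e, _ in findings)
```

Legitimate typos and stale clients will also trip these checks, so in practice the findings are a triage signal to correlate with other telemetry rather than a verdict on their own.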

Zero-Day Exploits Hit Enterprises Faster and Harder

Google’s annual Zero-Days in Review report for 2025 paints a sobering picture. Of 90 zero-days tracked, nearly half targeted enterprise technologies like security appliances, VPNs, networking devices, and enterprise software platforms. China-linked groups doubled their zero-day exploitation from 2024, accounting for at least 10 of 16 state-sponsored zero-days. Ransomware groups also doubled their zero-day usage. Commercial surveillance vendors overtook state-sponsored hackers for the first time.

These numbers reinforce what CrowdStrike’s threat report showed in issue #85: attacks against enterprise infrastructure are accelerating in both speed and sophistication. Vulnerability exploitation remains the top initial access method, ahead of stolen credentials and phishing. For those of us focused on exposure management, as I discussed in the Security Middle Child report with Intruder, these findings underscore why the shift from periodic vulnerability management to continuous threat and exposure management is not optional.

XBOW: AI Pentesting vs. DAST

XBOW’s comparison of AI-driven pentesting versus traditional DAST scanning highlights a meaningful shift in how application security testing works. Their agents coordinate to discover vulnerabilities, attempt exploit paths, and validate with proof-of-concept payloads, something DAST fundamentally cannot do for business logic vulnerabilities.

The benchmark results are notable: XBOW matched a 20-year veteran pentester’s 85% solve rate on 104 web security benchmarks, but did it in 28 minutes compared to 40 hours. Whether you find those numbers fully convincing or not (and there are valid critiques about the benchmark scope), the direction is clear. Agentic pentesting that reasons about application logic, not just pattern-matches against known vulnerability signatures, is a meaningful evolution beyond DAST.

4 Ways to Prepare Your SOC for Agentic AI

CSO Online published practical guidance for SOC teams preparing for agentic AI adoption. The piece addresses both the defensive use of agents in SOC workflows and the need to detect and respond to agent-based threats. For security operations teams that are still operating with pre-AI playbooks, this is a useful starting point for thinking about what changes when both attackers and defenders have autonomous agents.

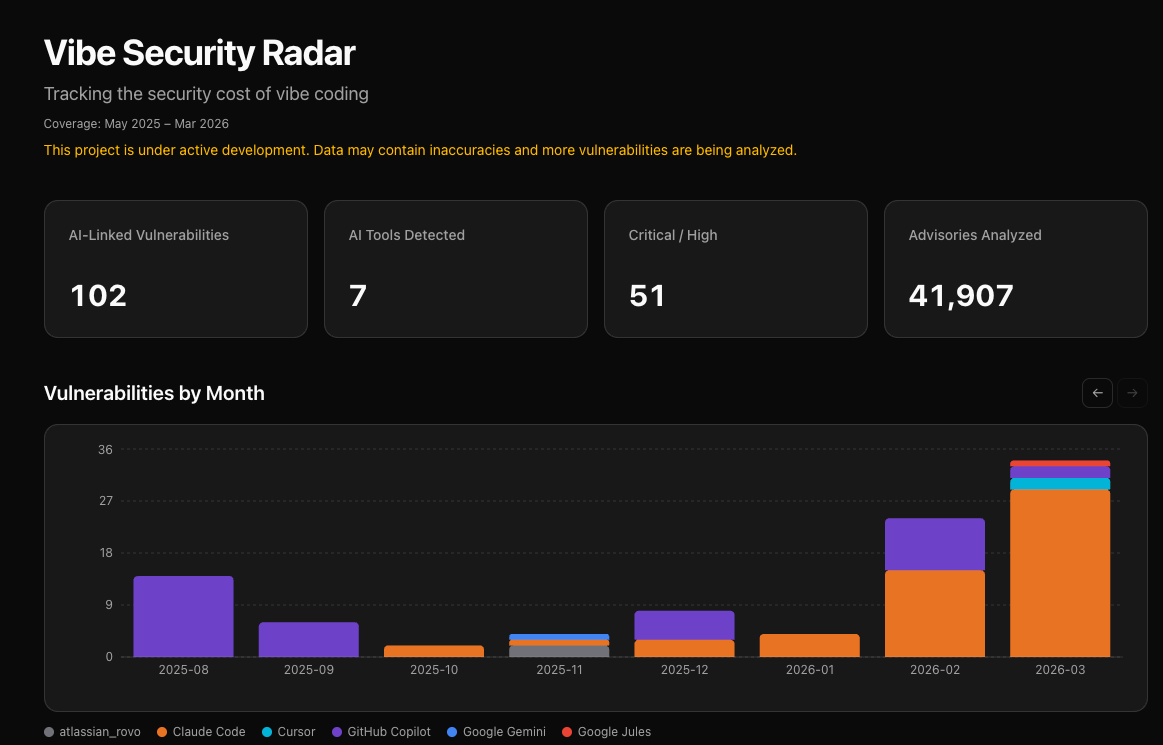

Vibe Radar: Tracking Vibe Coding Security

This tool provides real-time tracking of security issues in applications built with vibe coding platforms. As I’ve been writing about in “Secure Vibe Coding,” the explosive growth of AI-generated applications is creating a new category of security debt that the industry is only beginning to understand. Tools like Vibe Radar help quantify the scale of the problem, which is the first step toward addressing it systematically.

ClawSec: Security Suite for OpenClaw Agents

Prompt Security (a SentinelOne company) released ClawSec v0.0.1, a security skill suite for OpenClaw agents. Given the scale of the malicious skill problem, with researchers finding that 12% of ClawHub skills were malicious in January 2026 and the count of malicious skills growing to 824+ by mid-February, automated security tooling for agent platforms is essential.

As I’ve covered since issue #83, the OpenClaw security saga continues to be one of the most important stories in agent security. ClawSec provides drift detection, security audits, file integrity monitoring, and CVE advisory enrichment for agent configurations. This is the kind of defense-in-depth tooling the ecosystem needs.
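Of the ClawSec capabilities listed, file integrity monitoring and drift detection are the easiest to picture. Below is a minimal Python sketch of the general technique: hash every file under an agent’s configuration or skills directory, then diff against an approved baseline. It is not ClawSec’s implementation, and the directory it would scan is an assumption left to the reader.

```python
from __future__ import annotations

import hashlib
from pathlib import Path


def snapshot(root: str) -> dict[str, str]:
    """Map each file's path (relative to root) to its SHA-256 digest."""
    base = Path(root)
    return {
        p.relative_to(base).as_posix(): hashlib.sha256(p.read_bytes()).hexdigest()
        for p in sorted(base.rglob("*"))
        if p.is_file()
    }


def diff_snapshots(baseline: dict[str, str], current: dict[str, str]) -> dict[str, list[str]]:
    """Report files added, removed, or modified since the baseline was taken."""
    shared = baseline.keys() & current.keys()
    return {
        "added": sorted(current.keys() - baseline.keys()),
        "removed": sorted(baseline.keys() - current.keys()),
        "modified": sorted(k for k in shared if baseline[k] != current[k]),
    }
```

Take the baseline when a skill set is reviewed and approved, store it somewhere the agent cannot write to, and rerun the diff on a schedule; any non-empty `added`, `removed`, or `modified` list is drift worth investigating.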

Is AI Killing Cybersecurity?

This piece examines the provocative question of whether AI is fundamentally undermining cybersecurity or transforming it. As I’ve argued repeatedly, the answer is neither simple disruption nor simple replacement. AI is reshaping what security looks like, who does it, and how it gets done. The organizations that lean in, as Trail of Bits has done by going AI-native, will thrive. Those that resist will find themselves outpaced by both the adversaries and the defenders who embraced the change.

Final Thoughts

This week crystallized a tension that will define cybersecurity for years to come: one of the most capable AI models in the world found 22 Firefox vulnerabilities in two weeks, while its creator was designated a supply chain risk by the Pentagon and went to court to preserve its ethical guardrails. The Anthropic saga is not just a legal dispute. It is the opening chapter of a much larger conversation about how we govern technologies that are too powerful to leave unregulated and too important to lock away.

The market is responding with urgency. Kevin Mandia raised $190 million because he believes autonomous AI hackers are inevitable. CrowdStrike’s and Okta’s earnings show that customers are spending on identity, AI detection, and agent security at accelerating rates. Insight Partners is calling Agent IAM the defining security topic of the year. OpenAI and Anthropic are racing to build security tools that could reshape the AppSec industry.

But what gives me the most hope is the practitioner community. The 700 people who showed up to [un]prompted. The Trail of Bits team hitting 200 bugs per engineer per week. Josh Devon building Cedar-based policy enforcement for coding agents. The researchers publishing structured threat models for agent protocols. These are the people doing the actual work of making agentic AI safer, and their contributions matter more than any earnings report or legal filing.

The guardrails are being tested, in courtrooms, in codebases, and in conference rooms. What we build during this window of transition will determine whether AI becomes the most powerful tool defenders have ever had, or the most dangerous weapon adversaries have ever wielded. I remain optimistic, but only because people are doing the work.

Stay resilient.