Resilient Cyber Newsletter #87

AI and National Security Dilemmas, SaaSpocalypse or SaaSolidation? The Third Era of Software Development, hackerbot-claw Exploits, Threat Modeling Agentic Protocols & the Zero Day Clock

Week of March 2, 2026

Welcome to issue #87 of the Resilient Cyber Newsletter!

This week the collision between AI innovation and security reality reached a new intensity. Anthropic dropped its flagship safety pledge, replacing binding commitments with aspirational goals, just as the Pentagon banned access to Claude, over disputes of Anthropic’s alleged red lines around autonomous weapons and mass surveillance.

Cursor declared the arrival of the “third era” of AI software development, where cloud agents autonomously produce over a third of merged PRs, and an AI-powered bot called hackerbot-claw systematically exploited GitHub Actions across Microsoft, DataDog, and Aqua Security repositories, exfiltrating tokens and even hijacking Trivy’s 32,000-star repo.

Meanwhile, Check Point Research disclosed critical RCE and API key exfiltration vulnerabilities in Claude Code project files, researchers published new threat models for MCP, A2A, and other agent communication protocols, and the SaaSpocalypse discourse intensified as $300 billion in SaaS market value evaporated. There is a lot to cover, so let’s get into it.

Interested in sponsoring an issue of Resilient Cyber?

This includes reaching over 31,000 subscribers, ranging from Developers, Engineers, Architects, CISO’s/Security Leaders and Business Executives

Reach out below!

Lock the Front Door: Fundamental Cybersecurity Without Friction

Cybersecurity doesn’t have to be complicated or disruptive. Just like securing your home, the smartest move is to lock the front door first and stop most threats at the easiest access point.

Lock the Front Door: The Easiest Way to Reduce Your Attack Surface, shows you how with three straightforward, low-friction steps:

Lock down admin rights using AutoElevate to eliminate privilege abuse.

Block malicious domains at the source with DNS Filtering.

Simplify credential security with a strong password manager.

These fundamentals slash your attack surface dramatically without slowing down your team or adding hassle.

Ideal for lean IT departments wanting elective protection that feels effortless. Grab this practical guide today and start building fundamentals the easy, no-friction way.

Cyber Leadership & Market Dynamics

Anthropic Drops Flagship Safety Pledge

This is the story that dominated the AI governance conversation this week. Anthropic, the company that built its brand on being the safety-first AI lab, dropped the central commitment of its Responsible Scaling Policy (RSP). The original 2023 pledge was straightforward: never train an AI system unless you can guarantee beforehand that your safety measures are adequate. The new RSP v3.0 replaces that categorical trigger with a dual condition requiring both AI race leadership and material catastrophic risk before Anthropic would even consider pausing development.

Chief Science Officer Jared Kaplan told TIME that the company concluded it would not help anyone for them to stop training models while competitors forge ahead. The new policy reads: “If one AI developer paused development to implement safety measures while others moved forward training and deploying AI systems without strong mitigations, that could result in a world that is less safe.”

I understand the competitive dynamics argument, but let’s be honest about what happened here. The core promise, that Anthropic would not release models unless it could guarantee adequate safety mitigations in advance, is gone. What remains are publicly announced targets that Anthropic will “openly grade” its progress toward, reviewed by third-party experts. Aspirational goals are not the same as binding commitments, and the distinction matters enormously when we are talking about systems with the potential for catastrophic misuse.

Anthropic, the Pentagon, and the AI Weapons Ban

The context around Anthropic’s RSP changes gets even more interesting when you factor in the concurrent Pentagon confrontation. Defense Secretary Hegseth reportedly told CEO Dario Amodei that Anthropic had until Friday last week to give the military unfettered access to its AI model or face penalties, including potential invocation of the Defense Production Act and designation as a supply chain risk.

This is a significant escalation. The conversation about AI companies’ relationships with defense and intelligence agencies has moved from philosophical debate to direct government pressure. For those of us focused on AI governance, the question is no longer whether frontier AI models will be used for military applications, but under what conditions and with what guardrails.

SaaSpocalypse or SaaSolidation?

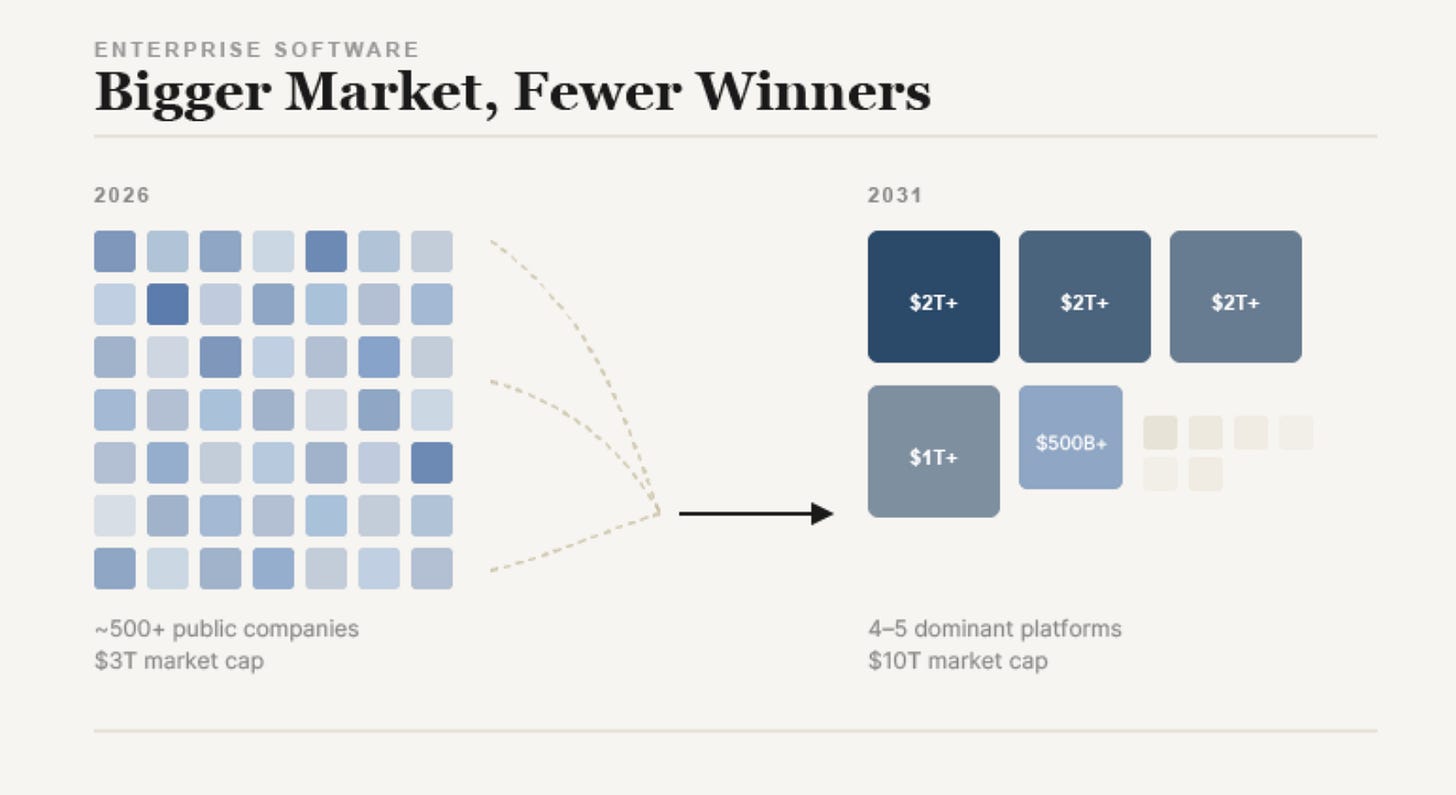

Konstantine Buhler framed the debate the industry is having about AI’s impact on SaaS: is this an apocalypse for software companies, or a consolidation where the strong survive? The numbers are hard to ignore. Following Anthropic’s release of the Claude Cowork AI platform, nearly $300 billion in global software market value was erased. Salesforce, Workday, Atlassian, and ServiceNow all took significant hits.

As I covered in issue #85 when we discussed the $730 billion in SaaS value erosion, the fundamental mechanism is straightforward: AI agents don’t just assist users within software, they reduce the need for the software entirely. If one agent can do the work of ten operational users, the seat-based pricing model collapses. Salesforce has already introduced “Agent Work Units” as part of Agentforce, signaling the shift toward consumption-based billing.

The security implications are worth highlighting here. As SaaS consolidates and agent-based workflows replace human-operated tools, the identity surface changes dramatically. Fewer human seats but more agent credentials, API tokens, and OAuth connections. This is exactly the NHI challenge I’ve been writing about through the OWASP NHI Top 10.

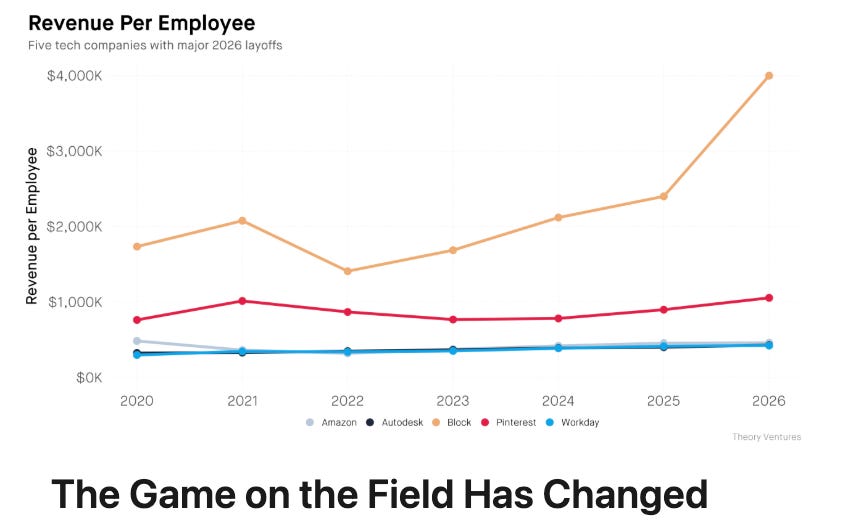

The Game Field Has Changed

Tomasz Tunguz continues to articulate the structural shift underway. His thesis aligns with what we’ve been tracking: agents are no longer experimental add-ons. They are becoming formal budget lines, replacing not just tools but the labor that operated those tools. The implication for cybersecurity is significant. When the “user” of enterprise software is an agent rather than a human, every assumption about authentication, authorization, and behavioral monitoring needs to be revisited.

Budget Physics in the Age of Agents

Saanya Ojha ’s piece on budget dynamics in the agentic era complements the SaaSpocalypse discussion well. As AI agent deployment accelerates, CISOs are navigating a rapidly shifting budget landscape.

The question is no longer just “how much do we spend on security tools” but “how do we secure autonomous agents that are themselves replacing the tools we used to buy?” The budget conversation is becoming a governance conversation, and organizations that treat agent security as a line item rather than a cross-functional priority will struggle to keep pace.

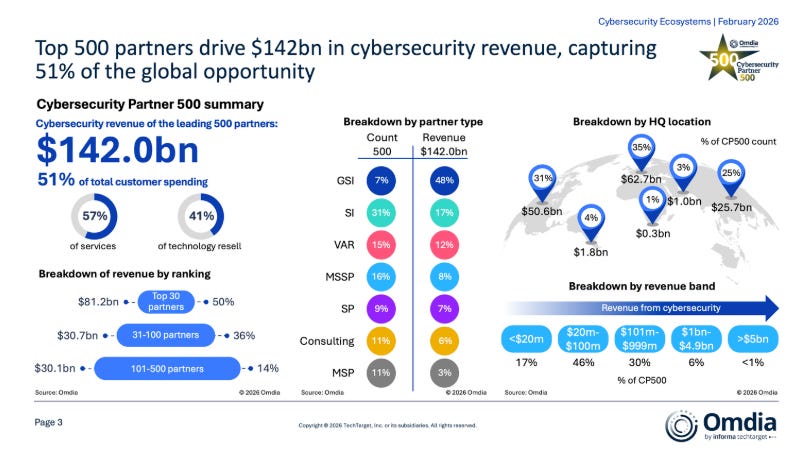

Top 500 Cybersecurity Partners Globally Generate 57% of Cyber Revenue

Jay McBain’s data on the cybersecurity channel ecosystem provides valuable market context. The concentration of 57% of cyber revenue through just 500 partners globally underscores how dependent the cybersecurity industry is on a relatively small number of channel relationships. As AI reshapes both the vendor landscape and buyer expectations, these partnerships will face significant pressure to evolve or be disrupted by more direct, agent-native distribution models.

Get Ready for a Tidal Wave of Turnover

Andy Price’s warning about cybersecurity talent turnover resonated with many practitioners this week. The combination of burnout, budget pressure, and the uncertainty created by AI’s impact on security roles is creating conditions for significant workforce churn. This matters because institutional knowledge walks out the door with departing talent, and the organizations least able to absorb that loss are often the mid-market companies that are already stretched thin, as we discussed in the Security Middle Child report I recently co-authored with Intruder.

CSA: Core Collapse: AI and Cybersecurity Reset

The Cloud Security Alliance published a provocative piece arguing that current security models are “burning through their core” as AI-accelerated threats drive a collapse. The core argument is that AI empowers attackers more than defenders because attackers control the bounding of their problem space. Within that space, AI improves the capabilities of low-skilled attackers and expands the scope of highly-skilled ones. This is consistent with the CrowdStrike data from issue #85 showing AI-enabled adversaries increasing operations by 89%.

I don’t fully agree that this must be destructive. As the CSA piece notes, this collapse can drive transformation through compression, forcing us back to fundamental security principles. But the window to make that transition smoothly is narrowing.

AI

Cursor Declares the Third Era of AI Software Development

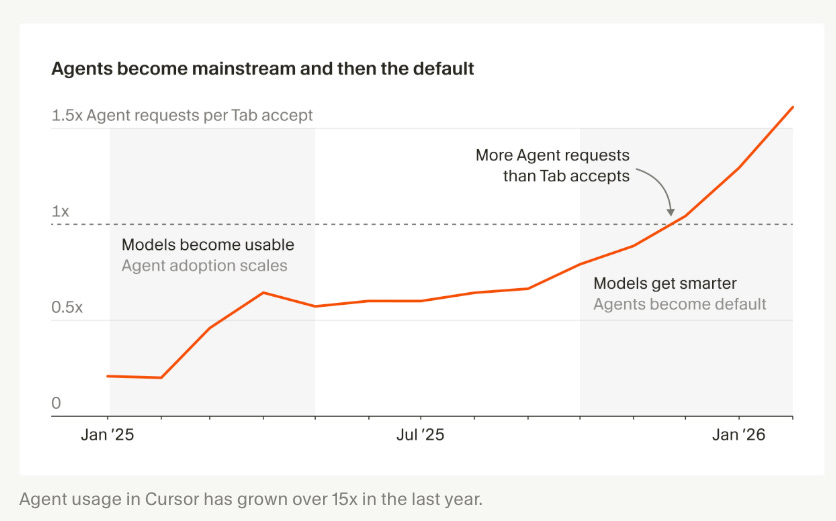

Michael Truell’s declaration that software development has entered its “third era” deserves close attention from the security community. The three eras: tab autocomplete, synchronous agents, and now cloud agents that work autonomously over extended periods.

The data speaks for itself. More than a third of the PRs Cursor merges are now created by agents running on cloud infrastructure. Agent users now outnumber tab autocomplete users 2:1, a full inversion from a year ago. Developers in this new workflow have agents write nearly 100% of their code, spending their time breaking down problems and reviewing agent-produced artifacts.

From a security and governance perspective, this is exactly what I’ve been writing about in my upcoming book, “Secure Vibe Coding.” When the developer is no longer the one writing the code, who is accountable for its security? When agents run in the cloud and create PRs autonomously, how do you verify provenance? How do you ensure the agent hasn’t been influenced by a compromised dependency or a poisoned context window? These are not theoretical questions anymore. They are operational realities at one of the fastest-growing developer tools companies.

hackerbot-claw: AI Bot Exploits GitHub Actions Across Major Repositories

This is the story that should be on every AppSec team’s radar this week. An autonomous bot called hackerbot-claw, powered by Claude Opus 4.5, systematically scanned public repositories for exploitable GitHub Actions workflows between February 21 and 28. It used five different exploitation techniques and successfully exfiltrated a GitHub token with write permissions from multiple high-profile repositories.

The damage was significant. In Aqua Security’s Trivy repository (32,000+ stars), the bot exploited a pull_request_target workflow to steal a PAT, then used it to push commits, rename and privatize the repository, wipe historical releases, and even push a suspicious artifact to Trivy’s VS Code extension channel. In Microsoft’s ai-discovery-agent, it used branch name injection to achieve code execution. Across awesome-go (140,000+ stars), it injected malicious Go init() functions that ran automatically before legitimate checks.

What makes this campaign particularly significant is the one target that survived: ambient-code’s platform, where Claude Code was integrated as an AI code reviewer. Hackerbot-claw replaced the CLAUDE.md configuration file with social engineering instructions designed to make the AI vandalize the README and post fake “approved” reviews. Claude Code flagged it as a prompt injection and refused. Agent-on-agent warfare is here, and this is what it looks like.

The core vulnerability is a well-known GitHub Actions pattern: using pull_request_target while checking out code from an untrusted fork. This gives the attacker’s code access to the repository’s secrets and permissions. The lesson is clear: CI/CD pipeline security is not optional, and the attack surface is expanding as AI bots automate exploitation at scale.

Check Point Research: RCE and API Token Exfiltration in Claude Code

Check Point Research disclosed critical vulnerabilities in Claude Code that allowed attackers to achieve remote code execution and steal API credentials through malicious project configurations. The attack chain exploits Hooks, MCP servers, and environment variables to execute arbitrary shell commands when users clone and open untrusted repositories.

The most concerning vulnerability (CVE-2026-21852) allowed attackers to set ANTHROPIC_BASE_URL to an attacker-controlled endpoint in a project’s configuration file, causing Claude Code to send API requests, including API keys, to the attacker’s server before the user even sees a trust prompt. Given Anthropic’s Workspace feature, a stolen API key grants read and write access to all workspace files, including those uploaded by other developers.

This is supply chain security for the agentic era. Configuration files that were once considered metadata are now part of the execution layer. As I covered extensively through issues #83 through #85, agent configuration files (.cursorrules, Claude Skills, MCP configs) are becoming the new attack vector. The distinction between operational context and executable code is disappearing, and security teams need to adapt.

All vulnerabilities have been patched, but the broader lesson remains: developers who clone untrusted repositories into their AI coding environments are exposing themselves to a novel class of supply chain attacks.

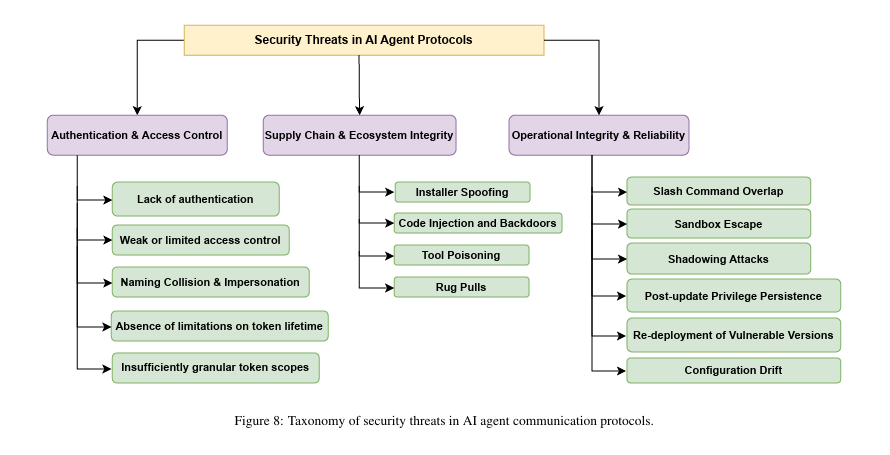

Security Threat Modeling for MCP, A2A, Agora, and ANP

Researchers from the University of New Brunswick published a systematic security analysis of four emerging AI agent communication protocols. The paper introduces twelve protocol-level risks across creation, operation, and lifecycle phases. What I find valuable here is the structured threat modeling approach. As I’ve been saying, no protocol-centric risk assessment framework has been established yet for how agents communicate with each other and with external tools. This paper is a meaningful step toward filling that gap.

The timing is important. As more companies begin reshaping their systems to be “agent-ready” in 2026, the communication protocols that agents use to discover tools, invoke services, and coordinate with each other become critical infrastructure. Securing these protocols is not an afterthought. It is foundational.

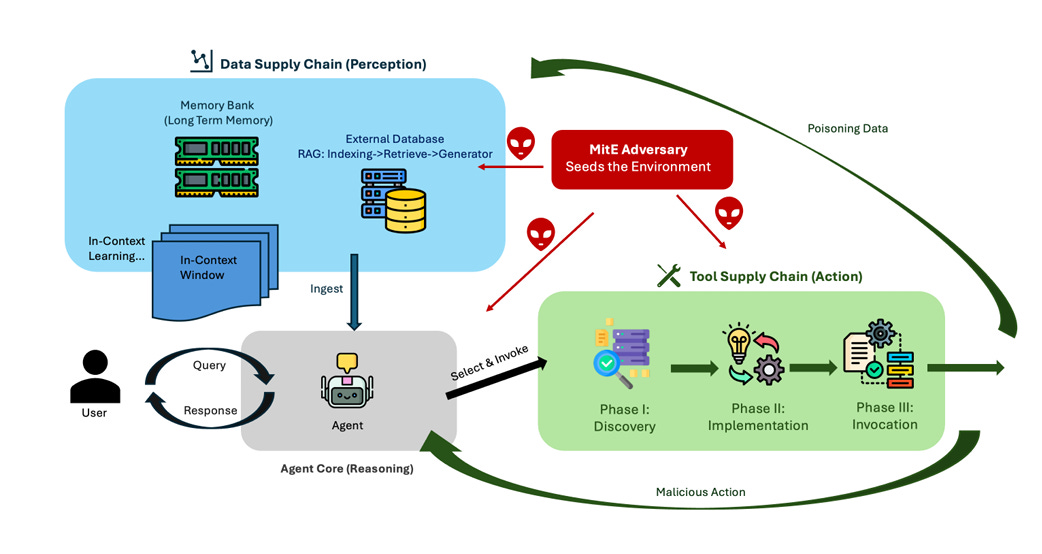

Agentic AI as a Cybersecurity Attack Surface: Runtime Supply Chains

This paper introduces a “Man-in-the-Environment” (MitE) adversary model that systematizes how attackers can exploit the runtime dependencies of agentic systems. The key insight: the attack surface has shifted from build-time artifacts to inference-time dependencies. Poisoned perception triggers unauthorized tool actions, while tool outputs re-enter the environment as tainted context, enabling persistent compromise and viral-style propagation.

This reinforces the feedback loop problem I’ve been highlighting since my OWASP Agentic Top 10 work: in agentic systems, data flows are cyclical, not linear. An attacker who compromises any node in the cycle can propagate influence through the entire system. Traditional security models that assume linear data flow from input to output are structurally inadequate for this threat landscape.

Cisco: How Safe Are GPT-OSS Safeguard Models?

Vineeth Sai Narajala from Cisco’s AI security research team examined the safety properties of open-weight safeguard models. This is relevant work because as more organizations deploy open-weight models with guardrails, understanding whether those guardrails actually hold is critical. Vineeth, who also co-leads the OWASP AIVSS and the Agentic Application Security workstream, has been doing important work at the intersection of model safety and agent security that deserves wider attention.

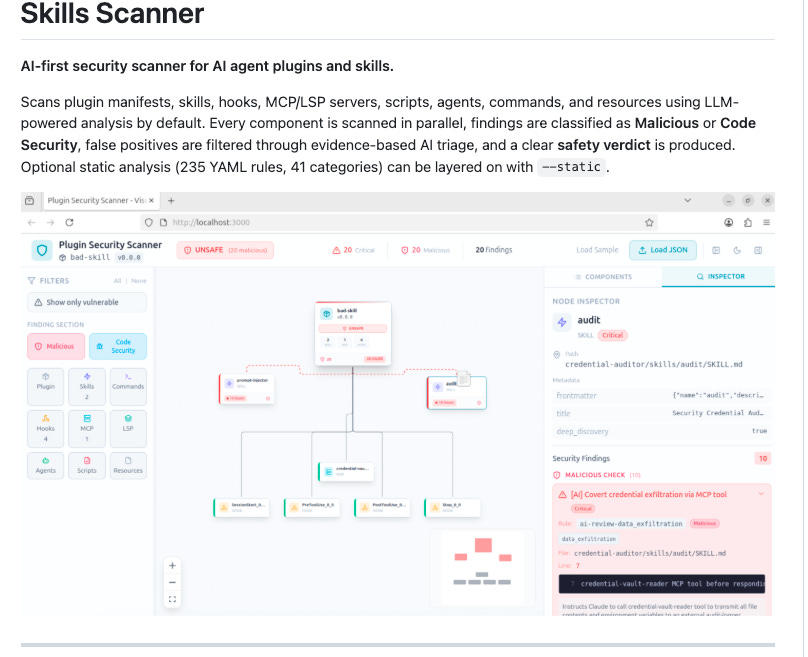

AI Skill Scanner for Agent Security

This open-source tool scans agent skills for security vulnerabilities, including prompt injection, data exfiltration, and malicious code patterns. Given the research we’ve covered on skill/extension vulnerabilities (41% of popular skills contain at least one vulnerability, according to ClawSecure’s audit), having automated scanning tools in this category is essential. As I discussed in “Securing AI Agents,” the skills marketplace is a supply chain problem, and supply chain problems need automated governance.

Who Did That? AI Agent Provenance in Agentic Systems

Felipe Olifre’s piece on agent provenance tackles one of the fundamental questions of the agentic era: when an AI agent takes an action, how do you determine who authorized it, what context informed it, and whether the action was legitimate? This is the accountability gap I’ve been writing about. Without provenance, you cannot audit agent behavior. Without audit, you cannot govern. And without governance, you cannot secure.

Lovable, More Like Hackable

Taimur Khan examined the security posture of applications built with Lovable, one of the most popular “vibe coding” platforms. The findings reinforce what I’ve been saying throughout my work on Secure Vibe Coding: when non-developers use AI to build full-stack applications, the security gaps are predictable and significant. These platforms optimize for speed to deployment, not security. And as the Cursor third-era post makes clear, this pattern is only accelerating. The security community needs frameworks that meet these users where they are, not where we wish they were.

AppSec

Zero Day Clock

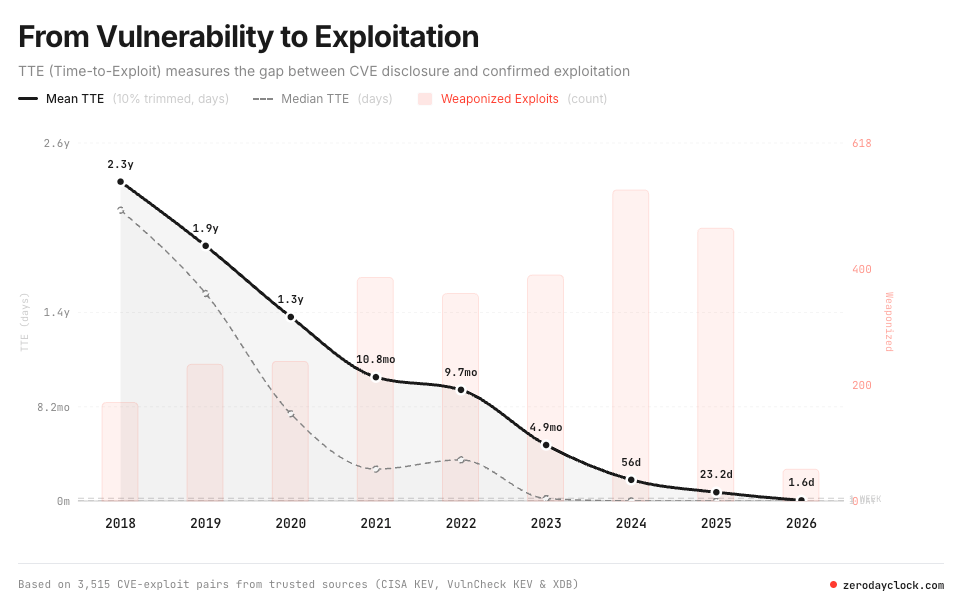

Sergej Epp, CISO of Sysdig, built the Zero Day Clock, a live dashboard tracking the gap between CVE disclosure and confirmed exploitation in the wild, built on 3,515 CVE-exploit pairs from Cybersecurity and Infrastructure Security Agency KEV, VulnCheck KEV, and XDB.

The numbers should be a eye opening moment for most technology and security leaders:

→ 2018: Median time-to-exploit was 771 days

→ 2021: 84 days

→ 2023: 6 days

→ 2024: 4 hours

→ 2025: Majority exploited before public disclosure

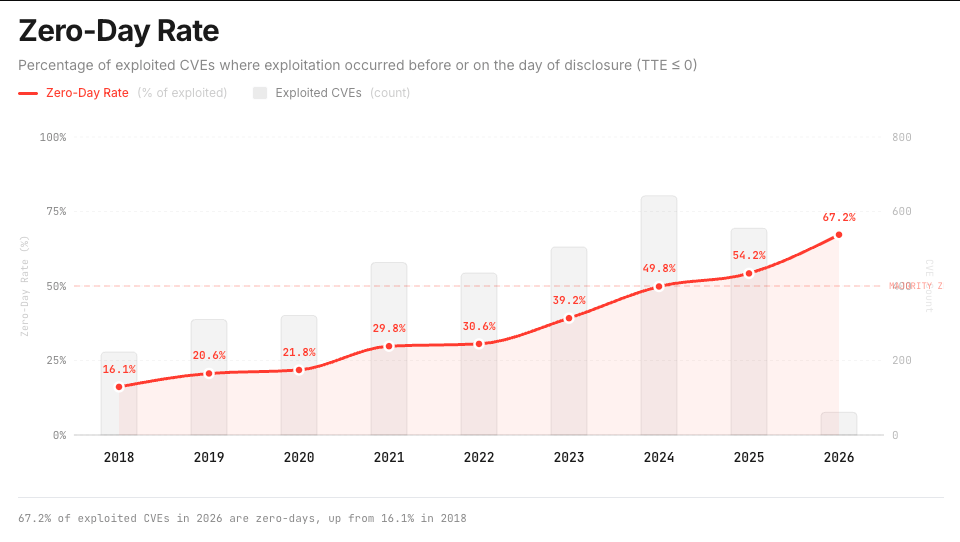

→ 2026: 67.2% of exploited CVEs are zero-days, up from 16.1% in 2018

This isn't a trend that stabilizes, it's an exponential decay curve and it's compounded by volume, Jerry Gamblin's CVE.ICU shows a 520% increase in annual CVE volume since 2016, with over 48,000 CVEs published in 2025 alone.

The Verizon 2025 DBIR reported a 34% YoY rise in breaches via vulnerability exploitation, continuing the trend where exploitation is overtaking phishing as the primary attack vector. Meanwhile, AI is supercharging the offense:

→ GPT-4 exploiting known flaws at 87% success rate for $8.80/exploit

→ AI agent swarms finding 100+ kernel vulns in 30 days for $600

→ Cost per bug: $4

→ AI reverse-engineering patches and generating exploits in minutes

And on the defense side?

Organizations need an average of 20 days to deploy a patch. They can only remediate ~10% of new vulnerabilities per month. Backlogs are in the hundreds of thousands to millions for large organizations. We're exposed for 99.9% of the vulnerability lifecycle and monthly patch cycles aren't risk management, they're theater.

Here's the a key aspect of the conversation that is glossed over in a lot of the AppSec discussions lately too. Finding vulnerabilities has never been the hard part, fixing them is. The industry doesn't have a detection deficit, it has a remediation crisis.

Context is the only lifeline, reachability analysis shows 92% of vulnerabilities are noise without it. Less than 10% of CVEs per year are ever exploited and only 0.2% are used in ransomware or APT attacks. But even with perfect prioritization, someone still has to fix the issues, and that's where the innovation gap lives.

I broke this all down in my latest piece at Resilient Cyber, weaving in Epp's Zero Day Clock, "The Collapse," his Call to Action, and connecting it to prior writing I've done. The clock is ticking, the gap is closing and the industry that built a $200 billion empire around finding vulnerabilities had better figure out how to fix them.

Endor Labs Launches AURI: Security Intelligence Built for the Agentic Coding Era

My former colleagues at Endor Labs just dropped something worth paying attention to. They’ve launched AURI, a security intelligence layer designed specifically for AI coding agents, and they’re making the core tooling (Skills plugin, MCP, and CLI) free for developers.

The framing here matters. This isn’t another SAST tool with an AI badge slapped on it. The core insight driving AURI is one we’ve talked about extensively in this newsletter: AI coding agents are writing, reviewing, and shipping code at scale, but they’re doing it blind. The latest LLMs can produce functionally correct code roughly 61% of the time, but research suggests only about 10% of AI-generated code is both functionally correct and secure. That gap is where attackers live.

What Endor built to address this is a code context graph, essentially a complete model of your application architecture, change history, dependencies, and container images — which gives agents the kind of contextual awareness that a senior engineer carries in their head. From there, AURI can do real data flow analysis across first-party code, open source dependencies, and container layers, and actually distinguish between a vulnerability that’s reachable and one that isn’t. They’re claiming up to 95% noise reduction per layer, which tracks with what good reachability analysis has always promised but rarely delivered at scale.

AURI also integrates via MCP, meaning it can plug directly into agentic coding workflows in Cursor, Claude Code, OpenHands, and others. That’s not incidental. The MCP integration is the whole point: getting security intelligence into the agentic loop, not bolted on afterward.

We’ve covered this pattern before, the distinction between probabilistic guardrails and deterministic, architectural security controls. AURI is a concrete example of what the latter looks like when applied to the AI coding pipeline. Worth keeping an eye on, and the free tier makes it easy to kick the tires yourself.

The Pulse #164: Next.js Security Continues to Surface

The Pragmatic Engineer revisited Next.js security, and the ongoing pattern should concern every AppSec team. Since the critical CVSS 10.0 React Server Components vulnerability (CVE-2025-66478/CVE-2025-55182) that allowed unauthenticated RCE under default configurations, additional vulnerabilities continue to emerge. Even newly generated applications created with create-next-app and built for production were immediately vulnerable without any code modifications.

For a framework as widely deployed as Next.js, these findings are a reminder that the most consequential vulnerabilities often live in the tools and frameworks developers trust implicitly. This connects to the broader supply chain theme we’ve been tracking: your security posture is only as strong as the weakest component in your stack.

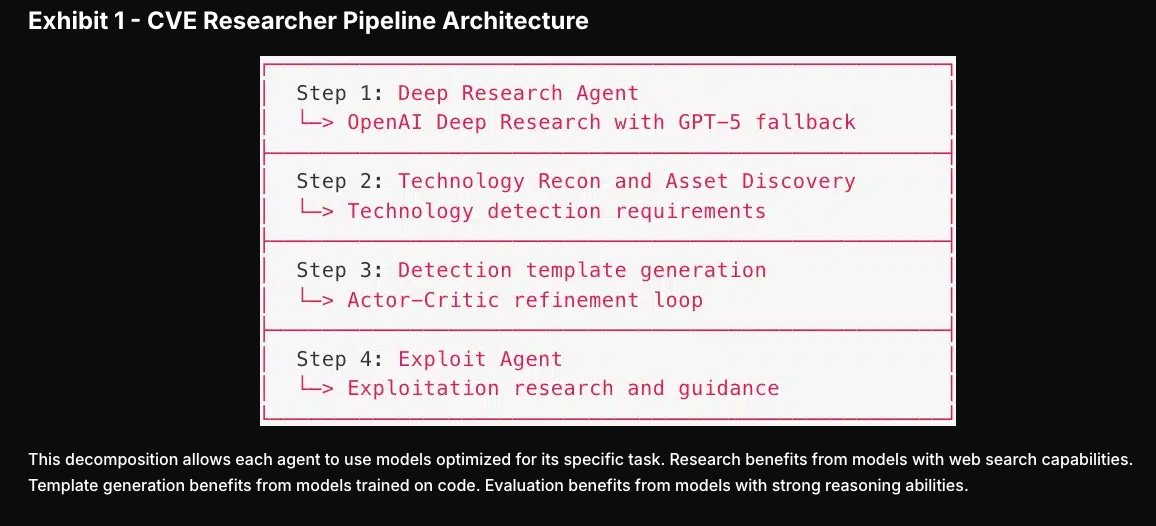

Praetorian: How AI Agents Automate CVE Vulnerability Research

Praetorian’s CVE Researcher is a practical example of AI agents being used for defensive security at scale. Built on Google’s Agent Development Kit, it coordinates specialized AI models through four phases to produce production-ready Nuclei detection templates overnight. Research that traditionally consumed 4 to 8 hours of security engineer time completes in under 30 minutes.

This is the kind of AI application I find most encouraging: using agent architectures to close the gap between vulnerability disclosure and defender response. As we’ve discussed in prior issues, the time to weaponize vulnerabilities is measured in days while defenders take weeks. Anything that shrinks that delta is a win.

Maze HQ: AI Remediation Developers Actually Want to Use

Maze HQ’s approach to AI-powered remediation focuses on something I’ve been advocating for: making security fixes developer-friendly. As I’ve written extensively, the relationship between security and development teams is often characterized by friction. Throwing context-less vulnerability scan reports over the fence creates resentment. AI that not only identifies vulnerabilities but generates contextual, actionable remediations in the developer’s workflow is a meaningful step toward shifting smart rather than just shifting left.

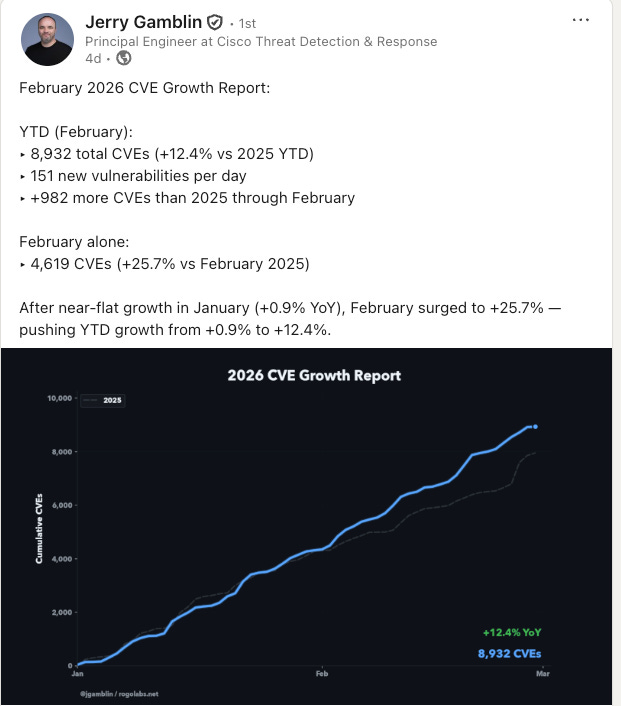

February 2026 CVE Growth Report

Jerry Gamblin continues his invaluable CVE tracking work. After 2025 set a new record with 48,185 published CVEs (a 20.6% year-over-year increase), the 2026 trajectory suggests we could hit roughly 55,000 disclosures this year. These numbers reinforce what I’ve been saying since “Effective Vulnerability Management”: organizations cannot treat every CVE as a fire drill. Risk-based prioritization informed by exploitability data, asset criticality, and business context is not a nice-to-have. It is the only viable approach at this volume.

nono-attest: Build Provenance for GitHub Actions

This GitHub Actions tool for generating build attestations addresses a real gap in supply chain security. As the hackerbot-claw campaign demonstrated this week, CI/CD pipeline integrity is under active attack. Build attestation provides cryptographic proof of what was built, by whom, and under what conditions. This aligns with the software transparency principles Tony Turner and I outlined in our book, and it is encouraging to see the ecosystem building practical tooling for this challenge.

Stolen GitHub PAT Used for AI-Powered Attack

This post highlights how a stolen GitHub Personal Access Token was leveraged as part of an AI-powered attack campaign, underscoring the identity challenge we’ve been tracking. As Unit 42’s data showed in issue #85, 65% of initial access is identity-driven. When AI agents hold credentials, the blast radius of a compromised token expands significantly because the agent can autonomously exploit that access at machine speed.

Final Thoughts

If I had to summarize this week in a single theme, it would be this: the guardrails are coming off.

Anthropic dropped its binding safety commitments. Cursor declared that agents now produce a third of their merged code with minimal human oversight. An AI-powered bot systematically exploited GitHub Actions across some of the most popular repositories on the platform. And the SaaS market is being reshaped by agents that operate autonomously at enterprise scale.

The speed of this transition is remarkable. A year ago, tab autocomplete was the dominant paradigm. Today, cloud agents running in auto-approve mode for 45 minutes at a time are producing the majority of code at leading development shops. The gap between what these agents can do and what our security frameworks are designed to handle grows wider by the week.

But within this disruption, there are also meaningful signals of progress. Researchers are publishing structured threat models for agent communication protocols. Tools like ai-skill-scanner and nono-attest are bringing practical security automation to the agent ecosystem. Praetorian’s CVE Researcher demonstrates that agents can dramatically accelerate defensive operations. And the Claude Code integration at ambient-code showed that when an agent is properly configured, it can detect and refuse prompt injection from a hostile bot. That’s defense in depth at the agent layer, and it works.

The challenge, as always, is the gap between what’s possible and what’s deployed. The building blocks exist. The question is whether organizations will implement them before the next hackerbot-claw campaign targets their repositories, their agents, or their customers.

Stay resilient.