The Identity Layer Underneath the Agentic Enterprise

A look at the evolving Agentic IAM landscape and Okta's approach to securing Agentic Identity

AI agents are the fastest-growing identity type in the enterprise, and most organizations have no coherent strategy for managing them.

The challenge is not theoretical. Enterprises are already running agents that authenticate to APIs, access sensitive data, spawn sub-agents, and execute complex multi-step workflows across systems that were never designed to accommodate non-human actors operating at machine speed and scale.

The identity infrastructure that took decades to build for human users is now being stress-tested by an entirely new class of principal. As an industry, we have already struggled to secure human users, with credential compromise remaining a leading attack vector year-over-year (YoY), and agents are now poised to make these longstanding challenges worse.

This is not a net-new problem. It is the latest and most acute example of a challenge the industry has been struggling with for years. Non-human identities have outnumbered human identities in most enterprises by a factor of 10 to 50 for some time now, and the OWASP NHI Top 10 published in 2025 catalogs the predictable consequences of that imbalance. I covered a lot of those challenges in a prior article on Juggling NHI Risks.

The list is familiar: improper offboarding of machine credentials, secret leakage, overprivileged service accounts, long-lived secrets that nobody rotates because rotation is operationally painful, and third-party NHIs that create supply chain attack surface. These are not emerging risks. They are existing risks that have persisted because the operational incentives to fix them never outweighed the friction of doing so.

AI agents inherit every one of those problems and add several new dimensions that existing NHI management frameworks were not built to handle. All of this arrives while most organizations are still struggling to build a coherent NHI strategy, let alone one for Agentic IAM.

An API key provisioned for a microservice has a predictable behavior pattern. It calls the same endpoints, at roughly the same cadence, with the same scope of access. An AI agent, by contrast, is non-deterministic by design. It reasons about what tools to invoke, which APIs to call, and what data to retrieve based on context that changes with every interaction, often at runtime.

The same agent operating under the same set of granted permissions can behave differently depending on the prompt it receives, the conversation history it carries, the environment and context it is exposed to, and the intermediate outputs of upstream agents in a chain.

This makes traditional static authorization models fundamentally insufficient, because the set of actions an agent might take is not fully knowable at the time permissions are granted, a concept that traditional IAM security never had to grapple with.

The Taxonomy Problem

Before organizations can secure agents, they need a shared vocabulary for what they are actually trying to secure. The term “AI agent” covers an enormous range of implementations, and treating them as a monolithic category leads to security strategies that are either too broad to be useful or too narrow to cover real-world deployments. Okta has been developing a taxonomy that breaks agents down across three dimensions that matter for identity and access control.

The first is agent type, which distinguishes between embedded agents that are baked into SaaS applications as inseparable features (e.g. Salesforce Agentforce), standalone SaaS agents that operate as independent third-party products, fully custom homegrown agents built in cloud environments using frameworks such as LangChain, agents built on provider-specific builder platforms that handle runtime and orchestration (e.g. managed services), automation platform agents built on visual orchestration tools that connect enterprise APIs, and local agents running on a user’s endpoint with access to the local terminal (e.g. endpoint coding agents).

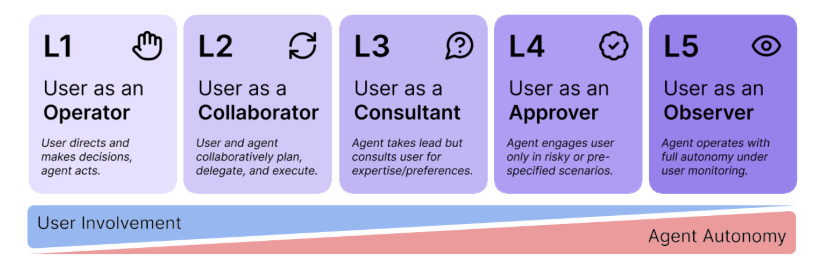

The second dimension is delegation type, ranging from attended interactions where a human directly prompts an agent on a 1-to-1 basis, through attended-chained interactions where a human starts the process and the agent spawns sub-agents, to fully unattended service agents triggered by cron jobs or webhooks, unattended-delegated agents where a non-human entity triggers an agentic chain, and autonomous agents that loop and spawn based on their own reasoning.

The third dimension is persistence, spanning persistent agents with indefinite lifetimes that last until explicitly revoked, session-bound agents tethered to short-term windows of one to eight hours, and ephemeral agents that exist for seconds to minutes.

This taxonomy matters because the security requirements and considerations vary dramatically across these categories. A persistent, autonomous agent that spawns sub-agents and holds long-lived credentials requires a fundamentally different identity management approach than an ephemeral, attended agent that exists for the duration of a single human-initiated interaction. Organizations that apply a one-size-fits-all policy across all of these types will either over-constrain their low-risk agents or under-constrain their high-risk ones.
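To make the three dimensions concrete, here is a minimal sketch of how the taxonomy could be modeled as data and used to pick a policy tier. The enum names mirror the categories described above, but the `risk_tier` function and its numeric weights are hypothetical illustrations of the idea, not Okta's model or any published scoring scheme.

```python
from dataclasses import dataclass
from enum import Enum


class AgentType(Enum):
    EMBEDDED = "embedded"
    STANDALONE_SAAS = "standalone_saas"
    CUSTOM = "custom"
    BUILDER_PLATFORM = "builder_platform"
    AUTOMATION_PLATFORM = "automation_platform"
    LOCAL = "local"


class Delegation(Enum):
    ATTENDED = "attended"
    ATTENDED_CHAINED = "attended_chained"
    UNATTENDED_SERVICE = "unattended_service"
    UNATTENDED_DELEGATED = "unattended_delegated"
    AUTONOMOUS = "autonomous"


class Persistence(Enum):
    PERSISTENT = "persistent"
    SESSION_BOUND = "session_bound"
    EPHEMERAL = "ephemeral"


@dataclass(frozen=True)
class AgentProfile:
    name: str
    agent_type: AgentType
    delegation: Delegation
    persistence: Persistence


# Hypothetical weights: more autonomy and longer lifetimes score higher.
DELEGATION_RISK = {
    Delegation.ATTENDED: 0,
    Delegation.ATTENDED_CHAINED: 1,
    Delegation.UNATTENDED_SERVICE: 2,
    Delegation.UNATTENDED_DELEGATED: 2,
    Delegation.AUTONOMOUS: 3,
}
PERSISTENCE_RISK = {
    Persistence.EPHEMERAL: 0,
    Persistence.SESSION_BOUND: 1,
    Persistence.PERSISTENT: 2,
}


def risk_tier(profile: AgentProfile) -> str:
    """Map a profile to a coarse policy tier (illustrative thresholds)."""
    score = DELEGATION_RISK[profile.delegation] + PERSISTENCE_RISK[profile.persistence]
    if score >= 4:
        return "high"
    if score >= 2:
        return "medium"
    return "low"
```

The point of the sketch is only that policy can key off the combination of dimensions: a persistent autonomous agent and an ephemeral attended one land in different tiers, so neither is over- or under-constrained by a single blanket rule.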

The Industry’s Response

The recognition that agents need purpose-built identity frameworks has produced a wave of standardization activity over the past year. The Coalition for Secure AI (CoSAI) published its Principles for Agentic IAM, establishing that agents must be human-governed and accountable, constrained by well-defined authority boundaries, and subject to risk-based controls. CoSAI identified what may be the central unsolved problem in this space, which is defining what the identity primitive for an AI agent actually is.

Current security infrastructure assumes a binary choice between human and service account, but agents represent something genuinely new: entities that act with a degree of autonomy that service accounts never had, but within boundaries that should ultimately trace back to human authorization.

On the protocol side, multiple IETF drafts are now circulating. The AAuth specification defines an Agent Authorization Grant as an OAuth 2.1 extension specifically designed to let agents obtain access tokens to invoke web-based APIs on behalf of their users, addressing use cases where traditional interactive OAuth flows are not feasible because the agent is operating through non-traditional channels.

NIST launched its AI Agent Standards Initiative in February 2026, organizing work around three pillars of industry-led standards development, community-led open source protocol maintenance, and foundational research in agent security and identity. This included an RFI for AI Agent Security as well as a draft concept paper focusing on AI Agent Identity & Authorization.

The pattern across all of these efforts is consistent. The industry is converging on the principle that agents are a distinct identity class that requires lifecycle management comparable to what organizations provide for human users, including discovery, authentication, authorization, continuous monitoring, and revocation. The organizations that treat agent identity as a bolt-on to their existing service account management will find themselves revisiting that decision within the year.

Where Okta Fits

Okta has spent 17 years building the identity plumbing that connects users to technology across more than 20,000 customer environments. This makes their perspective incredibly valuable, which is why I wanted to partner with them on this topic. Now they are extending their platform to treat AI agents as first-class identities through the general availability of Okta for AI Agents. I sat down with Okta’s CPO and its SVP & GM of AI Security to dive into the launch.

The core premise, as Ely Kahn, Okta’s Chief Product Officer, put it during our conversation, is that customers do not want a bunch of different ways to manage their identities across their enterprise. They want a single platform to manage all of their identities, human, non-human, and now agentic, rather than having identity governance sprawling across a growing collection of point tools.

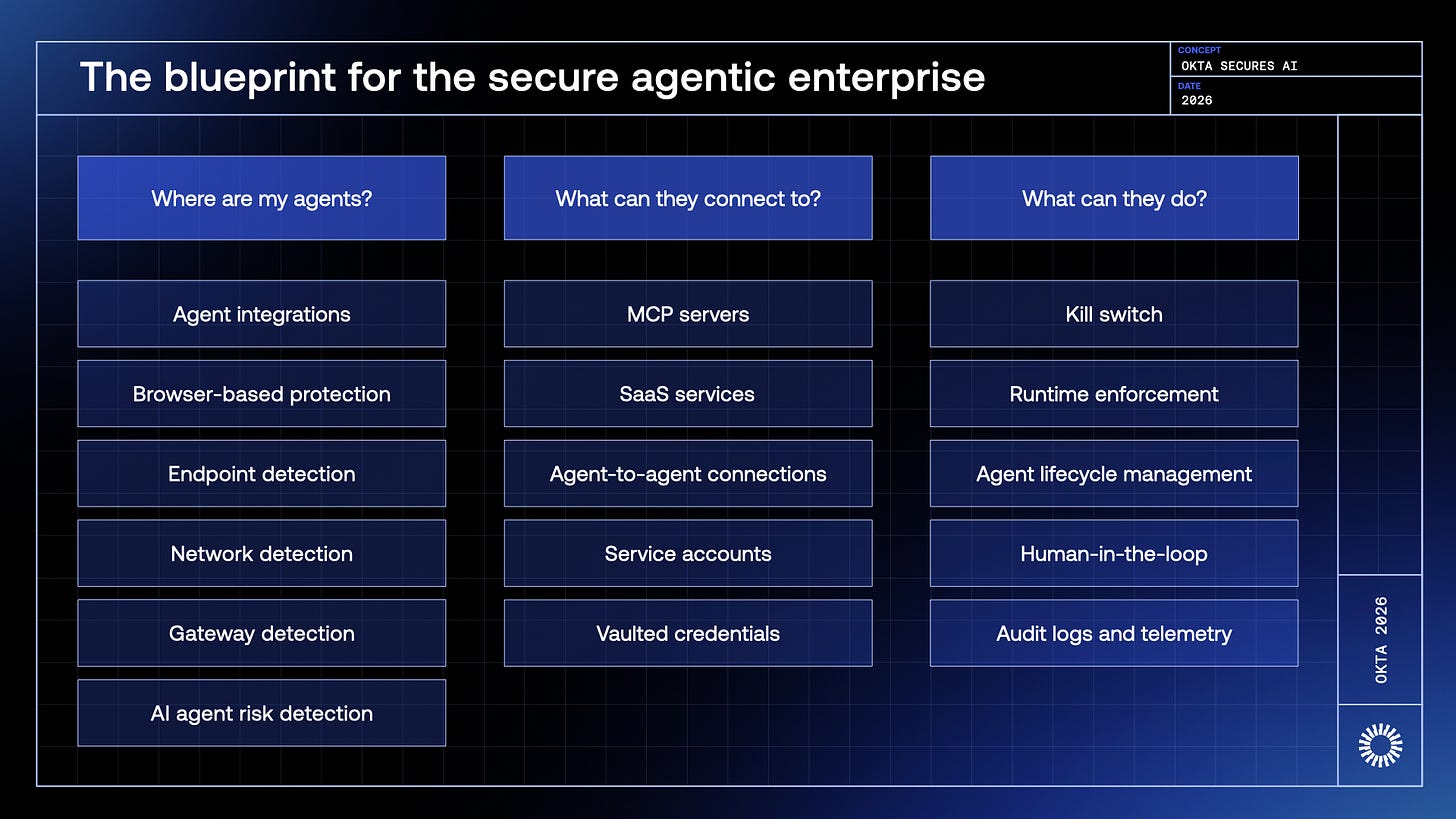

The framework is built around three questions that define the blueprint for the secure agentic enterprise.

Where are my agents?

What can they connect to?

What can they do?

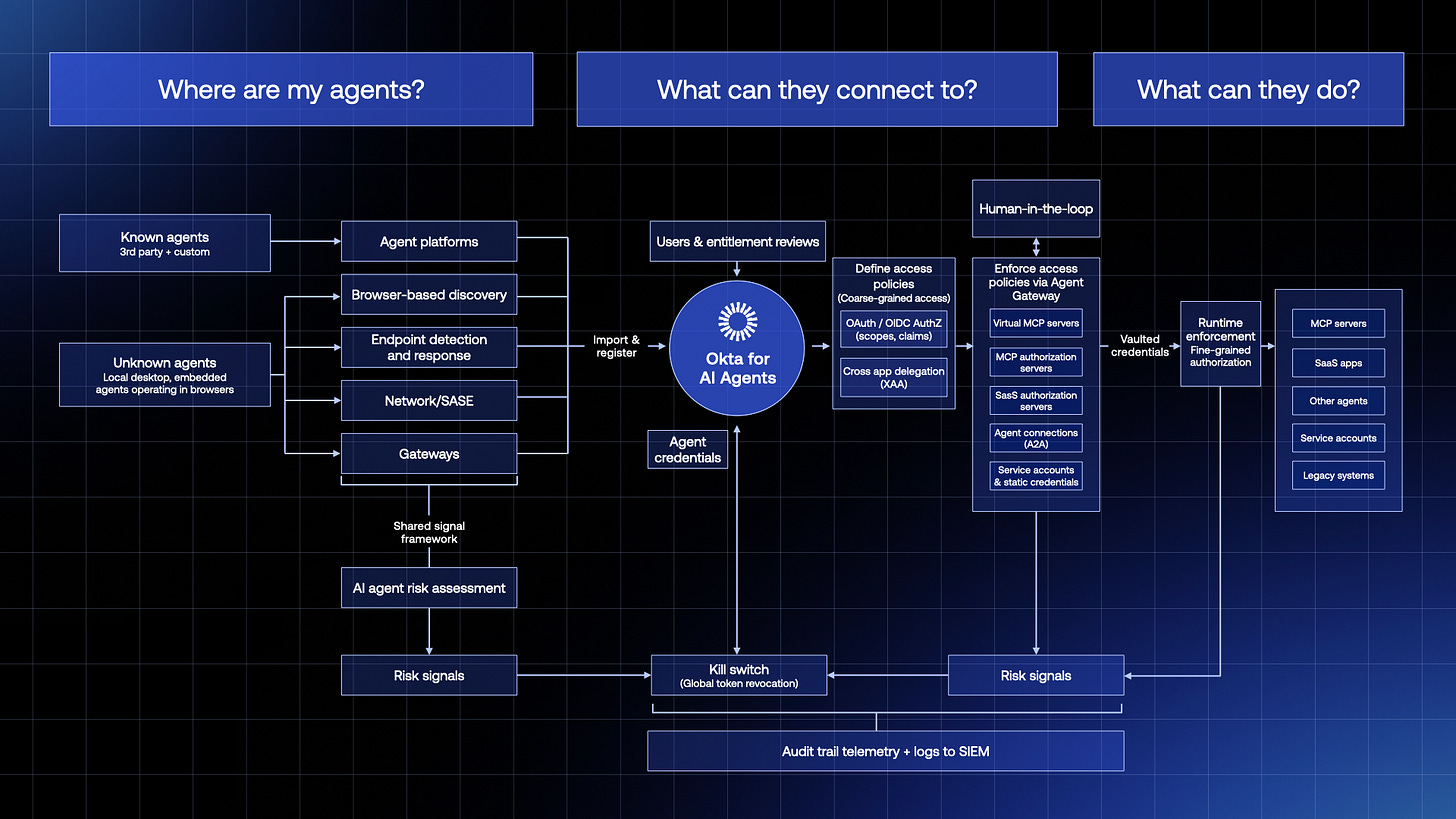

Drilling down on this blueprint from Okta’s perspective while keeping the same fundamental questions, you can start to see a reference architecture form. It accounts for known and unknown agents and the various signals they produce. Those signals can then be integrated into Okta for AI Agents and used to trigger a kill switch, to inform human-in-the-loop (HITL) decisions and governance, and to drive runtime enforcement that controls what agents can do.

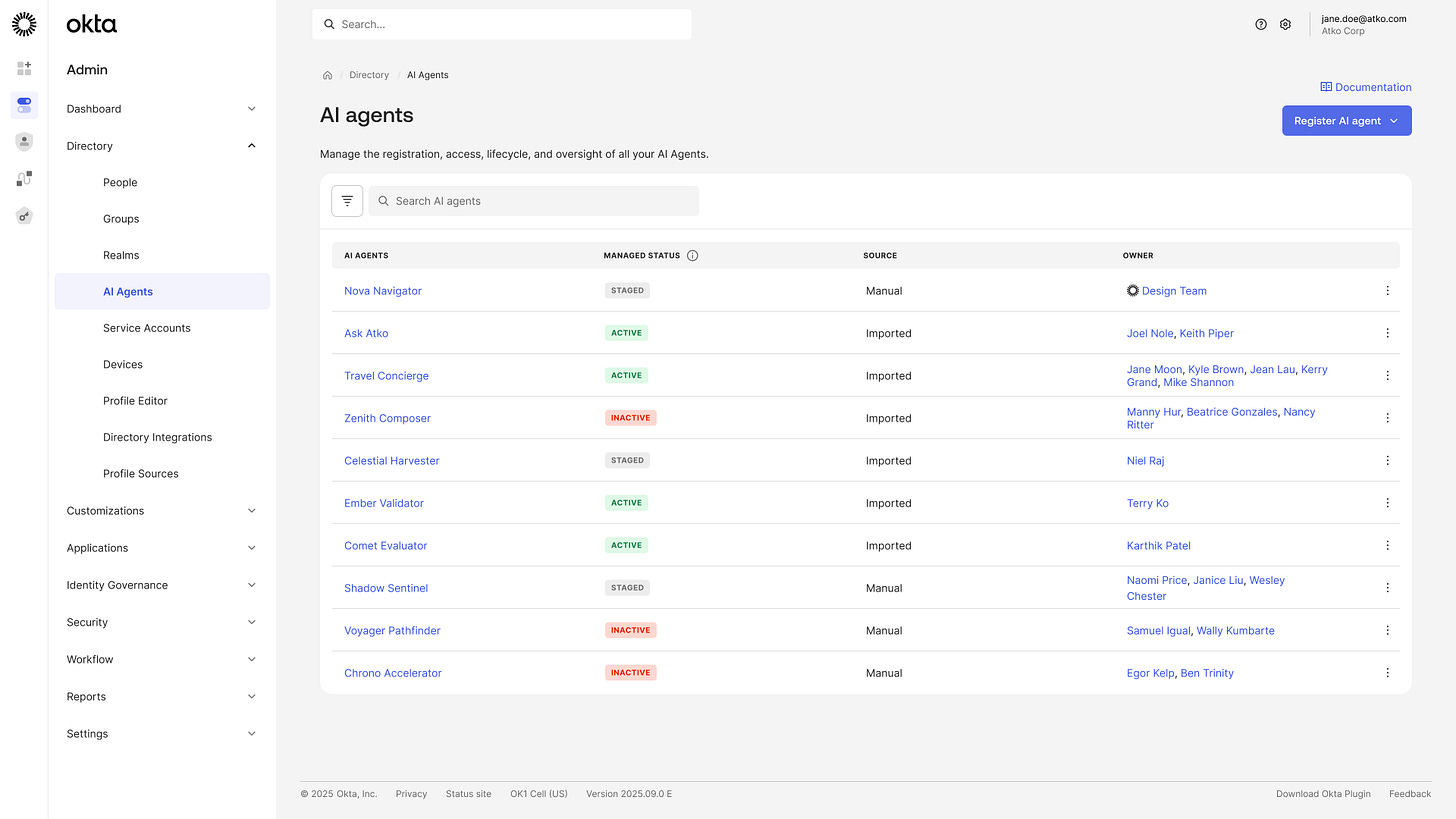

The first question, where are my agents, addresses the shadow AI problem through Okta’s Identity Security Posture Management product. Agent creation is being democratized across the enterprise, with employees provisioning their own digital workers outside of IT control within minutes. The problem is that we have not also democratized a secure process for governing these new entities in the enterprise.

Okta for AI Agents provides continuous discovery of both known and unknown agents, registering them in Universal Directory and establishing clear ownership. As we always say in cyber, you cannot secure what you cannot see, and the visibility gap for agents is growing faster than most security teams realize, or at least faster than they can govern.

The second question, what can they connect to, is where the platform’s existing infrastructure becomes a significant competitive advantage. Once agents are registered in Universal Directory, Okta securely connects them to downstream resources through multiple pathways. For agents that need API access keys or long-term secrets, Okta Privileged Access provides secure credential vaulting.

Developers are already wiring third-party AI agents and coding assistants like Claude Code, GitHub Copilot, and Cursor into enterprise systems at machine speed, connecting to Jira, ServiceNow, GitHub, and internal APIs through integrations that most security teams have limited visibility into and even less control over.

The identity gap is real, because these agents frequently operate on hardcoded credentials with inconsistent access controls and virtually no audit trail that ties their actions back to a governed identity. Okta's MCP Bridge, launching with the general availability of Okta for AI Agents on April 30th, is designed to sit between the agent and the MCP server, brokering token exchange through Okta so that centralized identity, policy, and audit controls apply to every interaction without requiring the agent vendors or the teams operating them to change anything about how they build or deploy.

For agents that can use modern token-based access, Okta vends ephemeral OAuth tokens that limit blast radius by design. This matters enormously in the context of prompt injection, which Ely described candidly as a feature of AI agents rather than a bug, an acknowledgement that I, along with the frontier labs and industry security leaders, agree with at this point.

These agents are extremely good at following instructions, including malicious ones, due to the persistent challenges discussed above, so the highest-ROI thing developers and administrators can do is scope those agents’ privileges narrowly and give them ephemeral tokens rather than long-lived credentials. Taking it further, the industry is now starting to discuss “least autonomy”, which builds on the principle of least-privilege access control but applies it to the agentic era.
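As a rough illustration of why narrow scopes and short lifetimes shrink the blast radius, the sketch below models a token that carries an explicit scope set and an expiry, and a check that fails closed on either. The `ScopedToken`, `issue_token`, and `is_allowed` names, the scope strings, and the five-minute default are all hypothetical; a real deployment would rely on tokens issued and validated by the authorization server rather than an in-process object like this.

```python
import time
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class ScopedToken:
    agent_id: str
    scopes: frozenset
    expires_at: float  # Unix timestamp


def issue_token(agent_id: str, scopes: Iterable[str],
                ttl_seconds: int = 300, now: Optional[float] = None) -> ScopedToken:
    """Issue a short-lived token limited to the given scopes."""
    issued_at = time.time() if now is None else now
    return ScopedToken(agent_id, frozenset(scopes), issued_at + ttl_seconds)


def is_allowed(token: ScopedToken, required_scope: str,
               now: Optional[float] = None) -> bool:
    """Allow a call only if the token is unexpired and holds the scope."""
    current = time.time() if now is None else now
    return current < token.expires_at and required_scope in token.scopes
```

Even if a prompt injection convinces the agent to attempt an out-of-scope action, the check rejects it, and a stolen token stops working once its short lifetime runs out.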

The platform’s custom authorization server lets administrators define the scopes and claims an agent can hold, and when that agent acts on behalf of a user, Okta automatically computes the intersection of the agent’s granted scopes and the user’s available scopes to enforce least privilege without manual configuration.
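The intersection described above is simple set logic. The following sketch shows the idea with a hypothetical `effective_scopes` helper and made-up scope names; it illustrates the principle only and says nothing about how Okta's authorization server computes or represents scopes internally.

```python
from typing import Iterable, Set


def effective_scopes(agent_scopes: Iterable[str], user_scopes: Iterable[str]) -> Set[str]:
    """Return only the scopes held by BOTH the agent and the user."""
    return set(agent_scopes) & set(user_scopes)


# Hypothetical example values.
agent = {"crm:read", "crm:write", "tickets:read"}
user = {"crm:read", "tickets:read", "hr:read"}
delegated = effective_scopes(agent, user)
```

In this example the delegated token would carry only `crm:read` and `tickets:read`: the agent cannot borrow the user's `hr:read`, and the user cannot gain the agent's `crm:write`.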

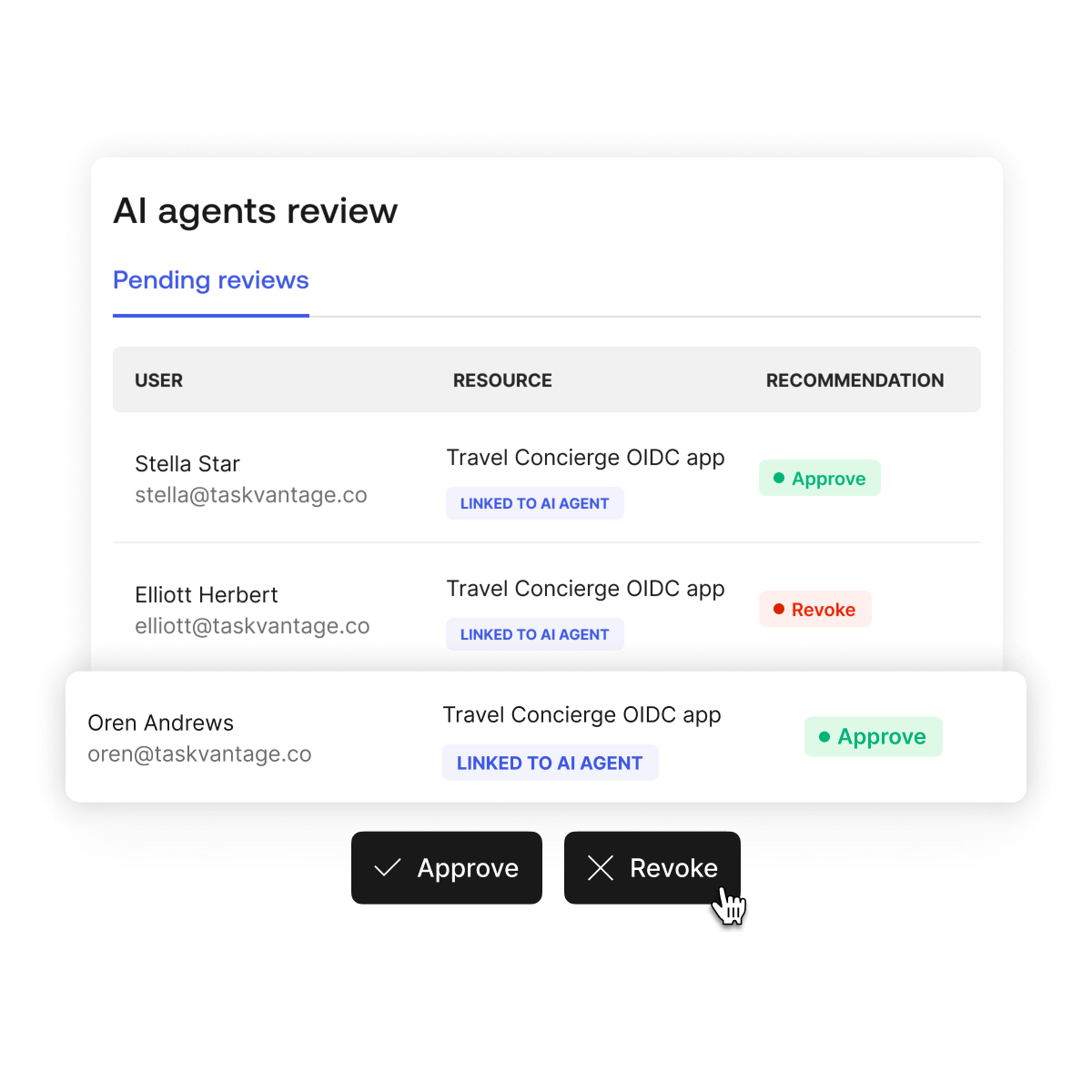

The third question, what can they do, extends into governance and runtime monitoring. Access certifications through Okta Identity Governance provide lifecycle oversight for agent permissions. The kill switch capability, which Harish Peri, Okta’s SVP and GM for AI Security, emphasized during our discussion as an increasingly critical customer requirement, enables both forward-looking revocation that prevents future calls and active propagation that revokes all existing access tokens in real time.

As Harish framed it, the entity that issues the access token also holds the ability to revoke it, and that kill switch capability is something customers are explicitly asking for as they deploy agents with increasing autonomy. Ultimately someone needs to be able to hit the brakes when, not if, agentic incidents unfold.
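The two halves of a kill switch can be sketched with a toy in-memory issuer: one step blocks future issuance for the agent, and the other invalidates every token it already holds. The `TokenIssuer` class and its methods are hypothetical and deliberately simplified; they are not Okta's API, and a production system would have to propagate revocation to every resource server that accepts the tokens.

```python
import secrets
from typing import Dict, Set


class TokenIssuer:
    """Toy issuer showing why the issuer is the natural place for a kill switch."""

    def __init__(self) -> None:
        self._active: Dict[str, str] = {}   # token -> agent_id
        self._disabled: Set[str] = set()    # agents barred from new tokens

    def issue(self, agent_id: str) -> str:
        if agent_id in self._disabled:
            raise PermissionError(f"agent {agent_id} has been disabled")
        token = secrets.token_urlsafe(16)
        self._active[token] = agent_id
        return token

    def is_valid(self, token: str) -> bool:
        return token in self._active

    def kill(self, agent_id: str) -> int:
        """Disable the agent and revoke its live tokens; return how many were revoked."""
        self._disabled.add(agent_id)  # forward-looking: no new tokens
        revoked = [t for t, owner in self._active.items() if owner == agent_id]
        for token in revoked:         # active: existing tokens stop working now
            del self._active[token]
        return len(revoked)
```

Because the issuer tracks which tokens belong to which agent, a single `kill` call covers both cases, and other agents' tokens are left untouched.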

We discussed Okta’s blueprint for the secure agentic enterprise above. Below is a demo showing what some of these capabilities look like in action within their platform:

Attribution and the Regulatory Imperative

One of the most consequential themes from our conversation was attribution, the ability to trace the full lineage of an agentic chain from the human who initiated it through every sub-agent invocation, tool call, and resource access along the way.

Harish flagged this as an emerging critical requirement from enterprise customers, noting that regulators, particularly under frameworks like the EU AI Act, are already asking for exactly this kind of accountability. The identity provider is the natural owner of the attribution chain because it creates the initial human identity token and can track delegation through every subsequent step. Okta actually had a good blog on this recently, titled “The Attribution Gap: Why Every AI Regulation Leads Back to Identity and Authorization”.
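One existing convention for recording who is acting on whose behalf is the nested `act` (actor) claim from the OAuth 2.0 Token Exchange specification (RFC 8693), where the outermost `act` is the current actor and nested entries are prior actors. The sketch below borrows that convention to show what a traceable lineage could look like. It is an assumption made for illustration: the source does not say which representation Okta uses, and the `delegate` and `lineage` helpers and the identifiers are hypothetical.

```python
from typing import Any, Dict, List

Claims = Dict[str, Any]


def delegate(claims: Claims, actor_id: str) -> Claims:
    """Return new claims in which actor_id acts for the original subject.

    Any previous actor is nested inside the new one, preserving the chain.
    """
    actor: Claims = {"sub": actor_id}
    if "act" in claims:
        actor["act"] = claims["act"]
    return {**claims, "act": actor}


def lineage(claims: Claims) -> List[str]:
    """List the chain from the initiating human to the current actor."""
    actors: List[str] = []
    node = claims.get("act")
    while node is not None:
        actors.append(node["sub"])   # collected newest first
        node = node.get("act")
    return [claims["sub"]] + actors[::-1]


human = {"sub": "alice@example.com"}
step1 = delegate(human, "planner-agent")
step2 = delegate(step1, "search-sub-agent")
chain = lineage(step2)
```

Here `chain` reads from the initiating human through each agent in order, which is the kind of record an auditor or regulator would need to attribute a downstream action.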

Okta is building agent-to-agent capabilities that allow agents to register both as identity principals and as resources that other agents can access. The company plans to expose agent registration through the Okta MCP server, enabling agents, with human approval, to register other agents, a capability that Ely described as essential for scaling into the thousands or even millions of agents that large organizations will eventually operate.

Building a standard for representing that chain of custody and lineage across agent-to-agent interactions is active work, with the goal of making it interoperable across organizations rather than locked into a single vendor’s ecosystem. This is something that will definitely be a community effort.

The Roadmap Beyond GA

The April 30th launch is the foundation, but the roadmap for the rest of 2026 extends into territory that addresses the intent and runtime monitoring challenges that static authorization alone cannot solve.

Later this summer, Okta plans to launch its Agent Gateway, which builds upon the MCP Bridge by offering a centralized control plane to aggregate tools and manage AI agent access through its virtual MCP server. It will provide tool invocation monitoring and the telemetry needed to build behavioral baselines for agents. That data feeds into what Ely describes as UEBA for agents within Okta’s Identity Threat Protection product, essentially anomaly detection that fingerprints an agent’s normal tool invocation patterns and alerts on deviations.
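A very simple version of that fingerprinting idea is to record how often an agent calls each tool and then flag calls to tools that are absent or rare in its history. The sketch below does exactly that with frequency shares. The function names, tool names, and the 1% threshold are hypothetical, and a real UEBA system would use far richer features than raw call counts.

```python
from collections import Counter
from typing import Dict, Iterable, List


def build_baseline(tool_calls: Iterable[str]) -> Dict[str, float]:
    """Turn historical tool invocations into per-tool share of total calls."""
    counts = Counter(tool_calls)
    total = sum(counts.values())
    return {tool: n / total for tool, n in counts.items()}


def find_deviations(baseline: Dict[str, float], recent_calls: Iterable[str],
                    min_share: float = 0.01) -> List[str]:
    """Return tools in recent activity that are unseen or rare in the baseline."""
    flagged: List[str] = []
    for tool in recent_calls:
        if baseline.get(tool, 0.0) < min_share and tool not in flagged:
            flagged.append(tool)
    return flagged


# Hypothetical history: the agent normally only touches Jira and GitHub.
history = ["jira.search"] * 60 + ["github.read"] * 40
baseline = build_baseline(history)
alerts = find_deviations(
    baseline, ["jira.search", "payroll.export", "github.read", "payroll.export"]
)
```

In this example only `payroll.export` is flagged, because it never appears in the agent's history, while its routine Jira and GitHub calls pass without an alert.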

The longer-term vision includes intent-based security that compares an agent’s current behavior against its originally specified purpose, including the agent.md file or equivalent configuration that defines what the agent is supposed to do. Guardian agents, AI systems that analyze other agents’ behavior in real time, are part of that roadmap.

Ely and Harish both returned to a theme that carries real weight given Okta’s position in the market. The underlying technology enabling all of this is identity, and the plumbing required to make it work at enterprise scale, including token exchange, enforcement, delegation chains, and revocation propagation, is extraordinarily difficult to build from scratch.

Startups can and do build effective dashboards for detecting NHI issues and discovering rogue agents, and that ecosystem is valuable. But the harder challenge lies in what happens after detection, in the actual enforcement, remediation, and lifecycle management that requires deep integration into the authentication and authorization flow. That is where 17 years of operating identity infrastructure at scale provides a structural advantage that point solutions cannot easily replicate.

The framing that resonated most from our conversation was Harish’s closing advice:

“Do not get enamored by the edges. A new MCP gateway or a new discovery tool solves one piece of the puzzle, but enterprise-grade security, observability, remediation, and auditability for agents requires getting the hard middle right first, and the hard middle is identity.”

To get AI security right, you have to get identity right.

That has been true for human users for two decades, and it is just as true for agents now.