The Data-Centric Approach to Securing Agentic AI

Why Protecting AI Starts with Protecting the Data

I recently sat down with James Rice, VP of Product Marketing and Strategy at Protegrity, for an episode of Resilient Cyber. We covered a lot of ground, from the failure of traditional security models in the AI era, to the growing risks of AI data leakage and why agentic AI demands a fundamentally different approach to security.

It was one of those conversations that reinforced something I’ve been thinking about for a while now. We spend a lot of time as an industry talking about securing AI models, governing AI agents, and building guardrails around AI outputs. All of those conversations are important and necessary. But there’s a foundational layer underneath all of it that doesn’t get nearly enough attention: the data itself.

AI doesn’t operate in a vacuum. Every model, every agent, every RAG pipeline, every orchestration workflow is ultimately consuming, transforming, and generating data.

If the data flowing through those systems isn’t protected, classified, and governed, then no amount of model-level or agent-level security is going to save you.

That’s the thesis behind Protegrity’s approach, and it’s one worth unpacking.

AI Is Ready for Production - Security Isn’t.

If you’ve been following the AI space at all, you know the trajectory. Organizations have moved from experimentation and PoCs into full-blown production deployments of AI across the enterprise. From customer service chatbots and internal copilots to autonomous agents handling complex multi-step workflows, the technology has matured rapidly.

The problem is that security, risk, and compliance haven’t kept pace.

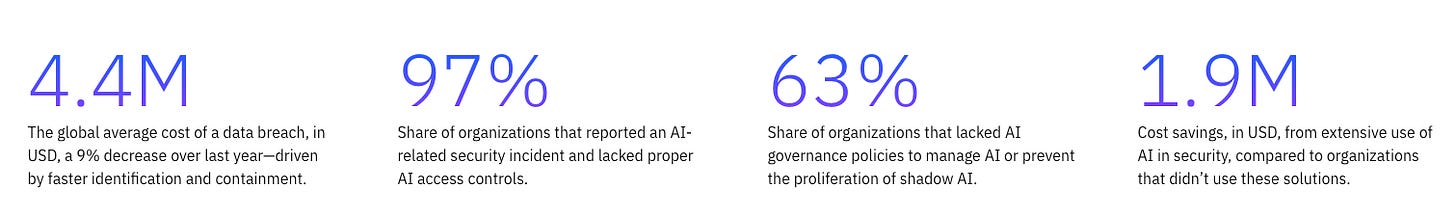

IBM’s 2025 Cost of a Data Breach Report, subtitled “The AI Oversight Gap”, painted a stark picture. 97% of organizations reported an AI-related security incident and lacked proper AI access controls. 63% of organizations lacked AI governance policies to manage AI usage or prevent shadow AI proliferation. These numbers should be alarming to anyone paying attention.

As I wrote in a recent piece on the Agentic AI Governance Blind Spot, the leading governance frameworks, including NIST’s AI RMF, ISO 42001, and the EU AI Act, don’t contain a single mention of agentic AI. We’re trying to govern the future with frameworks built for the past.

James and I talked extensively about what he described as “AI circularity”, a term Protegrity’s CEO Michael Howard has also used. Organizations want to leverage AI but can’t, because the complexity of securing critical business data throughout the AI lifecycle creates a paralyzing loop. They know the data needs to flow to power AI. They also know it can’t flow freely without proper protections. So they either deploy agents so restricted they’re effectively useless, or they take on unacceptable risk. Either way, AI fails to deliver on its promise.

Breaking out of that circularity requires rethinking where and how we apply security controls.

Traditional Security Models Are Failing

One of the themes James and I kept returning to throughout our conversation was the fundamental inadequacy of traditional, perimeter-based, and infrastructure-centric security models when it comes to AI.

For decades, the security industry has been built on a straightforward assumption: if you surround data with enough layers of perimeter, network, and identity controls, you can keep it safe. For traditional applications accessing data in predictable ways through well-defined access paths, that worked. Not perfectly, but well enough.

AI breaks that assumption completely.

LLMs don’t access data through predictable, linear paths. Agentic workflows dynamically retrieve information from multiple systems, combine it across domains, infer new relationships, and generate outputs that cross the very boundaries traditional controls were designed to enforce. Retrieval-Augmented Generation (RAG) pipelines pull context on the fly from potentially dozens of data sources. Agents initiate sequences of API calls, database queries, and tool invocations that don’t map neatly to existing IAM roles or DLP policies.

The traditional model is siloed, with controls tied to individual systems rather than the data that flows between them. It’s static, built around predefined roles and permissions that don’t account for real-time context or purpose. It’s also reactive, dependent on tickets, audits, and after-the-fact reviews rather than inline enforcement at the moment data is being consumed.

As I’ve discussed in pieces like my breakdown of the OWASP Top 10 for Agentic Applications, agents introduce risks around tool misuse, identity and privilege abuse, supply chain vulnerabilities, and unexpected code execution. These risks all share a common thread: they involve data moving in ways that traditional security controls weren’t designed to monitor, govern, or protect.

The industry has spent decades adding more layers around data. AI forces us to rethink protection from the inside out.

The Case for Data-Centric Security

This is where the concept of data-centric security becomes critical, and it was the core of my conversation with James.

Data-centric security flips the traditional model. Instead of trying to protect data by securing the systems, networks, and identities around it, you embed protection directly into the data itself. The controls travel with the data, regardless of where it goes, which system processes it, which model reasons over it, or which hyperscaler it runs on.

Think about it through the lens of Zero Trust. The whole premise of Zero Trust is “never trust, always verify,” applied to network access, identity, and authorization. Data-centric security extends that principle to the data layer itself. You don’t trust that a model or agent should see raw sensitive data just because it has system-level access. You verify, at the data level, what should be exposed, in what form, for what purpose, and under what context.

This is a meaningful shift. Traditional approaches protect where data lives. Data-centric approaches protect what the data is and how it’s used.

For AI workloads specifically, this matters because data flows through multiple stages across the AI lifecycle, from ingestion and curation, to training and fine-tuning, to retrieval and inference, to output and action. At each stage, sensitive information (PII, PHI, PCI, intellectual property) can be exposed, memorized by models, leaked through outputs, or combined with other data in ways that violate compliance requirements.

The risk of AI data leakage was something James and I spent a good amount of time on. Sensitive information can inadvertently surface through training data memorization, model outputs, prompt injection attacks, and RAG retrieval flows. This isn’t a theoretical concern. It’s happening today, and organizations are often unaware of the extent of the exposure because they lack visibility into how data moves through their AI pipelines.

A data-centric approach addresses this by ensuring that protection is applied before and during every stage of the AI lifecycle, not bolted on after the fact, which would perpetuate the age old challenge of security not being built in.

Embedded Controls and Semantic Guardrails: A Two-Layer Approach

One of the things that stood out to me in my conversation with James, and that Protegrity’s approach makes tangible, is the idea that securing AI requires two complementary layers of control.

The first layer is what Protegrity calls embedded controls. These operate upstream, at the data source and within the pipeline, before any model, agent, or retrieval step ever touches the data. The goal is to ensure that data entering AI workflows is already governed, masked, tokenized, anonymized, or synthesized as the use case requires.

This is where techniques like tokenization, dynamic masking, anonymization, format-preserving encryption, and synthetic data generation come into play. These aren’t new concepts in the data security world, but their application to AI workloads is increasingly critical.

Not all protection techniques serve the same purpose, and this is an important nuance. Tokenization and encryption support referential integrity, meaning AI and analytics can still group, count, correlate, and join across datasets using consistent tokens without ever exposing the raw sensitive values. Masking supports least-privilege consumption, ensuring that agents, users, or models only see the level of detail they need for a given task. Anonymization and synthetic data enable privacy-preserving analytics and model training, allowing teams to experiment and fine-tune without touching real sensitive data at all.

Choosing the right protection technique for the right use case is not trivial, and getting it wrong can break AI utility or leave sensitive data exposed. This is one of those areas where the precision of the approach matters enormously.

The second layer is semantic controls, or what Protegrity refers to as semantic guardrails. These operate downstream, at the point of interaction, analyzing prompts, retrievals, reasoning chains, and outputs for risk in real time. They stop leakage, prevent unsafe disclosures, detect injection attempts, and enforce purpose-of-use policies at the exact moment AI is generating or consuming information.

This two-layer approach is what creates end-to-end trust. Embedded controls ensure the data entering AI is safe. Semantic guardrails ensure the AI’s behavior and outputs remain governed.

Neither layer alone is sufficient. Without upstream data protection, even the best runtime guardrails are trying to stop sensitive data that should never have been exposed in the first place. Without downstream semantic controls, even well-protected data can be combined, inferred upon, or surfaced in ways that violate policy during multi-step agent reasoning.

Why This Matters for Agentic AI Specifically

Agentic AI amplifies every challenge I’ve described above.

As I’ve written about extensively, including in pieces on the OWASP Agentic AI Top 10 and the Agentic AI Governance Blind Spot, agents don’t just take prompts and produce outputs like a simple chatbot. They have autonomy. They take actions. They invoke tools, query databases, send messages, chain decisions together, and interact with other agents, often with minimal human oversight.

This fundamentally changes the security calculus.

Traditional access control models assume relatively stable identities accessing data through predictable paths. Agents destroy that stability. A single agentic workflow can cascade across multiple systems, APIs, and data sources in a matter of seconds, creating dynamic data flows that traditional IAM, DLP, and perimeter controls simply can’t see, let alone govern.

This is where the data-centric approach becomes not just valuable but necessary. When you can’t predict or control the path data will take through an agentic workflow, the only reliable approach is to ensure the data itself carries its protection with it.

James framed this well during our conversation. He emphasized that organizations need adaptive privilege for AI, where access to data shifts based on context, intent, and risk rather than static roles. An agent performing fraud detection might need tokenized transaction data that preserves referential integrity but obscures individual account numbers. The same agent, in a different workflow context, might need a completely different view of the same underlying data. Static role-based access control simply can’t accommodate that kind of dynamic, purpose-driven data consumption.

This maps directly to the Zero Trust principle of least privilege, applied at the data layer. Not just “who can access this system” but “what form of this data should this specific agent, in this specific workflow, at this specific moment, be allowed to see?”

The Broader Enterprise Reality

It’s important to recognize that AI security challenges don’t exist in isolation. They sit on top of challenges organizations have been grappling with for years.

Regulatory compliance (GDPR, HIPAA, PCI DSS, and emerging AI-specific regulations), data sovereignty requirements, cross-border data flows, fragmented data ownership, inconsistent classification, and the sheer complexity of hybrid and multi-cloud environments. These constraints haven’t gone away. If anything, AI magnifies them.

AI workflows naturally span multiple teams, systems, and jurisdictions. RAG pipelines retrieve data from across the enterprise. Agents make cross-domain joins that traditional governance models never anticipated. Multi-model orchestration layers blend data from structured databases, unstructured documents, and real-time feeds.

The organizations James described working with, including some of the largest banks, healthcare insurers, government agencies, and retailers in the world, are dealing with billions of sensitive records, trillions of transactions, and strict regulatory expectations. These are exactly the environments where AI introduces the most risk and the most opportunity simultaneously.

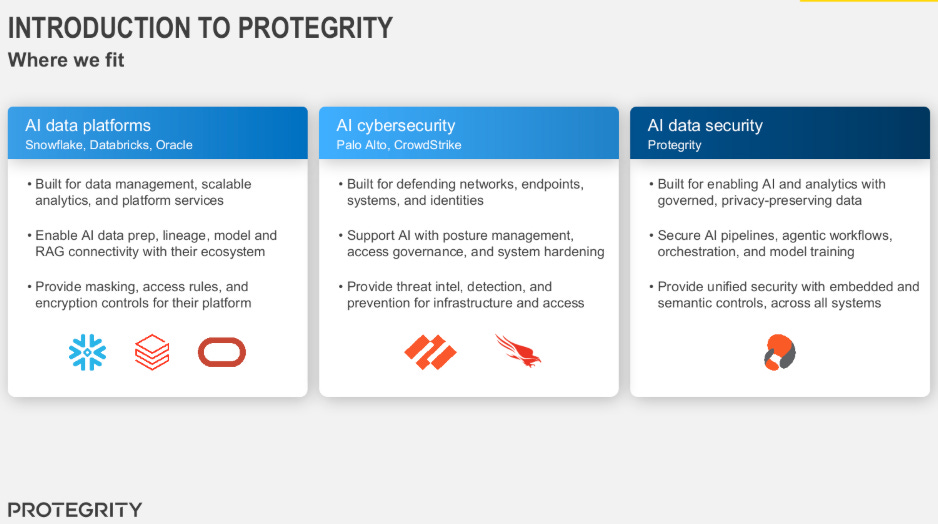

What Protegrity brings to these environments is a unified data control layer that spans the entire AI lifecycle, from data discovery and classification through governance, protection, privacy, and runtime semantic enforcement. It’s platform and cloud-agnostic by design, which matters in a world where enterprises are running AI workloads across multiple hyperscalers, data platforms, and orchestration frameworks.

Where We Go From Here

The conversation with James reinforced something I’ve been saying for a while now: the AI security conversation needs to evolve beyond model-centric and even agent-centric thinking to include a serious focus on data-centric security.

This isn’t an either/or proposition. You need model security. You need agent governance. You need identity controls for non-human identities. You need runtime monitoring and behavioral analysis. All of those things remain important.

But without a data-centric foundation underneath all of it, the rest is built on sand.

The organizations that will successfully operationalize AI at scale will be the ones that solve the data problem first. They’ll be the ones who can answer the fundamental question: “Is the data powering our AI protected, classified, governed, and used appropriately across every step of every workflow?”

If you can’t answer that question today, no amount of guardrails at the model or agent layer will compensate for the gap underneath.

The road to successful AI runs directly through data security. Not around it. Through it.