Resilient Cyber Newsletter #97

Welcome to issue #97 of the Resilient Cyber Newsletter!

This was a week that tested every narrative we have been building about AI and cybersecurity. Microsoft unveiled MDASH, a multi-model agentic scanning harness that found 16 new vulnerabilities in the Windows networking stack, including four Critical remote code execution flaws. Google’s Threat Intelligence Group confirmed with high confidence that threat actors used an AI model to discover and exploit a zero-day for 2FA bypass, the first documented case of its kind. And the Mini Shai-Hulud worm struck TanStack, compromising 84 npm package artifacts across 42 packages in six minutes flat.

On the Mythos front, the scrutiny arc I have been tracking since issue #95 deepened. Rival Security found that one of Mythos’s celebrated discoveries was a CVE already present in its training data. Daniel Stenberg ran Mythos against curl’s 178,000 lines and concluded the results were no more advanced than existing tools. Meanwhile, Wired reported that nearly 380,000 vibe-coded apps are publicly accessible, with 5,000 of them leaking sensitive medical, financial, and corporate data.

The policy side moved too. The Trump administration formally listed offensive cyber operations as a counterterrorism tool, and both OpenAI and Anthropic announced enterprise services ventures that signal a structural shift in how AI labs compete for government and enterprise customers.

Let’s get into it.

Social engineering has a new playbook.

Social engineering attacks are no longer isolated incidents; they follow a structured chain. Attackers gather context, build credible identities, and engage targets in ways that feel routine and trustworthy.

That’s what makes them difficult to detect. Each step is designed to blend in.

Defending against this kind of activity means understanding how attacks unfold from start to finish, across both multiple channels.

Doppel breaks down how the modern social engineering attack chain works, and what it takes to identify and disrupt it earlier.

*Sponsored

Cyber Leadership & Market Dynamics

Offensive Cyber Operations Get a Formal Counterterrorism Mandate

I have been tracking the expanding role of offensive cyber in national security since the Pentagon’s 100,000 vibe-coded agents story in issue #95, and this week the Trump administration made it official.

The new counterterrorism strategy explicitly lists offensive cyber operations as a tool against narcoterrorists, transnational criminal organizations, and state-backed proxy groups.

This is the first time a U.S. counterterrorism strategy document has formally and publicly integrated offensive cyber capabilities alongside kinetic options. Whether you view this as overdue transparency or a troubling normalization of cyber offense as routine statecraft, the direction is unmistakable. Offensive cyber is no longer a classified footnote. It is a named instrument of national power.

AI Labs Are Becoming Services Companies

This is the story that should concern every traditional IT services firm. OpenAI launched Deployment Co., a consulting venture backed by $4 billion from 19 investment firms including TPG, Advent, and Bain Capital, valued at $10 billion. Anthropic announced its own enterprise services joint venture with Blackstone, Hellman & Friedman, and Goldman Sachs, with $300 million committed from each partner and ecosystem support from Accenture, Deloitte, and PwC.

As I discussed in issue #96 when covering Anthropic’s $4.4 billion ARR, these companies are not just building models anymore. They are building the implementation layer. For cybersecurity, the implication is that the AI labs deploying frontier models into government and enterprise environments will also be the ones advising on how to secure those deployments. That is an unprecedented consolidation of capability and influence.

Frame Security Enters the Human Risk Market with $50 Million

Here is a stat that should make every CISO pause. 96% of organizations run security awareness training, yet 90% of breaches still involve the human element.

Frame Security, backed by Index Ventures, Team8, and Picture Capital, is betting $50 million that the answer is not more slide decks. Their platform automates realistic attack simulations including deepfake audio and video scenarios, delivers role-based training, and provides real-time guidance.

Gartner’s 2025 data showed that 43% of CISOs had already experienced deepfake audio calls and 37% had encountered deepfake video. The traditional annual phishing simulation is starting to look like a relic. As AI-generated social engineering scales, defense has to match the personalization and speed of the attack.

Rising in Cyber Tracks the Market’s Structural Transformation

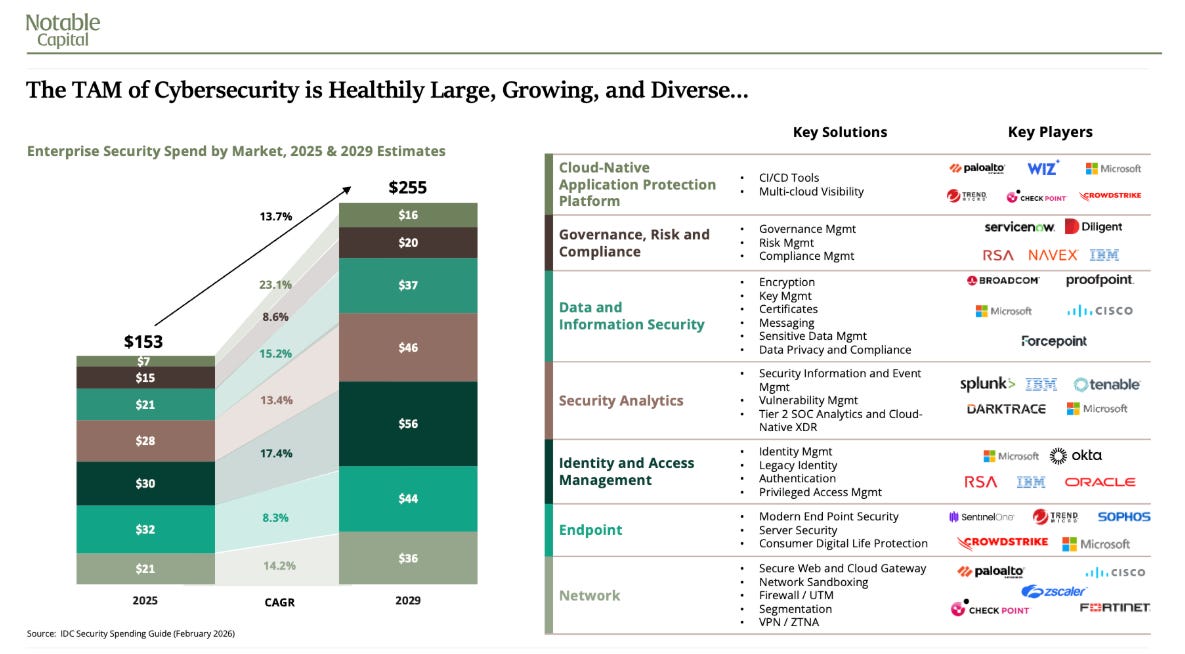

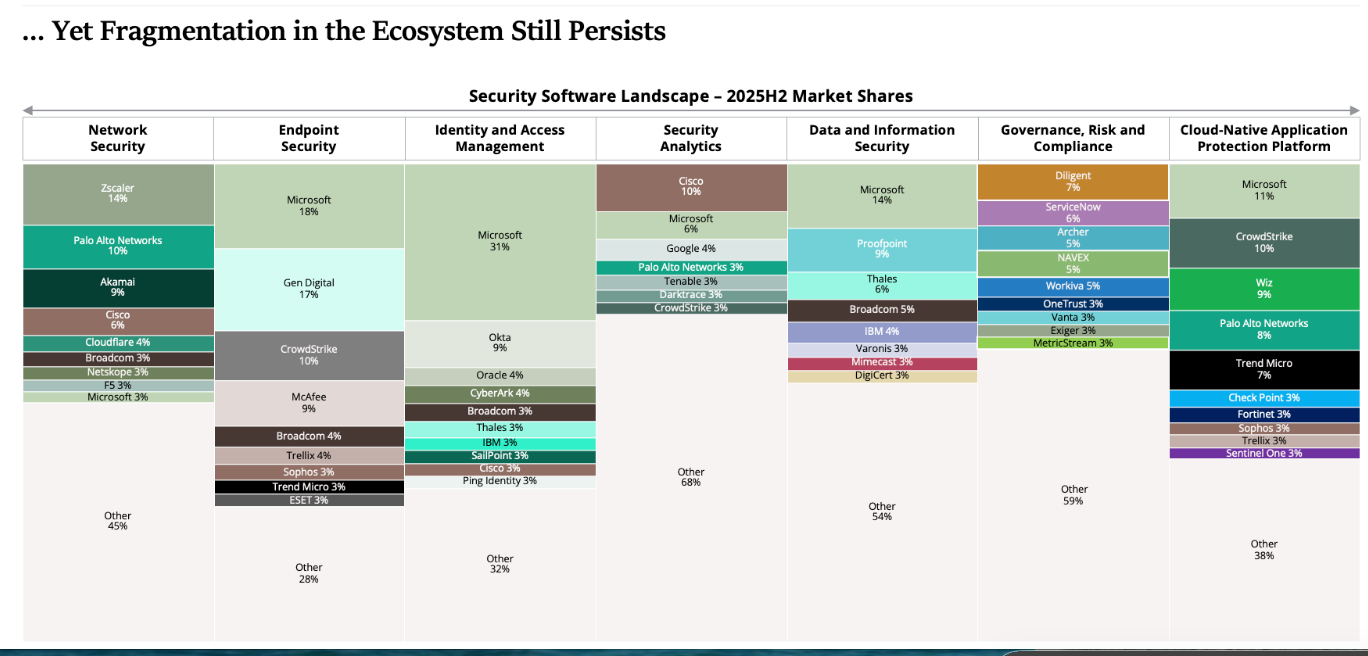

The Rising in Cyber report paints the macro picture for anyone who wants to understand where the cybersecurity market is headed. The market is projected to grow from $153 billion in 2025 to $255 billion by 2029. Series A and B funding dominated 2025 at over $10 billion, with $5.5 billion going to AI-native startups, the only segment that did not decline year over year.

Expected double-digit growth areas include IAM, data security, and cloud-native application protection. As I discussed in issue #96 with Sequoia’s Konstantine Buhler on narrative violation, the investors who recognized the AI-native shift early are positioned to capture the opportunity. Everyone else is recalibrating assumptions that should have been challenged a year ago. Yet, they also demonstrate just how fragmented the cybersecurity market is, despite how dominant some of the players seem based on brand name.

The Unprompted Conference and What It Tells Us About AI Security’s Maturity

Caleb Sima has been one of the most credible voices in AI security for years, and the Unprompted 2026 conference in San Francisco reflected the field’s growing maturity. The event featured real demos and sharp technical talks rather than the vendor pitch theater that dominates most security conferences.

With Caleb’s background as CSO at Robinhood and VP Security at Databricks, now leading White Rabbit as an AI-driven security venture studio, the conference curates from a practitioner perspective rather than an investor one. The AI security conversation needs more venues where the emphasis is on what works rather than what sells.

This is a great resource consolidating not just [un]prompted by many other conferences and talks into a single comprehensive resource.

Cloud attacks have a new entry point. It’s your running applications.*

That’s why a new category is emerging: Cloud Application Detection and Response (CADR).

This new guide breaks down what CADR is, why runtime is the only place real attacks can be detected, and how security teams are protecting applications, cloud infrastructure, and AI systems in production.

If you’re responsible for securing modern cloud workloads, this is a concept you’ll want to understand.

*Sponsored

AI

Microsoft Finds 16 Windows Vulnerabilities with a Multi-Model Agent Swarm

This is the kind of applied AI security work that moves the field forward.

Microsoft’s Autonomous Code Security team built MDASH, a multi-model agentic scanning harness that orchestrates over 100 specialized AI agents across an ensemble of frontier and distilled models.

The system found 16 new vulnerabilities in the Windows networking and authentication stack, including four Critical remote code execution flaws in the kernel TCP/IP stack and IKEv2 service. The architecture runs in stages. Specialized auditor agents scan, debater agents argue for and against exploitability, deduplication agents collapse semantically equivalent findings, and prover agents construct triggering inputs.

Combined with CodeMender from issue #96 and AISLE’s VulnOps model, this represents a clear trend. Multi-agent architectures are outperforming single-model approaches for vulnerability discovery, and the organizations investing in this infrastructure are finding real, Critical-severity flaws.

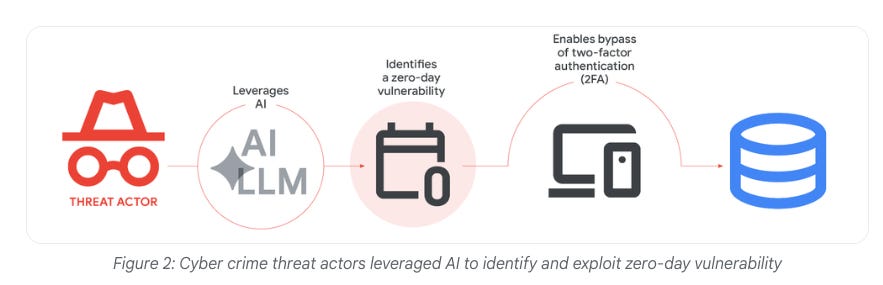

Google Confirms the First AI-Generated Zero-Day Used in the Wild

If there was a single story this week that validates the threat models I have been writing about since the OWASP Agentic Top 10, this is the one.

Google’s Threat Intelligence Group confirmed with high confidence that threat actors used an AI model to discover and exploit a zero-day vulnerability, creating a 2FA bypass. GTIG also documented UNC2814, a Chinese group targeting telecom and government sectors, using persona-driven jailbreaks for vulnerability research on embedded devices.

APT45, a North Korean group, sent thousands of repetitive prompts to recursively analyze CVEs and validate proof-of-concept exploits. As I wrote in my article on Agentic AI Threats and Mitigations, the use cases available to defenders are equally available to attackers. We now have documented confirmation from Google’s threat intelligence team that this is happening at the nation-state level.

Cisco Ships an Open Specification for Agentic AI Security Pipelines

What makes Cisco’s Foundry Security Spec interesting is what it is not. It is not a managed service or a proprietary platform. It is an open specification distilled from Cisco’s internal security evaluation systems, defining eight core agent roles including Orchestrator, Indexer, Detector, Triager, and Validator.

The specification is built alongside Foundation-sec-8B, an 8-billion-parameter security model built on Llama 3.1, and Foundation-sec-8B-Reasoning for multi-step security analysis. The design philosophy is that the need for orchestrators, detectors, and validators persists regardless of which underlying models evolve.

Combined with Microsoft’s MDASH and the Model Provenance Kit from issue #96, Cisco is building both the open standards and the open models for an agentic security ecosystem. That approach deserves attention from anyone building security tooling on top of foundation models.

CSA Releases the AI Security Maturity Model

The Cloud Security Alliance released the AI Security Maturity Model after receiving over 600 comments from 60 international reviewers, and it fills a gap I have been pointing to across multiple issues.

Only 26% of organizations report having comprehensive AI security governance policies. 64% have some guidelines or are still developing them. The maturity model aligns with CSA’s Cloud Security Maturity Model and AI Controls Matrix, covering model security, AI infrastructure, agentic applications, MCP servers, and AI developer enablement.

CSA’s research shows that governance maturity is the strongest predictor of AI readiness. This connects directly to the shadow AI crisis I covered in issue #96, where 80% of Fortune 500 companies deploy agents but only 10% have a strategy to manage them. You cannot secure what you cannot measure, and the AISMM gives organizations a framework to start measuring.

Sysdig Launches a Headless Platform for Non-Human Identity Security

Machine identities outnumber human identities 40,000 to 1. They are 7.5 times more risky than human identities, and nearly 40% of breaches start with credential exploitation.

Sysdig’s response is what they call the industry’s first headless cloud security platform, designed not for human analysts clicking through dashboards but for AI agents operating at machine speed. The platform embeds full CNAPP capabilities directly into AI coding agents for real-time detection and response without requiring a human interface.

As I wrote in my article on What are Non-Human Identities and Why Do They Matter, the NHI challenge is not theoretical. It is the operational reality of every cloud-native environment. Sysdig’s headless approach acknowledges that the future of cloud security is not a better UI, it is no UI at all.

The Poisoned Truth Inside Enterprise RAG Systems

RAG has become the default architecture for grounding enterprise AI in organizational knowledge, and that creates a trust problem most security teams have not fully grasped.

Research accepted to USENIX Security 2025 demonstrated that injecting just five malicious texts into a database containing millions of documents achieved a 90% attack success rate. CrowdStrike has already detected data poisoning in the wild, including embedded hidden instructions in scripts. OWASP added LLM08:2025 (Vector and Embedding Weaknesses) to address these threats specifically.

The core issue is that LLMs cannot distinguish between legitimate and poisoned content. Everything retrieved is treated as ground truth. When you combine that with agentic systems that autonomously execute instructions from retrieved context, the attack surface expands from misinformation to arbitrary code execution.

As I discussed in Agentic AI Threats and Mitigations, the risks compound when agents act on poisoned inputs without human review.

ODNI Tells CISOs to Build Their Own AI Espionage Defenses

The intelligence community is being unusually direct about a difficult reality. ODNI acknowledged AI-powered espionage as a growing national security threat and then essentially told CISOs they are on their own building defenses. In August 2025, an AI tool was used for data extortion against international government, healthcare, and public health sectors.

By May 2025, NSA, CISA, and FBI had issued a joint bulletin confirming that adversaries are poisoning AI systems across sectors by corrupting training data. The poisoned data can reshape how systems label financial transactions, interpret medical scans, or filter content without triggering alerts. Only 26% of organizations report comprehensive AI security governance policies, per CSA research. This reinforces the maturity model discussion above. The government is being transparent about the threat. It is less clear on who owns the solution.

175,000 Ollama Servers and the Anatomy of LLM Infrastructure Abuse

For anyone who thinks exposed LLM infrastructure is a theoretical risk, this honeypot data should recalibrate that assumption. Over 91,000 attack sessions were captured between October 2025 and January 2026, with more than 80,000 concentrated in an 11-day burst over the holidays.

Attackers follow systematic reconnaissance patterns. They start with simple queries to identify which models respond, then attempt SSRF exploitation through Ollama’s model pull functionality using attacker-controlled registry URLs, and finally deploy prompt injection to extract system prompts, environment variables, and container artifacts like Docker sockets and Kubernetes tokens.

With more than 175,000 Ollama servers exposed and estimated attack costs of $46,000 per day, this is not opportunistic scanning. It is organized, methodical infrastructure exploitation. The lesson for security teams deploying local LLMs is that anything exposed to the internet will be found and probed within hours.

You Do Not Need Frontier Models to Transform Security Operations

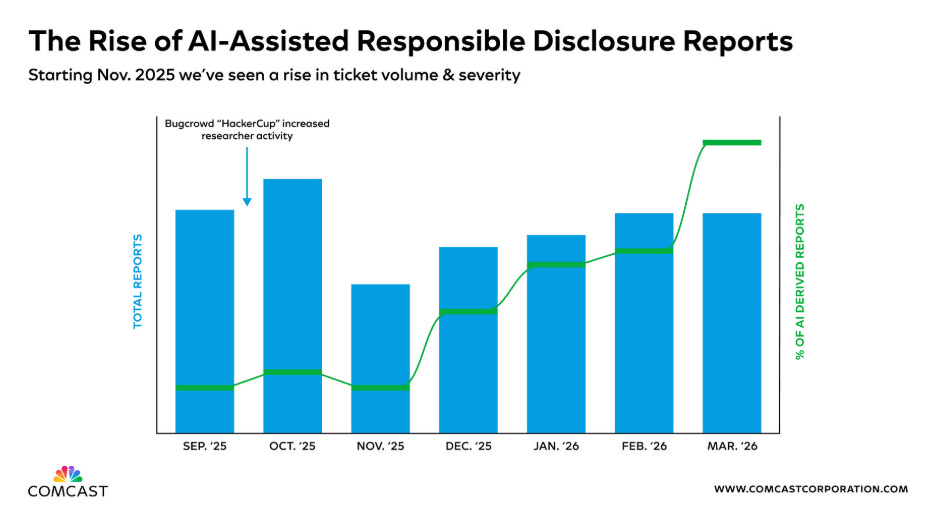

Comcast Business made an argument that I think deserves more airtime. Organizations do not need to wait for the next frontier model to realize meaningful security gains from AI.

Current AI and ML capabilities can already automate threat analysis, anomaly detection, and incident response at machine speed. The obsession with frontier model capabilities, while understandable given the Mythos attention cycle, risks creating a false dependency where teams delay security improvements because they are waiting for the next model generation.

A multi-layered approach combining current AI with human expertise is the pragmatic path. As I have been writing since the 2025 AI Security Rewind, the organizations getting the most value from AI in security are not the ones chasing the bleeding edge. They are the ones deploying proven capabilities at scale.

AppSec

Mini Shai-Hulud Devours TanStack in a Six-Minute Supply Chain Blitz

The speed and sophistication of this attack should be required reading for every engineering team. On May 11, 2026, the TeamPCP threat actor published 84 malicious versions across 42 @tanstack/* npm packages in six minutes.

The tanstack/react-router package alone receives roughly 12 million weekly downloads. Within 48 hours, the campaign expanded to 172 packages with 403 malicious versions across npm and PyPI. The attack chain exploited three GitHub Actions vulnerabilities in sequence, creating a fork with malicious code, poisoning the GitHub Actions cache, and extracting OIDC tokens from runner process memory for unauthorized package publishing.

The payload was a credential stealer targeting CI/CD tokens, cloud credentials from AWS IMDSv2, GCP, and Azure, Kubernetes service accounts, and HashiCorp Vault secrets.

As I wrote in Software Transparency, the trust model in modern package ecosystems was not designed for this kind of coordinated, multi-vector attack. Combined with the PyTorch Lightning compromise from issue #96, Mini Shai-Hulud demonstrates that supply chain worms are becoming self-propagating and cross-ecosystem.

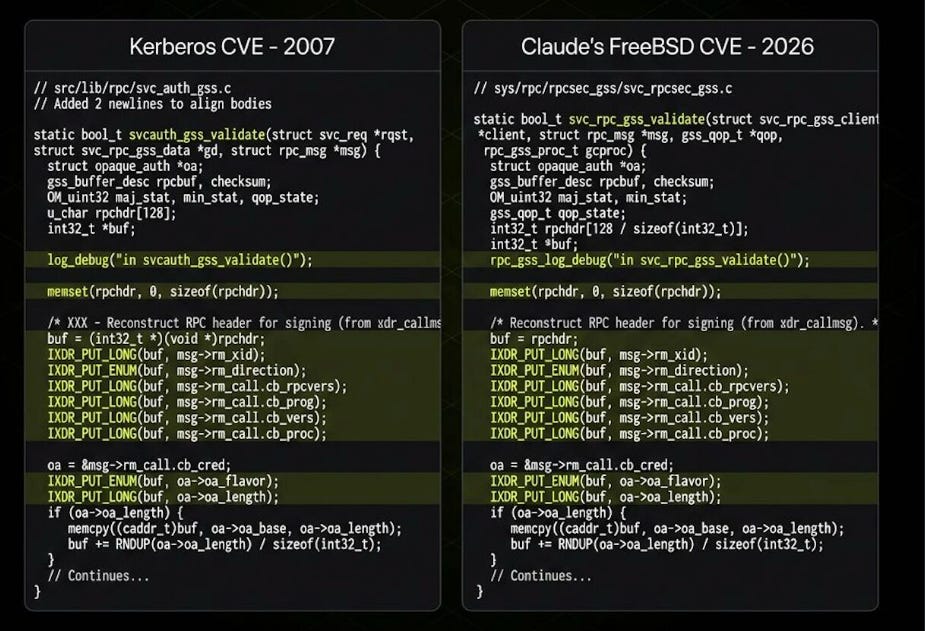

Mythos Found a CVE That Was Already in Its Training Data

The Mythos scrutiny arc that I have been tracking since issue #95 took another turn this week. Rival Security examined CVE-2026-4747, a remote code execution vulnerability in FreeBSD’s networked file system that Mythos reportedly discovered.

The vulnerable function matched CVE-2007-3999, which was patched in 2007, and the code was already present in Claude’s training data. Rival Security characterized this as “combinatorial creativity” rather than genuine novel discovery.

The AI rediscovered and recombined information it had already been trained on. This does not mean the finding is worthless. Rediscovery of latent vulnerabilities in active codebases has real value. But it does mean we need to be precise about what “AI-discovered vulnerability” actually means. The distinction between novel zero-day discovery and informed pattern matching matters for how we calibrate trust in AI security tools.

Daniel Stenberg Ran Mythos Against Curl and Was Not Impressed

Nobody knows curl better than Daniel Stenberg, and his assessment of Mythos’s performance against the project’s 178,000 lines of code is worth reading carefully. Of five “confirmed security vulnerabilities” that Mythos identified, three were known issues already in the official documentation, one was a bug but not a security hole, and one was an actual low-severity vulnerability that was patched in late June.

Previous analysis with other AI tools including Zeropath, AISLE, and OpenAI’s Codex had identified 200-300 issues with a dozen or more confirmed vulnerabilities. Stenberg’s conclusion was blunt. He saw no evidence that Mythos finds issues to any higher or more advanced degree than existing tools.

Combined with Rival Security’s training data findings and the Glasswing Paradox from issue #96 where fewer than 1% of Mythos-found vulnerabilities were patched, the picture that emerges is of a capability that is real but substantially overhyped relative to the marketing narrative.

380,000 Vibe-Coded Apps and the Data Leaking from Them

This is the vibe coding reckoning I warned about in Vibe Coding Conundrums and across newsletters #73, #76, and #78. RedAccess researchers identified approximately 380,000 publicly accessible applications created with vibe-coding platforms like Lovable, Replit, Base44, and Netlify.

Of those, 5,000 were actively leaking sensitive data including medical records, financial information, and corporate documents. 40% of examined applications had virtually no security or authentication. The examples are damning. A shipping company’s app exposed vessel routes.

A Brazilian bank’s financial data was publicly accessible. Unredacted customer service conversations were indexed by search engines. Platform responses ranged from ignoring findings to deflecting responsibility to users. As Andrej Karpathy defined it, vibe coding is for scenarios where you intentionally disregard code quality.

The problem is that thousands of people are shipping vibe-coded apps into production with real user data behind them.

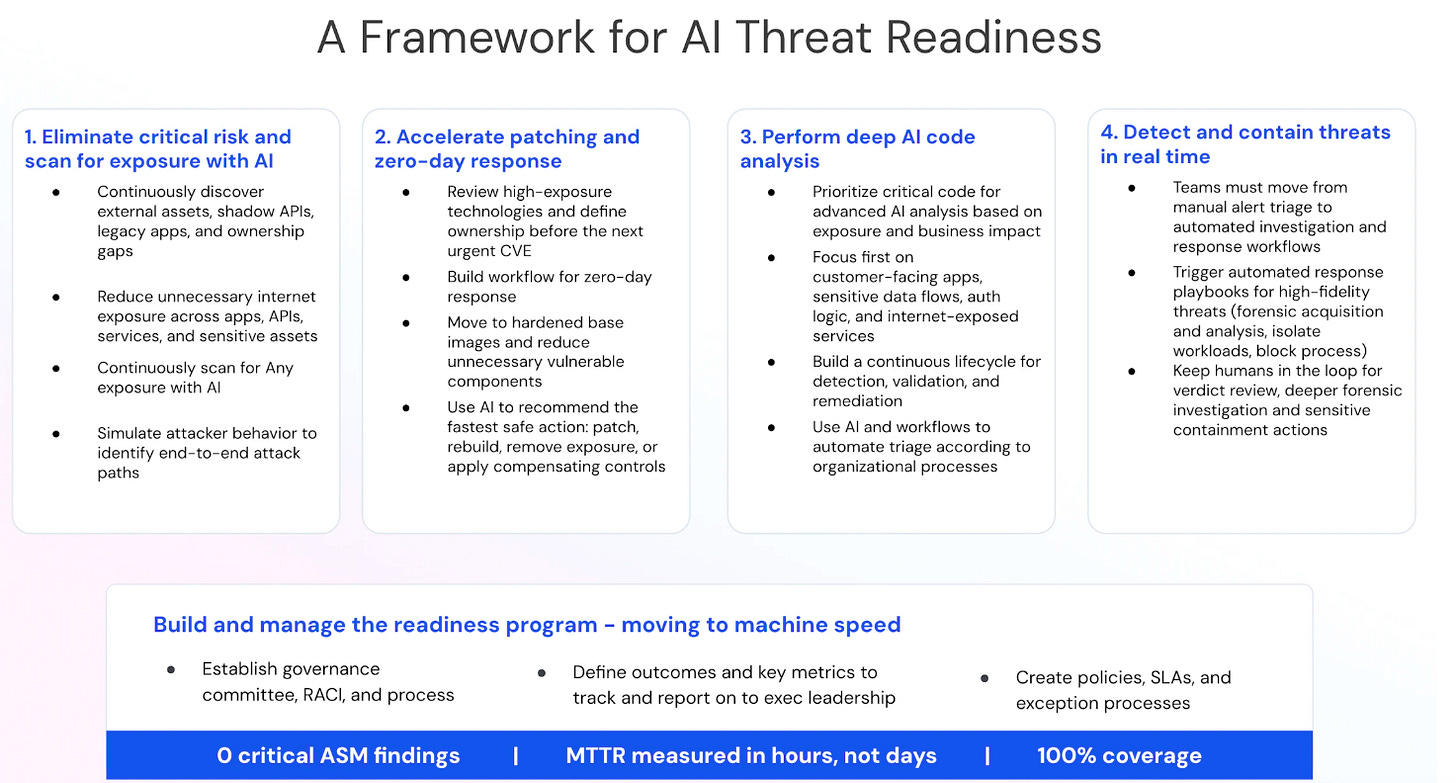

Wiz Proposes an AI Threat Readiness Framework

Wiz framed the problem in a way that resonates with everything I have been tracking on the vulnerability management front. The gap between exposure and exploitation is shrinking, and security programs need to reduce the time between identification, validation, and remediation.

Their four-pillar framework is organized around two axes. Speed of Action and Breadth of Visibility. Wiz Defend provides runtime visibility and threat detection across workloads, cloud environments, Kubernetes, identities, and AI runtime activity. What I appreciate about this framework is the explicit acknowledgment that AI readiness is not just about deploying AI tools.

It requires comprehensive visibility across the full environment, from cloud infrastructure to code to SaaS to supply chain. As I wrote in my piece on the AI cyber capability curve, the organizations best positioned for the AI threat era are the ones that solved the visibility problem first.

Cloudflare’s Response to the Copy Fail Linux Privilege Escalation

CVE-2026-31431 is the kind of vulnerability that keeps infrastructure teams up at night. An out-of-bounds write in the Linux kernel’s algif_aead crypto module, exploitable through splice() and page cache manipulation, affecting virtually every Linux distribution since 2017.

The exploit is deterministic, does not rely on race conditions, and can be implemented in approximately 732 bytes. Cloudflare’s Security and Engineering teams validated that their existing behavioral detections could identify the exploit pattern within minutes of disclosure, and no customer impact occurred.

What makes this worth highlighting is the operational maturity on display. The flaw existed for nine years across every major distribution, and Cloudflare’s defense-in-depth approach caught it before it mattered. That is the model. You cannot prevent every vulnerability from existing, but you can build detection and response capabilities that reduce the window between disclosure and mitigation to minutes rather than days.

Simon Willison Corrects the Record on Vibe Coding

Simon Willison’s clarification matters because the misuse of Andrej Karpathy’s term has real consequences for how we think about AI-generated code risk. Vibe coding does not mean using AI tools to help write code. It means generating code without caring about the output.

Karpathy coined it on February 6, 2025, for throwaway and experimental projects where code quality is intentionally disregarded. The tech publishing industry has conflated vibe coding with responsible AI-assisted development, and that conflation muddies every conversation about security implications. When I write about vibe coding risks, I am talking about the 380,000 publicly accessible apps that Wired documented this week, not about professional developers using AI with proper review.

The distinction between intentional disregard for quality and augmented professional development is the difference between a security crisis and a productivity gain. Getting the terminology right is the first step toward getting the governance right.

Rami McCarthy’s Spooky Skills and the Agent Trust Problem

Wiz Principal Security Researcher Rami McCarthy’s “spooky-skills” project highlights a risk that intersects directly with the OpenClaw backdoor discussed above and the broader supply chain concerns I have been tracking.

Agent skills, the extensible capabilities that allow AI agents to interact with external systems, represent a growing attack surface where misconfigured GitHub Actions, social engineering of maintainers, and credential hygiene failures converge. McCarthy’s research emphasizes that the same trust assumptions that created the npm and PyPI supply chain problems are being replicated in agent skill marketplaces.

As I wrote in issue #96 with the PyTorch Lightning compromise and in Software Transparency, the trust model has to evolve. Every new extension point for AI agents is a potential supply chain entry vector, and most organizations have zero visibility into the skills their agents are consuming.

Final Thoughts

This week drove home a point that keeps sharpening with every issue. The Mythos hype cycle is colliding with operational reality, and operational reality is winning. Rival Security found a celebrated discovery that was already in the training data.

Daniel Stenberg found no evidence of capability beyond existing tools. Meanwhile, the actual threats are accelerating. Google confirmed the first AI-generated zero-day exploit used in the wild. Mini Shai-Hulud compromised 172 packages across two ecosystems in 48 hours. Every major AI coding IDE is vulnerable to IDEsaster attacks. And 380,000 vibe-coded apps are leaking real user data.

The positive developments are real but unevenly distributed. Microsoft’s MDASH found Critical Windows vulnerabilities with a 100-agent swarm. Cisco open-sourced both a security specification and an 8-billion-parameter security model. CSA gave us a maturity framework. Cloudflare demonstrated what operationally mature detection looks like against a nine-year-old Linux vulnerability. These are concrete, measurable advances.

But the gap I identified in issue #96 between discovery and remediation is widening, not narrowing. The Internet Bug Bounty paused new submissions. The organizations that will navigate this well are not the ones with the most advanced AI models. They are the ones building operational frameworks, identity infrastructure, and detection capabilities that match the speed of the threat. The race is not about who finds the most vulnerabilities. It is about who closes the loop fastest.

Stay resilient.