Resilient Cyber Newsletter #93

The Beginning of the End of Cyber, Global Cyber Market, SEC Cyber "Materiality", AISI Eval of Claude Mythos, AI Cyber's Jagged Frontier & Mother of All KEVs

Welcome to issue #93 of the Resilient Cyber Newsletter!

If last week felt like a turning point with Project Glasswing, this week confirmed it. The reverberations from Anthropic’s Mythos announcement rippled through Wall Street, the White House, the Pentagon, and every major open source ecosystem.

Federal Reserve Chairman Jerome Powell and Treasury Secretary Scott Bessent convened CEOs from JPMorgan, Goldman Sachs, Citigroup, Bank of America, Morgan Stanley, and Wells Fargo to discuss what Mythos means for financial infrastructure. More than 99% of the vulnerabilities Mythos has found remain unpatched.

Meanwhile, the UK AI Safety Institute published its independent evaluation of Mythos and confirmed a 73% success rate on expert-level capture-the-flag challenges that no prior model had solved.

OpenAI responded by launching its own Trusted Access for Cyber program with a cyber-permissive model for vetted defenders. Jen Easterly published a striking piece arguing that Mythos marks the beginning of the end of cybersecurity as we know it.

The Trump administration released its offensive cyber strategy, and Defense One reported the private sector is being drawn deeper into the offensive cyber debate than ever before.

AISLE published research showing that the moat in AI cybersecurity is the system, not the model, and that small open models can match frontier labs on basic security reasoning.

There is a lot of ground to cover, so let’s get going.

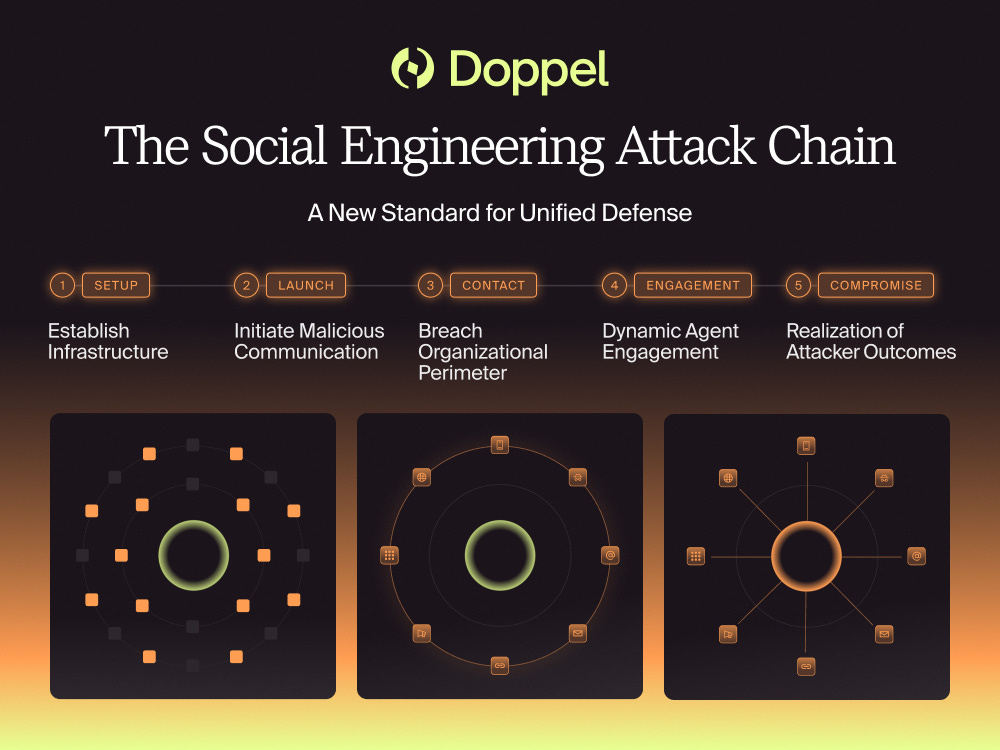

Social engineering has a new playbook.

Social engineering attacks are no longer isolated incidents; they follow a structured chain. Attackers gather context, build credible identities, and engage targets in ways that feel routine and trustworthy.

That’s what makes them difficult to detect. Each step is designed to blend in.

Defending against this kind of activity means understanding how attacks unfold from start to finish, across multiple channels.

Doppel breaks down how the modern social engineering attack chain works, and what it takes to identify and disrupt it earlier.

Interested in sponsoring an issue of Resilient Cyber?

Sponsorship reaches over 31,000 subscribers, including developers, engineers, architects, CISOs and other security leaders, and business executives.

Reach out below!

Cyber Leadership & Market Dynamics

Jen Easterly on the Beginning of the End of Cybersecurity

Former CISA Director Jen Easterly published one of the most important essays of the year, arguing that Anthropic’s Mythos announcement marks a fundamental inflection point for the cybersecurity profession.

Easterly’s central thesis is that AI-driven vulnerability discovery at Mythos-level capability changes the economics of attack and defense so profoundly that the traditional model of cybersecurity, built on perimeter defense, human-speed patching, and reactive incident response, cannot survive in its current form. This is not a pessimistic take.

Easterly is arguing for a new foundation, one where security is built into software from the start and where AI-powered defense operates at the same speed as AI-powered offense. As someone who has tracked Easterly’s Secure-by-Design advocacy since her time at CISA, I see this as a natural extension of the work she championed there.

The difference now is that the urgency is measured in weeks, not years.

Powell and Bessent Convene Bank CEOs on Mythos Cyber Risk

This story deserves attention because it signals that AI-driven vulnerability discovery has reached the level of systemic financial risk. Federal Reserve Chairman Jerome Powell and Treasury Secretary Scott Bessent met with the CEOs of JPMorgan, Goldman Sachs, Citigroup, Bank of America, Morgan Stanley, and Wells Fargo to discuss the implications of Claude Mythos Preview for financial infrastructure.

The meeting came just days after Anthropic launched Project Glasswing with $100 million in usage credits and partnerships with Amazon, Apple, Cisco, CrowdStrike, Google, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks.

The fact that the most powerful financial regulators in the country are sitting down with the largest banks specifically because of an AI model’s offensive capabilities is unprecedented. More than 99% of the vulnerabilities Mythos has discovered remain unpatched, and the oldest was a 27-year-old OpenBSD bug. This is no longer just a cybersecurity conversation. It is a national security conversation.

JPMorgan Says Anthropic’s Cybersecurity Model to Boost CrowdStrike and Palo Alto Networks

JPMorgan moved quickly after the Glasswing announcement, identifying CrowdStrike and Palo Alto Networks as the primary beneficiaries of the defensive coalition. Both are founding partners in Project Glasswing, and JPMorgan’s analysts see them as essential layers in what they call the “defensive stack” that organizations will need as AI-discovered vulnerability volumes grow.

CrowdStrike received a $475 twelve-month price target and Palo Alto Networks received $200. I am less interested in the stock picks than the structural argument. If AI is going to find vulnerabilities at machine speed, the companies that can operationalize detection, triage, and response at that speed will capture enormous value. This validates the platformization thesis I have been tracking since Nikesh Arora’s Sequoia conversation in issue #92.

WSJ on AI Forcing a Rethink in Cybersecurity

The Wall Street Journal published a comprehensive analysis of how AI is reshaping trust models, governance, and workforce requirements across the cybersecurity industry. The central argument is that 2026 is defined by a collapse of trust as adversaries exploit human behavior and digital identity at scale using generative AI. Perimeter-based thinking is breaking down. Identity is becoming the central organizing principle for security strategy.

This aligns directly with the agentic identity work I highlighted in issue #92, where Dick Hardt’s AAuth spec and Karl McGuinness’s authority-first framing represent the infrastructure response to exactly this challenge. The WSJ piece also notes that buyer expertise has never mattered more, which tracks with Anton Chuvakin’s RSA 2026 observation from last week about vendors spray-painting AI onto 2021 marketing materials.

Amy Chang on the End of the Gray Zone

Amy Chang, former JPMorgan Chase Executive Director of Global Cybersecurity and author of Warring State, published a piece on the collapse of the gray zone between peacetime cyber operations and outright conflict.

Her argument connects directly to the offensive cyber policy debate playing out in Washington. As Mythos-class capabilities proliferate, the ambiguity that nation-states have exploited for decades, conducting espionage and disruption while maintaining plausible deniability, is eroding.

When AI can autonomously discover and exploit vulnerabilities across critical infrastructure, the distinction between intelligence collection and preparation for attack becomes dangerously thin.

US Push to Counter Hackers Draws Industry Into Offensive Cyber Debate

Defense One published a deep investigation into how the Trump administration’s cyber strategy is drawing private sector companies deeper into offensive operations. Nearly a dozen industry stakeholders expressed uncertainty about where companies should draw the line between defensive and offensive work.

The strategy’s first pillar explicitly focuses on creating obstacles for foreign state cyber operatives and criminal hackers, and the language around “disruption” and “cyber effects” remains ambiguous. GovConWire’s reporting adds that VulnCheck’s Jay Wallace noted the US is “thinking more offensively than we ever have in the nation’s history.”

This is a significant policy shift with real implications for the cybersecurity industry, and I expect it to generate considerable debate about liability, oversight, and proportionality over the coming months.

Jay McBain on the Global Cybersecurity Market

Jay McBain shared updated data showing the global cybersecurity market at $311 billion in projected spend for 2026, growing at 12.1% year-over-year. These numbers confirm the structural growth thesis, but the real story is where the growth is concentrating.

As I have been tracking since SVB’s H1 2026 State of the Markets report in issue #92, capital is flowing disproportionately to AI-native platforms while traditional point solutions fight for scraps. The $311 billion top line is healthy, but the distribution underneath is increasingly bifurcated.

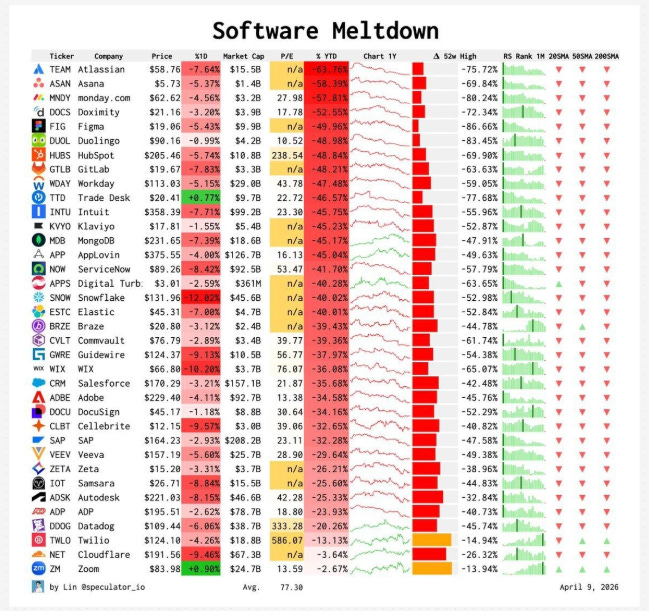

The SaaS Meltdown Is Real

Ruben Dominguez Ibar published a blunt assessment of the SaaS market decline, arguing that the SaaS era is ending because software is a business tool, not a business model. Recurring revenue models are fading as customer acquisition costs rise, flexibility expectations shift, and SaaS fatigue drives enterprises to consolidate vendor counts.

This connects directly to the SaaSpocalypse narrative I have been tracking since issue #85. For cybersecurity, the implication is clear. Vendors selling seats and subscriptions for static functionality will lose to platforms that deliver continuous, AI-powered outcomes. The market is punishing SaaS inertia.

Cisco in Talks to Acquire Astrix Security

Cisco is in advanced discussions to acquire Israeli startup Astrix Security for $250 to $350 million. Astrix was founded in 2021 by Unit 8200 veterans Alon Jackson and Idan Gour and provides visibility into non-human identities, including AI agents, automated processes, and autonomous tools. Enterprise customers include Workday, NetApp, Priceline, and Figma. Astrix raised a $45 million Series B in December 2024 led by Menlo Ventures and Anthropic’s Anthology Fund, bringing total funding to $85 million.

This acquisition validates the thesis I have been writing about extensively. Non-human identities are among the fastest-growing attack surfaces in enterprise security, and the major platform vendors are buying their way into the category. I covered Cisco’s MEMORY.md compromise research in issue #92, so it is clear they are investing across the NHI and agent security space.

Reid Christian on Watching the Watchers

Reid Christian at CRV published observations on what is happening in cybersecurity venture right now. His key insight is that organizations need ancillary monitoring alongside their primary security vendors, people watching the watchers.

He traces this back to the CrowdStrike outage, which was not a cybersecurity failure but a vendor deployment failure where untested software was pushed to production. CRV is backing Fleet as one response to this problem.

The broader point resonates with me. In a world where security agents and AI tools are making autonomous decisions, independent verification and monitoring layers become essential infrastructure.

Heavy Is the Head That Wears the Crown

Dan Gray at Odin published an analysis of how venture dynamics distort startup outcomes. His central argument is that hot-market effects cause investors to overfund high-performing companies, inflating valuations in ways that make startups brittle through over-investment and over-hiring.

For early-stage cybersecurity founders, the takeaway is that raising minimally at early stages preserves optionality. This connects to the SVB data from issue #92 showing deal count falling 15% while dollars invested jumped 53%, with the top 1% capturing a third of all capital. The market rewards conviction at the top and punishes everyone else.

CRV CISO 2026 Focus Areas

James Green at CRV published the firm’s 2026 CISO investment thesis, and three areas stand out. First, Golden Artifacts, meaning cryptographic proof of secure software. Second, MCP and agentic security. Third, AI governance for CISOs with direct accountability to boards.

All three track with themes I have been covering across recent issues, from the supply chain integrity work in my Vulnpocalypse deep dive to the agentic identity standards work by Dick Hardt and Karl McGuinness.

The venture signal here is clear. These are the categories where institutional investors expect the next wave of enterprise security spending.

Caleb Sima on Conference Intel and Security Conference Coverage

Caleb Sima shared his conference intel from the spring 2026 security conference circuit. Caleb is one of the most experienced operators in AI security, having co-founded and led multiple security companies before chairing the Cloud Security Alliance’s AI Safety Initiative.

His observations consistently cut through vendor noise to identify the themes that actually matter for practitioners. For anyone trying to filter signal from the RSA and post-RSA chatter, Caleb’s perspective is worth following closely.

Andrew Hoog on SEC Cybersecurity Materiality

Andrew Hoog at NowSecure raised an important gap in how organizations approach SEC cybersecurity disclosure. His core observation is that companies are overlooking the reputational and legal impacts of mobile application security in their materiality assessments.

The SEC is not looking for whether you had your website scanned. They are focused on incidents that materially affect the business. Hoog’s question for security leaders is whether they are connecting mobile risks all the way through to revenue and retention in their business strategy. Risk is the language of business, and translating technical cybersecurity concerns into that language is where most programs fall short.

Jochen Schmiedbauer on Playbook Pedestal Burn

Jochen Schmiedbauer published a piece challenging the industry’s over-reliance on established playbooks and frameworks. The argument connects to a theme I keep returning to. Static playbooks built for yesterday’s threat landscape cannot keep pace with AI-accelerated attacks.

Organizations that put frameworks on a pedestal without adapting them to current operational reality end up with compliance artifacts rather than security outcomes. As Casey Ellis argues in his piece below, defense scales with committees while offense scales with compute. Playbooks are only useful if they evolve at the speed the environment demands.

AI

AISI Evaluation of Claude Mythos Preview’s Cyber Capabilities

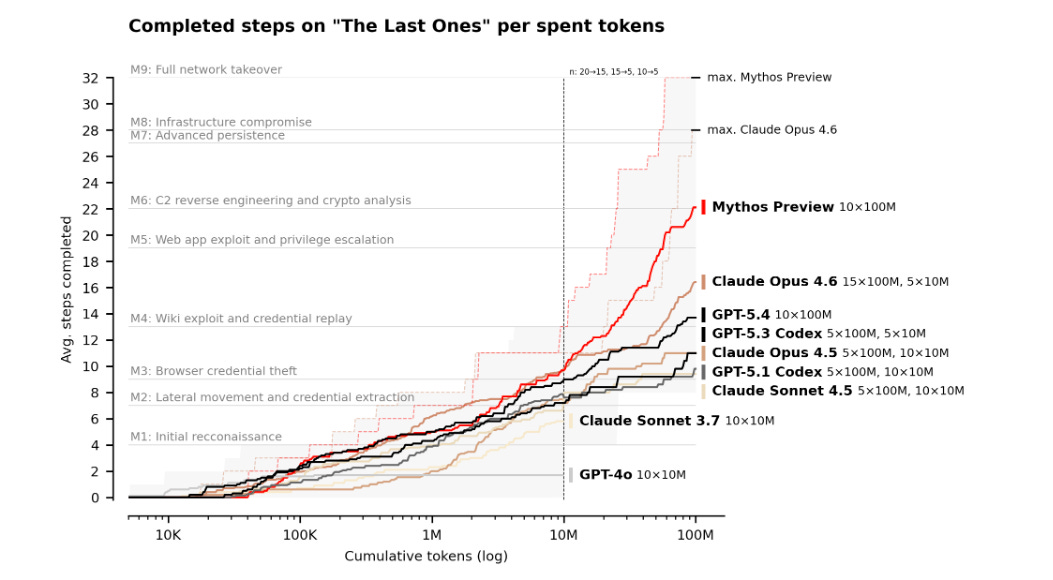

The UK AI Safety Institute published its independent evaluation of Claude Mythos Preview, and the results confirm the capability leap. On expert-level capture-the-flag challenges that no prior model had solved, Mythos achieved a 73% success rate. AISI also built a 32-step corporate network attack simulation called “The Last Ones” and Mythos completed the full chain in 3 of 10 attempts, averaging 22 of 32 attack steps per run.

The important caveats are that these evaluations lack active defenders, defensive tooling, and penalties for triggering security alerts. We cannot definitively say whether Mythos would succeed against well-defended systems. But AISI’s conclusion is clear. More models with similar capabilities will be developed, and the window for building defensive infrastructure is narrowing.

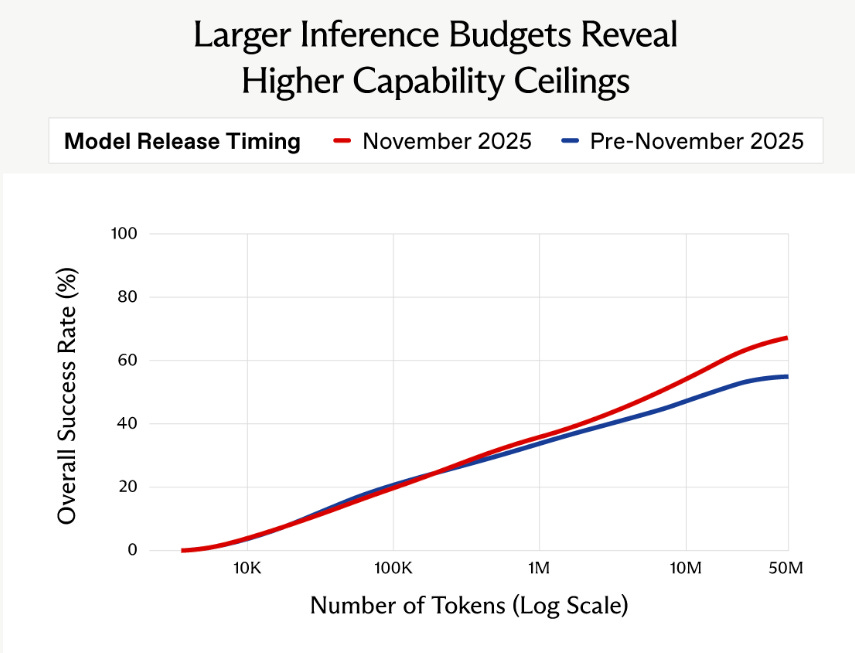

AISI on Inference Scaling in AI Cyber Tasks

This companion piece from AISI is equally important. Their research demonstrates that accurately estimating AI cyber capabilities requires significantly larger inference budgets than commonly assumed. Success rates scale roughly with the logarithm of total tokens used per attempt, meaning every time you double the token budget you get approximately the same absolute increase in success rate.

Approximately 8% of AISI’s tasks were only solved by increasing the budget from 10 million to 50 million tokens. At the 50 million token limit, average cost per run was around $10 with maximum costs below $60. The implication is that previous evaluations conducted at lower budgets were too conservative. The models are more capable than we thought, and the gap will widen as inference costs continue to drop.
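To make the logarithmic scaling concrete, here is a minimal sketch. Only the functional form (success rate rising linearly with the logarithm of the token budget) comes from the description above. The starting rate, the reference budget, and the gain per doubling are made-up illustrative values, not AISI's fitted parameters.

```python
import math


def success_rate(tokens: float,
                 base_tokens: float = 1e6,        # hypothetical reference budget
                 base_rate: float = 0.20,         # hypothetical success rate at that budget
                 gain_per_doubling: float = 0.05  # hypothetical absolute gain per doubling
                 ) -> float:
    """Toy log-linear model: each doubling of tokens adds a fixed absolute gain."""
    doublings = math.log2(tokens / base_tokens)
    rate = base_rate + gain_per_doubling * doublings
    return min(1.0, max(0.0, rate))  # clamp to a valid probability


if __name__ == "__main__":
    for budget in (1e6, 2e6, 4e6, 8e6, 10e6, 50e6):
        print(f"{budget / 1e6:>4.0f}M tokens -> {success_rate(budget):.3f}")
```

Under this kind of curve, going from 10 million to 50 million tokens is a bit more than two doublings. That is why an evaluation capped at the lower budget can understate what the same model does with more compute.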

NBC News on the Vulnpocalypse and Mythos

NBC News published a feature on what they are calling the “Vulnpocalypse,” a term I used for my own deep dive in issue #92. Logan Graham, who leads offensive cyber research at Anthropic, expects competitors, including those in China, to release similar models in the coming months.

The limited release strategy for Mythos makes sense given the offensive potential, but the clock is ticking. Once multiple labs have this capability, the controlled-access model becomes much harder to maintain. The race between discovery and remediation that I wrote about in Vulnpocalypse is now playing out in public, and the stakes could not be higher.

WSJ on Bugmageddon

The Wall Street Journal used the term “Bugmageddon” to describe the flood of AI-discovered vulnerabilities that is about to overwhelm the software ecosystem. Anthropic found thousands of bugs in the first month alone, and the concern is that smaller developers and under-resourced open source projects will be hit hardest.

OpenAI has responded by launching its own security-centric model for vetted defenders, and the White House summoned representatives from JPMorgan, Goldman Sachs, Citigroup, Bank of America, and Morgan Stanley to discuss system-level vulnerabilities surfaced by frontier AI.

The volume problem is real. As I argued in Vulnpocalypse, vulnerability backlogs already number in the hundreds of thousands for large enterprises, and remediation rates sit at roughly 10% per month. Now add AI-speed discovery to that equation.
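A rough way to see why discovery speed matters so much is a back-of-the-envelope backlog model. The sketch below assumes that "10% per month" means a team closes 10% of whatever is open each month, and every input number is hypothetical, chosen only to show the shape of the curve.

```python
def simulate_backlog(start: int, monthly_new: int,
                     fix_rate: float, months: int) -> list[int]:
    """Each month: close a fraction of the open backlog, then add new findings."""
    backlog = start
    history = [backlog]
    for _ in range(months):
        backlog = round(backlog * (1 - fix_rate)) + monthly_new
        history.append(backlog)
    return history


if __name__ == "__main__":
    # Hypothetical enterprise: 100,000 open findings, 10% closed per month.
    steady = simulate_backlog(100_000, monthly_new=10_000, fix_rate=0.10, months=12)
    # Same team, but discovery is five times faster (hypothetical multiplier).
    surge = simulate_backlog(100_000, monthly_new=50_000, fix_rate=0.10, months=12)
    print("steady inflow:", steady[-1])
    print("5x inflow:    ", surge[-1])
```

In this model the backlog settles near the monthly inflow divided by the fix rate. At a 10% fix rate, every extra finding per month eventually adds about ten findings to the standing backlog unless remediation speeds up too.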

OpenAI Launches Trusted Access for Cyber Defense

OpenAI’s response to Mythos was swift. They launched the Trusted Access for Cyber (TAC) program with a new model called GPT-5.4-Cyber, which is “trained to be cyber-permissive” so that defenders can test systems without encountering refusals. TAC requires identity verification and professional use-case documentation to gain access. OpenAI’s stated philosophy is that they do not think it is practical or appropriate to centrally decide who gets to defend themselves.

This is a fundamentally different approach than Anthropic’s restricted-access model for Mythos. Both approaches have merit. Anthropic is controlling access to the most capable offensive model ever built. OpenAI is trying to democratize defensive capabilities. The tension between these two philosophies will define how AI-powered security tools are distributed for the foreseeable future.

Anthropic on Preparing Your Security Program for AI-Accelerated Offense

Anthropic published guidance for security teams on how to prepare for AI-accelerated offensive capabilities. This is the operational companion to the Project Glasswing announcement from issue #92. The core message is that organizations need to assume AI-driven vulnerability discovery and exploitation are already here and adapt their programs accordingly.

Attack surface management, automated patching, runtime detection, and AI-powered triage all need to scale to match the speed of AI-driven offense. This aligns with everything I have been writing about the remediation race.

AISLE on AI Cybersecurity After Mythos and the Jagged Frontier

AISLE published one of the most important technical analyses of the post-Mythos landscape. Their key finding is that the competitive moat in AI cybersecurity is the system, not the model. There is no stable best model across cybersecurity tasks. The capability frontier is jagged and does not scale smoothly with model size. AISLE found that 8 of 8 models they tested detected Mythos’s flagship FreeBSD exploit, including a 3.6 billion parameter model costing $0.11 per million tokens.

A 5.1 billion parameter open model recovered a 27-year-old OpenBSD bug. Small open models outperformed most frontier models on basic security reasoning tasks. The value lies in targeting, iterative deepening, validation, triage, and maintainer trust. This is a critical insight for the industry. You do not need to be Anthropic to build effective AI-powered security. You need better systems.

AISLE on System Over Model and Zero-Day Discovery

AISLE’s companion piece goes deeper into their Cyber Reasoning System architecture. When OpenSSL announced 12 new zero-day vulnerabilities on January 27, 2026, AISLE’s system had discovered every one of them autonomously. In its first weeks of operation, the system uncovered over 100 new vulnerabilities in foundational software including the Linux kernel, OpenSSL, cURL, and Apache.

The system reduces the remediation loop from weeks or months to days or minutes by automatically generating and verifying fixes against a continuously updated AI twin of the enterprise’s software stack. This is exactly the kind of integrated discovery-to-remediation pipeline that I argued we need in Vulnpocalypse. Finding vulnerabilities is only useful if you can fix them at the same speed.

Inside Claude Cowork and How Anthropic’s Autonomous Agent Actually Works

Pluto Security published a detailed teardown of Claude Cowork, Anthropic’s persistent autonomous agent that runs inside a sandboxed Linux virtual machine on Mac. The architecture combines a VM sandbox, direct Chrome browser control, file system access, and remote phone dispatch through iOS and Android apps routing through Anthropic’s servers to a local sessions bridge.

Computer Use gives the agent visual and operational capabilities on the host machine. This represents a new class of AI capability where systems can see screens, control browsers, read files, and operate desktops autonomously while the user is away. The security tension is fundamental.

Greater autonomy increases both utility and attack surface. Every capability Cowork has for productivity is also a capability an attacker could leverage through prompt injection, memory poisoning, or supply chain compromise of the tools the agent interacts with.

Casey Ellis on Offense Scaling with Compute and Defense Scaling with Committees

Casey Ellis published a piece that captures the asymmetry I have been writing about all year. Offense scales with compute. Defense scales with committees. The title alone should be a wake-up call for every security leader. Attackers can throw more GPUs and tokens at vulnerability discovery and exploitation, and their output scales linearly or better. Defenders, by contrast, need to coordinate across organizational boundaries, procurement cycles, compliance reviews, change management processes, and human approval chains.

The structural asymmetry is not new, but AI is amplifying it by orders of magnitude. Bug bounties, vulnerability disclosure programs, and researcher protections through frameworks like disclose.io remain critical mechanisms for closing the gap. But they are not enough on their own. We need the kind of coordinated defensive infrastructure that Project Glasswing represents.

Netanel Rubin on Anthropic’s Mythos Restriction

Netanel Rubin challenged the narrative that Anthropic is not releasing Mythos purely out of safety concerns. His argument raises legitimate questions about the commercial incentives behind controlled access.

When a company holds the most powerful offensive security tool ever built and distributes it exclusively to paying partners, the line between responsible stewardship and competitive moat-building gets blurry. I think Anthropic’s caution is warranted given the offensive potential, but Rubin’s skepticism is healthy. The industry should demand transparency about access criteria, governance structures, and accountability mechanisms for Mythos and similar models.

Defenders Initiative on Things Only Getting Rougher

The Defender’s Initiative published a sobering assessment that from this point on, the defender’s job only gets harder. The proliferation of AI-powered offensive tools, the velocity of vulnerability discovery, and the structural challenges of coordinating defense across fragmented organizations all point in the same direction.

I share the concern but also see real cause for optimism in the infrastructure being built right now. AAuth, AISLE, Project Glasswing, Oligo’s runtime blocking, and the broader ecosystem of AI-native security tools represent genuine defensive innovation. The question is whether that innovation can outpace the offense.

Empirical Security and the Knowing Machine

Empirical Security, the team behind EPSS (Exploit Prediction Scoring System), published research on what they call “The Knowing Machine.” Empirical builds and maintains the world’s only public ML model trained on nearly 2 million daily exploitation events.

Their dual model architecture combines global models trained on broad exploitation data with local models adapted to customer-specific infrastructure. With $12 million in seed funding and leadership from Ed Bellis, Michael Roytman, and Jay Jacobs, Empirical is trying to solve the prioritization problem that makes vulnerability management so painful.

As I wrote in Vulnpocalypse, the challenge is not finding vulnerabilities. It is knowing which ones matter. Empirical’s approach of combining global signal with local context is exactly the right architecture for that problem.

AppSec

Chainguard on Open Source Dying in March

Chainguard published one of the most provocative pieces of the year, arguing that open source died in March 2026 and just does not know it yet. The argument is not that open source as licensed software is broken. The problem is how organizations consume and distribute it. PyPI and npm distribute unreviewed, unsigned software with assumed trust at every layer.

The supply chain attacks I have been tracking across issues #90 through #92, from axios to LiteLLM to Telnyx to Trivy, all exploited this same structural weakness. Chainguard’s point is that the consumption model is fundamentally broken and needs to be rebuilt from the ground up. I largely agree. As I wrote in Software Transparency, the trust assumptions baked into modern package ecosystems were designed for a different era. The current architecture cannot withstand industrialized supply chain attacks.

Joshua Saxe on Exploits Not Causing Cyberattacks

Joshua Saxe published a counterpoint to the prevailing doomsday narrative around AI and exploits. His core argument is that technologies do not cause cyberattacks. Attackers use whatever tools achieve their goals most efficiently. Despite advances in AI capabilities, attacks have not surged as predicted because most attacker constituencies can currently achieve their desired outcomes using traditional means like phishing, credential stuffing, and exploitation of known CVEs.

I think Saxe’s perspective is a useful corrective to some of the more breathless predictions, but I also think it underestimates the second-order effects. The value of AI is not just in creating new exploits. It is in accelerating the entire attack lifecycle from reconnaissance through exploitation through lateral movement, all at machine speed. The fact that attackers have not needed AI yet does not mean they will not need it when defenders start using it.

Broken by Default: Formal Verification of Security Vulnerabilities in AI-Generated Code

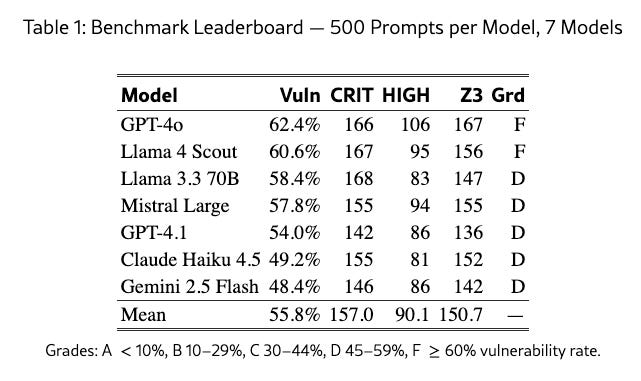

This arXiv paper is one of the most rigorous studies of AI-generated code security I have seen. Researchers analyzed 3,500 code artifacts across 500 security-critical prompts spanning five CWE categories, using the Z3 SMT solver to generate mathematical satisfiability witnesses rather than relying on pattern-based heuristics.

The results are grim. 55.8% of artifacts contain at least one formally proven vulnerability. No model achieves better than a D grade. GPT-4o has the highest vulnerability rate at 62.4%, which earns an F. Gemini 2.5 Flash performs best at 48.4%, earning a D. Six of seven representative findings were confirmed with runtime crashes under GCC AddressSanitizer.

This is not speculation. This is formal proof that AI-generated code is insecure by default, which reinforces everything I have been writing about vibe coding risks since issue #73.

Supply-Chain Poisoning Attacks Against LLM Coding Agent Skill Ecosystems

This companion arXiv paper examines how LLM-based coding agents extend capabilities through third-party “agent skills” from open marketplaces. Unlike traditional packages, agent skills are executed as operational directives with system-level access and lack mandatory security review before execution. The paper catalogs attack vectors where malicious skills can be injected into agent workflows through supply chain poisoning.

This connects directly to the a16z “Et Tu Agent” research from issue #92, where 20% of AI-recommended packages were fabrications and attackers were already slopsquatting hallucinated package names. The agent skill ecosystem is replicating every mistake the npm and PyPI ecosystems made, but with even higher privilege levels.

Wiz on GitHub Actions Security Threat Model and Defenses

Wiz published a comprehensive threat model for GitHub Actions that every team using CI/CD should read. The fundamental security challenge is controlling what code runs and with what permissions in response to repository events. Public repositories face the hardest version of this problem because the trust boundary separates repository owners and collaborators from fork PR authors and issue creators.

High-privilege triggers like pull_request_target and workflow_run are dangerous because they run workflows in the base repository context with access to secrets. Wiz recommends pinning action commit SHAs, setting default permissions to read-only, and restricting workflows to verified Actions from trusted sources.

This is the kind of practical supply chain hardening guidance that complements the broader ecosystem-level arguments from Chainguard and the $60 billion package registry analysis from issue #92.
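The Wiz recommendations are easy to spot-check. Below is a minimal sketch of a text-based audit for a single workflow file. It is my own illustration, not a Wiz tool: it uses regular expressions rather than a real YAML parser, the sample workflow and its 40-character SHA are placeholders, and the missing-permissions check is only a coarse proxy, since default token permissions can also be set at the repository or organization level.

```python
import re

# A full 40-character hex commit SHA, as opposed to a mutable tag or branch name.
SHA_RE = re.compile(r"^[0-9a-f]{40}$")
# Matches "uses: owner/repo@ref" lines, with or without a leading list dash.
USES_RE = re.compile(r"^\s*(?:-\s*)?uses:\s*([^\s#]+)", re.MULTILINE)
RISKY_TRIGGERS = ("pull_request_target", "workflow_run")


def audit_workflow(text: str) -> list[str]:
    """Return human-readable findings for one workflow file's contents."""
    findings = []
    for ref in USES_RE.findall(text):
        if ref.startswith("./") or ref.startswith("docker://"):
            continue  # local actions and container images are out of scope here
        _, _, version = ref.partition("@")
        if not SHA_RE.match(version):
            findings.append(f"unpinned action: {ref}")
    for trigger in RISKY_TRIGGERS:
        if re.search(rf"\b{trigger}\b", text):
            findings.append(f"high-privilege trigger: {trigger}")
    if not re.search(r"^permissions:", text, re.MULTILINE):
        findings.append("no top-level permissions block")
    return findings


# Placeholder workflow for illustration; the SHA below is not a real commit.
sample = """\
on: pull_request_target
jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-node@0123456789abcdef0123456789abcdef01234567
"""

if __name__ == "__main__":
    for finding in audit_workflow(sample):
        print(finding)
```

A check like this will not catch everything a proper parser or dedicated linter would, but it is enough to flag tag-pinned actions and risky triggers across a directory of workflows before a deeper review.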

Oligo Security on Runtime Exploit Blocking

Oligo Security unveiled a runtime exploit blocking capability that stops exploit attempts at the application layer in real time. The detection works by correlating application-layer function calls with system-level activity. Individual actions may appear normal, but specific sequences reveal active exploits.

Once identified, Oligo blocks the underlying system calls while allowing applications to continue running normally. The technique-based protection defends against entire classes of attack techniques rather than individual CVEs, meaning a single protection rule can cover categories of vulnerabilities including zero days. This is exactly the kind of defensive innovation the ecosystem needs. When AI can find vulnerabilities faster than humans can patch them, runtime protection becomes the essential bridging layer.
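To illustrate the idea of sequence-based detection, here is a toy sketch. It is not Oligo's implementation; the event names, the single rule, and the window size are all hypothetical. It shows only the principle described above: an individual system call is allowed unless it completes a suspicious sequence that began at the application layer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    layer: str  # "app" for a library function call, "sys" for a system call
    name: str


# Hypothetical rule: untrusted deserialization followed shortly by a process spawn.
RULE = (Event("app", "deserialize"), Event("sys", "execve"))


def should_block(history: list[Event], incoming: Event, window: int = 5) -> bool:
    """Block the incoming event only if it completes the suspicious sequence."""
    first, second = RULE
    if incoming != second:
        return False  # anything other than the rule's final step passes through
    return first in history[-window:]


if __name__ == "__main__":
    benign = [Event("app", "parse_request"), Event("app", "render_template")]
    suspicious = [Event("app", "parse_request"), Event("app", "deserialize")]
    spawn = Event("sys", "execve")
    print(should_block(benign, spawn))      # spawn without the app-layer precursor
    print(should_block(suspicious, spawn))  # spawn right after deserialization
```

Because the rule describes a technique rather than a specific CVE, the same check would apply to any vulnerability that is exploited through that sequence, which is the property that makes this approach relevant to zero days.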

Pragmatic Engineer on the Impact of AI on Software Engineers in 2026

Gergely Orosz published a comprehensive survey on how AI is reshaping software engineering, and the data is striking. 95% of respondents use AI tools at least weekly. 75% of developers now use AI for at least half their engineering work. 55% use agents.

Claude Code grew from zero to the most-used tool in eight months. Staff-plus engineers are the heaviest agent users at 63.5% regular use, compared to 49.7% for regular engineers.

The career impact data is the most important signal. Employment for developers aged 22 to 25 has declined roughly 20% from the late 2022 peak, while employment for workers aged 35 to 49 in high AI-exposure roles has increased 9%. AI is amplifying experienced engineers and compressing the entry-level pipeline. For security, this means we need to rethink how we train the next generation when the scaffolding work that junior engineers traditionally learned on is being automated away.

Moak AI and the Mother of All KEVs

Moak AI launched what they call the “Mother of All KEVs,” an agentic cybersecurity workflow for analyzing and exploiting known exploited vulnerabilities. The platform uses a multi-agent architecture with collector, researcher, builder, exploiter, and judge agents to process hundreds of dangerous vulnerabilities in minutes. This sits alongside Raptor (from issue #92) and AISLE as another example of agentic security research tooling proliferating rapidly.

The common thread is that vulnerability research, exploitation, and validation are all being automated through multi-agent systems, and the tools are becoming accessible to anyone, not just nation-states and frontier AI labs.

Praetorian Guard Demo

Nathan Sportsman demoed Praetorian Guard, an AI-driven security assessment platform that automates LLM security assessments at scale. Guard turns findings into remediation and improves after every cycle, focusing specifically on securing non-human identities including AI agents and automated processes.

Praetorian has deep expertise in offensive security, and seeing them apply that expertise to agentic and NHI security validates the investment thesis that these categories are moving from niche to mainstream enterprise requirements.

Final Thoughts

This week confirmed something I have been building toward across several issues. AI-powered vulnerability discovery has crossed the threshold from interesting research project to systemic risk that demands coordination at the highest levels of government and finance. When the Federal Reserve Chairman and Treasury Secretary are convening bank CEOs specifically to discuss an AI model’s offensive capabilities, we are in genuinely new territory.

But the response is also unprecedented. Project Glasswing has mobilized over $100 million and the biggest names in technology. AISLE has demonstrated that you do not need a frontier model to build effective AI-powered defense; you need a better system. OpenAI launched cyber-permissive tooling for vetted defenders. Oligo is blocking exploits at runtime. The defensive ecosystem is responding with real engineering, not just marketing.

The piece that will stay with me this week is Casey Ellis’s observation that offense scales with compute while defense scales with committees. That asymmetry is the single biggest challenge we face. Solving it requires exactly the kind of coordinated infrastructure I see being built right now, from AAuth and agentic identity standards to AI-native vulnerability remediation pipelines. The building blocks are there. The question is whether we can assemble them fast enough.

Stay resilient.