Resilient Cyber Newsletter #90

Services: The New Software, Two Paths Left for Software Companies, AI Agent Offensive Capabilities, M-Trends 2026, TeamPCP Wrecks the Supply Chain & the Evolution of AppSec Engineers

Welcome to issue #90 of the Resilient Cyber Newsletter!

This was the week the supply chain came apart. TeamPCP, the threat actor behind the hackerbot-claw campaign I covered in issue #87, escalated from GitHub Actions exploitation to a full-spectrum supply chain attack that compromised Trivy (Aqua Security’s vulnerability scanner used by millions), hijacked 76 GitHub Action tags, defaced Aqua’s entire GitHub organization, and then used stolen CI/CD credentials to backdoor LiteLLM, one of the most widely used Python libraries in the AI ecosystem. A single pip install pulled in a credential stealer that targeted everything from API keys and SSH credentials to Kubernetes secrets and cryptocurrency wallets.

Meanwhile, Google’s M-Trends 2026 report showed attackers handing off access in 22 seconds, Sequoia declared that the next trillion-dollar company will sell work rather than software, AI agents beat 90% of human hackers in a global competition with 18,000 participants, and Jack Cable raised $25 million for Corridor to embed security into AI coding workflows. The CSA launched an entire new foundation dedicated to “Securing the Agentic Control Plane.” There is a lot to cover. Let’s get into it.

Oh yeah, and I spent the week out in San Francisco at RSAC, hanging with the community, speaking with analysts, practitioners, founders, investors, researchers and more.

So yeah, it’s been a slow week :)

Looks like the CEO. Might be a deepfake.

Today’s social engineering attacks look trustworthy by design. Disguised as a routine request, an internal email, a familiar face on a call—they succeed because they blend in. But not with Doppel.

Built to outpace attacks with AI-native defense, Doppel fights back through:

Digital Risk Management that dismantles attacker infrastructure and continually compounds intelligence

Human Risk Management that builds team resilience through simulation and training.

Protect your organization with Doppel’s AI-native social engineering defense platform.

Cyber Leadership & Market Dynamics

Sequoia Capital: Services Are the New Software

Sequoia published one of the most consequential investment theses of the AI era. The argument is straightforward but has massive implications: for every dollar spent on software, six dollars go to services, and the next trillion-dollar company will sell the work done rather than the tool that does it. The distinction between copilots (selling tools) and autopilots (selling outcomes) is the defining architectural choice for AI companies in 2026.

This matters enormously for cybersecurity. If the business model shifts from selling software seats to selling labor outcomes, the identity surface transforms completely. Instead of human users authenticated to SaaS platforms, you have autonomous agents executing tasks on behalf of organizations, each requiring their own credentials, authorization scopes, and audit trails. This is the agentic identity challenge I’ve been writing about through the OWASP NHI Top 10, but at a scale that dwarfs anything we’ve seen from traditional service accounts and API keys.

Sequoia also declared in a companion piece that 2026 is the year of AGI, with coding agents as the first proof point. Whether you agree with that framing or not, the investment capital flowing into agent-first companies is reshaping the entire technology landscape, and security must evolve to match.

WSJ: US Cyber Assault on Iran Hasn’t Stopped Hackers

The Wall Street Journal reported that US cyber operations against Iran, which I first discussed in issue #87, have not achieved the desired deterrent effect. Iranian cyber threat actors remain active and are increasingly targeting civilian infrastructure. This is a reminder that cybersecurity exists within a geopolitical context that can change rapidly and that defensive posture matters regardless of offensive operations.

Fast Company’s Most Innovative Companies 2026: Cybersecurity Edition

Fast Company named four cybersecurity companies to its 2026 Most Innovative Companies list: Sublime Security (#1 in the security category), Cyera, Chainguard, and Horizon3.ai. The common thread is AI as a core architectural pattern, not just a feature.

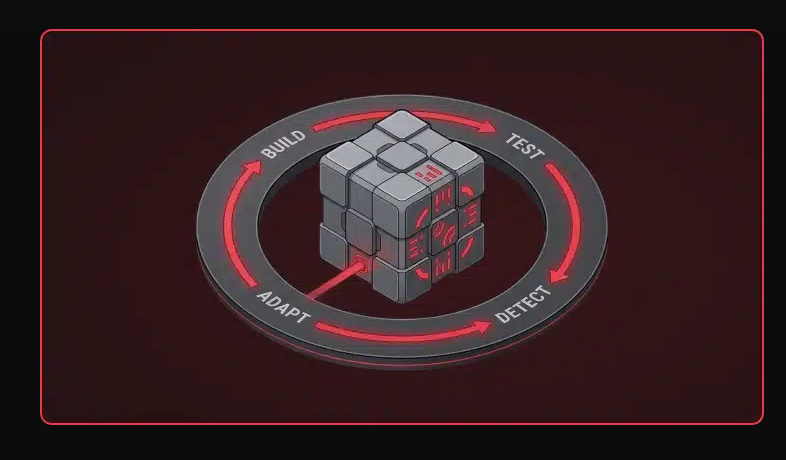

Sublime Security raised $150 million in Series C and shipped two autonomous AI agents for threat triage and detection engineering, embodying the agentic security pattern we’ve been tracking. Horizon3.ai reported 102% year-over-year ARR growth with its continuous “hack, fix, verify, repeat” proactive testing model, now trusted by four Fortune 10 companies.

Chainguard’s inclusion speaks directly to this week’s supply chain security themes, tackling vulnerabilities in open-source dependencies and container images. Cyera rounds out the list with agentless DSPM across cloud, SaaS, and on-prem environments. Several of these CEOs made a point worth repeating, which is that good cybersecurity is no longer a cost center but a revenue accelerator.

SentinelOne S Ventures Invests in Replit

SentinelOne’s venture arm invested in Replit, the browser-based development platform that has become one of the most popular environments for vibe coding. This is a security company making a strategic bet on the AI development ecosystem, which signals that securing AI-generated code is now a portfolio-level priority for cybersecurity vendors.

The investment makes sense when you consider the data. DryRun’s report (which I covered in issue #89) showed 87% of AI-generated PRs introduce vulnerabilities. Replit is where many non-traditional developers are building their first applications using natural language prompts. The security implications are significant, and having a security-first investor at the table is valuable.

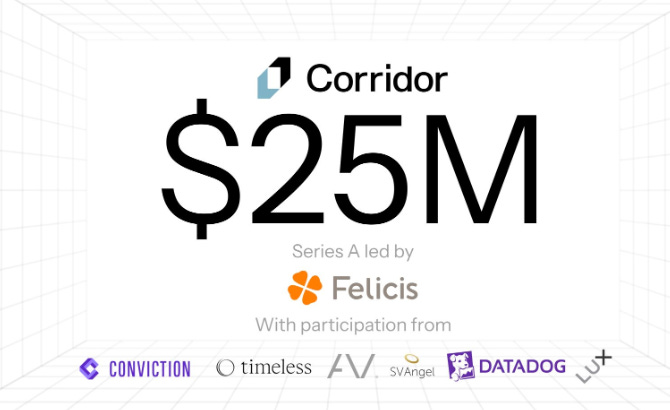

Corridor Raises $25M Series A at $200M Valuation

Jack Cable, who previously led CISA’s Secure by Design initiative, raised $25 million for Corridor with Alex Stamos as CPO and backing from Felicis, Lux Capital, Datadog, and angels from Anthropic, OpenAI, Cursor, and Cognition. The company is building an Agentic Coding Security Management (ACSM) platform that embeds security directly into AI coding workflows.

When Latio’s 2026 Application Security Market Report found that securing AI-generated code is the number one concern for 48% of respondents, it validated exactly the problem Corridor is tackling. Cable’s background at CISA gives him credibility on the policy side, and the investor list reads like a who’s who of the AI coding ecosystem. This team is one to watch in my opinion.

a16z: Two Paths Left for Software Companies

Andreessen Horowitz laid out their view that software companies face a binary choice: become an AI platform or become an AI-powered service. There is no third path. This echoes Sequoia’s thesis and reinforces the structural shift we’ve been tracking since issue #85, when we discussed the SaaSpocalypse. The security implications remain the same: as software companies transform into agent-native platforms, every assumption about authentication, authorization, and behavioral monitoring needs to be revisited.

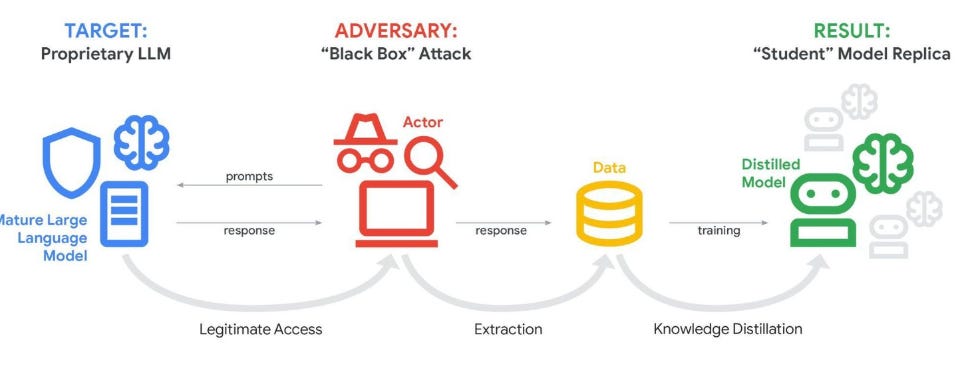

a16z Podcast: Distillation and Supply Chains

The a16z podcast featured a discussion on model distillation and supply chain security that connects directly to themes we’ve been following. As I covered in issue #85, Anthropic accused three Chinese AI labs of running coordinated distillation campaigns using 24,000 fraudulent accounts. The supply chain for AI models is becoming as critical to secure as the software supply chain, and the attack vectors are different but equally consequential.

SentinelOne: More Than Endpoint Security

Cole Grolmus analyzed SentinelOne’s strategic positioning, highlighting the company’s expansion well beyond its endpoint security roots. With the S Ventures investment in Replit and acquisitions in AI security, SentinelOne is positioning itself as a platform player in the AI-native security era. Combined with CrowdStrike’s record earnings (issue #88) and Okta’s agentic identity pivot, the largest cybersecurity companies are all making aggressive bets on AI.

Equifax Annual Security Report

Equifax’s annual security report provides a useful benchmark for how one of the most prominent data companies approaches security governance. For practitioners interested in how large enterprises structure their security programs and communicate risk to stakeholders, this is worth reviewing.

The State of the Product Job Market

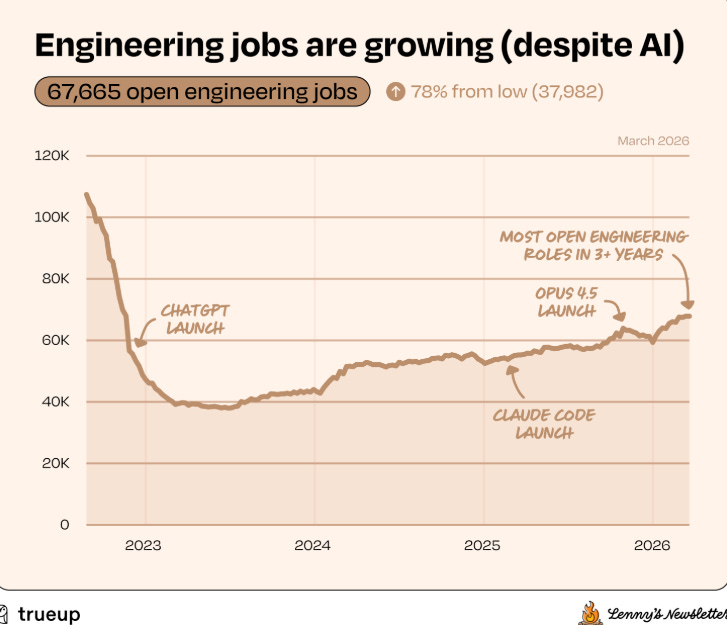

Lenny Rachitsky’s analysis of the product management job market reveals the broader labor impact of AI on knowledge work. Product roles are being reshaped as AI takes on more of the analytical and operational work that PMs traditionally handled. For cybersecurity professionals, this is another signal that the skills required in our field are shifting toward governance, architecture, and AI oversight rather than manual operational tasks.

What many may find most surprising is that the number of engineering jobs is growing, which is counterintuitive given all the mainstream narratives about AI eating the labor market.

AI

CSA Launches CSAI Foundation: Securing the Agentic Control Plane

The Cloud Security Alliance launched CSAI, a new 501(c)(3) foundation dedicated exclusively to AI security with the strategic mission of “Securing the Agentic Control Plane.” The six strategic programs include an AI Risk Observatory (with a CNA scoped on agentic AI), agentic best practices covering identity-first controls for non-human actors, and a CxOtrust program providing board-ready risk narratives.

CSA CEO Jim Reavis declared that the Agentic Control Plane will become as fundamental as identity or network security. This is the kind of institutional infrastructure the industry needs. Standards bodies, certification programs, and governance frameworks do not move as fast as startups, but they create the durable foundation that the entire ecosystem relies on. Combined with NIST’s agent identity concept paper and OWASP’s Agentic Top 10, the standards landscape is finally starting to match the pace of deployment.

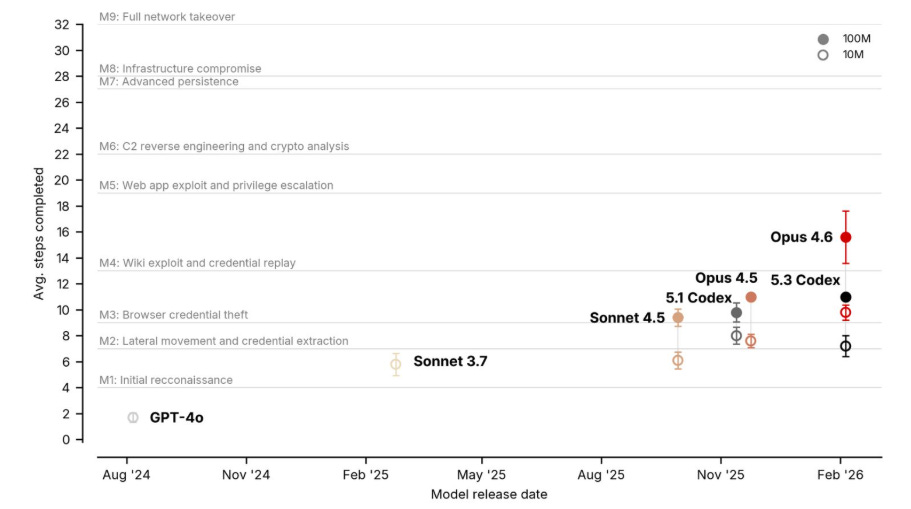

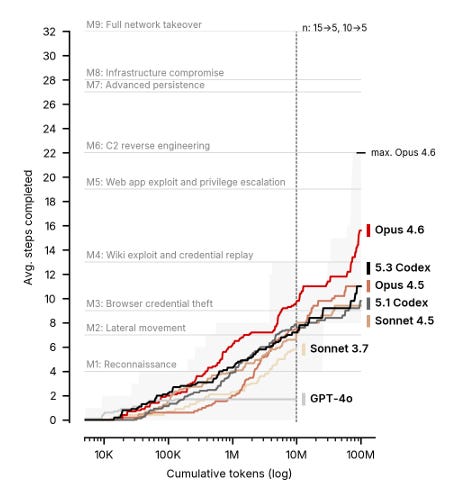

Forbes: AI Beats Most Humans in Elite Hacking Competitions

In the Cyber Apocalypse CTF with over 18,000 participants, AI agents landed in the top 10%, outperforming 90% of human entries. In the NeuroGrid competition, AI-augmented teams completed challenges at a 73% rate compared to 46% for human-only participants. At the elite tier, AI teams completed challenges several times faster than their human counterparts.

This is the offensive capability data that gives weight to Kevin Mandia’s $190 million bet on autonomous AI defense (issue #88) and Anthropic’s own warning that the gap between vulnerability discovery and exploitation is closing. When AI agents can outperform 90% of human hackers in a competition, the assumption that sophisticated attacks require sophisticated human operators is no longer valid.

CSO Online: Runtime, the New Frontier of AI Agent Security

This piece frames runtime security as the critical layer for agentic systems. Static analysis catches issues before deployment, but agents make decisions and take actions at runtime that no static analysis can predict.

The argument aligns with what I’ve been saying: you cannot secure agents solely through pre-deployment scanning. You need continuous monitoring, dynamic authorization, and runtime policy enforcement. That is exactly the kind of architecture that Cedar-based approaches (covered in issues #84, #87, #88, and #89) are designed to provide, coupled with runtime detection and response, intent analysis, and the other topics I’ve been discussing.
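
To make "runtime policy enforcement" concrete, here is a minimal Python sketch of an authorization check evaluated on every agent tool call. It is not Cedar and not any vendor's implementation; it only borrows the default-deny, forbid-overrides-permit evaluation order that Cedar documents. The `Rule` shape, the `is_authorized` function, and the prefix-based resource matching are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    effect: str     # "permit" or "forbid"
    principal: str  # agent identity, or "*" for any agent
    action: str     # tool or operation name, or "*" for any action
    resource: str   # resource prefix the rule applies to (hypothetical scheme)


def _matches(rule, principal, action, resource):
    # A rule applies when principal and action match and the resource
    # falls under the rule's prefix.
    return (
        rule.principal in ("*", principal)
        and rule.action in ("*", action)
        and resource.startswith(rule.resource)
    )


def is_authorized(rules, principal, action, resource):
    """Default deny: allow only if a permit matches and no forbid matches."""
    matched = [r for r in rules if _matches(r, principal, action, resource)]
    if any(r.effect == "forbid" for r in matched):
        return False
    return any(r.effect == "permit" for r in matched)
```

The point of the design is that the check runs at the moment of the call, with the actual principal, action, and resource in hand, rather than relying on what a pre-deployment scan predicted the agent would do.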

Cisco: Adversarial Hubness in RAG Systems

Cisco’s AI security research team continued their work on adversarial attacks against RAG systems, building on the memory poisoning research we’ve been tracking since issue #85. The concept of “adversarial hubness,” where injected documents become disproportionately influential in retrieval, represents a maturing attack technique against one of the most common AI agent architectures.
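
As a rough illustration of what "disproportionately influential in retrieval" means, the sketch below counts how often each document lands in the top-k results across a set of queries. This is a generic hubness measure, not Cisco's method; the function names and the use of plain dot-product similarity over small in-memory vectors are assumptions for the example.

```python
from collections import Counter


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def top_k(query, docs, k):
    """Return the ids of the k documents with the highest dot-product score."""
    ranked = sorted(docs, key=lambda doc_id: dot(query, docs[doc_id]), reverse=True)
    return ranked[:k]


def hub_counts(queries, docs, k=1):
    """Count how often each document appears in the top-k across all queries."""
    counts = Counter()
    for query in queries:
        counts.update(top_k(query, docs, k))
    return counts
```

A document whose count is far above the rest, across queries that have little to do with each other, is a candidate hub and worth inspecting before it reaches an agent's context window.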

Caleb Sima: The Data Security Industry Has a Context Problem

Caleb Sima’s analysis of the data security industry’s context problem resonates with a theme I’ve been emphasizing: security tools that generate findings without helping teams understand which ones matter are part of the problem, not the solution. In the agentic era, context becomes even more critical because agents are making autonomous decisions about data access, tool invocation, and action execution. Without context, you cannot distinguish between authorized and unauthorized agent behavior.

UK AI Security Institute Research

The UK AISI continued their research into AI agent offensive capabilities, contributing to the growing evidence base we’ve been tracking. Combined with the CTF competition results and Anthropic’s Firefox audit, the trajectory is clear: AI agents are already capable security tools, and their capabilities are improving faster than governance frameworks can adapt.

Arxiv: New Research on AI Agent Security

New academic research continues to advance our understanding of AI agent security threats and defenses. The research community’s output on agentic security has been remarkable, and these papers provide the theoretical foundation for the practical tools and frameworks the industry is building.

AppSec

Google M-Trends 2026: Access Handoff in 22 Seconds

Google Mandiant’s annual report, based on over 500,000 hours of frontline investigations, showed that the time between initial access and handoff to secondary threat groups has collapsed from 8 hours in 2022 to 22 seconds in 2025. Let that sink in. Attackers are automating access brokerage to the point where it is essentially instantaneous.

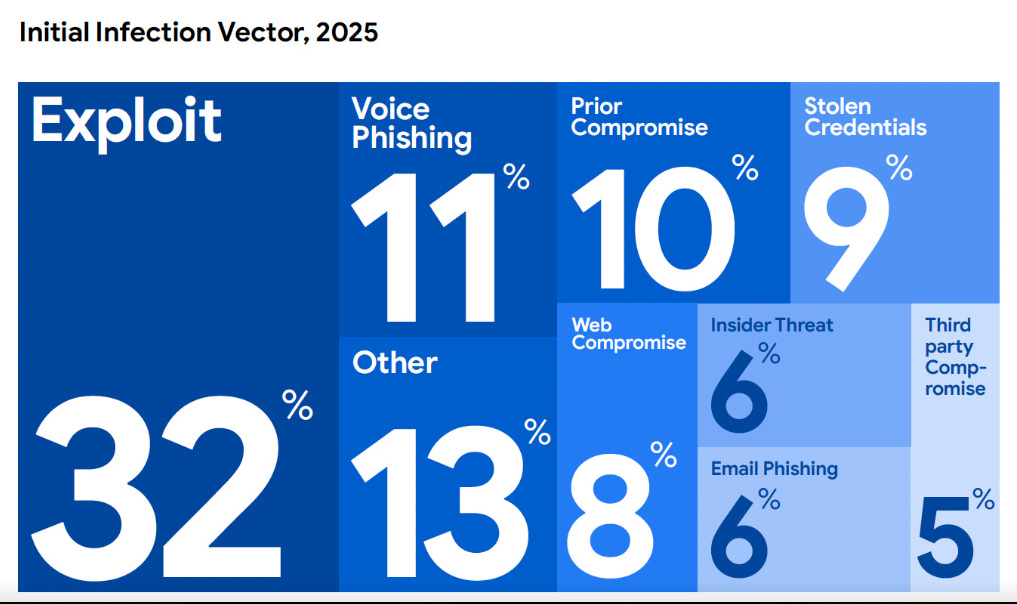

Among the other notable findings, global median dwell time rose to 14 days from 11, reflecting more sophisticated persistence. Voice phishing climbed to 11% of initial infections (second only to exploits at 32%), while email phishing dropped to 6%. Mandiant observed “recovery denial” ransomware tactics targeting backup infrastructure, identity services, and virtualization management planes. The mean time to exploit vulnerabilities dropped to an estimated negative seven days, meaning exploitation routinely occurs before patches exist.

The malware landscape expanded with 714 new families identified (up from 632), and SaaS/cloud compromise via long-lived OAuth tokens and session cookies was a persistent theme. Mandiant’s recommendation is to treat routine malware alerts as high-priority indicators of imminent secondary intrusion. When handoff happens in 22 seconds, your triage window has effectively disappeared.

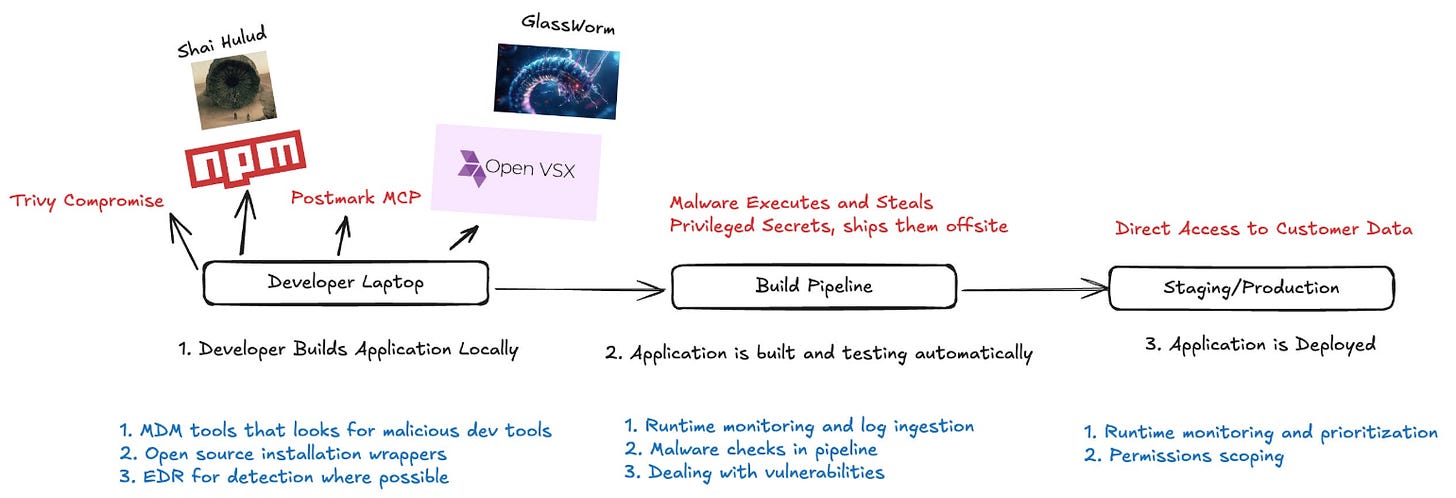

TeamPCP Compromises Trivy and Backdoors LiteLLM: The Week Supply Chain Security Broke

This is the biggest story of the week. TeamPCP, the same threat actor behind several earlier incidents, escalated from exploiting GitHub Actions to a full-spectrum supply chain attack that represents one of the most sophisticated campaigns the open-source ecosystem has ever seen.

The timeline is worth understanding in full. On March 19, TeamPCP used credentials it had retained from its earlier access to simultaneously compromise Trivy’s core binary, the trivy-action GitHub Action, and the setup-trivy GitHub Action. They hijacked 76 of 77 tags in trivy-action, meaning every CI/CD pipeline referencing those actions by tag began running the attacker’s code. The malicious Trivy binary (v0.69.4) was published to GitHub Releases and Docker Hub.

Then came the defacement. TeamPCP renamed all 44 repositories in Aqua’s “aquasec-com” GitHub organization with “tpcp-docs-” prefixes in a scripted two-minute burst. All descriptions were changed to “TeamPCP Owns Aqua Security.”

But the real escalation came on March 24 when TeamPCP used the PyPI publish token stolen from Trivy’s CI/CD pipeline to backdoor LiteLLM, the Python library with 40,000+ GitHub stars that serves as a unified interface for interacting with LLMs. Versions 1.82.7 and 1.82.8 contained a multi-stage credential stealer targeting environment variables, API keys, SSH keys, cloud credentials, Kubernetes configs, and cryptocurrency wallets. The .pth file technique in version 1.82.8 fires on every Python interpreter startup with no import required.
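
The .pth mechanism is worth spelling out. Python's `site` module reads `.pth` files in site-packages at interpreter startup and executes any line that begins with `import` followed by a space or tab. The sketch below is one way to list such lines for manual review; the function name `find_executable_pth_lines` is hypothetical, and a hit is not proof of compromise, since some legitimate packages use the same mechanism.

```python
from pathlib import Path


def find_executable_pth_lines(directory):
    """Return (file name, line) pairs for .pth lines that Python would execute."""
    hits = []
    for pth in sorted(Path(directory).glob("*.pth")):
        for line in pth.read_text(errors="replace").splitlines():
            # The site module executes lines starting with "import" plus a
            # space or tab; other non-comment lines are treated as path entries.
            if line.startswith(("import ", "import\t")):
                hits.append((pth.name, line.strip()))
    return hits
```

Running it against each directory returned by `site.getsitepackages()` gives a short list to eyeball. It only inspects what is on disk at the time of the check.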

The discovery is itself a story about the agentic era. Callum McMahon at FutureSearch found the attack because his Cursor IDE pulled in the malicious package through an MCP plugin. An AI coding tool became the vector for a supply chain attack against AI infrastructure.

As of this writing, the entire LiteLLM package has been quarantined on PyPI. Organizations that installed versions 1.82.7 or 1.82.8 should assume full credential compromise. This is exactly the kind of cascading supply chain failure Tony Turner and I warned about in Software Transparency: a single compromised credential propagating through interconnected ecosystems, from a security scanner to a package registry to an AI infrastructure library.
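
For a quick first pass on a single environment, the check below compares the installed LiteLLM version against the two releases named above. It is a minimal sketch using the standard library's `importlib.metadata`; the function names are hypothetical, and it only sees the environment it runs in.

```python
from importlib import metadata

# Releases named in this issue as backdoored.
COMPROMISED_LITELLM = {"1.82.7", "1.82.8"}


def is_compromised(version, bad_versions=COMPROMISED_LITELLM):
    """True when a version string matches a known-bad release."""
    return version.strip() in bad_versions


def installed_version(package):
    """Return the installed version of a package, or None if it is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def check_litellm():
    """Summarize this environment's LiteLLM exposure as a short message."""
    version = installed_version("litellm")
    if version is None:
        return "litellm is not installed in this environment"
    if is_compromised(version):
        return f"litellm {version} is a known-bad release: assume credential compromise"
    return f"litellm {version} is not one of the listed releases"
```

A clean result here does not show that a bad version was never installed. Build logs, lockfile history, and container image layers are where that question actually gets answered.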

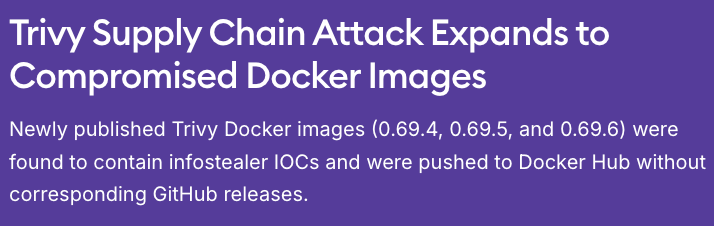

Socket: Trivy Docker Images Compromised

Socket’s analysis provided additional detail on the Docker Hub dimension of the Trivy compromise. Between March 19 and March 23, anyone who pulled Trivy images with the 0.69.4, 0.69.5, 0.69.6, or latest tags may have had their CI/CD secrets, cloud credentials, SSH keys, and Docker configurations compromised. Images 0.69.5 and 0.69.6 were pushed without corresponding GitHub releases, a red flag that automated detection should have caught.
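
The "image tag with no matching release" signal is simple to express once you have both lists. The sketch below assumes the image tags and release tags have already been fetched from the registry and the source host (that part is not shown), and the function name `tags_without_release` is hypothetical.

```python
def tags_without_release(image_tags, release_tags):
    """Return image tags that have no matching source release, sorted."""
    # Release tags often carry a leading "v" (v0.69.4) while image tags do not.
    released = {tag.lstrip("v") for tag in release_tags}
    # Floating tags such as "latest" never map to a release, so skip them.
    return sorted(
        tag
        for tag in image_tags
        if tag != "latest" and tag.lstrip("v") not in released
    )
```

Fed the tags from this incident, with v0.69.4 as the only matching GitHub release, the function returns 0.69.5 and 0.69.6.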

Latio: How to Know If the Trivy Supply Chain Attack Affected You

James Berthoty published practical guidance for organizations trying to determine their exposure. For security teams in triage mode, this is actionable: check your CI/CD logs, audit your GitHub Actions references, and verify that you are pinning to SHA hashes rather than mutable tags. If you ran a compromised Trivy image with the Docker socket mounted, treat the entire host as compromised.
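
The SHA-pinning audit can be approximated with a short script. This is a sketch, not Latio's tooling: it scans workflow text for `uses: owner/repo@ref` entries and reports any ref that is not a full 40-character commit SHA. The regular expressions and the name `unpinned_actions` are assumptions, and a real audit would parse the YAML and also handle `docker://` references.

```python
import re

# Matches "uses: owner/repo@ref" or "uses: owner/repo/path@ref" in workflow YAML.
USES_RE = re.compile(
    r"^\s*(?:-\s*)?uses:\s*['\"]?([^\s@'\"]+)@([^\s'\"#]+)", re.MULTILINE
)
# A full-length commit SHA, the only ref form that cannot be moved later.
FULL_SHA_RE = re.compile(r"^[0-9a-f]{40}$")


def unpinned_actions(workflow_text):
    """Return (action, ref) pairs whose ref is not a full 40-character commit SHA."""
    return [
        (action, ref)
        for action, ref in USES_RE.findall(workflow_text)
        if not FULL_SHA_RE.match(ref)
    ]
```

Tags and branch names are mutable pointers, which is how hijacking 76 tags redirected every pipeline that referenced them. A commit SHA refers to fixed content, so a pinned workflow keeps running the code that was reviewed.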

Pluto Security: Analyzing the Supply Chain Attack

Pluto Security’s technical analysis added depth to the understanding of how TeamPCP operated. The forensic breakdown of the attack chain from initial credential theft through lateral movement to downstream compromise is a valuable reference for incident responders and security architects.

Endor Labs: TeamPCP Isn’t Done

Endor Labs warned that TeamPCP’s campaign is expanding, not contracting. After Trivy and LiteLLM, the threat actor has been observed moving into additional ecosystems. Combined with the PhantomRaven campaign I covered in issue #89 (88 malicious npm packages), the supply chain threat landscape for AI infrastructure is at an unprecedented level of activity.

FutureSearch: LiteLLM PyPI Supply Chain Attack Analysis

FutureSearch’s Callum McMahon, who discovered the LiteLLM compromise, published a detailed account of how the attack was found and what the payload does. The three-layer architecture of the malware, with separate modules for launching, reconnaissance/credential harvesting, and persistence/remote control, demonstrates a level of sophistication that should concern every organization using open-source AI tooling.

The Software Security Market Is Broken Right Now

Ken Johnson’s analysis of the software security market cuts to the heart of a transition I’ve been watching closely. Traditional AppSec tools were designed for a world where humans write code at human speed. When 87% of AI-generated PRs contain at least one vulnerability (DryRun’s data from issue #89), the scanning, triaging, and remediation workflows built for human-paced development simply cannot keep up. Johnson argues the market needs to be rebuilt around AI-native workflows, and I agree.

OpenAI: Why Codex Security Doesn’t Include SAST

OpenAI’s explanation of why they chose reasoning-based analysis over traditional SAST for Codex Security continues to generate industry discussion. The argument that pattern matching produces high false-positive rates while missing contextual understanding of real-world impact aligns with the broader shift toward AI-native security tooling. As I covered in issues #88 and #89, both OpenAI and Anthropic have concluded that reasoning about code produces better outcomes than signature matching.

Boring AppSec: The Role of AppSec Engineers

This piece examines how the role of AppSec engineers is evolving as AI reshapes software development. The shift from “find and report vulnerabilities” to “architect secure AI-native development workflows” is underway, and practitioners who adapt will be more valuable than ever. This is consistent with the Trail of Bits model I covered in issue #88: AI does not replace security professionals, it amplifies their capability.

VulnCheck: n8n Needs More KEV

VulnCheck’s analysis of n8n vulnerabilities and the CISA KEV catalog highlights the ongoing challenge of vulnerability prioritization. As I wrote in Effective Vulnerability Management, the CVE volume continues to accelerate (Jerry Gamblin’s data projects 55,000+ in 2026), and the gap between what is exploited in the wild and what appears in prioritization catalogs like KEV remains significant.

GSA’s New CUI Security Requirements for Government Contractors

Holland & Knight published guidance on GSA’s new Controlled Unclassified Information security requirements. For government contractors navigating the evolving compliance landscape, this provides practical guidance on what is changing and how to prepare. As AI agents increasingly handle CUI in government workflows, the intersection of compliance requirements and agent governance will become more complex.

Praetorian: AI-Driven Offensive Security

Praetorian expanded on their CVE Researcher work that I covered in issue #87, demonstrating how AI agents can automate offensive security workflows. The productivity gains are real: research that consumed 4 to 8 hours completes in under 30 minutes. For defenders, this represents the kind of force multiplication that can help close the gap between attacker speed and defender response time.

Christian Posta: AAuth Full Demo

Christian Posta’s full working demo of AAuth bridges the gap between the theoretical framework for agent identity (which I covered in issues #85 and #88) and practical implementation. For organizations trying to implement agent authentication and authorization, this demo provides a concrete starting point.

Final Thoughts

The TeamPCP campaign that unfolded this week should be a watershed moment for the industry. A single threat actor compromised a widely used security scanner, pivoted through stolen CI/CD credentials to backdoor one of the most critical Python libraries in the AI ecosystem, and created a blast radius that extends to every organization that depends on LiteLLM or ran a compromised Trivy image.

The attack was discovered not by a SAST tool or a vulnerability scanner, but because a developer’s Cursor IDE pulled in the malicious package through an MCP plugin. An AI coding tool became both the vector and the detection mechanism.

This is the supply chain risk I have been writing about for years. In Software Transparency, Tony Turner and I argued that organizations need the ability to rapidly map their dependencies when a supplier event becomes operationally urgent. This week tested that thesis in the most direct way possible. How many organizations could answer, within hours, whether they had exposure to Trivy v0.69.4 or LiteLLM 1.82.7? Based on what I’ve seen, not nearly enough.

But there are also reasons for optimism. The CSA’s launch of CSAI and its mission to secure the Agentic Control Plane represents institutional recognition that agent security is not a niche concern but a foundational requirement. Corridor’s $25 million raise, backed by people from Anthropic, OpenAI, and Cursor, signals that the builders of AI coding tools recognize the security gap in their own ecosystem. OpenAI’s prompt injection defense framework, Cursor’s open-source security agents, and the Cedar policy language all represent real progress on the defensive side.

The M-Trends data showing 22-second access handoff and the CTF results showing AI outperforming 90% of human hackers both tell us the same thing: the speed of attack has outpaced the speed of human response. Automation is no longer optional. The organizations that will thrive in this environment are those that deploy AI defensively at the same pace adversaries deploy it offensively, while building the governance frameworks to ensure those defensive agents operate within appropriate boundaries.

Ninety issues in, and the pace has never been faster.

Stay resilient.
