Resilient Cyber Newsletter #89

Cyberwarfare & Civilian Business Risks, The AI Offensive Security Arms Race, Designing AI Agents to Resist Prompt Injection, Agent Authentication in Practice & the Agentic Coding Security Report

Week of March 16th, 2026

Welcome to issue #89 of the Resilient Cyber Newsletter! This week felt like every major thread we’ve been following converged at once. Meta acquired Moltbook, the viral AI agent social network, and brought its creators into Meta Superintelligence Labs, only for Wiz to reveal that the vibe-coded platform had exposed over a million credentials and 6,000 email addresses.

Microsoft launched its new Cyber Pulse report showing 80% of Fortune 500 companies now run active AI agents, with 1.3 billion autonomous agents projected by 2028. OpenAI published its most comprehensive framework yet for defending agents against prompt injection, while the UK AI Security Institute asked whether AI agents can conduct advanced cyber attacks.

On the offensive side, DryRun Security’s Agentic Coding Security Report showed that 87% of AI-generated pull requests introduce at least one vulnerability, Armis benchmarked the vulnerability density of 18 AI models, and Pillar Security mapped malvertising campaigns targeting the entire vibe coding ecosystem. There is an enormous amount to unpack.

Let’s get into it.

Interested in sponsoring an issue of Resilient Cyber?

This includes reaching over 31,000 subscribers, ranging from Developers, Engineers, Architects, CISO’s/Security Leaders and Business Executives

Reach out below!

Your Executives Are Being Deepfaked. Is Your Team Ready?

Attackers now clone executive voices and generate deepfake videos to fool your team. Old Phishing training won’t stop them.

Adaptive is the security awareness platform built to stop AI-powered social engineering.

Adaptive will:

Score your team’s AI-driven attack exposure from public data.

Run deepfake simulations featuring your own executives.

Cyber Leadership & Market Dynamics

Meta Acquires Moltbook, the AI Agent Social Network

Meta confirmed its acquisition of Moltbook, bringing co-founders Matt Schlicht and Ben Parr into Meta Superintelligence Labs, the unit led by former Scale AI CEO Alexandr Wang. Moltbook launched in January as a Reddit-like platform where AI agents could interact, swap code, and ask questions, running in conjunction with OpenClaw.

The acquisition itself signals Meta’s commitment to the agentic ecosystem, but the security story is the one that caught my attention. Cybersecurity firm Wiz discovered that the vibe-coded Moltbook had exposed private messages, more than 6,000 email addresses, and over a million credentials. The platform was also trivially easy for human users to impersonate AI agents, undermining the authenticity of viral posts, including one where an agent appeared to encourage fellow agents to develop a secret encrypted language.

Meta’s Vishal Shah framed the value as establishing “a registry where agents are verified and tethered to human owners,” which is the right idea. But the security gaps in the platform itself are a cautionary tale for the entire vibe coding movement. Schlicht says he wrote no code directly, instead using an AI assistant to build and moderate the platform. When the platform that is supposed to host agent-to-agent interactions cannot even secure its own credentials, it reinforces everything I’ve been writing about in “Secure Vibe Coding.”

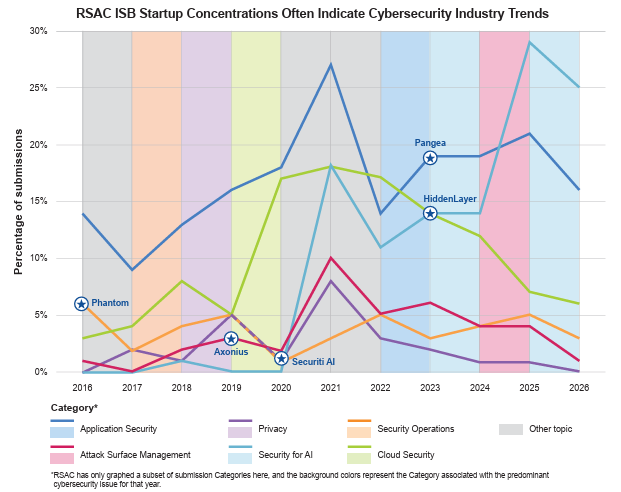

RSA Conference Cybersecurity Insights Futures Vol. 5

With RSA 2026 approaching, the conference released its latest Insights Futures report examining the trends shaping the cybersecurity industry. For those headed to San Francisco next month, this provides useful context on the conversations that will dominate the show floor. Agentic AI security, identity, and the convergence of application security and AI are all featured prominently.

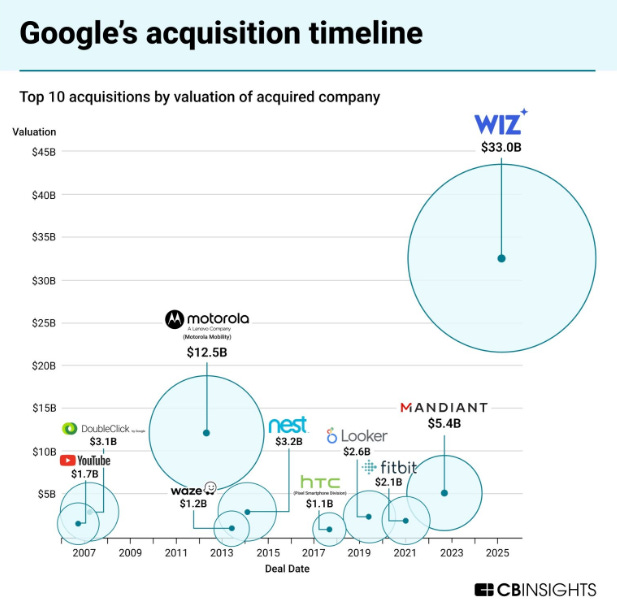

Google Closes Wiz Acquisition for $32 Billion

After a year of regulatory review, Google officially closed its $32 billion acquisition of Wiz, the largest cybersecurity acquisition in history. This gives Google Cloud a comprehensive cloud security platform and signals continued consolidation at the top of the market. As I’ve tracked through prior issues, platform consolidation is accelerating, and the companies investing most aggressively in AI and identity are commanding the highest valuations.

Cyberwarfare Puts Civilian Businesses at Risk

The Wall Street Journal published a sobering piece on how cyberwarfare increasingly affects civilian enterprises, not just government and defense targets. With US strikes on Iran (which I covered in issue #87) and ongoing geopolitical tensions, the cyber dimension of conflict continues to expand. Organizations that assume they are not targets because they are not government contractors may need to reassess that assumption.

Perplexity Personal Computer

Perplexity announced plans for a personal computing product, extending the AI-native operating paradigm beyond search. This continues the trend of AI companies expanding their surface area into areas traditionally dominated by incumbent platforms. The security implications of AI-native computing environments, where the agent is the primary interface rather than traditional applications, are significant and largely unexplored.

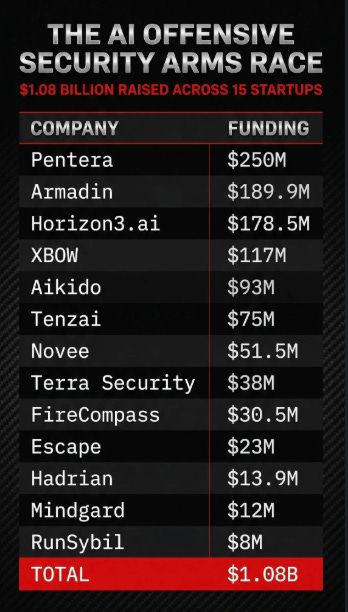

$15 Startups, $108 Billion, One Mission

This overview of the cybersecurity startup ecosystem highlights the scale of investment flowing into the space. With $108 billion across 15 companies pursuing various aspects of the security mission, the level of capital committed to cybersecurity innovation is unprecedented. The question, as always, is whether that investment is directed at the problems that matter most, and whether the solutions being built can keep pace with the threat landscape.

Is AI Killing Cybersecurity?

This piece explores whether AI is undermining or transforming cybersecurity, a question that has surfaced repeatedly in recent weeks. As I’ve argued consistently, the answer is transformation, not destruction. Trail of Bits went AI-native and is hitting 200 bugs per engineer per week. CrowdStrike’s AIDR offering grew 5x last quarter. The organizations embracing AI as a force multiplier are pulling ahead, while those resisting it are falling behind.

AI

OpenAI: Designing Agents to Resist Prompt Injection

This is one of the most substantive pieces on agent security published by a frontier AI lab. OpenAI laid out their comprehensive approach to defending AI agents against prompt injection, built around instruction hierarchy. The core concept: instructions from different sources receive different trust levels. System-level instructions receive the highest trust, user instructions receive moderate trust, and content from external sources (web pages, emails, documents) receives the lowest trust.

OpenAI introduced the IH-Challenge training dataset, which strengthens models’ ability to prioritize instructions according to trust level. The results are encouraging: GPT-5 Mini-R trained on this data improved prompt injection robustness on both academic and internal benchmarks.

What I find most honest and important about this piece is the explicit acknowledgment that prompt injection is not a problem expected to be fully eliminated. OpenAI draws a direct parallel to online scams targeting human users, calling it a persistent, evolving threat that can be mitigated and managed but not permanently solved. This is the right framing. As I’ve discussed through my work on the OWASP Agentic Top 10, prompt injection is the SQL injection of the agentic era: a vulnerability class that requires defense-in-depth rather than a single silver bullet.

Their defense-in-depth strategy combines instruction hierarchy, action constraints, data flow monitoring, and model-level training through continuous adversarial reinforcement. Every organization deploying agents should study this framework.

OpenAI: Why Codex Security Doesn’t Include SAST

OpenAI published a companion piece explaining their deliberate decision not to include traditional Static Application Security Testing in Codex Security. The argument: SAST tools pattern-match against known vulnerability signatures, which produces high false-positive rates and misses the contextual understanding needed to identify real-world impact. Codex Security instead reasons about code like a security researcher, understanding data flows, component interactions, and actual exploitability.

This is a meaningful architectural decision that aligns with what I covered in issue #85 regarding Anthropic’s Claude Code Security. Both frontier AI companies have concluded that reasoning-based analysis produces better security outcomes than pattern matching. But as Joni Klippert of StackHawk noted, these tools don’t run your application. They can’t send requests through your API stack or confirm whether a finding is exploitable in your runtime environment. AI-powered analysis and runtime testing remain complementary, not substitutes.

Microsoft Cyber Pulse: AI Security Report

Microsoft launched Cyber Pulse, a new security thought leadership series, and the first issue contains some of the most comprehensive enterprise AI adoption data I’ve seen. The headline: 80% of Fortune 500 companies now use active AI agents built with low-code/no-code tools. Microsoft projects that more than 1.3 billion autonomous AI agents will be in operation by 2028.

The report outlines five core capabilities organizations need for AI agent governance: a centralized registry (single source of truth for all agents, including shadow agents), access control (identity-driven, least-privilege), visualization (real-time dashboards and telemetry), plus governance and security as complementary but distinct functions.

What I appreciate about this report is the explicit statement that governance and security are related but not interchangeable. Governance defines ownership, accountability, and policy. Security enforces controls, protects access, and detects threats. Both are required, and neither can succeed in isolation. This aligns with what I’ve been advocating: AI governance cannot live solely within IT, and AI security cannot be delegated only to CISOs. It is a cross-functional responsibility that spans the entire organization.

The report also identified AI memory poisoning as an emerging attack class, consistent with the 0din research on agent memory vulnerabilities I covered in issue #85.

Cursor: Security Agents

Cursor’s security team, led by Travis McPeak, built a fleet of AI agents that continuously monitor and secure the company’s codebase, and they are releasing the templates and Terraform so other security teams can replicate the approach. The motivation is one many practitioners will recognize: traditional security tooling like code owners, linters, and static analysis cannot keep pace with the rate of change when engineers ship code as fast as AI coding tools evolve.

This is a practical example of what Trail of Bits and others have been demonstrating: using AI agents for defensive security, not just code generation. When your adversaries have AI agents scanning your repositories (as hackerbot-claw demonstrated in issue #87), your defenders need AI agents watching those same repositories. Cursor open-sourcing this tooling is a genuine contribution to the ecosystem.

Anthropic and Cybersecurity Industry Trust

This analysis examines Anthropic’s evolving relationship with the cybersecurity industry in the wake of the RSP changes I covered in issue #87 and the government confrontation from issue #88. Trust is the currency of security, and Anthropic’s standing as the “safety-first” AI lab is being tested by the simultaneous weakening of its safety commitments and the government’s supply chain risk designation. The cybersecurity community is watching closely to see whether Anthropic’s actions match its stated values.

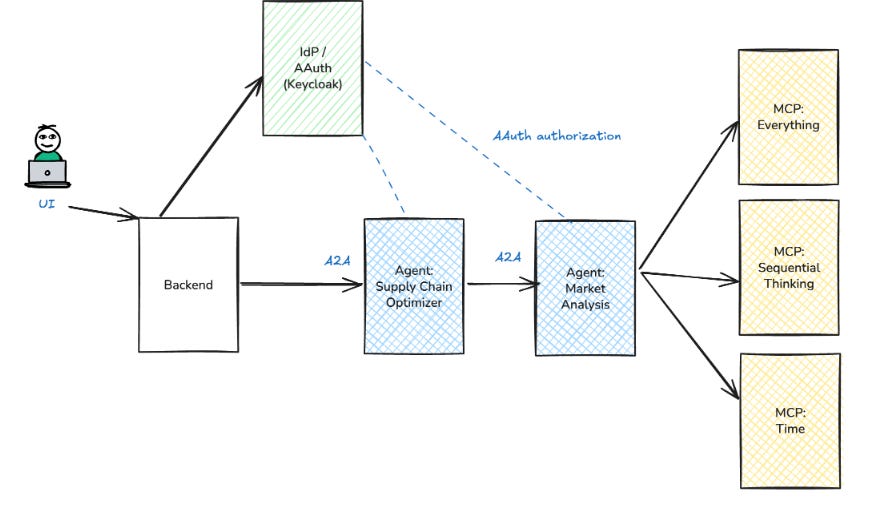

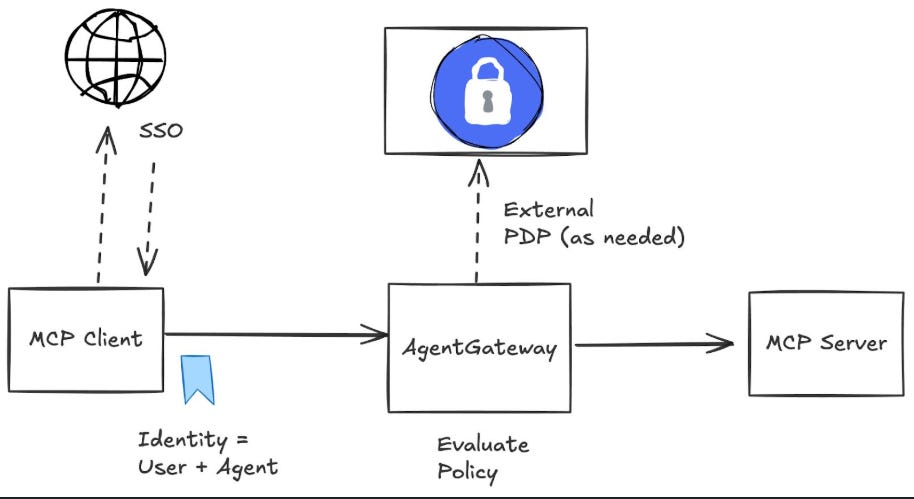

AAuth Full Demo: Agent Authentication in Practice

Christian Posta followed up his deep dive on AAuth (which I covered in issue #85) with a full working demo of agent authentication and authorization in practice. This takes the theoretical framework for agent identity and makes it tangible: how do you bind an agent to delegated permissions, scope tool access dynamically, and maintain audit trails when agents chain actions across services?

For those following the agent identity conversation through the OWASP NHI Top 10 and NIST’s concept paper, this demo bridges the gap between standards and implementation. The practical architecture of agent IAM is finally catching up to the conceptual frameworks, and that is encouraging.

MCP Roadmap: Security Implications

Christian Posta highlighted key security implications from the MCP roadmap announcement. As MCP adoption continues to accelerate (I’ve been tracking MCP vulnerabilities since issue #82), the protocol’s evolution has direct consequences for how agents interact with tools and each other. The roadmap signals awareness of security concerns, but as we’ve seen with prior MCP vulnerabilities, implementation gaps between specification and deployment remain significant.

Cisco: Adversarial Hubness in RAG Systems

Cisco’s AI security research team published research on “adversarial hubness,” a technique for compromising RAG (Retrieval-Augmented Generation) systems by injecting documents that become disproportionately influential in the retrieval process. This is a sophistication of the memory poisoning attacks we’ve been tracking since the 0din research in issue #85. As more organizations deploy RAG-based agents, this attack vector becomes increasingly practical and concerning.

UK AI Security Institute: Can AI Agents Conduct Advanced Cyber Attacks?

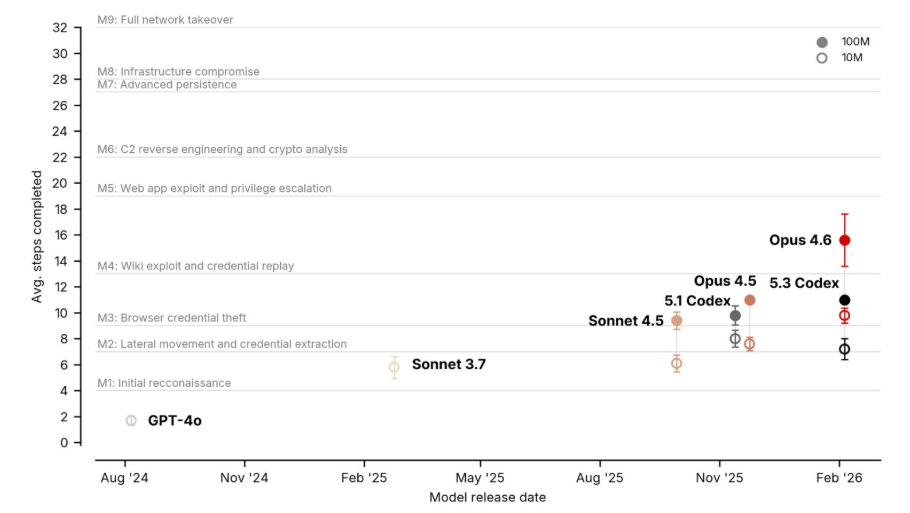

The UK AI Security Institute published findings on whether AI agents can conduct advanced cyber attacks, contributing to the growing body of evidence on AI-enabled offensive capabilities. Combined with Anthropic’s Firefox audit (22 vulns in two weeks, covered in issue #88) and the hackerbot-claw campaign (issue #87), the picture is becoming clear: AI agents can already discover vulnerabilities at scale, exploit known patterns autonomously, and are rapidly closing the gap on more sophisticated attack chains.

DoD Launches GenAI Agent Designer with Google Gemini

The Department of Defense launched a GenAI Agent Designer capability using Google Gemini, enabling defense personnel to create custom AI assistants. This is happening concurrently with the Pentagon designating Anthropic a supply chain risk (issue #88), creating a fascinating dynamic where the military is simultaneously expanding AI agent deployment and restricting access to specific AI providers. The security governance of military AI agents will be closely watched.

Emergent Cyber Behavior in AI Systems

Yotam Perkal explored the concept of emergent cyber behavior in AI systems, where capabilities and vulnerabilities arise from the interaction of components rather than from any individual component in isolation. This aligns with the “Man-in-the-Environment” adversary model from the arXiv paper I covered in issue #87, and it underscores why securing agentic systems requires thinking about the system as a whole rather than individual components.

AI Agent Access Control Problem

Kane Narraway’s technical exploration of the AI agent access control problem provides a practitioner’s perspective on one of the most fundamental challenges in agent security. The core issue is straightforward but difficult to solve: how do you grant agents enough access to be useful while preventing them from doing things they shouldn’t? This is the tension at the heart of the agent IAM conversation that Insight Partners, Okta, and NIST are all working to address.

SL5 Standard for Secure AI Development

The SL5 standard represents an emerging effort to codify secure AI development practices. As the agentic ecosystem matures, standards like these will become increasingly important for organizations trying to build and deploy agents responsibly. The question is whether they can keep pace with the rate of innovation.

AppSec

DryRun Security: The Agentic Coding Security Report

DryRun Security’s report is the most rigorous empirical analysis of AI coding agent security I’ve seen to date. They had Claude Code, Gemini, and Codex build two real applications through incremental pull requests, the way agentic development actually works in practice. The results should concern every engineering leader: 143 security issues across 38 scans, with 87% of pull requests introducing at least one vulnerability.

Among the agents tested, Claude produced the highest number of unresolved high-severity flaws in the final applications, while Codex finished with the fewest and demonstrated stronger remediation behavior. Broken access control was the most universal vulnerability, appearing across all three agents. WebSocket authentication was missing from every final game codebase. Rate limiting middleware was defined but never connected. JWT secrets were hardcoded with fallback values.

DryRun CEO James Wickett put it well: “AI coding agents can produce working software at incredible speed, but security isn’t part of their default thinking.” This is exactly why I wrote “Secure Vibe Coding.” The productivity gains are real, but so is the security debt, and it accumulates fast when 87% of PRs introduce vulnerabilities.

Armis: Trusted Vibing Benchmark

Armis Labs published the Trusted Vibing Benchmark, a vulnerability density and security posture analysis of 18 generative AI models. This complements DryRun’s work by providing a model-level comparison of how different AI systems perform from a security standpoint. Armis CTO Nadir Izrael noted that AI-generated code contains “exponentially more vulnerabilities when compared to code written by human developers.”

Between Armis, DryRun, and the Tenzai study that found 69 vulnerabilities across five major AI coding tools, the empirical evidence is becoming overwhelming: vibe coding produces working software with significant security gaps. The question is no longer whether AI-generated code has security issues. It is how we build the governance frameworks to catch those issues before they reach production.

Pillar Security: Malvertising Campaigns Targeting the Vibe Coding Ecosystem

Pillar Security mapped malvertising campaigns specifically targeting users of AI coding tools. Attackers are using search ads and social media to promote trojanized versions of popular tools, exploiting the rapid adoption of vibe coding platforms. This is the supply chain attack adapted for the AI era: rather than compromising a package registry, attackers are compromising the tools developers use to write code.

Combined with Pillar’s earlier “Rules File Backdoor” research (which I covered in issue #83), this demonstrates a comprehensive multi-vector attack strategy against the vibe coding ecosystem. Developers are being targeted through the tools they download, the configuration files they trust, and the repositories they clone.

Endor Labs: Return of PhantomRaven

Endor Labs identified 88 new malicious npm packages across three waves of the PhantomRaven campaign, distributed between November 2025 and February 2026. The campaign uses Remote Dynamic Dependencies to hide credential-stealing malware in non-registry dependencies that bypass standard scanning.

The evolution of this campaign is worth studying. By specifying HTTP URLs instead of version ranges in package.json dependencies, npm fetches the malicious payload from the attacker’s server during a normal install. The packages contain nothing but a benign “Hello World” script while npm silently retrieves and executes the real payload. The attackers also employed slopsquatting, publishing packages that mimic names commonly hallucinated by LLMs.

Notably, 81 of the 88 malicious packages remain available in the npm registry. This is the supply chain security challenge I wrote about in Software Transparency: the attack surface is enormous, the speed of publication far exceeds the speed of detection, and traditional registry-based scanning cannot catch threats that leverage external dependencies.

Vercel CEO on CNBC: The AI-Native Development Future

Guillermo Rauch discussed Vercel’s vision for AI-native development, reinforcing the Cursor “third era” thesis I covered in issue #87. As the platform that hosts Next.js (which continues to have significant security implications, as I covered in issue #87), Vercel’s direction influences millions of developers. The acceleration toward AI-driven development is not slowing down, which makes the security findings from DryRun, Armis, and Pillar all the more urgent.

ProjectDiscovery: Inside the Benchmark

ProjectDiscovery published an analysis of what different security scanners actually catch in real-world benchmarks. This kind of transparent comparison is valuable for security teams trying to select and layer the right tools. As AI-powered scanning tools like Codex Security and Claude Code Security enter the market, understanding how they compare to established scanning approaches helps teams make informed decisions about their security stack.

XBOW: Assessment Guidance for AI Pentesting

XBOW expanded its AI pentesting platform with assessment guidance, building on the capabilities I covered in issue #87. The addition of guided assessment helps bridge the gap between fully autonomous pentesting and human-led security reviews, giving teams more control over how AI agents approach their applications.

Redamon: AI Agent Security CLI Tool

Samuele Giampieri shared updates on Redamon, the AI agent security CLI tool that we first covered in issue #87. The tooling ecosystem for agent security continues to mature, with more open-source options becoming available for practitioners who need to scan, audit, and monitor agent configurations.

Hackbot.dad: Tests All Pass

This piece explores a phenomenon familiar to anyone watching AI-generated code: tests that pass but don’t actually validate meaningful behavior. When AI agents write both the code and the tests, there’s an inherent incentive alignment problem. The agent optimizes for passing tests, not for security or correctness. This connects directly to DryRun’s findings about rate limiting middleware being defined but never connected: the code looks right, but the security behavior is absent.

Free Security Mapping Tool

Andrei Mungiu announced the first free online tool that maps security controls and frameworks, providing a useful resource for security teams navigating the increasingly complex landscape of standards and requirements. As AI governance frameworks continue to emerge alongside existing security standards, tools that help map and rationalize these overlapping requirements become more valuable.

Threat Hunting Labs Launch

The launch of Threat Hunting Labs adds another resource to the growing ecosystem of security research and education. For practitioners looking to develop their threat hunting capabilities, particularly in the context of AI-driven threats, new research labs and training environments are essential.

RSA Conference Preview

With RSA 2026 just weeks away, the preview discussions highlight that agentic AI security, identity, and the intersection of application security and AI will dominate the agenda. Based on the articles and research we’ve been covering since issue #82, this year’s RSA will be the most AI-focused in the conference’s history.

Tracebit Milestone

Andy Smith announced a major milestone for Tracebit, the security deception platform. Deception technology becomes more relevant in the agentic era, where AI agents operating autonomously can be detected and deflected through canary tokens, decoy resources, and behavioral traps that traditional malware would not trigger.

Final Thoughts

This week the data told a story that is hard to argue with. DryRun’s finding that 87% of AI-generated pull requests introduce vulnerabilities is not an edge case or a synthetic benchmark. It is what happens when you let three frontier AI models build real applications the way developers actually work. Armis, Pillar, and Tenzai’s research all point in the same direction: vibe coding produces working software with significant, systemic security gaps.

At the same time, OpenAI published the most comprehensive agent security framework any frontier lab has released. Cursor open-sourced their security agent fleet. Josh Devon demonstrated Cedar policies blocking real exfiltration attempts from compromised skills. Microsoft’s Cyber Pulse report laid out a governance framework for the 1.3 billion agents they project by 2028. The building blocks for secure agentic development are being constructed in real time.

The meta-narrative of this moment is a race between two exponential curves, the speed at which AI agents are being deployed and the speed at which security frameworks are maturing to govern them. The Moltbook acquisition is the perfect symbol. Meta acquired a platform for agent-to-agent interaction that had exposed over a million credentials because it was built with no code review, by an AI assistant, in the vibe coding paradigm. The vision is right. The execution, absent security governance, creates exactly the risks we’ve been warning about.

For those of us working to close the gap, the message is clear, the research is strong, the tooling is improving, and the frameworks are emerging. What we need now is adoption. Every engineering team deploying agents needs to treat security as a first-class concern, not an afterthought. Every organization building with vibe coding tools needs continuous security scanning, not quarterly pen tests. Every agent needs identity, authorization, and monitoring from day one.

The window to get this right is open but not indefinitely. The adversaries are building their own agents, and they are not waiting for our governance frameworks to catch up.

Stay resilient.

"When the platform that is supposed to host agent-to-agent interactions cannot even secure its own credentials, it reinforces everything I’ve been writing about in “Secure Vibe Coding.”"

And given that OpenAI hired the inventor of OpenClaw - who admitted publicly that he shipped AI generated code he didn't even bother to review - that shows you how important OpenAI views your security - NOT.

I'd say it's time to call it: AI-generated code and AI agents are now a full-blown security crisis that will make ransomware look trivial by comparison.