Resilient Cyber Newsletter #85

Selling Cyber: Deal Flow and Market Signals, Gartner 2026 CIO Agenda, Exploiting IDEs via IDEsaster, Nation States Using Gemini AI, OpenAI's "Lockdown Mode" & 2026 State of Exploitation

Week of February 17, 2026

Welcome to issue #85 of the Resilient Cyber Newsletter!

This week crystallizes themes I’ve been tracking for quite a while. We saw NIST finally acknowledge what practitioners have known: AI agents need their own identity and authorization frameworks.

We watched Claude Opus 4.6 discover 500+ zero-days in open source projects, raising uncomfortable questions about whether AI defenders will outpace AI-assisted attackers, a topic I dive into with Stanislav Fort, in an episode of Resilient Cyber that will be published next week.

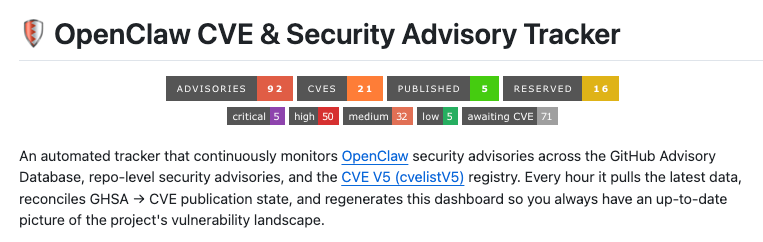

We got hard data on prompt injection from Anthropic that every vendor should be forced to publish, and OpenClaw continued its security saga with 40+ patches while Phil Windley demonstrated what policy-aware agent loops could actually look like with Cedar and Jerry Gamblin publishes his OpenClaw Security Advisories Tracker/Dashboard.

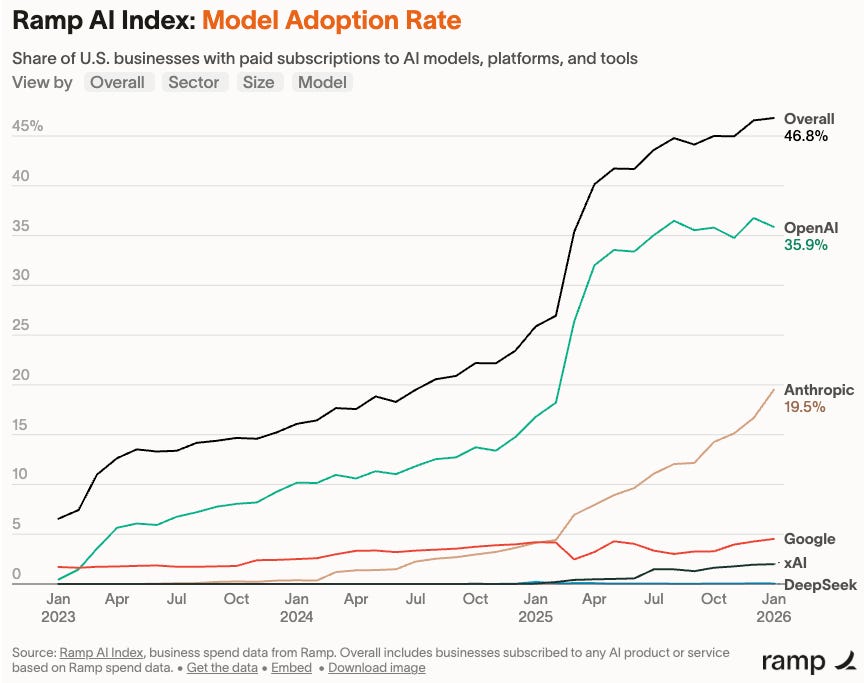

Meanwhile, the market keeps moving. One in five businesses on Ramp now pays for Anthropic, up from one in 25 a year ago. Gartner is telling CIOs to shift from GenAI pilots to agentic AI ROI, and 69% of CISOs are open to leaving their roles entirely.

If that doesn’t tell you something about the state of security leadership in 2026, I don’t know what does.

Let’s dive in.

Interested in sponsoring an issue of Resilient Cyber?

This includes reaching over 31,000 subscribers, ranging from Developers, Engineers, Architects, CISO’s/Security Leaders and Business Executives

Reach out below!

Cyber Leadership & Market Dynamics

Selling Cyber - Deal Flow and Market Signals with Momentum Cyber

In this episode of Resilient Cyber I catch up with Momentum Cyber's Founder & CEO, Eric McAlpine.

We will be unpacking 2025's M&A and capital market activities, using Momentum Cyber's 2025 Cybersecurity Almanac Report, as well as discussing some of the overlooked and untold details under the hood of cyber M&A, building world class teams and more.

Eric and I covered a lot of ground but here is a summary below!

Cybersecurity M&A hit $96B in 2025, but concentration tells the real story. Eight mega deals (Google/Wiz, CrowdStrike/CyberArk, etc.) accounted for $87B of that total across ~400 transactions. The real health of the market lives in the mid-market — exits between $100M–$500M — which is the lifeblood of cybersecurity M&A and where Momentum Cyber primarily operates.

A two-tier funding market is emerging, with Series A and B rounds compressing. Companies like Seven AI raising $130M Series A rounds signal that capital is being deployed as a strategic weapon from day one. With agentic AI enabling faster product development, founders can now go from stealth to near $10M ARR in under a year, collapsing the traditional seed → A → B → C funding playbook.

Repeat founders and “second acts” are reshaping deal dynamics. The Foundstone-to-McAfee deal ($87M) spawned CrowdStrike, Cylance, and Mandiant. A decade ago, most founders were on their first act — now proven operators with track records are commanding outsized investor confidence and concentrated bets.

“Follow the people, follow the money” to predict M&A activity. Leadership changes at strategic acquirers are the leading indicator: Thomas Kurian (Oracle → Google Cloud) preceded the Mandiant and Wiz acquisitions; Bill McDermott (SAP → ServiceNow) signaled their cyber push; Nadav Zafrir (Team8 → Check Point) immediately triggered three acquisitions. Operator DNA from acquisitive companies forecasts M&A strategy.

Strategics reclaimed the throne — 92% of disclosed M&A value in 2024 came from strategic buyers, swinging sharply back from private equity dominance in prior years. Over 1,568 unique buyers have acquired a cybersecurity company since 2010, with Cisco (28), Palo Alto (24), and CrowdStrike (now 10) leading the leaderboard.

AI security is the fastest-forming subsector in cyber history, but services still dominate M&A today. AI security saw 330+ vendors, 144 funding rounds, yet only ~10 M&A deals — while human-led security services drove ~140 acquisitions. Expect this to flip within 2–3 years as AI becomes pervasive, eventually turning the entire Cyberscape into an AI-native landscape.

Headcount trends are a hidden M&A signal. Momentum’s research found that companies with 50+ employees growing headcount pre-acquisition averaged 99% higher valuations, while shrinking companies saw a 58% decline. The 30–50 employee range is the inflection point where buyers shift from viewing a deal as an acqui-hire to a platform/land-grab acquisition.

Momentum Cyber rebuilt their Cyberscape taxonomy from scratch — going from 18 sectors to 12, while expanding from 803 to 1,000+ companies, with AI security alone housing 400+ companies across 10 subsectors. Every existing category is being “eaten” by AI — like Pac-Man clearing the board — mirroring the cloud-native transition a decade ago.

69% of CISOs Open to Career Move—Including Leaving Role Entirely

This one should concern every board member and CEO. The 2026 State of the CISO Benchmark Report from IANS Research and Artico Search found that 69% of security executives are open to making a career move within the next year, and many are looking beyond CISO roles entirely to positions like CTO, CIO, board member, or advisory work.

The drivers are sobering: 52% said current responsibilities exceed available resources. Ever since the SEC started looking at charging CISOs personally for security failures, many are reconsidering whether they want that exposure in publicly traded companies. As Erik Avakian from Info-Tech put it, “The sheer exhaustion, organizational misalignment, and a growing sense that the job, as it is currently structured in many organizations, is not sustainable” is the primary cause.

What happens when CISOs leave?

High-performing lieutenants often follow within months. Projects freeze. Strategic security programs lose momentum. The organization scrambles for interim cover without a real succession plan. This is exactly why I’ve argued that security leaders must be positioned as business enablers, not scapegoats. When we make the CISO role untenable, we all pay the price.

Gartner: The 2026 CIO Agenda

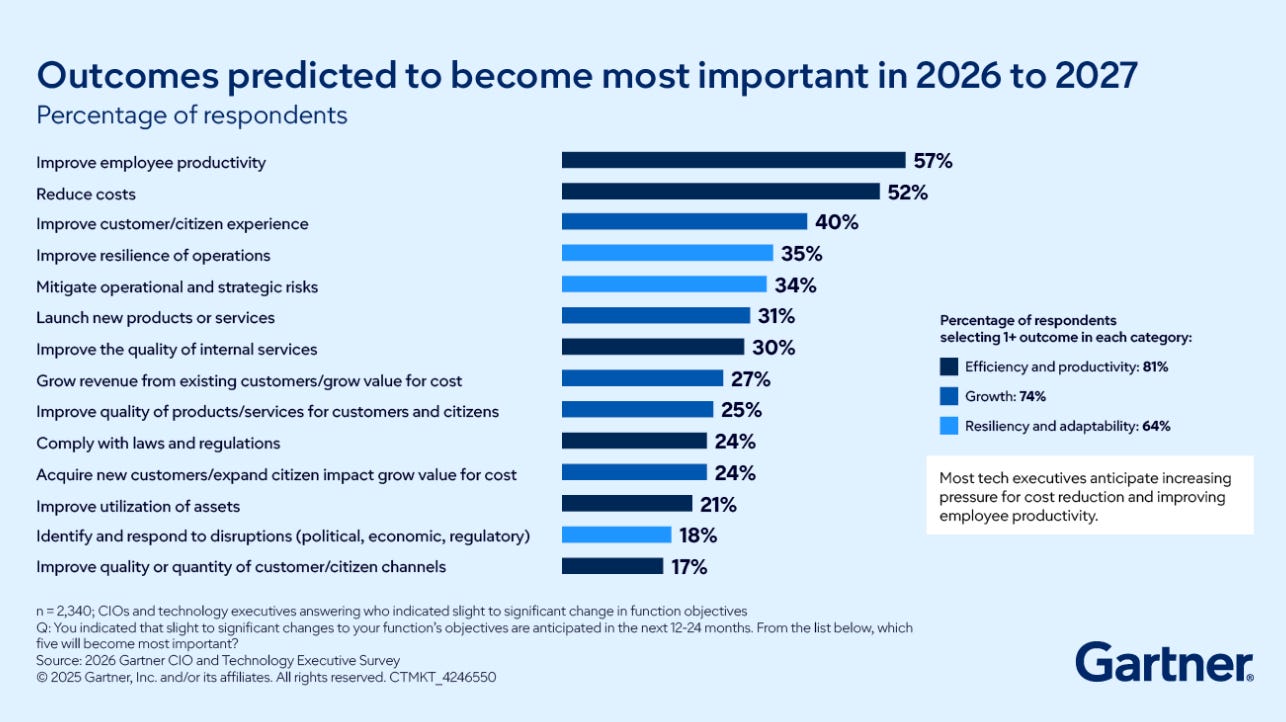

Gartner’s 2026 CIO Agenda makes it clear: “2025 was about AI pilots, discovery and experimentation. 2026 will be about delivering agentic AI ROI.” According to their survey, 94% of CIOs expect major changes to their plans within the next 24 months, yet only 48% of digital initiatives actually meet or exceed business targets.

The pivot to agentic AI is real, 64% of leaders plan to deploy agentic AI within 24 months. AI spending is surging by more than 35% year-over-year despite broader fiscal constraints. For security teams, this means we need to be ready to secure agentic deployments at scale, not just pilot protections.

One in Five Businesses on Ramp Now Pays for Anthropic

The Ramp AI Index for February 2026 shows Anthropic adoption growing from 16.7% to 19.5%, one of its largest monthly gains since tracking began. A year ago, it was one in 25 businesses. Now it’s one in five.

Here’s the insight that matters: 79% of Anthropic’s customers also pay for OpenAI. Anthropic isn’t stealing customers, it’s capturing expansion. Businesses are running multi-model strategies.

To put Anthropic’s growth in context: $1B ARR in December 2024. $4B by mid-2025. $9B by end of 2025. $14B in February 2026. That’s $1B to $14B in about 14 months. These numbers matter because they drive the investments, talent acquisition, and R&D that will shape model capabilities, including security capabilities, for years to come.

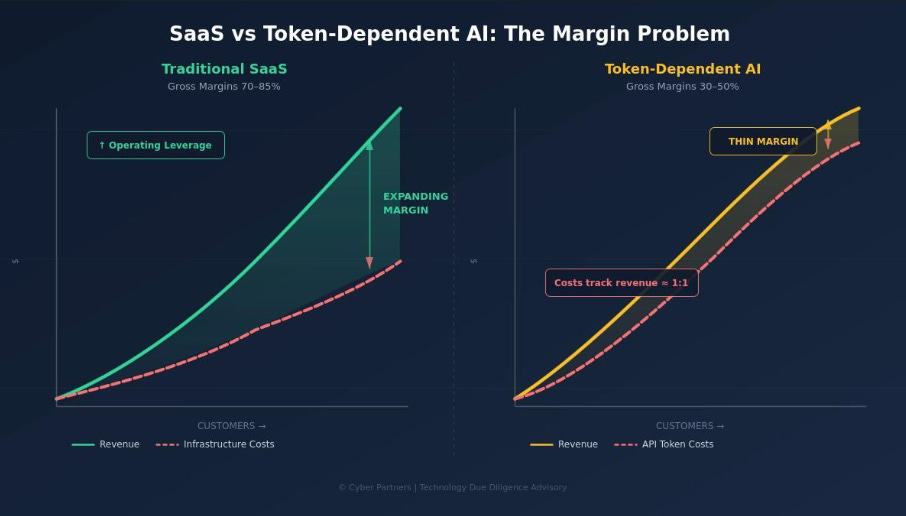

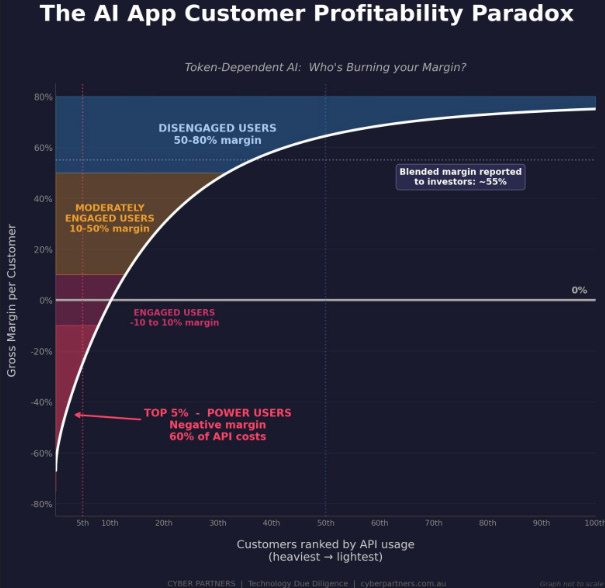

SaaS vs. Token-Dependent AI: The Margin Problem

Speaking of spending on frontier labs and tokens, I came across an excellent post showing how AI companies are not SaaS, and the paths to profitability, costs and margins look totally different.

The post came from Tony Barnes, who’s being doing due diligence for AI-enabled companies for PE and VC firms and he points out a pattern some investors need to pay attention to.

I want to share his language below, because it is important:

“A lot of early-stage companies are building products on top of external LLMs, making API calls to the likes of OpenAI, Anthropic, Google, and pricing themselves like SaaS businesses.

The problem is that their cost structure looks nothing like SaaS. In traditional SaaS, once you hit breakeven, new customer revenue drops almost entirely to the bottom line. Infrastructure costs scale sub-linearly. That's the magic of the model, and why SaaS commands the multiples it does.

In a token-dependent AI business, every new customer generates a proportional increase in API costs.

More users = more tokens = more spend. The cost curve doesn't flatten. It tracks revenue. That's not SaaS economics. That's a reseller margin. It almost takes me back to the early days of internet bandwidth reselling: buy wholesale, sell retail, hope the margin holds.

The implications for investors and acquirers that need thinking through properly:

• Gross margins far lower than the 70–80% that SaaS investors expect.

• No operating leverage: margin doesn't expand as you scale.

• Supplier dependency: your largest input cost is controlled by someone else's pricing decisions.

• Working capital pressure: API costs hit in real time (those bills come relentlessly every month) while revenue collection lags.

None of this means these businesses can't succeed. There might be a price advantage to be had when you get really big - but getting there is going to be more costly. Perhaps they should be valued as margin-over-product businesses, not SaaS platforms?

If you're investing in AI-enabled companies, the first question in due diligence should be: what percentage of your COGS is API-dependent?

The answer tells you what kind of business you're actually buying”

He had a companion post he made too, demonstrating how AI-enabled companies have different dynamics than SaaS when it comes to customers as well.

“Paradoxically, the kind of customers a sales team celebrates - entrenched, engaged, heavy users - are the ones disproportionately destroying the gross margin.”

What he is laying out is, your power users, or users really leaning into the product and capabilities eat up the most tokens, and erode your margins. This is unlike SaaS as well.

WSJ: AI Agents Are Here to Stay, Businesses Say

According to the Wall Street Journal’s coverage of enterprise AI adoption, businesses have moved past the question of whether to adopt AI agents to how quickly they can scale them. Gartner predicts 40% of enterprise applications will feature task-specific AI agents by end of 2026, up from less than 5% in 2025.

For security practitioners, this means our governance frameworks, identity controls, and monitoring capabilities need to mature faster than the adoption curves. Based on the data, I’m not confident that’s happening.

VulnCheck Announces $25M Series B Funding

One of my favorite teams recently announced their Series B funding round. If you’ve followed me, you inevitably have seen me share a lot of research from VulnCheck, Patrick Garrity and the broader team.

It’s awesome to see them continue to grow and thrive and provide so many great insights for the community around vulnerability and exploitation trends. Their announcements boasts some impressive figures too, including 557% ARR and Government ARR by 306%.

As their announcement notes, AI is helping drive vulnerability discovery and reporting to even higher levels, making the need for context and clarity more critical than ever.

Koi Announces Acquisition by Palo Alto Networks (PANW)

PANW continues its aggressive acquisition strategy, this time swooping up Koi. Koi quickly established themselves as an innovative and standout vendor around software supply chain, endpoint and AI security, often being featured in my newsletter for their awesome research and reporting.

The company is very young, and will fold into the broader PANW platform strategy around endpoint and AI security. Aside from the technical reporting and capabilities, they executed one of the best approaches to unique branding I’ve seen in recent years and quickly helped their firm stand out in a noisy market.

AI

Exploiting AI IDEs

In this episode of Resilient Cyber, we sat down with Ari Marzuk, the researcher who published "IDEsaster", A Novel Vulnerability Class in AI IDE's.

We will discussed the rise of AI-driven development and modern AI coding assistants, tools and agents, and how Ari discovered 30+ vulnerabilities impacting some of the most widely used AI coding tools and the broader risks around AI coding.

Prefer to Listen?

Please be sure to leave a rating and review, as it helps a ton!

Ari’s background in offensive security — Ari has spent the past decade in offensive security, including time with Israeli military intelligence, NSO Group, Salesforce, and currently Microsoft, with a focus on AI security for the last two to three years.

IDEsaster: a new vulnerability class — Ari’s research uncovered 30+ vulnerabilities and 24 CVEs across AI-powered IDEs, revealing not just individual bugs but an entirely new vulnerability class rooted in the shared base IDE layer that tools like Cursor, Copilot, and others are built on.

“Secure for AI” as a design principle — Ari argues that legacy IDEs were never built with autonomous AI agents in mind, and that the same gap likely exists across CI/CD pipelines, cloud environments, and collaboration tools as organizations race to bolt on AI capabilities.

Low barrier to exploitation — The vulnerabilities Ari found don’t require nation-state sophistication to exploit; techniques like remote JSON schema exfiltration can be carried out with relatively simple prompt engineering and publicly known attack vectors.

Human-in-the-loop is losing its effectiveness — Even with diff preview and approval controls enabled, exfiltration attacks still triggered in Ari’s testing, and approval fatigue from hundreds of agent-generated actions is pushing developers toward YOLO mode.

Least privilege and the capability vs. security trade-off — The same unrestricted access that makes AI coding agents so productive is what makes them vulnerable, and history suggests organizations will continue to optimize for utility over security without strong guardrails.

Top defensive recommendations — Ari emphasized isolation (containers, VMs) as the single most important control, followed by enforcing secure defaults that can’t be easily overridden, and applying enterprise-level monitoring and governance to AI agent usage.

What’s next — Ari is turning his attention to newer AI tools and attack surfaces but isn’t naming targets yet. You can follow his work on LinkedIn, X, and his blog at maccarita.com.

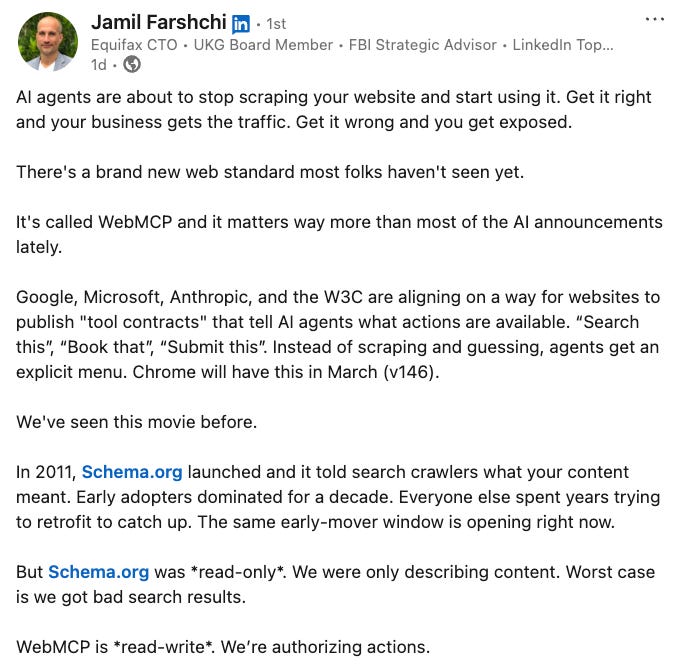

WebMCP - The Web Continues to Go Agentic

Google and others recently announced “WebMCP”, which:

“aims to provide a standard way for exposing structured tools, ensuring AI agents can perform actions on your site with increased speed, reliability and precision”

I found this post from Equifax’s CTO and former CISO interesting, as it indicates what is coming - agents taking actions over the web with this new web standard.

OpenClaw CVE & Security Advisory Tracker

OpenClaw continues to dominate the headlines, being the fastest growing project in GitHub history, massive adoption, hype and more. The founder recently joined OpenAI, which we will discuss later.

That said, one of my favorite Vulnerability Researcher’s Jerry Gamblin produced an OpenClaw CVE & Security Advisory Tracker, which pulls the latest data hourly from key sources such as GitHub Security Advisories (GHSA), CVE and more. At the time I’m posting this, it has 92 security advisories.

OpenClaw: The Viral AI Agent that Broke the Internet - Peter Steinberger | Lex Fridman Podcast #491

Speaking of OpenClaw, the projects founder Peter Steinberger recently went on the Lex Fridman podcast for a long form discussion, and it was insightful. All right before he announced he was joining OpenAI too.

OpenClaw’s Rapid Rise and Unique Approach: OpenClaw, an open-source AI agent, quickly gained popularity by offering an autonomous AI assistant that integrates with various messaging clients and AI models. The speaker attributes its viral success to its “fun” and “weird” nature, distinguishing it from more serious, agentic projects.

Emphasis on Security and Accessibility: The creator recognizes significant security concerns due to the agent’s broad access to a user’s computer and the fact that many users lack technical understanding. He states that improving security and making OpenClaw easier to set up for non-developers are immediate priorities.

Transformative Impact on Software and User Empowerment: The speaker believes that AI agents like OpenClaw will fundamentally change how software is used, predicting that many traditional apps will become obsolete as agents can interact directly with services. He highlights its positive impact by enabling users, including those with disabilities, to automate tedious tasks and find more joy in their work.

Dario Amodei - “We are near the end of the exponential”

I recently caught Anthropic’s Dario Amodei’s interview on Dwarkesh Patel’s podcast and of course it was interesting and provocative as Dario tends to be.

Some of the key themes and takeaways are:

The “Big Blob of Compute” Hypothesis Continues to Hold True: Dario Amodei asserts that the scaling hypothesis, initially proposed in 2017, remains valid. This hypothesis suggests that raw compute, data quantity and quality, training duration, and scalable objective functions are the primary factors driving AI progress, rather than “cleverness” or new methods.

Progress in Both Pre-training and RL Scaling: While pre-training scaling laws have consistently shown gains, the video highlights that similar progress is now being observed in Reinforcement Learning (RL) tasks. Models are showing log-linear improvements in performance on various RL tasks, similar to what was seen with pre-training.

AGI is Anticipated Within 1-3 Years: Amodei predicts that systems capable of supporting a “country of geniuses in a data center” could emerge within one to three years, potentially generating trillions of dollars in revenue. He suggests that current in-context learning capabilities and longer context lengths, which are engineering problems, may be sufficient to achieve a large fraction of this vision.

Constitutional AI for Better Generalization and Alignment: Anthropic’s approach involves teaching AI models principles rather than a rigid list of rules, leading to more consistent behavior and better generalization across edge cases. This “constitutional AI” method is seen as a more effective way to train models and align them with desired values, although it aims to keep the models “corallable” rather than intrinsically motivated to run the world.

CSA’s Draft AI Security Maturity Model

CSA recently shared a draft of their AI Security Maturity Model (AISMM). It’s currently seeking industry input as is described below:

The objective of the CSA AI Security Maturity Model (AISMM) is to provide organizations a roadmap on building an enterprise AI security program. Although there are other AI maturity models, many of those focus on maturity for individual AI projects or higher-level governance.

This model is designed to provide direct guidance on building and managing an AI security program. It aligns with common information security organizational structures, roles and responsibilities, and processes. It describes the journey of an AI security program to provide organizations with a big picture view of how to structure and measure the progress of AI security within enterprises.

I took a look and it is indeed a helpful mental model around AI security and how to frame specific categories, especially those such as Governance.

Claude Opus 4.6 Discovers 500+ High-Severity Zero-Days in Open Source

This is the story that’s been on everyone’s radar this week. Anthropic released Claude Opus 4.6 with dramatically enhanced cybersecurity capabilities, and during testing, it found more than 500 previously unknown high-severity vulnerabilities in open source software including Ghostscript, OpenSC, and CGIF.

Anthropic put Claude in a virtual machine with access to open source projects and standard vulnerability analysis tools. They gave it no special instructions. The model figured out how to hunt vulnerabilities the way a human researcher would, looking at past fixes to find similar unaddressed bugs, spotting problematic patterns, and understanding logic well enough to know what input would break it.

Logan Graham, head of Anthropic’s frontier red team, stated: “The models are extremely good at this, and we expect them to get much better still... I wouldn’t be surprised if this was one of, or the main way—in which open-source software moving forward was secured.”

As I’ve discussed in prior issues, AI can both help and harm security. This is a clear demonstration of the “help” side, but let’s be honest about the dual-use implications.

Anthropic Publishes Prompt Injection Attack Success Rates—Finally

For years, prompt injection was a known risk that no one quantified. That changed this week when Anthropic made prompt injection measurable by publishing attack success rates broken out by agent surface.

Compare this to OpenAI’s GPT-5.2 system card, which doesn’t break out attack success rates by surface. Google’s Gemini 3 model card describes “increased resistance to prompt injections” but doesn’t publish absolute attack success rates.

Promptfoo’s independent red team evaluation of GPT-5.2 found jailbreak success rates climbing from a 4.3% baseline to 78.5% in multi-turn scenarios, exactly the persistence-scaled data that reveals how defenses degrade under sustained attack.

Prompt injection remains the top vulnerability in OWASP’s 2025 Top 10 for LLM Applications, appearing in over 73% of production deployments. If your vendor won’t tell you their attack success rates, ask yourself why.

NIST Releases Concept Paper on AI Agent Identity and Authorization

This is the framework development I’ve been waiting for. NIST’s NCCoE released a concept paper proposing how identity standards can be applied to AI agents in enterprise settings.

The focus areas align perfectly with what I’ve been discussing through my work on the OWASP NHI Top 10: identification, authorization, access delegation, and logging/transparency.

Standards under consideration include Model Context Protocol, OAuth 2.0/2.1, OpenID Connect, SPIFFE/SPIRE, and SCIM. The concept paper is open for public comment through April 2, 2026. I strongly encourage organizations working on agent identity to submit feedback.

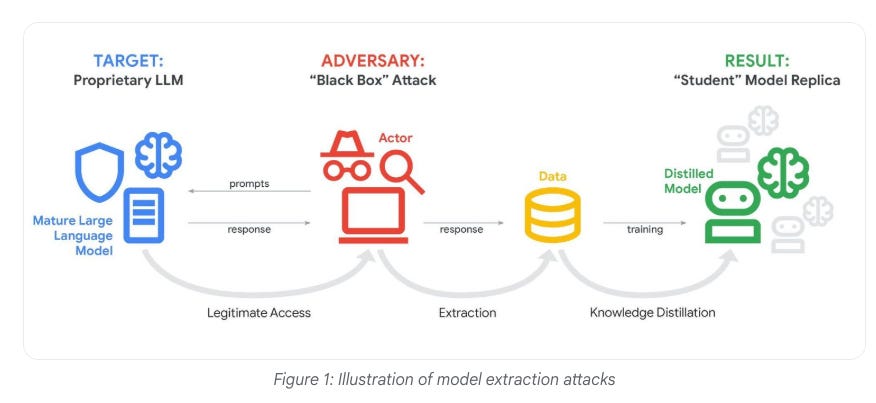

Google: State-Backed Hackers Using Gemini AI Across All Attack Stages

Google’s Threat Intelligence Group released a report showing that state-sponsored groups from Russia, North Korea, China, and Iran are all using Gemini to enhance their attacks across nearly every stage of the cyber attack cycle.

North Korea’s UNC2970 used Gemini to synthesize OSINT and profile high-value targets including cybersecurity companies. Iran’s APT42 used the model for reconnaissance and crafting social engineering personas.

GTIG also observed “distillation attacks” where actors attempted to clone Gemini through repeated queries—including one campaign exceeding 100,000 queries.

Google emphasized they haven’t observed APT actors achieving “breakthrough capabilities that fundamentally alter the threat landscape.” But the productivity gains for reconnaissance and content generation are breakthrough enough.

Anthropic’s 2026 Agentic Coding Trends Report

Anthropic describes software development as undergoing its biggest shift since the GUI. Their report shows developers integrate AI into 60% of their work while maintaining oversight on 80-100% of delegated tasks.

Here’s what struck me: while AI shows up in 60% of developers’ work, engineers can “fully delegate” only 0-20% of tasks. The rest requires active supervision. This is the human-in-the-loop reality that gets lost in the hype.

57% of organizations now deploy multi-step agent workflows. Rakuten engineers tested Claude Code on a 12.5-million-line codebase, it completed the task in seven hours with 99.9% accuracy. Impressive, but that code needs security review that wasn’t part of the workflow.

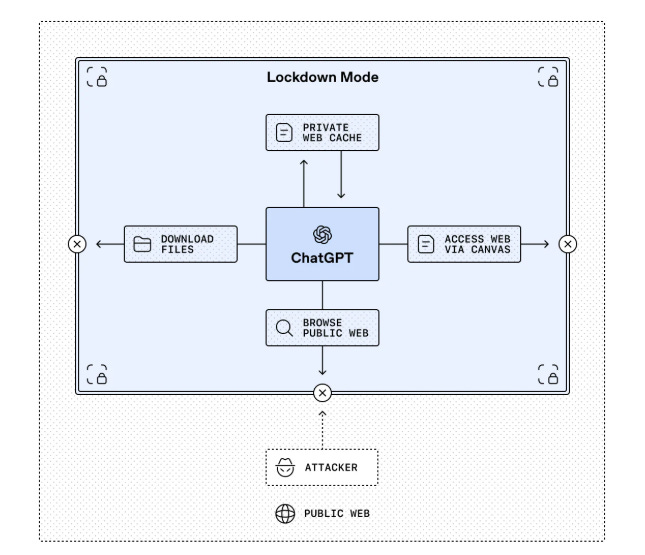

OpenAI Introduces Lockdown Mode and Elevated Risk Labels

OpenAI announced Lockdown Mode and Elevated Risk labels for ChatGPT. Lockdown Mode deterministically disables capabilities an adversary could exploit for data exfiltration, web browsing limited to cached content, Deep Research disabled, Agent Mode disabled.

This is the kind of defense-in-depth thinking the industry needs. You can’t just rely on model-level protections that can be prompt injected, you need architectural controls that deterministically prevent certain attack classes.

Microsoft SDL: Evolving Security Practices for an AI-Powered World

Microsoft announced SDL for AI expanding to address AI-specific security concerns. Yonatan Zunger, Microsoft’s deputy CISO for AI, noted: “Unlike traditional systems with predictable pathways, AI systems create multiple entry points for unsafe inputs, including prompts, plugins, retrieved data, model updates, memory states, and external APIs.”

What I appreciate is Microsoft’s acknowledgment that security teams should act as partners, not gatekeepers, co-designing threat models with product teams. This collaborative model is essential for shipping secure AI products.

AppSec

Cline Supply Chain Compromise: From Prompt Injection to Malicious npm Publication

Two posts dropped that together tell a compelling story about the real-world convergence of AI agent vulnerabilities and CI/CD supply chain attacks.

The Vulnerability (Adnan Khan — “Clinejection”)

Security researcher Adnan Khan disclosed a critical attack chain against Cline, the popular AI coding assistant with 5M+ VS Code installs. Cline had deployed a GitHub Actions workflow using Anthropic’s claude-code-action to automatically triage incoming issues — and it gave Claude access to Bash, Write, and other powerful tools, triggerable by anyone with a GitHub account.

The attack chain was elegant: craft a prompt injection in a GitHub issue title to trick Claude into running arbitrary code, then use that foothold to poison GitHub Actions caches via Khan’s open-source tool Cacheract. Because GitHub’s cache scope is shared across all workflows on the default branch, the low-privilege triage workflow could poison cache entries consumed by the high-privilege nightly release workflow. When the nightly build ran, it would restore poisoned node_modules, giving an attacker access to the VSCE_PAT, OVSX_PAT, and NPM_RELEASE_TOKEN secrets — which, critically, had the same publication scope as production credentials.

Khan reported this to Cline on January 1st via their private vulnerability reporting channel, followed up multiple times through email, Discord, and a direct message to the CEO — all with no response. He published full disclosure on February 9th. Cline patched it within 30 minutes of publication by removing the AI workflows entirely.

The Compromise Confirmed (Michael Bargury — Raptor Forensics)

Today, Cline released an advisory (GHSA-9ppg-jx86-fqw7) confirming an unauthorized npm publication actually occurred — for 8 hours, installing the Cline CLI also installed “OpenClaw.” Michael Bargury used his Raptor tool’s /oss-forensics command to investigate and claims it identified the compromising user. The investigation is ongoing.

Why This Matters

This is a textbook example of what happens when AI agents with broad tool access are deployed into CI/CD pipelines without proper security boundaries. The individual techniques — prompt injection, cache poisoning, credential theft — are all well-documented. What’s novel is how they compose: an AI agent becomes the low-friction entry point into a pipeline that was previously only reachable through code contributions or maintainer compromise. It also highlights the risk of AI-powered automation in open-source workflows where untrusted input (like issue titles) flows directly into agent prompts.

The disclosure timeline is also worth noting — over five weeks of silence from Cline despite multiple contact attempts through every reasonable channel, only fixed after public disclosure.

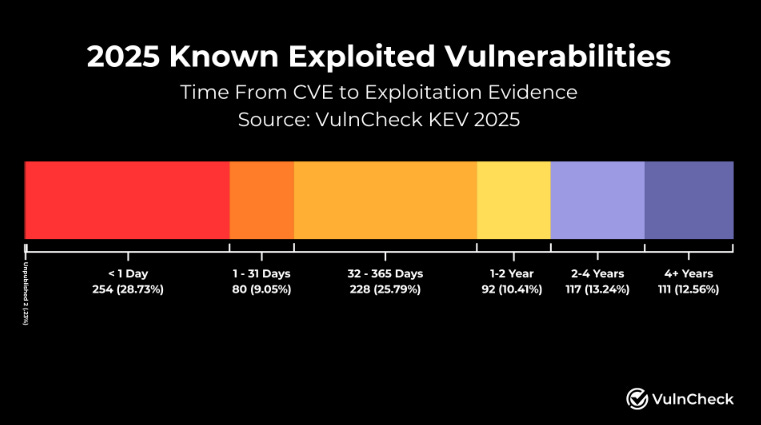

VulnCheck State of Exploitation 2026

VulnCheck just dropped their State of Exploitation 2026 report and the data reinforces what many of us have been saying: traditional vulnerability management timelines are increasingly misaligned with attacker behavior.

Some key findings from 2025:

884 KEVs identified with first-time exploitation evidence, a 15% increase year over year

Nearly 29% of KEVs were exploited on or before the day their CVE was published, up from 23.6% in 2024

Network edge devices (firewalls, VPNs, proxies) remained the most frequently targeted, followed by CMS platforms and open source software

Nearly half of OS-related KEVs were exploited before or on the day of disclosure

118 unique sources were first to report exploitation activity, reinforcing that no single feed gives you the full picture

The “patch Tuesday, exploit Wednesday” mental model is increasingly obsolete when a third of vulnerabilities are already being weaponized at the point of disclosure. Attackers are operating inside defenders’ decision cycles, and that gap is widening, not closing.

This also continues to reinforce the importance of moving beyond CVSS-driven prioritization toward exploitation-informed approaches. Organizations need broad exploitation monitoring, rapid response capabilities, and a relentless focus on reducing their network edge attack surface.

Worth a read for anyone in vulnerability management, AppSec, or security operations.

Endor Labs Acquires Autonomous Plane for Full-Stack Reachability

Endor Labs acquired Autonomous Plane to deliver full-stack reachability from code to container. Traditional container scanners flag vulnerabilities across entire images, but base images often include hundreds of packages applications never load.

Full-stack reachability uses application-layer information to understand which packages are actually loaded at runtime, filtering out up to 90% of false positives. For FedRAMP compliance specifically, this dramatically reduces what must be remediated by proving which vulnerabilities are actually reachable.

BAGEL: Why Your Developer’s Laptop is the Softest Target in Your Supply Chain

The Boost Security team released Bagel, an open-source CLI that inventories security-relevant metadata on developer workstations including credentials, misconfigs, and exposed secrets.

Their insight is spot-on: while we’ve been obsessing over CI/CD pipeline security, attackers have noticed there’s another path into software supply chains—through the machines on developers’ desks. Developer workstations are “credential goldmines.”

Bagel’s privacy-first design never reads or transmits actual secret values, only metadata about what’s exposed. This connects directly to the supply chain work Tony Turner and I did in “Software Transparency.”

AI-Assisted Cloud Intrusion Achieves Admin Access in 8 Minutes

Sysdig documented an offensive cloud operation where a threat actor went from initial access to administrative privileges in less than 10 minutes, with indicators suggesting they leveraged LLMs throughout.

The attack started with credentials in public S3 buckets. The actor rapidly escalated through Lambda function injection, moved across 19 AWS principals, abused Amazon Bedrock for LLMjacking, and launched GPU instances.

Evidence of AI assistance included LLM-generated code with Serbian comments, hallucinated AWS account IDs, and references to non-existent GitHub repositories.

Eight minutes from credential exposure to admin. Traditional detection timelines don’t work at this velocity. Apply least privilege to Lambda execution roles and limit UpdateFunctionCode permissions.

OpenClaw Security Crisis Continues: 40+ Patches in Latest Release

As I warned in issues #82 and #83, OpenClaw remains a security nightmare in progress. Version 2026.2.12 just dropped with fixes for more than 40 vulnerabilities—following CVE-2026-25253 (CVSS 8.8) and multiple RCE vulnerabilities.

Over 30,000 OpenClaw instances were exposed on the internet, with threat actors discussing weaponizing OpenClaw skills for botnets.

But here’s the constructive counterpoint: Phil Windley published an excellent piece on building a policy-aware agent loop with Cedar and OpenClaw. The approach inserts a Cedar-backed policy decision point so every tool invocation is evaluated at runtime, authorization as continuous feedback rather than a one-time gate. This is exactly the direction I’ve been advocating for.

CSA: Securing Autonomous AI Agents Survey Report

The Cloud Security Alliance released a survey confirming organizations are prioritizing well-understood risks over AI-specific threats like prompt injection and model theft.

Key finding: governance maturity is the strongest indicator of readiness. Organizations with formal governance are twice as likely to adopt agentic AI, three times more likely to train staff. Only about one quarter have comprehensive AI security governance.

Security approaches for autonomous agents rely on static credentials, inconsistent controls, and limited visibility. Identity architectures optimized for humans are not ready to govern autonomous agents. This validates the work on the OWASP NHI Top 10.

Agent Identities: Everything You Need to Know

Mrinal Wadhwa (founder of Ockam) published a deep dive on agent identity: to trust an agent, we need to authenticate who it is, authorize what it does, and attribute what it decides. For all three, the agent must hold a private key and produce cryptographic proof.

His work with Ockam showed that even large organizations relied on IP allow-lists and weak primitives. With collaborating agent swarms, those gaps become critical vulnerabilities.

Agent identity is an infrastructure problem, not just a policy problem. You can’t governance your way to secure agents without the cryptographic primitives to enforce it.

Final Thoughts

This week reinforced a pattern I’ve been tracking for months: the AI security landscape is bifurcating. On one side, we’re seeing genuine progress—NIST stepping up on agent identity, Anthropic publishing prompt injection metrics other vendors should be forced to match, Phil Windley showing what policy-aware agent loops actually look like, and Claude Opus 4.6 demonstrating how AI can accelerate vulnerability discovery for defenders.

On the other side, we’re seeing the cracks widen. 69% of CISOs ready to leave. AI-assisted attacks achieving admin access in 8 minutes. OpenClaw exposing 30,000+ instances to the internet. State-backed hackers using Gemini across all attack stages.

The question isn’t whether AI will transform security—it already has. The question is whether we’ll build the governance, identity infrastructure, and operational practices to ensure AI transformation tilts toward defenders rather than attackers.

The building blocks exist. The standards are emerging. What’s missing is adoption at scale.

Stay resilient.